Automated Machine Learning on the M5 Forecasting Competition

This article is contributed. See the original author and article here.

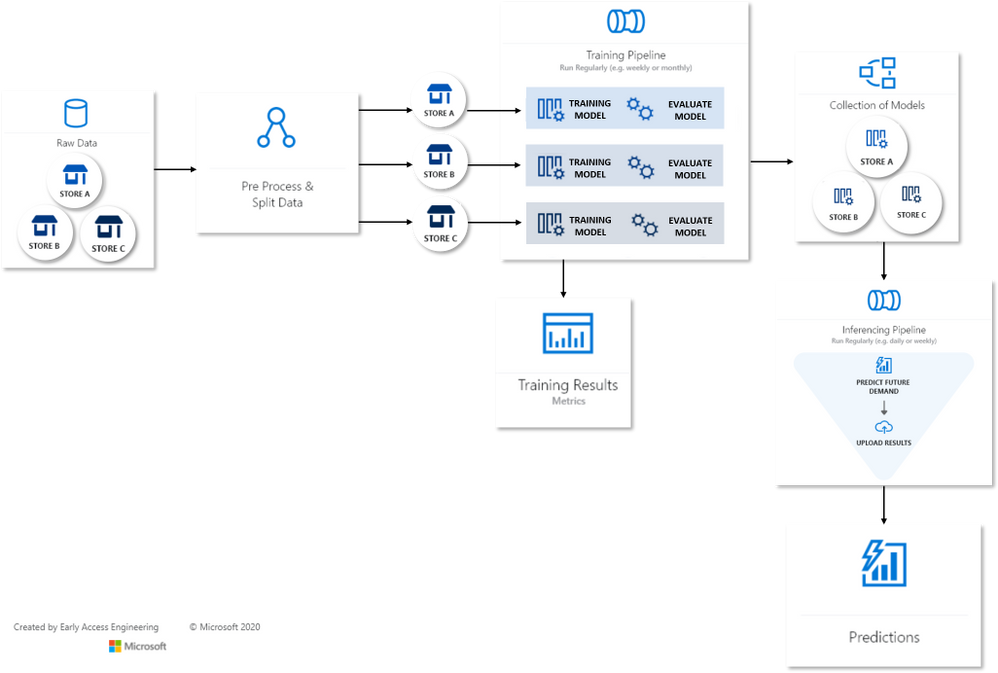

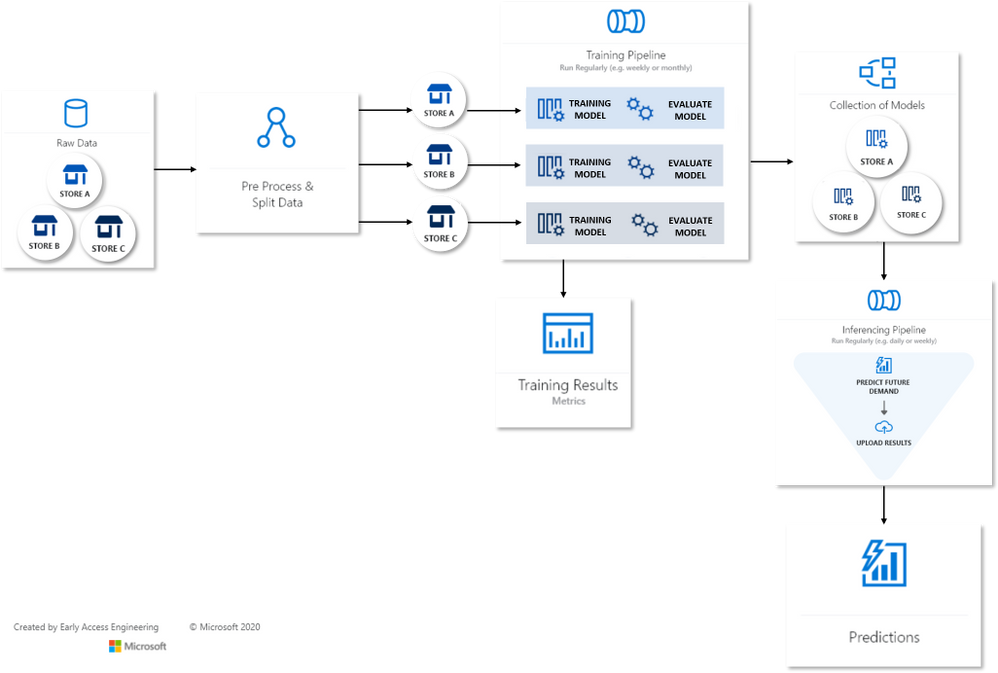

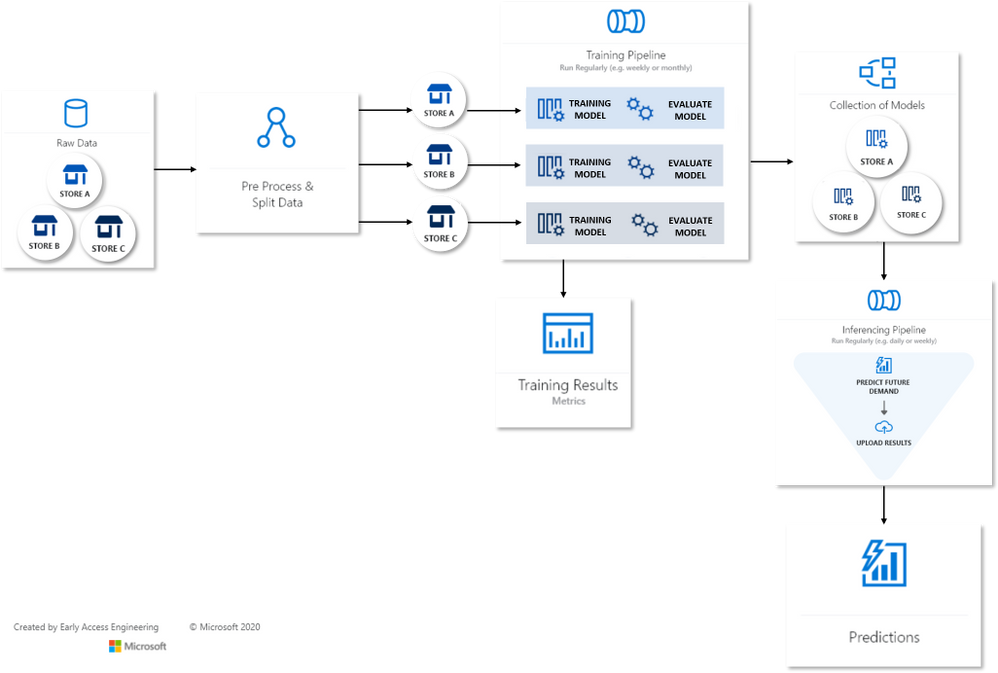

Many Models Flow Map


Microsoft has released updates to address multiple vulnerabilities in Microsoft software. An attacker can exploit some of these vulnerabilities to take control of an affected system.

CISA encourages users and administrators to review Microsoft’s November 2021 Security Update Summary and Deployment Information and apply the necessary updates.


https://www.youtube-nocookie.com/embed/hsNc_QjYwfw

At the beginning of November, Microsoft held its second Ignite of the year, announcing or further clarifying details around many of the latest and near-future features expected to roll out to Microsoft 365. However, because many US Federal cloud tenants see features months (if not longer) after they reach commercial tenants, these users are often left wondering "what's next for us" instead of sharing the excitement that commercial tenant owners have coming out of these conferences.

In this episode, we meet with Microsoft architect John Moh (LinkedIn) to discuss our favorite ways to stay up to date on what’s available to us in the GCC, GCC-H, and DOD tenants!


CISA has released an Industrial Control Systems (ICS) advisory detailing multiple vulnerabilities found in Siemens Nucleus Real-Time Operating Systems (RTOS) and supporting libraries. A remote attacker could exploit some of these vulnerabilities to take control of an affected system.

CISA encourages users and administrators to review ICS Advisory: ICSA-21-313-03 Siemens Nucleus RTOS TCP/IP Stack for more information and apply the necessary mitigations.


TL;DR: Using minimal API, you can create a Web API in just 4 lines of code by leveraging new features like top-level statements and more.

There are many reasons for wanting to create an API in a few lines of code:

There are a few differences:

using and namespace are gone as well, so this code:

using System;

namespace Application
{
    class Program
    {
        static void Main(string[] args)
        {
            Console.WriteLine("Hello World!");
        }
    }
}

is now this code:

Console.WriteLine("Hello World!");

Map[VERB] function, like you see above with MapGet(), which takes a route and a function to invoke when said route is hit.

To get started with minimal API, you need to make sure that .NET 6 is installed and then you can scaffold an API via the command line, like so:

dotnet new web -o MyApi -f net6.0

Once you run that, you get a folder MyApi with your API in it.

What you get is the following code in Program.cs:

var builder = WebApplication.CreateBuilder(args);
var app = builder.Build();

if (app.Environment.IsDevelopment())
{
    app.UseDeveloperExceptionPage();
}

app.MapGet("/", () => "Hello World!");

app.Run();

To run it, type dotnet run. One small difference concerns the ports: the template assigns random ports from a range rather than the 5000/5001 that you may be used to. You can, however, configure the ports as needed. Learn more on this docs page.
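As a minimal sketch of configuring the port in code, assuming the .NET 6 web template with implicit usings enabled, a URL can be passed to Run(). Port 3000 is an arbitrary choice for illustration, not something the template prescribes.

```csharp
// Program.cs: sketch only, assumes the project was created with "dotnet new web".
var builder = WebApplication.CreateBuilder(args);
var app = builder.Build();

app.MapGet("/", () => "Hello World!");

// Passing a URL to Run() asks the server to listen on that address.
// Assumption: port 3000 is free on your machine.
app.Run("http://localhost:3000");
```

Hard-coding the address is convenient for a demo; for anything shared, keeping it in configuration is usually the more flexible choice.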

Ok so you have a minimal API, what’s going on with the code?

var builder = WebApplication.CreateBuilder(args);

On the first line you create a builder instance. builder has a Services property on it, so you can add capabilities such as Swagger, CORS, Entity Framework and more. Here's an example where you set up Swagger capabilities (this requires installing the Swashbuckle.AspNetCore NuGet package, plus a using Microsoft.OpenApi.Models; directive at the top of Program.cs for OpenApiInfo):

builder.Services.AddEndpointsApiExplorer();
builder.Services.AddSwaggerGen(c =>
{
    c.SwaggerDoc("v1", new OpenApiInfo { Title = "Todo API", Description = "Keep track of your tasks", Version = "v1" });
});

Here’s the next line:

var app = builder.Build();

Here we create an app instance. Via the app instance, we can call methods like app.Run(), app.MapGet() and app.UseSwagger().

With the following code, a route and a route handler are configured:

app.MapGet("/", () => "Hello World!");

The method MapGet() sets up a new route. It takes the route "/" as its first argument and a route handler, the function () => "Hello World!", as its second.

To start the app, and have it serve requests, the last thing you do is call Run() on the app instance like so:

app.Run();
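To tie the Swagger registration shown earlier to the app instance, here is a sketch of a complete Program.cs. It assumes the Swashbuckle.AspNetCore package is installed and implicit usings are enabled; the endpoint label "Todo API v1" is an illustrative name rather than something the original article specifies.

```csharp
using Microsoft.OpenApi.Models; // needed for OpenApiInfo

var builder = WebApplication.CreateBuilder(args);

// Register the services that generate the OpenAPI document.
builder.Services.AddEndpointsApiExplorer();
builder.Services.AddSwaggerGen(c =>
{
    c.SwaggerDoc("v1", new OpenApiInfo { Title = "Todo API", Description = "Keep track of your tasks", Version = "v1" });
});

var app = builder.Build();

// Serve the generated document and the interactive UI.
app.UseSwagger();
app.UseSwaggerUI(c => c.SwaggerEndpoint("/swagger/v1/swagger.json", "Todo API v1"));

app.MapGet("/", () => "Hello World!");

app.Run();
```

With Swashbuckle's default settings, the interactive UI should then be reachable under /swagger.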

To add an additional route, we can add code like so:

app.MapGet("/pizza", () => new Pizza(1, "Margherita"));

// Type declarations must come after all top-level statements in Program.cs.
public record Pizza(int Id, string Name);

Now you have code that looks like so:

var builder = WebApplication.CreateBuilder(args);
var app = builder.Build();

if (app.Environment.IsDevelopment())
{
    app.UseDeveloperExceptionPage();
}

app.MapGet("/pizza", () => new Pizza(1, "Margherita"));
app.MapGet("/", () => "Hello World!");

app.Run();

// Type declarations must follow the top-level statements, so the record goes last.
public record Pizza(int Id, string Name);

Were you to run this code with dotnet run and navigate to /pizza, you would get a JSON response:

{
    "id": 1,
    "name": "Margherita"
}

Let's take everything we have learned so far and put it into an app that supports GET and POST, and let's also show how easily you can use query parameters:

var builder = WebApplication.CreateBuilder(args);
var app = builder.Build();

if (app.Environment.IsDevelopment())
{
    app.UseDeveloperExceptionPage();
}

// In-memory data; it is lost when the app restarts.
var pizzas = new List<Pizza>()
{
    new Pizza(1, "Margherita"),
    new Pizza(2, "Al Tonno"),
    new Pizza(3, "Pineapple"),
    new Pizza(4, "Meat meat meat")
};

app.MapGet("/", () => "Hello World!");

// {id} is a route parameter bound to the handler's id argument.
app.MapGet("/pizzas/{id}", (int id) => pizzas.SingleOrDefault(pizza => pizza.Id == id));

// page and pageSize are optional query parameters, e.g. /pizzas?page=1&pageSize=2.
app.MapGet("/pizzas", (int? page, int? pageSize) =>
{
    if (page.HasValue && pageSize.HasValue)
    {
        return pizzas.Skip((page.Value - 1) * pageSize.Value).Take(pageSize.Value);
    }
    else
    {
        return pizzas;
    }
});

// The JSON request body is bound to the pizza parameter.
app.MapPost("/pizza", (Pizza pizza) => pizzas.Add(pizza));

app.Run();

public record Pizza(int Id, string Name);
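The paging arithmetic in the /pizzas handler can be tried in isolation. The following console sketch reuses the same Skip/Take expression on a plain list of names; the GetPage helper and the use of strings instead of Pizza records are simplifications introduced here for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Stand-in for the pizzas list; plain names keep the sketch short.
var pizzas = new List<string> { "Margherita", "Al Tonno", "Pineapple", "Meat meat meat" };

// Same arithmetic as the /pizzas handler: pages are 1-based,
// so page N skips (N - 1) * pageSize items and takes the next pageSize.
IEnumerable<string> GetPage(int? page, int? pageSize)
{
    if (page.HasValue && pageSize.HasValue)
    {
        return pizzas.Skip((page.Value - 1) * pageSize.Value).Take(pageSize.Value);
    }
    return pizzas; // no paging requested: return everything
}

Console.WriteLine(string.Join(", ", GetPage(1, 2))); // Margherita, Al Tonno
Console.WriteLine(string.Join(", ", GetPage(2, 2))); // Pineapple, Meat meat meat
Console.WriteLine(GetPage(null, null).Count());      // 4
```

Note that the handler only pages when both parameters are supplied; passing just one of them returns the full list.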

Run this app with dotnet run.
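Once the app is running, the endpoints can also be exercised from a small console client. This is a sketch under assumptions: the base address http://localhost:5000 is a placeholder (use whatever URL dotnet run prints), and the pizza with id 5 is made-up sample data.

```csharp
using System;
using System.Collections.Generic;
using System.Net.Http;
using System.Net.Http.Json;

// Assumption: replace this address with the one your API actually listens on.
using var client = new HttpClient { BaseAddress = new Uri("http://localhost:5000") };

// POST: the object is serialized to JSON and bound to the handler's Pizza parameter.
var response = await client.PostAsJsonAsync("/pizza", new Pizza(5, "Quattro Formaggi"));
Console.WriteLine(response.StatusCode);

// GET with a route parameter: /pizzas/{id}.
var single = await client.GetFromJsonAsync<Pizza>("/pizzas/2");
Console.WriteLine(single);

// GET with query parameters: page and pageSize.
var firstPage = await client.GetFromJsonAsync<List<Pizza>>("/pizzas?page=1&pageSize=2");
Console.WriteLine(firstPage?.Count);

// Client-side copy of the record used by the API.
public record Pizza(int Id, string Name);
```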

In your browser, try various things like:

{id} matching the 2 and thereby it filters down on the one item that matches.

Check out these LEARN modules on learning to use minimal API.
