
We announce here that Microsoft’s Automated Machine Learning, with nearly default settings, achieves a score in the 99th percentile of private leaderboard entries for the high-profile M5 forecasting competition. Customers use Automated Machine Learning (AutoML) for ML applications in regression, classification, and time series forecasting. For example, The Kantar Group leverages AutoML for churn analysis, allowing clients to boost customer loyalty and increase their revenue.

Our M5 result demonstrates the power and effectiveness of our Many Models Solution which combines classical time-series algorithms and modern machine learning methods. Many Models is used in production pipelines by customers such as AGL, Adamed, and Oriflame for demand forecasting applications. We also use our open-source Responsible AI tools to understand how the model leverages information in the training data. All computations take place on our scalable, cloud-based Azure Machine Learning platform.

The M5 Competition

The M5 Competition, the fifth iteration of the Makridakis time-series forecasting competition, provides a useful benchmark for retail forecasting methods. The data contains historical daily sales information for about 3,000 products from 10 different Walmart retail store locations. As is often the case in retail scenarios, the data has a hierarchical structure along product catalog and geographic dimensions. In addition to historical sales, the organizers provide data features such as sales price, SNAP (food stamp) eligibility, and calendar events. The accuracy track of the competition evaluates 28-day-ahead forecasts for 30,490 store-product combinations. With submissions from over 5,000 teams and 24 baseline models, the competition provides a rich set of comparisons between different modeling strategies.

Modeling Strategy

There are myriad approaches to modeling the M5 data, especially given its hierarchical structure. Since our goal is to demonstrate an automated solution, we executed what we considered the simplest strategy: build a model for each individual store-product combination. The result is a composite model with 30,490 constituent time-series models. Our Many Models Solution, born out of deep engagement with customers, is precisely suited to this task.
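
To make the one-model-per-series idea concrete, here is a minimal sketch in plain Python. It is not the Many Models Solution itself: the record layout, the store and item identifiers, and the helper names `split_into_series` and `fit_mean_model` are all hypothetical, and a historical-mean forecaster stands in for a real training job.

```python
from collections import defaultdict

# Toy long-format sales records: (store_id, item_id, day, units_sold).
# Identifiers and values are invented for illustration only.
rows = [
    ("CA_1", "FOODS_1", 1, 3), ("CA_1", "FOODS_1", 2, 5), ("CA_1", "FOODS_1", 3, 4),
    ("TX_1", "FOODS_1", 1, 0), ("TX_1", "FOODS_1", 2, 1), ("TX_1", "FOODS_1", 3, 2),
]

def split_into_series(records):
    """Group records into one chronologically ordered series per (store, item)."""
    grouped = defaultdict(list)
    for store, item, day, units in records:
        grouped[(store, item)].append((day, units))
    # Sort each group by day, then keep only the sales values.
    return {key: [units for _, units in sorted(obs)] for key, obs in grouped.items()}

def fit_mean_model(history):
    """Stand-in for a per-series training job: forecast the historical mean."""
    level = sum(history) / len(history)
    return lambda horizon: [level] * horizon

series = split_into_series(rows)

# The "composite model" is simply a dictionary of independent per-series models.
composite = {key: fit_mean_model(history) for key, history in series.items()}
forecasts = {key: model(2) for key, model in composite.items()}
```

Because each entry in `composite` depends only on its own series, the dictionary comprehension could be replaced by any parallel map without changing the result.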

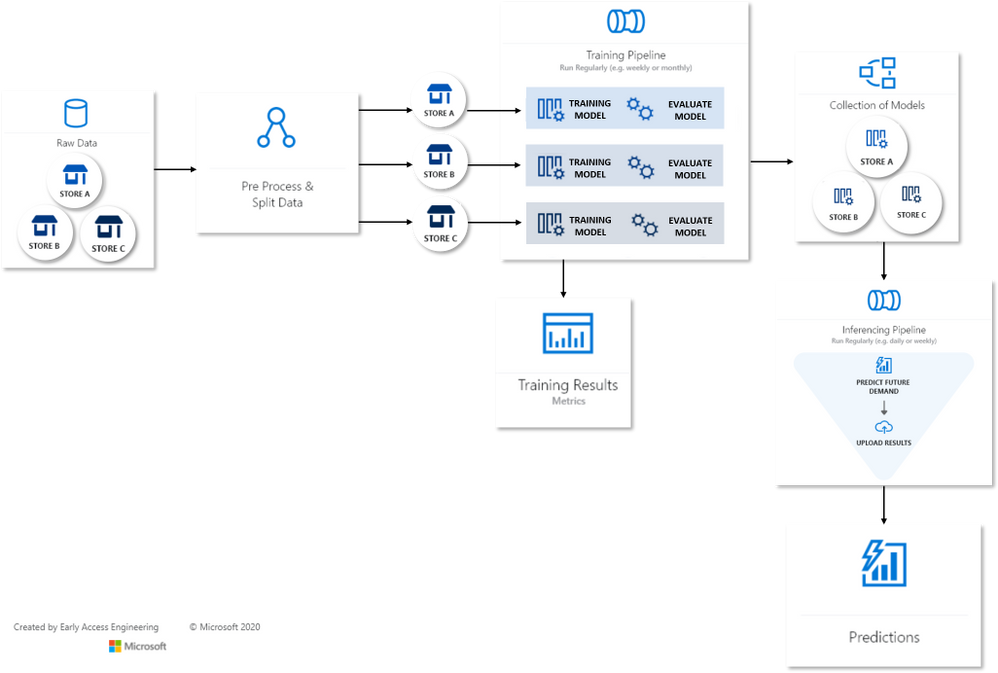

Many Models Flow Map

The Many Models accelerator runs independent Automated Machine Learning (AutoML) jobs on each store-product time-series, creating a model dictionary over the entire dataset. In turn, each AutoML job generates engineered features and sweeps over model classes and hyperparameters using a novel collaborative filtering algorithm. AutoML then selects the best model for each time-series via temporal cross-validation. Training and scoring are data-parallel operations for Many Models and easily scalable on Azure-managed compute resources.
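
Selection by temporal cross-validation can be sketched as follows. This is an illustration under simplifying assumptions, not AutoML's procedure: the three candidate forecasters, the mean-absolute-error metric, the fold layout, and every function name are hypothetical, and the collaborative filtering sweep is replaced by an exhaustive loop over the candidates.

```python
def naive(history, horizon):
    """Persistence: repeat the last observed value."""
    return [history[-1]] * horizon

def seasonal_naive(history, horizon, season=7):
    """Repeat the values observed one season (here, one week) earlier."""
    start = len(history) - season
    return [history[start + (h % season)] for h in range(horizon)]

def mean_forecast(history, horizon):
    """Forecast the historical mean at every step."""
    return [sum(history) / len(history)] * horizon

CANDIDATES = {"naive": naive, "seasonal_naive": seasonal_naive, "mean": mean_forecast}

def rolling_origin_error(series, forecaster, horizon, n_folds):
    """Mean absolute error over n_folds splits.

    Each fold trains only on observations that precede its validation
    window, so no future information leaks into the forecast.
    Assumes the series is long enough that every training window
    covers at least one full season.
    """
    errors = []
    for fold in range(n_folds):
        cutoff = len(series) - horizon * (fold + 1)
        train, valid = series[:cutoff], series[cutoff:cutoff + horizon]
        preds = forecaster(train, horizon)
        errors.extend(abs(p - a) for p, a in zip(preds, valid))
    return sum(errors) / len(errors)

def select_best(series, horizon=7, n_folds=2):
    """Return the name of the lowest-error candidate and all scores."""
    scores = {
        name: rolling_origin_error(series, forecaster, horizon, n_folds)
        for name, forecaster in CANDIDATES.items()
    }
    return min(scores, key=scores.get), scores

# A toy series with a perfectly repeating weekly pattern.
weekly = [10, 12, 11, 13, 15, 30, 28] * 5
best, scores = select_best(weekly)
```

On this toy series the seasonal naive forecaster reproduces every validation window exactly, so it is selected. Running `select_best` once per store-product series would yield the kind of model dictionary described above.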

Understanding the Final Model

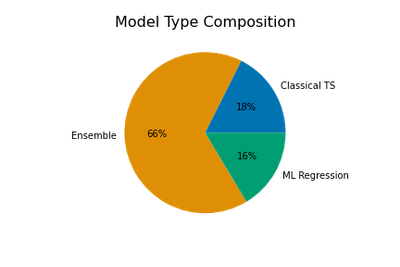

The final composite model is a mix of three model types: classical time-series models, machine learning (ML) regression models, and ensembles which can contain multiple models from either or both of the first two types. AutoML creates the ensembles from weighted combinations of top performing time-series and ML models found during sweeping. Naturally, the ensemble models are often the best models for a given store-product combo.
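
A weighted combination of member forecasts can be sketched in a few lines. The article does not describe how AutoML chooses the weights, so the member names, forecasts, and weights below are invented for illustration, and `weighted_ensemble` is a hypothetical helper.

```python
def weighted_ensemble(forecasts, weights):
    """Combine equal-length forecasts, normalizing the weights to sum to 1."""
    total = sum(weights.values())
    horizon = len(next(iter(forecasts.values())))
    return [
        sum(weights[name] / total * forecasts[name][h] for name in weights)
        for h in range(horizon)
    ]

# Two-step-ahead forecasts from three hypothetical ensemble members.
member_forecasts = {
    "arimax": [10.0, 12.0],
    "xgboost": [14.0, 16.0],
    "naive": [12.0, 12.0],
}
member_weights = {"arimax": 0.5, "xgboost": 0.25, "naive": 0.25}

combined = weighted_ensemble(member_forecasts, member_weights)
```

Here `combined` is `[11.5, 13.0]`: each step is the weight-averaged value of the three member forecasts.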

The chart above shows that two-thirds of the selected models are ensembles, with classical time-series and ML models making up approximately equal portions of the remainder.

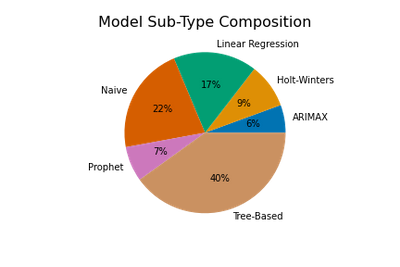

We get a more detailed view of the composite model by breaking into model sub-types. AutoML sweeps over three ML regression subtypes: regularized linear models, tree-based models, and Facebook’s Prophet model. Classical algorithms include Holt-Winters Exponential Smoothing, ARIMAX (ARIMA with regressors), and a suite of “Naive”, or persistence, models. Ensembles are weighted combinations of these sub-types.

The proportions of subtypes in the full composite model are shown above, where ensemble weights are used to apportion subtypes from each ensemble. Tree-based models like Random Forest and XGBoost that are capable of learning complex, non-linear patterns are a plurality. However, relatively simple linear and Naive time-series models are also quite common!
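
The apportioning step can be sketched as below, under the assumption that each selected model is represented by a mapping from subtype to ensemble weight, with a non-ensemble model carrying a single weight of 1. The series keys, subtype labels, and weights are hypothetical.

```python
from collections import Counter

# Hypothetical selected models: subtype -> ensemble weight per series.
selected = {
    ("CA_1", "FOODS_1"): {"tree": 1.0},                        # single model
    ("CA_1", "FOODS_2"): {"tree": 0.5, "naive": 0.5},          # ensemble
    ("TX_1", "FOODS_1"): {"linear": 0.25, "tree": 0.25, "arimax": 0.5},
    ("TX_1", "FOODS_2"): {"naive": 1.0},                       # single model
}

def subtype_proportions(models):
    """Share of each subtype in the composite, with every series counting once."""
    totals = Counter()
    for weights in models.values():
        norm = sum(weights.values())
        for subtype, weight in weights.items():
            # An ensemble splits its single "vote" according to its weights.
            totals[subtype] += weight / norm
    n_series = len(models)
    return {subtype: share / n_series for subtype, share in totals.items()}

proportions = subtype_proportions(selected)
```

With these toy inputs, tree-based models account for 0.4375 of the composite, Naive models for 0.375, ARIMAX for 0.125, and linear models for 0.0625, and the shares sum to 1.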

Feature Importance

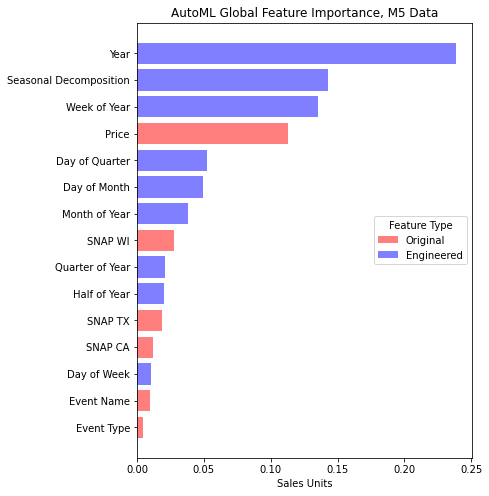

Most of AutoML’s models can make use of the data features beyond the historical sales, so we find yet more insight into the composite model by examining the impact, or importance, of these features relative to the model’s predictions. A common way to quantify feature importance is with game-theoretic Shapley value estimates. AutoML optionally calculates these for the best model selected from sweeping, so we make use of them here by aggregating values over all models in the composite.

In the feature importance chart, we distinguish between features present in the original dataset, such as price, and those engineered by AutoML to aid model accuracy. Evidently, engineered features associated with the calendar and a seasonal decomposition have the greatest impact on predictions. The seasonal decomposition is derived from weekly sales patterns detected by AutoML. Price is the most important of the original features, which is expected in retail scenarios given the likely significant effects of price on demand.
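
For readers unfamiliar with Shapley values, the following sketch computes them exactly for two tiny models and then aggregates across models. Several things here are assumptions rather than AutoML's method: exact enumeration of feature orderings (practical only for a handful of features, whereas AutoML uses estimates), the use of a baseline input to represent "missing" features, the mean-absolute-value aggregation, and all model coefficients and feature values.

```python
from itertools import permutations

def shapley_values(predict, x, baseline):
    """Exact Shapley values by enumerating every feature ordering.

    Features not yet added to the coalition take their baseline value.
    """
    names = list(x)
    phi = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)
        previous = predict(current)
        for name in order:
            current[name] = x[name]
            updated = predict(current)
            phi[name] += updated - previous   # marginal contribution
            previous = updated
    return {name: total / len(orderings) for name, total in phi.items()}

# Two hypothetical per-series models with made-up coefficients.
def model_a(f):
    return 10.0 - 2.0 * f["price"] + 3.0 * f["day_of_week"]

def model_b(f):
    return 5.0 - 1.0 * f["price"] + 4.0 * f["snap"]

x = {"price": 2.0, "day_of_week": 1.0, "snap": 1.0}
baseline = {"price": 1.0, "day_of_week": 0.0, "snap": 0.0}

per_model = [shapley_values(m, x, baseline) for m in (model_a, model_b)]

# Aggregate over the composite: mean absolute Shapley value per feature.
importance = {
    name: sum(abs(phi[name]) for phi in per_model) / len(per_model)
    for name in x
}
```

For a linear model, each Shapley value reduces to the coefficient times the feature's deviation from its baseline, which makes the toy results easy to check by hand: `model_a` attributes -2.0 to price and 3.0 to day of week, and the aggregated importances are 1.5 for price, 1.5 for day of week, and 2.0 for SNAP.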

The Value of AutoML and Many Models

Our automatically tuned composite model performs exceedingly well on the M5 data, scoring better than 99% of the other competition entries. Many of these teams spent weeks tuning their models. Despite this excellent result, it is important to note that no single modeling approach will always be the best. In this case, we achieved great accuracy under the assumption that the product-store time-series could be modeled independently of one another. This suggests that the dynamics driving changes in sales may vary widely across stores and products. We’ve also learned from several successful engagements with our enterprise customers that the Many Models approach achieves good accuracy and scales well in other forecasting scenarios.

For more information, see our other Many Models post: Train and Score Hundreds of Thousands of Models in Parallel.

Special thanks to Sabina Cartacio for contributing text and editorial guidance.
