by Contributed | May 12, 2021 | Technology

This article is contributed. See the original author and article here.

Welcome back to our second post in the “Microsoft Cloud App Security: The hunt” series!

If you haven’t read the first post by Sebastien Molendijk, head over to Microsoft Cloud App Security: The hunt in a multi-stage incident – Microsoft Tech Community to see how you can leverage advanced hunting to investigate a multi-stage incident.

As stated previously, this series will be used to address the alerts and scenarios we have seen most frequently from customers and apply simple but effective queries that can be used in everyday investigations.

The use case below describes one way to determine whether an insider poses a risk to an organization. A key point about insider risk is that the investigation concerns inadvertent or intentional risks posed by employees or other members of the organization. It often requires understanding the user's context and being able to identify and manage risks quickly. The methods we describe are one common way to assess the risk posed by an insider who is planning to leave the company.

Every step of this investigation should be done in coordination with your organization’s HR and Legal departments, adhering to the privacy, security and compliance policies set out by your organization. In addition, analysts may need training to handle this kind of investigation with specific and careful steps, in accordance with your organization’s commitment to its employees.

Use case

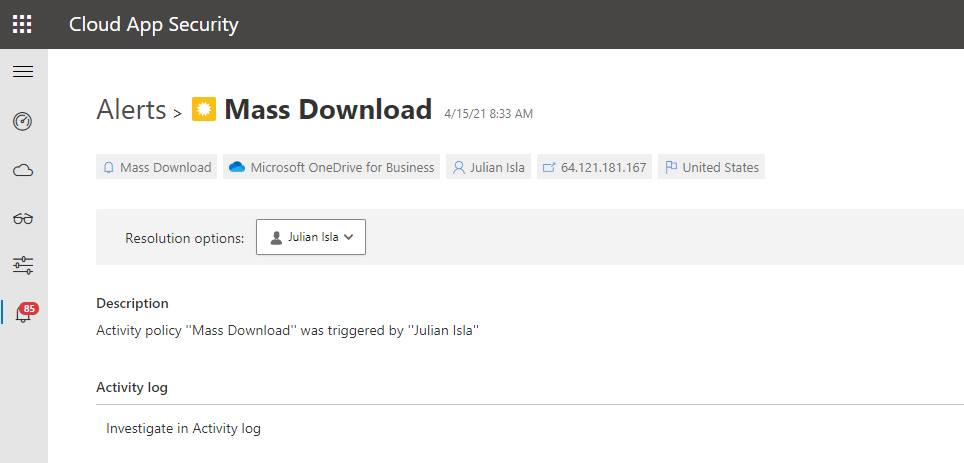

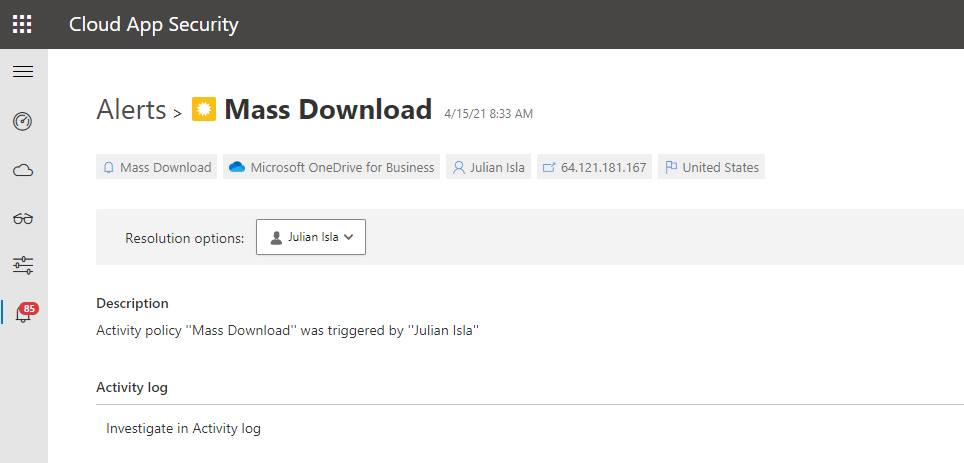

Contoso implemented Microsoft 365 Defender and is monitoring alerts using Microsoft’s security solutions. While reviewing the new alerts, our security analyst noticed a mass download alert that included a user named Julian Isla.

Julian is currently working on a highly confidential initiative called Project Hurricane. Knowing this, the analyst wants to conduct a thorough analysis in this investigation.

Our analyst can immediately see that Cloud App Security provides many key details in the alert, including the user, IP address, application and the location.

The first step for the analyst may be to gather details such as the device, the type of information downloaded, the user’s typical behavior and other possible activities that could mean data was exfiltrated.

Using the details available in the MCAS alert, along with the initial questions and concerns of the investigation, we will show how to answer each question with an advanced hunting query, and how the results of each query shape the next one, allowing the investigator to piece together the full story from the logged activities.

Question 1:

|

Query Used:

|

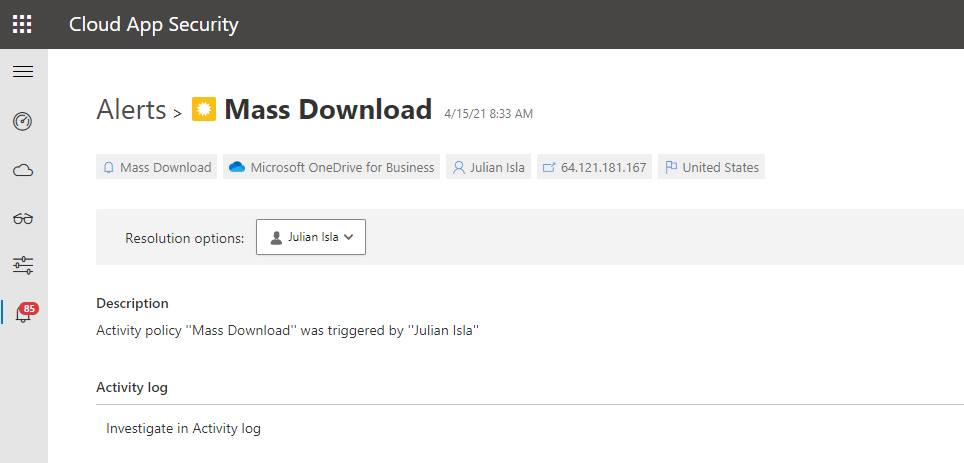

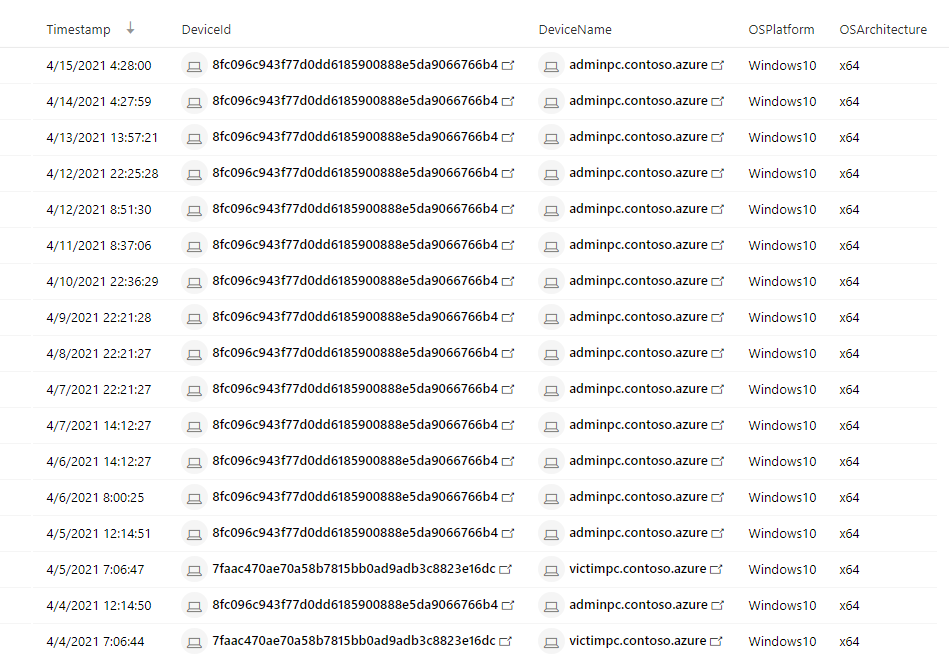

What managed devices has this user logged in to?

|

DeviceInfo

| where LoggedOnUsers has "juliani" and isnotempty(OSPlatform)

| distinct Timestamp, DeviceId, DeviceName, OSPlatform, OSArchitecture

|

NOTE: The analyst was able to extract the user’s Security Account Manager (SAM) account name (juliani, as used in the query). This can be done by using Cloud App Security’s entity page.

NOTE: If the analyst displayed the full LoggedOnUsers column, its value would look like this:

[{"UserName":"JulianI","DomainName":"CONTOSO","Sid":"S-1-5-21-1661583231-2311428937-3957907789-1103"}]

Result:

Using this query that surfaces Microsoft Defender for Endpoint (MDE) data, the analyst found that Julian used two devices today, adminpc.contoso.azure and victimpc.contoso.azure. More importantly, the analyst can see that Julian was on the adminpc device on the same day as the alert for a mass download was triggered.

Question 2:

|

Query Used:

|

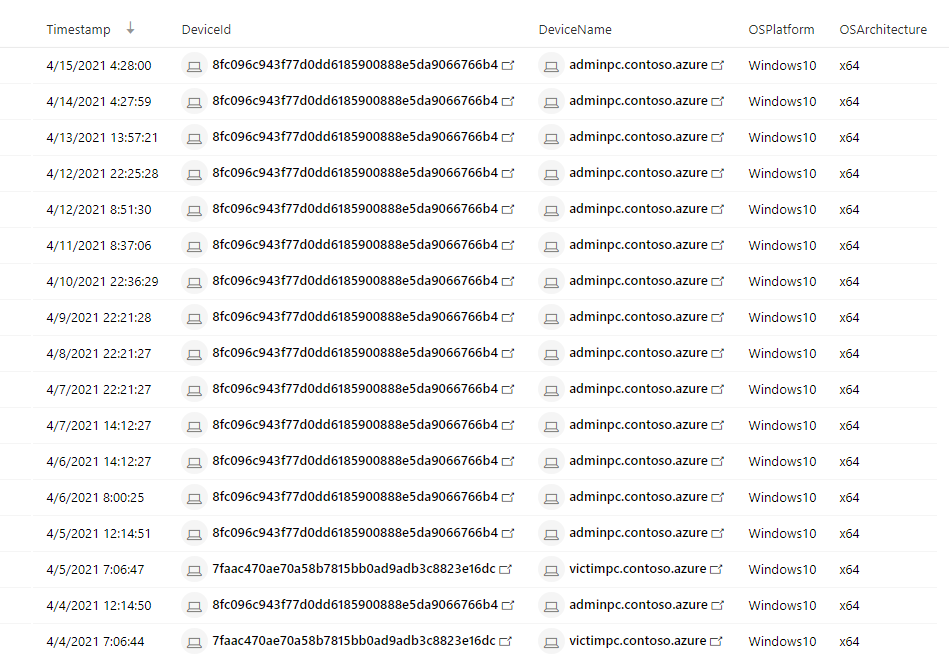

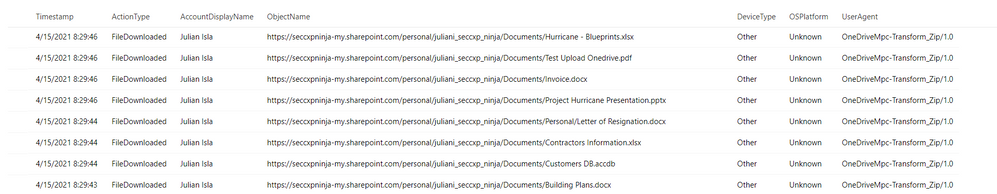

Were the files downloaded to a non-managed device?

|

let AlertTimestamp = datetime(2021-04-15T23:45:00.0000000Z);

CloudAppEvents

| where Timestamp between ((AlertTimestamp - 24h) .. (AlertTimestamp + 24h))

| where AccountDisplayName == "Julian Isla"

| where ActionType == "FileDownloaded"

| project Timestamp, ActionType, AccountDisplayName, ObjectName, DeviceType, OSPlatform, UserAgent

|

Result:

By using the CloudAppEvents table, the analyst can now view the file names, the number of files, and the devices Julian used to complete these downloads. From the file names and device details, the analyst can determine that Julian downloaded important proprietary company data for Project Hurricane, a high-profile initiative for a new application that includes sensitive customer data and source code.

Question 3:

|

Query Used:

|

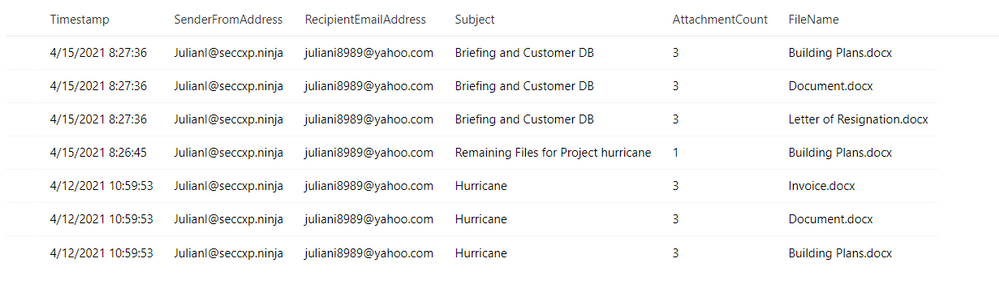

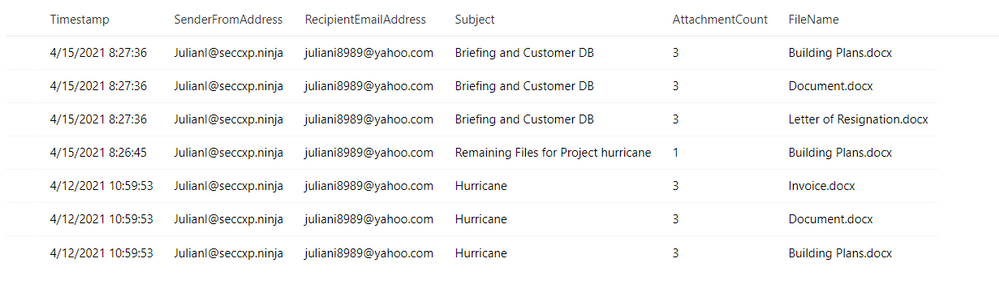

Has this user leveraged personal email in the past?

|

EmailEvents

| where SenderMailFromAddress == "JulianI@seccxp.ninja"

| where RecipientEmailAddress has "@gmail.com" or RecipientEmailAddress has "@yahoo.com" or RecipientEmailAddress has "@hotmail"

| project Timestamp, SenderFromAddress, RecipientEmailAddress, Subject, AttachmentCount, NetworkMessageId

| join EmailAttachmentInfo on NetworkMessageId, RecipientEmailAddress

| project Timestamp, SenderFromAddress, RecipientEmailAddress, Subject, AttachmentCount, FileName

|

Result:

Question 4:

|

Query Used:

|

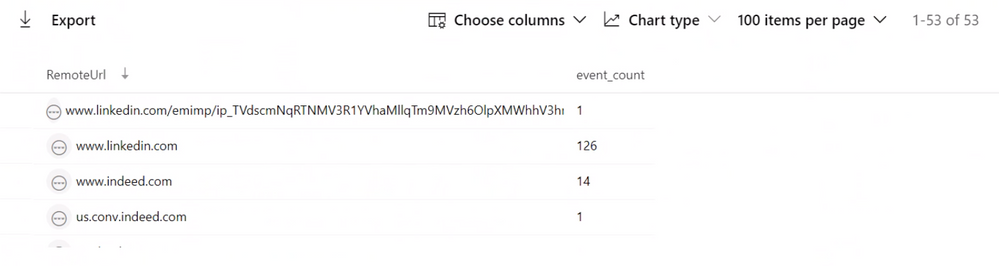

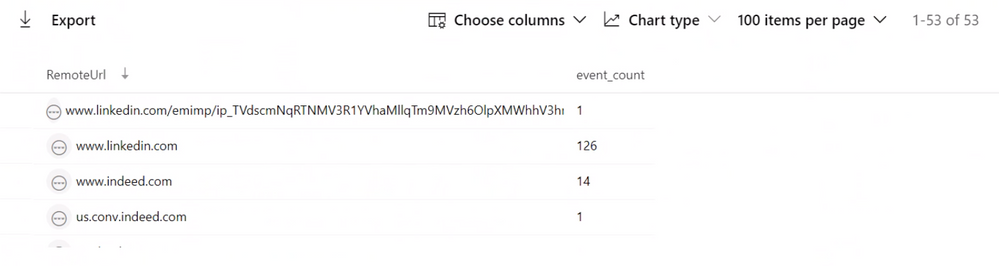

Has this user been actively job searching?

|

DeviceNetworkEvents

| where Timestamp > ago(30d)

| where DeviceName in ("adminpc.contoso.azure”, “victimpc.contoso.azure ")

| where InitiatingProcessAccountName == "juliani"

| where RemoteUrl has "linkedin" or RemoteUrl has "indeed" or RemoteUrl has "glassdoor"

| summarize event_count = count() by RemoteUrl

|

Result:

While querying the DeviceNetworkEvents table to find out whether this user may have a motive for these activities, the analyst can see that the user is actively browsing job sites and may be planning to leave their current role at Contoso.

Question 5:

|

Query Used:

|

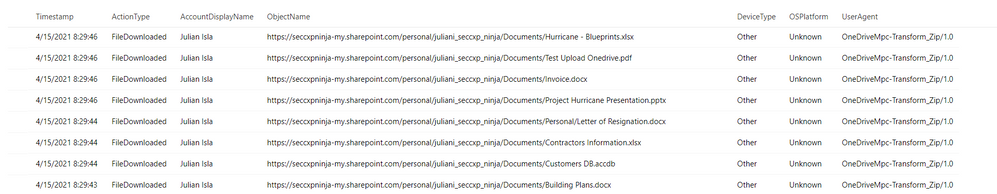

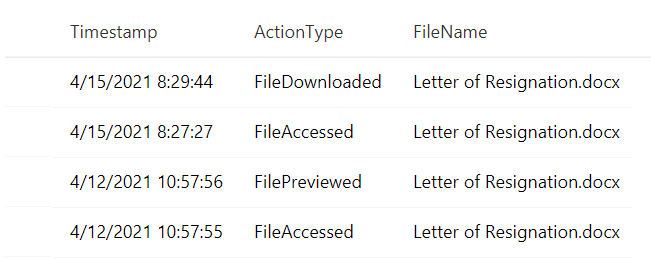

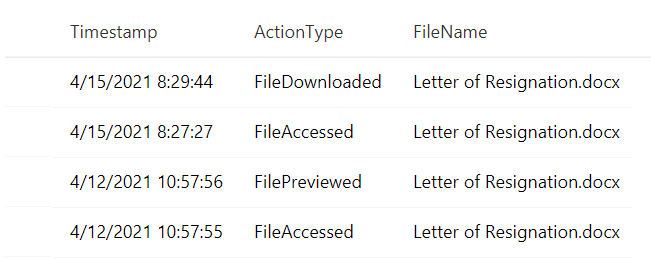

Does this user have a Letter of Resignation or Resume Saved to their local PC?

Does this user have a Letter of Resignation or Resume Saved to their personal OneDrive?

|

DeviceFileEvents

| where Timestamp > ago(30d)

| where InitiatingProcessAccountName == "juliani"

| where DeviceName in ("adminpc.contoso.azure”, “victimpc.contoso.azure ")

| where FileName has "resume" or FileName has "resignation"

| project Timestamp, InitiatingProcessAccountName, ActionType, FileName

CloudAppEvents

| where Timestamp > ago(30d)

| where AccountDisplayName == "Julian Isla"

| where Application == "Microsoft OneDrive for Business"

| extend FileName = tostring(RawEventData.SourceFileName)

| where FileName has "resume" or FileName has "resignation"

| project Timestamp, ActionType, FileName

|

Result:

The analyst is attempting to establish the user’s planned trajectory of actions and sees that they currently have a letter of resignation saved to their desktop and have recently accessed and downloaded it.

Question 6: Have any removable media or external devices been used on the PCs we discovered?

Query Used:

    let DeviceNameToSearch = "adminpc.contoso.azure";
    let TimespanInSeconds = 900; // Period of time between device insertion and file copy
    let Connections =
        DeviceEvents
        | where (isempty(DeviceNameToSearch) or DeviceName =~ DeviceNameToSearch) and ActionType == "PnpDeviceConnected"
        | extend parsed = parse_json(AdditionalFields)
        | project DeviceId, ConnectionTime = Timestamp, DriveClass = tostring(parsed.ClassName), UsbDeviceId = tostring(parsed.DeviceId), ClassId = tostring(parsed.ClassId), DeviceDescription = tostring(parsed.DeviceDescription), VendorIds = tostring(parsed.VendorIds)
        | where DriveClass == 'USB' and DeviceDescription == 'USB Mass Storage Device';
    DeviceFileEvents
    // Exclude the system drive (C:) and paths starting with a backslash (UNC shares)
    | where (isempty(DeviceNameToSearch) or DeviceName =~ DeviceNameToSearch) and FolderPath !startswith "c" and FolderPath !startswith @"\"
    | join kind=inner Connections on DeviceId
    // Keep only file events within the window *after* the device was connected
    | where datetime_diff('second', Timestamp, ConnectionTime) between (0 .. TimespanInSeconds)

Result:

Luckily, the analyst finds no record of a data transfer to a removable media device from the user’s most recently used device, so there is no evidence that files were exfiltrated over USB.
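The time-window correlation in the Question 6 query can also be expressed outside advanced hunting, for example when post-processing exported results. The sketch below is a minimal Python illustration under stated assumptions: the `UsbConnection` and `FileEvent` records and the device IDs are hypothetical stand-ins for `DeviceEvents` and `DeviceFileEvents` rows. It keeps a file event only if it occurred on the same device within 900 seconds after a USB mass storage connection, and skips the C: drive and paths beginning with a backslash, which is what the query's `FolderPath` filters are intended to do.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Tuple


@dataclass
class UsbConnection:          # hypothetical stand-in for a PnpDeviceConnected row
    device_id: str
    connection_time: datetime


@dataclass
class FileEvent:              # hypothetical stand-in for a DeviceFileEvents row
    device_id: str
    timestamp: datetime
    folder_path: str


def files_written_after_usb_insert(
    connections: List[UsbConnection],
    file_events: List[FileEvent],
    window: timedelta = timedelta(seconds=900),
) -> List[Tuple[UsbConnection, FileEvent]]:
    """Pair each file event with USB connections on the same device shortly before it."""
    hits = []
    for fe in file_events:
        path = fe.folder_path.lower()          # KQL startswith is case-insensitive
        if path.startswith("c") or path.startswith("\\"):
            continue                           # skip system drive and UNC-style paths
        for conn in connections:
            delta = fe.timestamp - conn.connection_time
            # Same device, and the file event falls inside [0, window] after insertion
            if conn.device_id == fe.device_id and timedelta(0) <= delta <= window:
                hits.append((conn, fe))
    return hits
```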

Throughout the investigation, the analyst had many avenues to pursue and potential ways to mitigate and prevent further exfiltration of data. For example, using Cloud App Security’s user resolutions, the analyst could have suspended the user. Additionally, using Microsoft Defender for Endpoint integration, the analyst could have isolated the managed device, preventing it from having any non-related network communication.

In conclusion, in this test scenario, the Contoso employee Julian had been violating company policy and exfiltrating proprietary data for Project Hurricane to his personal laptop and email account for some time. The analyst also found that Julian had been actively job searching and had a recently edited letter of resignation saved to his desktop. Using the initial MCAS alert, as well as logs across Microsoft Defender for Endpoint and Microsoft Defender for Office 365, the analysts discovered and prevented further data loss for the company by this user.

This completes our second blog, please stay tuned for other common use cases that can be easily and thoroughly investigated with Microsoft Cloud App Security and Microsoft 365 Defender!

Resources:

For more information about the features discussed in this article, please read:

Feedback

We welcome your feedback or relevant use cases and requirements for this pillar of Cloud App Security. Email CASFeedback@microsoft.com and mention the area or pillar of Cloud App Security you wish to discuss.

Learn more

For further information on how your organization can benefit from Microsoft Cloud App Security, connect with us at the links below:

Follow us on LinkedIn as #CloudAppSecurity. To learn more about Microsoft Security solutions visit our website. Bookmark the Security blog to keep up with our expert coverage on security matters. Also, follow us at @MSFTSecurity on Twitter, and Microsoft Security on LinkedIn for the latest news and updates on cybersecurity.

Happy Hunting!

by Contributed | May 11, 2021 | Technology


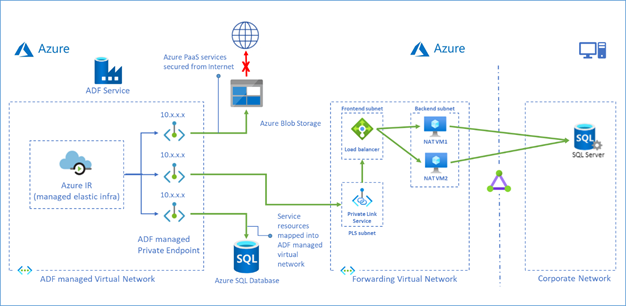

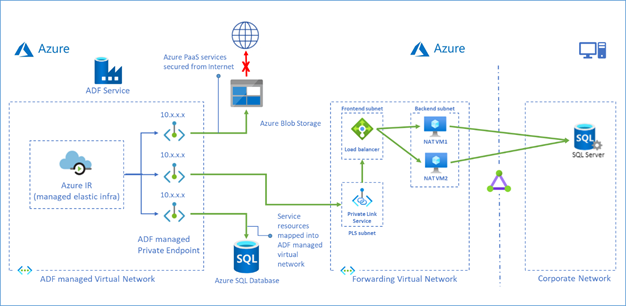

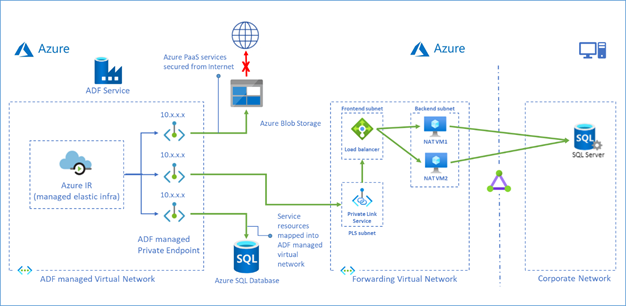

Azure Data Factory managed virtual network is designed to allow you to securely connect Azure Integration Runtime to your stores via Private Endpoint. Your data traffic between Azure Data Factory Managed Virtual Network and data stores goes through Azure Private Link which provides secured connectivity and eliminates your data exposure to the public internet.

Now we have a solution which leverages Private Link Service and Load Balancer to access on premises data stores or data stores in another virtual network from ADF managed virtual network.

To learn more about this solution, visit Tutorial – access on premises SQL Server.

You can also use this approach to access Azure SQL Database Managed Instance, see more in Tutorial – access Azure SQL Database Managed Instance.

by Contributed | May 11, 2021 | Technology


Improved network performance over the Internet is essential for edge devices connecting to the cloud. Last mile performance impacts user-perceived latencies and is an area of focus for our online services like M365, SharePoint, and Bing. Although the next generation transport QUIC is on the horizon, TCP is the dominant transport protocol today. Improvements made to TCP’s performance directly improve response times and download/upload speeds.

The Internet last mile and wide area networks (WAN) are characterized by high latency and a long tail of networks which suffer from packet loss and reordering. Higher latency, packet loss, jitter, and reordering all impact TCP’s performance. Over the past few years, we have invested heavily in improving TCP WAN performance and engaged with the IETF standards community to help advance the state of the art. In this blog we will walk through our journey and show how we made big strides in improving performance between Windows Server 2016 and the upcoming Windows Server 2022.

Introduction

There are two important building blocks of TCP which govern its performance over the Internet: Congestion Control and Loss Recovery. The goal of congestion control is to determine the amount of data that can be safely injected into the network to maintain good performance and minimize congestion. Slow Start is the initial stage of congestion control where TCP ramps up its speed quickly until a congestion signal (packet loss, ECN, etc.) occurs. The steady state Congestion Avoidance stage follows Slow Start where different TCP congestion control algorithms use different approaches to adjust the amount of data in-flight.
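To make the two stages concrete, here is a minimal sketch of the classic Reno-style window rules from RFC 5681. This is an assumption for illustration and not necessarily the algorithm Windows uses: in Slow Start each ACK adds one MSS (roughly doubling the window per round trip), and in Congestion Avoidance each ACK adds about MSS*MSS/cwnd (roughly one MSS per round trip). `simulate_rounds` is a hypothetical helper that assumes one ACK per full-sized segment and no loss.

```python
from typing import List


def on_ack(cwnd: int, ssthresh: int, mss: int) -> int:
    """Return the new congestion window (bytes) after one ACK, Reno-style."""
    if cwnd < ssthresh:
        return cwnd + mss                      # Slow Start: +1 MSS per ACK
    return cwnd + max(1, mss * mss // cwnd)    # Congestion Avoidance: ~+1 MSS per RTT


def simulate_rounds(cwnd: int, ssthresh: int, mss: int, rounds: int) -> List[int]:
    """Window at the start of each round, assuming one ACK per full segment and no loss."""
    history = [cwnd]
    for _ in range(rounds):
        for _ in range(cwnd // mss):   # range() is fixed by the window at the round's start
            cwnd = on_ack(cwnd, ssthresh, mss)
        history.append(cwnd)
    return history


print(simulate_rounds(cwnd=1000, ssthresh=8000, mss=1000, rounds=4))
```

This prints `[1000, 2000, 4000, 8000, 8948]`: the window doubles each round until it reaches `ssthresh`, then grows by less than one MSS in the following round.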

Loss Recovery is the process of detecting and recovering from packet loss during transmission. TCP can infer that a segment is lost by looking at the ACK feedback from the receiver, and retransmit any segments inferred lost. When loss recovery fails, TCP uses the retransmission timeout (RTO, usually 300ms in WAN scenarios) as the last resort to retransmit the lost segments. When the RTO timer fires, TCP returns to Slow Start from the first unacknowledged segment. This long wait period and the subsequent congestion response significantly impact performance, so optimizing Loss Recovery algorithms enhances throughput and reduces latency.

Improving Slow Start: HyStart++

We determined that the traditional Slow Start algorithm overshoots the optimum rate and is likely to hit an RTO during Slow Start due to massive packet loss. We explored the use of an algorithm called HyStart to mitigate this problem. HyStart triggers an exit from Slow Start when the connection latency is observed to increase. However, we found that false positives sometimes cause a premature exit from Slow Start, limiting performance. We developed a variant of HyStart to mitigate premature Slow Start exit in networks with delay jitter: when HyStart is triggered, rather than going to the Congestion Avoidance stage we use LSS (Limited Slow Start), an increase algorithm that is less aggressive than Slow Start but more aggressive than Congestion Avoidance. We have published our ongoing work on the HyStart algorithm as an IETF draft adopted by the TCPM working group: HyStart++: Modified Slow Start for TCP (ietf.org).
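The delay-based exit test at the heart of HyStart++ can be sketched as follows. This is an illustration under stated assumptions, not the Windows code: the constants are the ones in the later published RFC 9406, which may differ from the draft version cited above, and RFC 9406 uses a phase called Conservative Slow Start (CSS) after the exit rather than LSS. The per-ACK growth shown after the exit is Limited Slow Start as defined in RFC 3742, with `max_ssthresh` left as a free parameter.

```python
from typing import Optional

# Constants as published in RFC 9406 (HyStart++), in microseconds; the draft cited
# above and the Windows implementation may use different values.
MIN_RTT_THRESH_US = 4_000
MAX_RTT_THRESH_US = 16_000
MIN_RTT_DIVISOR = 8
N_RTT_SAMPLE = 8


def rtt_thresh_us(last_round_min_rtt_us: int) -> int:
    """RTT increase treated as a congestion signal: min RTT / 8, clamped to [4 ms, 16 ms]."""
    return max(MIN_RTT_THRESH_US,
               min(last_round_min_rtt_us // MIN_RTT_DIVISOR, MAX_RTT_THRESH_US))


def should_exit_slow_start(last_round_min_rtt_us: Optional[int],
                           current_round_min_rtt_us: Optional[int],
                           rtt_samples_this_round: int) -> bool:
    """True when this round's minimum RTT rose noticeably above last round's."""
    if last_round_min_rtt_us is None or current_round_min_rtt_us is None:
        return False
    if rtt_samples_this_round < N_RTT_SAMPLE:
        return False  # too few samples to trust this round's minimum
    return current_round_min_rtt_us >= (
        last_round_min_rtt_us + rtt_thresh_us(last_round_min_rtt_us))


def limited_slow_start_ack(cwnd: int, mss: int, max_ssthresh: int) -> int:
    """Per-ACK window growth under Limited Slow Start (RFC 3742), in bytes."""
    if cwnd <= max_ssthresh:
        return cwnd + mss                 # ordinary Slow Start below max_ssthresh
    k = cwnd // (max_ssthresh // 2)       # K = int(cwnd / (0.5 * max_ssthresh))
    return cwnd + mss // k                # growth shrinks as cwnd gets larger
```

Taking the minimum RTT per round, and requiring several samples, is what makes the test less sensitive to a single delayed ACK, which is the kind of false positive the paragraph above describes.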

Loss recovery performance: Proportional Rate Reduction

HyStart helps prevent the overshoot problem so that we enter loss recovery in Slow Start with fewer packet losses. However, retransmitting in large bursts during loss recovery can still cause additional losses. Proportional Rate Reduction (PRR) is a loss recovery algorithm that accurately adjusts the number of bytes in flight throughout the entire loss recovery period, so that at the end of recovery it is as close as possible to the congestion window. We enabled PRR by default in the Windows 10 May 2019 Update (19H1).
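The core of PRR, as specified in RFC 6937, fits in a few lines. The sketch below is a simplified illustration under stated assumptions: quantities are in bytes, `pipe` (the sender's estimate of bytes in flight) is supplied by the caller rather than computed from SACK state, and it uses the PRR-SSRB reduction bound. While `pipe` is above `ssthresh`, it releases data in proportion to what the receiver reports as delivered, which spreads retransmissions over the recovery period instead of sending them in a burst.

```python
from dataclasses import dataclass


@dataclass
class PrrRecovery:
    """Per-recovery-episode PRR state (after RFC 6937); all quantities in bytes."""
    ssthresh: int           # target window chosen by the congestion control algorithm
    recover_fs: int         # bytes outstanding (snd.nxt - snd.una) when recovery began
    mss: int
    prr_delivered: int = 0  # bytes the receiver reported delivered during recovery
    prr_out: int = 0        # bytes sent during recovery

    def on_ack(self, delivered_data: int, pipe: int) -> int:
        """Return how many bytes may be sent in response to this ACK."""
        self.prr_delivered += delivered_data
        if pipe > self.ssthresh:
            # Proportional part: ceil(prr_delivered * ssthresh / RecoverFS) - prr_out
            target = -(-(self.prr_delivered * self.ssthresh) // self.recover_fs)
            sndcnt = target - self.prr_out
        else:
            # PRR-SSRB: climb back toward ssthresh, at most one extra MSS per ACK
            limit = max(self.prr_delivered - self.prr_out, delivered_data) + self.mss
            sndcnt = min(self.ssthresh - pipe, limit)
        return max(sndcnt, 0)

    def on_send(self, nbytes: int) -> None:
        """Record data (new or retransmitted) sent during recovery."""
        self.prr_out += nbytes
```

For example, with `ssthresh` at half of `recover_fs`, each ACK that reports 1,000 bytes delivered allows about 500 bytes to be sent while `pipe` stays above `ssthresh`.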

Re-implementing TCP RACK: Time-based loss recovery

After implementing PRR and HyStart, we still noticed that we tend to consistently hit an RTO during loss recovery if many packets are lost in one congestion window. After looking at the traces, we figured out that it’s lost retransmits that cause TCP to time out. The RACK implementation shipped in Server 2016 is unable to recover lost retransmits. A fully RFC-compliant RACK implementation (which can recover lost retransmits) requires per-segment state tracking but in Server 2016, per-segment state is not stored.

In Server 2016, we built a simple circular-array based data structure to track the send time of blocks of data in one congestion window. The RACK implementation we had with this data structure has many limitations, including being unable to recover lost retransmits. During the development of Windows 10 May 2020 Update, we built per-segment state tracking for TCP and in Server 2022, we shipped a new RACK implementation which can recover lost retransmits.
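Why per-segment state matters can be seen in a simplified, RACK-style detector in the spirit of RFC 8985. This sketch is an assumption for illustration, not the Windows implementation: times are integers in milliseconds, the reordering window is fixed, and the RFC's filtering of ambiguous RTT samples from retransmitted segments is omitted. Because each retransmission overwrites that segment's own send time, a retransmission that is itself lost is detected once a segment sent after it has been delivered and more than the RTT plus the reordering window has elapsed.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Segment:
    xmit_ts: int              # time of the most recent (re)transmission, in ms
    delivered: bool = False
    lost: bool = False


class RackLossDetector:
    """Simplified time-based loss detection with per-segment state (illustrative)."""

    def __init__(self, reo_wnd_ms: int):
        self.reo_wnd = reo_wnd_ms
        self.rack_xmit_ts: Optional[int] = None  # send time of latest-sent delivered segment
        self.rack_rtt = 0
        self.segments: Dict[int, Segment] = {}   # per-segment state keyed by sequence number

    def on_send(self, seq: int, now: int) -> None:
        # A retransmission replaces the old state, so its new send time is tracked.
        self.segments[seq] = Segment(xmit_ts=now)

    def on_delivered(self, seq: int, now: int) -> None:
        seg = self.segments[seq]
        seg.delivered = True
        if self.rack_xmit_ts is None or seg.xmit_ts > self.rack_xmit_ts:
            self.rack_xmit_ts = seg.xmit_ts
            self.rack_rtt = now - seg.xmit_ts

    def detect_losses(self, now: int) -> List[int]:
        """Mark and return segments sent before a delivered one that have had time to arrive."""
        lost: List[int] = []
        if self.rack_xmit_ts is None:
            return lost
        for seq, seg in sorted(self.segments.items()):
            if seg.delivered or seg.lost:
                continue
            if seg.xmit_ts < self.rack_xmit_ts and now >= seg.xmit_ts + self.rack_rtt + self.reo_wnd:
                seg.lost = True
                lost.append(seq)
        return lost
```

For instance, if segment 1 (sent at 0 ms) is lost but segments sent at 1 and 2 ms are delivered about 100 ms later, segment 1 is reported lost from 105 ms on; if its retransmission at 106 ms is also lost and a segment sent at 107 ms is delivered 100 ms later, the retransmission is reported lost from 211 ms on instead of waiting for an RTO.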

(Note that Tail Loss Probe (TLP), which is part of the RACK/TLP RFC and helps recover faster from tail losses, has also been implemented and enabled by default since Windows Server 2016.)

Improving resilience to network reordering

Last year, Dropbox and Samsung reported to us that Windows TCP had poor upload performance in their networks due to network reordering. We raised the priority of reordering resilience in the Windows version currently under development and completed our RACK implementation, which is now fully compliant with the RFC. Dropbox and Samsung confirmed that they no longer observed upload performance problems with this new implementation. You can find how we collaborated with the Dropbox engineers here. In our automated WAN performance tests, we also found that throughput in the reordering test cases improved more than 10x.

Benchmarks

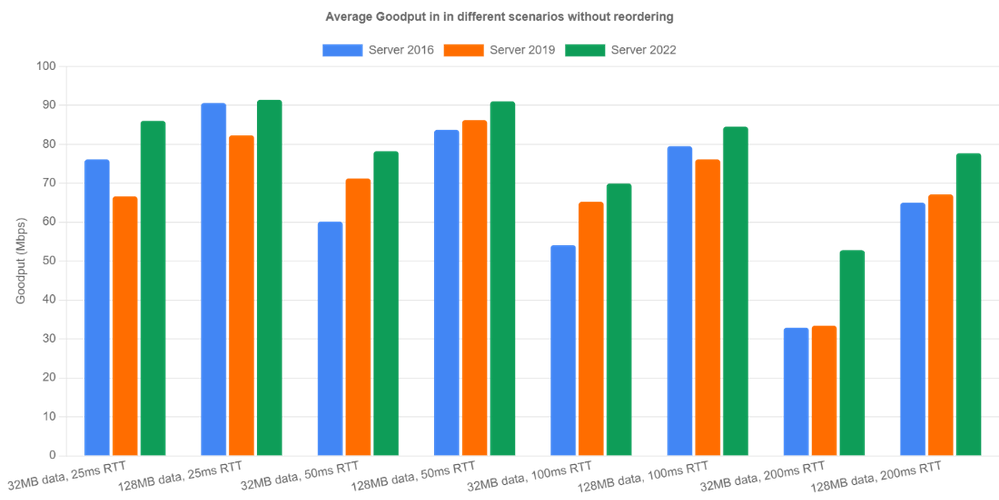

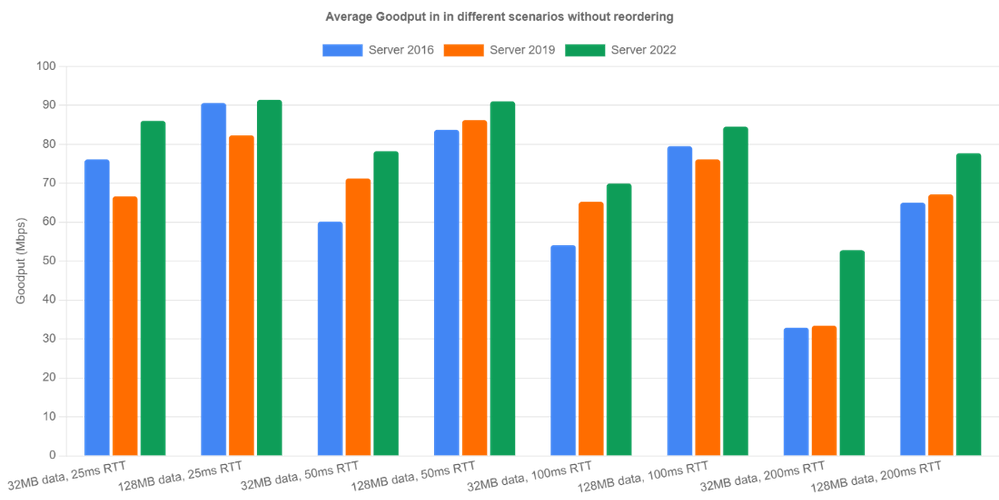

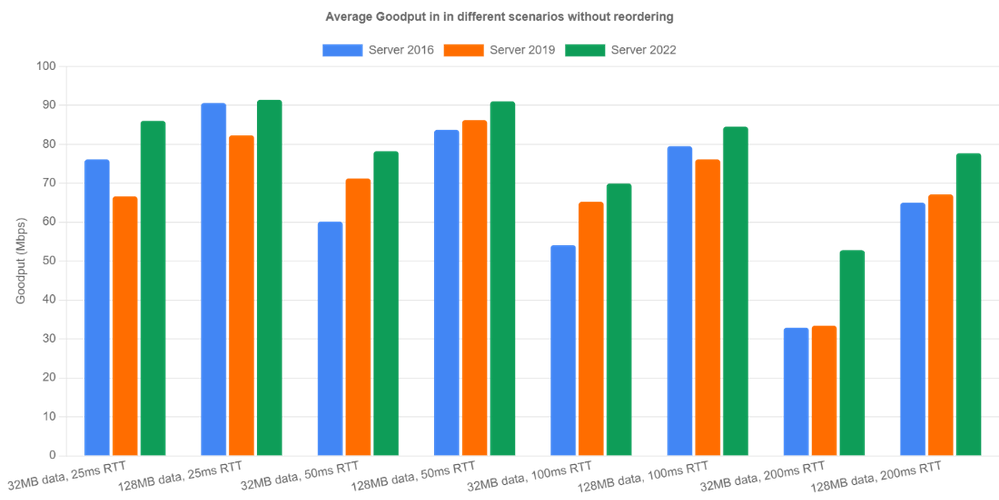

To measure the performance improvements, we set up a WAN environment by creating two NICs on a machine and connecting the two NICs with an emulated link where bandwidth, round trip time, random loss, reordering and jitter can be emulated. We did performance benchmarks on this testbed for Server 2016, Server 2019 and Server 2022 using an A/B testing framework we previously built where you can easily automate testing and data analysis. We used the current Windows build 21359 for Server 2022 in the benchmarks since we plan to backport all TCP perf improvement changes to Server 2022 soon.

Let’s look at non-reordering scenarios first. We emulated 100Mbps bandwidth and tested the three OS versions under four different round trip times (25ms, 50ms, 100ms, 200ms) and two different flow sizes (32MB, 128MB). The bottleneck buffer size was set to 1 BDP. The results are averaged over 10 iterations.
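For reference, the 1 BDP bottleneck buffer works out as follows. This is plain arithmetic, assuming 100Mbps means 100,000,000 bits per second; `bdp_bytes` is a hypothetical helper.

```python
def bdp_bytes(bandwidth_bps: int, rtt_ms: int) -> int:
    """Bandwidth-delay product: bytes in flight needed to keep the path full."""
    return bandwidth_bps * rtt_ms // (8 * 1000)   # bits/s * ms -> bytes


for rtt in (25, 50, 100, 200):
    print(f"{rtt:>3} ms RTT -> 1 BDP = {bdp_bytes(100_000_000, rtt):,} bytes")
```

This gives 312,500 bytes at 25ms RTT and 2,500,000 bytes at 200ms RTT, so the bottleneck buffer in the tests grows in proportion to the round trip time.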

Server 2022 is the clear winner in all categories because RACK significantly reduces RTOs occurring during loss recovery. Goodput is improved by up to 60% (200ms case). Server 2019 did well in relatively high latency cases (>= 50ms). However, for 25ms RTT, Server 2016 outperformed Server 2019. After digging into the traces, we noticed that the Server 2016 receive window tuning algorithm is more conservative than the one in Server 2019 and it happened to throttle the sender, indirectly preventing the overshoot problem.

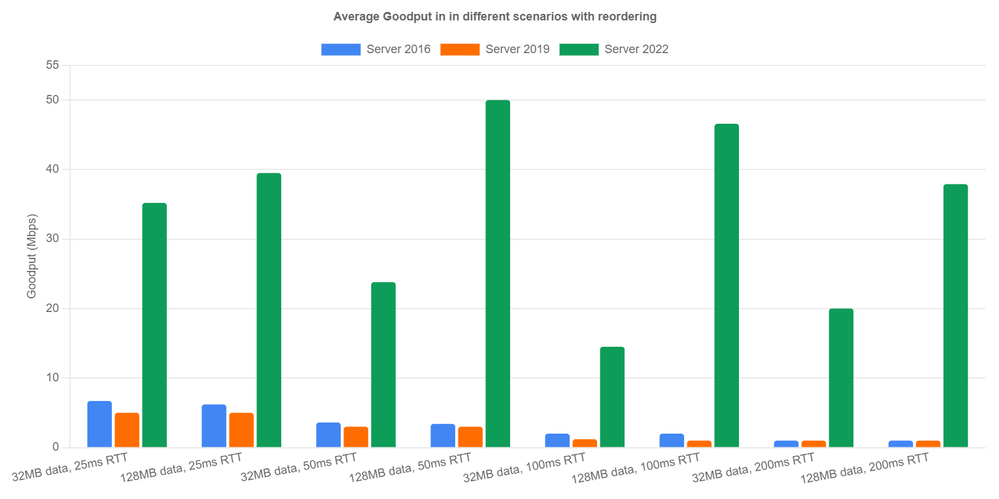

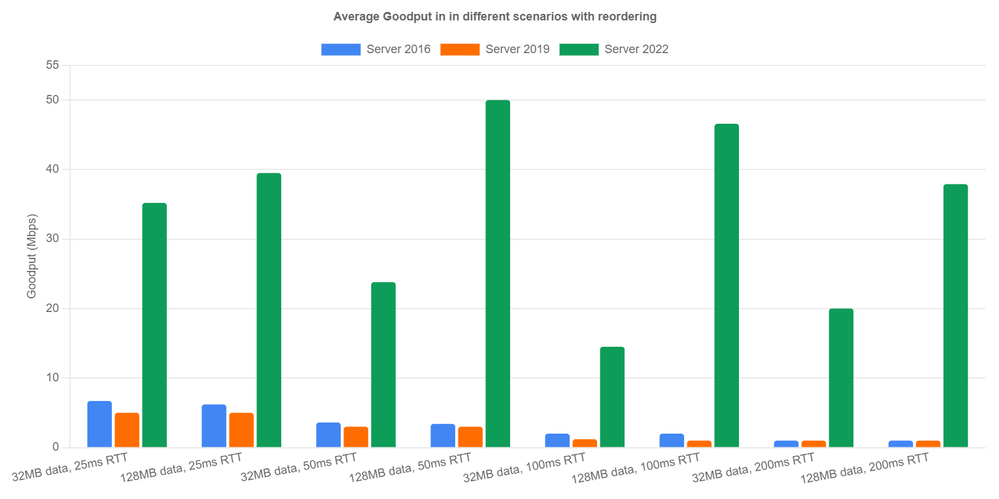

Now let’s look at reordering scenarios. Here’s how we emulate network reordering: we set a probability of reordering per packet. Once a packet is chosen to be reordered, it’s delayed by a specified amount of time instead of the configured RTT. We tested 1% reordering rate and 5ms reordering delay. Server 2016 and Server 2019 achieved extremely low goodput due to lack of reordering resilience. In Server 2022, the new RACK implementation avoided most unnecessary loss recoveries and achieved reasonable performance. We can see goodput is up over 40x in the 128MB with 200ms RTT case. In the other cases, we are seeing at least 5x goodput improvement.
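The reordering emulation described above can be sketched as follows. This is a minimal model under stated assumptions, not the emulator used for these tests: each packet gets the base delay unless it is picked for reordering, in which case its delay is replaced by the reordering delay, as the text describes. `sample_delays` and `arrival_order` are hypothetical helpers. With a 5ms reordering delay and a much larger base delay, a picked packet overtakes the packets sent before it, so the receiver sees a gap that a sender without reordering resilience may treat as loss.

```python
import random
from typing import List, Sequence


def sample_delays(n: int, base_delay_ms: int, reorder_delay_ms: int,
                  reorder_prob: float, rng: random.Random) -> List[int]:
    """Per-packet delay: reorder_delay_ms with probability reorder_prob, else base_delay_ms."""
    return [reorder_delay_ms if rng.random() < reorder_prob else base_delay_ms
            for _ in range(n)]


def arrival_order(send_times_ms: Sequence[int], delays_ms: Sequence[int]) -> List[int]:
    """Packet indices in the order they reach the receiver (ties keep send order)."""
    arrivals = sorted((t + d, i) for i, (t, d) in enumerate(zip(send_times_ms, delays_ms)))
    return [i for _, i in arrivals]


rng = random.Random(1)
send_times = list(range(1000))   # one packet per ms
delays = sample_delays(len(send_times), base_delay_ms=100, reorder_delay_ms=5,
                       reorder_prob=0.01, rng=rng)
order = arrival_order(send_times, delays)
out_of_sequence = sum(1 for a, b in zip(order, order[1:]) if b < a)
print(f"{out_of_sequence} adjacent arrivals out of sequence")
```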

Next Steps

We have come a long way in improving Windows TCP performance on the Internet. However, there are still several issues that we will need to solve in future releases.

- We are unable to measure specific performance improvements from PRR in the A/B tests. This needs more investigation.

- We have found issues with HyStart++ in networks with jitter, so we are working on making the algorithm more resilient to jitter.

- The reassembly queue limit (the max number of discontiguous data blocks allowed in receive queue), turns out to be another factor that affects our WAN performance. After this limit is reached, the receiver discards any subsequent out of order data segments until in-order data fills the gaps. When these segments are discarded, the receiver can only send back SACKs not carrying new information and make the sender stall.
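The effect of the reassembly queue limit can be illustrated with a small receiver-side model. This is a hypothetical sketch, not the Windows data structure: it keeps out-of-order data as sorted, non-overlapping `[start, end)` byte ranges, always accepts in-order data, and discards any out-of-order segment that would create a new discontiguous block once `max_blocks` is reached.

```python
from typing import List, Tuple


class ReassemblyQueue:
    """Receiver-side out-of-order queue with a cap on discontiguous blocks (illustrative)."""

    def __init__(self, max_blocks: int):
        self.max_blocks = max_blocks
        self.rcv_nxt = 0                          # next in-order byte expected
        self.blocks: List[Tuple[int, int]] = []   # sorted, disjoint [start, end) ranges

    def receive(self, start: int, end: int) -> bool:
        """Return True if the segment is kept, False if it is discarded."""
        if end <= self.rcv_nxt:
            return True                           # duplicate of data already delivered
        start = max(start, self.rcv_nxt)
        if start == self.rcv_nxt:
            # In-order data is always accepted and may close gaps in the queue.
            self.rcv_nxt = end
            while self.blocks and self.blocks[0][0] <= self.rcv_nxt:
                self.rcv_nxt = max(self.rcv_nxt, self.blocks.pop(0)[1])
            return True
        # Out-of-order data: merge with any overlapping or touching blocks.
        new_start, new_end = start, end
        kept = []
        for s, e in self.blocks:
            if e < new_start or s > new_end:
                kept.append((s, e))
            else:
                new_start, new_end = min(s, new_start), max(e, new_end)
        if len(kept) + 1 > self.max_blocks:
            return False                          # would exceed the limit: discard
        kept.append((new_start, new_end))
        kept.sort()
        self.blocks = kept
        return True
```

With `max_blocks=2`, once `[200, 300)` and `[400, 500)` are queued, a segment covering `[600, 700)` is discarded, while one covering `[300, 400)` is kept because it merges the existing blocks instead of adding a new one.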

— Windows TCP Dev Team (Matt Olson, Praveen Balasubramanian, Yi Huang)

by Contributed | May 11, 2021 | Technology


Today we’re announcing the general availability (GA) of Azure Service Fabric managed clusters. Azure Service Fabric managed clusters remove the complexity associated with owning and operating Service Fabric clusters by simplifying deployment and management operations.

Azure customers use Service Fabric to manage both stateless and stateful microservices at scale. While our customers love the reliability, scalability, and richness of Service Fabric’s features, they have asked us to simplify the cluster deployment experience. Our customers have also requested simpler Service Fabric certificate management and scaling operations to facilitate deployments, testing, and scaling of their Service Fabric environment.

Service Fabric managed clusters provide a simplified ARM resource model for easier deployments. This resource model eliminates the need to define individual resources such as Virtual Machines (VMs), storage, or Virtual Networks that make up the cluster. Service Fabric managed clusters also eliminate the need for cluster certificate management by providing certificates that are fully managed by Azure. This ensures that customers don’t run into incidents caused by expired cluster certificates. With Service Fabric managed clusters, operations that previously required multiple steps, such as removing a node type, can now be completed in a single step. In addition, Service Fabric managed clusters provide full support for Service Fabric’s features. All these improvements together allow our customers to focus on their applications instead of infrastructure management.

“We recently started using Service Fabric and given our scale, we were concerned about the operational overhead of setting up our environments. We onboarded to Service Fabric managed clusters and were pleasantly surprised by the ease of deployment. It was a lot easier to read the simplified ARM template, add extensions, and deploy the cluster.” – Azure Usage Billing

“We operate multiple Service Fabric clusters. Rotating cluster certificates was a time-consuming yearly activity that took time away from application development. We were really excited to learn about the fully managed certificates enabled via Service Fabric managed clusters. Our operations team is elated that we no longer worry about an expired certificate bringing our environment down!” – Azure WAN

“Removing node types from a Service Fabric cluster required lots of steps, and time, which made it difficult to plan for our scaling needs. Service Fabric managed clusters make it really easy to add and remove node types with a single operation enabling us to scale on demand!” – Azure SQL Telemetry

Get started with Azure Service Fabric managed clusters

You can use Quickstart templates to get started with Service Fabric managed clusters or see the Azure Service Fabric managed clusters documentation to learn more. Additionally, you can learn more about all the new features in the release notes and submit feedback on the Service Fabric GitHub repository.

by Contributed | May 11, 2021 | Technology


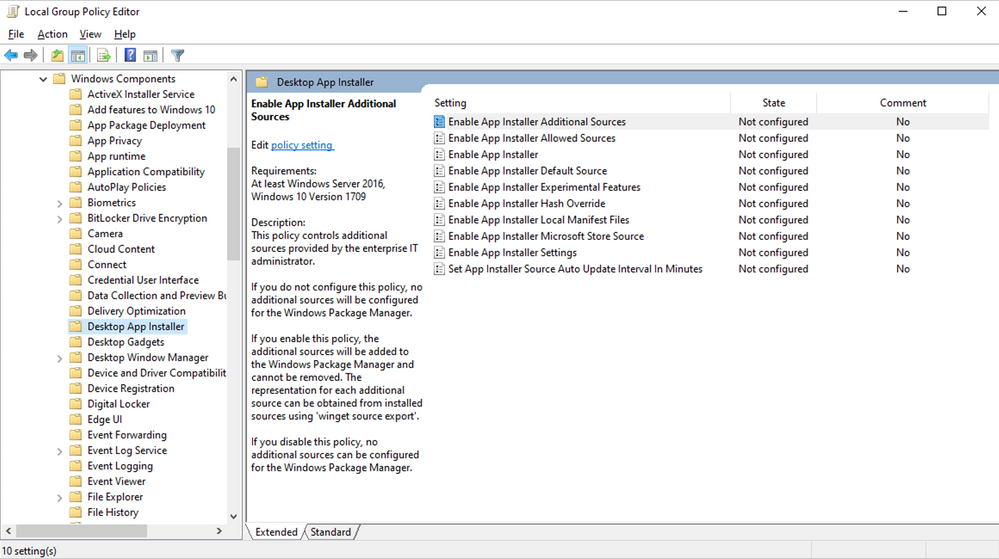

As we prepare to ship version 1.0 of Windows Package Manager, we wanted to provide guidance on how to manage Windows Package Manager using Group Policy.

We first announced the existence of Windows Package Manager at Microsoft Build in 2020. Designed to save you time and frustration, Windows Package Manager is a set of software tools that help automate the process of getting packages (applications) on Windows devices. Users can specify which apps they want installed and the Windows Package Manager does the work of finding the latest version (or the exact version specified) of that application and installing it on the user’s Windows 10 device.

Announcing Group Policy for Windows Package Manager

When we released the Windows Package Manager v0.3.1102 preview, we provided an initial set of “Desktop App Installer Policies” Group Policy Administrative Template files (ADMX/ADML), making it easy for you to review and configure Group Policy Objects targeting your domain-joined devices. To download these ADMX files today, visit the Microsoft Download Center.

Not only do these new policies empower you to enable Windows Package Manager, they also enable you to control certain commands and arguments, and to configure the sources to which your devices connect.

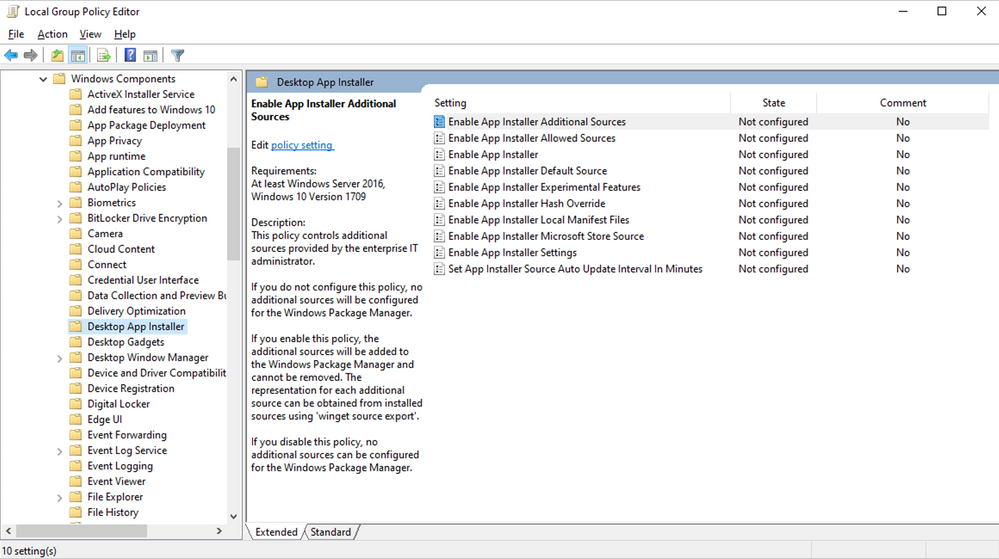

The new Desktop App Installer policies are accessible via the Local Group Policy Editor in Windows 10 as shown here:

Group Policy settings

Any policies that have been enabled or configured will be shown when a user executes winget --info. The goal is to help users troubleshoot unexpected behaviors they may encounter in Windows Package Manager because of policies that are enabled or configured. For example, a user may attempt to modify a setting controlled by policy and not understand why the device does not appear to honor their setting.

Before we proceed further, let’s clarify two basic terms used with respect to Windows Package Manager:

- A package represents an app, application, or program.

- A manifest is a file (or set of data) containing metadata that describes a package, along with the location of the installer and the installer’s SHA256 hash. The Windows Package Manager obtains manifests from sources such as the default source for the community repository. Additional sources may be a REST API-based service provided by an enterprise or other party. It is also possible to use a manifest from a path available locally on the machine.

Enable App Installer

This policy controls whether Windows Package Manager can be used by users. When it is disabled, users will still be able to execute the winget command: the default help will be displayed, and users can still run winget -? to display the help. Any other command will result in the user being informed that the operation is disabled by Group Policy.

If you enable or do not configure this setting, users will be able to use the Windows Package Manager.

If you disable this setting, users will not be able to use the Windows Package Manager.

Enable App Installer settings

This policy controls whether users can change their settings. The settings are stored in a .json file on the user’s system. Users may be able to gain access to the file using elevated credentials; however, editing the file will not override any policy settings configured through Group Policy.

If you enable or do not configure this setting, users will be able to change settings for Windows Package Manager.

If you disable this setting, users will not be able to change settings for Windows Package Manager.

Enable App Installer Hash Override

This policy controls whether Windows Package Manager can be configured to enable the ability to override SHA256 security validation in settings. After an installer has been downloaded, Windows Package Manager compares its hash with the hash provided in the manifest.

If you enable or do not configure this setting, users will be able to enable the ability to override SHA256 security validation in Windows Package Manager settings.

If you disable this setting, users will not be able to enable the ability to override SHA256 security validation in Windows Package Manager settings.
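The hash check that this policy governs can be sketched in a few lines of Python. This is an illustration of SHA256 validation in general, not Windows Package Manager's own code: `installer_matches_manifest` and `sha256_of_file` are hypothetical helpers, and the manifest hash is assumed to be a hexadecimal string that may use upper-case letters.

```python
import hashlib
import hmac


def sha256_of_file(path: str) -> str:
    """Hex SHA256 of a downloaded installer, read in chunks to bound memory use."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def installer_matches_manifest(installer: bytes, manifest_sha256: str) -> bool:
    """Compare the installer's SHA256 with the hash listed in the manifest."""
    actual = hashlib.sha256(installer).hexdigest()       # lower-case hex
    expected = manifest_sha256.strip().lower()           # normalize manifest casing
    return hmac.compare_digest(actual, expected)
```

A mismatch means the downloaded bytes are not the ones the manifest describes; the override this policy controls would let a user proceed anyway, which is why an organization may want to disable it.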

Enable App Installer Experimental Features

This policy controls whether users can enable experimental features in Windows Package Manager. Experimental features are used during Windows Package Manager development cycle to provide previews for new behaviors. Some of these experimental features may be implemented prior to the Group Policy settings designed to control their behavior.

If you enable or do not configure this setting, users will be able to enable experimental features for Windows Package Manager.

If you disable this setting, users will not be able to enable experimental features for Windows Package Manager.

Enable App Installer Local Manifest Files

This policy controls whether users can install packages with local manifest files. If a user has a manifest available via their local file system rather than a Windows Package Manager source, they may install packages using winget install -m <path to manifest>.

If you enable or do not configure this setting, users will be able to install packages with local manifests using Windows Package Manager.

If you disable this setting, users will not be able to install packages with local manifests using Windows Package Manager.

Set App Installer Source Auto Update Interval in Minutes

This policy controls the auto-update interval for package-based sources. The default source for Windows Package Manager is configured so that an index of the packages is cached on the local machine. The index is downloaded when a user invokes a command and the interval has passed (the index is not updated in the background). This setting has no impact on REST-based sources.

If you disable or do not configure this setting, the default interval or the value specified in settings will be used by Windows Package Manager.

If you enable this setting, the number of minutes specified will be used by Windows Package Manager.
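The refresh rule described for this policy can be sketched as follows. Names are hypothetical, and the precedence (policy value, then the value in settings, then the built-in default) is read from the description above; the default interval is passed in rather than assumed. The check runs only when a command is invoked, matching the note that the index is not updated in the background.

```python
from datetime import datetime, timedelta
from typing import Optional


def effective_interval_minutes(policy_minutes: Optional[int],
                               settings_minutes: Optional[int],
                               default_minutes: int) -> int:
    """Policy (if enabled) wins, then the user's settings, then the built-in default."""
    if policy_minutes is not None:
        return policy_minutes
    if settings_minutes is not None:
        return settings_minutes
    return default_minutes


def should_refresh_index(last_refresh: datetime, now: datetime, interval_minutes: int) -> bool:
    """Evaluated when a command runs: refresh only if the interval has elapsed."""
    return now - last_refresh >= timedelta(minutes=interval_minutes)
```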

Enable App Installer Default Source

This policy controls the default source included with Windows Package Manager. The default source for Windows Package Manager is an open-source repository of packages located at https://github.com/microsoft/winget-pkgs.

If you enable or do not configure this setting, the default source for Windows Package Manager will be available and can be removed.

If you disable this setting, the default source for Windows Package Manager will not be available.

Enable App Installer Microsoft Store Source

This policy controls the Microsoft Store as a source included with Windows Package Manager.

If you enable or do not configure this setting, the Microsoft Store source for Windows Package Manager will be available and can be removed.

If you disable this setting, the Microsoft Store source for Windows Package Manager will not be available.

Enable App Installer Additional Sources

This policy controls additional sources configured for Windows Package Manager.

If you do not configure this setting, no additional sources will be configured for Windows Package Manager.

If you enable this setting, additional sources will be added to Windows Package Manager and cannot be removed. The representation for each additional source can be obtained from installed sources using winget source export.

If you disable this setting, no additional sources can be configured by the user for Windows Package Manager.

Enable Windows Package Manager Allowed Sources

This policy controls additional sources approved for users to configure using Windows Package Manager.

If you do not configure this setting, users will be able to add or remove additional sources other than those configured by policy.

If you enable this setting, only the sources specified can be added or removed from Windows Package Manager. The representation for each allowed source can be obtained from installed sources using winget source export.

If you disable this setting, no additional sources can be configured by the user for Windows Package Manager.
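The three states of the allowed-sources policy can be summarized as a small decision function. This is a hypothetical sketch: `PolicyState` and `user_may_add_source` are illustrative names, and sources are compared by name, which is an assumption about how the allowed list is matched.

```python
from enum import Enum
from typing import FrozenSet


class PolicyState(Enum):
    NOT_CONFIGURED = "not configured"
    ENABLED = "enabled"
    DISABLED = "disabled"


def user_may_add_source(name: str, state: PolicyState,
                        allowed: FrozenSet[str] = frozenset()) -> bool:
    """Whether a user may add or remove the named source under the allowed-sources policy."""
    if state is PolicyState.NOT_CONFIGURED:
        return True                 # this policy imposes no restriction
    if state is PolicyState.ENABLED:
        return name in allowed      # only sources on the allowed list
    return False                    # disabled: no additional sources can be configured
```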

When will Windows Package Manager be available?

Version 1.0 of Windows Package Manager will soon ship as an automatic update via the Microsoft Store for all devices running Windows 10, version 1809 and later and we look forward to hearing your feedback. For more information on Windows Package Manager, please see the following resources:

Continue the conversation. Find best practices. Visit the Windows Tech Community.

Stay informed. For the latest updates on new releases, tools, and resources, stay tuned to this blog and follow us @MSWindowsITPro on Twitter.
