Introducing Azure Sentinel Solutions!


Today, we are announcing Azure Sentinel Solutions in public preview, featuring a vibrant gallery of 32 solutions for Microsoft and other products. Azure Sentinel solutions provide easier in-product discovery and single-step deployment of end-to-end product, domain, and industry vertical scenarios in Azure Sentinel. Solutions also enable Microsoft partners to deliver combined value for their integrations and productize their investments in Azure Sentinel. This experience is powered by Azure Marketplace for solution discovery and deployment, and by Microsoft Partner Center for solution authoring and publishing.

Azure Sentinel solutions currently include integrations as packaged content, combining one or more Azure Sentinel data connectors, workbooks, analytics, hunting queries, playbooks, and parsers (Kusto functions) to deliver end-to-end product, domain, or industry vertical value for your SOC requirements. All these solutions are available at no additional cost (regular data ingestion or Azure Logic Apps costs may apply depending on how you use the content in Azure Sentinel).

A few use cases for Azure Sentinel solutions are outlined below.

- On-demand out-of-the-box content: Solutions give you rich Azure Sentinel content out-of-the-box for complete scenarios, with centralized discovery in the Solutions gallery and single-step deployment. Feel free to customize this content to your needs after deployment!

- Unlock complete product value: Discover and deploy a solution not only to onboard the data for a certain product, but also to monitor that data via workbooks, generate custom alerts via the analytics in the solution package, use the queries to hunt for threats in that data source, and run the necessary automations as applicable for that product.

- Unlock domain value: Discover and deploy solutions for specific Threat Intelligence automation scenarios or zero-day vulnerability hunting, analytics, and response scenarios.

- Unlock industry vertical value: Get solutions for ERP scenarios or for healthcare or finance compliance needs in a single step.

- And more to unlock complete SIEM and SOAR capabilities in Azure Sentinel.

Get Started

Select from the rich set of 30+ solutions to start working with the specific content set in Azure Sentinel immediately. The steps to discover and deploy solutions are outlined below. Refer to the Azure Sentinel solutions documentation for further details.

Discover Solutions

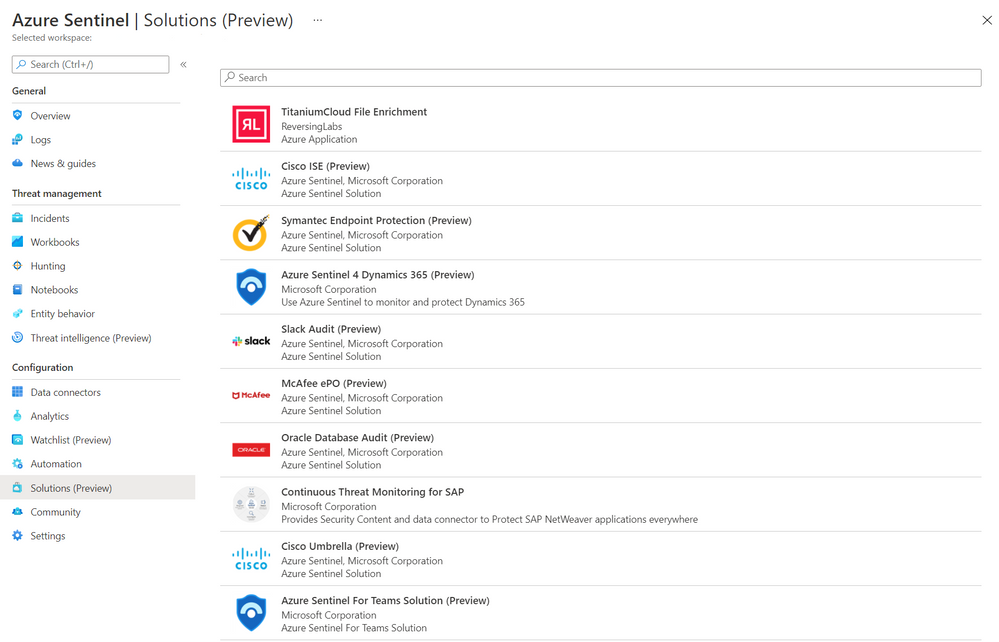

- Select Solutions (Preview) from the Azure Sentinel navigation menu.

- This displays a searchable list of solutions for you to select from.

Azure Sentinel Solutions Blade


- Click Load more at the bottom of the page to see more solutions.

- Select the solution of your choice to display its details view.

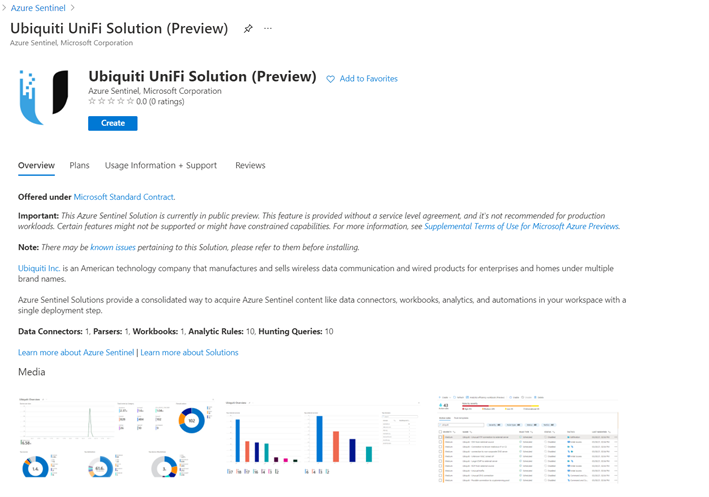

- You can now view the Overview tab that includes important details of the solution and the content included in the solution package as illustrated in the diagram below.

Solution details

- The Plans tab covers information about the license terms. All the solutions included in the Solutions gallery are available at no additional cost to install.

- The Usage Information + Support tab includes information about the publisher details for each solution and also a direct link to the support contact for the respective solution.

Deploy Solutions

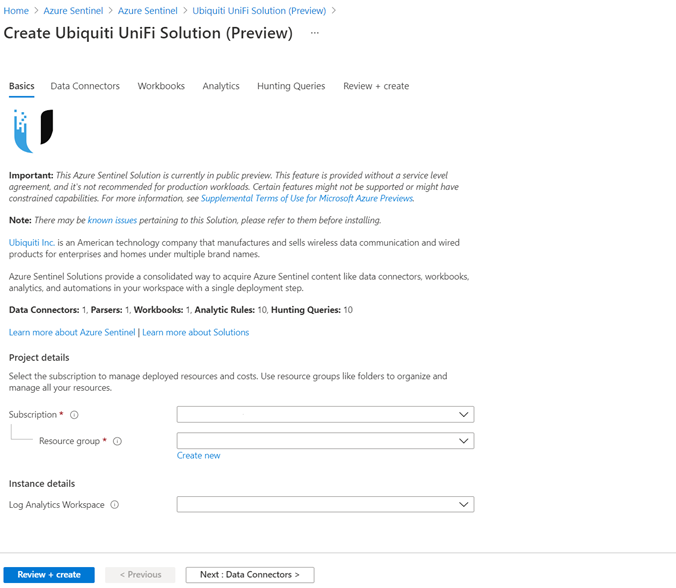

- Select the Create button on the solution details page to deploy the solution.

- Enter the required information in each tab of the solution deployment flow, moving to the next tab as you go, as illustrated in the following diagram.

Solution deploy


Finally, select Review and create to trigger the validation process. After validation succeeds, select Create to run the solution deployment.

Visit the respective feature galleries to customize (as needed), configure, and enable the relevant content included in the solution package. For example, if the solution deploys a data connector, you’ll find the new data connector in the Data connectors blade of Azure Sentinel, where you can follow the steps to configure and activate it.

Partner Scenario: Deliver Solutions

Microsoft partners like ISVs, Managed Service Providers, System Integrators, etc. can follow the 3-step process outlined below to author and publish a solution to deliver product, domain, or vertical value for their products and offerings in Azure Sentinel. Refer to the guidance on Azure Sentinel GitHub for further details on each step.

Step 1. Create Azure Sentinel content for your product / domain / industry vertical scenarios and validate the content.

Step 2. Package content created in the step above. Use the new packaging tool that creates the package and also runs validations on it.

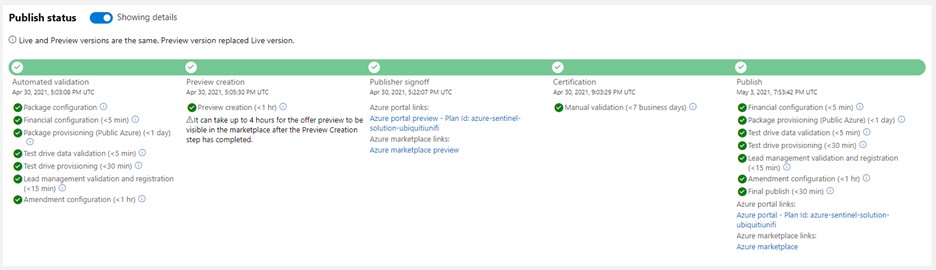

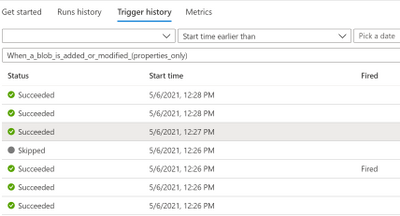
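To make the idea of validating a package more concrete, here is a minimal Python sketch. It is not the actual packaging tool and does not reflect its input format or checks: the `SolutionManifest` class, the `validate` function, and the key names are all hypothetical, chosen only to mirror the content types listed earlier in this article.

```python
from dataclasses import dataclass, field

# Content types named in this article. The key spellings are hypothetical
# and are not the packaging tool's real schema.
CONTENT_TYPES = (
    "data_connectors",
    "workbooks",
    "analytics",
    "hunting_queries",
    "playbooks",
    "parsers",
)


@dataclass
class SolutionManifest:
    """Hypothetical in-memory description of a solution package."""
    name: str
    content: dict = field(default_factory=dict)  # content type -> list of files


def validate(manifest):
    """Return a list of human-readable problems; an empty list means OK."""
    problems = []
    if not manifest.name.strip():
        problems.append("solution name is empty")
    for key in manifest.content:
        if key not in CONTENT_TYPES:
            problems.append(f"unknown content type: {key}")
    if not any(manifest.content.get(t) for t in CONTENT_TYPES):
        problems.append("solution contains no content items")
    return problems


if __name__ == "__main__":
    demo = SolutionManifest("Example", {"workbooks": ["overview.json"]})
    print(validate(demo) or "no problems found")
```

Running a quick check like this before packaging catches obvious gaps early, but the packaging tool's own validations and the Partner Center certification remain the authoritative checks.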

Step 3. Publish your Azure Sentinel solution by creating an offer in Microsoft Partner Center, uploading the package generated in the step above, and submitting the offer for certification and final publishing. Partners can track progress on their offer in the Partner Center dashboard view as shown in the diagram below.

Solution build

New Azure Sentinel Solutions

The Azure Sentinel Solutions gallery showcases 32 new solutions covering the depth and breadth of various product, domain, and industry vertical capabilities. These out-of-the-box content packages enable you to get enhanced threat detection, hunting, and response capabilities for cloud workloads, identity, threat protection, endpoint protection, email, communication systems, databases, file hosting, ERP systems, and threat intelligence solutions for a plethora of Microsoft and other products and services.

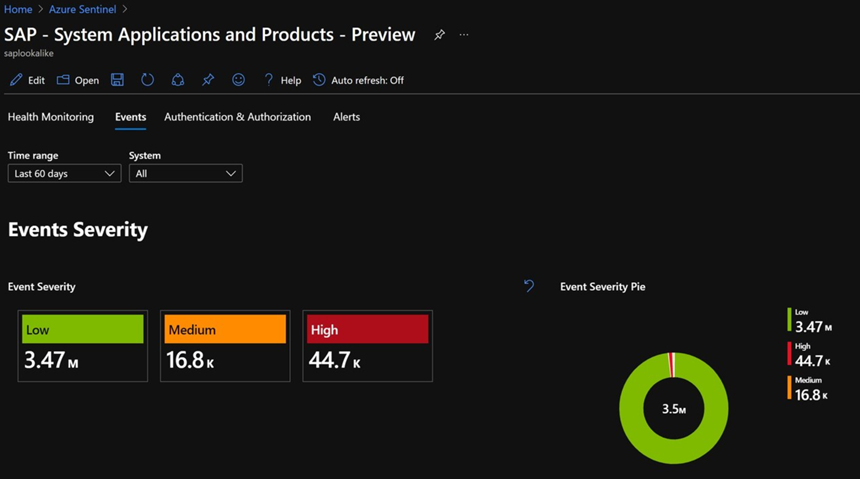

SAP Continuous Threat Monitoring

Use the SAP continuous threat monitoring solution to monitor your SAP applications across Azure, other clouds, and on-premises. This solution package includes a data connector to ingest data, a workbook to monitor threats, and a rich set of 25+ analytic rules to protect your applications.

SAP Solution

Cisco Solutions

There are two Cisco solutions: one for Cisco Umbrella and one for Cisco Identity Services Engine (ISE). The Cisco Umbrella solution provides multiple security functions to enable protection of devices, users, and distributed locations everywhere. The Cisco ISE solution includes a data connector, parser, analytics, and hunting queries to streamline security policy management and give visibility into the users and devices accessing the corporate network across wired, wireless, and VPN connections.

PingFederate

The PingFederate solution includes data connectors, analytics, and hunting queries to enable monitoring of user identities and access in your enterprise.

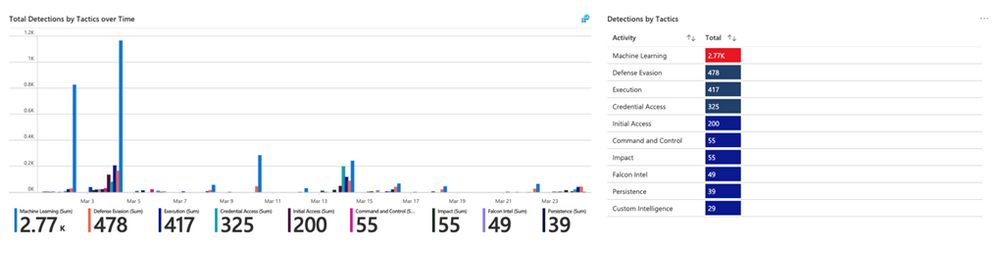

CrowdStrike Falcon Protection Platform

The CrowdStrike solution includes two data connectors to ingest Falcon detections, incidents, audit events, and rich Falcon event stream telemetry logs into Azure Sentinel. It also includes workbooks to monitor CrowdStrike detections, as well as analytics and playbooks for automated detection and response scenarios in Azure Sentinel.

CrowdStrike Solution

McAfee ePolicy Orchestrator

McAfee ePolicy Orchestrator monitors and manages your network, detecting threats and protecting endpoints against them. This solution uses the data connector to ingest McAfee ePO logs and the analytics to alert on threats.

Palo Alto Prisma

The Palo Alto Prisma solution includes a data connector to ingest Palo Alto Cloud logs into Azure Sentinel. Use the analytics and hunting queries for out-of-the-box detections and threat hunting scenarios, and the workbooks for monitoring Palo Alto Prisma data in Azure Sentinel.

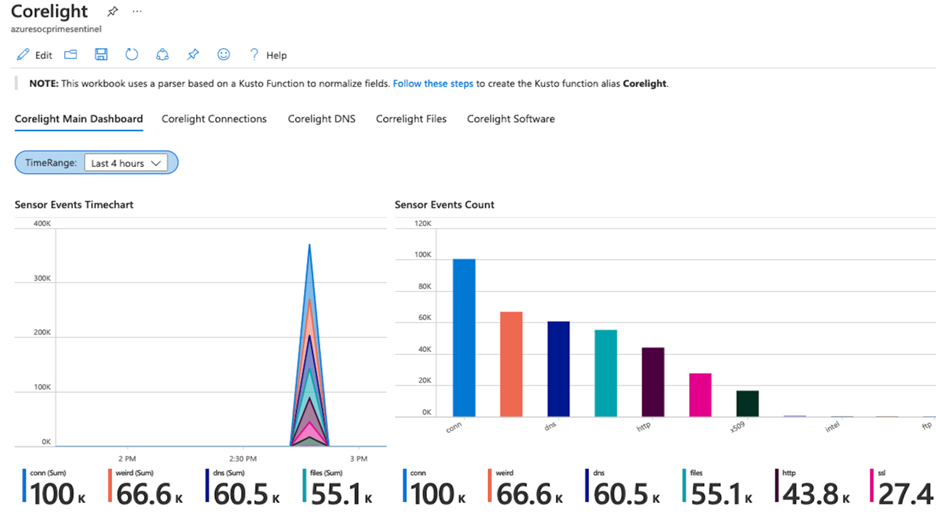

Corelight

Corelight provides a network detection and response (NDR) solution based on the best-of-breed open-source technologies Zeek and Suricata, enabling network defenders to get broad visibility into their environments. The data connector enables ingestion of events from Zeek and Suricata via Corelight Sensors into Azure Sentinel. Corelight for Azure Sentinel also includes workbooks and dashboards, hunting queries, and analytic rules to help organizations drive efficient investigations and incident response with the combination of Corelight and Azure Sentinel.

Corelight Solution

Infoblox Cloud

BloxOne DDI enables you to centrally manage and automate DDI (DNS, DHCP, and IPAM) from the cloud to any and all locations. BloxOne Threat Defense maximizes brand protection to protect your network and automatically extend security to your digital imperatives, including SD-WAN, IoT, and the cloud. This Azure Sentinel solution powers security orchestration, automation, and response (SOAR) capabilities, and reduces the time to investigate and remediate cyberthreats.

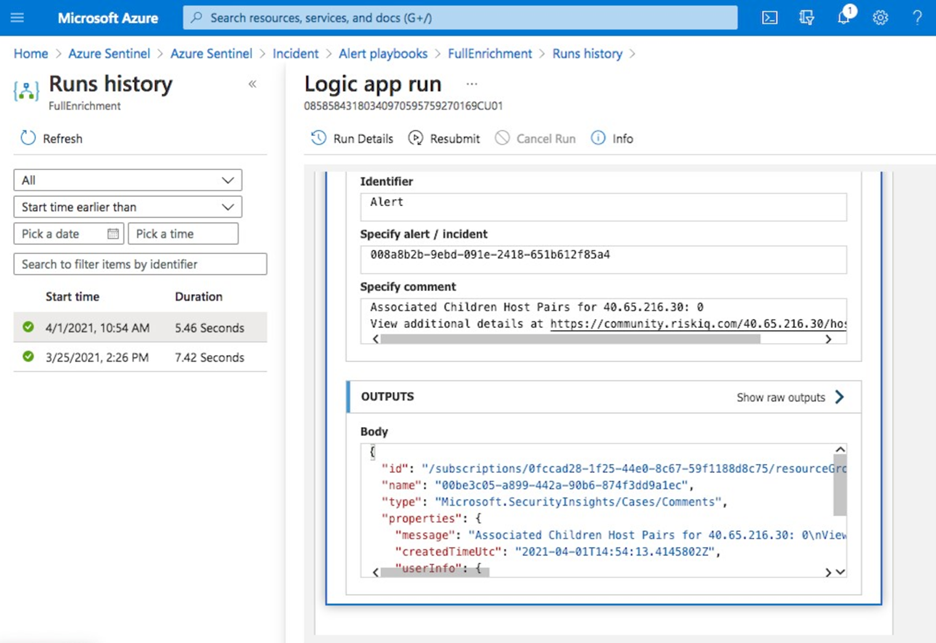

RiskIQ Illuminate Security Intelligence

RiskIQ has created several Azure Sentinel playbooks that pre-package functionality to enrich, add context to, and automatically action incidents based on RiskIQ Internet observations within the Azure Sentinel platform. These playbooks can be configured to run automatically on created incidents to speed up the triage process. When an incident contains a known indicator such as a domain or IP address, RiskIQ will enrich that value with what else it’s connected to on the Internet and whether it may pose a threat. If a threat is identified, RiskIQ can action the incident, including elevating its status and tagging it with additional metadata for analysts to review.

RiskIQ Solution

vArmour Application Controller

Application Controller is an easy-to-deploy solution that delivers comprehensive real-time visibility and control of application relationships and dependencies, to improve operational decision-making, strengthen security posture, and reduce business risk across multi-cloud deployments. This solution includes a data connector to ingest vArmour data and a workbook to monitor application dependency and relationship mapping information, along with user access and entitlement monitoring.

VMware Carbon Black

Use this solution to monitor Carbon Black events, audit logs, and notifications in Azure Sentinel, and use the analytic rules on critical threats and malware detections to help you get started immediately.

Symantec Solutions

There are two solutions from Symantec. The Symantec Endpoint Protection solution makes the anti-malware, intrusion prevention, and firewall features of Symantec available in Azure Sentinel, helping prevent unapproved programs from running and supporting response actions that apply firewall policies to block or allow network traffic.

The Symantec Proxy SG solution enables organizations to effectively monitor, control, and secure traffic to ensure a safe web and cloud experience by monitoring proxy traffic.

Microsoft Teams

Teams serves a central role in both communication and data sharing in the Microsoft 365 Cloud. Since the Teams service touches on so many underlying technologies in the Cloud, it can benefit from human and automated analysis not only when it comes to hunting in logs, but also in real-time monitoring of meetings in Azure Sentinel. The solution includes analytics rules, hunting queries, and playbooks.

Slack Audit

The Slack Audit solution provides the ability to get Slack events, which helps you examine potential security risks, analyze your organization’s use of collaboration, diagnose configuration problems, and more. This solution includes a data connector, workbooks, analytic rules, and hunting queries to connect Slack with Azure Sentinel.

Azure Firewall

Azure Firewall is a managed, cloud-based network security service that protects your Azure Virtual Network resources. It’s a fully stateful firewall as a service with built-in high availability and unrestricted cloud scalability. This Azure Firewall solution in Azure Sentinel provides built-in customizable threat detection on top of Azure Sentinel. The solution contains a workbook, detections, hunting queries and playbooks.

Sophos XG Firewall

Monitor network traffic and firewall status using this solution for Sophos XG Firewall. You can also enable the analytic rules for port scans and excessive denied connections to create custom alerts on the ingested data and track them as incidents.
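As a rough illustration of what an "excessive denied connections" rule computes, the following Python sketch counts denied connections per source address and flags sources that reach a threshold. This is not the rule shipped in the solution: analytic rules in Azure Sentinel are written as queries over ingested logs, and the `src_ip` and `action` field names, the `"deny"` value, and the default threshold here are assumptions made for the example.

```python
from collections import Counter


def excessive_denied(events, threshold=3):
    """Return source IPs with at least `threshold` denied connections.

    `events` is an iterable of dicts with hypothetical `src_ip` and
    `action` keys; real firewall logs will use different field names.
    """
    denied = Counter(e["src_ip"] for e in events if e["action"] == "deny")
    return sorted(ip for ip, count in denied.items() if count >= threshold)


if __name__ == "__main__":
    sample = [
        {"src_ip": "10.0.0.5", "action": "deny"},
        {"src_ip": "10.0.0.5", "action": "deny"},
        {"src_ip": "10.0.0.5", "action": "deny"},
        {"src_ip": "10.0.0.9", "action": "deny"},
        {"src_ip": "10.0.0.9", "action": "allow"},
    ]
    print(excessive_denied(sample))
```

In practice a rule like this would also be scoped to a time window, and the threshold would be tuned to the traffic volume of your environment.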

Qualys VM

Monitor and detect vulnerabilities reported by Qualys in Azure Sentinel by using the new solution for Qualys VM.

Microsoft Dynamics 365

The Dynamics 365 continuous threat monitoring with Azure Sentinel solution provides you with the ability to collect Dynamics 365 logs, gain visibility into activities within Dynamics 365, and analyze them to detect threats and malicious activities. The solution includes a data connector, workbooks, analytics rules, and hunting queries.

Cloudflare

This solution combines the value of Cloudflare in Azure Sentinel by providing information about the reliability of your external-facing resources such as websites, APIs, and applications. Use the detections and hunting queries to protect your internal resources such as behind-the-firewall applications, teams, and devices.

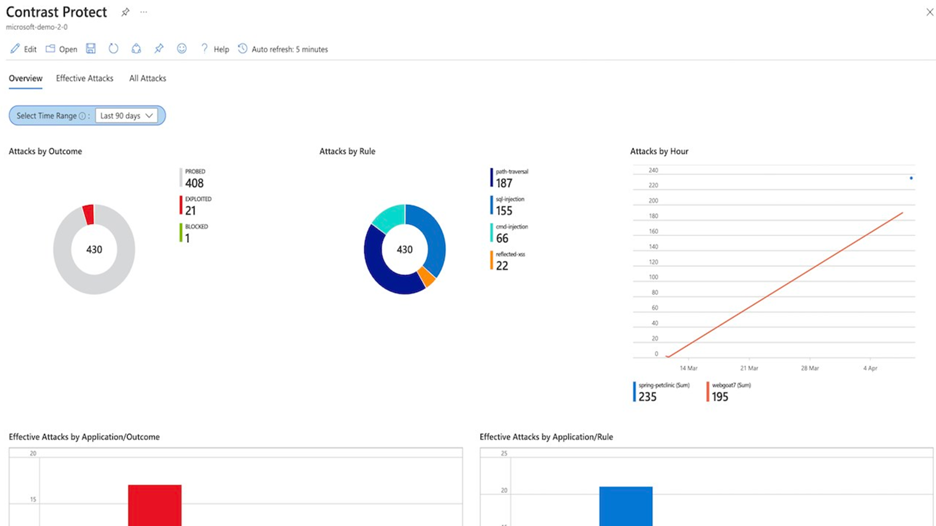

Contrast Protect

Contrast Protect empowers teams to defend their applications anywhere they run, by embedding an automated and accurate runtime protection capability within the application to continuously monitor and block attacks. Contrast Protect seamlessly integrates into Azure Sentinel so you can gain additional security risk visibility into the application layer.

Contrast Protect Solution

Check Point CloudGuard

This solution includes an Azure Logic App custom connector and playbooks for Check Point to offer enhanced integration with SOAR capabilities of Azure Sentinel. Enterprises can correlate and visualize these events on Azure Sentinel and configure SOAR playbooks to automatically trigger CloudGuard to remediate threats.

Senserva

Senserva, a Cloud Security Posture Management (CSPM) solution for Azure Sentinel, simplifies the management of Azure Active Directory security risks before they become problems by continually producing priority-based risk assessments. Senserva information includes a detailed security ranking for all the Azure objects Senserva manages, enabling customers to perform optimal discovery and remediation by fixing the most critical, highest-impact issues first. All of Senserva’s enriched information is sent to Azure Sentinel for processing by the analytics, workbooks, and playbooks in this solution.

HYAS Insight

HYAS Insight is a threat and fraud investigation solution using exclusive data sources and non-traditional mechanisms that improves visibility and triples productivity for analysts and investigators while increasing accuracy. HYAS Insight connects attack instances and campaigns to billions of indicators of compromise to understand and counter adversary infrastructure and includes playbooks to enrich and add context to incidents within the Azure Sentinel platform.

Titanium Cloud File Enrichment from ReversingLabs

TitaniumCloud is a threat intelligence solution providing up-to-date file reputation services, threat classification and rich context on over 10 billion goodware and malware files. Files are processed using ReversingLabs File Decomposition Technology. A powerful set of REST API query and feed functions deliver targeted file and malware intelligence for threat identification, analysis, intelligence development, and threat hunting services in Azure Sentinel.

Proofpoint Solutions

Two solutions for Proofpoint bring email protection capabilities into Azure Sentinel. The Proofpoint OnDemand Email Security (POD) solution classifies various types of email, while detecting and blocking threats that don’t involve a malicious payload. The Proofpoint Targeted Attack Protection (TAP) solution helps detect, mitigate, and block advanced threats that target people through email in Azure Sentinel. This includes attacks that use malicious attachments and URLs to install malware or trick users into sharing passwords and sensitive information.

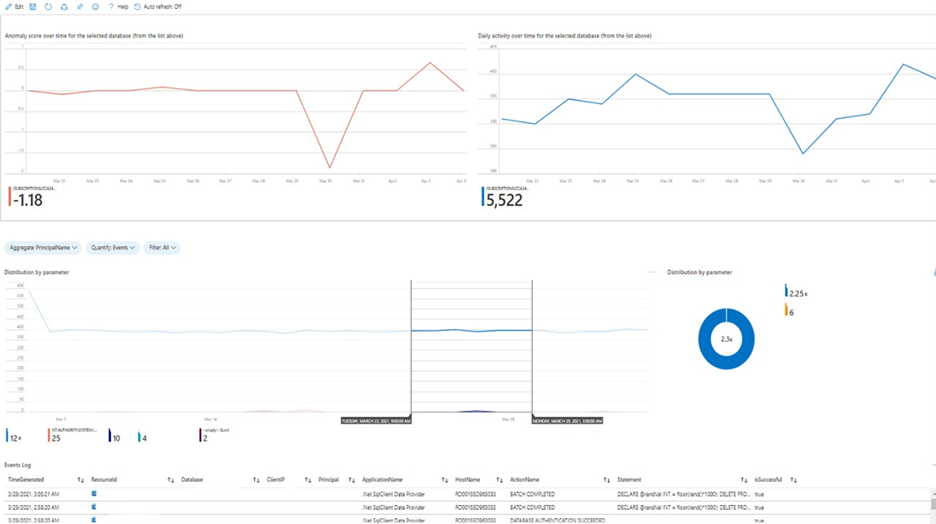

Azure SQL Database

This solution provides built-in customizable threat detection for Azure SQL PaaS services in Azure Sentinel, based on SQL Audit log and with seamless integration to alerts from Azure Defender for SQL. This solution includes a guided investigation workbook with incorporated Azure Defender alerts. Furthermore, it includes analytics to detect SQL DB anomalies, audit evasion and threats based on the SQL Audit log, hunting queries to proactively hunt for threats in SQL DBs and a playbook to auto-turn SQL DB audit on.

Azure SQL Solution

Oracle Database

Oracle Database Unified Auditing enables selective and effective auditing inside the Oracle database using policies and conditions, and this solution brings these database audit capabilities into Azure Sentinel. The solution comes with a data connector to get the audit logs, a workbook for monitoring, and a rich set of analytics and hunting queries to help detect database anomalies and enable threat hunting capabilities in Azure Sentinel.

Ubiquiti UniFi

This solution includes a data connector to ingest wireless and wired data communication logs into Azure Sentinel, and enables you to monitor firewall and other anomalies via the workbook and a set of analytics and hunting queries.

Box

Box is a single, secure, easy-to-use platform built for the entire content lifecycle, from file creation and sharing, to co-editing, signature, classification, and retention. This solution delivers capabilities to monitor file and user activities for Box and integrates with data collection, workbook, analytics and hunting capabilities in Azure Sentinel.

Closing

Azure Sentinel Solutions is just one of several exciting announcements we’ve made for the RSA Conference 2021. Learn more about other new Azure Sentinel innovations in our announcements blog.

Discover and deploy solutions to get out-of-the-box and end-to-end value for your scenarios in Azure Sentinel. Let us know your feedback using any of the channels listed in the Resources.

We also invite partners to build and publish new solutions for Azure Sentinel. Get started now by joining the Azure Sentinel Threat Hunters GitHub community and following the solutions build guidance.
