Improved network performance over the Internet is essential for edge devices connecting to the cloud. Last-mile performance impacts user-perceived latencies and is an area of focus for our online services like M365, SharePoint, and Bing. Although the next-generation transport, QUIC, is on the horizon, TCP is the dominant transport protocol today. Improvements made to TCP’s performance directly improve response times and download/upload speeds.

The Internet last mile and wide area networks (WAN) are characterized by high latency and a long tail of networks which suffer from packet loss and reordering. Higher latency, packet loss, jitter, and reordering all impact TCP’s performance. Over the past few years, we have invested heavily in improving TCP WAN performance and engaged with the IETF standards community to help advance the state of the art. In this blog post we will walk through our journey and show how we made big strides in improving performance between Windows Server 2016 and the upcoming Windows Server 2022.

Introduction

There are two important building blocks of TCP which govern its performance over the Internet: Congestion Control and Loss Recovery. The goal of congestion control is to determine the amount of data that can be safely injected into the network to maintain good performance and minimize congestion. Slow Start is the initial stage of congestion control, in which TCP ramps up its speed quickly until a congestion signal (packet loss, ECN, etc.) occurs. The steady-state Congestion Avoidance stage follows Slow Start; in this stage, different TCP congestion control algorithms use different approaches to adjust the amount of data in flight.
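To make the two stages concrete, here is a minimal sketch of how a congestion window might grow per ACK. It assumes a loss-free path and a Reno-style additive increase for Congestion Avoidance, chosen only for illustration; the function names and the 1460-byte MSS are hypothetical and do not describe the Windows implementation.

```python
def next_cwnd(cwnd: int, ssthresh: int, acked_bytes: int, mss: int = 1460) -> int:
    """Return the congestion window (bytes) after an ACK covering acked_bytes."""
    if cwnd < ssthresh:
        # Slow Start: grow by the amount acknowledged (at most one MSS per ACK),
        # which roughly doubles cwnd every round trip.
        return cwnd + min(acked_bytes, mss)
    # Congestion Avoidance (Reno-style, an assumption): about one MSS per round trip.
    return cwnd + max(1, mss * mss // cwnd)


def simulate_rounds(rounds: int, initial_cwnd: int, ssthresh: int, mss: int = 1460) -> list[int]:
    """Simulate loss-free round trips; each in-flight segment is ACKed once per round."""
    cwnd = initial_cwnd
    history = [cwnd]
    for _ in range(rounds):
        segments_in_flight = cwnd // mss
        for _ in range(segments_in_flight):
            cwnd = next_cwnd(cwnd, ssthresh, mss, mss)
        history.append(cwnd)
    return history


if __name__ == "__main__":
    # Slow Start from 10 segments with a very high ssthresh: doubles each round.
    print(simulate_rounds(3, 10 * 1460, 10**9))
    # Starting at ssthresh: Congestion Avoidance adds less than one MSS per round.
    print(simulate_rounds(3, 10 * 1460, 10 * 1460))
```

The first run doubles the window every round trip, while the second grows it by under one MSS per round trip, which is why the exit point from Slow Start matters so much on high-latency paths.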

Loss Recovery is the process of detecting and recovering from packet loss during transmission. TCP can infer that a segment is lost by looking at the ACK feedback from the receiver, and retransmit any segments inferred lost. When loss recovery fails, TCP uses the retransmission timeout (RTO, usually 300ms in WAN scenarios) as the last resort to retransmit the lost segments. When the RTO timer fires, TCP returns to Slow Start from the first unacknowledged segment. This long wait period and the subsequent congestion response significantly impact performance, so optimizing Loss Recovery algorithms enhances throughput and reduces latency.

Improving Slow Start: HyStart++

We determined that the traditional Slow Start algorithm overshoots the optimum rate and is likely to hit an RTO during Slow Start because of massive packet loss. We explored the use of an algorithm called HyStart to mitigate this problem. HyStart triggers an exit from Slow Start when the connection latency is observed to increase. However, we found that false positives sometimes cause a premature exit from Slow Start, limiting performance. We developed a variant of HyStart to mitigate premature Slow Start exit in networks with delay jitter: when HyStart is triggered, rather than going to the Congestion Avoidance stage we use LSS (Limited Slow Start), an increase algorithm that is less aggressive than Slow Start but more aggressive than Congestion Avoidance. We have published our ongoing work on this variant, HyStart++, as an IETF draft adopted by the TCPM working group: HyStart++: Modified Slow Start for TCP (ietf.org).
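The delay-based exit described above can be sketched roughly as follows. This is an illustration under stated assumptions, not the Windows code: the threshold constants, the divisor, and the reduced growth rate standing in for LSS are all placeholder values, and the function names are hypothetical.

```python
# Illustrative constants (assumptions for this sketch, not Windows defaults).
MIN_RTT_THRESH_MS = 4.0
MAX_RTT_THRESH_MS = 16.0
RTT_DIVISOR = 8
LIMITED_GROWTH_DIVISOR = 4  # stand-in for LSS: grow at 1/4 of the Slow Start rate


def delay_threshold_ms(last_round_min_rtt_ms: float) -> float:
    """RTT increase needed to trigger the exit, clamped so jitter alone is less likely to."""
    scaled = last_round_min_rtt_ms / RTT_DIVISOR
    return max(MIN_RTT_THRESH_MS, min(scaled, MAX_RTT_THRESH_MS))


def delay_increased(last_round_min_rtt_ms: float, current_round_min_rtt_ms: float) -> bool:
    """True when this round's minimum RTT rose enough to suggest a queue is building."""
    threshold = delay_threshold_ms(last_round_min_rtt_ms)
    return current_round_min_rtt_ms >= last_round_min_rtt_ms + threshold


def cwnd_increase(acked_bytes: int, mss: int, limited: bool) -> int:
    """Per-ACK window growth: full Slow Start, or a gentler rate after the delay signal."""
    increase = min(acked_bytes, mss)
    if limited:
        increase //= LIMITED_GROWTH_DIVISOR
    return increase


if __name__ == "__main__":
    limited = delay_increased(last_round_min_rtt_ms=40.0, current_round_min_rtt_ms=46.0)
    print(limited, cwnd_increase(1460, 1460, limited))
```

Comparing per-round minimum RTTs rather than individual samples is one way to dampen jitter. Dropping to a gentler growth rate instead of jumping straight to Congestion Avoidance limits the cost of a false positive.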

Loss recovery performance: Proportional Rate Reduction

HyStart helps prevent the overshoot problem so that we enter loss recovery in Slow Start with fewer packet losses. However, loss recovery itself might also incur packet losses if we retransmit in large bursts. Proportional Rate Reduction (PRR) is a loss recovery algorithm which accurately adjusts the number of bytes in flight throughout the entire loss recovery period, so that at the end of recovery it is as close as possible to the congestion window. We enabled PRR by default in the Windows 10 May 2019 Update (19H1).
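As a rough illustration of how PRR paces transmissions during recovery, the sketch below follows the per-ACK computation in the PRR specification (RFC 6937, slow-start reduction bound variant). The class and parameter names are hypothetical, the caller is assumed to supply `pipe` (the estimate of bytes in flight), and nothing here is taken from the Windows implementation.

```python
class PrrState:
    """Per-recovery-episode state for a simplified Proportional Rate Reduction."""

    def __init__(self, ssthresh: int, recover_fs: int, mss: int = 1460):
        self.ssthresh = ssthresh      # target window at the end of recovery (bytes)
        self.recover_fs = recover_fs  # bytes in flight when recovery started
        self.mss = mss
        self.prr_delivered = 0        # bytes newly delivered during recovery
        self.prr_out = 0              # bytes sent during recovery

    def on_ack(self, delivered_bytes: int, pipe: int) -> int:
        """Return how many bytes may be sent in response to this ACK."""
        self.prr_delivered += delivered_bytes
        if pipe > self.ssthresh:
            # Proportional phase: send ssthresh/recover_fs bytes per delivered byte.
            target = (self.prr_delivered * self.ssthresh + self.recover_fs - 1) // self.recover_fs
            sndcnt = target - self.prr_out
        else:
            # In flight fell below the target: catch up, bounded to avoid a burst.
            limit = max(self.prr_delivered - self.prr_out, delivered_bytes) + self.mss
            sndcnt = min(self.ssthresh - pipe, limit)
        return max(sndcnt, 0)

    def on_send(self, sent_bytes: int) -> None:
        """Record data (re)transmitted during recovery."""
        self.prr_out += sent_bytes


if __name__ == "__main__":
    prr = PrrState(ssthresh=10_000, recover_fs=20_000, mss=1_000)
    for pipe in (19_000, 18_000, 18_000):
        allowed = prr.on_ack(delivered_bytes=1_000, pipe=pipe)
        if allowed >= prr.mss:
            prr.on_send(prr.mss)
        print(pipe, allowed)
```

With the window being halved, this sketch allows roughly one segment out for every two segments delivered, which spreads retransmissions across the recovery period instead of sending them in one burst.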

Re-implementing TCP RACK: Time-based loss recovery

After implementing PRR and HyStart, we still noticed that we tended to consistently hit an RTO during loss recovery if many packets were lost in one congestion window. After looking at the traces, we found that lost retransmits were causing TCP to time out. The RACK implementation shipped in Server 2016 is unable to recover lost retransmits. A fully RFC-compliant RACK implementation (which can recover lost retransmits) requires per-segment state tracking, but in Server 2016, per-segment state is not stored.

In Server 2016, we built a simple circular-array-based data structure to track the send time of blocks of data in one congestion window. The RACK implementation built on this data structure has many limitations, including being unable to recover lost retransmits. During the development of the Windows 10 May 2020 Update, we built per-segment state tracking for TCP, and in Server 2022 we shipped a new RACK implementation which can recover lost retransmits.
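To show why per-segment state matters, here is a minimal sketch of time-based loss detection in the spirit of the RACK RFC. The `Segment` fields, the function name, and the millisecond values are hypothetical; a real implementation also manages the reordering window, timers, and SACK scoreboard, all omitted here.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    end_seq: int
    xmit_ts_ms: int          # time of the most recent (re)transmission
    delivered: bool = False  # True once cumulatively ACKed or SACKed


def detect_losses(segments, rack_xmit_ts_ms, rack_end_seq, rack_rtt_ms, reo_wnd_ms, now_ms):
    """Return segments presumed lost.

    A segment is presumed lost when some segment sent after it has already been
    delivered and a round trip plus the reordering window has since elapsed.
    """
    lost = []
    for seg in segments:
        if seg.delivered:
            continue
        sent_before = (seg.xmit_ts_ms, seg.end_seq) < (rack_xmit_ts_ms, rack_end_seq)
        expired = seg.xmit_ts_ms + rack_rtt_ms + reo_wnd_ms <= now_ms
        if sent_before and expired:
            lost.append(seg)
    return lost


if __name__ == "__main__":
    # Segment A was retransmitted at t=100 ms and the retransmit was lost too.
    a = Segment(end_seq=1000, xmit_ts_ms=100)
    # Segment B was sent later (t=120 ms) and has been delivered.
    b = Segment(end_seq=5000, xmit_ts_ms=120, delivered=True)
    lost = detect_losses([a, b], rack_xmit_ts_ms=120, rack_end_seq=5000,
                         rack_rtt_ms=50, reo_wnd_ms=12, now_ms=170)
    print([s.end_seq for s in lost])
```

Because each segment records the time of its latest transmission, a retransmit that is lost again is judged by the same rule as an original transmission, so it can be detected without waiting for the RTO.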

(Note that Tail Loss Probe (TLP), which is part of the RACK/TLP RFC and helps recover faster from tail losses, has also been implemented and enabled by default since Windows Server 2016.)

Improving resilience to network reordering

Last year, Dropbox and Samsung reported to us that Windows TCP had poor upload performance in their networks due to network reordering. We bumped up the priority of reordering resilience, and in the Windows version currently under development we have completed our RACK implementation, which is now fully compliant with the RFC. Dropbox and Samsung confirmed that they no longer observed upload performance problems with this new implementation. You can find how we collaborated with the Dropbox engineers here. In our automated WAN performance tests, we also found that the throughput in reordering test cases improved more than 10x.

Benchmarks

To measure the performance improvements, we set up a WAN environment by creating two NICs on a machine and connecting them with an emulated link on which bandwidth, round trip time, random loss, reordering, and jitter can be configured. We ran performance benchmarks on this testbed for Server 2016, Server 2019, and Server 2022 using an A/B testing framework we previously built, which automates testing and data analysis. We used the current Windows build 21359 for Server 2022 in the benchmarks since we plan to backport all TCP perf improvement changes to Server 2022 soon.

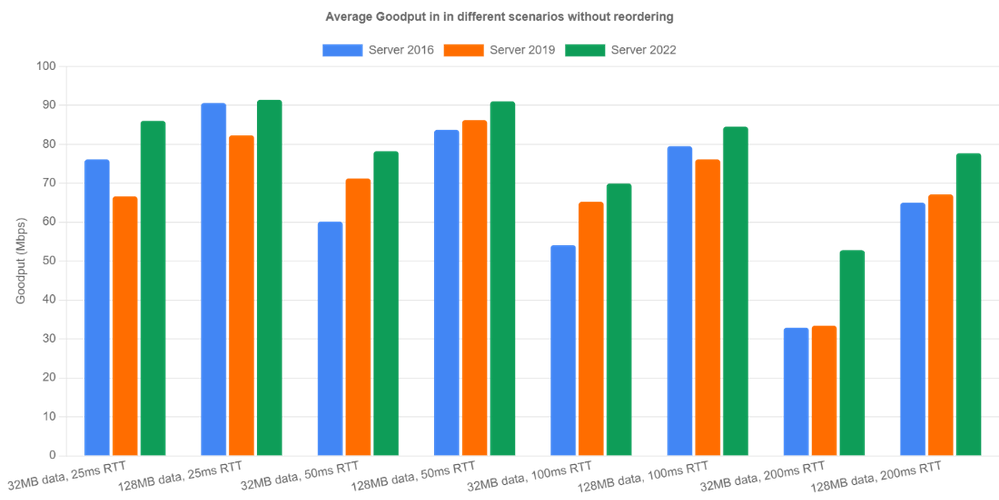

Let’s look at non-reordering scenarios first. We emulated 100Mbps bandwidth and tested the three OS versions under four different round trip times (25ms, 50ms, 100ms, 200ms) and two different flow sizes (32MB, 128MB). The bottleneck buffer size was set to 1 BDP. The results are averaged over 10 iterations.
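For reference, the 1 BDP (bandwidth-delay product) buffer sizes implied by this setup can be worked out with a short calculation. The sketch assumes 100Mbps means 100,000,000 bits per second; the function name is illustrative.

```python
def bdp_bytes(bandwidth_bps: int, rtt_ms: int) -> int:
    """Bandwidth-delay product in bytes: bits per second x RTT in seconds / 8."""
    return bandwidth_bps * rtt_ms // (8 * 1000)


BANDWIDTH_BPS = 100_000_000  # 100 Mbps, as in the benchmark (decimal megabits assumed)

if __name__ == "__main__":
    for rtt_ms in (25, 50, 100, 200):
        print(f"RTT {rtt_ms:>3} ms: 1 BDP = {bdp_bytes(BANDWIDTH_BPS, rtt_ms):,} bytes")
```

Under that assumption the buffer ranges from 312,500 bytes at 25ms to 2,500,000 bytes at 200ms, so even the 32MB flow is many BDPs long in every test case.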

Server 2022 is the clear winner in all categories because RACK significantly reduces RTOs occurring during loss recovery. Goodput is improved by up to 60% (200ms case). Server 2019 did well in relatively high latency cases (>= 50ms). However, for 25ms RTT, Server 2016 outperformed Server 2019. After digging into the traces, we noticed that the Server 2016 receive window tuning algorithm is more conservative than the one in Server 2019 and it happened to throttle the sender, indirectly preventing the overshoot problem.

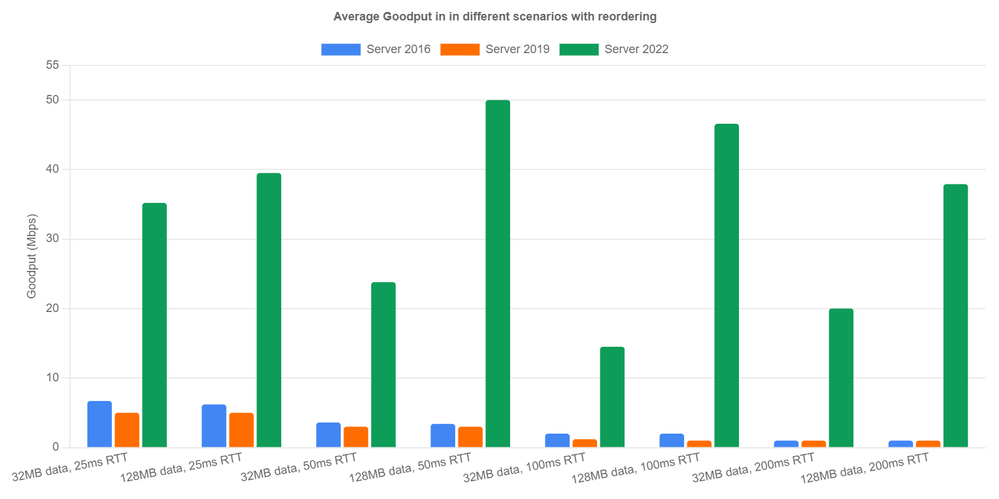

Now let’s look at reordering scenarios. Here’s how we emulate network reordering: we set a probability of reordering per packet. Once a packet is chosen to be reordered, it’s delayed by a specified amount of time instead of the configured RTT. We tested a 1% reordering rate and a 5ms reordering delay. Server 2016 and Server 2019 achieved extremely low goodput due to lack of reordering resilience. In Server 2022, the new RACK implementation avoided most unnecessary loss recoveries and achieved reasonable performance. Goodput is up over 40x in the case of a 128MB flow with 200ms RTT. In the other cases, we are seeing at least a 5x goodput improvement.
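One possible reading of the reordering emulation described above is sketched below: each packet is independently selected with a fixed probability, and a selected packet receives the reordering delay in place of the normal link delay. The function names, the one-way `link_delay_ms` parameter, and the seeded random generator are assumptions made for illustration; the actual emulator may work differently.

```python
import random


def arrival_times_ms(send_times_ms, link_delay_ms, reorder_prob, reorder_delay_ms, seed=0):
    """Return (arrival_time, packet_index) pairs sorted by arrival time."""
    rng = random.Random(seed)  # seeded so runs are repeatable
    arrivals = []
    for index, sent_at in enumerate(send_times_ms):
        # Each packet is independently chosen for reordering.
        if rng.random() < reorder_prob:
            delay = reorder_delay_ms  # replaces the normal link delay
        else:
            delay = link_delay_ms
        arrivals.append((sent_at + delay, index))
    return sorted(arrivals)


def count_out_of_order(arrivals):
    """Count packets that arrive after a packet with a higher index."""
    highest_seen = -1
    late = 0
    for _, index in arrivals:
        if index < highest_seen:
            late += 1
        highest_seen = max(highest_seen, index)
    return late


if __name__ == "__main__":
    sends = list(range(10_000))  # one packet per millisecond
    arrivals = arrival_times_ms(sends, link_delay_ms=25, reorder_prob=0.01, reorder_delay_ms=5)
    print(count_out_of_order(arrivals))
```

In this reading, a packet given the shorter delay overtakes several packets sent before it, so even a 1% selection rate makes the receiver see gaps that a sender without reordering resilience can mistake for loss.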

Next Steps

We have come a long way in improving Windows TCP performance on the Internet. However, there are still several issues that we will need to solve in future releases.

- We are unable to measure specific performance improvements from PRR in the A/B tests. This needs more investigation.

- We have found issues with HyStart++ in networks with jitter, so we are working on making the algorithm more resilient to jitter.

- The reassembly queue limit (the maximum number of discontiguous data blocks allowed in the receive queue) turns out to be another factor that affects our WAN performance. After this limit is reached, the receiver discards any subsequent out-of-order data segments until in-order data fills the gaps. When these segments are discarded, the receiver can only send back SACKs that carry no new information, which makes the sender stall.
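The reassembly queue behavior in the last item can be sketched as a small model. It is a simplification under stated assumptions: segments never overlap, sequence numbers do not wrap, and the class name and limit value are hypothetical rather than taken from the Windows stack.

```python
class ReassemblyQueue:
    """Receiver-side buffer that caps the number of discontiguous blocks."""

    def __init__(self, limit: int, rcv_nxt: int = 0):
        self.limit = limit      # max discontiguous out-of-order blocks
        self.rcv_nxt = rcv_nxt  # next in-order sequence number expected
        self.blocks = {}        # start -> end of each buffered block

    def on_segment(self, start: int, end: int) -> bool:
        """Return True if the segment is accepted, False if it is discarded."""
        if end <= self.rcv_nxt:
            return False  # old duplicate
        if start == self.rcv_nxt:
            # In-order data: deliver it and drain any block it now connects to.
            self.rcv_nxt = end
            while self.rcv_nxt in self.blocks:
                self.rcv_nxt = self.blocks.pop(self.rcv_nxt)
            return True
        # Out-of-order data: merge with adjacent blocks, then check the limit.
        candidate = dict(self.blocks)
        for s, e in list(candidate.items()):
            if e == start:
                del candidate[s]
                start = s
            elif s == end:
                del candidate[s]
                end = e
        candidate[start] = end
        if len(candidate) > self.limit:
            return False  # limit reached: the segment is dropped
        self.blocks = candidate
        return True


if __name__ == "__main__":
    q = ReassemblyQueue(limit=2)
    print(q.on_segment(200, 300), q.on_segment(400, 500), q.on_segment(600, 700))
```

In the usage above the third out-of-order segment is discarded because it would create a third block, so the sender has to retransmit it later even though it reached the receiver.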

— Windows TCP Dev Team (Matt Olson, Praveen Balasubramanian, Yi Huang)
