by Contributed | Dec 30, 2020 | Technology

This article is contributed. See the original author and article here.

Hello readers,

Today’s Azure Automation question is: how can we manage (register or de-register) Hybrid Runbook Workers (HRWs) at scale?

In this automated world, it is foolish to think that we need to go to each computer one by one and run a given script to accomplish the task in the question above. My customers were struggling to find a solution to this question.

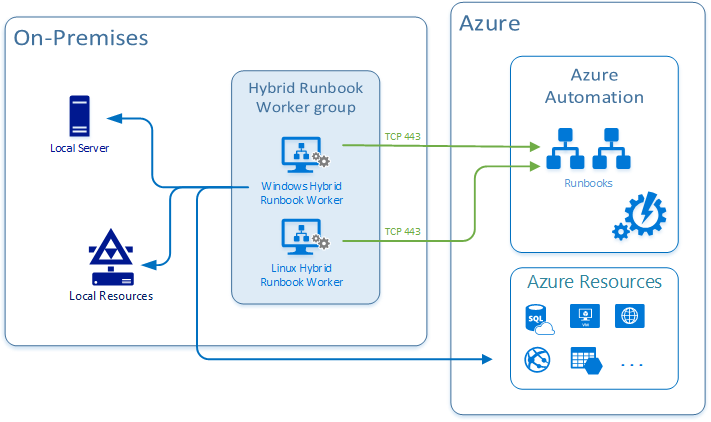

For those of you who are not aware of what an HRW is, here is a brief description: the Hybrid Runbook Worker feature of Azure Automation lets you run runbooks directly on the machine hosting the role (read: inside the guest OS) and against resources in that environment, so that you can manage those local resources. This feature therefore enables interaction with any physical or virtual on-prem server, Azure VM, or third-party cloud VM.

You can read more on the official documentation page at https://docs.microsoft.com/en-us/azure/automation/automation-hybrid-runbook-worker

Coming back to the question, I found some methods to manage your HRWs at scale. The choice, which is always up to you, could be influenced by the products and the configurations already in use in your data center.

Below, I am going to describe 3 common ways:

- Using Azure Automation State Configuration

- The ‘PowerShell Remoting’ way

- Leveraging SCOM

Let’s start then …

Using Azure Automation State Configuration

As the first method presented here, we can use Azure Automation State Configuration to manage our HRWs at scale. Thanks to my colleague @Anthony Watherston, I was able to provide my customer with a DSC configuration file to fulfill the request.

In this case, the given computer(s) must already be onboarded as DSC nodes (see Enable Azure Automation State Configuration | Microsoft Docs for more details).

So, assuming that the DSC nodes are configured correctly, you can move on to adding your configuration and then compiling it to make it usable by the nodes. The last step is to assign the compiled configuration to the node (or nodes) it should be applied to.

I will not dig into all necessary phases (adding, compiling, and assigning configuration). I’ll just focus on the method and will provide you with the sample configuration file (basically a .ps1 file) that you can compile and use to onboard the HRW.

NOTE: This script can onboard either a single HRW or a group, depending on the optional parameter hrwName. If you pass an empty value, then it will be assigned to the NetBIOS computer name, thus creating a single HRW.

Configuration OnboardHRW

{

Param(

[Parameter(Mandatory=$True)]

[ValidateNotNullOrEmpty()]

[string]$primaryKey,

[Parameter(Mandatory=$True)]

[ValidateNotNullOrEmpty()]

[string]$endPointUrl,

[Parameter(Mandatory=$false)]

[string]$hrwName

)

Import-DscResource -ModuleName 'PSDesiredStateConfiguration'

Node localhost

{

Script onboard

{

GetScript = { return @{Present = $true } }

TestScript = {

if (Test-Path HKLM:\SOFTWARE\Microsoft\HybridRunbookWorker)

{

$epURL = $using:endPointUrl

$dir = Get-ChildItem HKLM:\SOFTWARE\Microsoft\HybridRunbookWorker | Where-Object PSChildName -eq $epURL.Split("/")[-1]

if ($dir)

{

if((Get-ChildItem HKLM:\SOFTWARE\Microsoft\HybridRunbookWorker\$($epURL.Split("/")[-1])).Name.Count -gt 1 )

{

return $true

}

else

{

return $false

}

}

else

{

return $false

}

}

else

{

return $false

}

}

SetScript = {

# Getting the AutomationAccount version directly from the folder inside the server. Saving 1 parameter.

$AzureAutomationVersion = Get-ChildItem -LiteralPath "C:\Program Files\Microsoft Monitoring Agent\Agent\AzureAutomation" | Select -ExpandProperty Name

# Validating group name parameter or setting the HRW name to be the NetBIOS computer name

$myHrwName = $using:hrwName

if(([string]::IsNullOrEmpty($myHrwName)) -or ([string]::IsNullOrWhitespace($myHrwName)))

{

$myHrwName = $env:COMPUTERNAME

}

else

{

$myHrwName = $myHrwName.Trim()

}

Import-Module -FullyQualifiedName "C:\Program Files\Microsoft Monitoring Agent\Agent\AzureAutomation\$AzureAutomationVersion\HybridRegistration\HybridRegistration.psd1"

Add-HybridRunbookWorker -GroupName $myHrwName -Url $using:endPointUrl -Key $using:primaryKey

}

}

}

}

You can modify the above configuration to be compiled using PowerShell instead of using the Azure State Configuration from the portal or to unregister the HRW. Give the Azure Automation State Configuration enough time (based on the node onboarding settings you have specified) and you will get your HRW registered on all assigned nodes.

The ‘PowerShell Remoting’ way

Another good method, which I followed during some of my on-sites, is based on a PowerShell script that I created to make HRW management at scale possible.

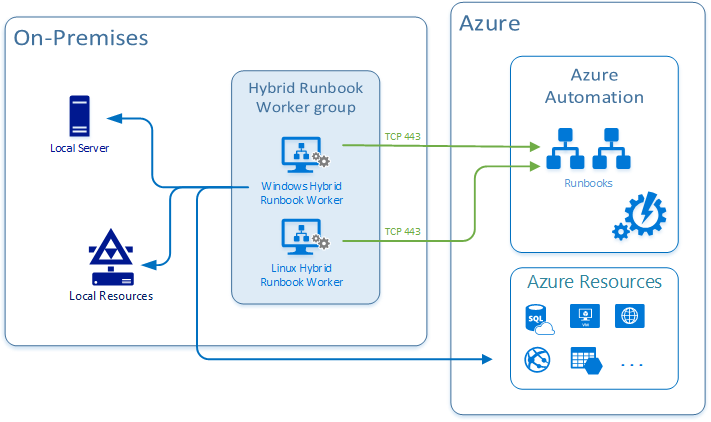

I came up with the idea of keeping a list of HRWs, for instance in a csv file (in this case the content is supposed to be a 1:1 match of the HRW names with the computer names, plus the necessary action), and passing each record, together with the necessary additional parameters, to the script. The script can be executed from one computer to manage one or more servers, according to what’s in the list. For entries other than the local computer, we can leverage PowerShell Remoting.

The structure of the csv is quite simple: it’s just a list of names, each followed by the corresponding action and separated from it by a comma (“,”), like the one in the screenshot below:

The script will read the list and, for every record, perform the requested action:

- Locally, if the record matches the local computer name.

- Remotely, using PowerShell Remoting, if the record does not match the local computer name.

NOTE: Actions have been defined according to those available in the HybridRegistration PowerShell module. Refresh is an additional one, which just executes Remove and Add in sequence.
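As a rough illustration of the list format and the per-record decision described above, here is a minimal sketch written in Python for brevity. The server names and the sample file content are hypothetical, and the helper names (`parse_server_list`, `execution_mode`) are not part of the actual script. Skipping `#` comment lines and empty lines, and trimming quotes, follows what the PowerShell script further down does when it parses the file.

```python
# Hypothetical content of ServerList.csv: one "<computer name>,<action>" record per line.
SAMPLE_LIST = """\
# name,action
SRV01,Add
"SRV02", Remove

SRV03,Refresh
"""

# Actions described in the article: Add, Remove, and the extra Refresh.
VALID_ACTIONS = {"Add", "Remove", "Refresh"}


def parse_server_list(text):
    """Return (server_name, action) tuples, skipping comments and blank lines."""
    records = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        # Split on the first comma, then drop surrounding spaces and quotes.
        name, action = (field.strip().strip('"').strip() for field in line.split(",", 1))
        if action not in VALID_ACTIONS:
            raise ValueError(f"Unknown action {action!r} for server {name!r}")
        records.append((name, action))
    return records


def execution_mode(server_name, local_name):
    """Decide whether a record is handled locally or through a remote session."""
    # Case-insensitive comparison, like the -eq operator in PowerShell.
    return "local" if server_name.upper() == local_name.upper() else "remote"
```

With this sample, a script running on `SRV01` would handle the first record locally and the other two through a remote session.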

The remote installation will be done using a dedicated remote session established every time with the New-PSSession cmdlet.

You can leverage my sample script below or create a brand new one if you like. The code I used is:

param(

[Parameter(Mandatory=$True,

ValueFromPipelineByPropertyName=$false,

HelpMessage='Insert the automation account endpoint from the Azure Portal --> Automation Account --> Keys --> URL',

Position=0)]

[string]$AutomationAccountURL,

[Parameter(Mandatory=$True,

ValueFromPipelineByPropertyName=$false,

HelpMessage='Insert the automation account key from the Azure Portal --> Automation Account --> Keys --> Primary Access Key',

Position=1)]

[string]$AutomationAccountKey

)

#Region Functions

Function doAction ([string]$fAction, [string]$fAutomationAccountURL, [string]$fAutomationAccountKey, [string]$fAzureAutomationVersion, [string]$fRegKey)

{

#Logging action and computer

Write-Host "Performing action <$fAction> on server <$env:COMPUTERNAME>"

Switch($fAction)

{

"Remove"

{

try

{

Remove-HybridRunbookWorker -url $fAutomationAccountURL -key $fAutomationAccountKey -ErrorAction Stop

write-host "The hybrid worker <$env:COMPUTERNAME> has been successfully de-registered." -ForegroundColor Green

}

catch

{

Write-Host "The hybrid worker <$env:COMPUTERNAME> was not registered."

}

if (Test-Path $fRegKey)

{

write-host "Deleting the corresponding registry key <$fRegKey> and all its subkeys."

Remove-Item $fRegKey -Recurse

write-host "Registry key <$fRegKey> and all its subkeys have been successfully deleted." -ForegroundColor Green

}

else

{

write-host "The corresponding registry key <$fRegKey> did not exist or has been successfully removed by the de-registration process" -ForegroundColor Yellow

}

# Restarting the service

Restart-Service healthservice

}

"Add"

{

if(Test-Path "C:\Program Files\Microsoft Monitoring Agent\Agent\AzureAutomation\$fAzureAutomationVersion\HybridRegistration")

{

cd "C:\Program Files\Microsoft Monitoring Agent\Agent\AzureAutomation\$fAzureAutomationVersion\HybridRegistration"

Import-Module .\HybridRegistration.psd1

try

{

Add-HybridRunbookWorker -Name $env:COMPUTERNAME -EndPoint $fAutomationAccountURL -Token $fAutomationAccountKey -ErrorAction Stop

write-host "The hybrid worker <$env:COMPUTERNAME> has been successfully registered." -ForegroundColor Green

}

catch

{

Write-Host "Exception generated while registering hybrid worker <$env:COMPUTERNAME>. The error is: $($_.exception)" -ForegroundColor Red

}

}

else

{

write-host "Path 'C:\Program Files\Microsoft Monitoring Agent\Agent\AzureAutomation\$fAzureAutomationVersion\HybridRegistration' does not exist on computer <$env:COMPUTERNAME>. Check if the MMA is installed and that the AzureAutomation version folder is correct." -ForegroundColor Red

}

}

"Refresh"

{

# Performing a remove operation

try

{

Remove-HybridRunbookWorker -url $fAutomationAccountURL -key $fAutomationAccountKey -ErrorAction Stop

if (Test-Path $fRegKey)

{

Remove-Item $fRegKey -Recurse

}

# Restarting the service

Restart-Service healthservice

# Performing an Add operation

if(Test-Path "C:\Program Files\Microsoft Monitoring Agent\Agent\AzureAutomation\$fAzureAutomationVersion\HybridRegistration")

{

cd "C:\Program Files\Microsoft Monitoring Agent\Agent\AzureAutomation\$fAzureAutomationVersion\HybridRegistration"

Import-Module .\HybridRegistration.psd1

try

{

Add-HybridRunbookWorker -Name $env:COMPUTERNAME -EndPoint $fAutomationAccountURL -Token $fAutomationAccountKey -ErrorAction Stop

write-host "The hybrid worker <$env:COMPUTERNAME> has been successfully re-registered (refresh)." -ForegroundColor Green

}

catch

{

Write-Host "Exception generated while registering hybrid worker <$env:COMPUTERNAME>. The error is: $($_.exception)" -ForegroundColor Red

}

}

else

{

write-host "Path 'C:\Program Files\Microsoft Monitoring Agent\Agent\AzureAutomation\$fAzureAutomationVersion\HybridRegistration' does not exist. Check if the MMA is installed and that the AzureAutomation version folder is correct." -ForegroundColor Red

}

}

catch

{

Write-Host "Exception generated while removing hybrid worker <$env:COMPUTERNAME>. The error is: $($_.exception)" -ForegroundColor Red

}

}

}

}

#endregion

$currentLocation = Get-Location

$regKey = "HKLM:\SOFTWARE\MICROSOFT\HybridRunbookWorker"

$AzureAutomationVersion = Get-ChildItem -LiteralPath "C:\Program Files\Microsoft Monitoring Agent\Agent\AzureAutomation" | Select -ExpandProperty Name

$srvList = Get-Content ".\ServerList.csv"

ForEach ($srv in $srvList)

{

If (!($srv -like "#*")-and !([string]::IsNullOrEmpty($srv)))

{

#parsing file

[string]$srvName = $srv.Split(",")[0].trim(""",""");

[string]$Action = $srv.Split(",")[1].trim(""",""").trim(" "," ");

Set-Location $currentLocation

Start-Transcript -Path ".\Manage-HybridWorker-At-Scale-$srvName.Log"

if($srvName -eq $env:COMPUTERNAME)

{

#Installing locally

Write-Host "======================================================" -ForegroundColor Magenta

Write-Host "======================================================" -ForegroundColor Magenta

write-host "Executing locally on server <$srvName>." -ForegroundColor Cyan

doAction $Action $AutomationAccountURL $AutomationAccountKey $AzureAutomationVersion $regKey

}

else

{

#Installing remotely

Write-Host "======================================================" -ForegroundColor Magenta

Write-Host "======================================================" -ForegroundColor Magenta

write-host "Connecting to server <$srvName> using PowerShell Remote session." -ForegroundColor Cyan

$psrSess = New-PSSession -ComputerName $srvName

If($psrSess.State -eq "Opened")

{

Invoke-Command -Session $psrSess -ScriptBlock ${function:doAction} -Args $Action, $AutomationAccountURL, $AutomationAccountKey, $AzureAutomationVersion, $regKey

#write-host "Installation on server <$srvName>, finished." -ForegroundColor Green

}

else

{

write-host "Error opening the remote session on server <$srvName>" -ForegroundColor Red

}

Remove-PSSession $psrSess

}

Stop-Transcript

}

}

Set-Location $currentLocation

#Logging end of script

Write-Host "All the servers in the file have been processed."

Write-Host "<<End of script>>"

To run it, you can use the command below. Make sure you replace the highlighted values:

.\Manage-HybridWorker-At-Scale.ps1 -AutomationAccountURL "<PUT_YOUR_AUTOMATIONACCOUNT_URL>" -AutomationAccountKey "<PUT_YOUR_AUTOMATIONACCOUNT_PRIMARYACCESSKEY>"

Leveraging SCOM

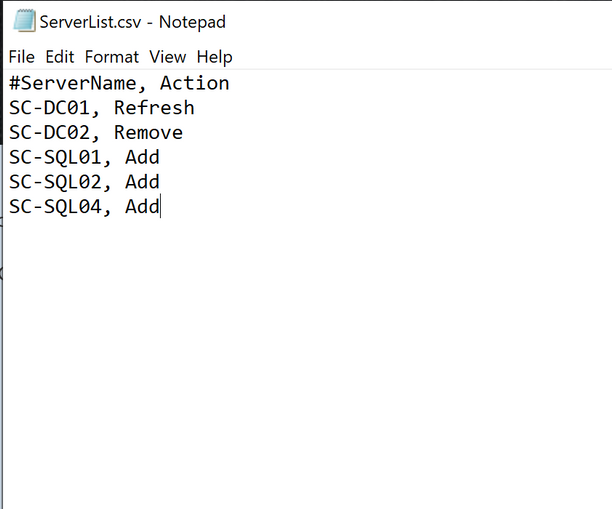

There’s also another way to achieve our goal. During a customer engagement, an idea came to me. This customer was still using SCOM and had the SCOM Management management pack (aka MP) from Kevin Holman imported. Should you want to know more about importing management packs, please refer to the How to Import, Export, and Remove an Operations Manager Management Pack | Microsoft Docs page.

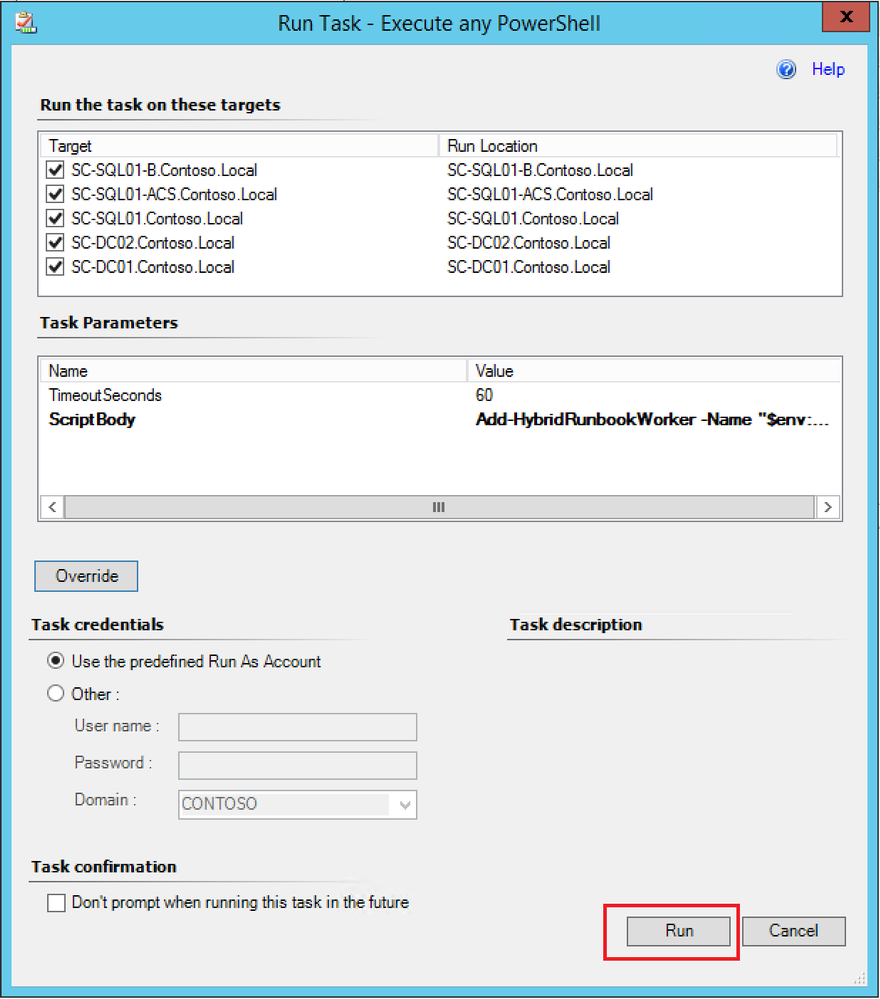

One of the greatest features in this MP is that you can execute any PowerShell script. Here comes the idea. Since all the agents were directly connected to Log Analytics and had the necessary solution in place (see Deploy a Windows Hybrid Runbook Worker in Azure Automation | Microsoft Docs), why not use this agent task and pass a one-line script to it? This way, we can select multiple agents at once, meaning that we configure at scale.

By now, you should have everything necessary in place to go ahead with this proposed approach. If so:

- Open the Operations Console (see How to Connect to the Operations and Web Console | Microsoft Docs).

- In the bottom-left corner, select Monitoring.

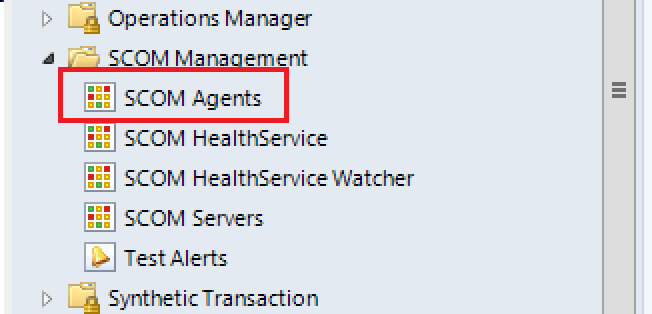

- In the folder list, navigate to the SCOM Management view folder, expand it and select SCOM Agents.

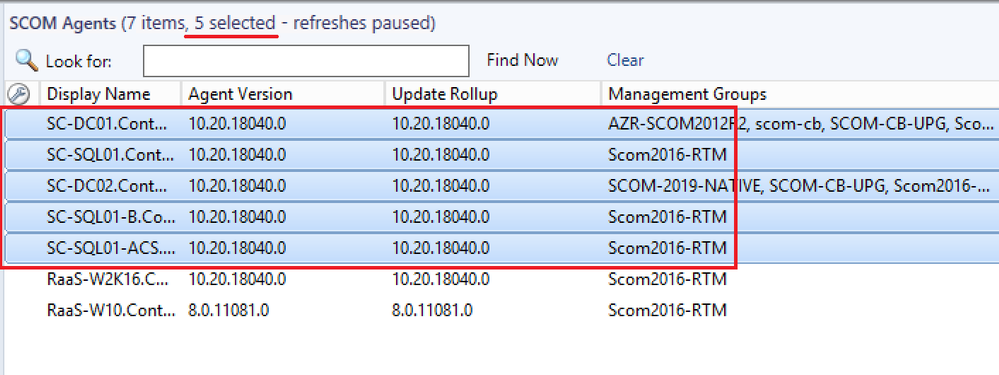

- In the center pane, select the agents you want to register as HRW.

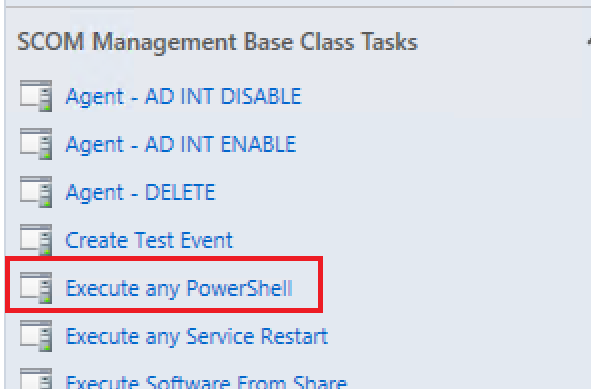

- In the Task pane on the right, under the SCOM Management Base Class Tasks, click on the task called Execute any PowerShell.

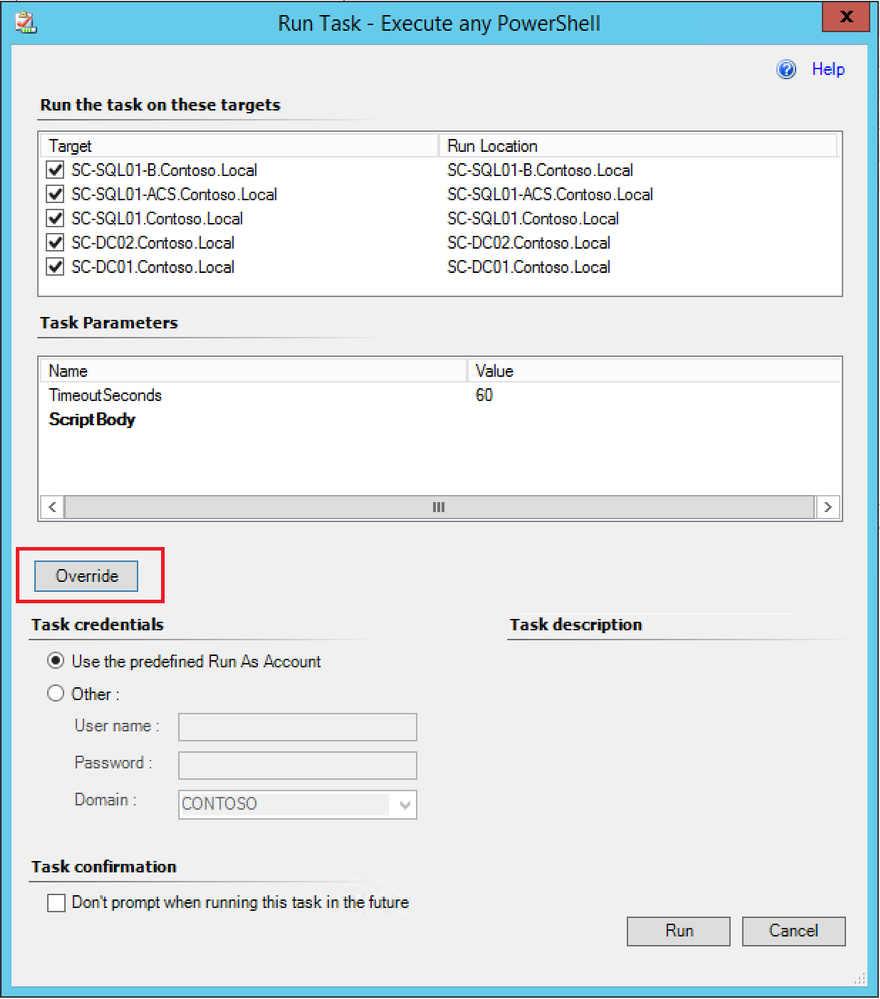

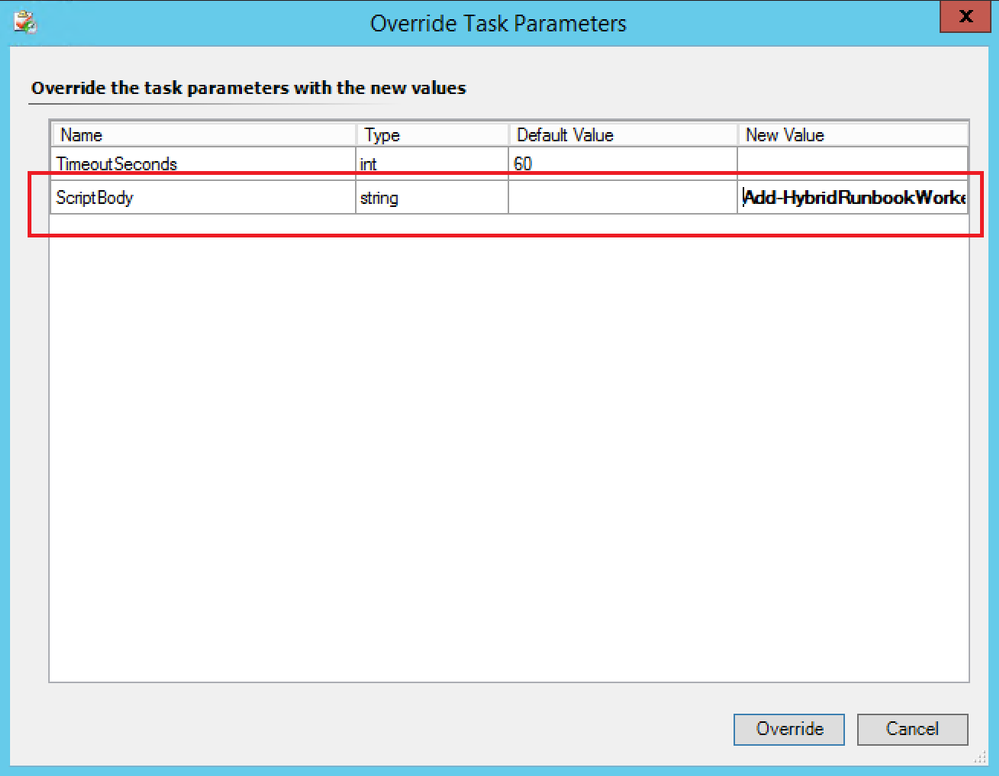

- Click on Override.

- Configure the ScriptBody parameter with one of the commands below according to the purpose and click Override:

Add-HybridRunbookWorker -Name "$env:COMPUTERNAME" -EndPoint "<YourAutomationAccountUrl>" -Token "<YourAutomationAccountPrimaryAccessKey>"

Remove-HybridRunbookWorker -url "<YourAutomationAccountUrl>" -key "<YourAutomationAccountPrimaryAccessKey>"

NOTE: You should replace the values in the above command with those pertaining to your environment.

- Click Run.

These 3 methods are surely not the only ones, but they’re a good starting point to start thinking AT SCALE, aren’t they?

Thanks for reading,

Bruno.

by Contributed | Dec 29, 2020 | Technology

Introduction

One of the ways that BizTalk can access SAP servers is by using Business Application Programming Interfaces (BAPIs), as documented in Operations on BAPIs in SAP. SAP introduced BAPIs as an object-oriented way to structure business data and processes. It is assumed that the reader has some familiarity with how to use this functionality in BizTalk.

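In this article, we conclude our series on BAPI transactions by presenting, step by step, another implementation of the sales orders received in batches and created in SAP by using BAPI transactions. Past articles in the series, referenced throughout this post, include SAP BAPI Transactions Walkthrough, Debatching SAP BAPI Transactions, and Handling Errors in SAP BAPI Transactions.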

The design here is a combination of the previous concepts into a solution that integrates the powerful BizTalk pipeline features such as batch processing, XML disassembly, XML validation, and Recoverable Interchange Processing (RIP). It is also the opportunity to provide more details on topics that were briefly touched upon, in the same step by step style – the intent being to serve as the “missing documentation” for SAP BAPI transactions in BizTalk. We present how to:

- Leverage XML disassembler and XML validator in the receive location in order to “front-load” the verifications previously done in the orchestration presented in Handling Errors in SAP BAPI Transactions.

- Write a custom pipeline component to promote properties (such as BTS.InterchangeID) needed for the same orchestration instance to process all messages in a batch as a single SAP Logical Unit of Work (LUW).

- Implement layered verifications to improve the granularity of error handling presented in Handling Errors in SAP BAPI Transactions and find errors before they get to the send adapter.

All code is provided in the archive attached to this blog post.

NOTE: Starting with BizTalk Server 2016 CU6 and BizTalk Server 2020 CU1, BAPI transactions need to be enabled explicitly, by creating the registry value HKLM\SOFTWARE\Microsoft\BizTalk Server\3.0\Configuration\EnableBizTalkSapSessionProvider of type DWORD and setting it to ‘1’, as documented in the fix page.

1. BAPI Operations and Schemas

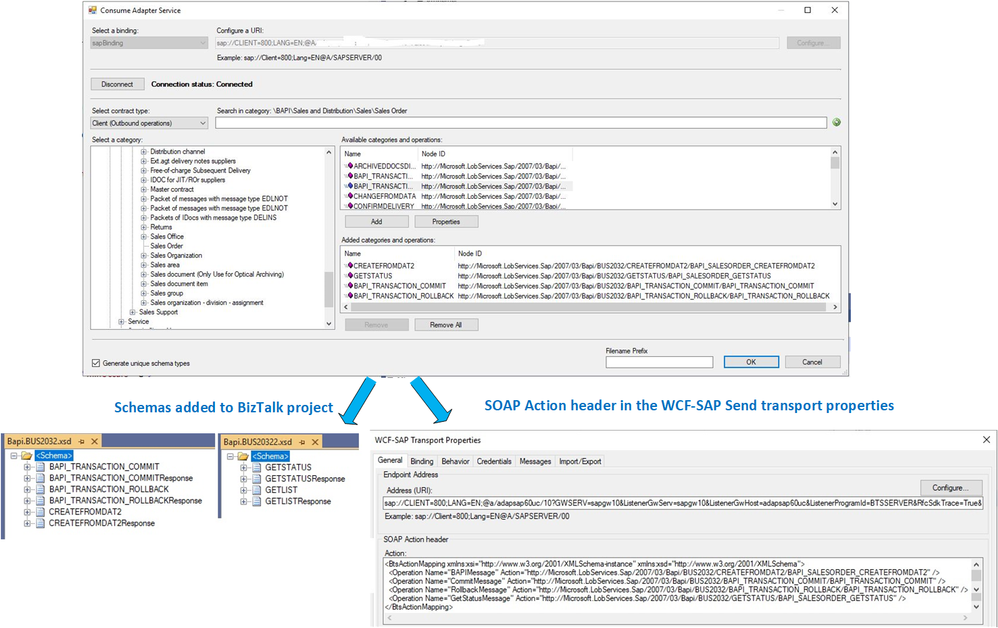

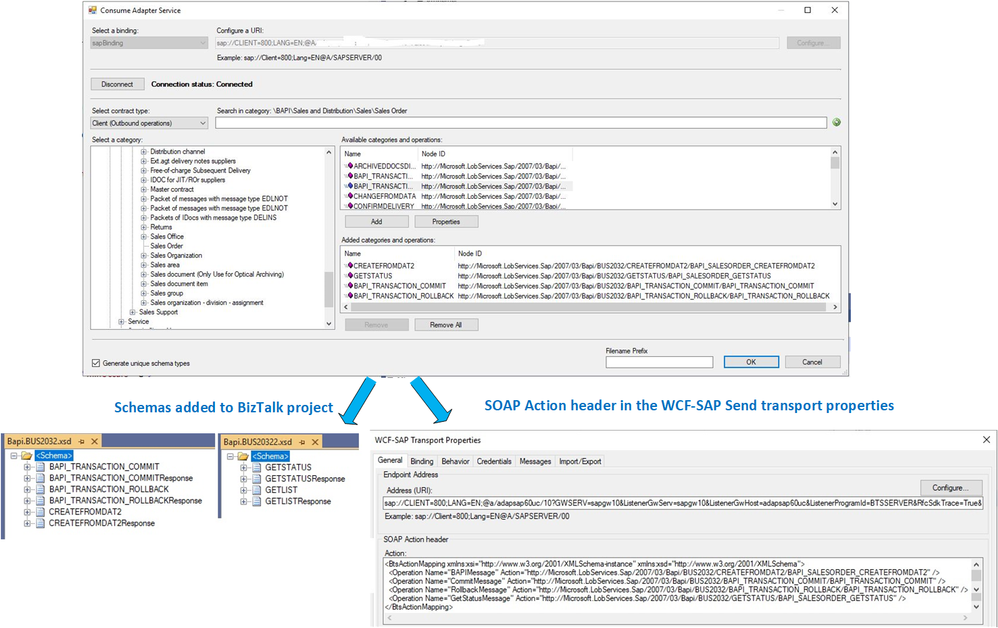

In the demo scenario, sales orders are received in the form of XML documents and transformed to BAPI Sales Order (BUS2032). The first prerequisite is to download schemas and metadata for BAPI transactions by using the “Consume Adapter Service” in Visual Studio, as explained in this section of the SAP Adapter documentation.

The figure below depicts the generated schemas for the BAPI methods BUS2032.CREATEFROMDAT2 (shortened to CREATEFROMDAT2 in the rest of this article) and BAPI_TRANSACTION_COMMIT.

BAPI Schema Generation

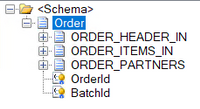

On the receiving side of BizTalk, we consider the general case where sales orders follow a simplified schema (Order) that may not necessarily correspond to the BAPI schema. A single XML document contains multiple orders, which are debatched in the receive location.

Order Schema

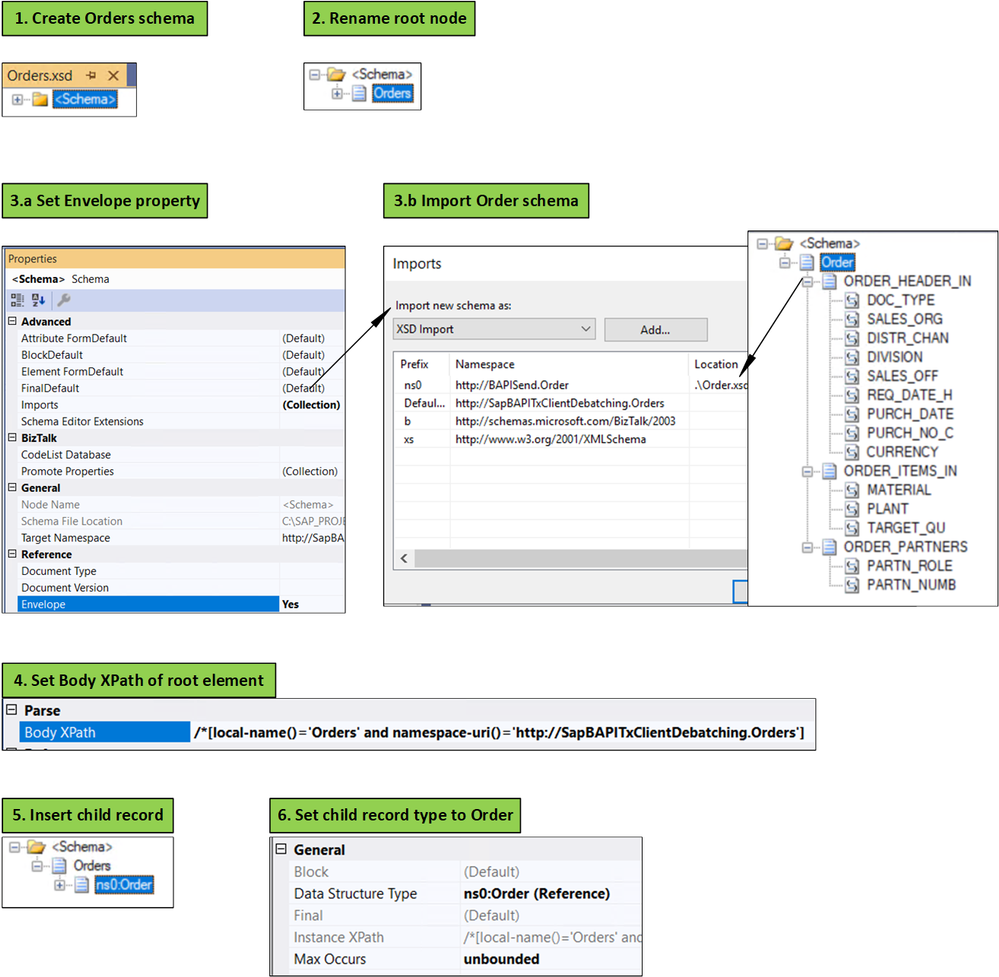

The first step for using a debatching pipeline is to define an envelope schema to represent multiple orders. This is done according to the following process, initially documented in Calling a pipeline inside an orchestration:

- Add a new schema file to the project.

- Change the root node name to “Orders”.

- In the properties window for the <schema> treeview node: (a) change the Envelope property to Yes, and (b) import the Order schema.

- In the properties window for the “Orders” root node, set the Body XPath property to /*[local-name()='Orders' and namespace-uri()='<namespace of the schema here>']

- Insert a child record under the root node.

- In the child record properties, set the data structure type field to Order. As a result, the Order definition will appear under Orders.

Creating the Orders Envelope Schema

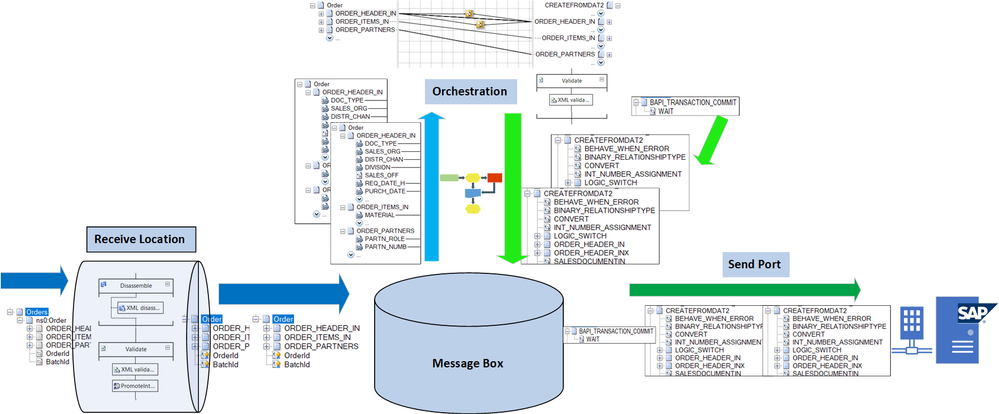

The message flow with the different schemas is shown in the following figure.

Message Flow

Note: The send port is actually a Static Solicit-Response port. Responses from SAP are not shown here.

We assumed that received messages follow a simplified client-defined schema (Orders/Order) rather than CREATEFROMDAT2 directly, in order to make the demo scenario more general. Everything presented below is applicable with minor changes if batches of CREATEFROMDAT2 are received instead. A client schema makes sense in an integration context, where the source is decoupled from the destination; for instance, if the organization sending sales orders does not need to be aware that these sales orders are processed in BizTalk by creating CREATEFROMDAT2 BAPI transactions. Furthermore, BAPI schemas depend on the SAP system, and they can contain several hundred elements, many of them not necessarily applicable to every sales order.

2. From Interchanges to LUWs

The key to understanding what designs can accommodate BAPI transactions is interchanges.

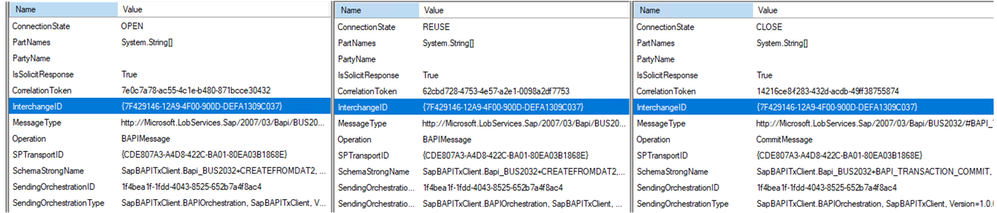

As explained in Run BAPI Transactions in SAP using BizTalk Server, the message context property Microsoft.Adapters.SAP.BiztalkPropertySchema.ConnectionState is used to associate a BAPI transaction to a Logical Unit of Work (LUW) on the SAP server. So, the first transaction has the property set to “OPEN”, subsequent transactions set it to “REUSE”, and the last transaction has either “CLOSE” or “ABORT”. This implies that messages are (1) grouped, and (2) ordered, to the extent that we can differentiate between first message, middle messages, and last message.
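To make the OPEN / REUSE / CLOSE (or ABORT) sequencing concrete, here is a small sketch, written in Python purely for illustration; it is not BizTalk or SAP adapter code. The function name `plan_luw` is hypothetical. It assumes that the LUW consists of one or more CREATEFROMDAT2 calls followed by a final BAPI_TRANSACTION_COMMIT, and that an aborted LUW would end with the standard SAP BAPI_TRANSACTION_ROLLBACK instead, which is an assumption not spelled out in the text above.

```python
def plan_luw(order_count, abort=False):
    """Return (operation, ConnectionState) pairs for one LUW, in send order."""
    if order_count < 1:
        raise ValueError("an LUW needs at least one BAPI call")

    plan = []
    for index in range(order_count):
        # The first message opens the connection; later ones reuse it.
        state = "OPEN" if index == 0 else "REUSE"
        plan.append(("CREATEFROMDAT2", state))

    # The final message ends the LUW: CLOSE on commit, ABORT on rollback (assumed pairing).
    if abort:
        plan.append(("BAPI_TRANSACTION_ROLLBACK", "ABORT"))
    else:
        plan.append(("BAPI_TRANSACTION_COMMIT", "CLOSE"))
    return plan
```

Because the state depends only on the position of a message in the sequence, the messages of a batch have to arrive grouped and in order, which is why the design relies on the interchange and on ordered delivery.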

A series of transaction messages (i.e. OPEN-REUSE-REUSE-…-CLOSE) requires the same connection for outbound communication with the SAP server, and this connection is mapped to an LUW on the SAP server side by interchange id and send port id. The message properties look like:

BAPI Messages Context Properties

To put it in layman’s terms, for BAPI transactions to be in the same LUW from OPEN to CLOSE/ABORT, they need to come from the same “place” (the interchange id) and to be sent out to the same “destination” (the send port) with the same route (the “session”). The use of {interchange id + send port id} is compatible with the “session” paradigm provided by the SAP Connector for Microsoft .NET (NCo) to expose the BAPI Transaction Model documented by SAP.

Common interchange id means that outbound messages are part of the same interchanges, which is achieved in the following setups:

- If messages are received by the same orchestration instance. It is the design presented in SAP BAPI Transactions Walkthrough, where incoming order messages provide a promoted id property used as correlation set in order to use the same orchestration instance for a series of related orders.

- If messages are debatched, as in Debatching SAP BAPI Transactions where debatching was done by an in-orchestration receive pipeline.

The current design is a combination of both setups: messages are debatched in the receive location and their BTS.InterchangeID property is used as correlation set by orchestration instances. Details are presented next.

3. Debatching Orders in the Receive Location

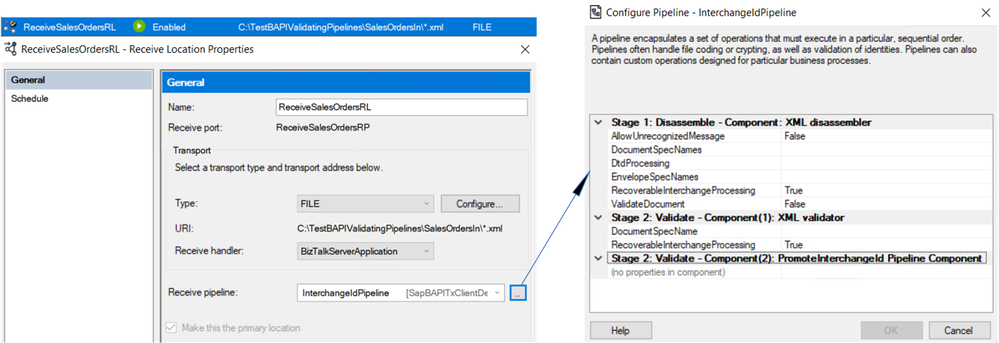

Let’s look at the receive location settings.

Receive Location Settings

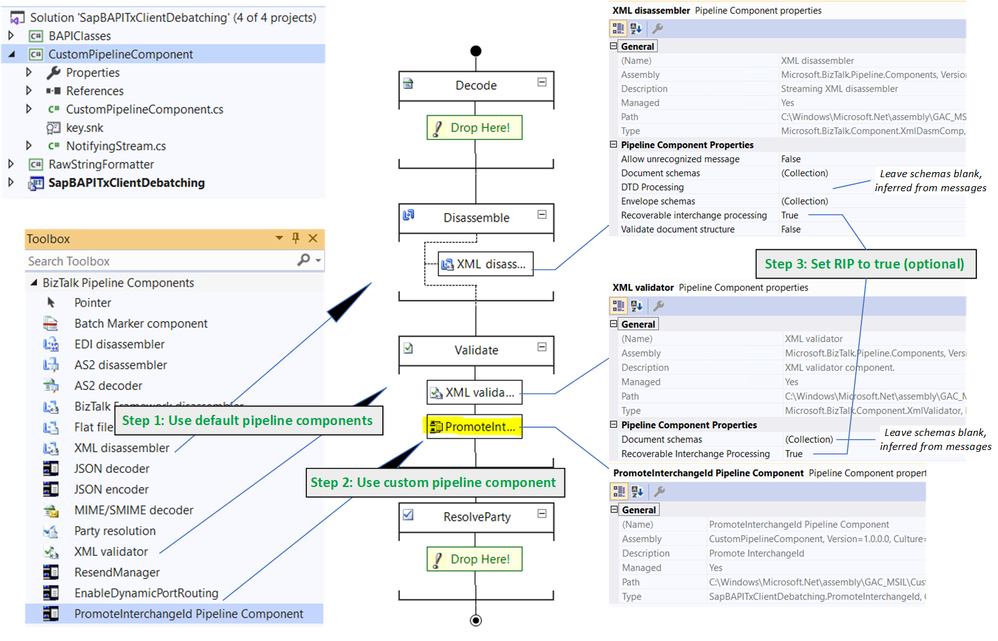

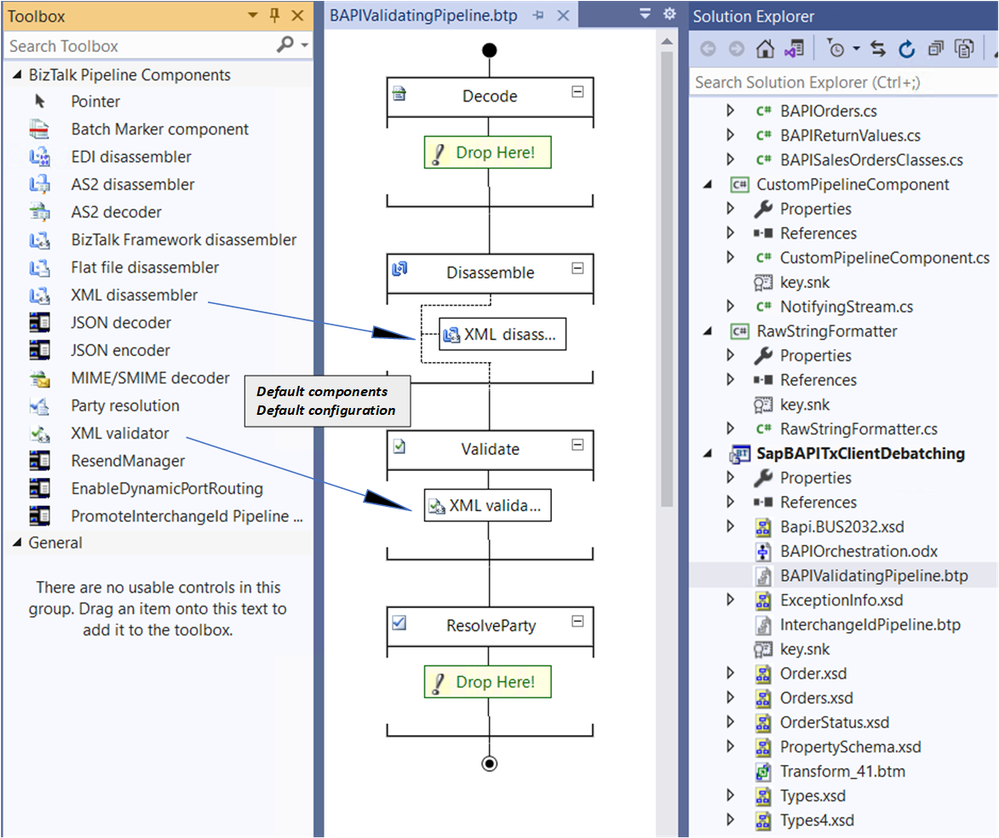

We use a custom pipeline, shown below, in order to promote the BTS.InterchangeID property, which is otherwise not accessible from within orchestrations.

Custom Receive Pipeline

The custom pipeline uses the default XML disassembler and validator, which provide access to Recoverable Interchange Processing (RIP), a feature that allows processing to go on if some messages within a batch are invalid. With standard interchange processing, the existence of any invalid message in a given interchange causes the entire interchange to be suspended even if it contains one or more valid messages. In the configuration of XML disassembler and validator, schemas and envelope schemas can be left blank; BizTalk automatically resolves the envelope and inner schemas from the messages.
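The difference between standard and recoverable interchange processing can be pictured with a toy model, sketched here in Python. This is not BizTalk code: the function `process_interchange`, the message values, and the validity check are all hypothetical, and the model only captures which messages go on and which are suspended.

```python
def process_interchange(messages, is_valid, recoverable):
    """Return (published, suspended) message lists for one interchange."""
    valid = [m for m in messages if is_valid(m)]
    invalid = [m for m in messages if not is_valid(m)]

    if invalid and not recoverable:
        # Standard processing: one invalid message suspends the whole interchange.
        return [], list(messages)

    # Recoverable processing: only the invalid messages are suspended.
    return valid, invalid


# Hypothetical batch of three orders, one of which fails validation.
batch = ["order-1", "order-2-invalid", "order-3"]
looks_valid = lambda message: "invalid" not in message

standard_result = process_interchange(batch, looks_valid, recoverable=False)
recoverable_result = process_interchange(batch, looks_valid, recoverable=True)
```

In this model, `standard_result` publishes nothing and suspends all three orders, while `recoverable_result` publishes the two valid orders and suspends only the invalid one.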

The last validation stage is the custom pipeline component itself, which is based on a reusable pattern introduced in the WCF LOB Adapter SDK.

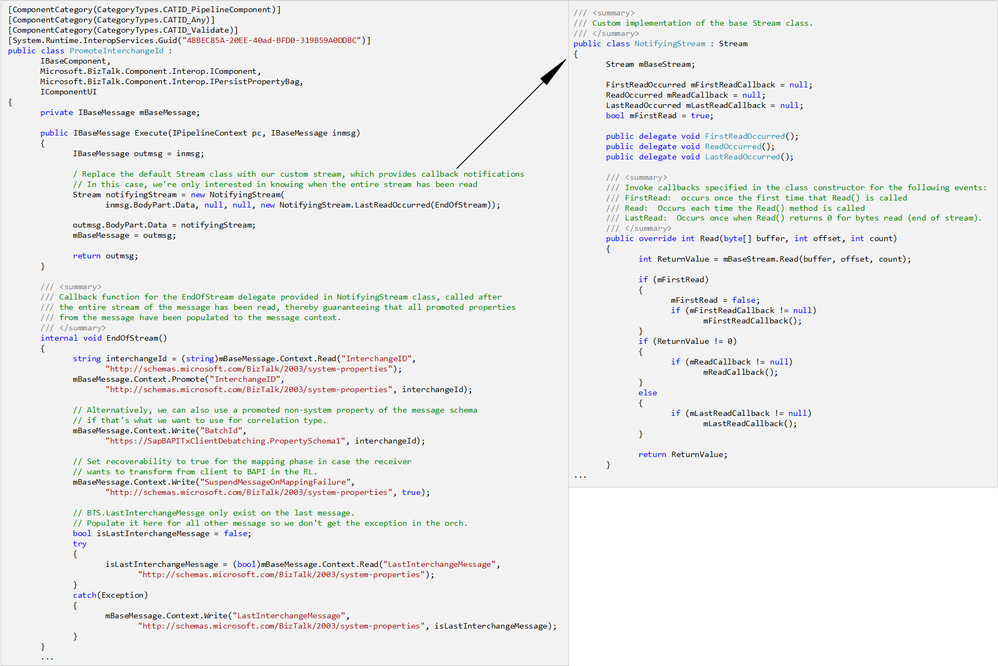

The relevant portions of the code (which may be found in the attached archive) are shown below. A class called NotifyingStream, designed as a decorator of the Stream class, is used to invoke callbacks for specific events corresponding to when the stream is read. In our case, the custom pipeline component PromoteInterchangeId receives a notification when the entire stream has been read, thereby guaranteeing that all promoted properties from the message have been populated to the message context.

public IBaseMessage Execute(IPipelineContext pc, IBaseMessage inmsg)

{

IBaseMessage outmsg = inmsg;

Stream notifyingStream = new NotifyingStream(inmsg.BodyPart.Data, null, null, new NotifyingStream.LastReadOccurred(EndOfStream));

outmsg.BodyPart.Data = notifyingStream;

mBaseMessage = outmsg;

return outmsg;

}

internal void EndOfStream()

{

string interchangeId = (string)mBaseMessage.Context.Read("InterchangeID", "http://schemas.microsoft.com/BizTalk/2003/system-properties");

mBaseMessage.Context.Promote("InterchangeID", "http://schemas.microsoft.com/BizTalk/2003/system-properties", interchangeId);

// This is the place to add more promotions as needed, as explained in the blog post

}

public class NotifyingStream : Stream

{

Stream mBaseStream;

public delegate void LastReadOccurred();

LastReadOccurred mLastReadCallback;

public NotifyingStream(Stream BaseStream, …, LastReadOccurred LastReadCallback)

{

mBaseStream = BaseStream;

mLastReadCallback = LastReadCallback;

}

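public override int Read(byte[] buffer, int offset, int count)

{

// Forward the read to the wrapped stream and keep the byte count so it can be returned.

int bytesRead = mBaseStream.Read(buffer, offset, count);

if (bytesRead == 0 && mLastReadCallback != null)

{

// Zero bytes read means the end of the stream has been reached.

mLastReadCallback();

}

return bytesRead;

}

// All other methods call the ones from Stream directly.

}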
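The decorator idea behind NotifyingStream can also be shown in isolation. The sketch below is in Python rather than C#, and it is only an analogy of the pattern, not the code from the attached archive: the class wraps another stream, forwards reads to it, and invokes a callback once a read returns no more data. The once-only guard is an addition for this sketch and is not in the excerpt above.

```python
import io


class NotifyingStream:
    """Wrap a stream and call back when a read reaches the end of the data."""

    def __init__(self, base_stream, last_read_callback=None):
        self._base = base_stream
        self._callback = last_read_callback
        self._notified = False  # Guard added in this sketch: notify only once.

    def read(self, size):
        data = self._base.read(size)
        # An empty result means the wrapped stream has been fully consumed.
        if not data and self._callback is not None and not self._notified:
            self._notified = True
            self._callback()
        return data


# Usage: the callback fires only after the consumer has drained the stream.
events = []
stream = NotifyingStream(io.BytesIO(b"<Orders/>"), lambda: events.append("end of stream"))

chunks = []
while True:
    chunk = stream.read(4)
    if not chunk:
        break
    chunks.append(chunk)
```

The point of the pattern is timing: the component that wraps the stream does not read it itself, so the callback runs only when a later consumer has read everything, which matches the guarantee described for the promoted properties.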

In addition to the promotion of BTS.InterchangeID, the custom pipeline component also sets the following properties, added for illustration purposes and for design alternatives considered later on:

- BatchId, which can be used as an alternative to using BTS.InterchangeID directly.

- BTS.SuspendMessageOnMappingFailure property, which can be used to add RIP behavior at the mapping phase in a receive port, as documented in Mapping Phase (Recoverable Interchange Processing).

- BTS.LastInterchangeMessage, which is used to indicate the last message in a batch. It is by default not set for other messages, thereby causing an exception if accessed from an expression in an orchestration. Adding the property to each message simplifies the orchestration later on.

The custom component was installed according to Deploying Custom Pipeline Components.

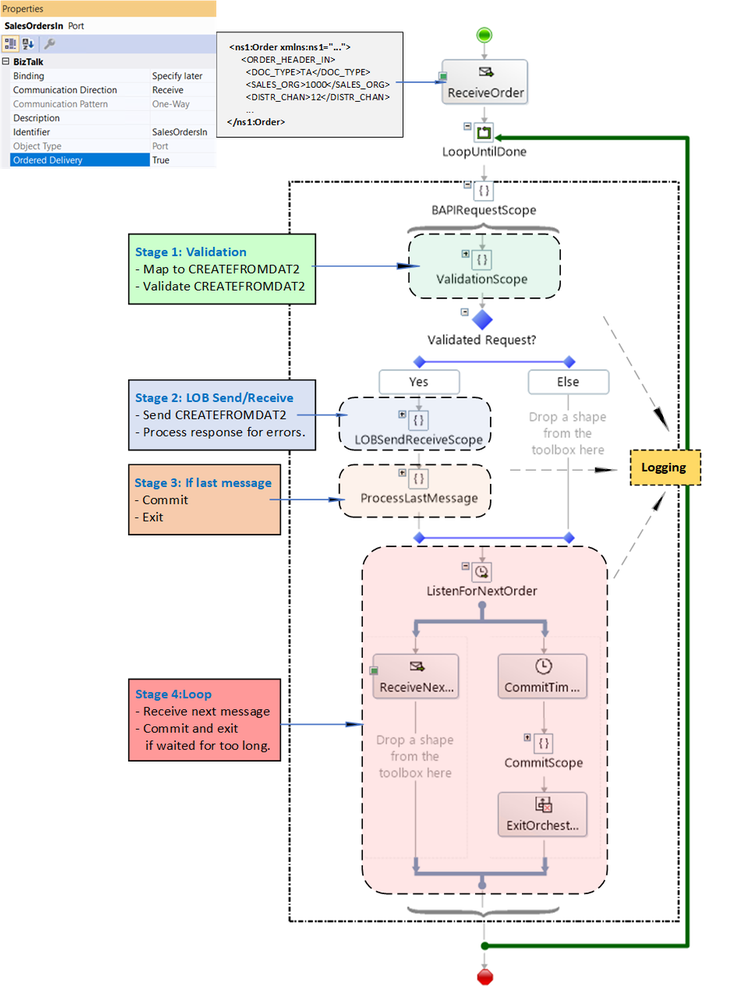

4. Orchestration Overview

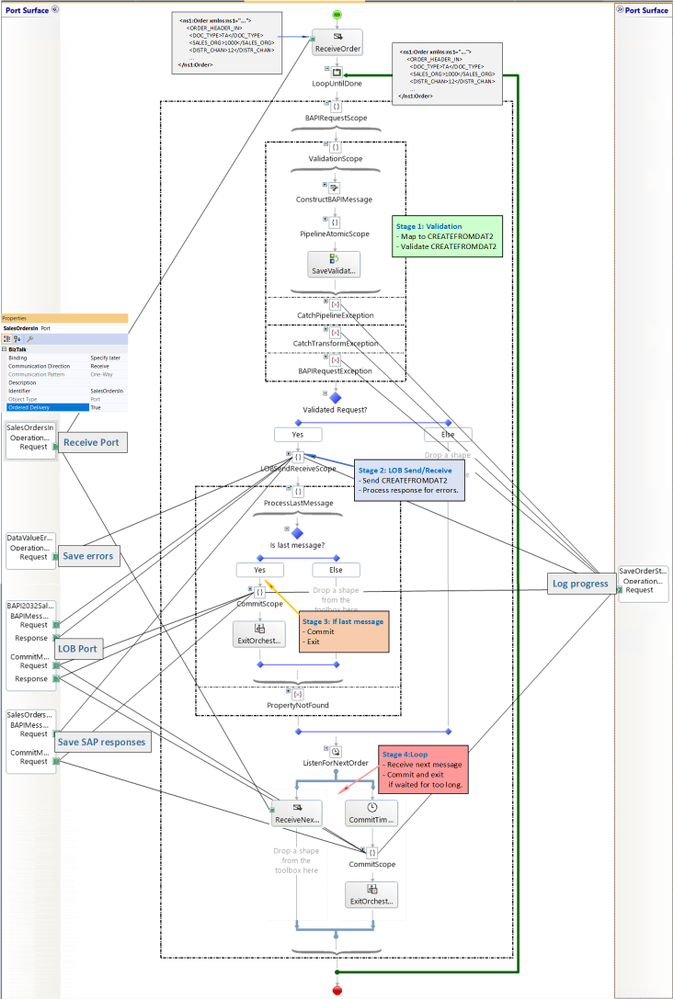

The long-running orchestration relies on (1) the interchange id to pin messages within a batch to the same instance, and (2) Ordered Delivery of Messages to differentiate first-middle-last messages of the interchange. Processing can be decomposed into four stages, illustrated below:

- Validation

- LOB send/receive

- If last message, commit and exit

- Other messages: loop.

Orchestration Stages

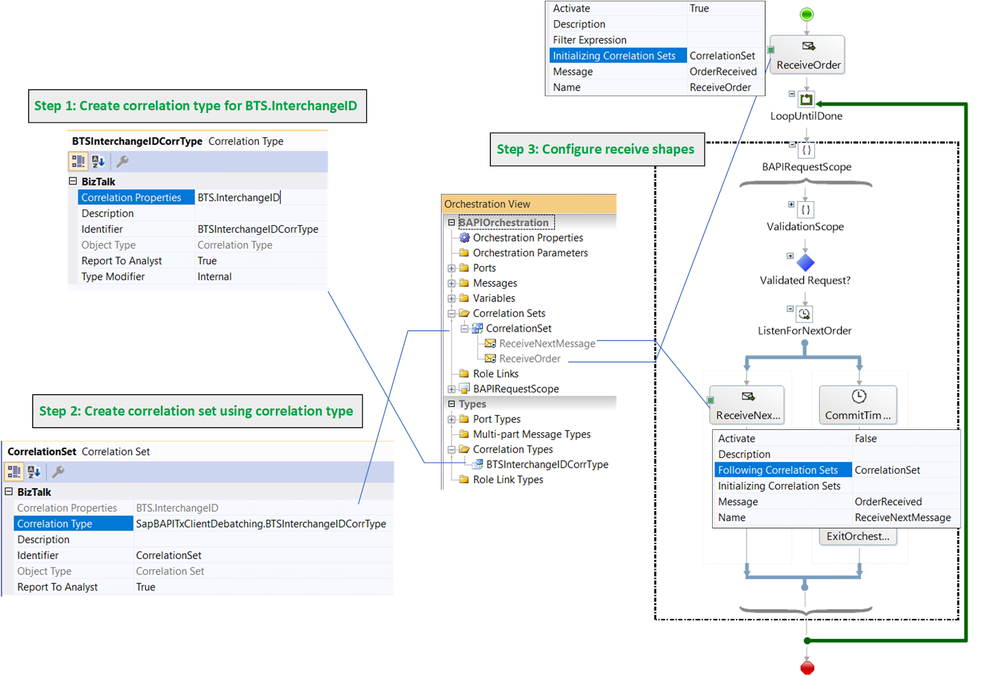

As previously mentioned, a single orchestration instance processes all messages in a batch, which are correlated based on their interchange id. More info regarding correlations may be found in Sequential Convoy & Singleton Orchestration. This is illustrated in the following figure:

BTS.InterchangeID as Orchestration Correlation

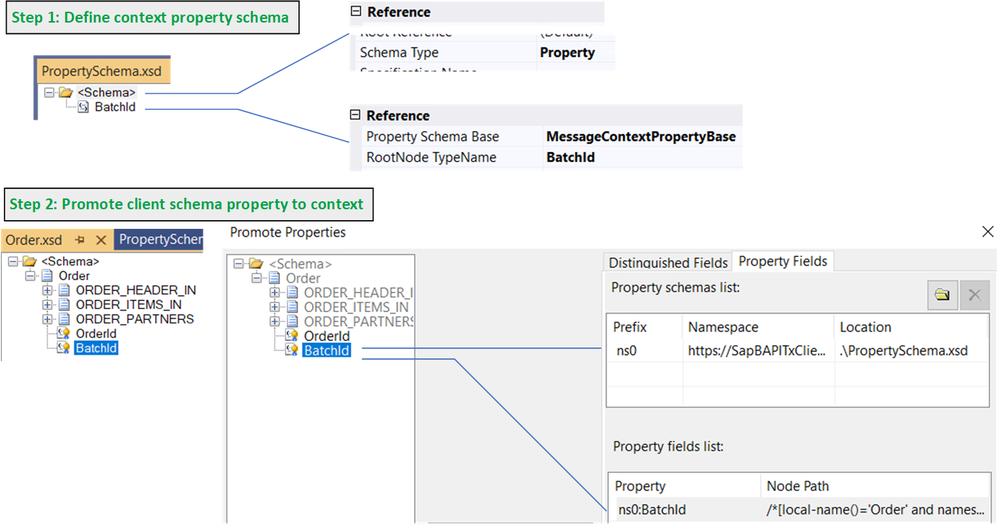

An alternative to promoting and using the system property BTS.InterchangeID directly (for instance because we don’t want to change it by mistake) is to use a custom context property (BatchId) as depicted below.

BatchId User-Created Context Property

BatchId can be set to the BTS.InterchangeID value inside the custom pipeline component:

mBaseMessage.Context.Write("BatchId", "https://SapBAPITxClientDebatching.PropertySchema1", interchangeId);

Discussion: Logging and Error Handling

Before presenting each orchestration stage in detail, it is worth mentioning that logging is for demo purposes, to show the different places of interest that could be adapted to “real-world” applications, where further processing could be hooked up.

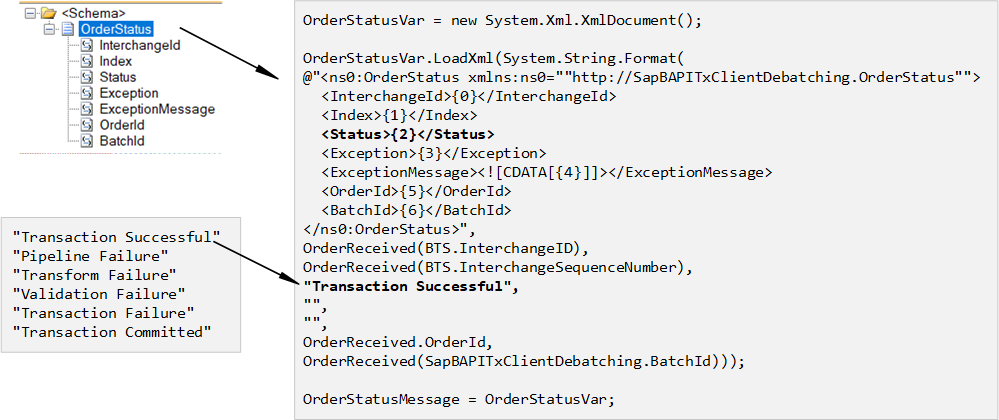

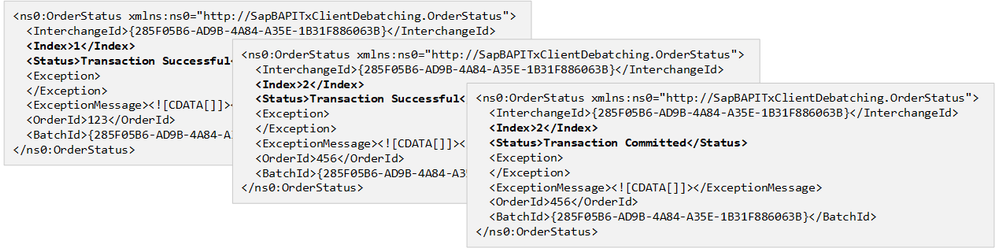

At each stage, whether it is in the regular flow or in exception handling, we keep track of the order status with the following schema and expression:

Logging

A sequence of successful order creations looks like:

Status Logs for Successful Transaction Sequence

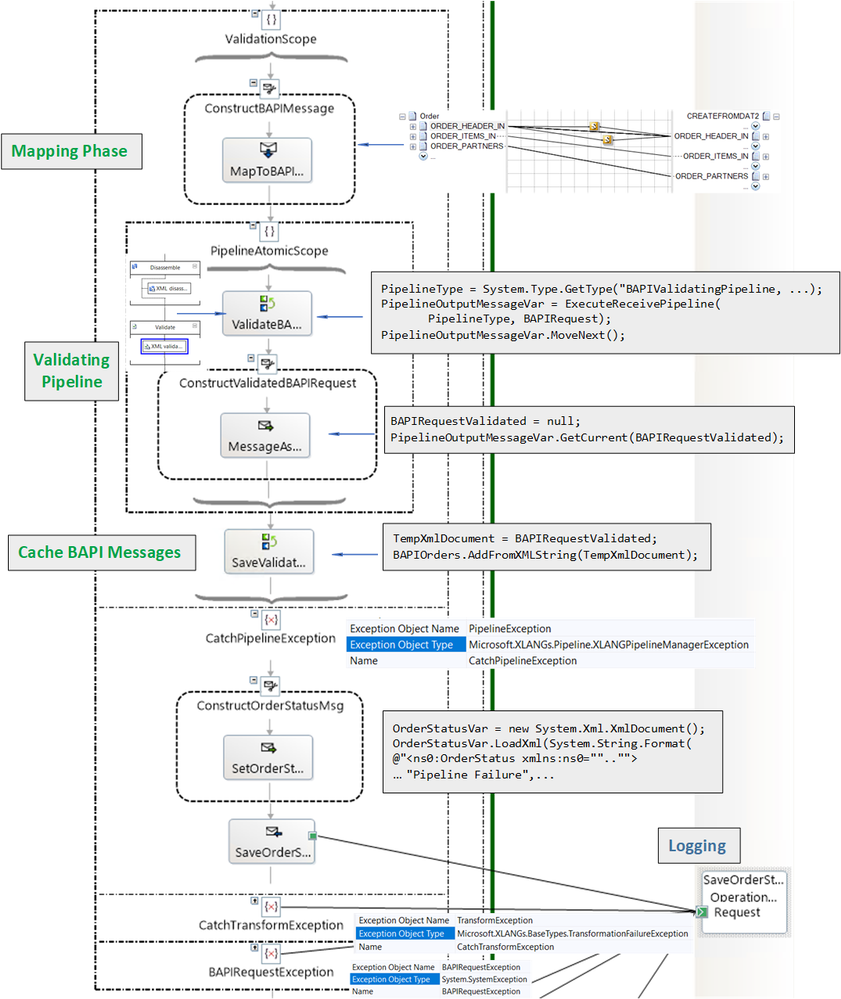

5. Orchestration Validation Stage

The receive port takes care of validating Order messages. The goal of the validation stage in the orchestration is to further validate data during and after transformation of Order to CREATEFROMDAT2, rather than letting the SAP adapter take care of it, as we saw in Handling Errors in SAP BAPI Transactions. This is not to say that one way is better than another, just that processing is distributed differently. For instance in the current design, errors caused by invalid data are caught before the data is handled in the adapter, which results in a simpler design later on, while limiting exception processing at the LOB stage to more systemic errors like connectivity. As illustrated in the figure below, in the current design, validation is layered.

Validation Phases in Orchestration

Each validation phase is explained in detail next.

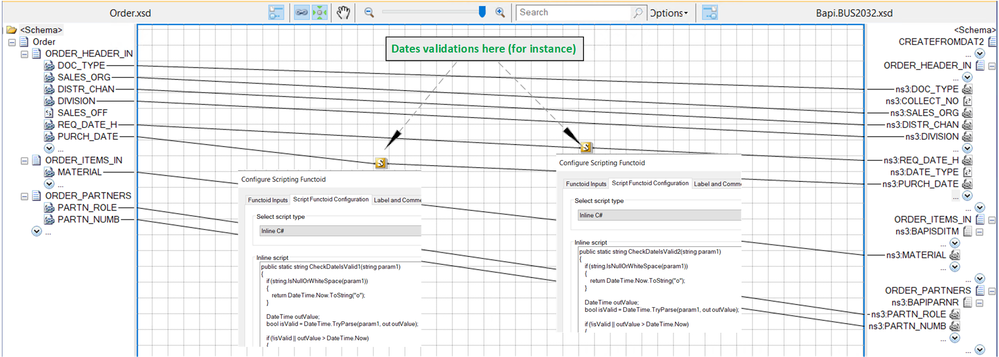

5.1 Mapping Phase

First, the mapping of Order to CREATEFROMDAT2 is also a place where value verification can happen, for instance with script functoids.

Using Script Functoids for Validations

As an example, purchase and requisition dates can be enforced to be in the past. The script functoid in the provided code looks like:

public static string CheckDateIsValid1(string param1)

{

if (string.IsNullOrWhiteSpace(param1))

{

return DateTime.Now.ToString("o");

}

DateTime outValue;

bool isValid = DateTime.TryParse(param1, out outValue);

if (!isValid || outValue > DateTime.Now)

{

// For demo purposes: throw an exception explicitly.

throw new Exception("Invalid PURCH_DATE.");

}

// Make sure the datetime follows the xsd datatype

return outValue.ToString("o");

}

There is a similar functoid for other data fields.

Note: Even if script functoids are identical for different fields, the method names need to be different.

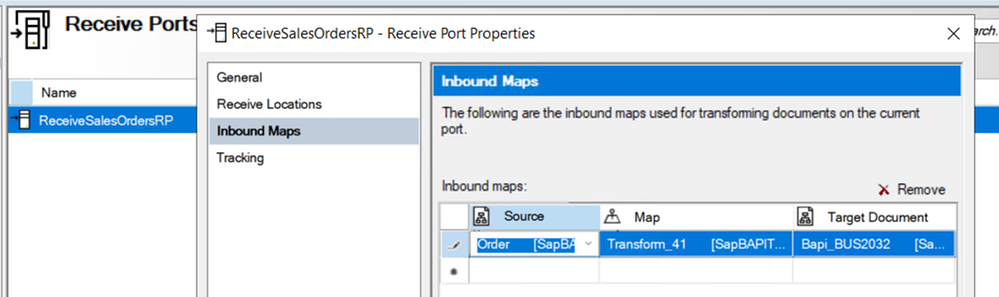

Discussion: Transform at port level?

The receive port can be configured to do the mapping instead of leaving it to the orchestration. The setting is:

Transform in Receive Port

In this case, the custom pipeline component also needs to set the BTS.SuspendMessageOnMappingFailure property to make sure that processing goes on if a failure happens on a single Order, as documented in Mapping Phase (Recoverable Interchange Processing). It would be done as follows:

// Set recoverability to true for the mapping phase in case the receiver wants to transform from client to BAPI in the RL.

mBaseMessage.Context.Write("SuspendMessageOnMappingFailure", "http://schemas.microsoft.com/BizTalk/2003/system-properties", true);

The reason for doing the mapping in the orchestration in our demo scenario is that it allows all BAPI validations to happen in a single orchestration stage.

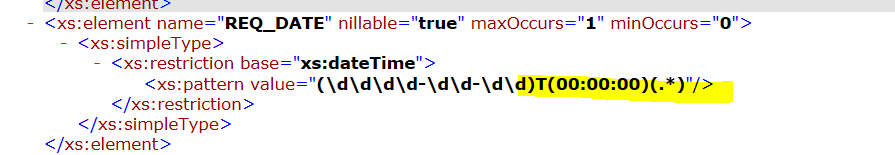

5.2 BAPI Validating Pipeline

We need the XML validator in order to catch additional restrictions in the BAPI schemas. For instance, dates have these restrictions:

Constraint on Date Format from Generated Schema

More restrictions are documented in SAP Adapter Binding Properties.

Similarly to Debatching SAP BAPI Transaction Requests, a pipeline is called from the orchestration. This time however, a custom pipeline is needed in order to add XML validation to the default XML Receive Pipeline, which does not have XML validation by default (BizTalk Server: Default Pipelines).

BAPI Validating Pipeline

Before using the pipeline, the following dependencies must be added to the BizTalk project:

- Microsoft.XLANGs.Pipeline.dll

- Microsoft.BizTalk.Pipeline.dll

The pipeline must be inside a scope of type Atomic because it is of non-serializable type. As a result, the orchestration has to be of type “Long-Running”. Inside the atomic scope, the first expression starts the pipeline execution:

ValidatingPipelineType = System.Type.GetType("SapBAPITxClientDebatching.BAPIValidatingPipeline, SapBAPITxClientDebatching, Version=1.0.0.0, Culture=neutral, PublicKeyToken=9c30d143e3d921fe");

PipelineOutputMessageVar = Microsoft.XLANGs.Pipeline.XLANGPipelineManager.ExecuteReceivePipeline(ValidatingPipelineType, BAPIRequest);

PipelineOutputMessageVar.MoveNext();

where PipelineOutputMessageVar is a variable of type Microsoft.XLANGs.Pipeline.ReceivePipelineOutputMessages.

The CREATEFROMDAT2 output of the pipeline is obtained with the expression:

BAPIRequestValidated = null;

PipelineOutputMessageVar.GetCurrent(BAPIRequestValidated);

Discussion: The alternative to validation at this stage is to let data format errors happen in the SAP adapter and handle them later on, as shown in Handling Errors in SAP BAPI Transactions. If we did not handle them, we would see the following on the first message:

A message sent to adapter "WCF-SAP" on send port "BAPI2032SalesOrdersSP" with URI "sap://CLIENT=800;LANG=EN;@a/…" is suspended.

Error details: Microsoft.ServiceModel.Channels.Common.XmlReaderParsingException:

An error occurred when trying to convert the XML string thisistoolong of RFCTYPE RFCTYPE_CHAR

with length 4 and decimals 0 to a .NET type. Parameter/field name: SALES_ORG

Error message: The length of the value for the field exceeds the allowed value. Value: 13 Field:

SALES_ORG Allowed value: 4. ---> Microsoft.ServiceModel.Channels.Common.XmlReaderParsingException:

The length of the value for the field exceeds the allowed value. Value: 13

Field: SALES_ORG Allowed value: 4

at Microsoft.Adapters.SAP.SapMetadataUtilities.ConvertXmlStringToRfcStringNCo(

String data, RfcTypes type, Int32 singleByteLength, Int32 decimals, String fieldname,

Encoding encoding, Encoding realPartnerEncoding, Boolean padToExactLength, SAPConnection sapconnection)

and subsequent messages would fail due to the WCF channel left in an inconsistent state in the SAP adapter, typically with exceptions in the event log like:

A message sent to adapter "WCF-SAP" on send port "BAPI2032SalesOrdersSP" with URI "sap://CLIENT=800;LANG=EN;@a/…" is suspended.

Error details: System.InvalidOperationException: The Inner Channel to use was not found for the key

{0A73F66F-31FA-4E48-BAC5-14EAED9571D4}_{27D07099-1874-47C3-9E69-2AD1FA42DE8D};URI=sap://CLIENT=800;LANG=EN;@a/….

Specify a valid key.
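To make the trade-off concrete, here is a minimal Python sketch (not the BizTalk pipeline itself) of the kind of length check that catches the SALES_ORG error before anything reaches the adapter. The function name and the lookup table are hypothetical; only the SALES_ORG limit of 4 is taken from the log above.

```python
# Hypothetical table of maximum field lengths.
# Only SALES_ORG = 4 comes from the adapter error shown above.
MAX_FIELD_LENGTHS = {"SALES_ORG": 4}


def find_length_violations(fields, max_lengths=MAX_FIELD_LENGTHS):
    """Return (field, actual_length, allowed_length) for every oversized value."""
    violations = []
    for name, value in fields.items():
        allowed = max_lengths.get(name)
        if allowed is not None and len(value) > allowed:
            violations.append((name, len(value), allowed))
    return violations


# The value from the log: 13 characters where 4 are allowed.
order_header = {"SALES_ORG": "thisistoolong"}
for name, actual, allowed in find_length_violations(order_header):
    print(f"{name}: length {actual} exceeds allowed value {allowed}")
```

Rejecting the document at this point means the send port never sees it, so the WCF channel is not left in the inconsistent state described above.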

5.3 Caching BAPI Messages

CREATEFROMDAT2 messages are saved in a list object to be used outside the pipeline scope:

TempXmlDocument = BAPIRequestValidated;

BAPIOrders.AddFromXMLString(TempXmlDocument);

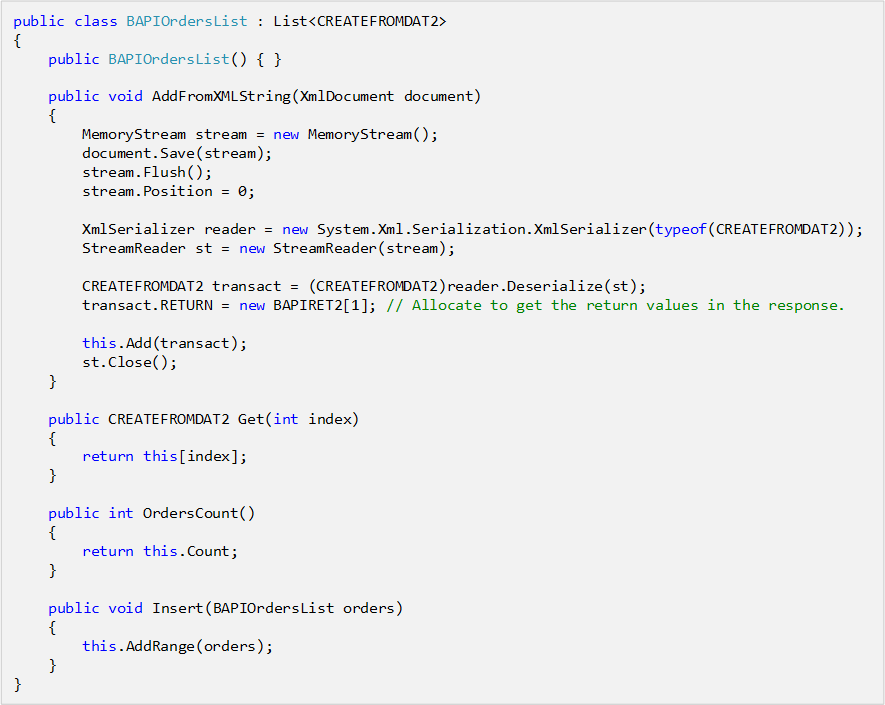

where TempXmlDocument is a variable of type XmlDocument, and BAPIOrders is of custom type BAPIOrderList added in a helper library:

BAPIOrdersList Class


The helper library also contains C# classes corresponding to the BAPI transaction schemas. These classes were generated by running the Xsd.exe utility on the BAPI schemas generated in Visual Studio.

5.4 Validation Examples

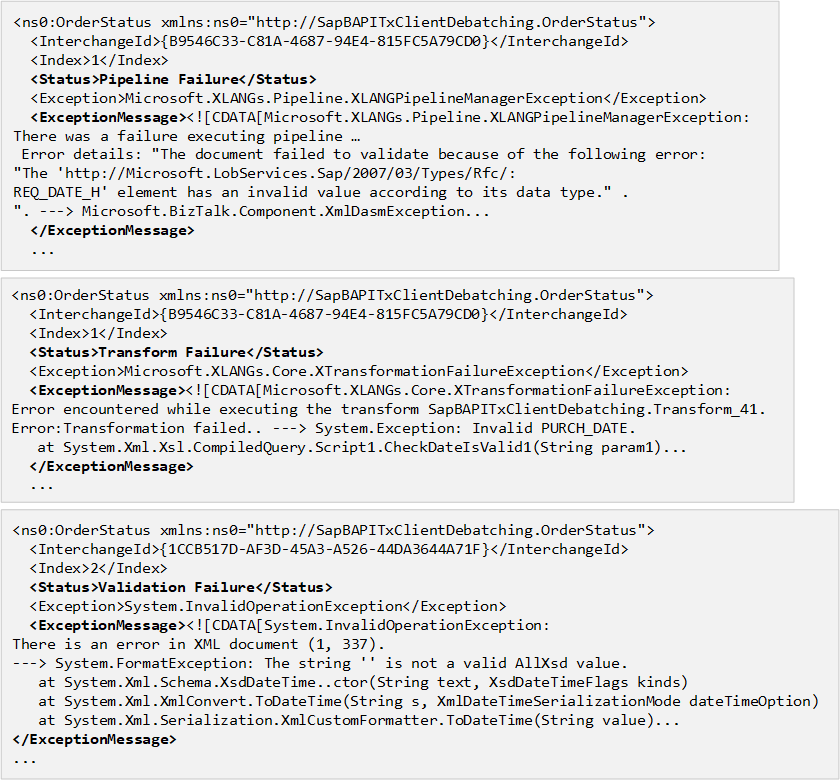

The following logs were obtained from the various validation layers:

Examples of Validation Failures


Note the use of CDATA to prevent parsing of characters that would otherwise invalidate the XML format.

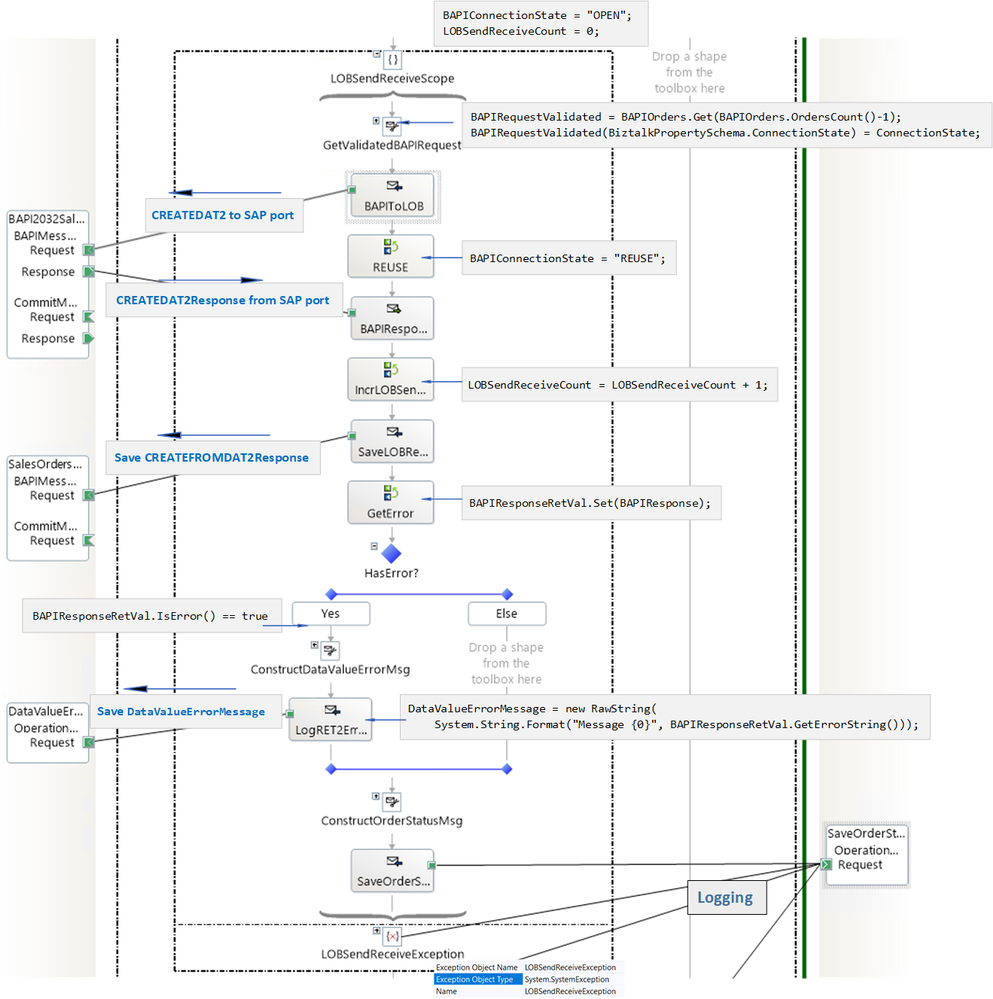

6. Orchestration LOB Send/Receive Stage

The subsequent orchestration stage is similar to what has been presented in Handling Errors in SAP BAPI Transactions. Here is a visual overview before getting into the details:

Note that the connection state is set to REUSE only after a successful send.

We look at the messages being constructed and the data being logged. What gets saved:

1. CREATEFROMDAT2Response documents received from SAP;

2. Errors contained in CREATEFROMDAT2Response documents, formatted as strings;

3. Exception strings.

As explained in Using BAPIs in Distributed Systems (ALE), a value of “E” in any of the TYPE fields of BAPIRET2 in a CREATEFROMDAT2Response indicates transaction failure. For example:

<RETURN>

<BAPIRET2 xmlns="http://Microsoft.LobServices.Sap/2007/03/Types/Rfc/">

<TYPE>E</TYPE>

<ID>CZ</ID>

<NUMBER>95</NUMBER>

<MESSAGE>Sales organization abcd does not exist</MESSAGE>

…

</BAPIRET2>

<BAPIRET2 xmlns="http://Microsoft.LobServices.Sap/2007/03/Types/Rfc/">

<TYPE>E</TYPE>

<ID>V4</ID>

<NUMBER>219</NUMBER>

<MESSAGE>Sales document was not changed</MESSAGE>

…

</BAPIRET2>

</RETURN>
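The rule can be illustrated with a short Python sketch that scans a RETURN element for BAPIRET2 entries whose TYPE is “E”. This is not the helper class used by the orchestration; the function name and the output format are assumptions made for illustration, and the sample only contains the fields shown in the example above.

```python
import xml.etree.ElementTree as ET

RFC_NS = "http://Microsoft.LobServices.Sap/2007/03/Types/Rfc/"

SAMPLE_RETURN = """<RETURN>
  <BAPIRET2 xmlns="http://Microsoft.LobServices.Sap/2007/03/Types/Rfc/">
    <TYPE>E</TYPE><ID>CZ</ID><NUMBER>95</NUMBER>
    <MESSAGE>Sales organization abcd does not exist</MESSAGE>
  </BAPIRET2>
  <BAPIRET2 xmlns="http://Microsoft.LobServices.Sap/2007/03/Types/Rfc/">
    <TYPE>E</TYPE><ID>V4</ID><NUMBER>219</NUMBER>
    <MESSAGE>Sales document was not changed</MESSAGE>
  </BAPIRET2>
</RETURN>"""


def extract_errors(return_xml):
    """Return one 'ID NUMBER: MESSAGE' string per BAPIRET2 entry of TYPE 'E'."""
    root = ET.fromstring(return_xml)
    errors = []
    for entry in root.iter(f"{{{RFC_NS}}}BAPIRET2"):
        if entry.findtext(f"{{{RFC_NS}}}TYPE") == "E":
            errors.append("{} {}: {}".format(
                entry.findtext(f"{{{RFC_NS}}}ID"),
                entry.findtext(f"{{{RFC_NS}}}NUMBER"),
                entry.findtext(f"{{{RFC_NS}}}MESSAGE"),
            ))
    return errors


# A non-empty list means the transaction failed.
for line in extract_errors(SAMPLE_RETURN):
    print(line)
```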

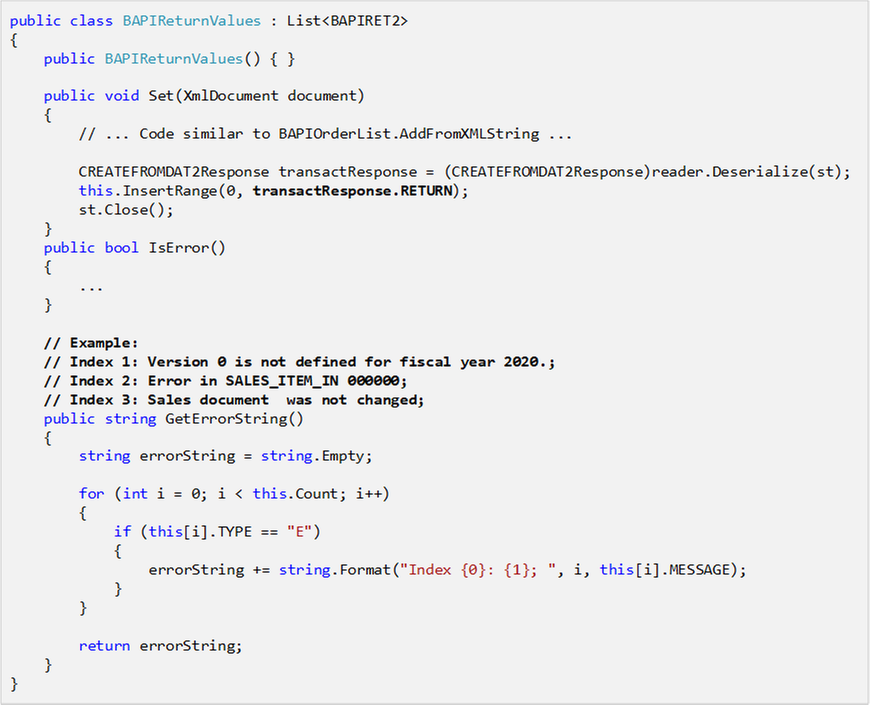

Errors are extracted by the orchestration with the following helper class (complete code in attached archive):

BAPIReturnValues


which is used as in the expression:

BAPIResponseRetVal.Set(BAPIResponse);

where BAPIResponse is of type CREATEFROMDAT2Response.

The method BAPIReturnValues.GetErrorString() produces the error string logged to a file by using the RawString class documented in Sending string to file location in BizTalk and included in the BizTalk code attached to this blog post.

DataValueErrorMessage = new Microsoft.Samples.BizTalk.XlangCustomFormatters.RawString(

System.String.Format("Message {0}", BAPIResponseRetVal.GetErrorString()));

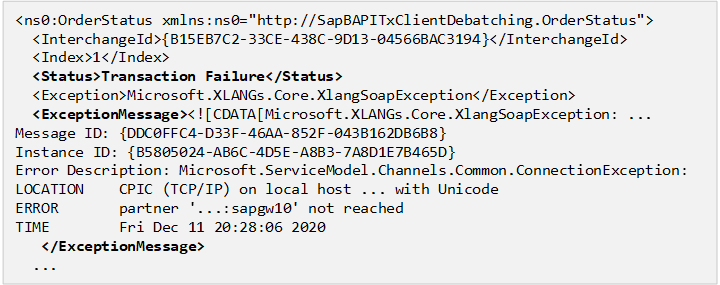

Handling Failures

Given that the data being sent to the server has been “sanitized” in previous stages, failures at this stage are expected to be more general, such as connectivity issues.

Connection Error


Although it is not done here, to keep the design as simple as possible, errors can be handled by re-enqueueing CREATEFROMDAT2 messages in the BAPIOrders cached list, as was done in Handling Errors in SAP BAPI Transactions, and resetting the connection state to “OPEN” so a new WCF channel may be opened to restart a new LUW. This can be done with minor changes to the existing orchestration.

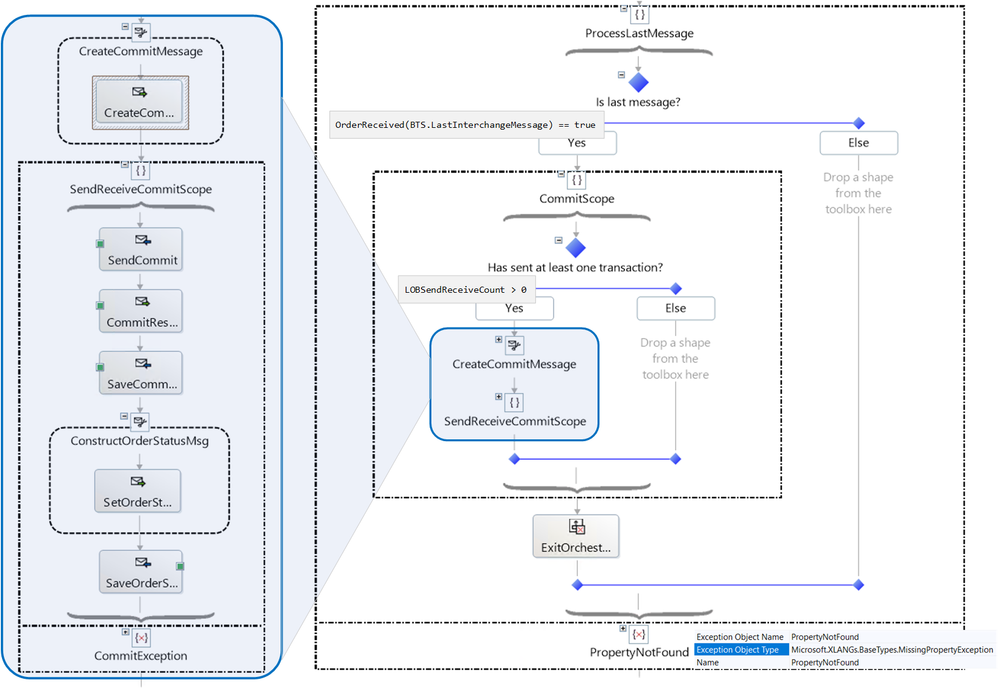

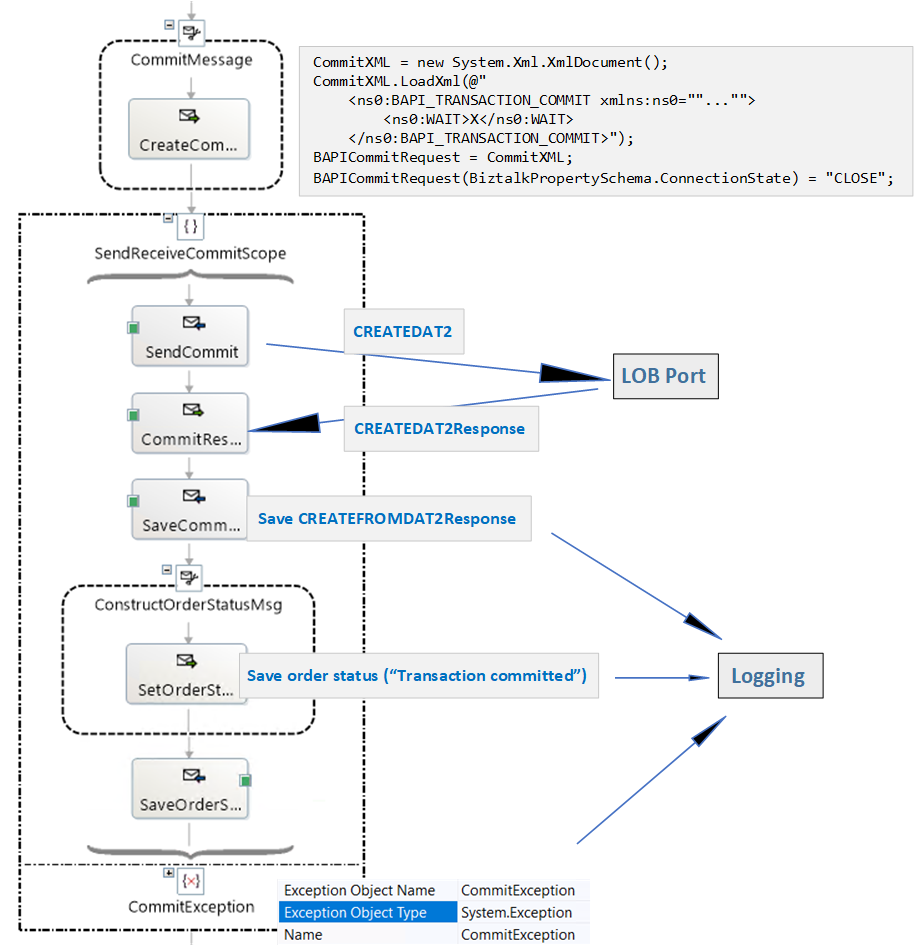

7. Last Message in Batch and Commit Stage

The last message in a batch is identified by the BTS.LastInterchangeMessage property, which by default does not exist in other messages. This causes the following decision expression:

OrderReceived(BTS.LastInterchangeMessage) == true

to throw a PropertyNotFound exception. Catching the exception indicates that a message is not the last in the batch. As an alternative, it is possible to add the property to all messages by doing the following in the custom pipeline component:

// BTS.LastInterchangeMessage only exists on the last message. Populate it here for all other messages so we don't get the exception in the orchestration.

bool isLastInterchangeMessage = false;

try

{

isLastInterchangeMessage = (bool)mBaseMessage.Context.Read("LastInterchangeMessage", "http://schemas.microsoft.com/BizTalk/2003/system-properties");

}

catch(Exception)

{

mBaseMessage.Context.Write("LastInterchangeMessage", "http://schemas.microsoft.com/BizTalk/2003/system-properties", isLastInterchangeMessage);

}

Once the last message in the batch has been processed, the BAPI transactions are committed as long as at least one transaction in the batch was successful, regardless of whether the last transaction itself succeeded. The processing at this stage is shown below.

Processing the Last Message


Close-up of the Commit Scope:

Commit Scope


8. Exiting the orchestration

In the general case, the orchestration instance either terminates after the commit has been sent, or gets suspended if something truly unexpected happens, i.e., something that is not handled by the catch blocks in place (we did not implement general exception handlers for this reason). The orchestration flow is such that successful BAPI transactions will be committed even if the last message in the batch is invalid and causes validation error(s).

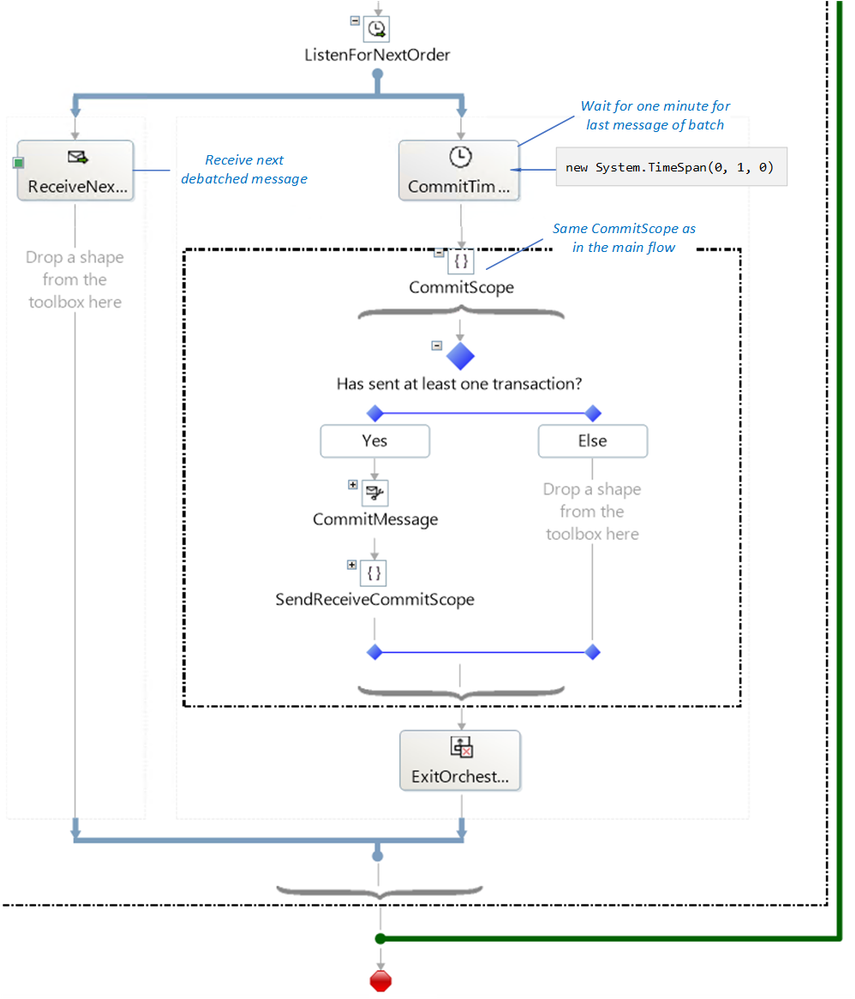

If the last message fails validation in the receive port, the orchestration instance for the batch will never receive the last message, which is suspended. To address the issue, the receive shape for the next message in a batch is placed in one of the branches of a listen shape, and in the other branch of the same shape, a delay (currently one minute) determines the maximum amount of time to wait until a commit is issued for all transactions received thus far. This is depicted in the figure below, where the CommitScope is identical to the CommitScope in the previous section.
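The listen shape behaves like a receive with a timeout. The Python sketch below mimics that control flow with a standard queue; it is an analogy, not BizTalk code. The function name, the dictionary-based message shape and the "last" flag are hypothetical stand-ins for the orchestration's receive shape and the BTS.LastInterchangeMessage property.

```python
import queue


def collect_batch(inbox, timeout_seconds):
    """Receive messages until the last one arrives or the wait times out.

    Returns the messages received so far and the reason the loop ended,
    so the caller can issue the commit in either case.
    """
    received = []
    while True:
        try:
            # Receive branch of the listen shape.
            message = inbox.get(timeout=timeout_seconds)
        except queue.Empty:
            # Delay branch: the last message never arrived (for example,
            # it was suspended in the receive port), so stop waiting.
            return received, "timeout"
        received.append(message)
        if message.get("last"):
            return received, "last_message"


inbox = queue.Queue()
inbox.put({"order": 1, "last": False})
inbox.put({"order": 2, "last": False})  # the real last message is never delivered
messages, reason = collect_batch(inbox, timeout_seconds=0.1)
print(len(messages), reason)
```

Either exit path leads to the same commit step, which is why the CommitScope appears in both places in the orchestration.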

Exit/Loop


9. Orchestration Summary

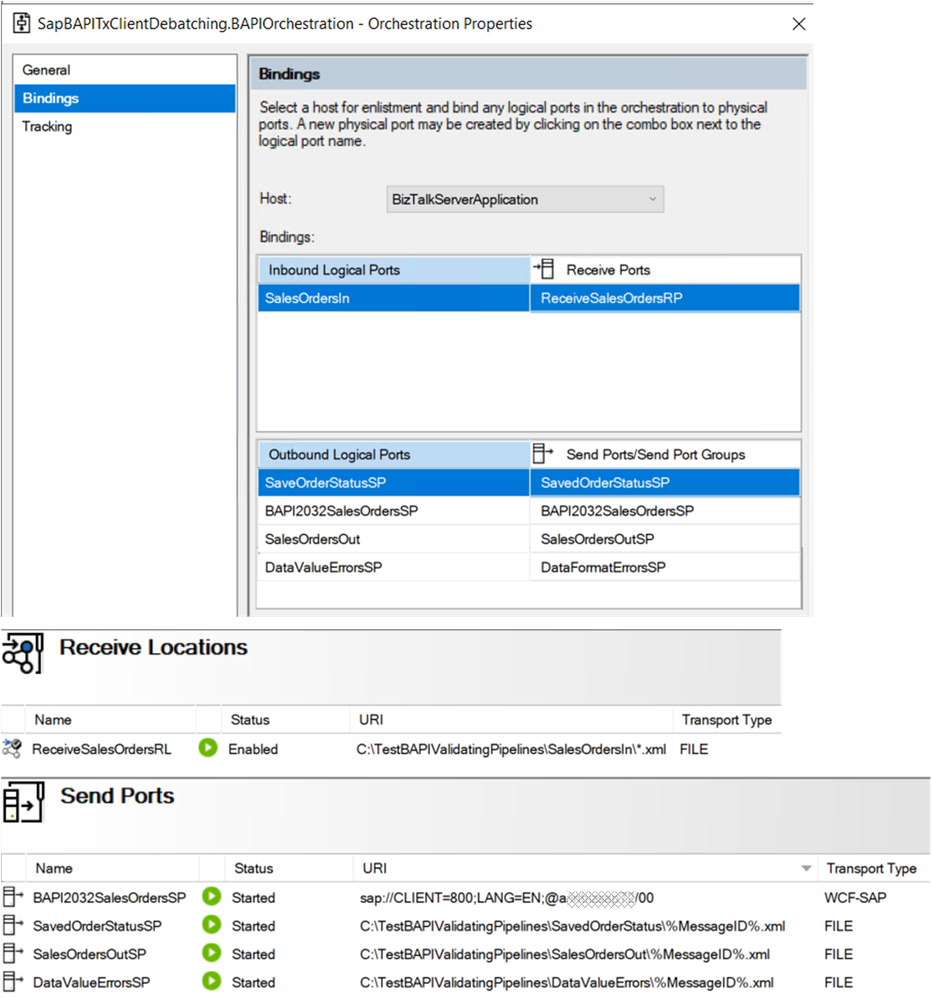

The orchestration is depicted below with all stages and connected ports.

Orchestration at a Glance


The bindings file for the BizTalk artifacts is also provided in the attached archive.

BizTalk Artifacts


10. Concluding Remarks

Here is a non-exhaustive list of “nice to have” features that did not make it to this article:

– Re-enqueueing of BAPI transactions in the orchestration to make it more “RIP” in the stages post validations.

– Instead of logging just one error, concatenate all errors for a given document. This can be done in the custom pipelines with the techniques presented in BizTalk custom XML validation to get error summary.

– Instead of using a timer to bound the wait for the last message in a batch, re-route messages suspended in the receive port to the orchestration (basically, take advantage of RIP).

– Simplify the design to debatch CREATEFROMDAT2 messages directly.

The underlying motivation for the current design was to investigate how to provide recoverable interchange processing for BAPI in the general BizTalk sense of Automatic recovery from failures of messages within a data interchange. It is a pertinent question given that (1) BAPIs in the same LUW are essentially an interchange, and (2) BAPIs can be applied to situations where best-effort is acceptable and some transactions should not block the processing of other messages. As an example of the latter, BAPIs are used for high-performance mass data transfer by maximizing the number of requests before commits.

Having a “RecoverableInterchangeProcessing” property on the SAP send port configuration would be a very nice feature to have given the complexity of what was presented here.

References

Related blog articles

SAP BAPI Transactions Walkthrough

Debatching SAP BAPI Transactions

Scatter-Gather Pattern with SAP BAPI Transactions

Handling Errors in SAP BAPI Transactions

BAPI in BizTalk

Registry setting to enable BAPI transactions

Operations on BAPIs in SAP

Run BAPI Transactions in SAP using BizTalk Server

SAP Connector for Microsoft .NET

SAP Adapter Binding Properties

Get Metadata for SAP Operations in Visual Studio

Browse, search, and retrieve metadata from SAP for BAPI operations

Message Schemas for BAPI Operations

Orchestrations

Calling a pipeline inside an orchestration

Ordered Delivery of Messages

Sequential Convoy & Singleton Orchestration

Using Correlations in Orchestrations

Working with Convoy Scenarios

Walkthrough: Correlations in BizTalk Orchestration

Pipelines

Recoverable Interchange Processing

Mapping Phase (Recoverable Interchange Processing)

Deploying Custom Pipeline Components

BizTalk Server: Default Pipelines

SAP

BAPI Transaction Model documented by SAP

Using BAPIs in Distributed Systems (ALE)

Other

WCF LOB Adapter SDK

Automatic recovery from failures of messages within a data interchange

BizTalk custom XML validation to get error summary

Xsd.exe utility

Sending string to file location in BizTalk

by Contributed | Dec 28, 2020 | Technology

This article is contributed. See the original author and article here.

Introduction

A common ask from enterprise customers is the ability to monitor for the creation of Azure Subscriptions. This is not as easy as you might think, so I wanted to walk you through a solution I’ve used to accomplish this. Below we will walk through creating an Azure Logic App that runs on a schedule and inserts the current subscriptions into Log Analytics. Once we have the data in Log Analytics we can either visualize new subscriptions or alert on them.

Step 1: Create a Service Principal

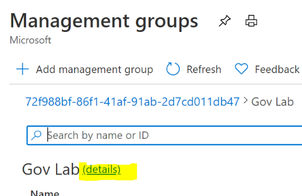

Our Logic App will utilize a Service Principal to query for the existing subscriptions. For this solution to work as intended you need to create a new Service Principal and then give it at least “Reader” rights at your root Management Group.

If you’ve never created a service principal, you can follow this article:

Create an Azure AD app & service principal in the portal – Microsoft identity platform | Microsoft Docs

You’ll need the following information from the service principal:

- Application (client) id

- Tenant id

- Secret

Once the service principal has been created you need to give it reader rights at the Management Group level.

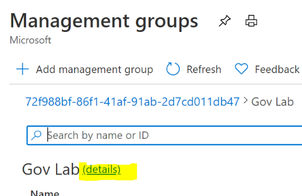

Open the “Management Group” blade in the Azure portal. From the root Management Group click on the (details) link.

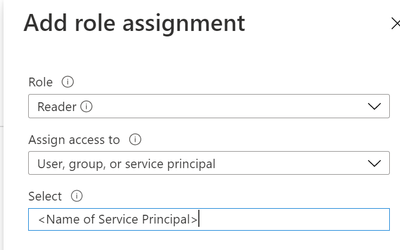

Click on “Access Control” | “Add” | “Add role assignment”

Grant the Service Principal the “Reader” role.

Step 2: Create the Logic App

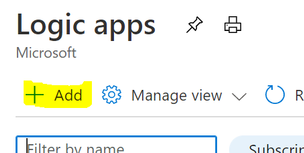

In the Logic App blade click on “Add”

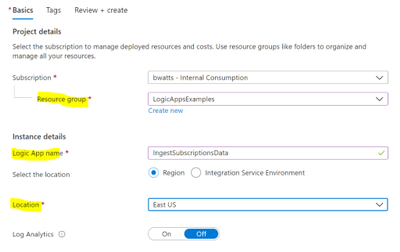

Fill in the required fields and create the Logic App.

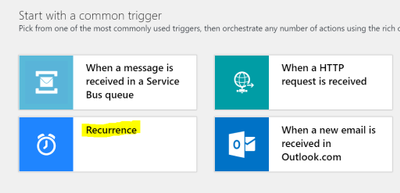

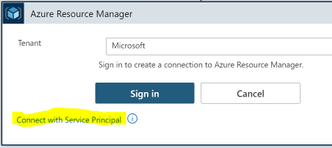

In the Logic App Designer choose the “Recurrence” template.

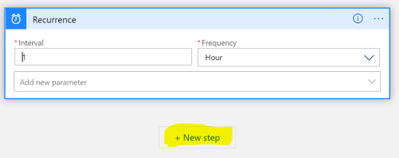

Configure the interval that you want to query for subscriptions. I chose to query every hour below. Then click on the “New step” button:

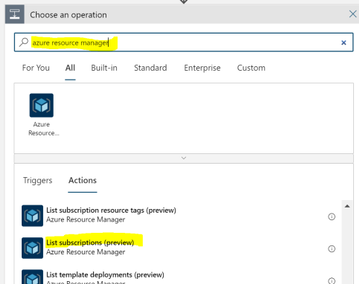

Search for “azure resource manager” and choose the “List subscriptions (preview)” action.

You want to connect with a service principal.

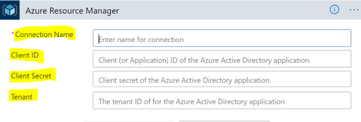

Fill in the information for your service principal (the “Connection Name” is just a display name):

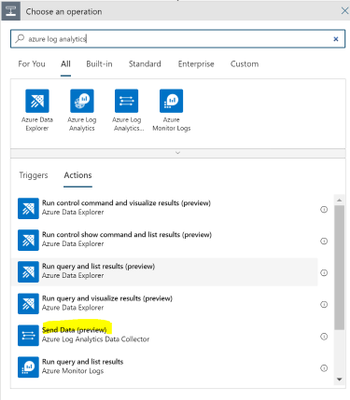

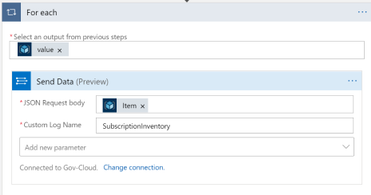

Note that this action doesn’t require any configuration besides setting up the connection. After configuring the service principal click on “New Step” and search for “Azure Log Analytics.” Choose the “Send Data (preview)” action.

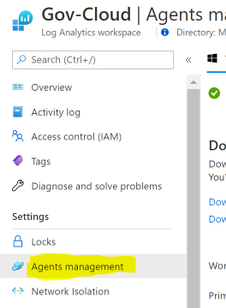

Connect to the Log Analytics workspace that you want to send the data to. You can get the workspace id and key within the Log Analytics blade in Azure:

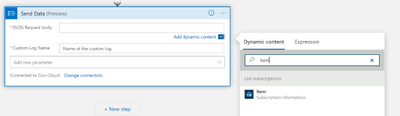

Once the connection is made to the Log Analytics Workspace you need to configure the connector:

- JSON Request Body: click in the box and then choose “Item” from the dynamic content

- Custom Log Name: Name of the log to be created in Log Analytics. Below I chose SubscriptionInventory

Note that when you choose “Item” it will put the “Send Data” action into a loop.
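Conceptually, the loop sends one JSON body per subscription returned by the list action. The Python sketch below mirrors that behaviour outside of Logic Apps. It assumes the list response carries its entries in a "value" array with subscriptionId, displayName and state fields; the function name and the sample values are made up for illustration.

```python
import json


def records_to_send(list_response):
    """Yield one JSON request body per subscription, like the loop around "Send Data"."""
    for item in list_response.get("value", []):
        yield json.dumps(item)


# Made-up sample; real responses contain more fields per subscription.
sample_response = {
    "value": [
        {"subscriptionId": "00000000-0000-0000-0000-000000000001",
         "displayName": "Dev", "state": "Enabled"},
        {"subscriptionId": "00000000-0000-0000-0000-000000000002",
         "displayName": "Prod", "state": "Enabled"},
    ]
}

for body in records_to_send(sample_response):
    print(body)
```

Each body becomes one row in the custom log, which is why fields such as displayName and state later show up as the displayName_s and state_s columns used in the alert query.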

We can go ahead and save the Logic App and optionally run it to test the insertion of data into Log Analytics.

Step 3: Wait

This Logic App will need to run for a while before the data is useful. You can verify that the Logic App runs every hour and view the raw data in Log Analytics to verify everything is working.

Below is an example of viewing the table “SubscriptionInventory_CL” in Log Analytics

Step 4: Setting up Alerts

We will set up an alert for Subscriptions created in the last 4 hours.

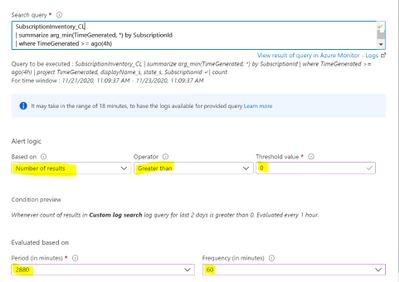

Below is the Kusto query we can use to find the subscriptions created in the last 4 hours:

SubscriptionInventory_CL

| summarize arg_min(TimeGenerated, *) by SubscriptionId

| where TimeGenerated >= ago(4h)

| project TimeGenerated, displayName_s, state_s, SubscriptionId

The key to this query is using arg_min to get the first time we see the subscription added to Log Analytics. When we set up the alert, we will look back a couple of days to get the first occurrence of each subscription and then, if that first occurrence is within the last 4 hours, create an alert.
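For readers less familiar with Kusto, the same "first seen" logic can be sketched in Python. This is an illustration of what arg_min followed by the time filter computes, not something the solution runs; the function name and the in-memory rows are hypothetical.

```python
from datetime import datetime, timedelta


def newly_seen(rows, now, window=timedelta(hours=4)):
    """Keep the earliest row per subscription, then keep those first seen within the window."""
    first_seen = {}
    for row in rows:
        sub_id = row["SubscriptionId"]
        current = first_seen.get(sub_id)
        # Equivalent of arg_min(TimeGenerated, *) by SubscriptionId.
        if current is None or row["TimeGenerated"] < current["TimeGenerated"]:
            first_seen[sub_id] = row
    # Equivalent of: where TimeGenerated >= ago(4h)
    return [r for r in first_seen.values() if r["TimeGenerated"] >= now - window]


now = datetime(2020, 12, 28, 12, 0)
rows = [
    {"SubscriptionId": "A", "TimeGenerated": datetime(2020, 12, 26, 9, 0)},
    {"SubscriptionId": "A", "TimeGenerated": datetime(2020, 12, 28, 11, 0)},
    {"SubscriptionId": "B", "TimeGenerated": datetime(2020, 12, 28, 10, 0)},
    {"SubscriptionId": "B", "TimeGenerated": datetime(2020, 12, 28, 11, 0)},
]
for row in newly_seen(rows, now):
    print(row["SubscriptionId"], row["TimeGenerated"])
```

Subscription A is inserted every hour like any other, but because its earliest row is two days old it is not reported. Only B, whose earliest row falls inside the window, is treated as new.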

Now we are ready to create the alert within Azure Monitor. Open your Log Analytics Workspace and go to the Logs tab. Run the above query in Log Analytics and then click on “New alert rule”

**Note: I find this easier than going through Azure Monitor to create the alert because this selects your workspace and puts the correct query in the alert configuration.

You’ll see a red exclamation point next to the condition. Click on the condition to finish configuring the alert. The parts you need to configure are highlighted below.

Now you just finish creating the alert. If you’ve never created an Azure Monitor Alert, here is documentation to help you finish the process.

Create, view, and manage log alerts Using Azure Monitor – Azure Monitor | Microsoft Docs

**Note: Make sure you let the Logic App run for longer than the period you’re alerting on. In this example I’d need to let my Logic App run for at least 5 hours (the 4-hour alert threshold + 1 hour). The query relies on the history, so if I run it before my Logic App has run long enough, the alert will fire for every subscription.

Step 5: Visualizing through Workbooks

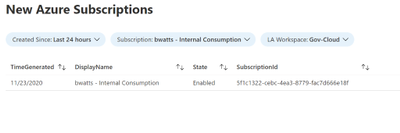

We can utilize a simple Azure Workbook to visualize the data in Log Analytics. The below workbook has the following parameters:

- Created Since: set this to show all the subscriptions created since this date

- Subscription: Filter down to the subscription that has the Log Analytics Workspace with the data

- LA Workspace: Select the Log Analytics workspace that your Logic App is putting data into

**Note: This workbook assumes that the table name you’re using is SubscriptionInventory_CL. If you’re using a different table name then you’ll need to modify the queries in the workbook.

Once you fill in the parameters there will be a simple table showing the day we detected the subscription, the display name, the state and the subscription id.

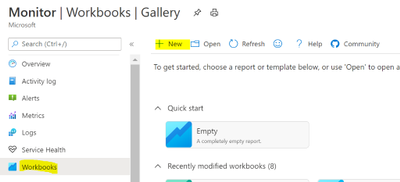

Open the Azure Monitor blade and go to the Workbook tab. Click on “New”

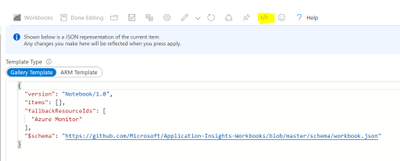

Click on the “Advanced Editor”

Replace the existing content with the content from the following link:

https://raw.githubusercontent.com/bwatts64/Downloads/master/New_Subscriptions

Click on Apply:

You can now verify that you’re able to visualize the data in Log Analytics. Once you’ve verified that click on “Save” to save the newly created workbook.

Summary

Monitoring new subscription creation in your Azure tenant is a common ask from customers. Here we have utilized a Logic App to insert our subscription data into Log Analytics. From there we can both alert on and visualize new subscriptions that are created in your environment.
