by Contributed | Mar 18, 2021 | Technology

This article is contributed. See the original author and article here.

Host: Raman Kalyan – Director, Microsoft

Host: Talhah Mir – Principal Program Manager, Microsoft

Guest: Dawn Cappelli – VP of Global Security & CISO, Rockwell Automation

The following conversation is adapted from transcripts of Episode 5 of the Uncovering Hidden Risks podcast. There may be slight edits in order to make this conversation easier for readers to follow along. You can view the full transcripts of this episode at: https://aka.ms/uncoveringhiddenrisks

In this podcast we explore the steps to set up and run an insider risk management program. We talk about specific organizations to collaborate with and the top risks to address first. We hear directly from an expert with three decades of experience setting up impactful insider risk management programs in government and the private sector.

RAMAN: Hi, I’m Raman Kalyan, I’m with the Microsoft 365 Product Marketing Team.

TALHAH: And I’m Talhah Mir, Principal Program Manager on the Security Compliance Team.

RAMAN: We have more time with Dawn Cappelli, CISO of Rockwell Automation. We’re going to talk to her about how to set up an effective insider risk management program in your organization.

TALHAH: That’s right. Getting a holistic view of what it takes to properly identify and manage that risk and do it in a way so that it’s aligned with your corporate culture and your corporate privacy requirements and legal requirements. Raman and I talk to a lot of customers now and it’s humbling to see how front and center insider risk, insider threat management, has become, but at the same time, customers are still asking, “How do I get started?”

Dawn, what do you tell those customers, those peers of yours in the industry today, with the kind of landscape and the kind of technologies and processes and understanding we have, in terms of how to get started building out an effective program?

DAWN: First of all, you need to get HR on board. I mean, that’s essential. We have insider risk training that is specifically for HR. They have to take it every single year. We have our security awareness training that every employee in the company has to take every year, HR in addition has to take specific insider risk training. So, in that way we know that globally we’re covered. So that’s where I started, was by training HR, and that way the serious behavioral issues, I mean, IP theft is easier to detect, but sabotage is a serious issue, and it does happen.

I’m not going to say it happens in every company, but when you read about an insider cyber sabotage case, it’s really scary, because this is where you have your very technical users who are very upset about something, they are angry with the company, and they have what the psychologists called personal predispositions that make them prone to take action. Because most people, no matter how angry you are, most people are not going to actually try to cause harm, it’s just not in our human nature.

But like I said, I worked with psychologists from day one, and they said, “The people that commit sabotage, they have these personal predispositions. They don’t get along with people well, they feel like they’re above the rules, they don’t take criticism well, you kind of feel like you have to walk on eggshells around them.” And so I think a good place to start is by educating HR so that if they see that, they see someone who has that personality and they are very angry, very upset, and their behaviors are bad enough that someone came to HR to report it, HR needs to contact, even if you don’t have an insider risk team, contact your IT security team and get legal involved, because you could have a serious issue on your hands. And so, I think educating HR is a good place to start.

Of course, technical controls are a good place to start. Think about how you can prevent insider threats. That’s the best thing to do is lock things down so that, first of all, people can only access what they need to, and secondly, they can only move it where they need to be able to move information. So really think about those proactive technical controls.

And then third, take that look back, like we talked about, Talhah, take that look back. Pick out just some key people, go to your key business segments and say, “Hey, who’s left in the past...” I mean, as long as your logs go back; if they go back six months, you can go back six months. But just give me the name of someone who’s left who had access to the crown jewels, and just take a look in all those logs and see what you see. And you might be surprised.

TALHAH: Dawn, we’re actually hearing that from our customers quite a bit. The way they frame it is that, “Why don’t you look through some of the logs I already have in the system, parse through that, to give me an insider risk profile, if you will, of what’s happening, what looks like potential shenanigans in the environment, so I can get a better sense of where I need to focus and what kind of a case I need to make to my executive sponsor so I can get started.” So that’s definitely something we’re thinking about quite deeply and hearing consistently from our customers as well.

DAWN: Yeah, because the interesting thing we found in CERT, we expected that we would find very sophisticated ways of exfiltrating information, but what we found was these are insiders, they don’t have to do anything fancy. If they can use a USB drive, they’re going to use a USB drive, especially if you don’t have an insider risk program, and so they think they can get away with it. If it’s a small amount of information, they’ll email it to their personal email account. Or if you’re an Office 365 user, just go and download the information onto a personal computer if you can, move it to a cloud site.

We found there weren’t a whole lot of really sophisticated theft of IP cases, and maybe that’s because those people weren’t caught. But if you can get to the point where you have a mature insider risk program that’s analytics based, then you have time to look at the more sophisticated ways of exfiltrating information.

RAMAN: I had a conversation with a customer about a week and a half ago. And you talked about people who are sometimes doing things maliciously, they are also doing other things. Have you looked at things like sentiment analysis? This customer was talking to me about hey, communications, like people in communications actually saying things that they shouldn’t be saying, maybe harassing people, and then that leading to other types of behaviors, to your point around sabotage. Would love for you to kind of, if that’s something that you have either implemented yourself, or if you’ve heard as part of the broader OSIT group, around the communications people, the harassment, and all that kind of stuff.

DAWN: Yeah, we did look at that when I was in CERT. Back then we found that the technologies just weren’t mature enough, so we did not have any luck with it back then. And I don’t know what Dan Costa said to you as far as what they’re doing now, but in my experience, I have not found anything that really was effective.

I tried a little experiment at Rockwell, with legal approval, and just kind of looked for words like kill, and die, you know, those kinds of words, and it came back like… IT uses those words all the time. Like, “The system died, I killed the process.” It was like, oh, this just isn’t working at all. And the other thing that made it really hard, just with sentiment analysis, people were very casual in their communications. So it was the informal communications that made it really difficult to really tell the sentiment. So yeah, I’d love to hear if the tools mature to that point, that would be great.

RAMAN: One of the things that we’ve been looking at is using Azure Cognitive Services to really start to think about natural language, to distinguish between, “That product is killer” to, “I’m going to kill you.” If we were, to your point, initially, yeah, it would be looking at keywords, and then you get overloaded with a ton of different alerts. Now if you can distinguish between the context of how the word kill was used, then you can start to highlight things like, again in a risk score type of thing, that this could be more risky communication than this other communication. Allow you to really prioritize and filter through it.

DAWN: Hmm, interesting. Do you know if anyone has gone to the European Works Councils about that kind of technology?

RAMAN: One of the things that we have been working on, is that we have customers in Europe using some of our solutions to start to look at communications, and they have been working with the various worker councils to start to think about, for example, pseudonymization. You want to anonymize the user before you go down the path of really investigating them. If you’re just highlighting this could be a possible violation, you want to do that in a way that doesn’t really invite bias or discrimination.

And if you can do that upfront, then that would allow you to say, “Hey, okay, this might be something that’s a challenge.” And one of the things that we’ve seen recently, especially with COVID and all the different stressors that people are under, is that some customers are actually using machine learning classifiers that we have for threats, and really looking, not at me trying to threaten somebody else, but me maybe threatening myself. So suicide type things, people under a lot of pressure, and we’ve seen a lot of organizations start to take that route. And also, in education, where you have a lot of young folks who might be sharing things inappropriately, their imagery or bullying, that kind of stuff. That’s another area where we’re seeing some activity around this.

DAWN: Hmm. That’s interesting. Yeah, I bring up the works councils just because when you’re talking insider risk, it’s a really important topic, that if anybody is watching this that doesn’t know what the works councils are and does business in Europe, you need to find out what they are because basically they’re there to protect the privacy of the employees in the company. And some of them have a lot of power, like in Germany, they can just block you from using a new technology, and in other countries you simply have to inform them, but they can’t stop you.

And we’re very careful about our works councils, and we have taken the approach that that’s our bar. If we can’t get something through the works councils, then we don’t do it, because we feel like they’re protecting the privacy of their employees, and all of our employees are entitled to that degree of privacy. So that’s kind of how we approach it, and so it’s kind of an all or nothing approach for us. But that’s each company’s decision to make, and it probably depends on how much business you do where and how global you really are, but it’s something that everybody should look into who’s working in insider threat.

TALHAH: With COVID-19, it’s been sort of a punch in the gut, the whole world having to react, personal lives, professional lives. And clearly, we’re starting to see from our customers this insider risk becoming more heightened in terms of awareness of it, and a need to manage it. Because you have work from home, and data’s being moved all over the place. What have you seen work in this environment, with your experience, how have you adjusted to this COVID reality? Have you done things differently with your program? What kind of advice would you give to your peers in the industry on how to deal with it?

DAWN: So, we were fortunate. I know a lot of companies, from what I’ve been reading, a lot of companies, their employees use desktops at their office. And when COVID struck, suddenly you have employees at home working on their personal computers. Fortunately, we didn’t have that. We’ve been using all laptops since I went to Rockwell in 2013, so it was easier for us because our employees are just working at home now. They’re off our network, but they’re using their same computer they always have, with the same controls that we’ve always had. But we are seeing a big uptick in them downloading, and again, this is not malicious, but downloading a game that has malware in it, downloading pirated copies of software, things like that.

Because they’re at home, and they’re sitting at their desk and I guess they figure, “Hey, I have my Rockwell computer here, I guess I’ll play my games on here and not fight with the kids, because now they’re home, they’re trying to do schoolwork, they’re trying to play games, they’re trying to watch movies. And I’m not going to compete for that computer, I’m going to use my Rockwell computer.” So, we’re catching a lot of those things. And that’s what I meant when I said that by using the analytics to give us more time, we’re not doing all those manual audits.

Now we have time that the C-CERT, they used to catch those things and they would just kind of, “Hey, you’re not allowed to do that, get that off of there.” Or just block it. But now they come to us because sometimes when you see someone downloading malware, we had an employee who downloaded malicious hacking tools, and our C-CERT contacted the insider risk team and said, “Hey, this is someone who’s a developer and downloaded a hacking tool, and so we’re going to hand it to you to investigate.” And we talked to their manager because we thought, oh, well maybe this is a pen tester and so he needed the hacking tools.

Well, there was no reason that he needed the hacking tools, and the manager was very concerned. Like, “What is that guy doing?” And he was sophisticated, we have a secure development environment that protects that development environment with additional controls. And he downloaded it to his Rockwell computer, and then he was trying to move it over into the secure development environment, so we saw what he was doing. And he had no good reason, but this is where we didn’t rely on the human social behaviors to trigger the investigation, we were able to catch it quickly, because of that technical indicator, and because of the partnership with the C-CERT.

It’s interesting just to see, as you talk about the evolution of technology for insider threat over the years, it’s now to the point where we’re not just looking at theft of IP, we’re looking at those technical indicators that might indicate sabotage. We’re not so reliant on human behavior because, look at COVID. People are working at home, so are we really going to know when we have an employee who’s really angry and really upset and getting worse and worse? I don’t know. I don’t know if we’re going to be able to rely on those human behaviors so much. If you’re in the office all day, people can see that, but if you’re on a phone call here and there, you might not pick that up.

TALHAH: That’s right. And this could lead to sabotage-type scenarios. From our work with customers, this ability to detect technical indicators, such as somebody downloading unwanted or malicious software or trying to tamper with security controls, is so important because these could be leading indicators. Similar to behavioral indicators, these are technical indicators that could point to an oncoming sabotage risk.

DAWN: We had a very interesting case, but I hate to talk about that one. Yeah, I hate to talk about that one, because this actual individual told me, I don’t want you to go out and talk about me in your conferences, Dawn, don’t ever do that. Yeah, so actually I’m not going to talk about that one. I’ll talk about a different one, though. We had a team, a test engineer team, that was under intense deadlines and really working long hours and weekends. And one day two of the employees on that team had a big, huge verbal argument. Just yelling at each other, not physical, but very, very verbal argument. So bad that someone had to go get a manager to come in and break it up. So, he broke it up. Next day the whole test environment goes down, and that’s really bad. It took three days to rebuild the environment.

When you’re working nights and weekends to make a deadline, and now you lost three days, that’s a huge deal. And the manager said, “When that first happened, I was thinking, ‘Well, it went down, let’s just get it back up and not worry about why until later.’” But then he said, “I thought about Dawn’s insider risk presentations,” because I communicate as widely as I can around the company to everyone, not just HR, about insider risk. And he said, “I thought about Dawn’s presentation and the concerning behaviors and I thought, hmm, wonder if one of those two could have deliberately sabotaged the test environment.” So, he contacted us, we got legal approval to investigate, and sure enough, when we looked, one of those guys wrote a script to bring down the entire environment.

And when we talked to him, he said, “Oh, well, I had in my goals and objectives, I had an objective that I had to write some automated scripts to maintain the environment. So, I was testing it, and it just accidentally brought everything down.” And we’re like, “Wait a second,” this was in like April, his objective was due like September 30th, the end of our fiscal year. We just didn’t buy it, and we ended up looking and we could see, he actually was executing these commands. He didn’t write a script; he was executing the commands manually and brought down the test environment.

But it was a really good case where, if that manager hadn’t thought to contact us, who knows what he would’ve done next. So, I thought that was a really good, even though we did not avert the sabotage completely, he did commit the sabotage, three days impact, which was a big deal, but it could have been much, much worse because he could have done much worse, and that could have been the next step. Just another story, Talhah.

TALHAH: Love it.

RAMAN: Yeah, I mean, that’s just crazy. This has been a great conversation, Dawn. The stories that you’ve told, they’ve just been captivating, and the last thing that you just mentioned, which is really, within an organization, to have a successful insider risk program, you really need to educate all levels of the organization, all the different teams so people can sort of look and be on the lookout for these types of things. Not only to identify the risks, but to also help maybe support people who might be under intense pressure.

DAWN: Yeah, and first of all, deterrence is huge. We talk very widely; we have an insider risk blog that we put out internally for employees. We talk about cases, we talk about what we find, because deterrence is a big thing, and I think that’s why we’re not catching as much malicious activity as we used to. Now we’re finding, almost everything we’re finding is unintentional, it’s not malicious. Because I think word has gotten around, “Hey, if you try to do that, we’re going to catch you.” We tell people that all the time, don’t even try, we’re going to catch you.

To learn more about this episode of the Uncovering Hidden Risks podcast, visit https://aka.ms/uncoveringhiddenrisks.


by Contributed | Mar 18, 2021 | Technology


We just released new features and capabilities for the Microsoft Live Video Analytics (LVA) service. If you are thinking about running LVA on a Windows IoT device, an Azure Percept DK (dev kit), or other edge devices powered by AI acceleration from NVIDIA and Intel, you will want to learn more about them. With LVA, organizations can drive the next wave of business automation through AI-powered, real-time analytic insights from their own video streams.

In line with Microsoft’s vision of simplifying AI and IoT at the edge from silicon to service, the new features and capabilities we announced at the Microsoft Ignite 2021 event allow you to deploy LVA seamlessly on Windows IoT devices, so you can build intelligent video analytics systems that capitalize on your Windows expertise and investments. We have also ensured that LVA runs on the new family of Azure Percept devices and works seamlessly across partner platforms such as Intel and NVIDIA.

With our focus on ensuring a consistent experience for video analytics solutions developers, irrespective of the OS and of underlying hardware acceleration platform, here are the new capabilities that help complete your end-to-end scenarios:

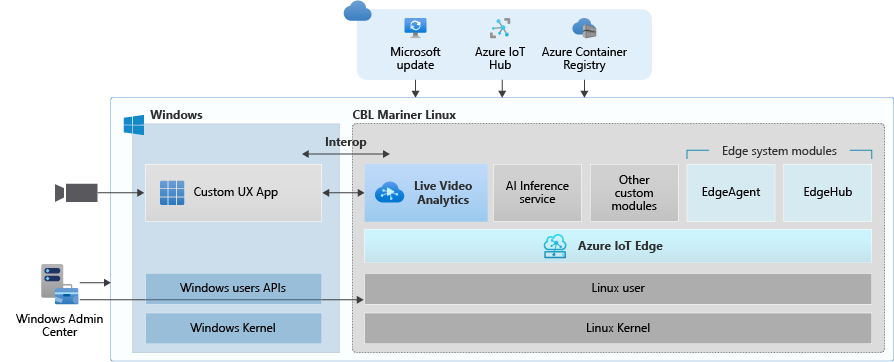

- Deploy LVA with Azure IoT Edge for Linux on Windows (EFLOW): Leverage LVA to build and deploy video analytics workflows on Windows IoT devices with EFLOW.

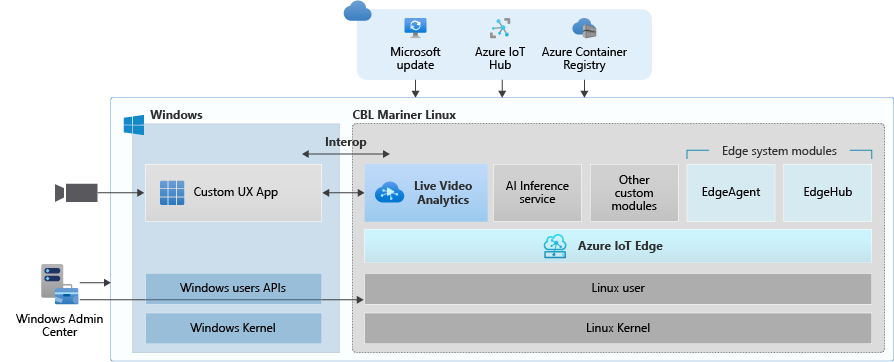

- LVA with Azure Percept: At Ignite 2021, we announced Azure Percept, an end-to-end platform for creating edge AI solutions in minutes with hardware accelerators built to integrate seamlessly with Azure AI and Azure IoT services. LVA can be leveraged on Percept to record and stream videos from edge to cloud to help you deliver business insights in real time.

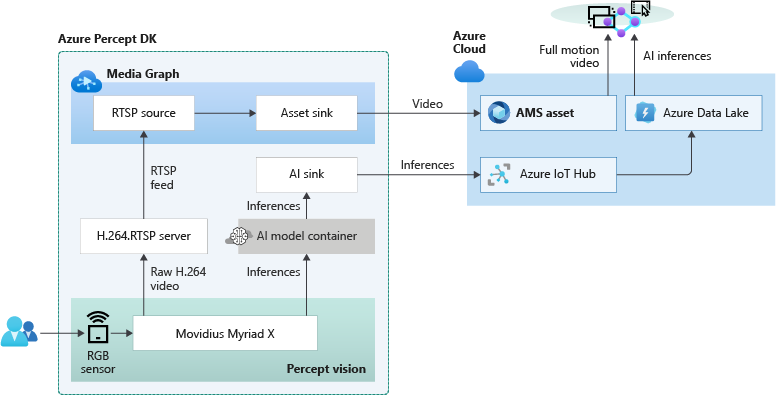

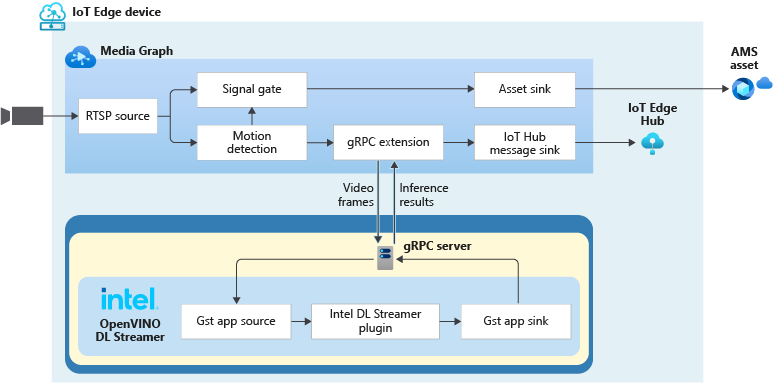

- Intel OpenVINO DL Streamer – Edge AI Extension with LVA: With the latest release of OpenVINO’s DL Streamer – Edge AI Extension from Intel, you can use it alongside LVA to detect, classify, and track multiple object classes (e.g., person, vehicle, bike) at high efficiency on a variety of Intel hardware architectures.

- NVIDIA DeepStream — AI Skills and AI Acceleration for LVA: With the latest DeepStream release (5.1), you can now deploy LVA across multiple cameras for object detection, classification and tracking on NVIDIA GPUs.

Since the preview launch of the Live Video Analytics (LVA) platform in June 2020, we have evolved product capabilities and strengthened our platform to meet partner and customer needs in the version 2.0 refresh announced in Feb 2021 and in related announcements. Additionally, we have a set of exciting capabilities that are not yet public, and we are getting ready to announce them soon at Build 2021. Please reach out to us (amshelp@microsoft.com) to learn more.

Leverage Windows edge devices as LVA processors

As a customer in an industry such as manufacturing, retail, or public safety, you may have many Windows devices that serve as IoT sensors and processing devices. Alongside Windows IoT, there is a growing trend toward Linux-based containerized microservices backed by a cloud-based ISV ecosystem, especially for real-time video analytics. Many customers we talk to want to leverage their existing assets, be it cameras, Windows IoT devices, or other IoT sensors, to derive real-time business intelligence by applying AI to video.

Using LVA on EFLOW, you get the best of both worlds: a Windows IoT device that leverages existing Windows tooling, infrastructure investment, and IT knowledge and is managed and deployed through Azure, together with business insights gathered via Linux-based Live Video Analytics. At Ignite 2021, we delivered a set of simple steps that help you bring LVA and EFLOW together and unleash the power of LVA’s media graph on Windows IoT Edge devices.

As an example, you could be a retail store owner with cameras and network video recorders powered by Windows IoT, where today the video is archived and reviewed manually. With LVA and EFLOW, you can deploy Linux-based Azure Live Video Analytics on Windows, leveraging your existing Windows expertise and investments, and go from a basic video recording system to an intelligent video analytics solution that can trigger actions driven by AI. You can also learn more about the features and deployment of EFLOW, which is currently in public preview.

Live Video Analytics with Azure Percept

At Ignite 2021, our leadership team announced Azure Percept, which focuses on extending AI to the edge. It is an end-to-end platform that integrates Intel Movidius Myriad X vision processing unit (VPU) hardware accelerators with Azure AI and Azure IoT services, and it is designed to be simple to use and ready to go with minimal setup.

Percept helps customers overcome one of the key challenges of edge AI: navigating the creation of an end-to-end solution. As a solution builder, you might already have a working AI model that you want to use as part of an end-to-end video analytics solution. We have partnered with the Azure Percept team to provide you with a reference solution. You can get started today by ordering your dev kit and leveraging the GitHub code.

As seen from the reference solution’s architecture below, Azure Percept leverages LVA to record video to the cloud, so that when combined with analytics metadata from the AI, you get a solution for object counting in pre-defined zones. You can visualize the results thanks to video streaming and playback capabilities of LVA.
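To make the idea of counting objects in pre-defined zones more concrete, here is a minimal Python sketch. It is not the reference solution's code. The detection format (a label plus a normalized bounding box), the `Zone` class, and the zone names are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zone:
    """Hypothetical axis-aligned zone in normalized image coordinates (0..1)."""
    name: str
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, x: float, y: float) -> bool:
        return self.left <= x <= self.right and self.top <= y <= self.bottom


def count_objects_per_zone(detections, zones):
    """Count detections whose bounding-box center falls inside each zone.

    Each detection is assumed to look like
    {"label": "person", "box": (left, top, width, height)}.
    """
    counts = {zone.name: 0 for zone in zones}
    for det in detections:
        left, top, width, height = det["box"]
        center_x, center_y = left + width / 2, top + height / 2
        for zone in zones:
            if zone.contains(center_x, center_y):
                counts[zone.name] += 1
    return counts


zones = [Zone("entrance", 0.0, 0.0, 0.4, 1.0), Zone("checkout", 0.6, 0.0, 1.0, 1.0)]
detections = [
    {"label": "person", "box": (0.10, 0.20, 0.10, 0.30)},  # center in "entrance"
    {"label": "person", "box": (0.70, 0.40, 0.20, 0.20)},  # center in "checkout"
    {"label": "person", "box": (0.80, 0.10, 0.10, 0.10)},  # center in "checkout"
]
zone_counts = count_objects_per_zone(detections, zones)
print(zone_counts)
```

Using the box center keeps the sketch short; a real solution might instead test overlap between the box and the zone, or use polygonal zones.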

LVA with Intel’s OpenVINO DL Streamer – Edge AI Extension

Last year, we announced an integration of LVA with Intel’s OpenVINO Model Server – Edge AI Extension module via LVA’s HTTP extension processor. This enabled our customers to run AI inferences such as object detection and classification on a variety of Intel hardware architectures (CPU, iGPU, VPU) at the edge and to use cloud services like Azure Media Services and Azure IoT. At Ignite 2021, with the announcement of the OpenVINO DL Streamer – Edge AI Extension module, we are enabling additional capabilities over a highly performant gRPC extension processor while keeping the core OpenVINO inference engine the same, so it scales across the Intel architectures. With this integration you can now get object detection, classification, and tracking for high frame rate video across multiple classes. See this tutorial for more details.

With the pre-validated configurations, pre-trained models, and scalable hardware, users can jump-start solutions that improve business efficiency across a variety of use cases such as retail, industrial, healthcare, or smart cities. For example, with the vehicle classification model you can see the type of vehicle and add your own business logic, such as validating that only certain vehicle types are parked in a designated area. With the object tracker you can track objects of interest and map them on a timeline.
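As a rough illustration of such business logic, the Python sketch below collapses per-frame tracker events into one timeline entry per tracked vehicle and flags vehicle types that are not permitted in an area. The event tuple layout, the `ALLOWED_TYPES` policy, and the area names are hypothetical and are not part of the Intel module's output format.

```python
from dataclasses import dataclass


@dataclass
class TrackSummary:
    vehicle_type: str
    zone: str
    first_seen: float  # seconds
    last_seen: float   # seconds
    allowed: bool


# Hypothetical policy: which vehicle types may park in which area.
ALLOWED_TYPES = {"loading_dock": {"truck", "van"}, "visitor_lot": {"car"}}


def summarize_tracks(events, allowed_types=ALLOWED_TYPES):
    """Build a per-track timeline from tracker events.

    Each event is assumed to be (timestamp_seconds, track_id, vehicle_type, zone).
    """
    tracks = {}
    for timestamp, track_id, vehicle_type, zone in sorted(events):
        summary = tracks.get(track_id)
        if summary is None:
            # First sighting of this track: record type, zone, and policy result.
            tracks[track_id] = TrackSummary(
                vehicle_type, zone, timestamp, timestamp,
                vehicle_type in allowed_types.get(zone, set()),
            )
        else:
            summary.last_seen = timestamp
    return tracks


events = [
    (0.0, 1, "truck", "loading_dock"),
    (0.5, 2, "car", "loading_dock"),
    (4.0, 1, "truck", "loading_dock"),
    (9.5, 2, "car", "loading_dock"),
]
tracks = summarize_tracks(events)
violations = [track_id for track_id, s in tracks.items() if not s.allowed]
print(violations)
```

The `first_seen` and `last_seen` fields give the start and end of each object's presence, which is enough to draw a simple timeline.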

Get Started Today!

- Deploy LVA with Intel DL Streamer – Edge AI Extension using this tutorial

- Explore and deploy Intel DL Streamer – Edge AI Extension Module from Azure Marketplace

- Watch the Intel Ignite 2021 session

LVA with NVIDIA’s DeepStream SDK – AI Skills and AI Acceleration

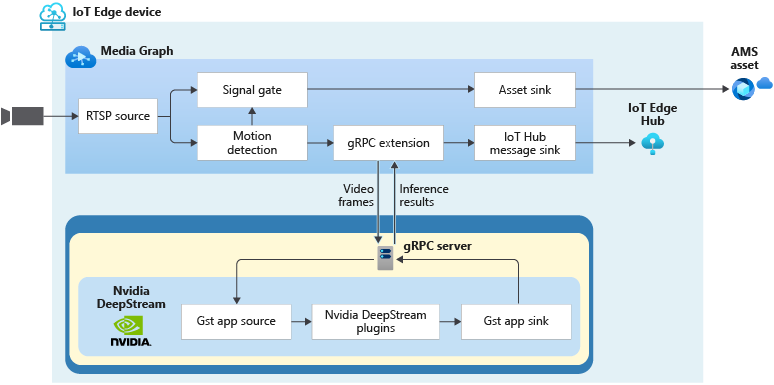

LVA and NVIDIA DeepStream SDK can be used to build hardware-accelerated AI video analytics apps that combine the power of NVIDIA graphics processing units (GPUs) with Azure cloud services, such as Azure Media Services, Azure Storage, Azure IoT, and more.

NVIDIA recently released DeepStream SDK 5.1, adding support for NVIDIA’s Ampere architecture GPUs to massively accelerate inference. With this release, you can use LVA to build video workflows that span the edge and cloud, and combine them with DeepStream SDK 5.1 pipelines to extract insights from video.

Imagine you work for a county or city government that wants to understand traffic patterns across certain times, a retailer that wants to deliver curbside pickup to certain vehicle types, or a parking lot operator that wants to understand parking lot utilization, traffic flows and monitor in real time. With LVA managing video workflows and NVIDIA DeepStream’s investment in providing optimized AI for their underlying hardware architecture combined with the power of the Azure platform, you can now develop such video analytics pipelines from cloud to edge.

You can explore some samples on GitHub that showcase the composability of both platforms and have been tested for vehicle detection, classification, and tracking on high frame rate video. Feel free to add object classes such as bicycle or road sign to take advantage of the detection and tracking capability.

Get Started Today!

In closing, we’d like to thank everyone who is already participating in the Live Video Analytics on IoT Edge public preview. For those of you who are new to our technology, we encourage you to get started today with these helpful resources:

And finally, the LVA product team wants to hear about your experiences with LVA. Please feel free to contact us via TechCommunity to ask questions and provide feedback including what future scenarios you would like to see us focusing on.

**Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries.

by Contributed | Mar 18, 2021 | Technology


Written by Jason Yi, PM on the Azure Edge & Platform team at Microsoft.

Acknowledgements: Dan Lovinger

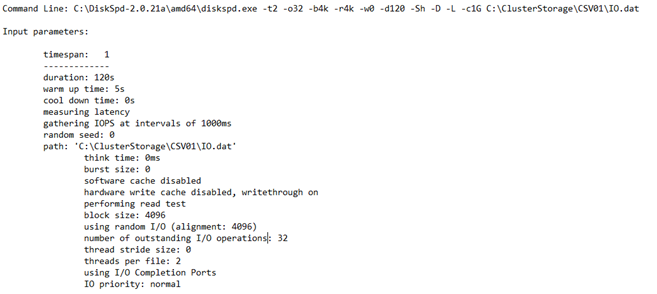

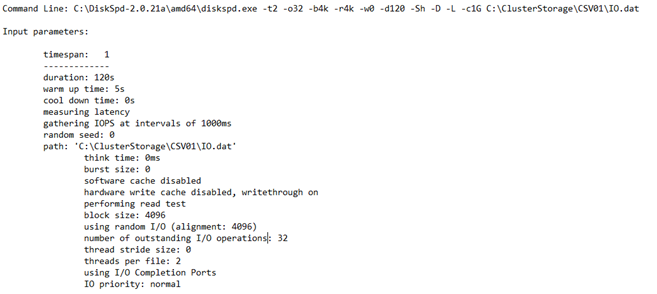

Imagine this: you have an Azure Stack HCI cluster set up and ready to go. But you have that lingering question: What is your cluster’s storage performance potential? In such cases, you can rely on micro-benchmarking tools such as DiskSpd. In case you are not familiar with it, the tool helps you customize and configure your own synthetic workloads by tweaking built-in parameters. For more information, you can read about it here.

“Visible” and Clean Data

Most folks who already have experience with DiskSpd are likely familiar with the txt output option, which is also displayed in the terminal. The purpose behind this output was to present the data in a human-readable format. We also aggregated some of the finer details to generate practical metrics for users, which means that we decided which metrics would be considered valuable. But did you know that there is also an option to output XML, which reveals additional, granular data such as the total IOs achieved per second?

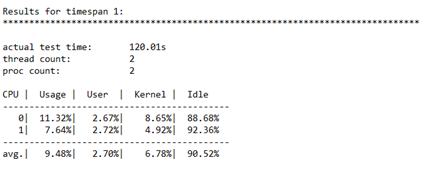

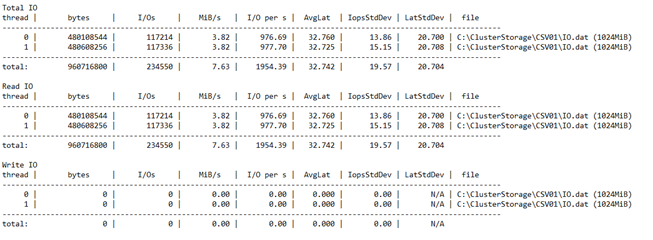

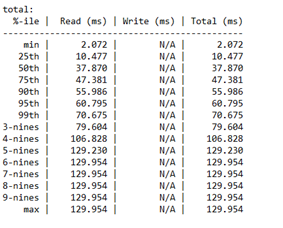

Let’s first take a few moments to review the txt output. As you may know, this output is split into four different sections:

- Input settings

- CPU utilization details

- Total IO performance metrics

- Latency percentile analysis (-L parameter)

This result produces a detailed view of a couple of performance metrics. That’s great, but what if you are interested in other data insights? If you did not read carefully through the DiskSpd wiki page, you may have missed a “hidden feature”: another output format that generates XML. It is invoked with the -Rxml parameter, and the output can be redirected into an XML file with your preferred file name. But wait, there’s more! If you peek into the XML file, you will notice more data than the txt output showed, such as the total IOs achieved per second. More specifically, the XML output reveals granular data rather than the aggregates prepared for human eyes. If you wish to take a look, be warned: your eyes will burn from the squinting.
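As a minimal sketch of that invocation, the Python snippet below assembles a DiskSpd command line that ends with -Rxml and shows how its output could be redirected to a file. The helper names, the default values, and the target path are assumptions for illustration. The target file is assumed to exist already, and the actual run is left commented out because it requires DiskSpd on the machine.

```python
import subprocess


def build_diskspd_command(target, *, threads=4, queue_depth=32, block_size="4K",
                          write_percent=0, duration_s=60, warmup_s=5):
    """Build a DiskSpd command line that emits XML results (-Rxml)."""
    return [
        "diskspd.exe",
        f"-t{threads}",        # threads per target
        f"-o{queue_depth}",    # outstanding IOs per thread (queue depth)
        f"-b{block_size}",     # block size
        "-r",                  # random IO
        f"-w{write_percent}",  # percentage of writes
        f"-d{duration_s}",     # test duration in seconds
        f"-W{warmup_s}",       # warm-up time in seconds
        "-L",                  # collect latency statistics
        "-Rxml",               # XML instead of the default txt output
        target,
    ]


def run_to_xml(command, output_path):
    """Run DiskSpd and redirect stdout to a file, like `diskspd ... > result.xml`."""
    with open(output_path, "wb") as out:
        subprocess.run(command, stdout=out, check=True)


# Hypothetical target path; replace with a test file on your own volume.
command = build_diskspd_command(r"C:\ClusterStorage\Volume1\io.dat")
print(" ".join(command))
# run_to_xml(command, "result.xml")  # uncomment on a machine with DiskSpd installed
```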

Table of Contents: XML

Before your eyes burn, let’s create a brief table of contents for the XML file.

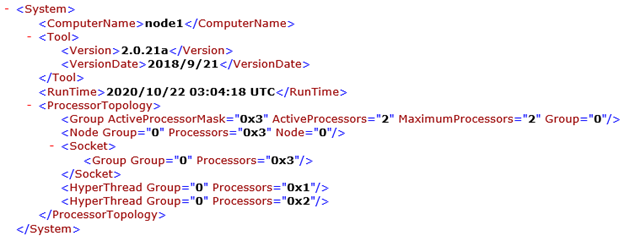

<System> Under this element, you have some basic information regarding the system itself, such as the server/VM name, DiskSpd version, number of processors, etc.

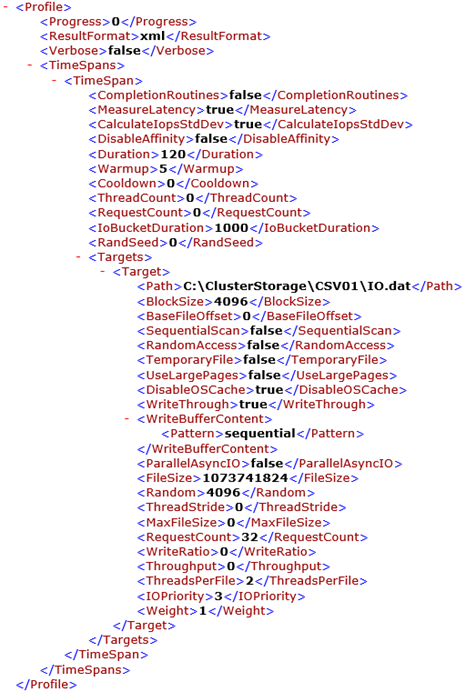

<Profile> Under this element, you will find your input parameters from when you ran DiskSpd. To name a few, this includes the queue depth, thread count, warm up time, test duration, etc. There are quite a few sub-elements within this section. Luckily, most of them are self-explanatory, and so let us focus on a few of them.

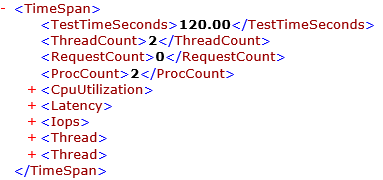

- <TimeSpans> Under this element, you will find <TimeSpan> elements. Each of those <TimeSpan> elements represents one DiskSpd test run. As you may have guessed, the content within <TimeSpan> contains the set of parameters that you, the user, specify. For example, you can see that the <RequestCount> element is set to 32 since we initially set the queue depth to be 32 when we ran DiskSpd. You can think of this section as being analogous to the “input settings” result in the txt output.

<TimeSpan> This element is not to be confused with the <TimeSpan> elements nested under <Profile>. This section contains the results of your DiskSpd test. It is similar to the data presented in the txt file, but with added granular data. More specifically, you can view the CPU usage, IOPS statistics, and latency statistics (average total milliseconds, standard deviation, etc.) in their respective sub-elements:

- <CpuUtilization>: The CPU data is broken down per core.

- <Latency>: The latency data is broken down into separate “buckets” where each bucket corresponds to 1 percentile rank, in ascending order from 0 to 100%.

- <Iops>: The IOPS data is broken down into separate “buckets” where each bucket holds the IO data for one sampling interval (<IoBucketDuration> in the profile, 1000 milliseconds in the script below) and is labeled with its timestamp in milliseconds.
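
To make the bucket layout more concrete, here is a minimal sketch that reads the <Iops> buckets with PowerShell. The inline XML is a trimmed stand-in for a real result file and its numbers are made up; only the /Results/TimeSpan/Iops/Bucket path and the SampleMillisecond and Total attributes are taken from the script at the end of this article.

```powershell
# Trimmed stand-in for a DiskSpd result file; the values are made up for illustration.
[xml]$results = @'
<Results>
  <TimeSpan>
    <Iops>
      <Bucket SampleMillisecond="1000" Total="1200" />
      <Bucket SampleMillisecond="2000" Total="1350" />
      <Bucket SampleMillisecond="3000" Total="1275" />
    </Iops>
  </TimeSpan>
</Results>
'@

# Select every IOPS bucket and turn it into a row with friendlier column names.
$buckets = $results.SelectNodes('/Results/TimeSpan/Iops/Bucket')
$rows = foreach ($bucket in $buckets) {
    [pscustomobject]@{
        'Time (s)'  = [int]$bucket.GetAttribute('SampleMillisecond') / 1000
        'Total IOs' = [int]$bucket.GetAttribute('Total')
    }
}
$rows | Format-Table -AutoSize

# Aggregate the buckets yourself, for example as an average per sampling interval.
$average = ($rows | Measure-Object -Property 'Total IOs' -Average).Average
"Average IOs per bucket: $average"
```

With a real result file, you would replace the inline XML with something like [xml](Get-Content .\output.xml).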

This may give rise to the question: can you modify the contents of this XML file and feed it back into DiskSpd? Yes, you absolutely can! In fact, there is another parameter precisely for this purpose (-X), which is great for batch testing. Here are the steps to get you started:

- Before using this parameter (-X), you will need to preserve the contents within the <Profile> element. Any other data that exists in the XML file may be discarded. If you plan to run the DiskSpd test with modified input parameters, be sure to make the appropriate changes in the <Profile> section.

- Optional: If you plan to run multiple DiskSpd tests, you can add more <TimeSpan> elements under <TimeSpans> (inside <Profile>), with your desired input parameters.

- You can then run DiskSpd with the -X parameter, which takes the XML file path as input and outputs a new XML (or txt) result.
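
The steps above can be sketched in PowerShell as follows. The inline XML is a heavily trimmed, hypothetical stand-in for a previous result file (a real <Profile> carries many more elements, all of which you would keep), and the file name profile.xml is an arbitrary choice.

```powershell
# Trimmed stand-in for an earlier DiskSpd result; a real file has many more elements.
[xml]$results = @'
<Results>
  <System />
  <Profile>
    <TimeSpans>
      <TimeSpan>
        <Duration>10</Duration>
        <Targets>
          <Target>
            <RequestCount>32</RequestCount>
          </Target>
        </Targets>
      </TimeSpan>
    </TimeSpans>
  </Profile>
  <TimeSpan />
</Results>
'@

# Step 1: keep only <Profile> by importing it into a new, empty document.
$profileDoc = New-Object System.Xml.XmlDocument
$profileNode = $profileDoc.ImportNode($results.SelectSingleNode('/Results/Profile'), $true)
$null = $profileDoc.AppendChild($profileNode)

# Change an input parameter: queue depth 32 -> 8.
$firstSpan = $profileDoc.SelectSingleNode('/Profile/TimeSpans/TimeSpan')
$firstSpan.SelectSingleNode('Targets/Target/RequestCount').InnerText = '8'

# Step 2 (optional): append a second <TimeSpan> with a different queue depth.
$secondSpan = $firstSpan.CloneNode($true)
$secondSpan.SelectSingleNode('Targets/Target/RequestCount').InnerText = '16'
$null = $firstSpan.ParentNode.AppendChild($secondSpan)

# Step 3: save the profile so that DiskSpd can read it through -X.
$profilePath = Join-Path (Get-Location).Path 'profile.xml'
$profileDoc.Save($profilePath)

# With a complete profile from a real run, the next step would be:
# & .\diskspd.exe "-X$profilePath" > .\batch_output.xml
```

Importing the <Profile> node into a fresh document avoids deleting the other elements by hand and leaves the original result file untouched.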

Bonus: Script to Extract IOPS

In case you want a starting point, I’ve included a short script that builds a profile, runs DiskSpd with it, and then takes the resulting XML output (named “output.xml”) and extracts the total IOs achieved per second into a neat CSV file for you to view. Run it from the folder that contains diskspd.exe, since the script reads and writes its files in the current directory. This might be a good place to start if you want to get more data insights about IOPS. **Foreshadowing**

Final Remarks

Hopefully, this provides a solution for those situations where you always wanted a more detailed form of data or to run DiskSpd batch tests. You can also imagine that there are a variety of ways you can manipulate the XML output through PowerShell scripts. Alas, this is for another day.

*Script Below*

# Written by Jason Yi, PM
# 12/2020

<#
.PARAMETER d
integer number of DiskSpd runs (effectively the duration in seconds, since each run is one second long)
.PARAMETER path
the path to the test file
.PARAMETER rw_flag
the default is 0. 0 means the user supplies their own read/write ratio (w), whereas 1 means the read/write ratio is randomized
.PARAMETER g_min
the minimum g parameter (g parameter is the throughput threshold)
.PARAMETER g_max
the maximum g parameter (g parameter is the throughput threshold)
.PARAMETER b
the block size in bytes
.PARAMETER r
random IO aligned to specified size in bytes
.PARAMETER o
the queue depth
.PARAMETER t
the number of threads
.PARAMETER w
the percentage of write IOs (0 means all reads, 100 means all writes)
#>
Param (
    [Parameter(Position=0,Mandatory=$true)][int]$d,
    [Parameter(Position=1,Mandatory=$true)][string]$path, # e.g. C:\ClusterStorage\CSV01\IO.dat
    [int]$rw_flag = 0,
    [int]$g_min = 0,
    [int]$g_max = 8000,
    [int]$b = 4096,
    [int]$r = 4096,
    [int]$o = 32,
    [int]$t = 4,
    [int]$w = 0)

Function Create-Timespans{
    <#
    .DESCRIPTION
    Builds a DiskSpd profile containing one one-second timespan per requested run (d runs in total) while randomizing
    the throughput threshold within a specified range. Includes same parameters initially passed in by user.
    #>
    Param (
        [int]$d,
        [string]$path,
        [int]$g_min,
        [int]$g_max,
        [int]$b,
        [int]$r,
        [int]$o,
        [int]$t,
        [int]$w,
        [int]$rw_flag
    )

    # profile template with a single timespan; the parameters above are expanded into it
    [xml]$xml=@"
<Profile>
  <Progress>0</Progress>
  <ResultFormat>xml</ResultFormat>
  <Verbose>false</Verbose>
  <TimeSpans>
    <TimeSpan>
      <CompletionRoutines>false</CompletionRoutines>
      <MeasureLatency>true</MeasureLatency>
      <CalculateIopsStdDev>true</CalculateIopsStdDev>
      <DisableAffinity>false</DisableAffinity>
      <Duration>1</Duration>
      <Warmup>0</Warmup>
      <Cooldown>0</Cooldown>
      <ThreadCount>0</ThreadCount>
      <RequestCount>0</RequestCount>
      <IoBucketDuration>1000</IoBucketDuration>
      <RandSeed>0</RandSeed>
      <Targets>
        <Target>
          <Path>$path</Path>
          <BlockSize>$b</BlockSize>
          <BaseFileOffset>0</BaseFileOffset>
          <SequentialScan>false</SequentialScan>
          <RandomAccess>false</RandomAccess>
          <TemporaryFile>false</TemporaryFile>
          <UseLargePages>false</UseLargePages>
          <DisableOSCache>true</DisableOSCache>
          <WriteThrough>true</WriteThrough>
          <WriteBufferContent>
            <Pattern>sequential</Pattern>
          </WriteBufferContent>
          <ParallelAsyncIO>false</ParallelAsyncIO>
          <FileSize>1073741824</FileSize>
          <Random>$r</Random>
          <ThreadStride>0</ThreadStride>
          <MaxFileSize>0</MaxFileSize>
          <RequestCount>$o</RequestCount>
          <WriteRatio>$w</WriteRatio>
          <Throughput>0</Throughput>
          <ThreadsPerFile>$t</ThreadsPerFile>
          <IOPriority>3</IOPriority>
          <Weight>1</Weight>
        </Target>
      </Targets>
    </TimeSpan>
  </TimeSpans>
</Profile>
"@

    # 1 flag means that the user wishes to randomize the rw ratio
    # 0 flag means that the user wishes to control the rw ratio
    # Basically, throw an error when the flag is not 0 or 1
    if ( ($rw_flag -ne 1) -and ($rw_flag -ne 0) ){
        throw "Invalid rw_flag value. Please choose 0 to provide your own rw ratio, or 1 to randomize the rw ratio."
    }

    # from here on, $path is the working directory where the profile is saved
    # (the test file path is already embedded in the XML above)
    $path = Get-Location

    # loop up until the number of runs (duration) and add new timespan elements
    for($i = 1; $i -lt $d; $i++){
        $g_param = Get-Random -Minimum $g_min -Maximum $g_max
        $true_w = Get-Random -Minimum 0 -Maximum 100

        # clone the first timespan element, modify it, and append it as a child
        $new_t = $xml.Profile.TimeSpans.FirstChild.Clone()
        $new_t.Targets.Target.Throughput = "$g_param"
        if ($rw_flag -eq 1){
            $new_t.Targets.Target.WriteRatio = "$true_w"
        }
        $null = $xml.Profile.TimeSpans.AppendChild($new_t)
    }

    # show updated result
    $xml.Profile.TimeSpans.TimeSpan

    # save into xml file
    $xml.Save("$path\expand_profile.xml")
}

#
# SCRIPT BEGINS #
#

# create the xml file with diskspd parameters
Create-Timespans -d $d -g_min $g_min -g_max $g_max -path $path -b $b -r $r -o $o -t $t -w $w -rw_flag $rw_flag

# working directory that holds diskspd.exe, the profile, and the output files
$path = Get-Location

# feed profile xml to DISKSPD with -X parameter (Running DISKSPD)
& .\diskspd.exe "-X$path\expand_profile.xml" > "$path\output.xml"

$file = [xml] (Get-Content "$path\output.xml")

# select the IOPS bucket nodes (one per one-second timespan)
$nodelist = $file.SelectNodes("/Results/TimeSpan/Iops/Bucket")

# change the millisecond values to seconds (1, 2, 3, ...)
for ($sec = 1; $sec -le $nodelist.Count; $sec++){
    $nodelist[$sec - 1].SampleMillisecond = "$sec"
}

# select the objects you want in the csv file
$nodelist |
    Select-Object @{n='Time (s)';e={[int]$_.SampleMillisecond}},
                  @{n='Total IOs';e={[int]$_.Total}} |
    Export-Csv "$path\iops_stat_seconds.csv" -NoTypeInformation -Encoding UTF8 -Force # Have to force encoding to be UTF8 or data is in one column (UCS-2)

# import modified csv once more (@() keeps it an array even when there is a single row)
$fileContent = @(Import-Csv "$path\iops_stat_seconds.csv")

# if duration is less than 7 (number of percentile ranks), then add empty rows to fill that gap
if ($d -lt 7 ) {
    for($i=$d; $i -lt 7; $i++) {
        # add new row of values that are empty
        $newRow = New-Object PsObject -Property @{ "Time (s)" = '' }
        $fileContent += $newRow
    }
}

# show output in the terminal
$fileContent | Format-Table -AutoSize

# export to a final csv file
$fileContent | Export-Csv "$path\iops_stat_seconds.csv" -NoTypeInformation -Encoding UTF8 -Force
