by Contributed | Apr 1, 2021 | Technology

This article is contributed. See the original author and article here.

As you may know, the Azure CLI already has AI built in with the az find command, and you might have seen the AI-powered PowerShell module called Az Predictor (Azure PowerShell Predictions), which does what the name says: it predicts PowerShell commands. Now, with az next, the team has brought a similar feature to the Azure CLI. The team’s goal with az next is to guide users through their scenarios or sequences of jobs-to-be-done in the tool, so that they can remain focused and avoid unnecessary external documentation searches.

Az next adopts our latest design guidelines and should help make the Azure CLI more approachable for all users, including beginners.

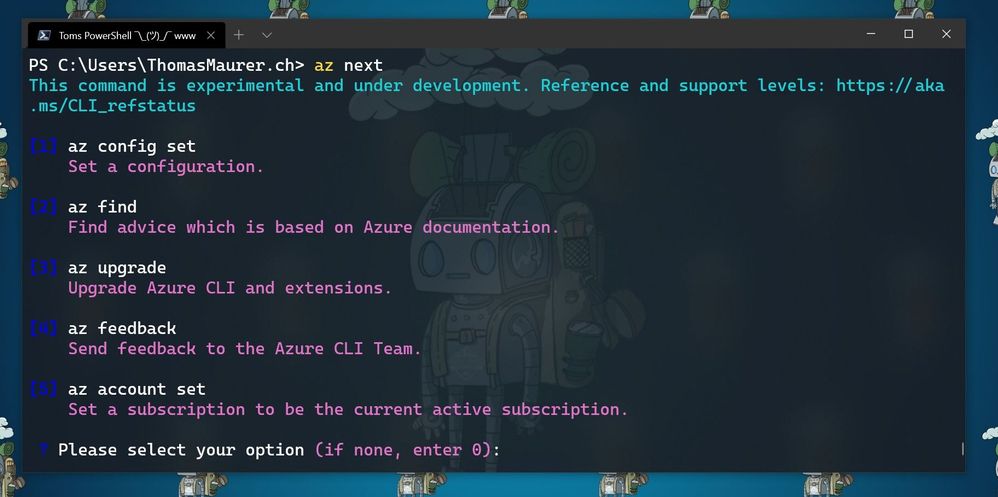

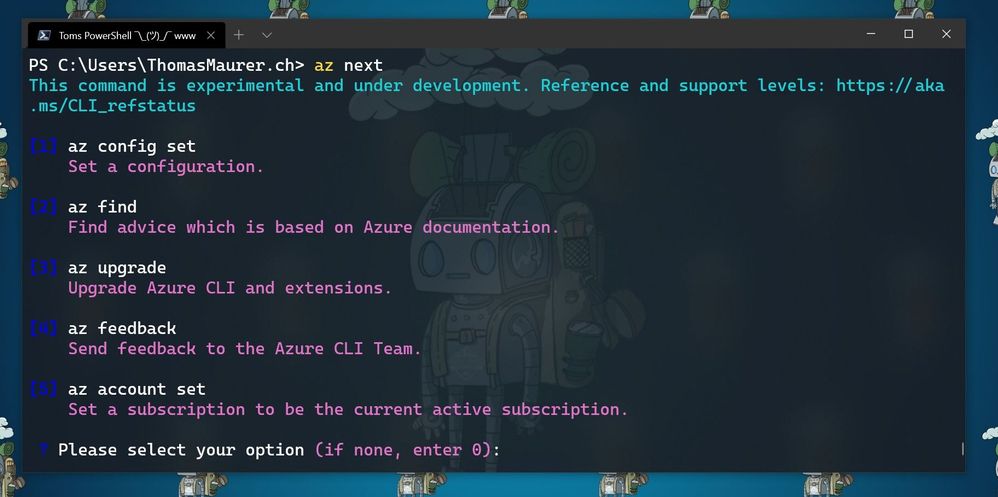

Two scenarios are currently supported. The first is a simple walkthrough of next commands: as soon as you execute az next, the Azure CLI returns a set of command options that are highly likely to come after your last command. This is especially helpful when you are running a sequence of commands, because the Azure CLI provides predictive recommendations for the next step.

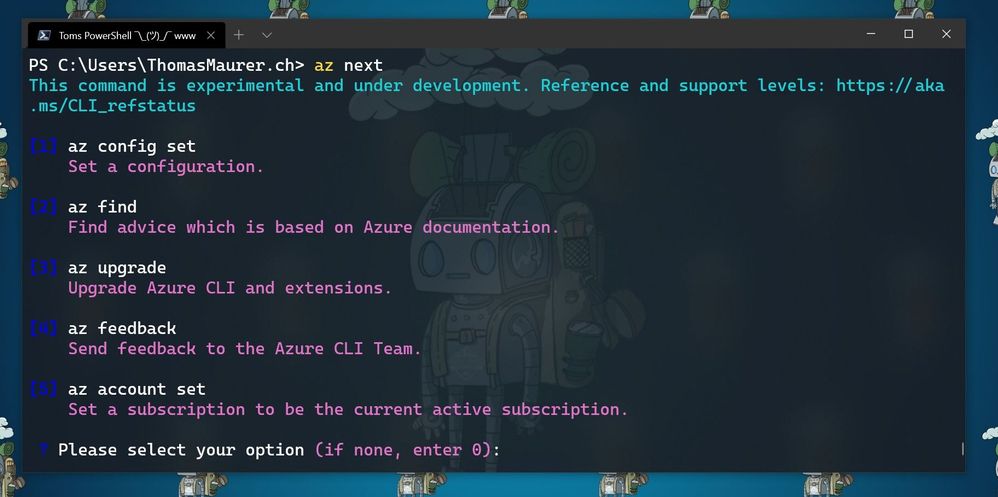

Here, for example, are the commands suggested after I ran az login to sign in to my Azure environment, followed by az next.

az next after az login


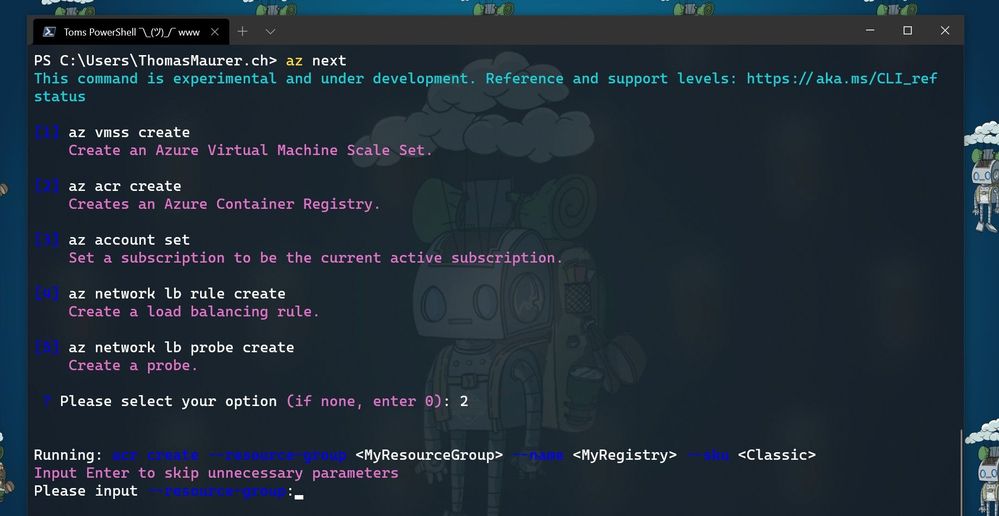

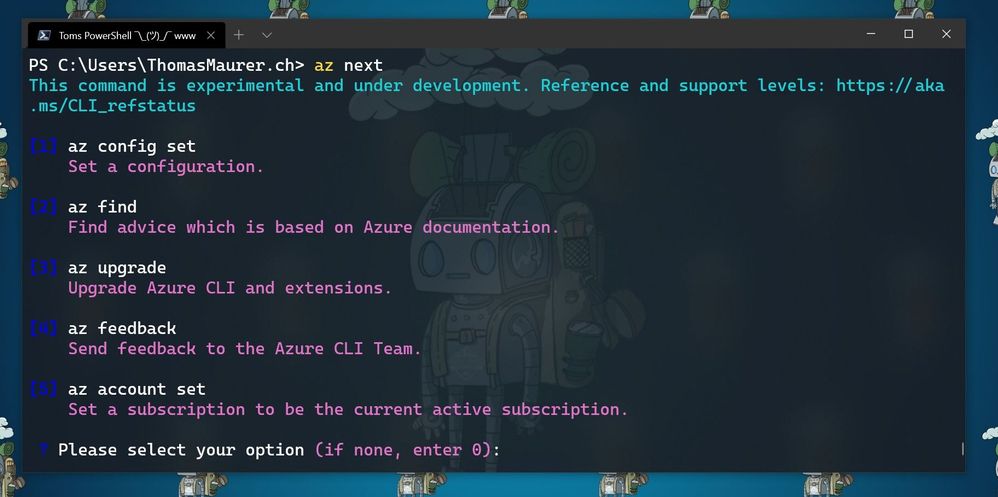

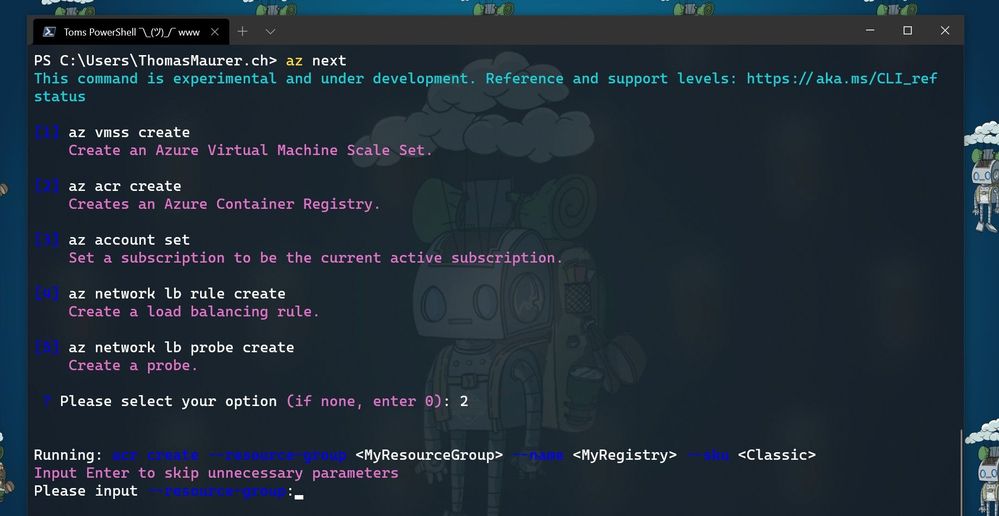

The second is an end-to-end scenario walkthrough that aims to help you achieve a specific scenario you have in mind. In this case, the options show up in the form of a summary instead of an explicit command, and the tool guides you through completing the individual commands.

az next after creating a resource group


Getting started with az next

To get started with az next, you can simply start using the preview by downloading the latest Azure CLI. You can log issues or feature requests in our GitHub repo: GitHub – Azure/azure-cli: Azure Command-Line Interface

Configure az next

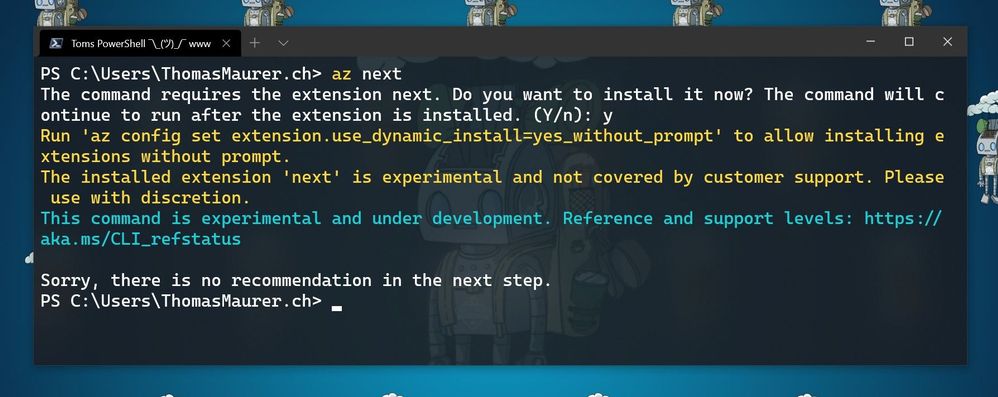

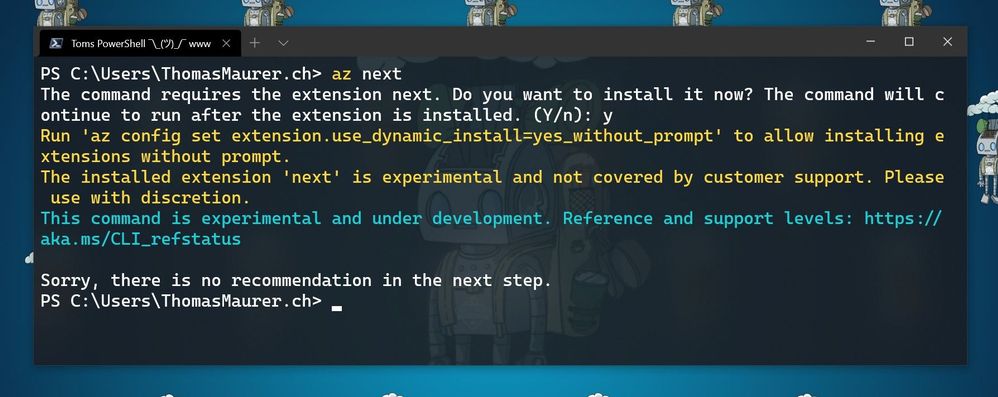

The first time you run az next, you will be prompted to install the Azure CLI extension.

Install Az next


If your Azure CLI doesn’t automatically ask to install the extension, you can run the following command:

az extension add -n next

Now you can configure az next to switch between the different modes and experiences.

Set a non-interactive experience:

az config set next.execute_in_prompt=False

Show more detailed options, including parameters:

az config set next.show_arguments=True

For additional customization options:

az next --help

Learn more

To learn more, check out the full announcement blog for the az next command here on Tech Community.

If you want to learn more about the Az Predictor Module and PowerShell Predictive IntelliSense, check out my blog posts:

by Contributed | Mar 31, 2021 | Technology


We have released a new early technical preview of the JDBC Driver for SQL Server which contains a few additions and changes.

Precompiled binaries are available on GitHub and also on Maven Central.

Below is a summary of the new additions and changes.

Added

- Added Open Connection Retry feature #1535

- Added server recognition for Azure Synapse serverless SQL pool, and Azure SQL Edge #1543

Fixed

- Fixed potential integer overflow in TDSWriter.writeString() #1531

Getting the latest release

The latest bits are available on our GitHub repository, and Maven Central.

Add the JDBC preview driver to your Maven project by adding the following code to your POM file to include it as a dependency in your project (choose .jre8, .jre11, or .jre15 for your required Java version).

    <dependency>
        <groupId>com.microsoft.sqlserver</groupId>
        <artifactId>mssql-jdbc</artifactId>
        <version>9.3.0.jre11</version>
    </dependency>

Help us improve the JDBC Driver by taking our survey, filing issues on GitHub or contributing to the project.

Please also check out our tutorials to get started with developing apps in your programming language of choice and SQL Server.

David Engel

by Contributed | Mar 31, 2021 | Technology


The following blog post helps you troubleshoot the reference implementation for DKE. Some of this information may apply to DKE partner implementations as well, but it covers primarily the reference implementation hosted in Azure or on IIS. At any rate, this guide does not replace the official documentation.

This blog post consists of three parts:

Part A: Checklist

Part B: Useful tools for troubleshooting DKE

Part C: Step by step troubleshooting guide

Part A: Checklist

After installing and configuring DKE using the official documentation, going through this checklist will help you identify and correct errors in your setup.

The troubleshooting guide below refers to some of the steps in this checklist, using the codes prepended to the titles of the sections (e.g. «CL1»).

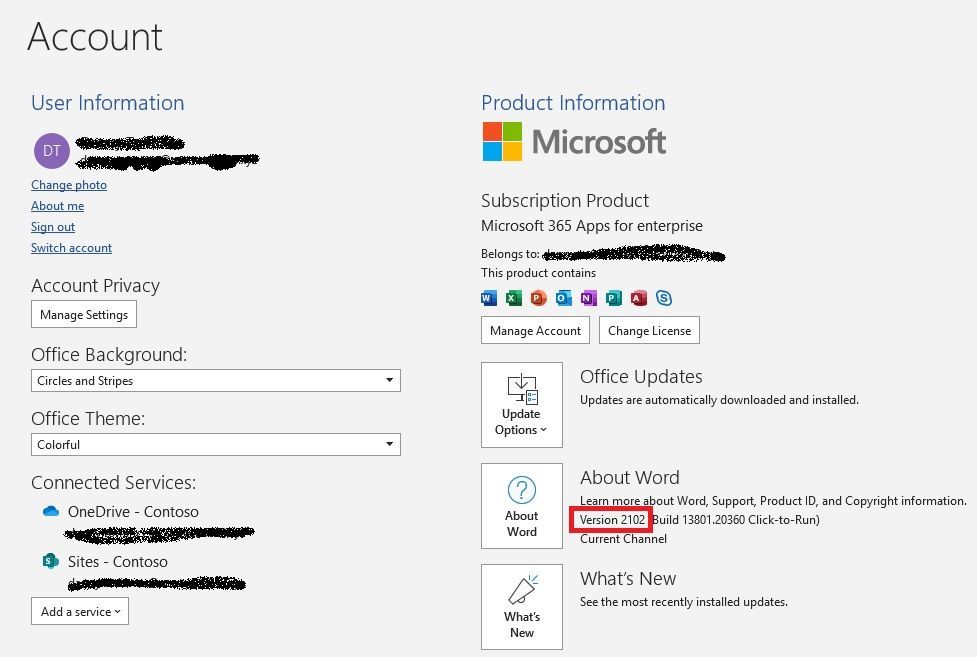

CL1: Office version

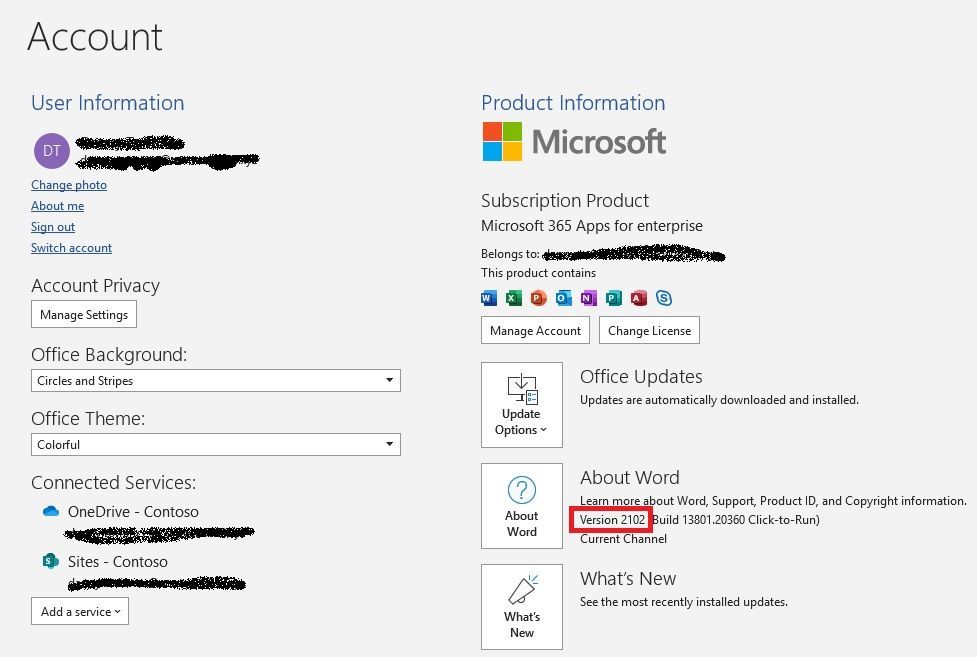

DKE is supported on Microsoft 365 Apps for enterprise version 2009 or later. Here’s how you check the version:

CL2: DKE URL in root

The DKE service URL needs to be based on the root level, i.e. not a sub directory:

CL3: No trailing slash in DKE URL

The DKE URL must not contain a trailing slash:

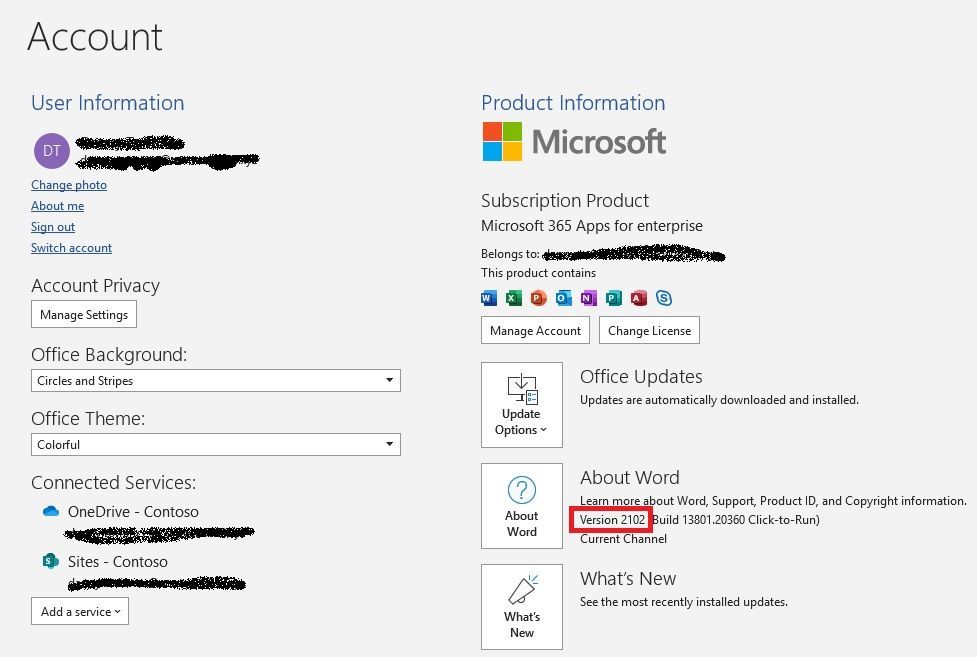

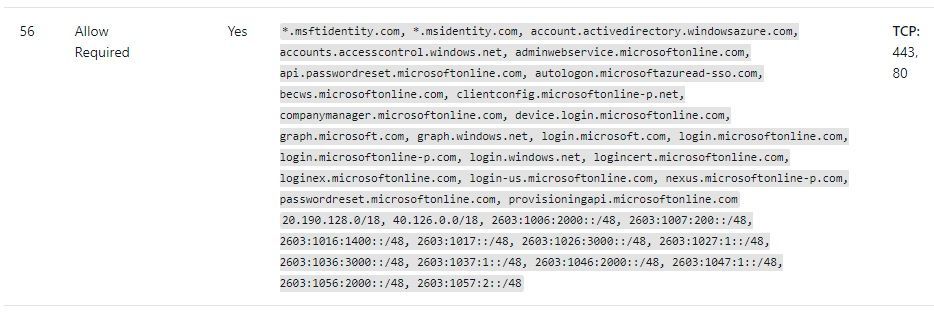
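The two URL rules above (CL2 and CL3) can be checked mechanically before the URL is entered into a sensitivity label. The following Python sketch is only an illustration and not part of the DKE reference implementation; the function name `check_dke_url` and the host `dke.contoso.com` are hypothetical.

```python
from urllib.parse import urlparse


def check_dke_url(url: str) -> list[str]:
    """Return a list of problems found in a DKE service URL (empty if none)."""
    problems = []
    parsed = urlparse(url)
    if not parsed.netloc:
        problems.append("URL has no host name")
    # CL3: the URL must not end with a slash.
    if url.endswith("/"):
        problems.append("URL ends with a trailing slash (CL3)")
    # CL2: the service must sit at the root, so no path segments are allowed.
    if parsed.path.strip("/"):
        problems.append("URL contains a sub directory (CL2)")
    return problems


if __name__ == "__main__":
    # Hypothetical example URLs.
    for candidate in ("https://dke.contoso.com",
                      "https://dke.contoso.com/",
                      "https://dke.contoso.com/dke"):
        print(candidate, "->", check_dke_url(candidate) or "OK")
```

The same check can be applied to the redirect URI of the web application registration, which has the same trailing-slash requirement (see CL6).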

CL4: Outbound connectivity to Azure AD

In order to perform Azure AD authentication, the DKE service needs to have transparent outbound connectivity as described in box 56 of our documentation:

By adapting the source code of the DKE reference implementation, you may also use a forward proxy. The necessary changes have been implemented in an open pull request. Please note that an anonymous proxy is required for this, i.e. a proxy that allows access to the necessary URLs without authentication.

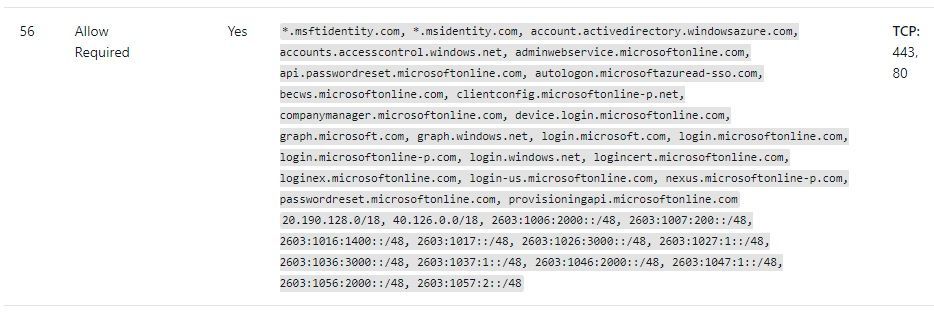

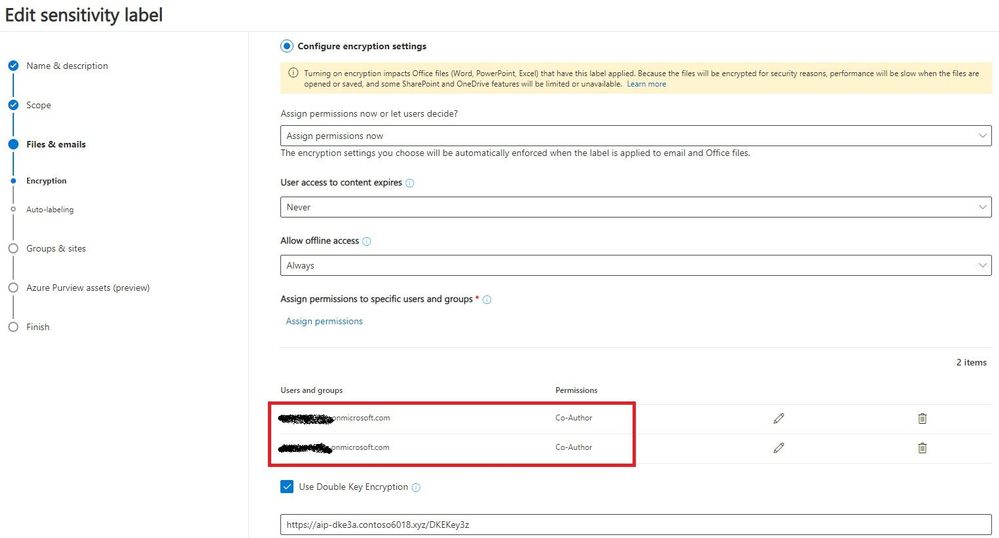

CL5: Permissions in the sensitivity label used for DKE

The sensitivity label used for DKE protection needs to provide sufficient permissions for all intended recipients of the documents. During the test phase, it’s suggested to grant permission to the whole tenant:

After DKE has been tested successfully, it’s good practice to remove permissions on the sensitivity label for users and groups that are not allowed to access the DKE service.

CL6: Web application configuration

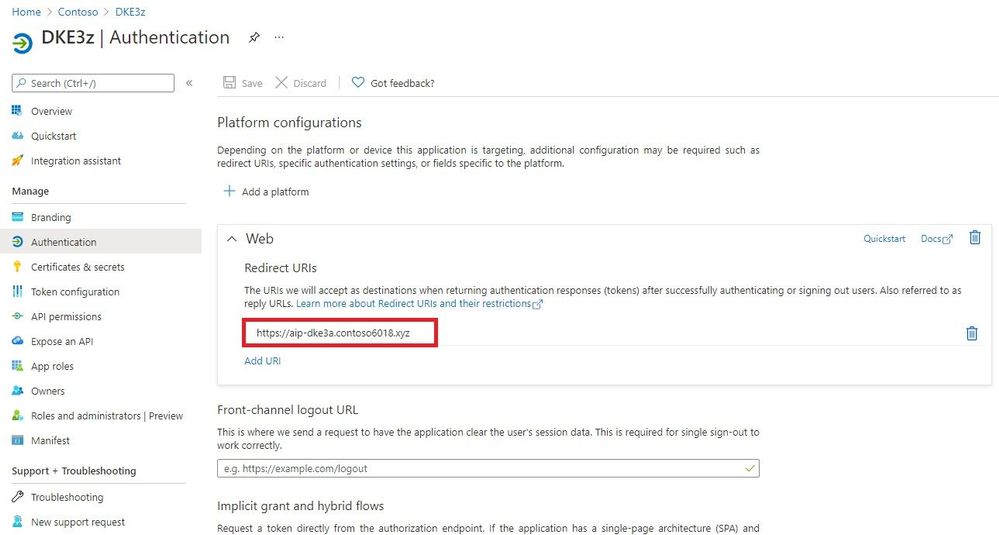

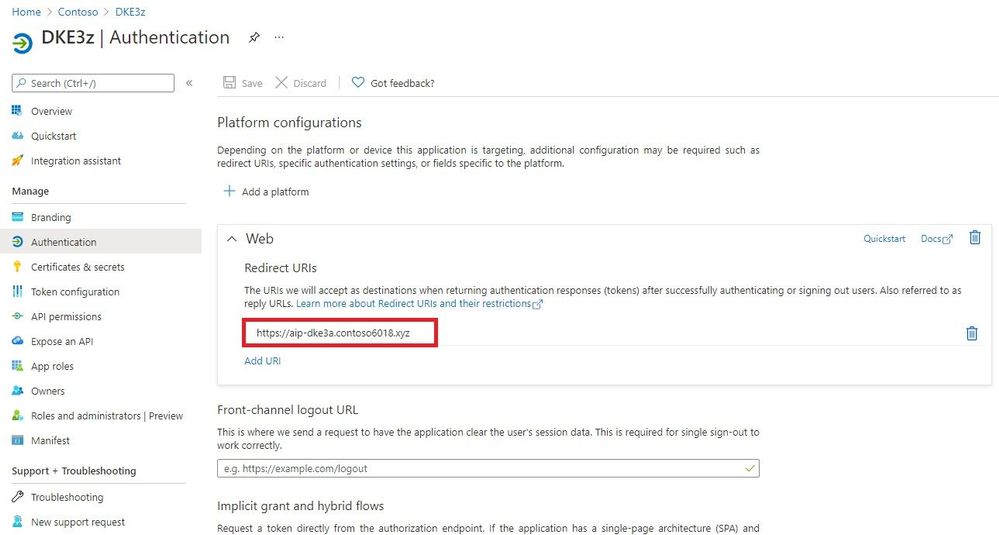

In the «Authentication» section of the DKE web application registration, verify that the redirect URI does not contain a trailing slash (see also CL3):

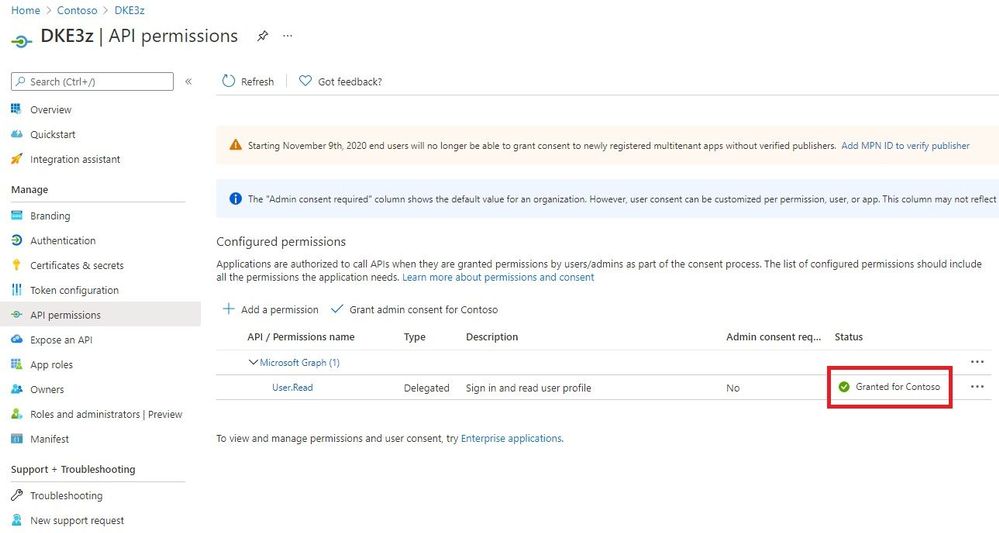

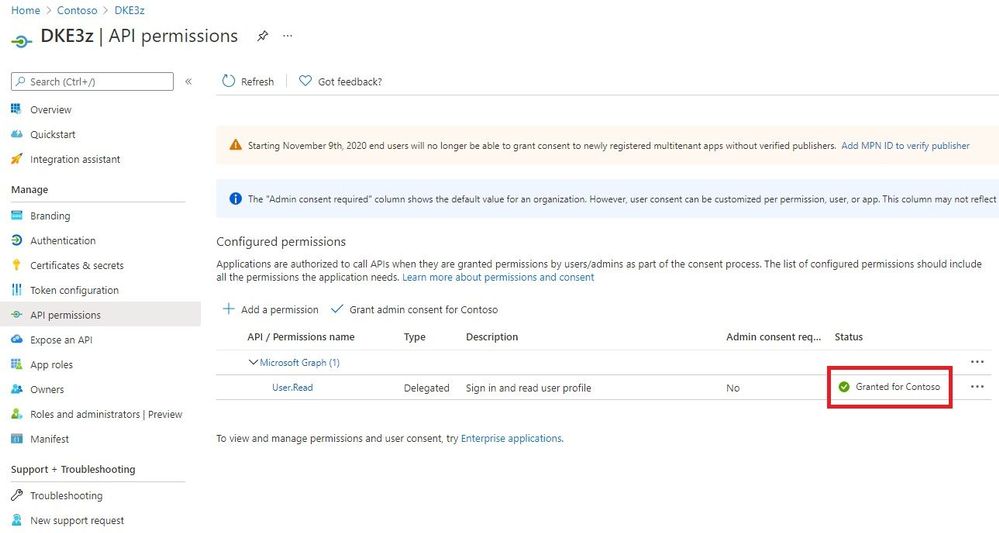

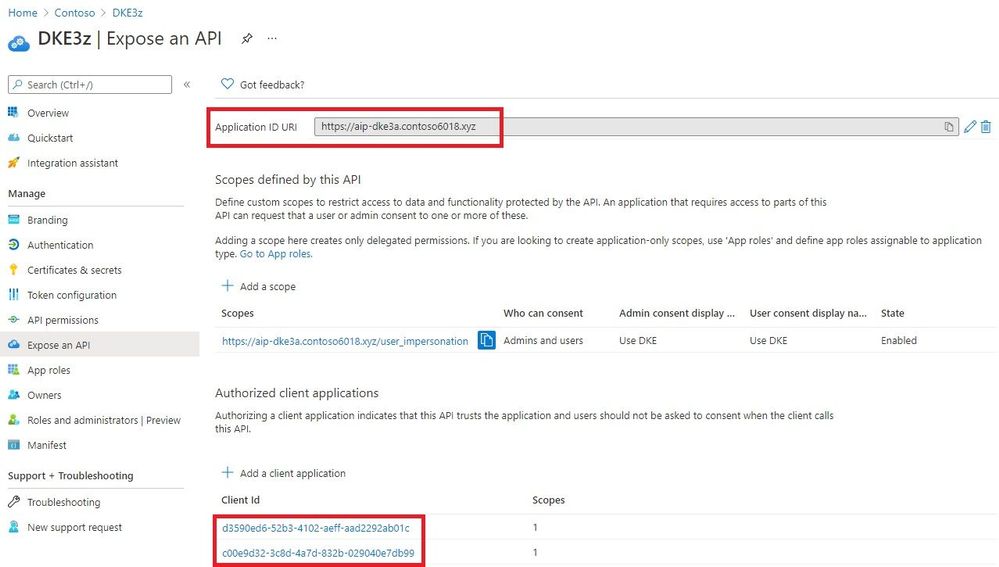

In the section “API permissions”, make sure the whole tenant has been granted consent to “User.Read”:

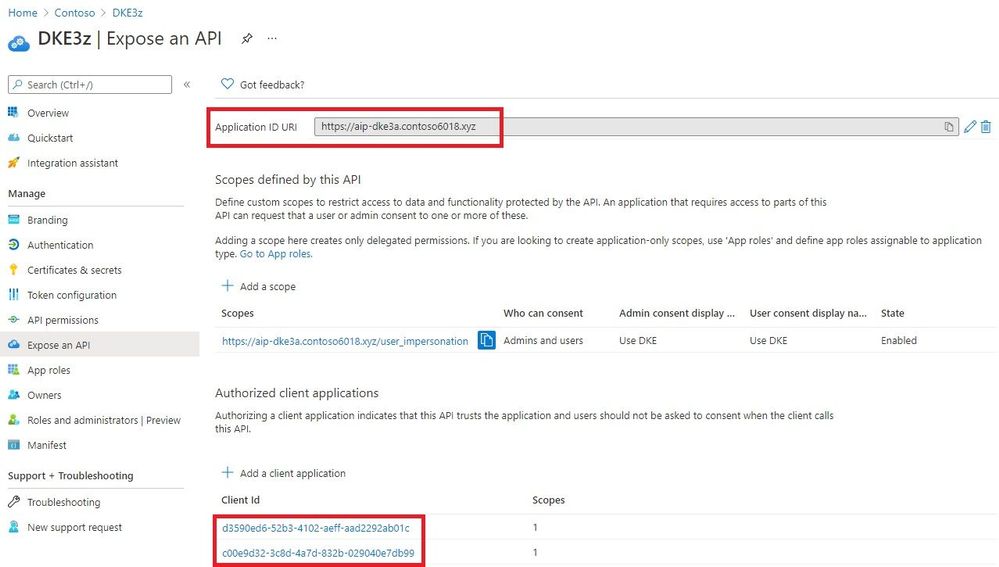

Check that these points have been addressed in the section “Expose an API”:

- The “Application ID URI” is configured as the DKE URL.

- Client IDs are registered both for Office (d3590ed6-52b3-4102-aeff-aad2292ab01c) and the AIP (Azure Information Protection) client (c00e9d32-3c8d-4a7d-832b-029040e7db99).

CL7: Recipients are allowed to use the DKE service

The DKE service authorizes users either via a list of email addresses or via membership in a local AD group. Either way, you have to ensure all test users are allowed to access the DKE service.

If you use email-based authorization, make sure the email addresses of all users are included in the list of email addresses in the configuration file. Please note that each individual user email address needs to be in quotes, e.g. ["jane.doe@contoso.com","albert.smith@contoso.com"].
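A quick way to catch quoting mistakes in the email list is to parse it as JSON before deploying the configuration file. The sketch below is an illustration in Python, not part of the DKE service; the function name `parse_authorized_emails` is hypothetical, and it assumes you have copied the list value out of the configuration file as a string.

```python
import json


def parse_authorized_emails(raw: str) -> list[str]:
    """Parse a JSON array of email addresses; raise ValueError if malformed."""
    try:
        emails = json.loads(raw)
    except json.JSONDecodeError as exc:
        # Unquoted addresses or typographic quotes end up here.
        raise ValueError(f"not valid JSON (check the quotes): {exc}") from exc
    if not isinstance(emails, list) or not all(isinstance(e, str) for e in emails):
        raise ValueError("expected a JSON array of quoted strings")
    return emails


if __name__ == "__main__":
    good = '["jane.doe@contoso.com","albert.smith@contoso.com"]'
    print(parse_authorized_emails(good))
    try:
        parse_authorized_emails("[jane.doe@contoso.com]")  # quotes missing
    except ValueError as err:
        print("rejected:", err)
```

Typographic (curly) quotes pasted from a document are rejected in the same way as missing quotes, which makes this a useful check when the list was prepared in a word processor.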

CL8: Client connectivity to DKE and Azure AD

To acquire the public key and to decrypt existing keys, clients need to be able to reach the DKE service. To allow authentication, clients also require access to Azure AD.

Both transparent connectivity and forward proxies (with or without authentication) are supported.

CL9: DKE-related registry values are set on each client

Ensure the following registry values are defined on each client. Please note that some of the registry keys may also need to be created:

    [HKEY_LOCAL_MACHINE\SOFTWARE\WOW6432Node\Microsoft\MSIPC\flighting]
    "DoubleKeyProtection"=dword:00000001

    [HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\MSIPC\flighting]
    "DoubleKeyProtection"=dword:00000001

CL10: Tenant is listed in the ValidIssuers value

Check the following setting in the ‘TokenValidationParameters’ section of the DKE configuration file:

In ‘ValidIssuers’, your Azure AD tenant needs to be listed (e.g. «https://sts.windows.net/7d024093-e9a7-47e4-a205-bbbd4eed8e3a/»).
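The issuer entry has to match exactly, so stray spaces or a missing trailing slash are easy to overlook. As a rough illustration, the following Python sketch builds the expected issuer URL from a tenant ID and checks whether it is listed. The helper names are hypothetical, and the sketch assumes the configuration has already been loaded into a dictionary with ‘TokenValidationParameters’ as a top-level section.

```python
def expected_issuer(tenant_id: str) -> str:
    """Build the issuer URL for a tenant, following the example format above."""
    return f"https://sts.windows.net/{tenant_id.strip()}/"


def tenant_is_listed(settings: dict, tenant_id: str) -> bool:
    """Check whether the tenant's issuer URL appears in ValidIssuers."""
    # Assumption: 'TokenValidationParameters' sits at the top level of the settings.
    section = settings.get("TokenValidationParameters", {})
    return expected_issuer(tenant_id) in section.get("ValidIssuers", [])


if __name__ == "__main__":
    tenant = "7d024093-e9a7-47e4-a205-bbbd4eed8e3a"  # example tenant ID from the text
    settings = {
        "TokenValidationParameters": {
            "ValidIssuers": [f"https://sts.windows.net/{tenant}/"]
        }
    }
    print(tenant_is_listed(settings, tenant))
```

An entry that contains a space after the host name, or that lacks the final slash, would not match and the check would return False.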

Part B: Useful tools for troubleshooting DKE

The following tools have proven to be useful in debugging DKE installations.

Codes prepended to the titles of the sections (e.g. «T4») are again referenced in the step by step troubleshooting guide.

T1: Fiddler trace

Fiddler allows you to see the communication between the client and the DKE service in detail. To get a trace, consider performing the following steps:

- Install and launch Fiddler, available on https://www.telerik.com/fiddler.

- Select «Tools > Options», switch to tab «HTTPS», check option «Decrypt HTTPS traffic», click OK and acknowledge prompts for installing a root certificate.

- Try to reproduce the issue you want to debug.

In a Fiddler trace, you may check the communication with the DKE service.

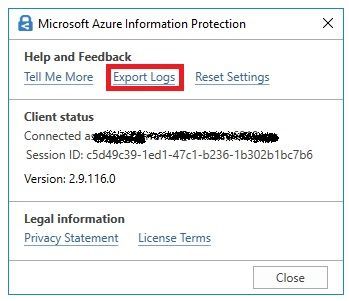

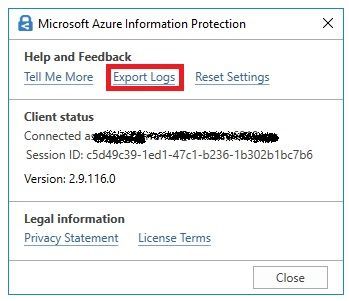

T2: Export AIP Logs

In the Word toolbar, select «Sensitivity». Choose option «Help and Feedback» and click on «Export Logs»:

The ZIP file contains the relevant logs, for instance the MSIPC logs which cover DKE activity of the client.

T3: Web Server Logs

The web server logs show two kinds of activities:

- Clients downloading the public key (when protecting content with DKE)

- DKE clients attempting to run decrypt operations (when opening DKE protected content)

Repeated attempts for decrypt operations where the server responds with 401 would indicate authentication issues.

If clients fail to protect content with DKE labels and there’s no activity in the web server logs, likely there’s a misconfiguration or a connectivity issue.
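To spot repeated 401 responses quickly, the web server log can be summarized by request path and status code. The Python sketch below is a minimal illustration that assumes the log is in the W3C extended format (with a `#Fields:` header naming `cs-uri-stem` and `sc-status`); the sample lines, including the key name and paths, are made up for the example.

```python
from collections import Counter


def count_status_by_path(log_lines):
    """Count (path, status) pairs in W3C extended log lines."""
    fields = []
    counts = Counter()
    for line in log_lines:
        line = line.strip()
        if line.startswith("#Fields:"):
            # The header names the columns of the entries that follow.
            fields = line[len("#Fields:"):].split()
            continue
        if not line or line.startswith("#") or not fields:
            continue
        values = line.split()
        if len(values) != len(fields):
            continue  # skip lines that do not match the header
        row = dict(zip(fields, values))
        counts[(row.get("cs-uri-stem"), row.get("sc-status"))] += 1
    return counts


if __name__ == "__main__":
    # Hypothetical sample data, not taken from a real DKE server.
    sample = [
        "#Fields: date time cs-method cs-uri-stem sc-status",
        "2021-03-31 10:00:01 GET /TestKey1 200",
        "2021-03-31 10:05:12 POST /TestKey1/abc/decrypt 401",
        "2021-03-31 10:05:13 POST /TestKey1/abc/decrypt 401",
    ]
    for (path, status), n in count_status_by_path(sample).items():
        print(path, status, n)
```

A path that shows up only with status 401 points to the authentication issues described above, while an empty result for a client that just tried to protect content points to misconfiguration or connectivity.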

T4: Event logs

Check the event logs for any exception messages.

If you installed the DKE service on IIS, you’ll find the event log in «Windows Event Viewer», «Application log».

If you’re hosting the DKE service on an Azure web app, you’ll find the event log as follows:

- Go to your App Service

- Open left-hand menu, “Diagnose and solve problems”

- Select «Diagnostic Tools» (in the main pane)

- Open «Support Tools/Application Event Logs» on the left-hand menu of the new screen

Part C: Step by step troubleshooting guide

To narrow down which piece is missing, we suggest troubleshooting in the following order:

- Check the web site with the validation script.

- Try to save a document protected with a DKE label.

- Have another user open a DKE protected document.

- Let a user re-label a DKE protected document by right-clicking the document and selecting «classify and protect».

Some resolution steps refer to checklist items and tools. The reference uses codes that are prepended to the titles of the checklist items (e.g. «CL1») and tools (e.g. «T4»).

Step 1: Check the web site with the validation script

We suggest running the validation script:

[…]\DoubleKeyEncryptionService\src\customer-key-store\scripts> .\key_store_tester.ps1 <DKE URL>/<Key>

If this is successful, please proceed with step 2.

However, you may see the following output:

Validation request started: <DKE URL>/<Key>

Validation failure: Unable to access the provided url Not Found

Similarly, a 404 error is issued when you open the URL in a web browser.

This indicates one of the following issues:

| Potential issue | Suggested resolution steps |
| --- | --- |
| The URL is not correct. | Double-check the URL. |
| There’s an internal exception in the web site. | Check the event log on the DKE service (see tool T4). |

Step 2: Try to save a document protected with a DKE label

(Ensure the DKE label has defined «Allow offline access:» as «Always».)

Saving the document successfully shows the client can reach the DKE service anonymously and the service provides a suitable RSA key. In this case, please proceed with step 3.

But you might encounter this behavior:

Despite having ample space on a disk (or on OneDrive), the following message is shown when saving a DKE protected document: «Word cannot save or create this file. Make sure the disk you want to save the file on it is not full, write-protected, or damaged.»

This indicates one of the following issues:

| Potential issue | Suggested resolution steps |
| --- | --- |
| The client is not configured to use DKE. | Re-check the Office version (see checklist item CL1). Verify the DKE registry keys have been imported on the client (see checklist item CL9). |
| The client cannot reach the DKE service. | On the client, try opening the DKE URL configured in the sensitivity label. If that fails, fix the network issue as needed. |

Step 3: Have another user open a DKE protected document.

If user1 protects a document with DKE and user2 succeeds in opening this document, users can be authenticated to DKE. In this case you may proceed with step 4.

But a user trying to open a DKE document that she did not protect herself may see the following error message:

«You are not signed in to Office with an account that has permission to open this document. You may sign in a new account into Office that has permission or request permission from the content owner.»

This indicates one of the following issues:

| Potential issue | Suggested resolution steps |
| --- | --- |
| The user hasn’t been granted permission in the sensitivity label. | During tests, try granting the whole tenant access in the sensitivity label permissions (see checklist item CL5). |
| The DKE service URL contains a sub-folder. | Verify that the DKE URL consists of the FQDN only (see checklist item CL2). |
| The web application isn’t configured correctly. | Check the settings in the web application (see checklist item CL6). |
| The DKE service is hosted on IIS, but it cannot reach Azure AD due to lacking outbound Internet connectivity. | Check for exception «System.InvalidOperationException: IDX20803: Unable to obtain configuration» in the event viewer (see tool T4). If this exception occurs, make sure the DKE service has outbound connectivity. |
| The configuration file doesn’t grant permission for the tenant. | Ensure «ValidIssuers» contains the tenant-specific URL (see checklist item CL10). |
| DKE doesn’t authorize the user to access the service. | Check the authorization option (see checklist item CL7). |

Step 4: Let a user re-label her own DKE protected document with right-click, «classify and protect».

(For this test, the user has protected the document herself in Office.)

If the user succeeds in re-labeling this protected document with right-click, the AIP client is also registered with the web application and an Office version supporting DKE is installed.

However, the user may see the following error message in the AIP client:

«An unknown error occurred. If this problem persists, contact your administrator or help desk.»

This indicates one of the following issues:

| Potential issue | Suggested resolution steps |
| --- | --- |
| The client doesn’t have the correct Office version installed. | Re-check the Office version (see checklist item CL1). |
| The AIP client is not registered in the web application. | Check whether the client ID for the AIP client has also been registered in the web application (see checklist item CL6). |

by Contributed | Mar 31, 2021 | Technology


For the 19th annual Imagine Cup, thousands of student developers from around the world submitted impactful tech innovations. Teams were challenged to bring an idea to life that tackles a local or global issue in one of four competition categories: Earth, Education, Healthcare, and Lifestyle. Out of 40 World Finalists that pitched their projects at the World Finals, four teams were selected to advance.

These teams have reimagined solutions for issues in sustainable farming, remote learning and teaching, access to healthcare, and accessibility, in ways that bring purpose and meaning to our lives. At the core of the solutions is innovative and original use of Azure technology, including IoT, Artificial Intelligence, App Services, Visual Studio Code, and so much more.

Meet the Top 4 teams!

Team ProTag, New Zealand

Earth category

Project: ProTag

Project description: ProTag is a smart ear tag for livestock that can detect the early onset of illness in real time – lowering costs and increasing welfare. Embedded temperature, movement, and location sensors collect data that is analyzed onboard to identify animal behaviors such as chewing, walking, and sleeping. This semi-processed data is transmitted over LoRaWAN to a cloud database, to be combined with farm features and feed into AI models trained to detect illnesses. Keeping animals healthy doesn’t just improve welfare; it increases productivity, leading to a more sustainable way of farming. Team member Tyrel Glass shared that, “The recent explosion of AI and IoT presents a unique opportunity to rethink the way farming is approached. We can put a small, low-cost ear tag on livestock that provides farmers with the insights they need to manage or even prevent illnesses. It’s an exciting, fast-paced space tackling some of the big sustainability issues we face in feeding a growing global population.”

After being selected for the top 4, ProTag shared, “It’s an awesome validation that our idea has some good merit, and we’re excited to take it further.” Looking forward to the World Championship in May, they say, “We’re excited for the mentorship and to take the idea we’ve got now and polish it over the next six weeks.”

Team Hands-On Labs, United States

Education category

Project: Hands-On Labs

Project description: Hands-On Labs is a set of remote laboratories that allow students to observe and remotely control physical tools online in real-time for their courses. The team aims to provide an active learning experience to students from any background all around the world. The platform uses Azure App Services, Storage, and Visual Studio to allow unprecedented control over various aspects such as lighting levels, camera angles, audio controls, and various control modes. The team believe that remote learning should be accessible to all students, sharing, “We need to bring active learning to students fingertips in order to raise leaders of tomorrows who can experiment, solve problems and are not afraid of making mistakes as they can observe and learn from them”.

After finding out they were selected to advance, the team shared, “It’s an honor for us to be among all these amazing students. We both come from underprivileged communities and we want to make sure everyone has access to the education they deserve.”

Team REWEBA, Kenya

Healthcare category

Project: REWEBA

Project description: REWEBA is an IoT-based early warning system for babies. It remotely monitors infant parameters during regular post-natal screening. The IoT device is used to measure infant parameters and sends measurements to doctors remotely, allowing for immediate interventions saving infants from fatal diseases and reducing infant mortality rates. It combines a variety of technologies to provide innovative functionalities for infant screening. The team are committed to solving problems faced by infants and parents in their community, sharing that “Sub-Saharan Africa remains the region with the highest under-5 mortality rate in the world. We can solve this problem using REWEBA, a remote infant monitoring system that can be used in marginalized areas thus giving everyone equal access to healthcare.”

After being selected for the top 4, the team said, “We have no words, it means a lot.” Looking ahead to the World Championship, the team are “…very excited to work with mentors {moving forwards} and give it our best.”

Team Threeotech, Thailand

Lifestyle category

Project: JustSigns

Project description: JustSigns is a web application for content creators to create sign language captions to improve media accessibility for hard-of-hearing viewers. JustSigns accepts YouTube video URLs, retrieves video captions, and translates all the sentences into Thai sign language grammar. Then, the application generates a 3D sign language animation which the user can view side by side with the original video. The team’s goal is to make media accessible for all, sharing that, “We believe that our solution could radically transform the way hearing-impaired people live, work and play by allowing them to learn new things and improve themselves, to enjoy movies that they’ve never really understood before, and to explore what interests them as they are now able to access all media in the world”.

When they made it into the top 4, the team shared, “It’s unbelievable, it means a lot to us to be here. We think Imagine Cup is a huge competition, and we want to show the world our solution.”

————————

Taking on the challenges they have seen in their own lives, these incredible students have brought their passion, ingenuity, and perspective to a global stage. Their ideas push the envelope on what’s possible in order to improve our society and create a brighter and more inclusive future for all.

Join us in congratulating these teams on their incredible success so far, and follow their journey on Twitter and Instagram as they head to the World Championship to compete. The 2021 World Champion will take home USD75,000 and mentorship with Microsoft CEO, Satya Nadella.

by Contributed | Mar 31, 2021 | Technology


The 21.03 Azure Sphere OS quality update is now available in the Retail feed. This release includes bug fixes in the Azure Sphere OS; it does not include an updated SDK. If your devices are connected to the internet, they will receive the updated OS from the cloud.

21.03 includes updates to mitigate against the following Common Vulnerabilities and Exposures (CVEs).

For more information on Azure Sphere OS feeds and setting up an evaluation device group, see Azure Sphere OS feeds and Set up devices for OS evaluation.

For self-help technical inquiries, please visit Microsoft Q&A or Stack Overflow. If you require technical support and have a support plan, please submit a support ticket in Microsoft Azure Support or work with your Microsoft Technical Account Manager. If you would like to purchase a support plan, please explore the Azure support plans.

by Contributed | Mar 31, 2021 | Technology


The Microsoft Planner team is constantly striving to deliver capabilities that help you stay on top of your tasks, no matter where they originate. This was the impetus for integrating Planner and the Microsoft 365 Message center last year. IT admins were missing important Microsoft updates because there was no way to formally track them. Now, they can quickly convert Message center messages to Planner tasks to ensure every Microsoft release, from security enhancements to new features, is properly deployed.

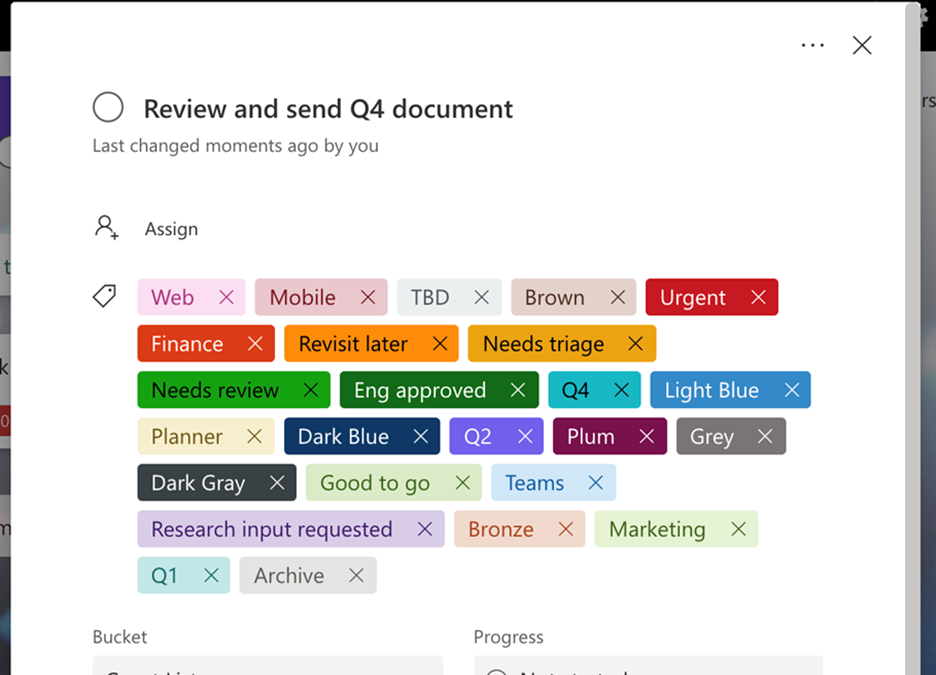

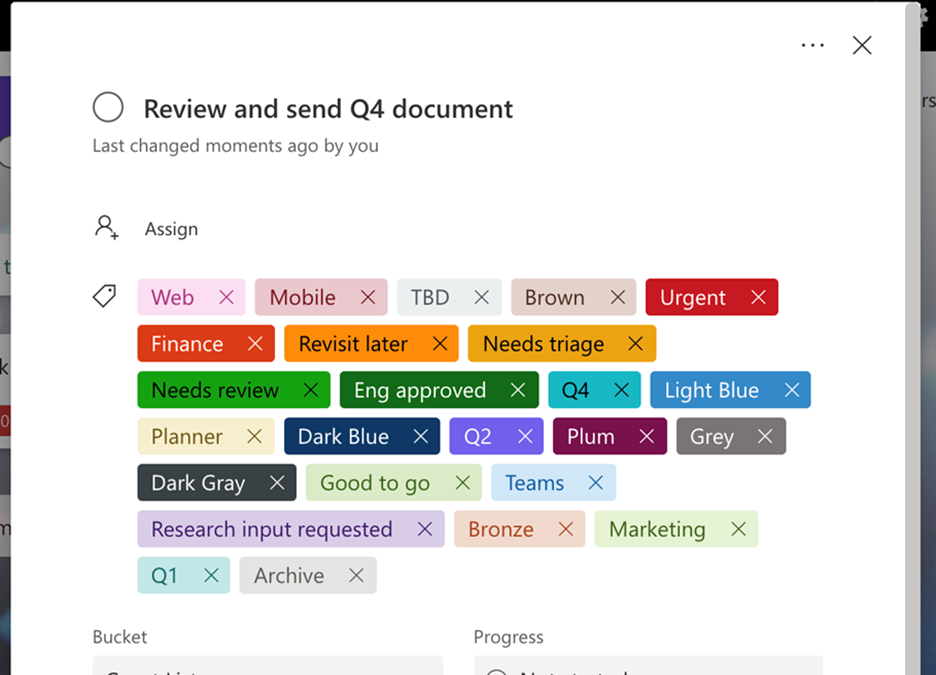

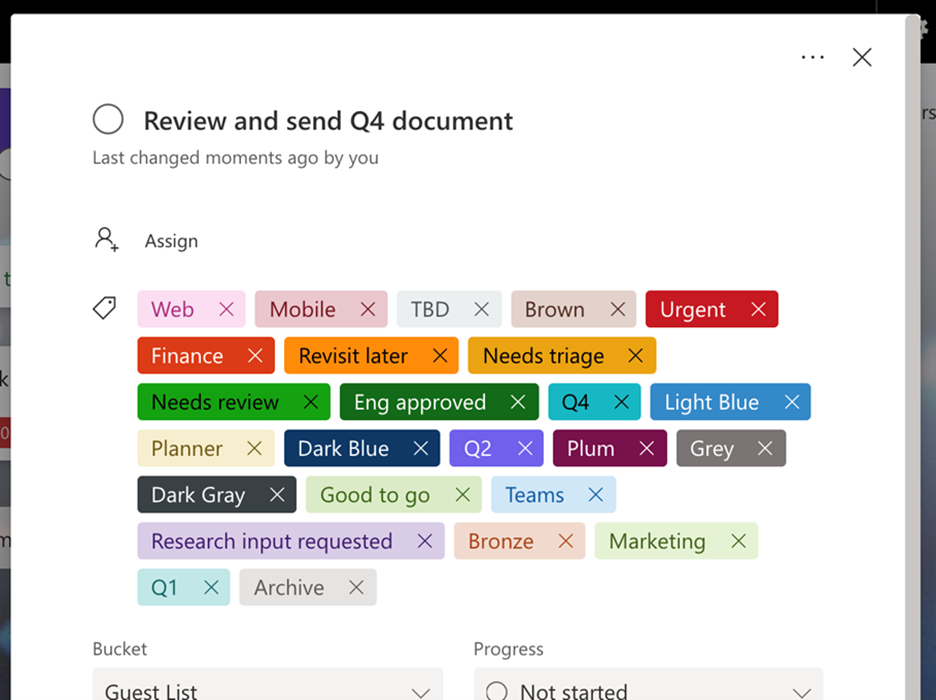

Customer asks are another driving force behind our development strategy. It was because of users like you that we released more labels earlier this month. Since its inception, Planner has only had six labels for tagging tasks. We long heard from customers this wasn’t enough, and so in early March we expanded that number to 25, enabling teams to better categorize, filter, and find their tasks.

And now, we’re excited to announce that both Planner in Message center and the 25 color-coded labels are coming to all Microsoft 365 and Office 365 government cloud offerings, including GCC, GCC High, and DoD. (Note, Planner in Message center has been available for GCC since December.) More labels are available now for all three offerings, while Planner in Message center is currently rolling out to GCC High and DoD.

You can now use up to 25 color-coded labels in Planner

If you’d like to keep up with Planner and other task management news, including updates to the Tasks app for Microsoft Teams, visit our Tech Community Blog webpage. And if you’re new to Planner or the Tasks app, our support pages for each—Planner here and Tasks app here—can help get you started.

by Contributed | Mar 31, 2021 | Technology


Authors: Lukas Cerny, Rui Zhou, Zhongyuan Li, Karish Singh, Denoy Hossain, Xin Deik Goh

Sponsor: Prof. Joseph Connor

Supervisors: Dr. Dean Mohamedally, Dr. Emmanuel Letier, UCL

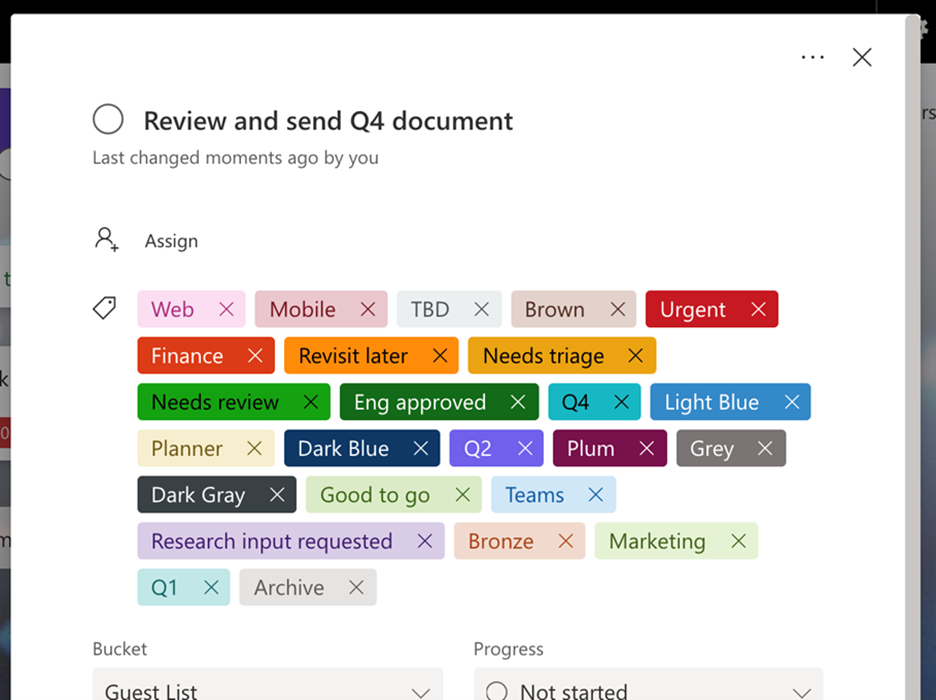

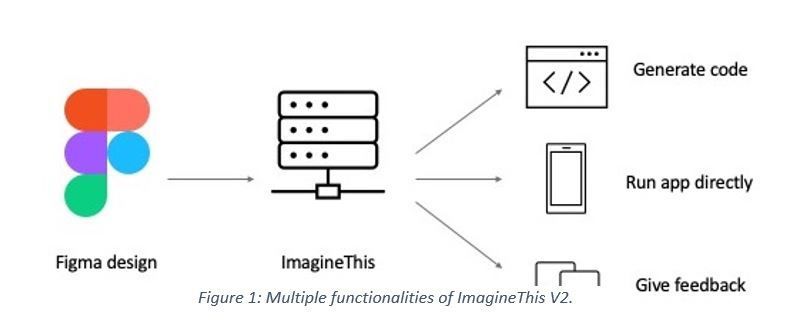

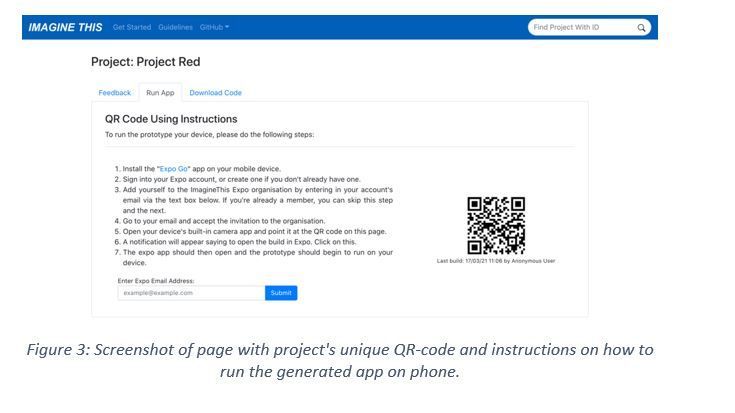

ImagineThis is a multi-functional platform on Azure for building, testing and running early-stage designs of applications without the need to write any code. It converts a wireframe sketch design from Figma into code and publishes it in a way that lets anyone run the generated app on their iOS or Android device merely by scanning a unique QR code. Version 1 was developed by a group of UCL students as part of a summer 2020 UCL IXN project. We have substantially expanded the capabilities in Version 2 with our Azure implementation.

Demo

Introduction

Early-stage application prototype testing is one of the most important phases of software product development. It can reveal flawed assumptions or incorrect requirements and thus save lots of time and money. App designers working with clinical trusts in the NHS currently face a problem: developing healthcare applications takes much longer than desired. This matters especially in the healthcare sector, where shorter product delivery times can lead to more lives being improved or potentially saved.

One cause of such delays is a general lack of developers and software engineers at the NHS. Another is the length of tender processes, in which external companies demonstrate their proposals for apps and the NHS has to decide which one to go with. To reduce the overall development time, our partners within the NHS commissioned a tool that enables rapid design prototypes to generate app templates on mobile devices.

Our solution

ImagineThis version 2 is a multi-functional platform for building and testing early-stage designs of applications. It works with the well-known commercial design platform Figma, through which users create designs of applications. Using Figma’s API, ImagineThis fetches a JSON file of the particular design the user wishes to build and converts it into React-Native source code. React-Native was chosen for its cross-platform nature, meaning it can run on both iOS and Android devices. The auto-generated codebase contains all necessary files and components, which developers can download as a ZIP file so that they can continue working on it.

This code generation feature can save developers lots of time, as they no longer need to set up the whole codebase structure or write code from scratch.

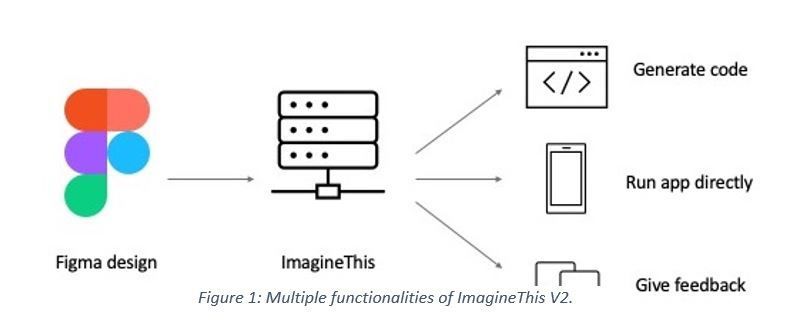

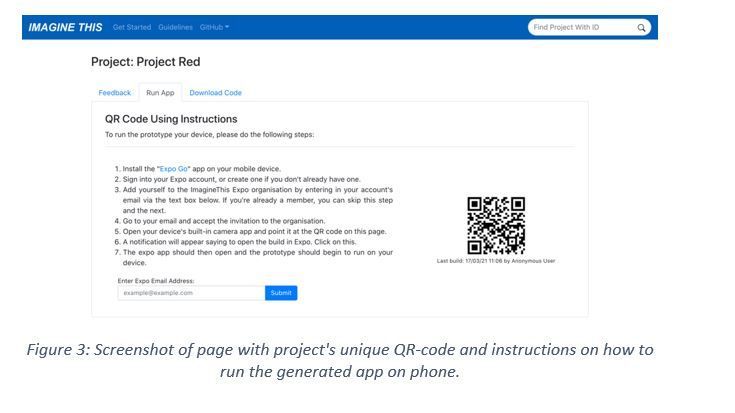

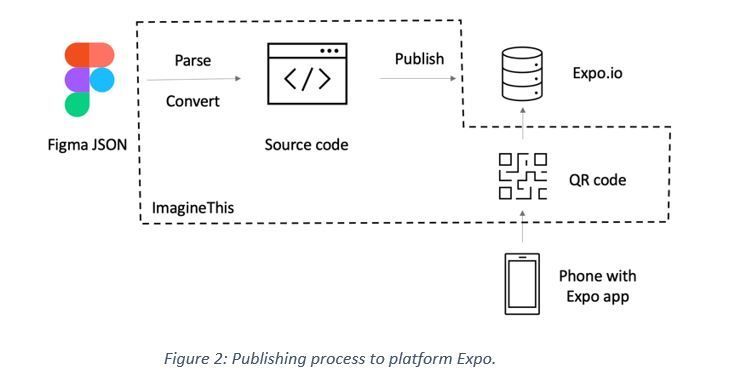

On top of that, after the code generation succeeds, ImagineThis publishes the app to a platform called Expo: an external commercial system that simplifies the application development process. Expo has a mobile client, Expo Go, through which developers can easily test apps on their phones. We use Expo exactly for this purpose.

Furthermore, after ImagineThis publishes the generated source code to Expo, it displays a project-specific QR code that users can scan to open the Expo Go client, where they run the published application directly on their phone without any need for app stores or installations. This whole process works automatically and seamlessly, so building and running an app on a user’s phone is just a matter of a few clicks, with no need for any coding at all! Figure 2 below graphically illustrates this process.

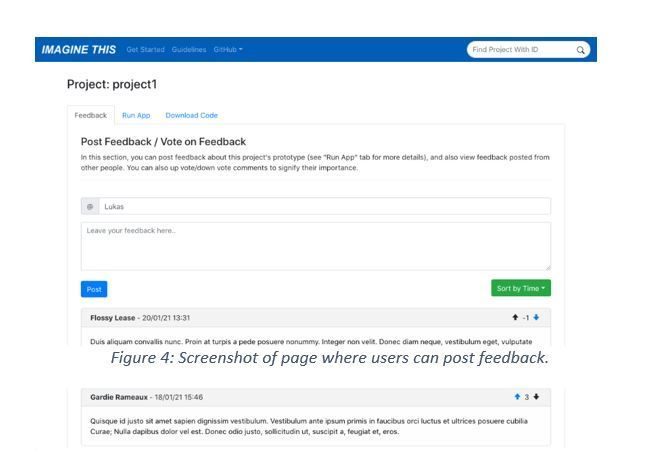

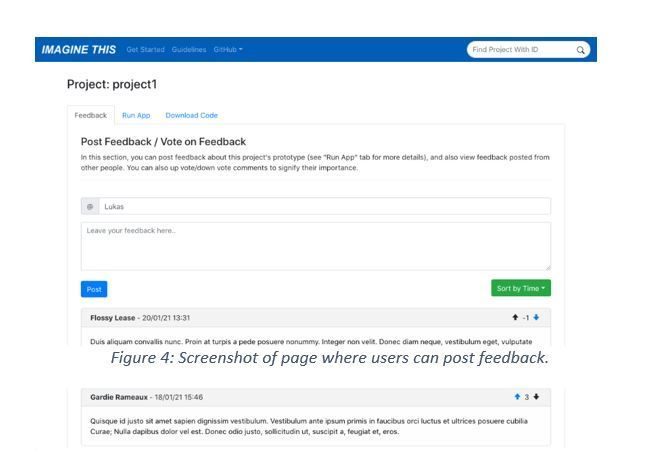

Finally, after users test the application, they can post feedback to ImagineThis and upvote or downvote feedback from other users. This information is incredibly useful for designers to make adjustments and for clinical study staff in the NHS to understand the opinion of end-users.

Architecture

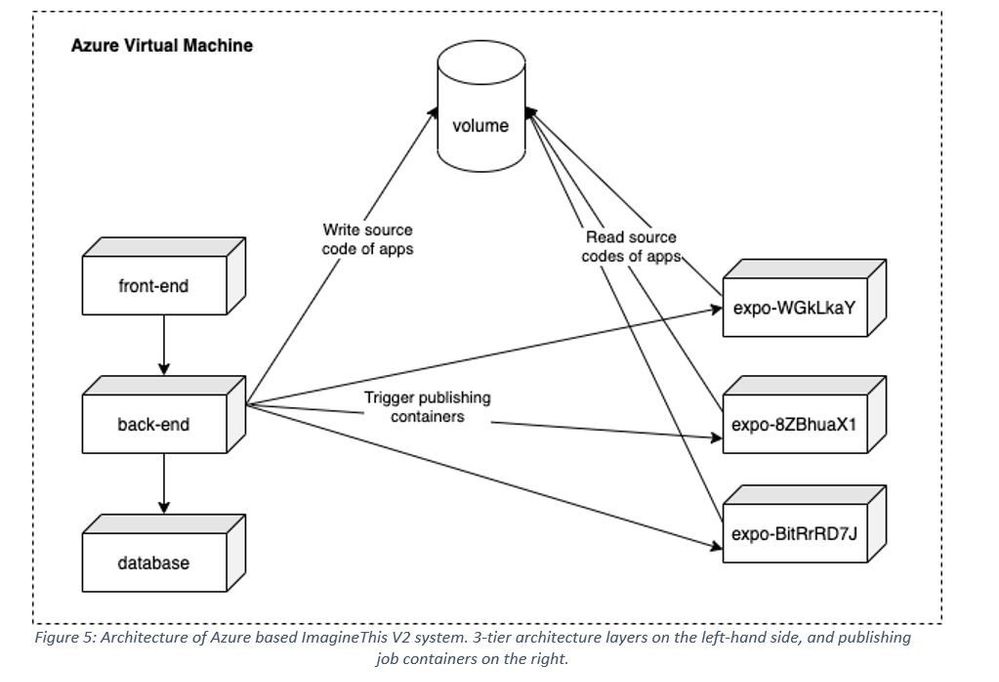

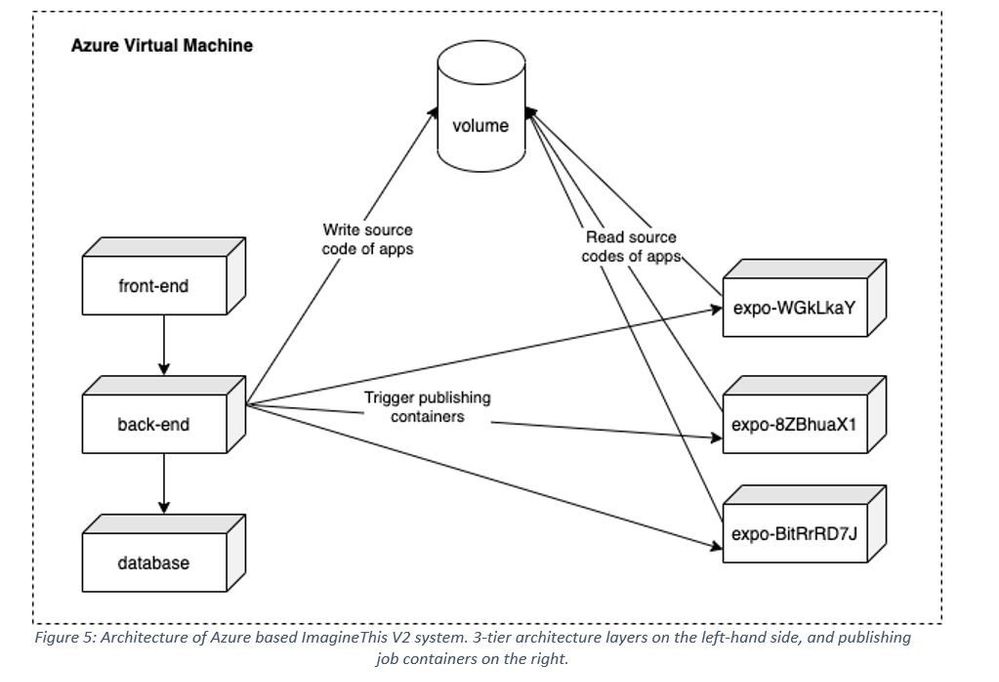

We decided that a classic 3-tier layered architecture on Azure best meets our requirements. Firstly, there is a web user interface, the front-end layer, implemented with ReactJS and using React Hooks for global state management. Secondly, there is the back-end, the business logic layer, which performs the conversion of the Figma design to React-Native source code and also communicates with the database.

The back-end is a RESTful API written in Java using the Spring Boot framework. We also use technologies such as MyBatis for mapping database records to Java objects, Swagger for testing and documenting the RESTful API, and JaCoCo for analysing the test suite code coverage, which we aim to get above 50%. We even integrated JaCoCo into our continuous integration (CI) pipeline, so that we can view the code coverage metric continuously.

Lastly, there is a PostgreSQL database in the persistence layer, serving as the data storage for feedback and project data.

Code conversion

Although the code conversion functionality was already implemented by the previous group in ImagineThis V1, we’ll briefly describe how it works. ImagineThis’ back-end queries the Figma API for a large JSON file representing the whole design. This, of course, requires authentication, so users have to authenticate themselves either by entering their Figma account token into ImagineThis, or through the OAuth 2.0 protocol.

The Figma design has to obey certain rules (e.g. naming conventions), so that ImagineThis can interpret what individual components are; for example, we want to differentiate a button from an input field. Using these rules, ImagineThis parses the JSON file with the Gson deserialization library for Java and produces a tree-like object with a list of FigmaComponents. This is our abstract representation of the Figma design. We map each of these FigmaComponents one-to-one onto ReactComponents, which are responsible for producing the application code. We consciously made the distinction between the two in order to separate concerns and responsibilities, to improve the code’s testability and also to make the system extensible to new languages (like Kotlin) or design platforms.
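The two-stage conversion described above can be sketched in a few lines. The real back-end is written in Java with Gson; the Python below is only an illustration of the idea, and the naming convention (`kind/label` in the node name), the `RENDERERS` table and the sample design are hypothetical, not the rules ImagineThis actually uses.

```python
from dataclasses import dataclass


@dataclass
class FigmaComponent:
    """Abstract representation of one recognised design node."""
    kind: str   # e.g. "button", "input", "text"
    label: str


# Stage 2: one renderer per component kind produces React Native markup.
RENDERERS = {
    "button": lambda c: '<Button title="' + c.label + '" onPress={() => {}} />',
    "input": lambda c: f'<TextInput placeholder="{c.label}" />',
    "text": lambda c: f"<Text>{c.label}</Text>",
}


def parse_design(node: dict) -> list[FigmaComponent]:
    """Stage 1: walk the design tree and classify nodes by their name."""
    components = []
    # Hypothetical convention: a node named "button/Submit" is a button.
    kind, _, label = node.get("name", "").partition("/")
    if kind in RENDERERS:
        components.append(FigmaComponent(kind, label))
    for child in node.get("children", []):
        components.extend(parse_design(child))
    return components


def to_react_native(component: FigmaComponent) -> str:
    """Map one FigmaComponent onto its generated code."""
    return RENDERERS[component.kind](component)


if __name__ == "__main__":
    design = {"name": "Login page", "children": [
        {"name": "text/Welcome"},
        {"name": "input/Email"},
        {"name": "button/Submit"},
    ]}
    for component in parse_design(design):
        print(to_react_native(component))
```

Keeping the parsed representation separate from the renderers is what would allow another output language to be added by supplying a second renderer table, without touching the parsing step.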

Docker containers

We decided to use Docker containers for running each of the layers mentioned previously. Docker gave us many valuable advantages. Firstly, it vastly simplified deployment. Since we use docker-compose to orchestrate multiple containers, deployment is just a matter of running a few shell commands.

Secondly, Docker increases flexibility as it works across different operating systems and environments. Deployment was practically the same whether we ran the whole system locally on our laptops, or on the Azure production server. It will be the same even on other cloud providers as well.

Thirdly, Docker is resource efficient, as we can run all layers on just one virtual machine (VM). This is how we cut the client’s costs by half: instead of running 2 VMs separately, one for the front-end and the other for the back-end, we run all layers on just one VM. The whole system can also scale horizontally merely by adding extra containers and VMs.

Finally, we used Docker containers to facilitate the publishing process. The next section describes how this works.

Figure 5: Architecture of Azure based ImagineThis V2 system. 3-tier architecture layers on the left-hand side, and publishing job containers on the right.

Publishing process

As already described, after ImagineThis generates the source code, it builds and publishes the app to Expo. The publishing process takes quite a while, and the only interface Expo supports for it is the Expo CLI. Therefore, we needed a way to run this asynchronously and in an environment that has access to a shell.

Rather than using our back-end Java server for this, we decided that spinning up a new Docker container to perform the job would be a better solution. We set up an image with a Dockerfile that runs a shell script, which builds and publishes the generated app. The advantages of using Docker containers include the ability for jobs to run simultaneously and in isolation, and an architecture that scales easily. Moreover, the shell script that those containers run is trivial: we just copy the generated files from a volume and run the Expo command:

```shell
# Copy generated app source code to this directory
# Note: /usr/src/app is volume shared with backend container which generates code there
cp -r /usr/src/app/$PROJECT_ID/* .
expo publish
```

We use a Docker volume to pass data between containers. As Figure 5 shows, the back-end container writes the generated React Native source code files into the volume. The Expo publishing containers mount that volume and copy the files into their own directory (as shown in the code snippet). From there they run the Expo command to build and publish the app.

Furthermore, we use Docker as a synchronization primitive. Since container names must be unique, we name these job containers imaginethis-expo-{project-id} (in Figure 5 just expo-{project-id} for brevity). Only one container with a given name can exist at a time, so Docker prevents the back-end from triggering another job that publishes the same project.
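To make the naming trick concrete, here is a minimal sketch of how a back-end might assemble the `docker run` command for a publishing job. It is Python rather than the Java used by ImagineThis, and the image name `imaginethis-expo`, the volume name `generated-code`, and both function names are assumptions for illustration; only the container name pattern, the `/usr/src/app` mount point, and the `PROJECT_ID` variable come from the description above.

```python
from typing import List


def job_container_name(project_id: str) -> str:
    """Container name that doubles as a per-project lock."""
    return f"imaginethis-expo-{project_id}"


def publish_command(
    project_id: str,
    image: str = "imaginethis-expo",   # hypothetical image name
    volume: str = "generated-code",    # hypothetical volume name
) -> List[str]:
    """Build the argument list for starting one publishing job.

    --name makes Docker refuse a second container for the same project
    while the first one still exists; --rm removes the container when the
    job exits, which frees the name for the next publish of that project.
    """
    return [
        "docker", "run", "--detach", "--rm",
        "--name", job_container_name(project_id),
        "--volume", f"{volume}:/usr/src/app",
        "--env", f"PROJECT_ID={project_id}",
        image,
    ]


cmd = publish_command("abc123")
```

Passing `cmd` to something like `subprocess.run(cmd, check=True)` would start the job. If a container with that name already exists, Docker exits with an error, which the caller can interpret as "a publish for this project is already in progress".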

Future work

There are several directions in which we see ImagineThis could continue to grow. One is to improve the code generation process by using multi-threading. Currently, the conversion from a Figma design into React Native code runs sequentially in a single thread. However, wireframes (application pages) could be converted in parallel, which would considerably reduce the conversion time.
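The shape of such a change is small if each wireframe can be converted independently. The sketch below uses a thread pool in Python to show the pattern; `convert_wireframe` is a stand-in for the real per-page conversion, and the worker count is an arbitrary assumption. In the Java back-end the equivalent would be an executor service, and note that in CPython threads mainly help with I/O-bound work.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List


def convert_wireframe(name: str) -> str:
    """Stand-in for converting one wireframe into a React Native source file."""
    return f"// {name}.js\nexport default function {name}() {{ return null; }}"


def convert_all(wireframes: List[str], workers: int = 4) -> List[str]:
    """Convert all wireframes concurrently, keeping the input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in the order of the inputs,
        # regardless of which conversion finishes first.
        return list(pool.map(convert_wireframe, wireframes))


pages = convert_all(["Home", "Login", "Settings"])
```

This only works if the per-page conversion does not share mutable state; any shared structures (for example a registry of generated components) would need to be made thread-safe first.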

Implementing an authentication system will be an essential improvement. Users will have to sign up and log in to ImagineThis, which will let them access only those projects they are authorised for (currently all users can access all projects). Finally, we want to use Azure Kubernetes Service (AKS) as the orchestration engine for Docker containers.

GitHub Repo

For anyone interested, our code is publicly available on GitHub!

by Contributed | Mar 31, 2021 | Technology


This blog is part three of a three-part series focused on business email compromise.

In the previous two blogs in this series, we detailed the evolution of business email compromise attacks and how Microsoft Defender for Office 365 employs multiple native capabilities to help customers prevent these attacks. In Part One, we covered some of the most common tactics used in business email compromise attacks, and in Part Two, we dove a little deeper into the more advanced attacks. The BEC protections offered by Microsoft Defender for Office 365, as referenced in the previous two blogs, have been helping keep Defender for Office 365 customers secure across a number of different dimensions. However, to fully appreciate and understand the unique capabilities Microsoft offers, we need to take a step back.

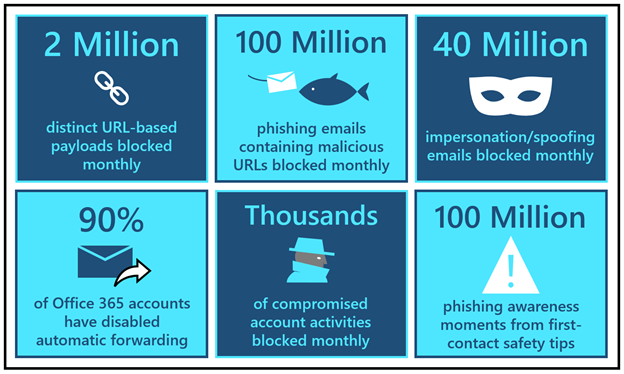

Unparalleled scale

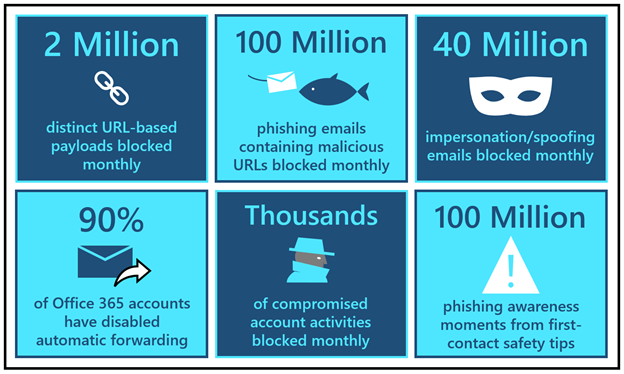

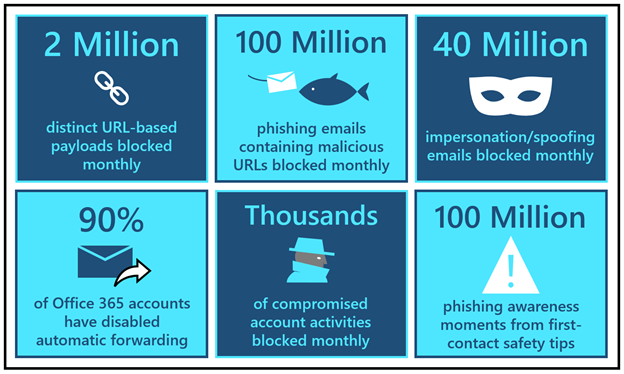

When we talk to customers about Microsoft Defender for Office 365, we always mention not only the size of our service, but also the volume of data points we generate and collect throughout Microsoft. Together, these help us responsibly build industry-leading AI and automation. Here are a few data points that can help put this into perspective:

- Every month, our detonation systems detect close to 2 million distinct URL-based payloads that attackers create to orchestrate credential phishing campaigns. Each month, our systems block over 100 million phishing emails that contain these malicious URLs.

- Every month, we detect and block close to 40 million emails that attempt to leverage domain spoofing, user impersonation, or domain impersonation – techniques that are widely utilized in business email compromise attacks.

- Looking further into domain spoofing data, we observe that the majority of domains that send mail into Office 365 do not have valid DMARC enforcement. That leaves them open to spoofing, which is why the Spoof Intelligence capability (as discussed in Part One) adds such a strong defense layer.

- In the last quarter, we rolled out new options in the outbound spam policy that have helped customers disable automated forwarding rules across 90% of Office 365 email accounts to further disrupt BEC attack chains.

- Additionally, our compromise detection systems are now flagging thousands of potentially compromised accounts and suspicious forwarding events. As we covered in our second blog, account compromise is a tactic used frequently in multi-stage BEC attacks. Learn more about how Defender for Office 365 automatically investigates compromised user accounts.

- Just in the last quarter, we have seen many customers implement “first-contact safety tips”, which have generated over 100 million phishing awareness moments. Learn more about first-contact safety tips.

Figure 1: BEC by the numbers


Artificial intelligence meets human intelligence

At Microsoft, we’re deeply focused on simplifying security for our customers, and we heed our own advice. We build security automation solutions that eliminate the noise and allow security teams to focus on the more important things. Our detection systems are being constantly updated through automated intelligence harnessed through trillions of signals, and this helps us focus our human intelligence on diving deep into the things that help improve customer protection. Our Microsoft 365 Defender Threat Research team leverages these signals to track actors, infrastructure, and techniques used in phishing and BEC attacks to ensure Defender for Office 365 stays ahead of current and future threats.

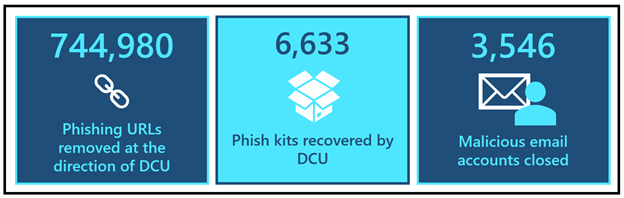

Leading the fight against cybercrime

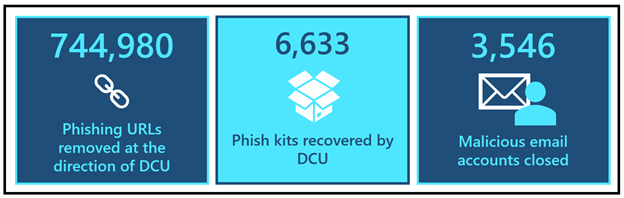

Outside of the product, we also partner closely with the Digital Crimes Unit at Microsoft to take the fight to criminal networks. Microsoft’s Digital Crimes Unit (DCU) is recognized for its global leadership in using legal and technical measures to disrupt cybercrime, including attacks like BEC. By targeting the malicious technical infrastructure used to launch cyberattacks, DCU diminishes the capability of cybercriminals to engage in nefarious activity. In 2020, DCU directed the removal of 744,980 phishing URLs and recovered 6,633 phish kits which resulted in the closure of 3,546 malicious email accounts used to collect stolen customer credentials obtained through successful phishing attacks.

Figure 2: DCU by the numbers


To disrupt cybercriminals taking advantage of the COVID-19 pandemic to deceive victims, in mid-2020, the Digital Crimes Unit took legal action in partnership with law enforcement to help stop phishing campaigns using COVID-19 lures. Additionally, with the help of our unique civil case against COVID-19-themed attacks, DCU obtained a court order that proactively disabled malicious domains owned by criminals. Read more about this here.

The DCU continues to leverage its expertise and unique view into online criminal networks to uncover evidence that informs criminal referrals to appropriate law enforcement agencies around the world, which are prioritizing BEC because it is one of the costliest cybercrime attacks in the world today. In fact, since we launched this blog series, the FBI has released its 2020 Internet Crime Report, which contains updated statistics on BEC-related losses.

To learn more about DCU, take a look at a collection of articles here. You can also check out a recent episode of our Security Unlocked podcast where Peter Anaman, a Director and Principal Investigator for DCU, discusses what it’s like to investigate these BEC attacks.

Reducing the threat of business email compromise

We’ve covered quite a bit of content in this series and it feels only appropriate that we summarize the most important things that you can do to prevent BEC attacks in your environment. We’ve compiled these recommendations from a variety of sources, including industry analysts. The good news is that with Microsoft Defender for Office 365, you can now have one integrated solution that helps you easily adopt these recommendations.

Upgrade to an email security solution that provides advanced phishing protection, business email compromise detection, internal email protection, and account compromise detection

In the second blog in this series, we covered the new ways in which attackers orchestrate these dangerous attacks, which are becoming increasingly difficult to detect with legacy email gateways or point solutions. Defender for Office 365 provides a modern, end-to-end, compliant protection stack that covers advanced credential phishing protection, business email compromise detection, internal email filtering, suspicious forwarding detection, and account compromise detection. With Microsoft Defender for Office 365, you can detect these threats in your Office 365 environment without sending data out of your tenant, making it one of the simplest and most compliant ways to protect Office 365.

Complement email security with user awareness & training

With attacks evolving every day, it’s critical that we not only build tools to prevent attacks, but also that we train users to spot suspicious messages or indicators of malicious intent. The most effective way to train your users is to emulate real threats with intelligent simulations and engage employees in defending the organization through targeted training. With Defender for Office 365 we now provide rich, native, user awareness and training tools for your entire organization. Learn more about Attack simulation training in Defender for Office 365.

Implement MFA to prevent account takeover and disable legacy authentication

Multi-factor authentication (MFA) is one of the most effective steps you can take towards preventing account compromise. As we discussed previously, new BEC attacks often rely on compromising email accounts to propagate the attack. By setting up multi-factor authentication in Microsoft 365 and implementing security defaults, you can eliminate 99.9% of account compromise attempts.

Review your protections against domain spoofing

As we shared earlier, the majority of domains that send email to Office 365 have not properly configured DMARC. Leverage Spoof Intelligence in Defender for Office 365 to protect your users from threats that spoof domains that haven’t configured DMARC. Additionally, take the necessary steps to make sure your own domains are properly configured so that they aren’t spoofed. You can implement DMARC gradually without impacting the rest of your mail flow. Configure DMARC in Microsoft 365.
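To make "properly configured" a little more concrete: a domain publishes its DMARC policy as a DNS TXT record at `_dmarc.<domain>`, and the `p` tag states what receivers should do with mail that fails the check. The sketch below is a minimal, illustrative parser in Python, not part of Defender for Office 365; the function names are hypothetical and the record is passed in as a string rather than looked up in DNS. It treats `p=quarantine` and `p=reject` as enforcement, while `p=none` only requests reports, which is why a gradual rollout commonly starts there.

```python
from typing import Dict


def parse_dmarc(record: str) -> Dict[str, str]:
    """Split a DMARC TXT record into its tag/value pairs."""
    tags: Dict[str, str] = {}
    for part in record.split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            tags[key.strip().lower()] = value.strip()
    return tags


def is_enforced(record: str) -> bool:
    """True if the record is a DMARC record whose policy rejects or quarantines."""
    tags = parse_dmarc(record)
    return tags.get("v") == "DMARC1" and tags.get("p", "").lower() in {"quarantine", "reject"}


# example.com and the report address are placeholders.
monitoring_only = "v=DMARC1; p=none; rua=mailto:dmarc@example.com"
enforced = "v=DMARC1; p=reject; rua=mailto:dmarc@example.com"
```

Here `is_enforced(monitoring_only)` is false and `is_enforced(enforced)` is true, which mirrors the difference between a domain that merely publishes DMARC and one that is protected against spoofing by it.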

Implement procedures to authenticate requests for financial or data transactions and move high-risk transactions to more authenticated systems

We use email and collaboration tools to perform a wide variety of tasks, and sharing financial data doesn’t need to be one of them. To minimize the risk of accidental sharing of sensitive information like routing numbers or credit card information, consider using Data Loss Prevention policies in Office 365. Additionally, consider establishing a process that moves these transactions to a different system, one designed specifically for this purpose.

Closing thoughts

At Microsoft, we embrace our responsibility to create a safer world that enables organizations to digitally transform. We’ve put this blog series together with the goal of reminding customers not only of the significance of BEC, but the wide variety of prevention mechanisms available to them. If you’re looking for a comprehensive solution to protect your organization against costly BEC attacks, look no further than Microsoft Defender for Office 365.

Day in and day out, we relentlessly strive to enhance our security protections to stop evolving threats. We are committed to getting our customers secure – and helping them stay secure.

Do you have questions or feedback about Microsoft Defender for Office 365? Engage with the community and Microsoft experts in the Defender for Office 365 forum.

by Contributed | Mar 31, 2021 | Technology


We continue to expand the Azure Marketplace ecosystem. For this volume, 117 new offers successfully met the onboarding criteria and went live. See details of the new offers below:

Applications

1QBit 1Qloud Optimization for Azure Quantum: The 1Qloud platform from 1QB Information Technologies enables researchers, data scientists, and developers to harness the power of advanced computing resources and novel algorithms without needing to manage complex and expensive infrastructure.


8x8 Contact Center for Microsoft Teams: Fully integrated with Microsoft Teams, 8x8 Inc.’s Contact Center allows agents to connect and collaborate with experts to resolve customer issues faster. Features include performance metrics, activity history, speech analytics, and unlimited voice calling to 47 countries.


Admix: Admix is a monetization platform for game publishers. Advertisements are integrated into gameplay, making them non-intrusive. Publishers can drag and drop billboards and TVs within their virtual reality, augmented reality, or mixed reality environments.


AlmaLinux 8.3 RC: ProComputers.com provides this minimal image of AlmaLinux 8.3 RC with an auto-extending root file system and a cloud-init utilities package. AlmaLinux is an open-source, community-driven project and a 1:1 binary-compatible fork of Red Hat Enterprise Linux 8.


Automate Repetitive Process with Just One Click: Built with Microsoft Power Automate Desktop tools, CSI Interfusion’s medical claim extraction solution allows users to pull employee medical claim history details from a webpage and download them into Excel with just one click.


AUTOSCAN Mobile Warehouse Management: Enhance your enterprise resource planning system with this modern warehouse-scanning solution from CSS Computer-Systems-Support. Whether you’re scanning barcodes, QR codes, or RFID chips, AUTOSCAN automates numerous steps throughout your warehouse and shipping process chain.


Barracuda CloudGen Access Proxy: Barracuda CloudGen Access establishes access control across users and devices without the performance pitfalls of a traditional VPN. It provides remote, conditional, and contextual access to resources, and it reduces over-privileged access and associated third-party risks.


Barracuda Forensics and Incident Response: Barracuda Forensics and Incident Response protects against advanced email-borne threats, with automated incident response, threat-hunting tools, anomaly identification, and more. Administrators can send alerts to impacted users and remove malicious emails from their inboxes with a couple of clicks.


BaseCap Data Quality Manager: BaseCap’s Data Quality Manager features intuitive data quality scoring so you can know where your organization’s data quality stands in terms of completeness, uniqueness, timeliness, accuracy, and consistency. Ensure your data is fit for your use cases.


CAP2AM – Identity and Access Management: CAP2AM from Iteris Consultoria is an identity governance and administration solution that establishes an integrated task flow for corporate systems and resources. This enables organizations to synergize their governance, usability, integration, and auditing operations.


CAP Procurement: CAP Procurement, an adaptable process management suite from Iteris Consultoria, is designed for procurement organizations. CAP Procurement’s no-code/low-code application platform fosters collaboration with business partners and suppliers through the streamlining of workflows.


CentOS 7: This image from Atomized, formerly known as Frontline, provides CentOS 7 on a virtual machine with a minimal profile. CentOS is a Linux distribution compatible with its upstream source, Red Hat Enterprise Linux.


CentOS 7 Latest: This preconfigured image from Cognosys provides a version of CentOS 7 that is automatically updated at launch. CentOS is a Linux distribution compatible with its upstream source, Red Hat Enterprise Linux.


CentOS 7 Minimal: This preconfigured image from Cognosys provides a version of CentOS 7 that has been built with a minimal profile. It contains the minimal set of packages needed to install a working system on Microsoft Azure.


CentOS 8: This image from Atomized, formerly known as Frontline, provides CentOS 8. CentOS 8 offers a secure, stable, and high-performance execution environment for developing cloud and enterprise applications.


CentOS 8 Latest: This preconfigured image from Cognosys provides a version of CentOS 8 that is automatically updated at launch. CentOS is a Linux distribution compatible with its upstream source, Red Hat Enterprise Linux.


CentOS 8 Minimal: This preconfigured image from Cognosys provides a version of CentOS 8 that has been built with a minimal profile. It contains the minimal set of packages needed to install a working system on Microsoft Azure.


CHEQ Multi-channel Internal Communication Chatbot: CHEQ is an encrypted chat platform for internal communication with your employees. CHEQ works with Microsoft Teams and Viber and provides an easy way to send company announcements, documents, links, videos, and event invitations.


Colligo Briefcase: Easily access Microsoft SharePoint on Windows devices online or offline with Colligo Briefcase, an add-in for SharePoint. It combines easy-to-use apps with central configuration and auditable metrics on user adoption.


Customer Care Virtual Agent: The EY Customer Care Virtual Agent uses a pretrained Microsoft Language Understanding (LUIS) intent classifier to indicate actions a user wants to perform. A predeveloped dialog flow can be customized to meet the client-specific needs of your industry.


Debian 9: This image from Atomized, formerly known as Frontline, provides Debian 9 on a virtual machine that’s built with a minimal profile. The image offers a stable, secure, and high-performance execution environment for all workloads.


Debian 10: This image from Atomized, formerly known as Frontline, provides Debian 10 on a virtual machine that’s built with a minimal profile. The image offers a stable, secure, and high-performance execution environment for all workloads.


Digital Finance: EY Global’s Digital Finance solution utilizes the Microsoft Dynamics 365 Finance module to streamline strategic financial processes and address customer value, user experiences, processes, technology, and operational impacts.


Digital Fitness App: Upskill your employees with PwC’s Digital Fitness app. Employees take a 15-minute assessment, which provides insights into their baseline proficiency and defines customized learning paths. The app then provides bite-sized content for them to consume to enhance their digital acumen.


DocMan – Document organization made easy: BCN Group’s DocMan, an intuitive document management solution that works with Microsoft products, allows users to easily upload documents, images, and videos and to tag content, making it easier to search, filter, and retrieve.


Document Intelligence: The EY Document Intelligence platform uses machine learning, natural language processing, and computer vision to help companies review, process, and interpret documents more quickly and cost-effectively. Extract value and insights from your structured and unstructured business documents.


Energy and Commodity Price Prediction System: CogniTensor’s Energy and Commodity Price Prediction is a combination of correlation analytics and prediction dashboards that recommend when to buy energy and other required commodities based on forecast market prices.


enVista Enspire Order Management System (OMS): enVista’s Order Management System (OMS) for retail delivers enterprise inventory visibility, optimizes omnichannel order fulfillment, and empowers associates to improve customer service and satisfaction through personalized experiences.


eSync Agent SDK: eSync from Excelfore is an embedded platform for providing over-the-air updates to multiple edge devices. Developed for automotive applications, eSync gives automakers a single server front end and allows data gathering from domain controllers, electronic control units, and smart sensors.


EY Digital Enablement Energy Platform: EY Digital Enablement Energy Platform (DEEP) supports the upstream oil and gas value chain with a common data model. DEEP breaks down silos to integrate reservoir engineering with production planning, well operations with supply chain management, and land management with decommissioning.


EY Nexus for Insurance: Built on Microsoft Azure and Microsoft Dynamics 365, EY Nexus for Insurance is a platform that enables carriers to launch new products and services, develop digital ecosystems, and automate processes across the value chain.


Full Stack Camera: Full Stack Camera from Broadband Tower Inc. features video storage and AI analysis (face identification, transcription, multilingual translation), along with video recording and operations management. This app is available only in Japanese.


Fuse Open Banking Solution: The EY Fuse Open Banking Solution from EY Global enables authorized deposit-taking institutions in Australia to comply with the Consumer Data Right legislation and manage data from consumers, regulators, and open-banking collaborators.


Gate Pass Solution: CSI Interfusion’s Gate Pass, which uses the Microsoft Power Platform, digitizes pass access processes. Via mobile devices, logistics directors and supply chain managers can monitor access to facilities and analyze their delivery fleet’s efficiency.


Guidewire on Azure – CloudConnect: Built on the Guidewire platform for property and casualty insurance, PwC’s CloudConnect is designed to help insurers grow new products and brands. CloudConnect offers a quick-start approach for preconfigured lines of business and full core processing for policy administration, billing, claims, and more.


Hardened CentOS 7: This image from Atomized, formerly known as Frontline, provides CentOS 7 on a virtual machine that’s protected with more than 400 security controls and hardened according to configuration baselines prescribed by a CIS Benchmark.


Hardened CentOS 8: This image from Atomized, formerly known as Frontline, provides CentOS 8 on a virtual machine that’s protected with more than 400 security controls and hardened according to configuration baselines prescribed by a CIS Benchmark.


Hardened Debian 9: This image from Atomized, formerly known as Frontline, provides Debian 9 on a virtual machine that’s protected with more than 400 security controls and hardened according to configuration baselines prescribed by a CIS Benchmark.


Hardened Debian 10: This image from Atomized, formerly known as Frontline, provides Debian 10 on a virtual machine that’s protected with more than 400 security controls and hardened according to configuration baselines prescribed by a CIS Benchmark.


Hardened Red Hat 7: This image from Atomized, formerly known as Frontline, provides Red Hat 7 on a virtual machine that’s protected with more than 400 security controls and hardened according to configuration baselines prescribed by a CIS Benchmark.


Hardened Red Hat 8: This image from Atomized, formerly known as Frontline, provides Red Hat 8 on a virtual machine that’s protected with more than 400 security controls and hardened according to configuration baselines prescribed by a CIS Benchmark.


Hardened Ubuntu 16: This image from Atomized, formerly known as Frontline, provides Ubuntu 16 on a virtual machine that’s protected with more than 400 security controls and hardened according to configuration baselines prescribed by a CIS Benchmark.


Hardened Ubuntu 18: This image from Atomized, formerly known as Frontline, provides Ubuntu 18 on a virtual machine that’s protected with more than 400 security controls and hardened according to configuration baselines prescribed by a CIS Benchmark.


Hardened Ubuntu 20: This image from Atomized, formerly known as Frontline, provides Ubuntu 20 on a virtual machine that’s protected with more than 400 security controls and hardened according to configuration baselines prescribed by a CIS Benchmark.


Harmonic VOS360 Live Streaming: Harmonic’s VOS360 transforms traditional video preparation and delivery into a SaaS offering, helping you quickly launch revenue-generating streaming services. Deliver content from anywhere in the world, with total geographic redundancy and operational resiliency.


IPSUM Plan: IPSUM-Plan from Grupo Asesor en Informática manages and supports strategic and operational planning along with budgeting and risk assessment. IPSUM-Plan can be adapted to all types of public institutions and private companies. This app is available only in Spanish.


IRM – Integrated Risk Management: KEISDATA’s Integrated Risk Management is a governance, risk, and compliance platform that offers real-time reporting and a performance-based enterprise risk management module. This app is available only in Italian.


Joomla with Ubuntu 20.04 LTS: This preconfigured image from Cognosys provides Joomla with Ubuntu 20.04 LTS, MySQL Server 8.0.23, Apache 2.4.41, and PHP 7.4.3. Joomla is a content management system for building websites and powerful online applications.


Kafkawize: Kafkawize, a self-service portal for Apache Kafka, simplifies Kafka management by assigning ownerships to topics, subscriptions, and schemas. Kafkawize requires both UI API and Cluster API applications to run.


Kx kdb+ 4.0: kdb+ is a high-performance time-series columnar database designed for rapid analytics on large-scale datasets. kdb+ on Microsoft Azure allows you to efficiently run your time-series analytics workloads.


Lynx MOSA.ic for Azure: Lynx MOSA.ic from Lynx Software Technologies acts as a bridge for wired and wireless networks used in industrial environments. With seamless connectivity, Lynx MOSA.ic enables analytics, AI, and update capabilities to be extended to manufacturing and logistics facilities.


ManageEngine PAM360 20 admins, 50 keys: ManageEngine PAM360, a unified privileged access management solution, allows password administrators and privileged users to gain granular control over critical IT assets, such as passwords, SSH keys, and license keys.


Medxnote bot: Designed for Microsoft Teams, Medxnote gives frontline healthcare workers, such as doctors and nurses, their own personal robotic assistant that connects them to any clinical data at the point of care. It also includes a secure, HIPAA-compliant healthcare messaging and communications platform.


Migesa Cloud Voice: This solution allows your organization to connect and optimize its telecommunications infrastructure through the integration of leading information technology and communications platforms in the market. This app is available only in Spanish.


officeatwork | Slide Chooser User Subscription: Kickstart your presentation with Slide Chooser by putting together a presentation based on the most up-to-date slides served to you directly in Microsoft PowerPoint on any device or platform. Simply drag and drop your curated slides into your slide libraries stored in Microsoft Teams or Microsoft SharePoint.


Officevibe for Office 365: Officevibe gives employees a space to tell their managers anything, then empowers managers to respond and act. Weekly surveys, anonymous feedback, and smarter one-on-ones give team managers a full picture of their employees’ needs, strengths, and pains. Offer the support that will help your people thrive.


Prefect: Prefect Cloud’s beautiful UI lets you keep an eye on the health of your infrastructure. Stream real-time state updates and logs, kick off new runs, and receive critical information exactly when you need it. Use Prefect Cloud’s GraphQL API to query your data any way you want. Join, filter, and sort to get the information you need.


PRIME365 Cocai Retail: This solution from Var Group is a modern and scalable Var Prime application that can cover all phases of the sales process. It’s adaptable to all chain stores and interfaces with standard connectors to Microsoft Dynamics 365. This app is available only in Italian.


PrivySign: One of Indonesia’s leading digital trusts, PrivySign provides you an easy and secure way to sign documents digitally. The digital signature is created by using asymmetric cryptography and public key infrastructure, ensuring each signature is linked to a unique and verified identity.


Procurement Planning System: CogniTensor’s Procurement Planning System is a combination of correlation analytics and prediction dashboards that are specially designed to help you make data-driven decisions for choosing the best supplier based on past performance and forecast analysis.


Proof of Value Automator for Microsoft Azure Sentinel: This platform from Satisnet configures Microsoft Azure Sentinel through a wizard so you can evaluate it in your business environment. Satisnet’s offer includes a cost modeler, support services, and a Microsoft 365-integrated email threat-hunting tool.

Pure Cloud Block Store (subscription): This is Pure’s state-of-the-art software-defined storage solution delivered natively in the cloud. It provides seamless data mobility across on-premises and cloud environments with a consistent experience, regardless of where your data lives – on-premises, cloud, hybrid cloud, or multiple clouds.


Red Hat 7: This image from Atomized, formerly known as Frontline, provides Red Hat Enterprise Linux (RHEL) 7. RHEL is an open-source operating system that serves as a foundation for scaling applications and introducing new technologies.


Red Hat 8: This image from Atomized, formerly known as Frontline, provides Red Hat Enterprise Linux (RHEL) 8. RHEL is an open-source operating system that serves as a foundation for scaling applications and introducing new technologies.


Skribble Electronic Signature: Use Skribble to electronically sign documents in accordance with Swiss and European Union law. Skribble integrates with Microsoft OneDrive for Business and enables companies, departments, and teams to sign documents directly from OneDrive and Microsoft SharePoint Online.


Smarsh Cloud Capture: This cloud-native solution captures electronic communications for regulatory compliance. With Smarsh, financial services organizations can utilize the entire suite of productivity and collaboration tools from Microsoft with a fully compliant, cloud-native capture solution.


Smart Green Drivers: Say hello to reliable transport emissions data and goodbye to manual quarterly and annual greenhouse gas data collection. Empower all your drivers to discover the impact of their behavior with Smart Green Drivers. Get the ability to be carbon-neutral as you work toward net zero.

|

|

Structured Data Manager (SDM): This solution enables the discovery, analysis, and classification of data and scanning for personal and sensitive data in any database accessible through JDBC. SDM automates application lifecycle management and structured data optimization by relocating inactive data and preserving data integrity.

|

|

Tartabit IoT Bridge: The Tartabit IoT Bridge provides rapid integration between low-power wide area network devices and the Microsoft Azure ecosystem. The IoT Bridge supports connecting cellular devices via LightweightM2M and CoAP. Additionally, it supports numerous unlicensed wireless technology providers.

|

|

TekAxiom Expense Management: Effectively manage technology expenses, accurately allocate costs, and automate payment management with TekAxiom. TekAxiom provides a cloud-based platform that delivers a customer-specific modular structure. It focuses on technology spend management, fixed and mobile telephony, and more.

|

|

Tetrate Service Bridge: Tetrate Service Bridge is a comprehensive service mesh management platform for enterprises that need a unified and consistent way to secure and manage services and traditional workloads across complex, heterogeneous deployment environments. Get a complete view of all your applications with Tetrate Service Bridge.

|

|

Timesheet System: This is a timesheet solution from CSI Interfusion for your Microsoft Office 365 environment. The solution aims to provide quick approvals, insightful reports, integration with upstream or downstream systems, and self-service that can maximize process efficiency.

|

|

Ubuntu 16: This image from Atomized, formerly known as Frontline, provides Ubuntu 16. Ubuntu 16 offers a secure, stable, and high-performance execution environment for developing cloud and enterprise applications.

|

|

Ubuntu 18: This image from Atomized, formerly known as Frontline, provides Ubuntu 18. Ubuntu 18 offers a secure, stable, and high-performance execution environment for developing cloud and enterprise applications.

|

|

Ubuntu 18.04 LTS Minimal: This preconfigured image from Cognosys provides a Minimal version of Ubuntu 18.04 LTS. The unminimize command will install the standard Ubuntu Server packages if you want to convert a Minimal instance to a standard environment for interactive use.

|

|

Ubuntu 20: This image from Atomized, formerly known as Frontline, provides Ubuntu 20. Ubuntu 20 offers a secure, stable, and high-performance execution environment for developing cloud and enterprise applications.

|

|

Vigilo Ondehub: Get a management information system with tools to efficiently manage daily life in education. Vigilo will deploy a highly scalable open platform for school data management. It ensures interoperability and security, introduces artificial intelligence, breaks vendor lock-in, and opens the platform to services from suppliers.

|

|

Virsae Service Management for UC & Contact Center: Virsae’s cloud-based analytics and diagnostics for unified communications (UC) and contact center platforms put you in the picture to keep UC running at peak performance. Go beyond simple monitoring with proactive fixes and system foresight to resolve up to 90 percent of issues.

|

|

Zammo AI SaaS: This user-friendly platform gets your business on voice platforms and allows you to easily extend your content to interactive voice response and telephone-based voice bots, as well as chatbots across many popular channels: from web and mobile to Microsoft Teams.

|

Consulting services

|

|

Adatis AI Proof of Concept: 2-Week Proof of Concept: Get an insight into the potential of AI for your organization so you can begin your journey and realize benefits in the short term. This offer from Adatis will include a findings report with a summary of next steps, including estimates of time and cost.

|

|

AI System Infrastructure: Information Services International-Dentsu Co. Ltd. will customize its AI consulting service to your company’s purpose, data, and situation, then deliver a proof of concept of an AI system. This offer is available only in Japanese. |

|

Azure Migration – Ignite and Engage: 1-Hour Briefing: Kickstart your migration journey to Microsoft Azure. In this session, Cybercom will run you through its proven migration practice that is designed to empower you with a smooth and efficient transition and transformation to the Azure cloud.

|

|

Azure Migration: 10-Week Implementation: Computacenter will provide end-to-end support on your journey to Microsoft Azure. Fully aligned to the Microsoft Cloud Adoption Framework, Computacenter offers services that assist your organization in defining the strategy, creating the plan, ensuring readiness, and more.

|

|

Azure Sentinel Workshop – 1 Day: Get an overview of Microsoft Azure Sentinel along with insights on active threats to your Microsoft 365 cloud and on-premises environments with this workshop from DynTek. Understand how to mitigate those threats using Microsoft 365 and Azure security products.

|

|

Azure Site Recovery: 2-Week Assessment: Before you start protecting VMware virtual machines using Microsoft Azure Site Recovery, get a concrete and complete picture of the expected costs with this offering from IT1. Then employ a business continuity and disaster recovery strategy that ensures your data is secure. |

|

Azure Support – CSP: Tenertech offers a fully managed service for your mission-critical Microsoft Azure environment. Its service provides easy Azure access control via Azure Lighthouse for CSP customers. Get enterprise security via Azure Sentinel as well as management and incident response.

|

|

CAI Enterprise DevOps: 6-Week Implementation: Conclusion will help you implement and realize DevOps as a Service. Switch to a sustainable way of working, based on a safe framework that uses an effective and efficient CI/CD approach. Create room for your business to innovate and respond better to the market and to customers.

|

|

Cloud Migration: Implementation: Shaping Cloud’s cloud migration strategy, planning, and delivery services are designed to support customers and deliver improvement benefits. Engagements range from an advisory or technical assurance capacity up to Shaping Cloud’s complete service.

|

|

Cloud Readiness Assessment: 2-Week Assessment: Logicalis will conduct a thorough analysis of your technology landscape and capabilities to determine what workloads should be running in the cloud. Logicalis will determine the right strategy for Microsoft Azure implementation and workload migration.

|

|

Customer Intelligence Retail: 10-Week Implementation: The Customer Intelligence Platform by ITC Infotech delivers contextual marketing and loyalty personalization with predictive modeling algorithms. Equip your marketers to perform the appropriate segmentation, persona creation, and product category analysis.

|

|

Data Estate Modernization: 10-Week Assessment: ITC Infotech will deliver real-time and predictive insights across your enterprise with platforms of intelligence for a scalable, flexible, secure data foundation that is future-ready. These services can help enterprises reduce costs by 30 percent to 50 percent.

|

|

Data Ingestion Service: 1-Hour Briefing: Adatis’ Microsoft Azure Data Ingestion framework as a service enables an easy and automated way to populate your Azure Data Lake from the myriad data sources in and around your business. Anyone you grant access can then connect to your data lake and ingest data into it.

|

|

Digital Diversity Intelligence: 7-Week Implementation: Globeteam draws insight from human behavior, facility sensors, and other data sources, then combines these with classic data sources, such as customer loyalty applications, to create business value and knowledge.

|

|

Excel to Microsoft Access – Azure: 1-Hour Assessment: IT Impact’s team will evaluate your Microsoft Excel spreadsheets and recommend the best route to take based on your security and long-term goals. During this assessment, IT Impact will discuss how your data can be migrated to Microsoft Azure SQL.

|

|

Globeteam Azure Migration: 3-Week Assessment: Globeteam will analyze your on-premises infrastructure for Microsoft Azure migration, giving you a complete view of your business case and readiness for migration to Azure. The migration assessment will be presented in a report with all the findings, an economic overview, and more.

|

|

Hybrid Integration Platform: 1-Hour Briefing: QUIBIQ will assist your company in building a modern hybrid integration platform as the basis of your digital transformation. This offer includes a one-hour briefing on Microsoft Azure Integration Services with a specialist from QUIBIQ.

|

|

Hybrid Integration Platform: 2-Hour Workshop: QUIBIQ will assist your company in building a modern hybrid integration platform as the basis of your digital transformation. This offer includes a two-hour workshop with a specialist from QUIBIQ to understand your specific integration challenges.

|

|

Iono Analytics on Azure: 2-Hour Assessment: IONO is a business intelligence service that frees users from relying on outdated data and provides them with easy-to-follow interactive reports without buying expensive software licenses. End users can create, share, and analyze dashboards, and more.

|

|

IoT Accelerator Proof of Concept: 2 Weeks: Proximus will act as your trusted advisor to help you implement an end-to-end proof of concept utilizing technologies such as Microsoft Azure IoT Hub, Azure IoT Edge, and Azure containers.

|

|

LogiGuard: 3-Week Implementation: LogiGuard from Logicalis is a security solution and approach powered by Microsoft Azure, centrally managed by Azure Sentinel. Logicalis’ approach helps customers understand their security posture and adopt modern tools to help protect their environment.

|

|

Managed Services for Azure: DXC Technology’s Managed Services for Microsoft Azure provides design, delivery, and daily operational support of compute, storage, and virtual network infrastructure in Azure. DXC will monitor and manage system software, infrastructure configurations and service consumption, and more.

|

|

Manufacturing Intelligence: 10-Week Implementation: ITC Infotech brings a bespoke AI/ML-powered intelligent platform to empower consumer packaged goods leaders to build stronger consumer connections, mutually rewarding retailer relationships, and streamlined supply chains. Define the roadmap of your platform.

|

|

MLOps Framework: 8-Week MVP Implementation: Slalom has developed a comprehensive MLOps-enabled advanced analytics framework, equipped to accelerate any machine learning initiative. Slalom’s 6 Pillar Framework is built using state-of-the-art Microsoft Azure services, catering to the full spectrum of end users.

|

|

NEC Professional Services: NEC Australia provides professional consultants with expertise across various technology streams, business areas, and industries. Among its engagements are single or multiperson contract assignments, retained assignments, and executive search.

|

|

Pega Azure Cloud Service Automation: 8-Week Implementation: Set up a Pega-based CRM platform on Microsoft Azure with a two-stage process. Achieve greater scalability and a high-availability solution on Azure while gaining control over cost, time, and implementation uncertainties.

|

|

Platform of Intelligence CPG: 10-Week Implementation: The Platform of Intelligence (PoI) offering from ITC Infotech will implement and deploy intelligence at scale to realize benefits in the consumer packaged goods industry. |

|

Professional Services – Cloud Application Services: NEC Australia can provide expertise in cloud application services, whether that be bespoke development, continuous improvement, or modernization. NEC Australia also possesses the analysis and development expertise to move your legacy applications to the cloud. |

|

Professional Services – Cloud Platform Services: NEC Australia’s Cloud Platform Services practice is a holistic offering that facilitates your cloud journey. The company’s certified consultants will start with an assessment of your infrastructure and guide you through architecture planning, service design, and more. |

|

Professional Services – Data & AI: NEC Australia’s Data and AI practice offers a rapid delivery of data analytics, IoT, and artificial intelligence. NEC Australia developers will help you adopt and integrate emerging technologies within your existing systems, realizing value in the short term with rapid development cycles. |

|

Professional Services – Modern Workplace: NEC Australia’s Modern Workplace practice focuses on user experience, process automation, and compliance by design. NEC Australia can implement intuitive, collaborative tools and supplement them with modern, cloud-first records and information management solutions. |

|