by Contributed | Apr 14, 2021 | Technology

This article is contributed. See the original author and article here.

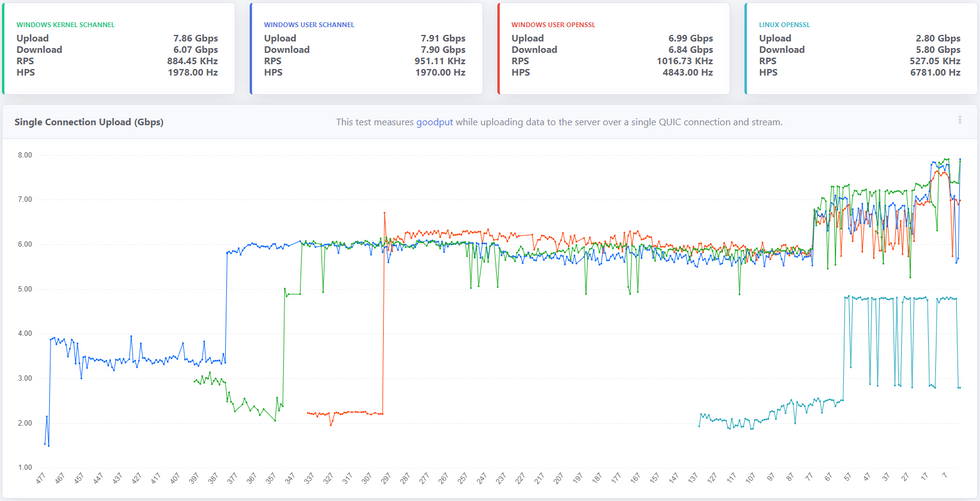

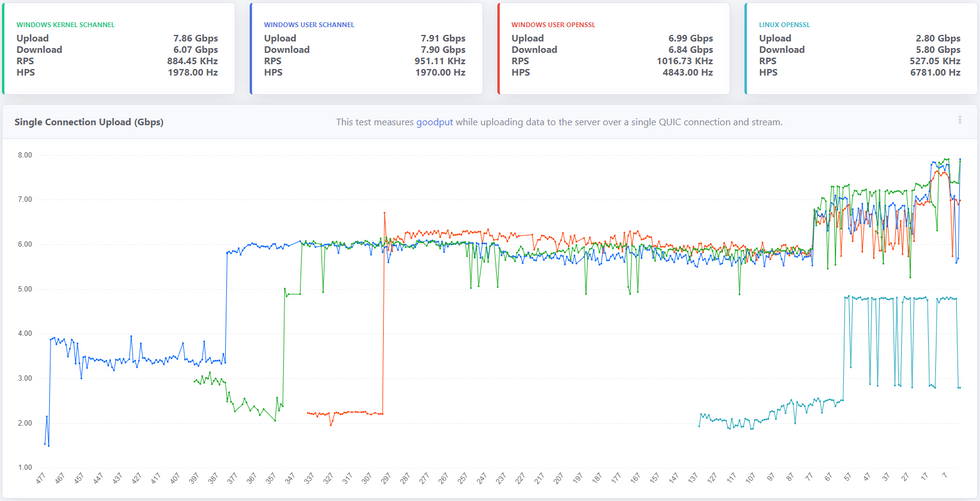

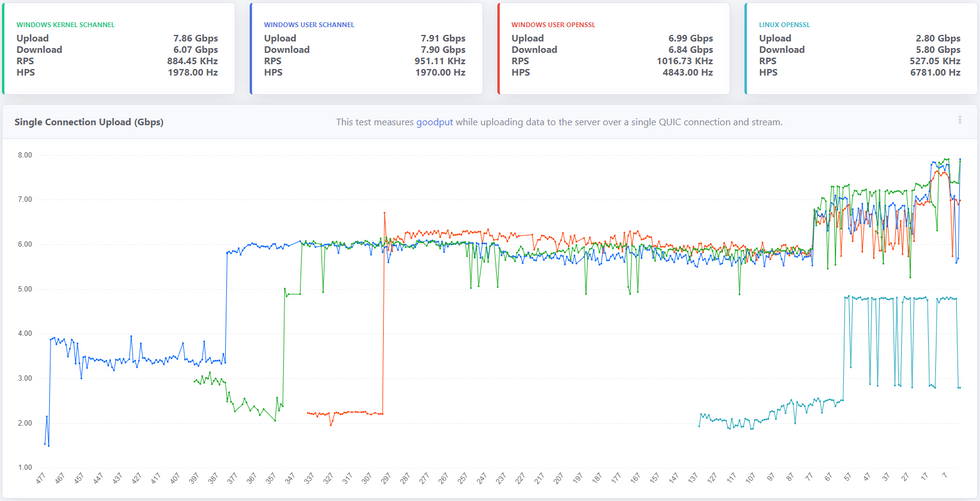

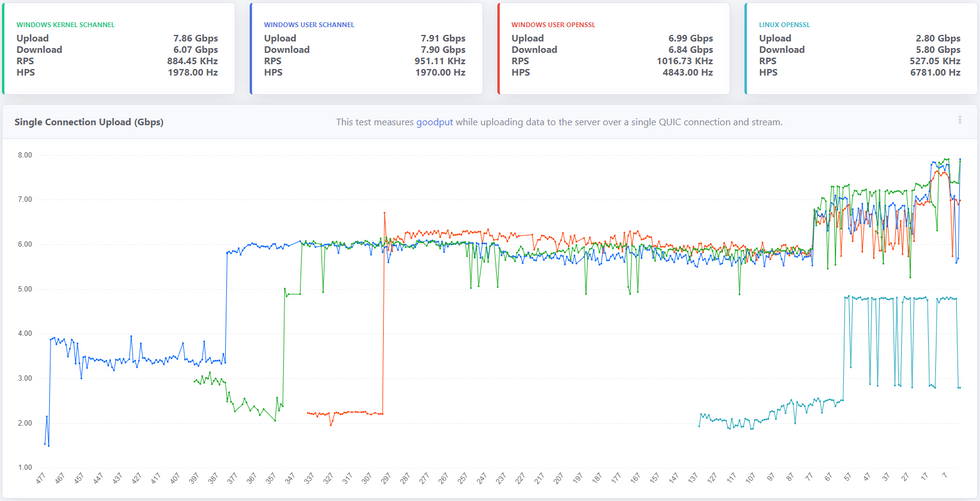

It’s been a year since we open sourced MsQuic and a lot has happened since then, both in the industry (QUIC v1 in the final stages) and in MsQuic. As far as MsQuic goes, we’ve been hard at work adding new features, improving stability and more; but improving performance has been one of our primary ongoing efforts. MsQuic recently passed the 1000th commit mark, with nearly 200 of those for PRs related to performance work. We’ve improved single connection upload speeds from 1.67 Gbps in July 2020 to as high as 7.99 Gbps with the latest builds*.

* Windows Preview OS builds; User-mode using Schannel; and server-class hardware with USO.

** x-axis above reflects the number of Git commits back from HEAD.

Defining Performance

“Performance” means a lot of different things to different people. When we talk with Windows file sharing (SMB), it’s always about single connection, bulk throughput. How many gigabits per second can you upload or download? With HTTP, more often it’s about the maximum number of requests per second (RPS) a server can handle, or the per-request latency values. How many microseconds of latency do you add to a request? For a general purpose QUIC solution, all of these are important to us. But even these different scenarios can have ambiguity in their definition. That’s why we’re working to standardize the process by which we measure the various performance scenarios. Not only does this provide a very clear message of what exactly is being measured and how, but it has also allowed us to do cross-implementation performance testing. Four other implementations (that we know of) have implemented the “perf” protocol we’ve defined.

Performance-First Design

As already mentioned above, performance has been a primary focus of our efforts. Since the very start of our work on QUIC, we’ve had both HTTP and SMB scenarios driving pretty much every design decision we’ve made. It comes down to the following: The design must be both performant for a single operation and highly parallelizable for many. For SMB, a few connections must be able to achieve extremely high throughput. On the other hand, HTTP needs to support tens of thousands of parallel connections/requests with very low latency.

This design initially led to significant improvements at the UDP layer. We added support for UDP send segmentation and receive coalescing. Together, these interfaces allow a user mode app to batch UDP payloads into large contiguous buffers that only need to traverse the networking stack once per batch, as opposed to once per datagram. This greatly increased bulk throughput of UDP datagrams for user mode.
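To make the batching idea concrete, here is a minimal, purely illustrative sketch in Python. It does not call any real segmentation-offload API, and the function names `coalesce` and `segment` are hypothetical. It assumes the constraint typical of segmentation offloads: every payload except the last fills exactly one segment.

```python
from typing import List


def coalesce(payloads: List[bytes], segment_size: int) -> bytes:
    """Pack datagram payloads into one contiguous buffer (hypothetical helper).

    Assumption: every payload except the last is exactly segment_size bytes,
    so the receiver of the buffer can split it using the size alone.
    """
    for payload in payloads[:-1]:
        if len(payload) != segment_size:
            raise ValueError("all but the last payload must fill a segment")
    if payloads and not 0 < len(payloads[-1]) <= segment_size:
        raise ValueError("last payload must be 1..segment_size bytes")
    return b"".join(payloads)


def segment(buffer: bytes, segment_size: int) -> List[bytes]:
    """Split a coalesced buffer back into per-datagram payloads."""
    return [buffer[i:i + segment_size]
            for i in range(0, len(buffer), segment_size)]
```

The application hands over one large buffer plus a segment size, so the per-call cost is paid once per batch instead of once per datagram. In a real stack the split happens lower down, ideally in the NIC.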

These design requirements have led to some significant complexity internal to MsQuic as well. The QUIC protocol and the UDP (and below) work are separated onto their own threads. In scenarios with a small number of connections, these threads generally spread to separate processors, allowing for higher throughput. In scenarios with a large number of connections, effectively saturating all the processors with work, we do additional work to improve parallelization.

Those are just a few of the (bigger) impacts our performance-driven design has had on MsQuic architecture. This design process has affected every part of MsQuic from the API down to the platform abstraction layer.

Making Performance Testing Integral to CI

Claiming a performant design means nothing without data to back it up. Additionally, we found that occasional, mostly manual, performance testing led to even more issues. First off, to be able to make reasonable comparisons of performance results, we needed to reduce the number of factors that might affect the results. We found that having a manual process added a lot of variability to the results because of the significant setup and tool complexity. Removing the “middleman” was super important, but frequent testing has been even more important. If we only tested once a month, it was next to impossible to identify the cause of any regressions found in the latest results; let alone prevent them from happening in the first place. That inevitably led to a significant amount of wasted time trying to track down the problem. All the while, we had regressed performance for anyone using the code in the meantime.

For these reasons, we’ve invested significant resources into making performance testing a first-class citizen in our CI automation. We run the full performance suite of tests for every single PR, every commit to main, and for every release branch. If a pull request affects performance, we know before it’s even merged into main. If it regresses performance, it’s not merged. With this system in place, we have pretty much guaranteed performance in main will only go up. This has also allowed us to confidently take external contributions to the code without fear of any regressions.
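The merge gate described above can be pictured as a simple comparison against a baseline. The sketch below is an assumption-laden illustration, not MsQuic's actual CI logic: the function name `is_regression` and the 5% tolerance are hypothetical.

```python
def is_regression(baseline_kbps: float, candidate_kbps: float,
                  tolerance: float = 0.05) -> bool:
    """Return True when the candidate is slower than the baseline by more
    than the allowed tolerance (hypothetical 5% default to absorb noise)."""
    if baseline_kbps <= 0:
        raise ValueError("baseline must be positive")
    return candidate_kbps < baseline_kbps * (1.0 - tolerance)
```

A pipeline could run a check like this per scenario (throughput, RPS, latency) and fail the PR when any of them returns True. Some tolerance is needed because run-to-run variance would otherwise block unrelated changes.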

Another significant part of this automation is generating our Performance Dashboard. Every run of our CI pipeline for commits to main generates a new data point and automatically updates the data on the dashboard. The main page is designed to give a quick look at the current state of the system and any recent changes. There are various other pages that can be used to drill down into the data.

Progress So Far

As indicated in the chart at the beginning, we’ve had lots of improvements in performance over the last year. One nice feature of the dashboard is the ability to click on a data point and get linked directly to the relevant Git commit used. This allows us to easily find which code change caused a given change in performance. Below is a list of just a few of the recent commits that had the biggest impact on single connection upload performance.

- d985d44 – Improves the flow control tuning logic

- 1f4bfd7 – Refactors the perf tool

- ec6a3c0 – Fix a kernel issue related to starving NIC packet buffers

- be57c4a – Refactors how we use RSS processor to schedule work

- 084d034 – Refactors OpenSSL crypto abstraction layer

- 9f10e02 – Switches to OpenSSL 1.1.1 branch instead of 3.0

- ee9fc96 – Adds GSO support to Linux data path abstraction

- a5e67c3 – Refactors UDP send logic to run on data path thread

Most of these changes came about from this simple process:

- Collect performance traces.

- Analyze traces for bottlenecks.

- Improve biggest bottleneck.

- Test for regressions.

- Repeat.

This is a constantly ongoing process to always improve performance. We’ve done considerable work to make parts of this process easier. For instance, we’ve created our own WPA plugin for analyzing MsQuic performance traces. We also continue to spend time stabilizing our existing performance so that we can better catch possible regressions going forward.

Future Work

We’ve done a lot of work so far and come a long way, but the push for improved performance is never ending. There’s always another bottleneck to improve/eliminate. There’s always a little better/faster way of doing things. There’s always more tooling that can be created to improve the overall process. We will continue to put effort into all these.

Going forward, we want to investigate additional hardware offloads and software optimization techniques. We want to build upon the work going on in the ecosystem and help to standardize these optimizations and integrate them into the OS platform and then into MsQuic. Our hope is that we will make MsQuic the first choice for customer workloads by bringing the network performance benefits QUIC promises without having to make a trade-off with computational efficiency.

As always, for more info on MsQuic continue reading on GitHub.

by Contributed | Apr 14, 2021 | Dynamics 365, Microsoft 365, Technology

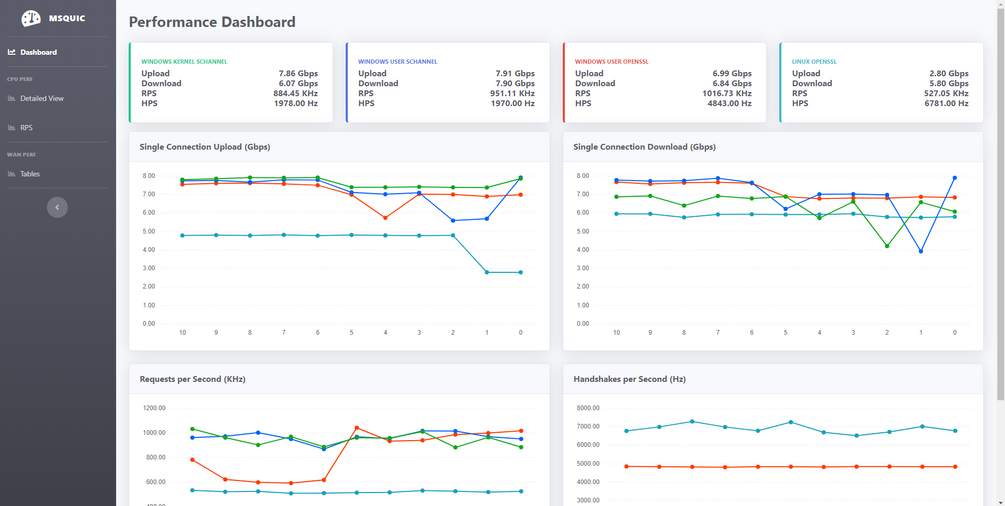

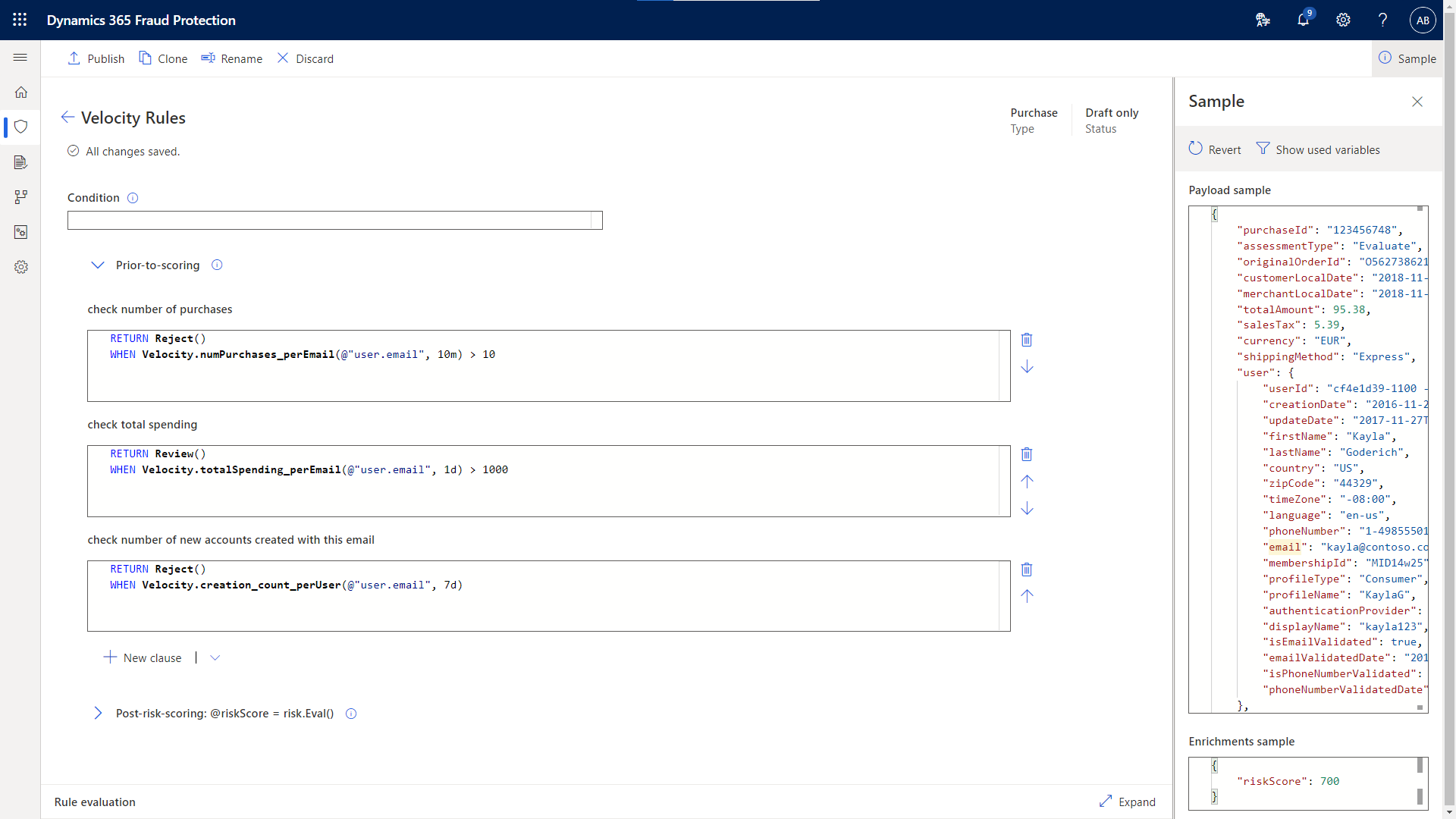

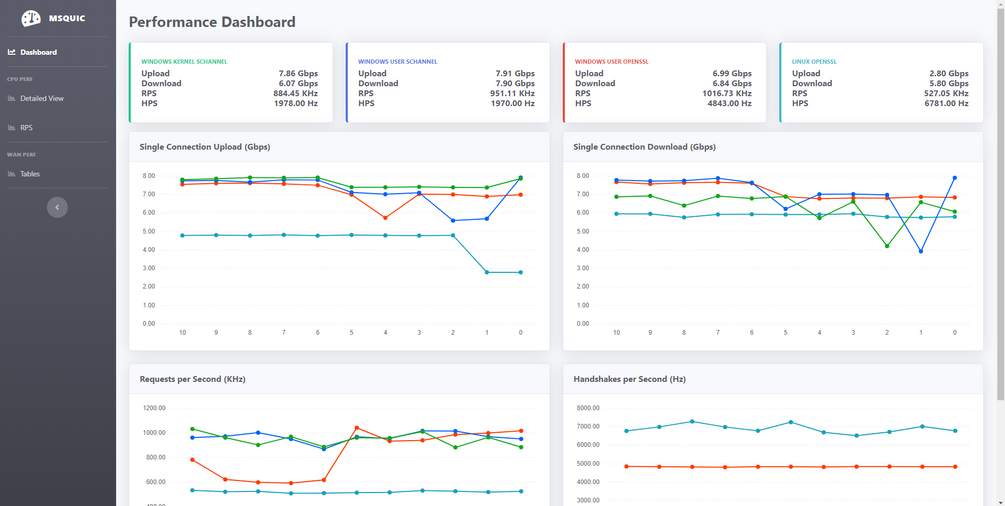

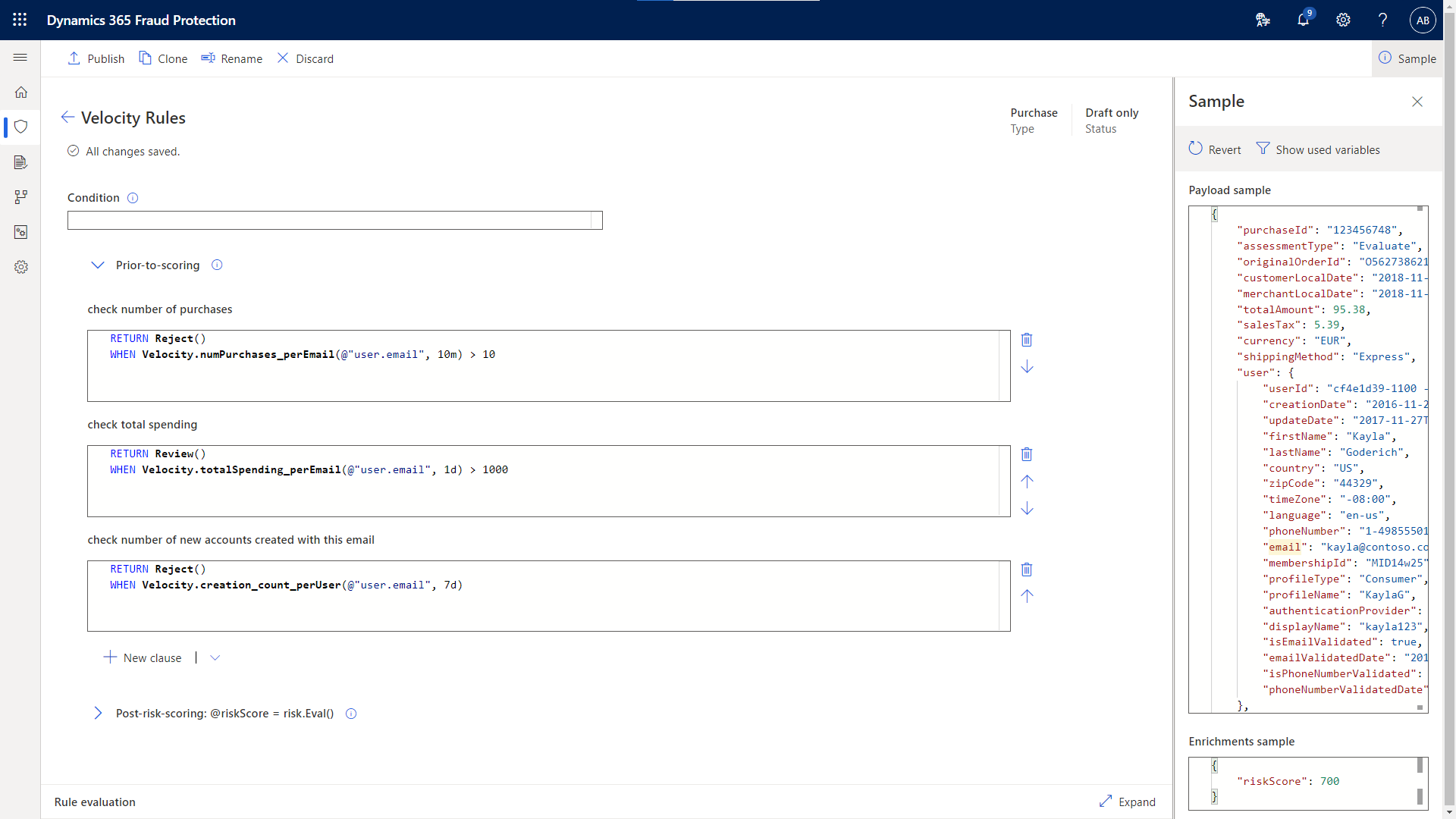

Successful fraud protection relies on a lot of information. The more insights you have into transactions and accounts, the better you will be able to detect suspicious activity. Microsoft Dynamics 365 Fraud Protection uses advanced AI models to bring together diverse sets of information into a single assessment score that indicates the overall risk of an event. Customers can create rules that threshold this score to make decisions in a manner that suits their risk appetite. However, sometimes customers also have the need to directly reason over raw attributes in their rules, for example, to detect business policy violations or to stop emerging fraud patterns specific to their business. In this preview, we are adding two features that will significantly improve the information available in the Dynamics 365 Fraud Protection rule engine: velocities and external calls.

Velocities use relationships and patterns between historical transactions to identify suspicious activity and help customers prevent loss from fraud. External calls let Dynamics 365 Fraud Protection customers ingest data from third-party information providers or from their in-house AI models. All these inputs may be needed by customers to make fully informed decisions on their events.

Identify potential fraud with velocities

How do you determine if a transaction is suspicious? In short, you have to look at the bigger picture. For example, there may be nothing suspicious about someone buying a single exercise bike online. However, if they buy fifteen exercise bikes over a short period of time, each with a different payment instrument, that might indicate possession of stolen payment information and malicious fraud. This is why monitoring the relationships and patterns between current and past transactions is essential in determining the riskiness of any given event.

Velocity detection allows customers to analyze the historical patterns of an individual or entity such as a credit card, IP address, or user email. Velocities already play a significant part in our AI-driven risk assessments, and now we are enabling our customers to define their own velocities that matter most to their business. With velocity checks, you can get the answers to questions such as: How much money has a user account spent in the last hour? How many distinct payment instruments have been used from this device in the last seven days? How many times has this user account attempted to log in during the last five minutes? Customers can then utilize these historical patterns in real-time decision-making.
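As a rough illustration of what a velocity computes, the following Python sketch counts distinct values seen for a key within a trailing time window, for example distinct payment instruments per device. It is not the Dynamics 365 Fraud Protection rule syntax. The class name `DistinctVelocity` is hypothetical, and it assumes events arrive in non-decreasing timestamp order.

```python
from collections import deque
from typing import Deque, Dict, Hashable, Tuple


class DistinctVelocity:
    """Counts distinct values seen per key within a trailing time window."""

    def __init__(self, window_seconds: float) -> None:
        self.window = window_seconds
        self._events: Dict[Hashable, Deque[Tuple[float, Hashable]]] = {}

    def record(self, key: Hashable, value: Hashable, timestamp: float) -> int:
        """Record one event and return the distinct-value count for key.

        Assumption: timestamps are non-decreasing for a given key.
        """
        events = self._events.setdefault(key, deque())
        events.append((timestamp, value))
        # Drop events that have aged out of the trailing window.
        cutoff = timestamp - self.window
        while events and events[0][0] <= cutoff:
            events.popleft()
        return len({v for _, v in events})
```

A rule could then compare the returned count against a threshold chosen for the business, such as flagging a purchase when one device has used more than a handful of distinct cards in the window.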

Some behaviors are more suspicious regardless of the business, such as hundreds of login attempts within a short interval from the same IP address. Other behaviors might be suspicious for one business, but not for another. It is not uncommon for a single customer to make two to six transactions a month at a grocery store. However, it might be more suspicious if a single customer makes two to six transactions in a month at a luxury car dealer. Velocities are an important tool for any fraud protection service, but their effectiveness depends on being able to customize them to your business.

Dynamics 365 Fraud Protection’s velocities provide customers the ability to fully customize their velocities, all the way from which attributes to monitor, to the timeframe they monitor them over, to what thresholds they want to set.

Velocities also allow customers to connect behaviors from different assessment events including account creations, account logins, and purchases. For example, a customer could block a user from logging in based on an inordinately large number of purchases made from the account in a short interval, or flag a purchase for review based on suspicious login attempts, since both velocities may be indicative of account compromise.

Make informed decisions in real-time with external calls

Until now, customers using the Dynamics 365 Fraud Protection rule engine could make real-time decisions based only on the data available within the product. This included data sent as part of the request payload, data uploaded in the form of lists, data generated by device fingerprinting, and risk assessment and bot detection scores produced by our AI models. However, sometimes customers may need additional signals from data sources outside the product to inform their decisions. Some customers may choose to partner with third-party information providers for additional data enrichment. Details such as address verification and phone reputation can help discover suspicious and fraudulent activity. Other customers may choose to utilize scores from their own in-house AI models tailored to their business. All these inputs may be important for customers to make fully informed decisions regarding their business.

Our external calls feature enables customers to bring in data from essentially any API endpoint on the web, ensuring they have full context and flexibility needed at the point of decision. This update continues to increase the power and scope of what can be done from within the Dynamics 365 Fraud Protection rules engine, allowing customers to utilize outside data when orchestrating their decisions.

Note that velocities and external calls are features made available in preview with reasonable consumption limits. In the future, we may bring these to general availability in an appropriate way.

Get started with Dynamics 365 Fraud Protection

Join the Dynamics 365 Fraud Protection Insider Program to get an early view of upcoming features and discuss best practices to combat fraud.

Sign up for a free trial of Dynamics 365 Fraud Protection to try out these new features and check out the documentation for velocities and external calls, where you can learn how to create, use, and manage these new features.

Learn more about Dynamics 365 Fraud Protection capabilities including account protection, purchase protection, and loss prevention, and check out the e-book, “Protecting Customers, Revenue, and Reputation from Online Fraud.”

Finally, you can check out all the latest product updates for Microsoft Dynamics 365 and Microsoft Power Platform.

The post Customize your protection with new features in the Dynamics 365 Fraud Protection preview appeared first on Microsoft Dynamics 365 Blog.

by Contributed | Apr 14, 2021 | Technology

Earlier this year, we announced the preview of Always Encrypted with secure enclaves in Azure SQL Database – the feature designed to safeguard sensitive data from malware and unauthorized users by enabling rich confidential queries.

Royal Bank of Canada (RBC) is one of the customers who are already using Always Encrypted with secure enclaves. For details, see Microsoft Customer Story – RBC creates relevant personalized offers while protecting data privacy with Azure confidential computing.

For more information about Always Encrypted with secure enclaves in Azure SQL Database, see:

by Contributed | Apr 14, 2021 | Technology

As a major move to the more secure SHA-2 algorithm, Microsoft will allow the Secure Hash Algorithm 1 (SHA-1) Trusted Root Certificate Authority to expire. Beginning May 9, 2021 at 4:00 PM Pacific Time, all major Microsoft processes and services—including TLS certificates, code signing and file hashing—will use the SHA-2 algorithm exclusively.

Why are we making this change?

The SHA-1 hash algorithm has become less secure over time because of the weaknesses found in the algorithm, increased processor performance, and the advent of cloud computing. Stronger alternatives such as the Secure Hash Algorithm 2 (SHA-2) are now strongly preferred as they do not experience the same issues. As a result, we changed the signing of Windows updates to use the more secure SHA-2 algorithm exclusively in 2019 and subsequently retired all Windows-signed SHA-1 content from the Microsoft Download Center on August 3, 2020.
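For readers who want to see the two families side by side, here is a small sketch using Python's standard `hashlib` module. The helper name `digests` is hypothetical. It only shows the digest-size difference between SHA-1 (160 bits) and SHA-256, a member of the SHA-2 family (256 bits), and says nothing about how Microsoft services compute hashes.

```python
import hashlib
from typing import Dict


def digests(data: bytes) -> Dict[str, str]:
    """Return hex digests of data under SHA-1 and SHA-256 (a SHA-2 variant)."""
    return {
        "sha1": hashlib.sha1(data).hexdigest(),      # 160-bit digest, 40 hex chars
        "sha256": hashlib.sha256(data).hexdigest(),  # 256-bit digest, 64 hex chars
    }
```

When checking your own tooling, look for places that hard-code a 40-character hex digest or the name "sha1", since those are the spots that typically need updating for SHA-2.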

What does this change mean?

The Microsoft SHA-1 Trusted Root Certificate Authority expiration will impact SHA-1 certificates chained to the Microsoft SHA-1 Trusted Root Certificate Authority only. Manually installed enterprise or self-signed SHA-1 certificates will not be impacted; however, we strongly encourage your organization to move to SHA-2 if you have not done so already.

Keeping you protected and productive

We expect the SHA-1 certificate expiration to be uneventful. All major applications and services have been tested, and we have conducted a broad analysis of potential issues and mitigations. If you do encounter an issue after the SHA-1 retirement, please see Issues you might encounter when SHA-1 Trusted Root Certificate Authority expires. In addition, Microsoft Customer Service & Support teams are standing by and ready to support you.

by Contributed | Apr 14, 2021 | Technology

Policy-driven governance is a cornerstone of Enterprise-Scale Landing Zones (ESLZ). It’s possible to codify corporate, industry, or country-specific governance requirements declaratively using Azure Policy. ESLZ provides 90+ custom policies that help meet the most common corporate governance requirements with a single click.

The benefits of these 90+ custom policies are documented in detail.

The following table lists these policies and the governance requirements they help enforce.

The Deny-Public-Endpoints-for-PaaS-Services policy initiative includes the following policies, each of which applies to a specific Azure service.

- Deny-PublicEndpoint-CosmosDB

- Deny-PublicEndpoint-MariaDB

- Deny-PublicEndpoint-MySQL

- Deny-PublicEndpoint-PostgreSql

- Deny-PublicEndpoint-KeyVault

- Deny-PublicEndpoint-Sql

- Deny-PublicEndpoint-Storage

- Deny-PublicEndpoint-Aks

The Deploy-Diag-LogAnalytics PolicySet helps capture logs and metrics as shown below.

| Policy Name | Log Categories | Metrics |
| --- | --- | --- |
| Deploy-Diagnostics-AA | JobLogs JobStreams DscNodeStatus | AllMetrics |
| Deploy-Diagnostics-ACI | | AllMetrics |
| Deploy-Diagnostics-ACR | | AllMetrics |
| Deploy-Diagnostics-ActivityLog | Administrative Security ServiceHealth Alert Recommendation Policy Autoscale ResourceHealth | |
| Deploy-Diagnostics-AKS | kube-audit kube-apiserver kube-controller-manager kube-scheduler cluster-autoscaler | AllMetrics |
| Deploy-Diagnostics-AnalysisService | Engine Service | AllMetrics |
| Deploy-Diagnostics-APIMgmt | GatewayLogs | Gateway Requests Capacity EventHub Events |
| Deploy-Diagnostics-ApplicationGateway | ApplicationGatewayAccessLog ApplicationGatewayPerformanceLog ApplicationGatewayFirewallLog | AllMetrics |
| Deploy-Diagnostics-Batch | ServiceLog | AllMetrics |
| Deploy-Diagnostics-CDNEndpoints | CoreAnalytics | |
| Deploy-Diagnostics-CognitiveServices | Audit RequestResponse | AllMetrics |
| Deploy-Diagnostics-CosmosDB | DataPlaneRequests MongoRequests QueryRuntimeStatistics | Requests |
| Deploy-Diagnostics-DataFactory | ActivityRuns PipelineRuns TriggerRuns | AllMetrics |
| Deploy-Diagnostics-DataLakeStore | Audit Requests | AllMetrics |
| Deploy-Diagnostics-DLAnalytics | Audit Requests | AllMetrics |
| Deploy-Diagnostics-EventGridSub | | AllMetrics |
| Deploy-Diagnostics-EventGridTopic | | AllMetrics |
| Deploy-Diagnostics-EventHub | ArchiveLogs OperationalLogs AutoScaleLogs | AllMetrics |
| Deploy-Diagnostics-ExpressRoute | PeeringRouteLog | AllMetrics |
| Deploy-Diagnostics-Firewall | AzureFirewallApplicationRule AzureFirewallNetworkRule AzureFirewallDnsProxy | AllMetrics |
| Deploy-Diagnostics-HDInsight | | AllMetrics |
| Deploy-Diagnostics-iotHub | Connections DeviceTelemetry C2DCommands DeviceIdentityOperations FileUploadOperations Routes D2CTwinOperations C2DTwinOperations TwinQueries JobsOperations DirectMethods E2EDiagnostics Configurations | AllMetrics |
| Deploy-Diagnostics-KeyVault | AuditEvent | AllMetrics |
| Deploy-Diagnostics-LoadBalancer | LoadBalancerAlertEvent LoadBalancerProbeHealthStatus | AllMetrics |
| Deploy-Diagnostics-LogicAppsISE | IntegrationAccountTrackingEvents | |
| Deploy-Diagnostics-LogicAppsWF | WorkflowRuntime | AllMetrics |
| Deploy-Diagnostics-MlWorkspace | AmlComputeClusterEvent AmlComputeClusterNodeEvent AmlComputeJobEvent AmlComputeCpuGpuUtilization AmlRunStatusChangedEvent | Run Model Quota Resource |
| Deploy-Diagnostics-MySQL | MySqlSlowLogs | AllMetrics |
| Deploy-Diagnostics-NetworkSecurityGroups | NetworkSecurityGroupEvent NetworkSecurityGroupRuleCounter | |
| Deploy-Diagnostics-NIC | | AllMetrics |
| Deploy-Diagnostics-PostgreSQL | PostgreSQLLogs | AllMetrics |
| Deploy-Diagnostics-PowerBIEmbedded | Engine | AllMetrics |
| Deploy-Diagnostics-PublicIP | DDoSProtectionNotifications DDoSMitigationFlowLogs DDoSMitigationReports | AllMetrics |
| Deploy-Diagnostics-RecoveryVault | CoreAzureBackup AddonAzureBackupAlerts AddonAzureBackupJobs AddonAzureBackupPolicy AddonAzureBackupProtectedInstance AddonAzureBackupStorage | |
| Deploy-Diagnostics-RedisCache | | AllMetrics |
| Deploy-Diagnostics-Relay | | AllMetrics |
| Deploy-Diagnostics-SearchServices | OperationLogs | AllMetrics |
| Deploy-Diagnostics-ServiceBus | OperationalLogs | AllMetrics |
| Deploy-Diagnostics-SignalR | | AllMetrics |
| Deploy-Diagnostics-SQLDBs | SQLInsights AutomaticTuning QueryStoreRuntimeStatistics QueryStoreWaitStatistics Errors DatabaseWaitStatistics Timeouts Blocks Deadlocks SQLSecurityAuditEvents | AllMetrics |
| Deploy-Diagnostics-SQLElasticPools | | AllMetrics |
| Deploy-Diagnostics-SQLMI | ResourceUsageStats SQLSecurityAuditEvents | |
| Deploy-Diagnostics-StreamAnalytics | Execution Authoring | AllMetrics |
| Deploy-Diagnostics-TimeSeriesInsights | | AllMetrics |
| Deploy-Diagnostics-TrafficManager | ProbeHealthStatusEvents | AllMetrics |
| Deploy-Diagnostics-VirtualNetwork | VMProtectionAlerts | AllMetrics |
| Deploy-Diagnostics-VM | | AllMetrics |
| Deploy-Diagnostics-VMSS | | AllMetrics |
| Deploy-Diagnostics-VNetGW | GatewayDiagnosticLog IKEDiagnosticLog P2SDiagnosticLog RouteDiagnosticLog RouteDiagnosticLog TunnelDiagnosticLog | AllMetrics |
| Deploy-Diagnostics-WebServerFarm | | AllMetrics |
| Deploy-Diagnostics-Website | | AllMetrics |

The Deploy-DNSZoneGroup-For-*-PrivateEndpoint PolicySet targets Azure services as shown below.

| Policy Name | Azure Service |
| --- | --- |
| Deploy-DNSZoneGroup-For-Blob-PrivateEndpoint | Azure Storage Blob |
| Deploy-DNSZoneGroup-For-File-PrivateEndpoint | Azure Storage File |
| Deploy-DNSZoneGroup-For-Queue-PrivateEndpoint | Azure Storage Queue |
| Deploy-DNSZoneGroup-For-Table-PrivateEndpoint | Azure Storage Table |
| Deploy-DNSZoneGroup-For-KeyVault-PrivateEndpoint | Azure KeyVault |
| Deploy-DNSZoneGroup-For-Sql-PrivateEndpoint | Azure SQL Database |

by Contributed | Apr 14, 2021 | Technology

Contributors:

Rob Garrett – Sr. Customer Engineer, Microsoft Federal

John Unterseher – Sr. Customer Engineer, Microsoft Federal

Martin Ballard – Sr. Customer Engineer, Microsoft Federal

This article replaces the previous article, which used the – now legacy – version of PnP PowerShell.

What are Learning Pathways?

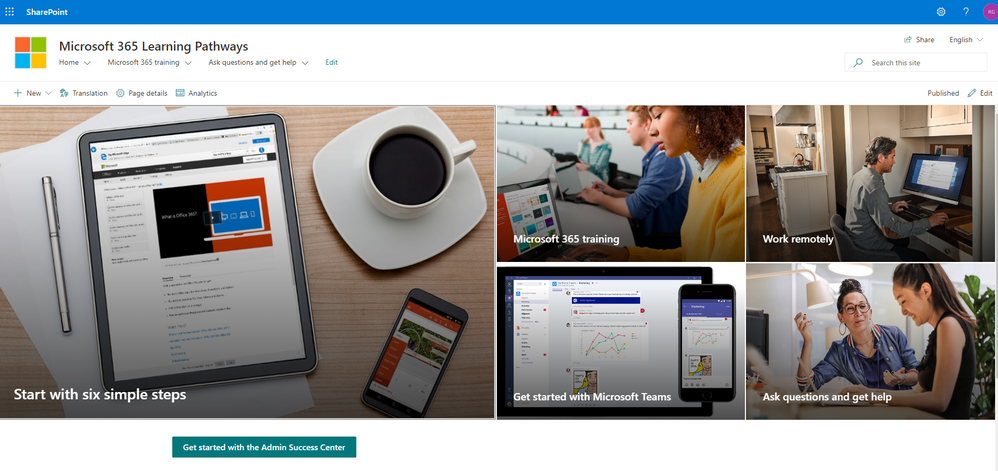

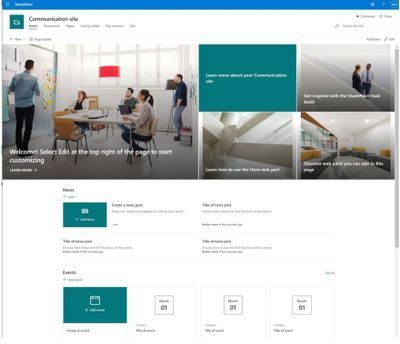

Microsoft 365 learning pathways is a customizable, on-demand learning solution designed to increase usage and adoption of Microsoft 365 services in your organization. Learning Pathways consists of a fully customizable SharePoint Online Communication site collection, with content populated from the Microsoft online catalog; so, your content is always up to date. Learning Pathways provide integrated playlists to meet the unique needs of your organization.

M365 Learning Pathways builds atop the Look Book Provisioning Service and templates (https://lookbook.microsoft.com). In a previous blog post, we detailed the nuances of the Look Book Provisioning Service and the additional steps required to deploy templates to GCC High tenants. Since Learning Pathways depends on the provisioning service to create a Communication site with customizations, via a Look Book template, this post details the steps to follow in addition to those from the earlier blog post.

Challenge – Using Learning Pathways in GCC High

Because of provisioning limitations in the GCC High sovereign cloud, documented installation instructions result in errors.

Microsoft strives to implement functionality parity between all sovereign clouds. However, since each Office 365 cloud type serves a different customer audience and requirements, functionality will differ between these cloud types. Of the M365 clouds – Commercial, Government Community Cloud, Government Community Cloud High, and DOD Cloud, the last two offer the least functionality to observe US federal mandates and compliance.

As Microsoft develops new functionality for Microsoft 365 and Azure clouds, we typically release new functionality to commercial customers first, and then to the other GCC, GCC High, and DOD tenants later as we comply with FedRAMP and other US Government mandates. Open-source offerings add another layer of complexity since open-source code contains community contribution and is seldom developed with government clouds in mind.

Apply Learning Pathways to GCC High

Manual configuration steps detailed below make Learning Pathways in GCC High possible.

Microsoft 365 Learning Pathways offers manual steps to support deployment to an existing SharePoint Online Communication site. Recall from the earlier blog post that the Look Book Provisioning Service is unable to establish a new site collection in GCC High, because of necessary restrictions. We, therefore, deploy Learning Pathways using the manual steps with a pre-provisioned Communication site collection.

Manual setup of Learning Pathways requires experience working with Windows PowerShell and the PnP PowerShell module.

Prerequisites

Before getting into the manual steps, we must meet the prerequisites for a manual install of Learning Pathways. The following is a summary:

- Create and designate a new Communication site in SharePoint Online for Learning Pathways.

- Create a tenant-wide application catalog (steps below).

- Install the latest PnP PowerShell module (PnP.PowerShell).

- Perform all steps as a SharePoint Tenant Administrator.

We begin by creating a new Communication site via the SharePoint Administration site:

https://mytenant-admin.sharepoint.us/_layouts/15/online/AdminHome.aspx#/siteManagement/view/ALL%20SITES

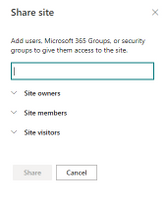

Ensure the appropriate permissions for users of the Learning Pathways site:

- Open the Learning Pathways site collection in your web browser.

- From the home page, click the Share link.

- Add students to the Site Visitors group.

- Add playlist editors of the pathways site to the Site Members group.

- Add site administrators of the pathways site to the Site Owners group.

We shall now create the tenant app catalog (if it does not already exist):

- Open the SharePoint Admin center in your browser.

https://mytenant-admin.sharepoint.us

- Select More Features in the left sidebar.

- Locate the Apps section and click Open.

https://mytenant-admin.sharepoint.us/_layouts/15/online/TenantAdminApps.aspx

- Select the App Catalog.

- If you do not already have an app catalog, provide the following details:

- Title: App Catalog

- Web Site Address Suffix: preferred suffix for the app catalog, e.g. apps.

- Administrator: SharePoint Administrator.

We shall now turn our attention to installing the latest version of PnP.PowerShell. At the time of writing this blog, the latest version of PnP.PowerShell is 1.5.x. Follow the instructions, in the box below, to install the latest pre-release version (required).

You can check which versions of PnP.PowerShell are installed with Get-Module PnP.PowerShell -ListAvailable. If you do not have version 1.5.x or greater, follow the instructions in the box below.

PnP PowerShell installation is a prerequisite for deploying Look Book templates via PowerShell. The previous edition of this article used the – now legacy – SharePointPnpPowerShell module. At the time of writing, the new steps require the latest bits for PowerShellGet, Nuget Package Provider and PnP.PowerShell module. You only need to follow these steps once per Windows machine.

- Open a PowerShell console as an administrator (right-click, Run As Administrator).

Note: The latest version of PnP.PowerShell is cross-platform and works with PowerShell Core (v7.x).

- Ensure unrestricted execution policy with:

Set-ExecutionPolicy Unrestricted

- Check the installed version of PowerShellGet with the following cmdlet:

Get-PackageProvider -Name PowerShellGet -ListAvailable

- If you see version 2.2.5.0 or greater, proceed to step #5.

Note: if you have PowerShell 5.1 and 7.x installed, you may have different versions of PowerShellGet for each version of PowerShell.

- Install the required version of PowerShellGet with:

Install-PackageProvider -Name Nuget -Scope AllUsers -Force

Install-PackageProvider -Name PowerShellGet -MinimumVersion 2.2.5.0 -Scope AllUsers -Force

- If you ran step #4, close and reopen your PowerShell console (again, as an administrator).

- Install PnP.PowerShell with the following:

Install-Module -Name PnP.PowerShell -AllowPrerelease -SkipPublisherCheck -Scope AllUsers -Force

- Close and reopen your PowerShell console (run as administrator not required this time).

- Confirm that PnP.PowerShell is installed with the following:

Get-Module -Name PnP.PowerShell -ListAvailable

- Open a new PowerShell console (v5.1 or Core 7.x).

- Ensure the PnP.PowerShell module is loaded with the following:

Import-Module -Name PnP.PowerShell

- Run the following script ONCE per tenant to create an Azure App Registration for PnP:

Note: Replace tenant with your tenant name.

Register-PnPAzureADApp -ApplicationName "PnP PowerShell" `

-Tenant [TENANT].onmicrosoft.us -Interactive `

-AzureEnvironment USGovernmentHigh `

-SharePointDelegatePermissions AllSites.FullControl User.Read.All

Login with user credentials assigned Global Administrator role.

If you previously registered PnP.PowerShell, check the App Registration in the Azure portal and make sure it has delegated permissions for AllSites.FullControl and User.Read.All.

- Make a note of the GUID returned by Register-PnPAzureADApp. This is the App/Client ID of the new PnP Azure App Registration.

Deploy Learning Pathways Template

Learning Pathways deploys from a dedicated Look Book template. The following steps detail downloading the template and deploying it via PnP.PowerShell.

- Open a new PowerShell console (v5.1 or Core 7.x).

- Ensure the PnP.PowerShell module is loaded with the following:

Import-Module -Name PnP.PowerShell

- Connect to your Learning Pathways site collection with the following:

Connect-PnPOnline `

-Url "Url of your Learning Pathways Site" `

-AzureEnvironment USGovernmentHigh `

-Interactive `

-Tenant "[TENANT].onmicrosoft.us" `

-ClientId "Client ID from AAD app registration in Step #16"

- Enable custom scripts on your site with the following (note: check with your security team before enabling this feature):

Set-PnPTenantSite -Identity "Url of your Learning Pathways Site" -DenyAddAndCustomizePages:$false

- Apply the template with the following:

Invoke-PnPSiteTemplate -Path M365LP.pnp

- Connect the SharePoint Framework Web Part to your learning site with the following:

Set-PnPStorageEntity `

-Key MicrosoftCustomLearningSite `

-Value "<URL of Learning Pathways site collection>" `

-Description "Microsoft 365 learning pathways Site Collection";

Set-PnPStorageEntity `

-Key MicrosoftCustomLearningTelemetryOn `

-Value $false `

-Description "Microsoft 365 learning pathways Telemetry Setting";

by Contributed | Apr 14, 2021 | Technology


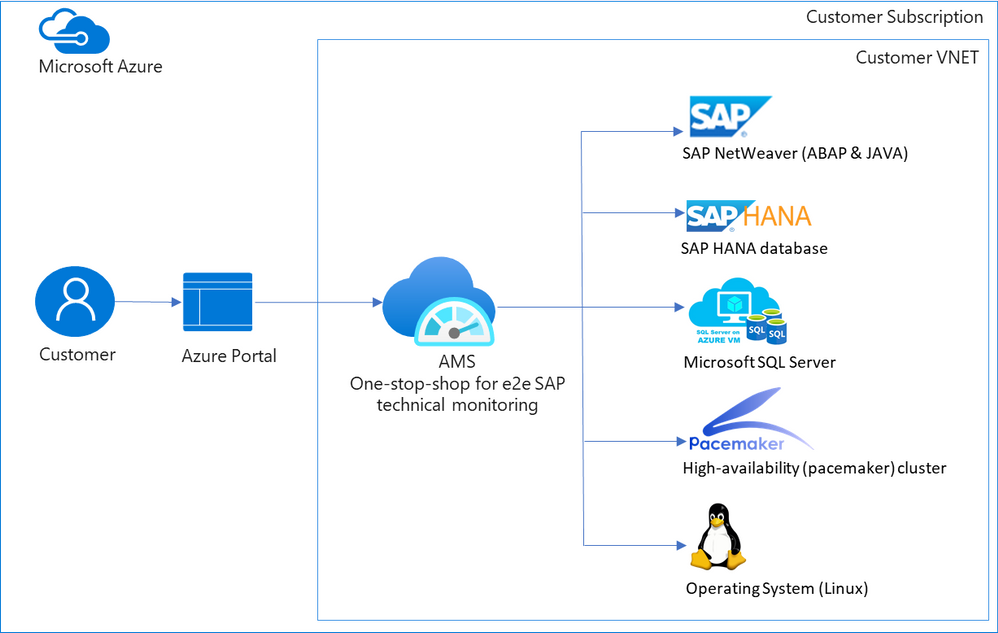

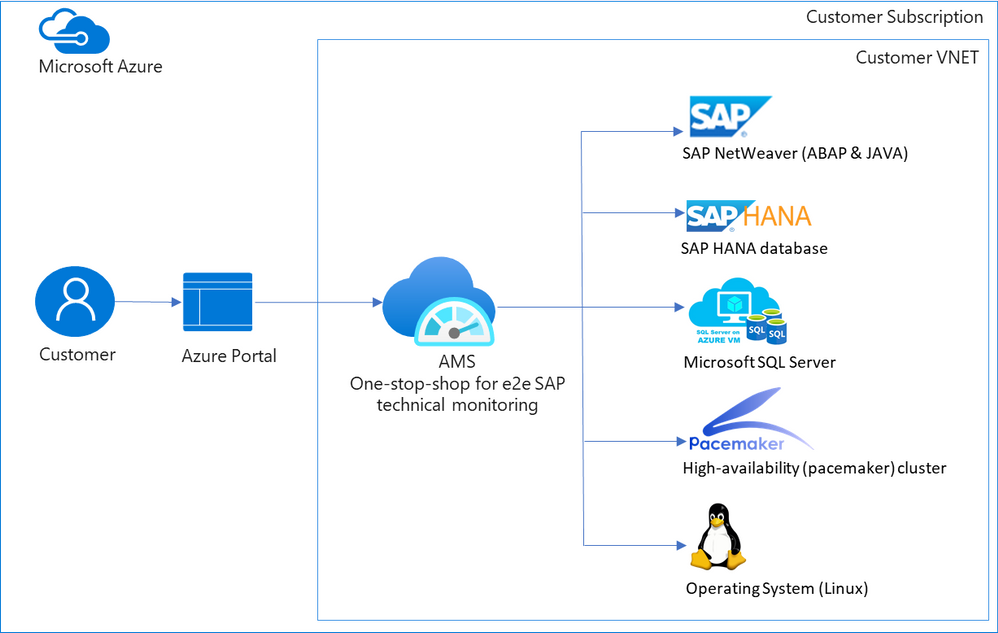

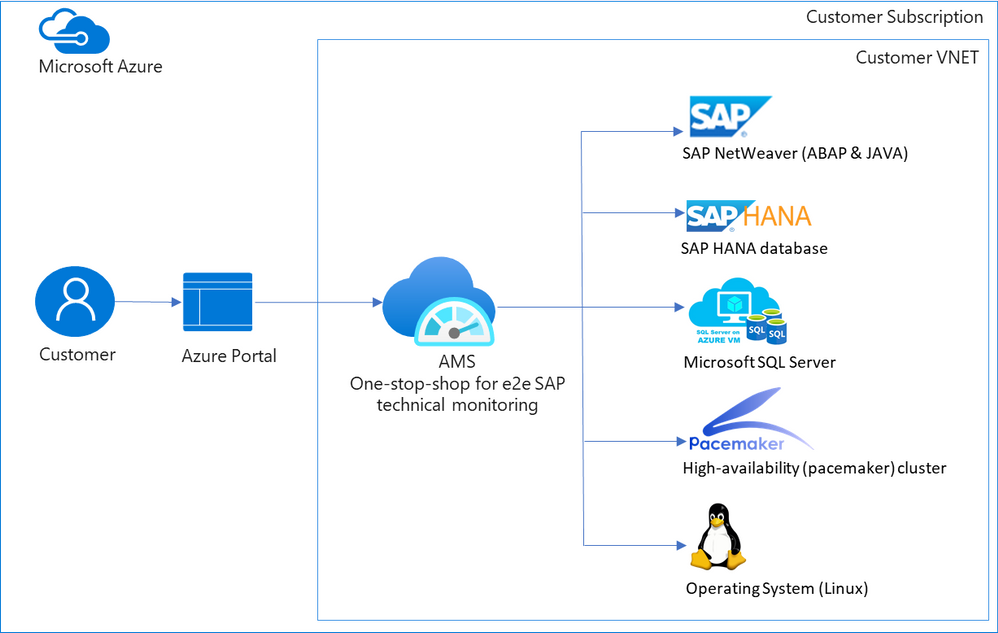

When customers move their mission-critical SAP applications to Azure, they choose a monitoring tool carefully. Various tools are evaluated, older pain points are reconsidered, and new cloud-specific monitoring needs are identified. Microsoft built Azure Monitor for SAP Solutions (AMS), currently in public preview, to address some of these needs. AMS is an Azure-native monitoring solution that provides end-to-end SAP technical monitoring in one place in the Azure portal.

AMS can be used by customers running their SAP workloads on Azure virtual machines as well as Azure Large Instances to monitor their SAP on Azure landscapes. Telemetry collection is currently supported for SAP HANA, Microsoft SQL Server, and high-availability (pacemaker) clusters, in the following regions: East US, East US 2, West US 2, and West Europe.

Today I am excited to share the following capabilities in public preview:

- SAP NetWeaver (ABAP & JAVA)

- Operating system (SUSE & RHEL)

- Additional metrics for High-availability (pacemaker) cluster

- North Europe region

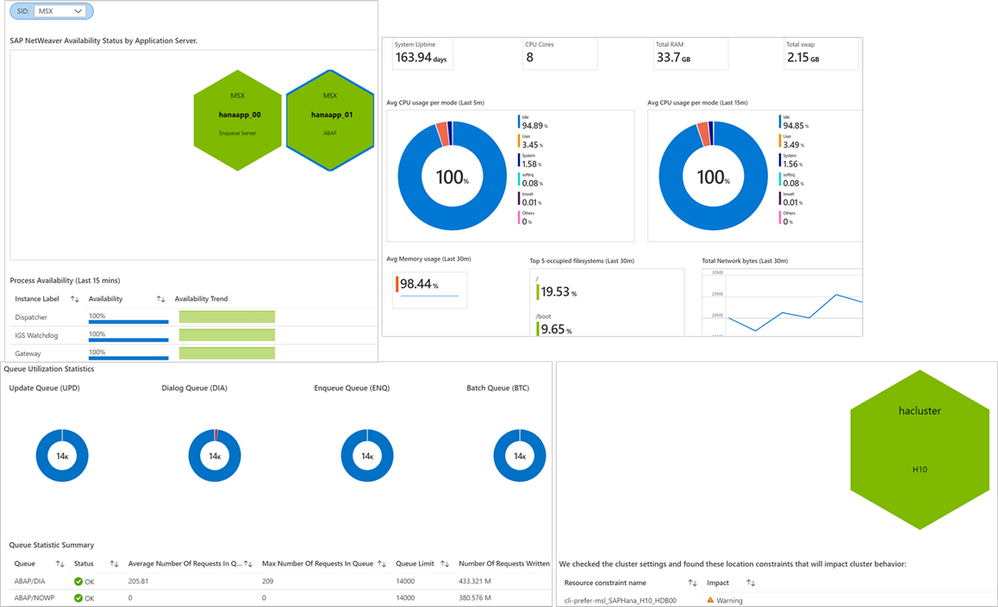

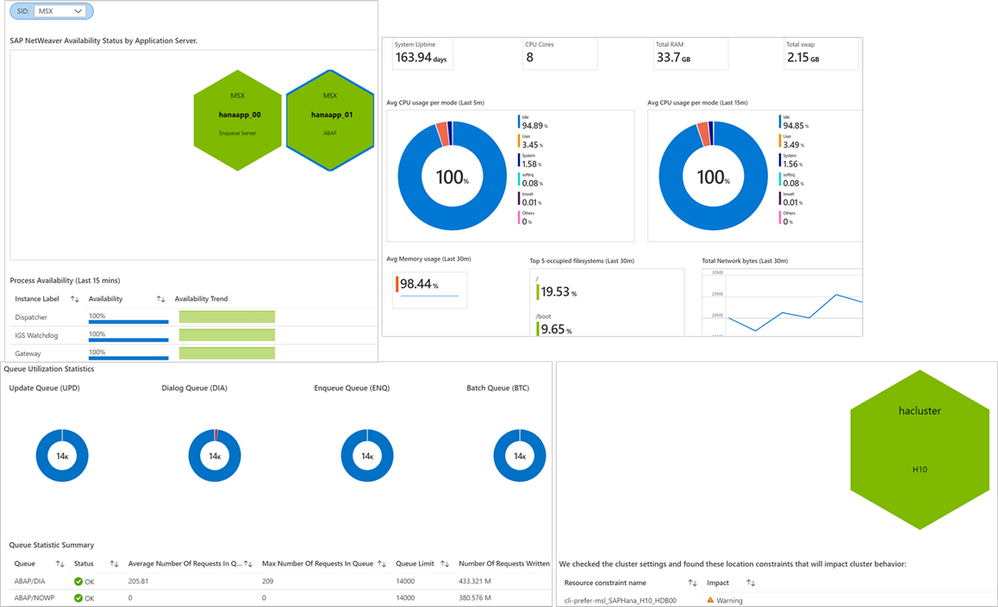

SAP NetWeaver data is collected from functions in the SAP start service, which are exposed through the SAPControl SOAP web service. The following metrics are supported with this release: SAP instance availability, process availability, work process utilization, enqueue lock statistics, and queue statistics and trends, with more to follow. Data for high-availability (pacemaker) clusters is collected from the HA cluster exporter, which runs on every node in a cluster. Previously, only statuses for nodes and resources were available; now customers can also see information about location constraints, failure counts, and trends in node and resource status. Operating system (SUSE and RHEL) data is collected through the node exporter, which must run on each host. Metrics such as:

- CPU usage by process

- Persistent memory

- Swap memory

- Memory usage

- Disk utilization

- Network information

and more are available. OS monitoring in AMS is especially useful for customers running workloads on Azure Large Instances (BareMetal).

Screenshots of visualizations from AMS

With this release, AMS provides end-to-end technical monitoring for all layers in SAP’s 3-tier architecture in one place. Customers can use AMS to visualize, monitor, and troubleshoot all layers and visually correlate data across them. Further, customers can create Azure dashboards, with a few clicks, to visualize SAP telemetry and non-SAP telemetry (from other Azure services) in a single pane of glass. This is done by combining telemetry from AMS and Azure Monitor.

Because end-to-end technical monitoring is available within the Azure portal, both BASIS administrators and infrastructure teams in a customer’s organization can use AMS to monitor SAP on Azure. Moreover, hosters, service integrators, and partners can use AMS to monitor SAP systems for their customers and view cross-tenant SAP telemetry in the Azure portal through the integration between AMS and Azure Lighthouse.

To get started, log in to the Azure portal and search for ‘Azure Monitor for SAP Solutions’ in Azure Marketplace. Please see the links below for further information.

Share your thoughts with AMS product group: AMS asks & feedback form

by Contributed | Apr 14, 2021 | Technology


SAP NetWeaver monitoring- Azure Monitoring for SAP Solutions

By @Ramakrishna Ramadurgam

AZURE MONITOR FOR SAP SOLUTIONS

Microsoft previously announced the launch of Azure Monitor for SAP Solutions (AMS) in public preview: an Azure-native monitoring solution for customers who run SAP workloads on Azure. With AMS, customers can view telemetry of their SAP landscapes within the Azure portal and efficiently correlate telemetry between the various layers of SAP, namely NetWeaver, database, and infrastructure. AMS is available through Azure Marketplace in the following regions: East US, East US 2, West US 2, West Europe, and North Europe. AMS does not have a license fee.

SAP NetWeaver Monitoring

SAP systems are very complex and mission critical for many enterprises, so it is imperative to identify issues and alert on threshold breaches without human involvement. The ability to detect failures early can prevent system degradation and reliability dips during critical periods such as finance period closes, payroll processing, and holiday sales. A robust, Azure-native monitoring platform helps SAP admins gain near-real-time visibility and insights into system availability, performance, and work process usage trends.

With Azure Monitor for SAP Solutions (AMS), customers can add a new provider type, “SAP NetWeaver”. This provider type enables “SAP on Azure” customers to monitor SAP NetWeaver components and processes on their Azure estate in the Azure portal. The solution also allows for easy creation of custom visualizations and custom alerting. The new provider type ships with default visualizations that can either be used out of the box or extended to meet your requirements.

SAP NetWeaver telemetry is collected by configuring the SAP NetWeaver ‘provider’ within AMS. As part of configuring the provider, customers need to provide the hostname (central, primary, and/or secondary application server) of the SAP system and its corresponding instance number, subdomain, and system ID (SID).

How SAP NW Telemetry is captured

Telemetry is captured through the SAPControl web service interface:

- The SAP start service runs on every computer where an instance of an SAP system is started.

- It is implemented as a service (sapstartsrv.exe) on Windows and as a daemon (sapstartsrv) on UNIX.

- The SAP start service provides functions for monitoring SAP systems, instances, and processes.

- These functions are exposed through the SAPControl SOAP web service and are used by SAP monitoring tools.

sapstartsrv listens on the following ports:

- HTTP port 5<xx>13 or HTTPS port 5<xx>14, where <xx> is the instance number.

- The web service interface is described by a WSDL interface definition, which can be obtained from the following URL:

- https://<host>:<port>/?wsdl

- This URL is used to generate a client proxy in web-service-enabled programming environments such as .NET and Python.
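The port convention above can be sketched in a few lines of Python. This is a minimal illustration, not part of AMS: the function names and the host name `sapapp01.example.com` are hypothetical, and the `/?wsdl` query form is assumed to be the one your sapstartsrv release serves.

```python
def sapcontrol_port(instance_nr: int, https: bool = False) -> int:
    """Return the sapstartsrv port 5<xx>13 (HTTP) or 5<xx>14 (HTTPS).

    <xx> is the two-digit SAP instance number, so valid values are 0..99.
    """
    if not 0 <= instance_nr <= 99:
        raise ValueError("instance number must be between 00 and 99")
    suffix = 14 if https else 13
    return 50000 + instance_nr * 100 + suffix


def sapcontrol_wsdl_url(host: str, instance_nr: int, https: bool = False) -> str:
    """Build the WSDL URL for the SAPControl web service (assumed '/?wsdl' form)."""
    scheme = "https" if https else "http"
    port = sapcontrol_port(instance_nr, https)
    return f"{scheme}://{host}:{port}/?wsdl"


if __name__ == "__main__":
    # Hypothetical host; instance number 00 over HTTP.
    print(sapcontrol_wsdl_url("sapapp01.example.com", 0))
```

A SOAP client library can then be pointed at the resulting URL to generate the client proxy.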

Pre-Requisite steps to onboard to AMS-NW Provider

- The SAPControl web service interface of sapstartsrv differentiates between protected and unprotected web methods. Protected methods are executed only after successful user authentication; unprotected methods do not require it.

- The profile parameter “service/protectedwebmethods” (RZ10) determines which methods are protected. It can take one of two default values, DEFAULT or SDEFAULT.

- To enable the SAP NetWeaver provider, customers must unprotect the required methods by setting the parameter as follows:

- service/protectedwebmethods = SDEFAULT -GetQueueStatistic -ABAPGetWPTable -EnqGetStatistic -GetProcessList

- After changing the parameter, restart the sapstartsrv service with the following command:

sapcontrol -nr <NR> -function RestartService
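The parameter value above follows a simple pattern: a default set name followed by each method to unprotect, prefixed with a hyphen. A minimal sketch of how such a profile line could be assembled is shown below; the helper name `protected_webmethods_line` is hypothetical and is only meant to make the format explicit, not to replace editing the profile in RZ10.

```python
def protected_webmethods_line(unprotect, base="SDEFAULT"):
    """Build the profile line for service/protectedwebmethods.

    base      -- one of the two default sets named in the text
    unprotect -- web methods to remove from the protected set
    """
    if base not in ("DEFAULT", "SDEFAULT"):
        raise ValueError("base must be DEFAULT or SDEFAULT")
    # Each unprotected method is written as -<MethodName> (plain ASCII hyphen).
    parts = [base] + [f"-{name}" for name in unprotect]
    return "service/protectedwebmethods = " + " ".join(parts)


if __name__ == "__main__":
    methods = ["GetQueueStatistic", "ABAPGetWPTable",
               "EnqGetStatistic", "GetProcessList"]
    print(protected_webmethods_line(methods))
```

Note that the hyphens must be plain ASCII hyphens; typographic dashes pasted from a web page will not be recognized.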

- The following standard, out-of-the-box SOAP web methods are used for the V1 release:

| Web method | ABAP | JAVA | Metrics |
| --- | --- | --- | --- |
| GetSystemInstanceList | X | X | Instance availability, Message Server, Gateway, ICM, ABAP availability |
| GetProcessList | X | X | If the instance list is RED, shows which process is causing that server to be RED |
| GetQueueStatistic | X | X | Queue statistics (DIA/BATCH/UPD) |
| ABAPGetWPTable | X | | Work process utilization |
| EnqGetStatistic | X | X | Locks |
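As a rough illustration, the web method table above can be held as data so that a collector knows which calls apply to an ABAP or a JAVA instance. The structure and the function name `methods_for` are hypothetical; only the method names and the ABAP/JAVA applicability come from the table.

```python
# Web methods used for the V1 release, with the stacks they apply to.
WEB_METHODS = {
    "GetSystemInstanceList": {"abap": True, "java": True},
    "GetProcessList": {"abap": True, "java": True},
    "GetQueueStatistic": {"abap": True, "java": True},
    "ABAPGetWPTable": {"abap": True, "java": False},  # ABAP work processes only
    "EnqGetStatistic": {"abap": True, "java": True},
}


def methods_for(stack: str) -> list:
    """Return the web methods that apply to the given stack ('abap' or 'java')."""
    key = stack.lower()
    if key not in ("abap", "java"):
        raise ValueError("stack must be 'abap' or 'java'")
    return [name for name, applies in WEB_METHODS.items() if applies[key]]


if __name__ == "__main__":
    print(methods_for("java"))
```

Keeping the applicability in one place avoids calling ABAPGetWPTable against a JAVA-only instance.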

Asks & Feedback:

AMS asks & feedback form

by Contributed | Apr 14, 2021 | Dynamics 365, Microsoft 365, Technology


Major shocks to supply and demand over the course of the pandemic have exposed the fragility and vulnerabilities of the global supply chain. One silver lining is the realization, across industries, that a more agile approach is needed. To stabilize supply chains, whether localized or on a global scale, every organization must architect resiliency into its supply chains, scale operations to meet customers’ needs, and proactively overcome disruptions to ensure business continuity and operate profitably.

This year, at Hannover Messe Digital Edition, we are showcasing new Microsoft Dynamics 365 capabilities to help companies easily scale production and distribution, keep critical processes running, and ensure continuity on the frontlines of manufacturing. Learn more about how Microsoft Cloud for Manufacturing is helping create a more resilient and sustainable future through open standards and ecosystems.

Looking back to move forward: The ripple effect of semiconductor shortages

The ripple effect created by semiconductor shortages in the past year underpins the need for greater agility and resilience across supply chains. As demand for automobiles slowed down at the beginning of the pandemic, automobile manufacturers stocked less inventory of critical semiconductor components to keep operational costs low. During the same period, demand for consumer electronics like computers and tablets grew due to the surge in remote work and online schools. As a result, the same semiconductor manufacturing company that was supplying raw materials to automobile manufacturers increased its allocation to consumer electronics manufacturing companies.

When the demand for automobiles started to rise again in the latter part of 2020, automobile manufacturers struggled to ramp up production to meet demand due to shortages in critical semiconductor components, resulting in decreased revenue and temporary plant closures. To strengthen the supply chains of these critical raw materials and weather any geopolitical storms that can disrupt the supply chain for critical components coming from Asia and Europe, organizations worldwide have started to reconfigure their supply chain networks and build new agile factories.

How Microsoft is helping to create more agile, resilient supply chains

IDC’s 2020 Supply Chain Survey¹ explored how companies would manage risk and enable supply chain visibility to achieve supply chain resiliency. For most respondent companies, the focus for risk and visibility was primarily on supply chain planning (57 percent) and the end-to-end supply chain (56 percent), including visibility into the factory (39 percent). The tools that companies expected to use to enhance visibility include best-of-breed and edge supply chain applications, enterprise collaboration tools, specialized BI and analysis tools, and supplier or customer portal and workflow management solutions. These solutions align with our focus areas of innovation to help organizations architect resiliency into every level of the business, from supply chains to distribution to finance and service.

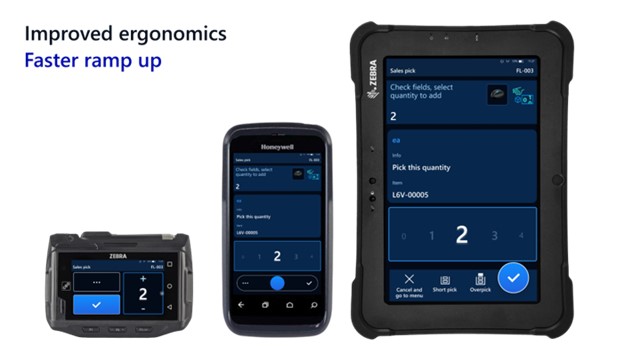

At Hannover Messe Digital Edition, we are also showcasing the new cloud and edge scale unit add-ins for Microsoft Dynamics 365 Supply Chain Management, previewed in October 2020, which enable companies to easily scale production and distribution during peaks and keep critical processes running at high throughput on the edge.

![Customers can set up a scale unit manager to activate an Edge scale unit or a cloud scale unit to scale production or distribution activities and overcome latency]()

We are also showcasing how manufacturers can use Mixed Reality with Dynamics 365 Supply Chain Management by integrating with Microsoft Dynamics 365 Guides. This enables manufacturers to reskill and cross-skill the frontline workers and augment their knowledge while they rapidly change factory layouts and bring new plants online.

![Customers can scan a QR code to see work instructions at their work cell while performing the task]()

Supply chain resiliency in action: Two success stories from the supply chain frontlines

Although the focus is now on resiliency, cost still needs to be controlled, and so simply ordering and holding more inventory is not the answer. What does hold promise is tighter integration and collaboration with both internal and external trading partners, faster access to data, and a new level of transparency and visibility into the end-to-end value chain made possible by digital transformation.

Wonder Cement builds a better foundation by connecting and scaling key business apps

Wonder Cement is a cutting-edge cement manufacturing company that has built its corporate culture on the values of quality, trust, and transparency. The company attributes its success to its relentless focus on customer service coupled with an emphasis on technological superiority.

Headquartered in Udaipur, Rajasthan, Wonder Cement operates three integrated cement plants and two grinding plants, with another scheduled to be online in the first quarter of 2021. Underpinning their success and growth is a reliance on innovative data technologies that enable them to maintain close connections with their markets and operations.

Wonder Cement recently brought wide-ranging process improvements to its business through the implementation of Microsoft Dynamics 365. The company is creating an improved foundation by connecting and scaling its most critical business apps. In the process, Wonder Cement has lowered inventory costs and reduced the time spent on transferring data between disparate systems. The company now has new visibility into its data and the resilience to succeed in the years ahead.

“We save 80 to 85 percent of the time we used to spend on moving data from the production system to our ERP now that everything runs on Dynamics 365. That’s a huge win for our company.” Arun Attri, Vice President (IT)

Explore how this customer-first cement company builds a better foundation by connecting and scaling key business apps with Dynamics 365.

CRC Industries aligns finance, operations, and supply chain management globally

CRC Industries has successfully created specialty products for the automotive, industrial, marine, aviation, and other markets for over 60 years. With customers in over 120 countries, seven manufacturing plants, and 26 facilities around the world, CRC has experienced almost continuous expansion over the past six decades.

As CRC grew, several unintegrated business solutions for finance, operations, and supply chain management were pieced together. The result was a mix of diverse systems that couldn’t communicate without significant manual intervention. This led to CRC being unable to take advantage of the cloud’s benefits and instead, having separate systems that reside on-premises at different locations across the globe.

“I consider Dynamics 365 the leading edge of the ERP frontier. Microsoft is listening to the customer and adapting quickly.” William McLendon, Global Manager Business Applications

In contemplating its future growth plans, CRC realized that its fragmented and disparate solutions were unsustainable. A push to standardize business processes, break down data silos, and create a single source of truth began. After considering other options, CRC selected Dynamics 365 Supply Chain Management.

“We made it our goal to standardize our business systems globally. We wanted to put the company on one enterprise resource planning solution throughout the world and have one way of doing business going forward.” Brent Laurin, Vice President of Global Operations

Learn more about how this specialty products and formulations company aligns finance, operations, and supply chain management globally with Dynamics 365.

What’s new in Dynamics 365 Supply Chain Management in April

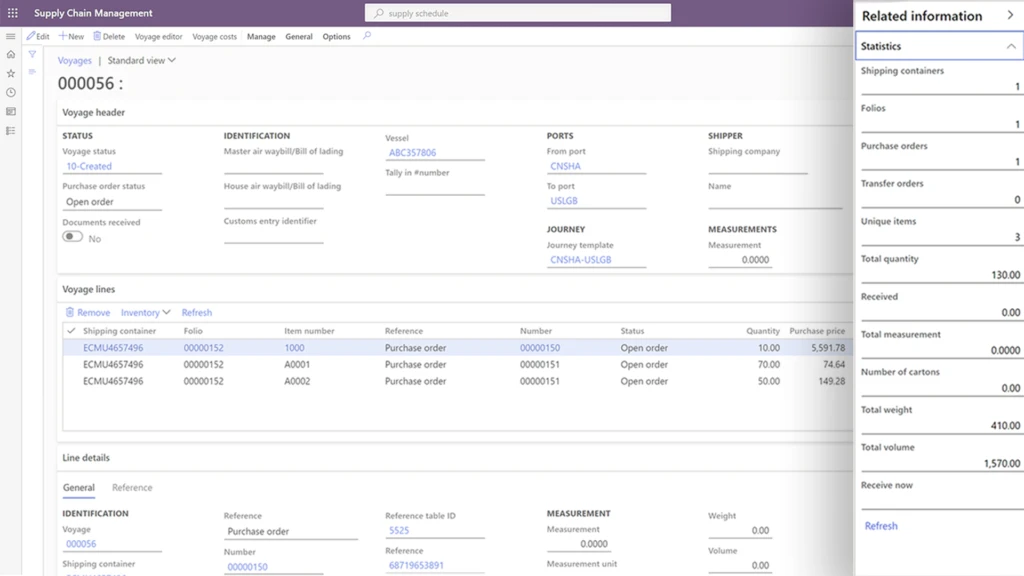

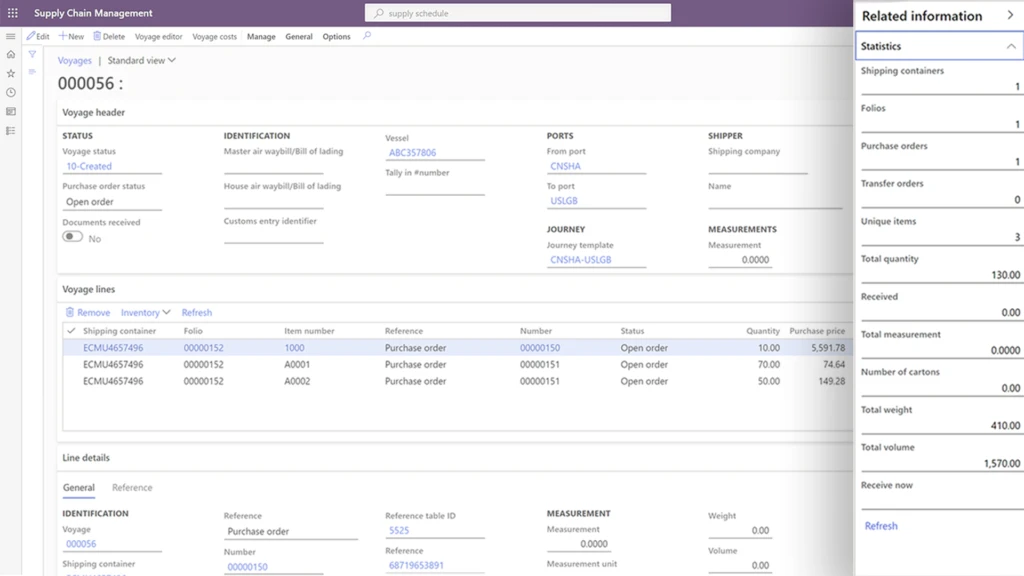

Increase visibility of inbound goods and automate landed cost calculation to maximize profitability

The new landed cost module in Dynamics 365 Supply Chain Management provides an innovative way to streamline inbound shipping by giving users complete financial and logistical control over imported freight, from the manufacturer to the warehouse.

With this visibility, arrivals are predictable, and warehouse planning and efficiency are improved.

Some key business outcomes are:

- Optimize the receiving process: Shipment of inbound orders such as purchase orders or transfer orders can be defined and organized into various transportation legs that will provide immediate visibility of stock delays that might impact customer deliveries.

- Help organizations predict costs: Automatic cost calculations can be configured for various transportation modes, duties, and other fees incurred to get a product to the warehouse.

- Help reduce administration and costing errors: Simulate shipping scenarios to predict a standard cost price for an item.

Citt uses Dynamics 365 Supply Chain Management to streamline sourcing, procurement, and management of inbound goods in transit. Now, staff members know when to replenish inventory at each store in a timely manner so that products are in stock and aren’t overstocked.

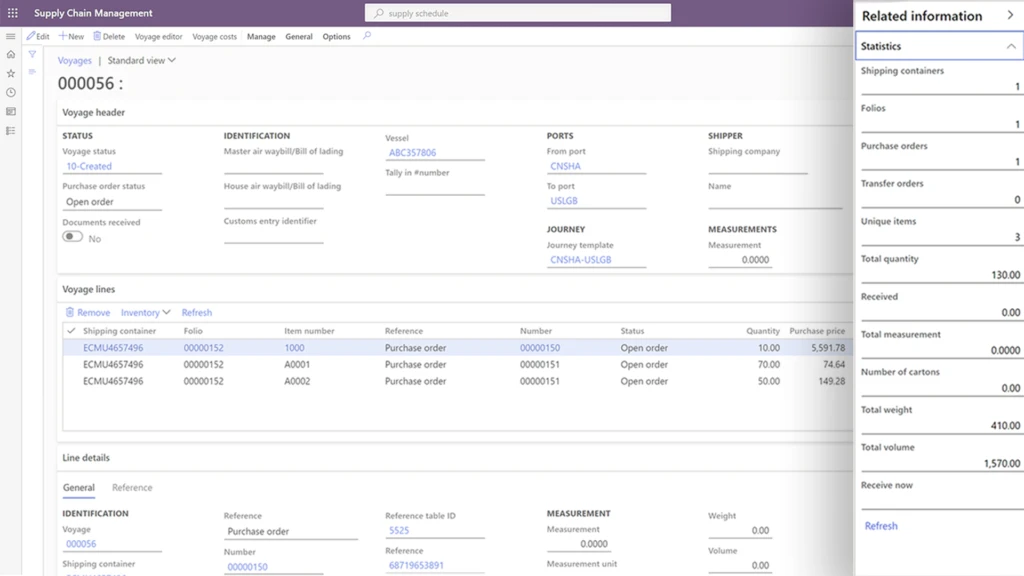

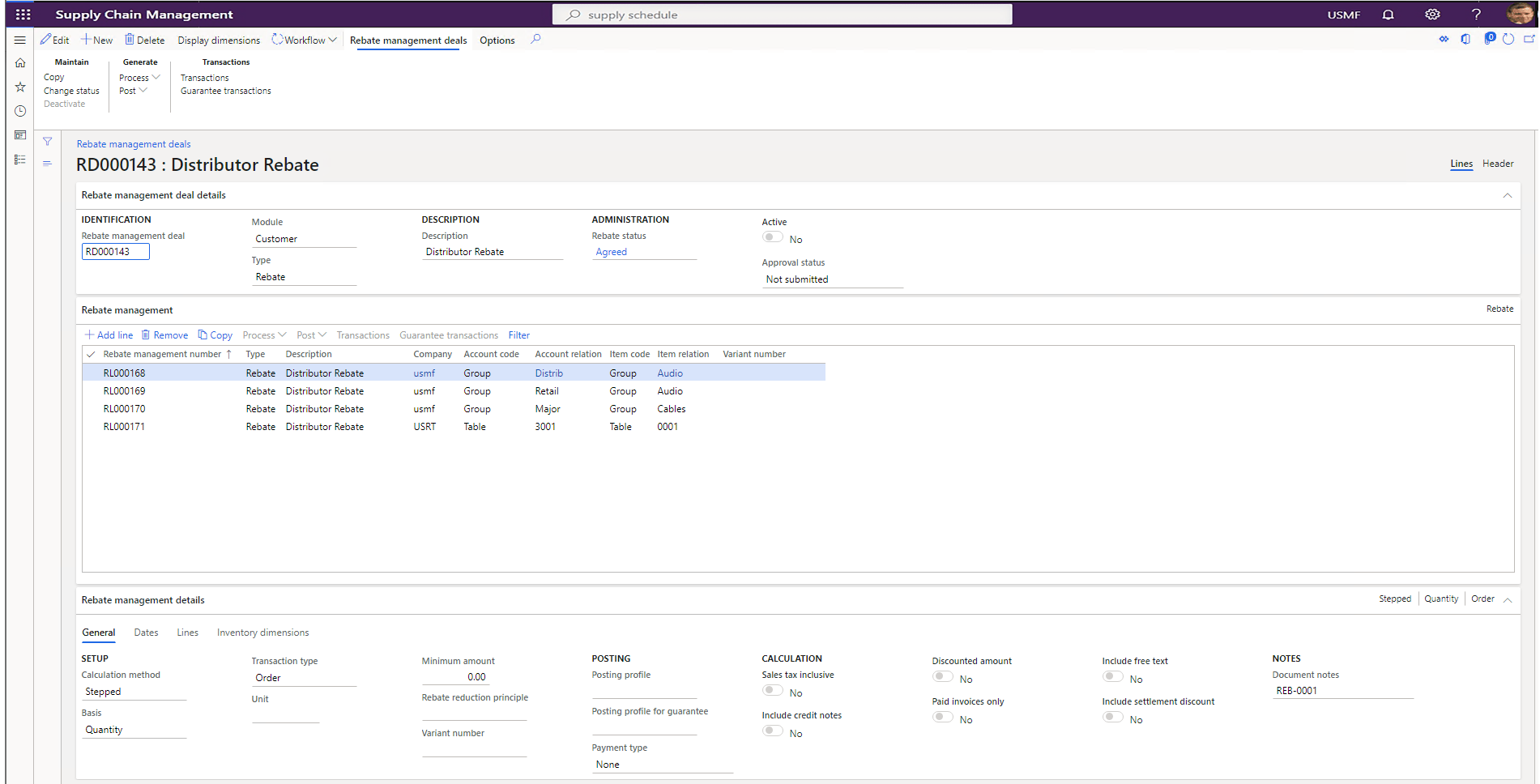

Streamline and automate rebates and royalty management to increase your bottom line

Rebates and royalties have a significant impact on a company’s margin. The complexity of rebate calculations and the post-event timing of claims make it challenging for businesses to accrue, automate, and track rebates in a cost-effective way.

Rebate management provides a central place to manage customer rebates, customer royalties, and vendor rebates. It helps to accurately identify and trace eligibility against overlapping and concurrent agreements and to automate the claim and payment process.

Some key business outcomes are:

- Centralize, streamline, and automate rebates and royalty program management with customers and vendors. It supports tiered pricing and volume rebates.

- Increase sales, improve margins, and remain competitive with simplified trade planning and real-time reporting such as spend versus rebates.

Overcome disruptions due to quality issues and obsolete parts to keep production running

Strong product data management, formula management, and change tracking of formulations are required to succeed in a world of constantly shrinking product lifecycles, increased quality and reliability requirements, and increased focus on product safety. This helps streamline and reduce the cost of managing product data, reduce errors in production, reduce waste when making design changes, and enable new formulas to be introduced in a controlled way.

Now, companies can manage changes in their process manufacturing master data, including formulas, planning items, co-products, by-products, and catch weight items.

Some key business outcomes are:

- Ensure compliance and on-time delivery by quickly responding to changing customer or supplier specifications, regulations, and safety standards, and seamlessly managing multiple formulations.

- Minimize disruptions caused by quality issues and obsolete ingredients by quickly assessing the impact and adjusting formulas through change management to ensure production lines are running every day.

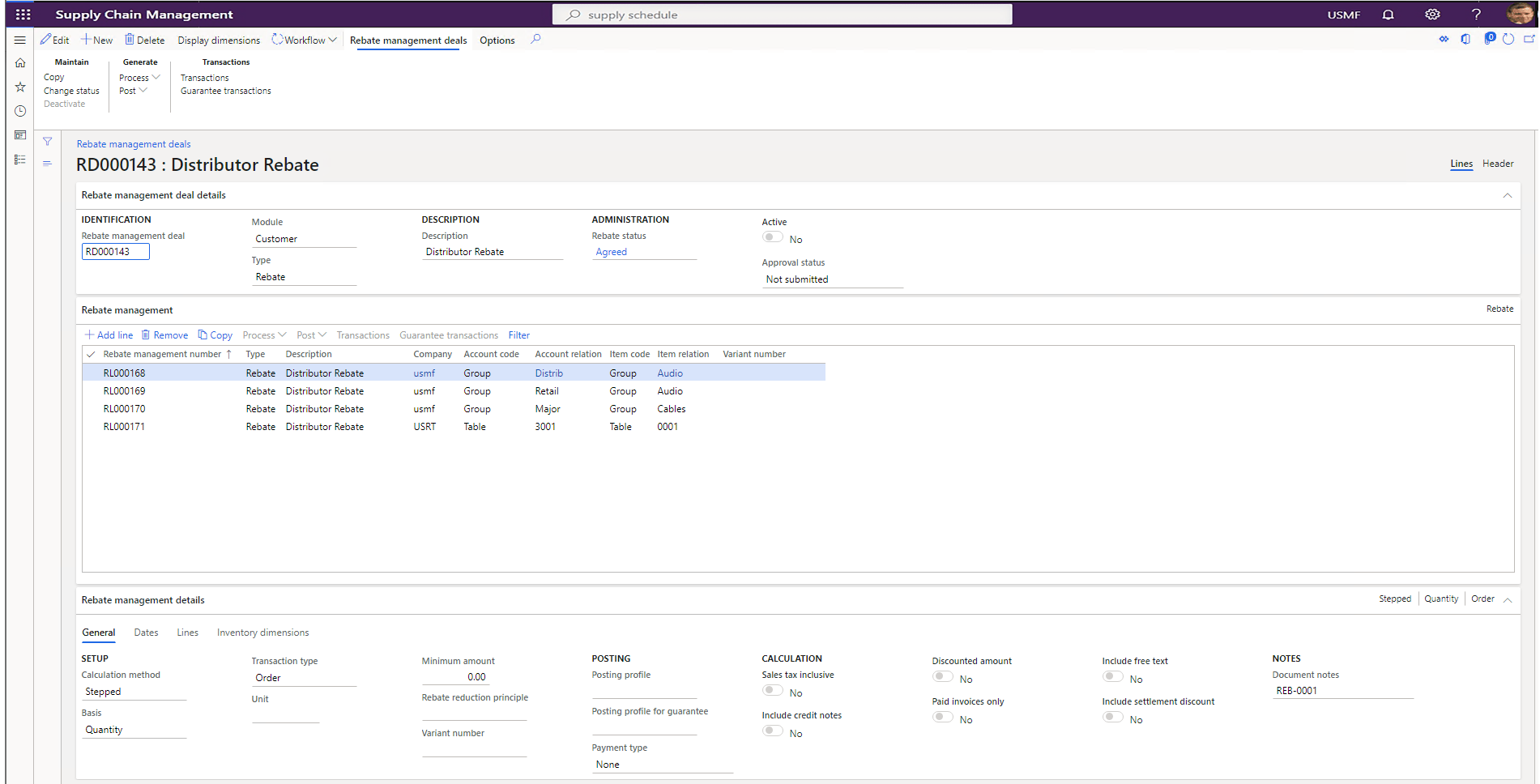

Empower frontline workforce to work with confidence and improve overall warehouse operating efficiency

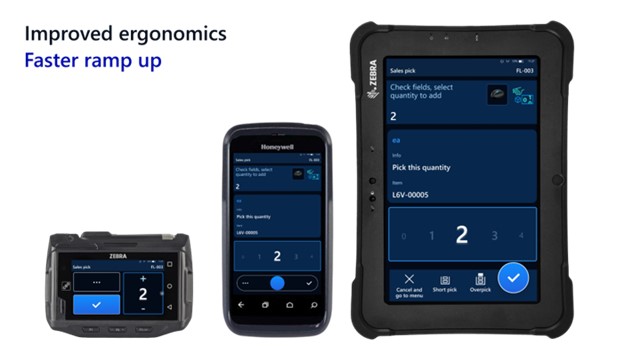

The warehouse management mobile application includes a fresh, contemporary design that is intuitive, easy to use, and supported by robust enhancements to core warehouse management logic that streamline processing. The solution is designed to help workers be more efficient, productive, and better able to complete work accurately.

Some key business outcomes are:

- Improved worker efficiency with the most important information made easy to read and in large font, large input controls to quickly dial in quantities, and saved worker preferences and device-specific settings that can be managed centrally.

- Faster ramp-up of new workers with clear titles and illustrations for each step and full-screen photos to verify product selections.

- Improved ergonomics with a high-contrast design that provides clear text even on dirty screens, custom button locations to match each worker’s grip, device, and handedness, large touch targets that make the app easy to use with gloves, and possibilities to scale font and button size independent of each other.

- Alignment with Fluent Design System visual style and interaction, a similar user interface across Dynamics 365 Guides, and production floor execution interface.

Learn more about Dynamics 365 Supply Chain Management

Learn more about Dynamics 365 Supply Chain Management and how to build resilience with an agile supply chain. If you are ready to take the first step toward implementing one of the most forward-thinking enterprise resource planning (ERP) solutions available, contact us to see a demo or start a free trial.

Find out what’s new and planned for Dynamics 365 Supply Chain Management and listen to our recent podcast “Preparing for your supply chain’s next normal” with Frank Della Rosa and Simon Ellis.

Learn how Microsoft Cloud for Manufacturing is helping create a more resilient and sustainable future through open standards and ecosystems.

1 IDC White Paper, sponsored by Microsoft, “A New Breed of Cloud Applications Powers Supply Chain Agility and Resiliency,” doc # US47207320, published January 2021.

The post Architecting resiliency in supply chains with Microsoft Dynamics 365 appeared first on Microsoft Dynamics 365 Blog.

by Contributed | Apr 14, 2021 | Technology


This blog post is the second in a series of three that examines the results of a recent IDC study, Leveraging Microsoft Learning Partners for Innovation and Impact.* The first post, New study shows the value of Microsoft Learning Partners, provides a high-level look at the benefits of using a Learning Partner to meet your technical skilling needs.

We commissioned IDC researchers to explore the value of Microsoft Learning Partners, a worldwide network of authorized training providers that work with organizations to fill skill gaps and meet their strategic learning and business goals. In the first post, we explored how Microsoft Learning Partners can play a key role in delivering learning results that make a measurable difference in organizations’ cloud initiatives and digital transformation efforts.

Among the findings, four areas consistently stood out as essential capabilities. In this post, we dive into two of them. Specifically, organizations benefit from working with a Learning Partner that provides:

- An end-to-end solution that starts with consultation to identify needs and wraps up by evaluating the program’s success.

- Value-added services that support learners, such as hands-on labs and custom content.

The study identified two other top-of-mind areas that we’ll cover in our third post—the quality of the training content and delivery, and the flexibility, scale, and speed of the partner.

To learn more about all four qualities, download Top reasons to get IT training from a Microsoft Learning Partner.

Customized, end-to-end services help you assess gaps, simplify learning, and meet goals

Learning initiatives are usually complex, involving many people and moving parts. Rather than spin out various tasks to different providers, organizations can benefit from working with one provider that can deliver an end-to-end training solution.

Microsoft Learning Partners provide a range of capabilities that help enterprises build and execute successful training initiatives, including:

- Identifying an organization’s skill gaps. As consultants, Learning Partners work with their clients to offer proactive guidance and solutions. They work with organizations to identify the requirements and then align their services to meet a client’s specific needs, coordinating and delivering the resources that meet the objectives.

- Simplifying the learning initiative. By shifting the task to a Learning Partner, organizations can efficiently move their training programs forward. Learning Partners take care of the details, aligning the curriculum to the requirements, coordinating activities, and managing learning outcomes for their clients.

A mix of value-added services meets learners’ needs and delivers results

People learn best from a variety of tools and approaches, and flexibility is important, as Dan O’Brien notes. O’Brien is the President, United States and Canada, of Fast Lane, a worldwide provider of advanced IT education for leading technical vendors. “When we train [a large professional services firm], many of their staff are billable resources who can’t sit in a five-day class. We built a tailored training model specifically for this use case: We trained one day a week over five weeks, minimizing the impact on work performance.”*

Learning Partners make a difference in their scope of offerings, including hands-on labs, a mix of self-paced and instructor-led training, custom content, role-based learning paths, mentoring and discussion groups, and assessments before, during, and after the training. Services may also extend to preparation for certification exams.

Learning Partners give organizations the tools they need to successfully meet their business and learning goals through:

- Customized learning programs. Authorized Microsoft Learning Partners make training programs as relevant as possible for learners. They can use standard courses and learning paths, and they can tailor the content or flow to suit an organization’s unique situation.

- Training delivery aligned to client requirements. Some organizations want instructor-led training and boot camps, and some prefer e-learning. Learning Partners are experts at finding the right delivery model and experience for their customers.

Next step: Get the training program that’s right for you

Microsoft Learning Partners can help organizations get the most impact from their learning initiatives. The key is to find providers that offer end-to-end solutions and value-added services for learners of all types.

Find a Microsoft Learning Partner

Related posts

Sharpen your technical skills with instructor-led training

Leading Learning Partners Association—a unique organization for delivering Microsoft training

Need another reason to earn a Microsoft Certification?

Technical certifications could help drive business optimization
