Access control is a fundamental building block for enterprise customers, who need to protect assets at various levels so that only the relevant people, in the appropriate positions of authority, are given access, each with the appropriate privileges. This is even more important in machine learning, where data is essential for building ML models and companies are highly cautious about how data is accessed and managed, especially since the introduction of GDPR. We are seeing an increasing number of customers seeking explicit control not only of the data, but of the various stages of the machine learning lifecycle, from experimentation all the way to operationalization. Assets and operations such as generated models, cluster creation and model deployment need to be governed to ensure that controls are in line with company policy.

Azure has traditionally provided Role-based Access Control (RBAC) [1], which helps manage access to resources: who can access them and what they can do with them. This is primarily achieved through roles, where a role defines a collection of permissions.

Existing Roles in AML

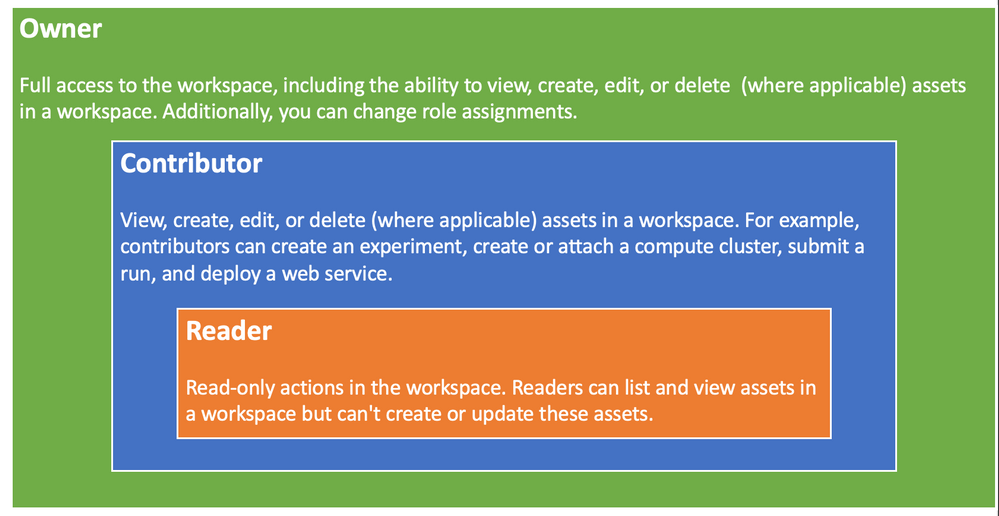

Azure Machine Learning provides three roles [3] that enterprise customers can use for coarse-grained access control, designed with simplicity in mind. Owner has the highest level of privileges and grants full control of the workspace. Contributor is more restricted and prevents users from changing role assignments. Reader has the most restrictive permissions and is essentially read-only (see Figure 1).

Figure 1 – Existing AML roles

What we have found with customers is that coarse-grained access control greatly simplifies role management and works well for a small team working primarily in the experimentation environment. However, when a company decides to operationalize its ML work, especially in the enterprise space, these roles become far too broad and too simplistic. In the enterprise, deployment tends to have several stages (such as dev, test, pre-prod and prod) and requires various skill sets (data scientist, data engineer, etc.), with greater control at each stage. For example, a Data Scientist may not be allowed to operate in the production environment, and a Data Engineer may only be allowed to provision resources, without the ability to commission or decommission training clusters. Companies need to enforce and monitor such governance policies to maintain the integrity of their business and IT processes.

Unfortunately, such requirements cannot be captured with the existing roles. Enterprises need a better mechanism to define policies for the various assets in AML to satisfy their business-specific requirements.

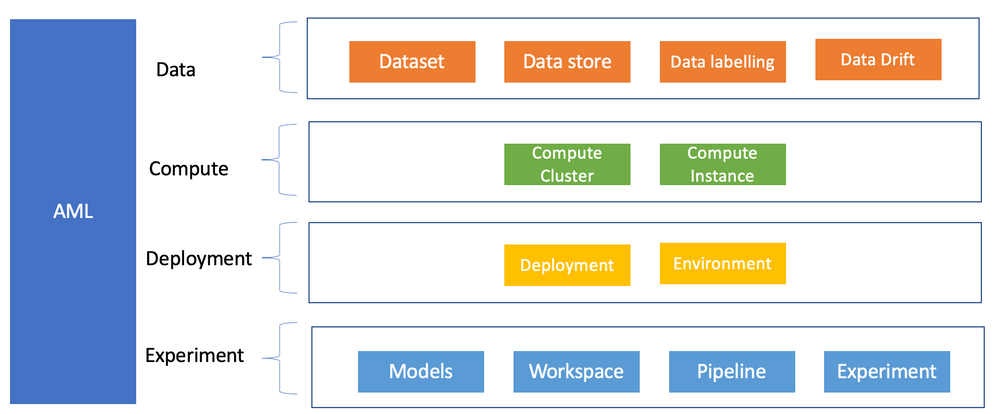

This is where the exciting new advanced Role-based Access Control feature really shines. It provides fine-grained access control at the component level (see Figure 2), with a number of pre-built, out-of-the-box roles plus the ability to create custom roles that capture and enforce more complex governance processes.

Advanced Fine-grained Role-based Access Control

The new advanced Role-based Access Control feature of AML addresses many enterprise problems by making it possible to grant or restrict user permissions on individual components. Azure ML currently defines 16 components, each with its own set of permissions.

Figure 2 – Component-Level RBAC

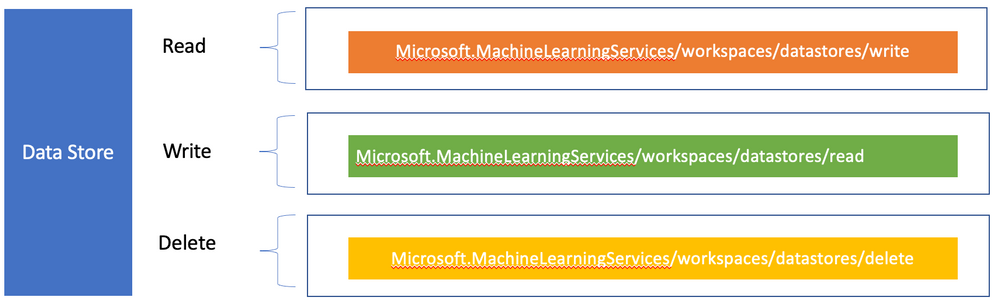

Each component defines a list of actions, such as read, write and delete. These actions can then be combined to create a custom role. As an example, Figure 3 shows the list of actions currently available for the Datastore component.

Figure 3 – Datastore Actions
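Because each action is simply a string scoped under the workspace resource provider, combining actions into a role amounts to building a list of such strings. The Python sketch below shows this for the Datastore component. It assumes the `Microsoft.MachineLearningServices/workspaces/<component>/<verb>` naming pattern used in the custom-role example in this post, and `action_for` and `combine_actions` are hypothetical helpers, not part of any Azure SDK.

```python
# Sketch only: assumes Azure ML action strings follow the pattern
# "Microsoft.MachineLearningServices/workspaces/<component>/<verb>".
# action_for and combine_actions are hypothetical helpers, not an Azure API.

PROVIDER = "Microsoft.MachineLearningServices/workspaces"


def action_for(component: str, verb: str) -> str:
    """Build one action string, e.g. ("datastores", "read")."""
    return f"{PROVIDER}/{component}/{verb}"


def combine_actions(pairs: list[tuple[str, str]]) -> list[str]:
    """Combine (component, verb) pairs into a sorted list without duplicates."""
    return sorted({action_for(component, verb) for component, verb in pairs})


# A role that may read and register datastores but not delete them.
datastore_actions = combine_actions(
    [("datastores", "read"), ("datastores", "write"), ("datastores", "read")]
)
for action in datastore_actions:
    print(action)
```

The resulting list is what would go into the `Actions` (or `NotActions`) array of a custom role definition.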

Datastores and datasets are important concepts in Azure Machine Learning, since they provide access to various data sources, with lineage and tracking capabilities. Many enterprises have built global data lakes that hold terabytes of data, some of it highly sensitive. Companies are protective of who can access this data and require business justification for how it is accessed and used. It is therefore imperative to mandate tighter access control over datastores, for example by restricting datastore management to a specific role such as Data Engineer.

Fortunately, AML's advanced access control supports custom roles, so that companies can define access control specific to their needs, which may be a hybrid of the existing roles.

Custom Role

A custom role [4] allows fine-grained access control to be defined on various components, such as the workspace and datastores.

- Can be any combination of data-plane or control-plane actions that AzureML+AISC support.

- Useful for creating roles scoped to specific actions, such as a role for an MLOps Engineer.

These controls are defined in a JSON role definition, for example:

```json
{
  "Name": "Data Scientist",
  "IsCustom": true,
  "Description": "Can run experiments but can't create or delete datastores.",
  "Actions": ["*"],
  "NotActions": [
    "Microsoft.MachineLearningServices/workspaces/*/delete",
    "Microsoft.MachineLearningServices/workspaces/datastores/write",
    "Microsoft.MachineLearningServices/workspaces/datastores/delete",
    "Microsoft.Authorization/*/write"
  ],
  "AssignableScopes": [
    "/subscriptions/<subscription_id>/resourceGroups/<resource_group_name>/providers/Microsoft.MachineLearningServices/workspaces/<workspace_name>"
  ]
}
```

The above definition creates a Data Scientist role that can run experiments but cannot create or delete datastores. This role can be created with the Azure CLI command `az role definition create --role-definition <filename>`.
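To make the `Actions`/`NotActions` semantics concrete, here is a minimal Python sketch that checks whether the Data Scientist role above would permit a given action. It is a simplified model, not Azure's evaluator: it treats `*` as a case-insensitive wildcard that can span `/`, considers an action allowed when it matches `Actions` and no `NotActions` entry, and ignores `DataActions`, deny assignments and other role assignments (which can still grant an action that `NotActions` removes here, since `NotActions` is not a deny). The sample action strings are for illustration.

```python
from fnmatch import fnmatchcase

# Actions and NotActions of the Data Scientist role above
# (only the fields this sketch evaluates).
DATA_SCIENTIST = {
    "Actions": ["*"],
    "NotActions": [
        "Microsoft.MachineLearningServices/workspaces/*/delete",
        "Microsoft.MachineLearningServices/workspaces/datastores/write",
        "Microsoft.MachineLearningServices/workspaces/datastores/delete",
        "Microsoft.Authorization/*/write",
    ],
}


def matches_any(action: str, patterns: list[str]) -> bool:
    """Case-insensitive wildcard match; fnmatch's '*' also matches '/'."""
    action = action.lower()
    return any(fnmatchcase(action, pattern.lower()) for pattern in patterns)


def is_allowed(role: dict, action: str) -> bool:
    """Simplified model: granted by Actions and not removed by NotActions."""
    granted = matches_any(action, role.get("Actions", []))
    removed = matches_any(action, role.get("NotActions", []))
    return granted and not removed


checks = [
    "Microsoft.MachineLearningServices/workspaces/experiments/runs/submit/action",
    "Microsoft.MachineLearningServices/workspaces/datastores/read",
    "Microsoft.MachineLearningServices/workspaces/datastores/write",
    "Microsoft.MachineLearningServices/workspaces/computes/delete",
    "Microsoft.Authorization/roleAssignments/write",
]
for action in checks:
    print(f"{is_allowed(DATA_SCIENTIST, action)!s:5}  {action}")
```

Note how the broad `workspaces/*/delete` entry already removes every delete action in the workspace, so the explicit `datastores/delete` entry is redundant but harmless.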

Role Operation Workflow

In a typical organization, the following activities are carried out by different role owners:

- The subscription admin requests AmlCompute quota for the enterprise.

- They create a resource group (RG) and a workspace for a specific team, and set a workspace-level quota.

- The team lead (aka workspace admin) creates compute within the quota that the subscription admin defined for that workspace.

- Data Scientists use the compute (clusters or instances) that the workspace admin created for them.
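The quota hand-off in this workflow can be modelled as a small hierarchy: the subscription quota bounds each workspace quota, and the workspace quota bounds the compute its admin creates. The Python sketch below is an illustrative model of that ordering, not Azure's quota service. It assumes quota is a single core count (Azure actually tracks quota per VM family) and that a workspace-level quota may not exceed the subscription quota; the class, workspace and cluster names are hypothetical.

```python
from dataclasses import dataclass, field

# Illustrative model of the workflow above, not Azure's quota service.
# Assumptions: quota is a single core count (Azure tracks it per VM family),
# and a workspace-level quota may not exceed the subscription quota.


@dataclass
class Workspace:
    name: str
    quota_cores: int  # workspace-level quota set by the subscription admin
    clusters: dict[str, int] = field(default_factory=dict)  # cluster -> max cores

    def remaining(self) -> int:
        return self.quota_cores - sum(self.clusters.values())

    def create_cluster(self, name: str, max_cores: int) -> None:
        """Workspace admin creates compute within the workspace quota."""
        if max_cores > self.remaining():
            raise ValueError(
                f"{name}: {max_cores} cores requested, {self.remaining()} remaining"
            )
        self.clusters[name] = max_cores


@dataclass
class Subscription:
    quota_cores: int  # AmlCompute quota obtained by the subscription admin
    workspaces: dict[str, Workspace] = field(default_factory=dict)

    def create_workspace(self, name: str, quota_cores: int) -> Workspace:
        """Subscription admin creates a team workspace with its own quota."""
        if quota_cores > self.quota_cores:
            raise ValueError("workspace quota exceeds subscription quota")
        workspace = Workspace(name, quota_cores)
        self.workspaces[name] = workspace
        return workspace


# Subscription admin -> workspace admin -> data scientist, in that order.
subscription = Subscription(quota_cores=100)
team_ws = subscription.create_workspace("team-a-dev", quota_cores=40)
team_ws.create_cluster("cpu-cluster", max_cores=24)
try:
    team_ws.create_cluster("gpu-cluster", max_cores=24)  # would exceed 40 cores
except ValueError as err:
    print("rejected:", err)
print("available to data scientists:", sorted(team_ws.clusters))
```

Data scientists in this model only consume what is in `team_ws.clusters`; they never call `create_cluster`, which mirrors the separation of duties in the list above.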

Roles for Enterprise

AML provides a single environment for end-to-end work, from experimentation to operationalization. For a start-up this is really useful: start-ups tend to operate in a very agile manner, where many iterations happen in a short period of time, and the ability to move quickly from ideation to production greatly reduces their cycle time. This is often not the case for enterprise customers, who typically use two or three environments, such as Dev, QA and Prod, to carry out their production workloads.

Dev is used for experimentation, QA for verifying various functional and non-functional requirements, and Prod for deploying models into production for consumers.

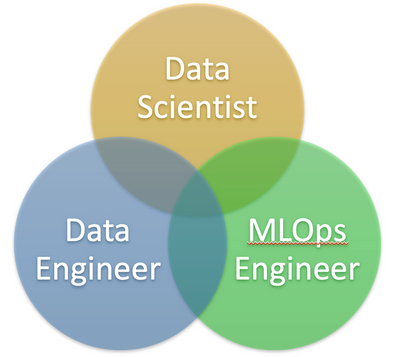

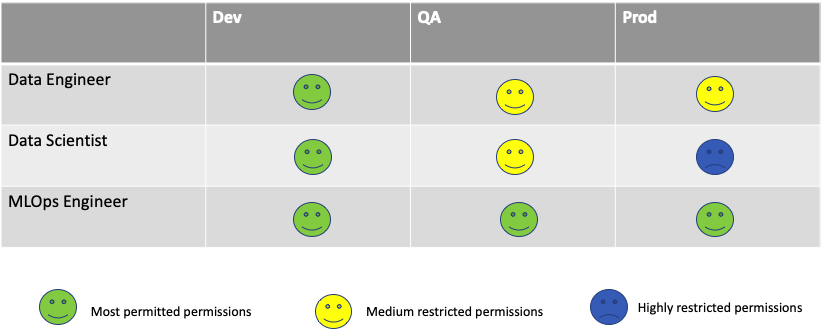

Each environment also involves various roles carrying out different activities, such as Data Scientist, Data Engineer and MLOps Engineer (see Figure 8 below).

Figure 8 – Enterprise Roles

A Data Scientist normally operates in the Dev environment and has full access to all permissions related to carrying out experiments, such as provisioning training clusters and building models. Some permissions are granted in the QA environment, primarily related to model testing and performance, and very minimal access is granted to the Prod environment, mainly for telemetry (see Table 1 below).

A Data Engineer, on the other hand, primarily operates in the Build (Dev) and QA environments. Their main focus is data handling, such as data loading and data wrangling. They have restricted access in the Prod environment.

Table 1 – Role/environment Matrix

An MLOps Engineer has some permissions in the Dev environment, but full permissions in QA and Prod. This is because an MLOps Engineer is tasked with building the pipelines, gluing things together, and ultimately deploying models to production.
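The role/environment matrix described above can be captured as data so that it can be reviewed or checked programmatically. The Python sketch below encodes the three personas and three environments from the text. The access levels (`FULL`, `SOME`, `MINIMAL`) are our own labels for the wording used above, not values defined by Azure, and reading "primarily operates" as `SOME` and "restricted" as `MINIMAL` is an assumption.

```python
from enum import IntEnum


class Access(IntEnum):
    """Ordered access levels; our own labels for the wording in the text."""
    MINIMAL = 1  # e.g. telemetry only, or "restricted"
    SOME = 2     # a subset of permissions
    FULL = 3


# Role/environment matrix as described in the text.
# Assumption: "primarily operates" is read as SOME, "restricted" as MINIMAL.
MATRIX = {
    "Data Scientist": {"Dev": Access.FULL, "QA": Access.SOME, "Prod": Access.MINIMAL},
    "Data Engineer": {"Dev": Access.SOME, "QA": Access.SOME, "Prod": Access.MINIMAL},
    "MLOps Engineer": {"Dev": Access.SOME, "QA": Access.FULL, "Prod": Access.FULL},
}


def personas_with(env: str, at_least: Access) -> list[str]:
    """Personas whose access in `env` is at or above `at_least`."""
    return sorted(p for p, envs in MATRIX.items() if envs[env] >= at_least)


print("Full access in Prod:", personas_with("Prod", Access.FULL))
print("At least some access in QA:", personas_with("QA", Access.SOME))
```

Using an ordered enum lets governance checks, such as "only MLOps Engineers have full Prod access", be written as simple comparisons.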

The interesting part is how all these roles, environments and other components fit together in Azure to provide the access governance that enterprise customers need.

Enterprise AML Roles Deployment

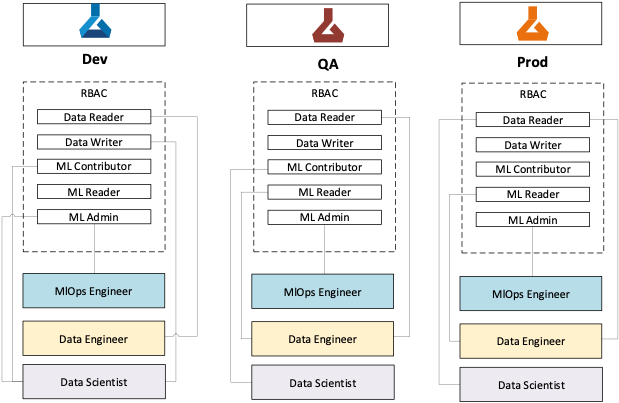

It is important for enterprises to be able to model complex role/environment mappings such as the one shown in Table 1. Fortunately, this can be achieved in Azure using a combination of Azure Active Directory (AD) groups, roles and resource groups.

Figure 9 – Enterprise AML Roles Deployment

Fundamentally, Azure Active Directory groups play the major part in gluing all these components together.

The first step is to place the users of a given persona (DS, DE, etc.) in a “Role AD group”. Roles with the relevant RBAC actions (Data Writer, MLContributor, etc.) are then assigned to this AD group, so that all of its members inherit the permissions of those roles. A separate Role AD group is created for each persona.

Next, a separate AD group (‘AD group for Environment’) is created for each environment (i.e. Dev, QA and Prod), and the Role AD groups are added to these Environment AD groups. This maps the users of each persona, with their permissions, to an environment.

Finally, each ‘AD group for Environment’ is assigned to the resource group that contains that environment's AML workspace. This ensures that the role permissions assigned to users are enforced at the workspace level.
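The three steps above can be sketched as a small resolution function that answers "which roles does this user end up with, on which resource group?". This is a simplified Python model of the layout described here, not how Azure AD or Azure RBAC evaluate access; all user, group, role and resource-group names are hypothetical, and "MLOps Engineer" stands for a hypothetical custom role.

```python
# Hypothetical directory data mirroring the layout described above.
# Group, user, role and resource-group names are made up for illustration.
ROLE_GROUPS = {
    # Role AD group -> members and the roles assigned to the group
    "grp-role-data-scientist": {"members": {"alice"}, "roles": {"Data Scientist"}},
    "grp-role-mlops": {"members": {"bob"}, "roles": {"MLOps Engineer"}},
}
ENV_GROUPS = {
    # Environment AD group -> Role AD groups nested inside it
    "grp-env-dev": {"grp-role-data-scientist", "grp-role-mlops"},
    "grp-env-prod": {"grp-role-mlops"},
}
ENV_RESOURCE_GROUP = {
    # Environment AD group -> resource group holding that environment's workspace
    "grp-env-dev": "aml-dev-rg",
    "grp-env-prod": "aml-prod-rg",
}


def effective_roles(user: str) -> dict[str, set[str]]:
    """Resource group -> roles the user ends up with through group nesting."""
    result: dict[str, set[str]] = {}
    for env_group, role_groups in ENV_GROUPS.items():
        resource_group = ENV_RESOURCE_GROUP[env_group]
        for role_group in role_groups:
            group = ROLE_GROUPS[role_group]
            if user in group["members"]:
                result.setdefault(resource_group, set()).update(group["roles"])
    return result


print(effective_roles("alice"))
print(effective_roles("bob"))
```

Keeping personas and environments in separate groups means onboarding a new data scientist is a single membership change, and granting a persona access to another environment is a single nesting change.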

Summary

In this blog, we have discussed the new advanced Role-based Access Control feature and how it can be applied in a complex enterprise with multiple environments and different user personas.

The key point to note is the flexibility of this new feature: it can operate on any of the 16 AML components, with fine-grained access control defined for each through custom roles, alongside four out-of-the-box roles that should be sufficient for the majority of customers.

References

[1] https://docs.microsoft.com/en-us/azure/role-based-access-control/overview

[2] https://azure.microsoft.com/en-gb/services/machine-learning/

[3] https://docs.microsoft.com/en-us/azure/machine-learning/concept-enterprise-security

[4] https://docs.microsoft.com/en-us/azure/role-based-access-control/custom-roles


co-author: @Nishank Gupta
