by Contributed | Mar 11, 2021 | Technology

This article is contributed. See the original author and article here.

(guest post from Amrit Shandilya from the Microsoft File System Minifilter Team)

Hello! We are excited to announce that Plugfest 33 has been scheduled!

In light of global health concerns due to the novel coronavirus (COVID-19), Plugfest is going virtual for the first time ever! The health and well-being of our employees, partners, customers, and other guests remain our ultimate priority. In this Virtual Plugfest, VMs hosted on Azure will take the place of physical hardware.

Below you will find details, useful resources, and a registration link. If you are interested in attending this online event, please review the information below and register for the event by March 23, 2021.

What is Plugfest?

The goal of Plugfest is to help you prepare your file system minifilter, network filter, or boot encryption driver for the next version of Windows by performing interoperability testing with other products.

When is Plugfest 33?

Plugfest 33 will take place in batches between Monday, April 12, 2021 and Monday, April 26, 2021.

Who are we looking for?

Independent Software Vendors (ISVs) and developers writing file system minifilter drivers and/or network filter drivers for Windows.

How much does it cost?

FREE – There is no cost to attend this event.

Why should I go?

Here are four major benefits of Plugfest:

- The opportunity to test products extensively for interoperability with other vendors’ products and with Microsoft products. This has traditionally been a great way to understand interoperability scenarios and flush out any interoperability-related bugs.

- Exclusive talks and informative sessions organized by the File System Minifilter team on topics that affect the filter driver community.

- The opportunity to meet with the file systems team, the network team, the cluster team, and various other teams at Microsoft and get answers to your unanswered technical questions.

- Early exposure to new hardware innovations that affect filter functionality.

How do I register?

To register, please complete the Registration Form at your earliest convenience (registration closes March 23, 2021). PLEASE NOTE: We will confirm your registration via email. Confirmation emails will be sent out after registration closes.

You can learn more about File System Minifilters and Network Filters in the given links.

If you have any additional questions, please email us at amshandi@microsoft.com (Amrit Shandilya).

– Amrit & The Microsoft File System Minifilter Team

by Contributed | Mar 11, 2021 | Technology

With Power Apps, we can rapidly build custom business applications that connect to our business data in a low-code manner. This means that not only professional developers but many more people can build apps that fit their specific use cases.

But because development is more than writing code, there are certainly some things we should do before we hit make.powerapps.com. Many developers will agree that development is 20% about writing code and 80% about communication (meetings, gathering requirements, adjusting things).

When we introduce ‘low code development’, we address those 20%, not the 80%.

The problem is that the ‘everyone should make apps’ narrative glosses over this. There is plenty of content out there that teaches business users how to use controls, components, and connectors, and the community is there to ask when in doubt about which function to use. But there is close to zero guidance on making better decisions about how we should approach building apps. Regardless of who develops it, every app needs to be documented, maintained, and supported. Pro developers are very aware of this, as DevOps is what they live and breathe every single day. But apart from IT departments, who will need to care about governance, application lifecycle management, etc., there are some things on the business user side that we should consider.

This post lists ten things that we should think about before we start building our apps.

1- which problem does the app solve?

It sounds obvious, but we should first do a proper analysis of the value proposition: is the app we have in mind a must-have solution to a specific business case, or is it more or less ‘yet another thing to use’ when working alternatives are already in place?

understand your market

Let us take some time to segment our market and understand who (even if we build for our colleagues) needs our app. Some roles will rely on our app, and it will become mission-critical to them. Others will already have found applications that do similar things, or the app won’t solve a problem that is relevant to them. We can specifically look at underserved groups, such as blue-collar workers, and see if we can address their needs. Becoming obsessed with solving specific needs, without relying on assumptions, is critical here. This means that we will need to validate the market that we have in mind. Even if we consider ourselves business users, power users, or citizen developers, that doesn’t necessarily mean that we are our own users or know precisely what they need.

psychological strain vs. energy of change

To make users love an app, we will need to solve their pains or address their needs and make it not too hard for them to change their behavior. If the energy they will need to change their behavior is higher than the psychological strain they face right now, they will not adopt your app. It’s unfortunately as simple as that.

2- calculate value

By now, you have a fair idea of who will use your app and for which use cases, and you know what users are doing today:

- abuse other tools

- work on paper

- purchase 3rd party tools

- use unapproved shadow IT tools

and what this leads to:

- be busy with tasks that don’t add value

- lose information

- cause additional costs

- create severe risks in terms of data security, governance, compliance, etc.

tl;dr: it costs time & money

But we need to make the effort to calculate these costs in terms of money and time and estimate them for the next 12 or 24 months. This will also help with any approval process or with getting funding.
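To make the 12- or 24-month estimate concrete, here is a minimal sketch. All inputs (number of users, hours lost, hourly rate, extra monthly costs) are hypothetical placeholders to be replaced with figures from your own analysis, and the model deliberately ignores one-off costs and risk, which still need to be assessed separately.

```python
from dataclasses import dataclass


@dataclass
class StatusQuo:
    """Hypothetical figures describing how users work today."""

    users: int                        # people affected by the current process
    hours_lost_per_user_month: float  # time spent on tasks that add no value
    hourly_rate: float                # fully loaded cost of one working hour
    extra_costs_per_month: float      # e.g. third-party tools, printing, storage


def status_quo_cost(s: StatusQuo, months: int) -> float:
    """Estimate what the current way of working costs over a planning horizon."""
    wasted_labor = s.users * s.hours_lost_per_user_month * s.hourly_rate
    return (wasted_labor + s.extra_costs_per_month) * months


if __name__ == "__main__":
    today = StatusQuo(users=40, hours_lost_per_user_month=3,
                      hourly_rate=50, extra_costs_per_month=200)
    for horizon in (12, 24):
        print(f"{horizon} months: ${status_quo_cost(today, horizon):,.0f}")
```

With these illustrative numbers, the status quo costs $74,400 over 12 months and $148,800 over 24 months. That is the figure a new app has to beat.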

If the app we have in mind doesn’t create (enough) value, we can take this as a learning opportunity to better meet the needs of our (internal) customers.

The goal is to provide more value, not to deliver a poorly designed app that costs a little less. Of course, we can build apps ‘for fun’ or because we want to learn, or ‘just because we can’, but we should carefully distinguish those apps from apps that we want to pitch and ‘sell internally’.

3- scope

The mother of all questions: Does it scale? Will our app be something for our personal productivity? Will it ease the workload for a small team? Or are we talking about a mission-critical process? And even if we start with a small group of users to try it out, could a broader audience want to use it later? We need to carefully identify our app’s scope, as this will impact many decisions.

4- data model

Let’s talk about our data model. Which data sources will we need to read data from, and which services will we write data to? Which dependencies do we have, and which other apps, flows, and bots will be part of the solution? Of course, the consultant’s answer on when to use what will always be ‘it depends’, but there are probably more reasons to look into different data sources than you are aware of:

licensing

As this is a pretty emotionally driven subject, we should approach it with a cool head. First understanding which needs your app solves, who would benefit from it, and how much money and time all users together would save by using it is the counterpart to the licensing discussion. Of course, organizations tend not to want to pay for additional licenses, but the idea that one could deliver excellent business value without any costs is somewhat romanticized. Incorporate licensing fees for premium connectors (if you need them) in your calculation, and if the app still delivers more value than it costs, we will probably get approval and a green light for it.

If the app isn’t worth more than ~$10 per user per month, we should probably not be building it.
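The rule of thumb above can be written as a simple break-even check. This is a sketch: the ~$10 fee comes from the text, while the hours saved and the hourly rate are hypothetical inputs, and any premium connector fees are assumed to be folded into the per-user license fee.

```python
def net_value_per_user(hours_saved_per_month: float,
                       hourly_rate: float,
                       license_fee_per_month: float = 10.0) -> float:
    """Monthly value one user gains from the app, minus what their license costs."""
    return hours_saved_per_month * hourly_rate - license_fee_per_month


def worth_building(hours_saved_per_month: float,
                   hourly_rate: float,
                   license_fee_per_month: float = 10.0) -> bool:
    """True if the app more than pays for its license for a typical user."""
    return net_value_per_user(hours_saved_per_month, hourly_rate,
                              license_fee_per_month) > 0


if __name__ == "__main__":
    # Saving 30 minutes a month at $50/hour is worth $25, which clears a $10 fee.
    print(worth_building(hours_saved_per_month=0.5, hourly_rate=50))
    # Saving 6 minutes a month is worth only about $5, which does not cover the fee.
    print(worth_building(hours_saved_per_month=0.1, hourly_rate=50))
```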

performance

The data sources we choose impact not only the licensing model but also our app’s performance. For instance, if data needs to travel through an on-premises data gateway to a SQL server, our app will most probably not be as fast as if the data sat in Dataverse. For a detailed comparison of this and other data sources such as Excel, SharePoint, and more, please read this article about Considerations for optimized performance in Power Apps.

developer and user experience

The data source also affects the experience you as a developer will have while building the app. If you ever ‘loved’ dealing with, for example, lookup columns from a SharePoint list, you will agree that you didn’t choose the easiest way to build an app. If you need to find workarounds as a developer, this will impact your users, and their experience won’t be as good as it could be.

5- delivery

Let’s say we are about to hit make.powerapps.com, and we have a fair idea of what to do there to make an app. We will publish our app, share it, and mentally move on to something different. Now, who takes care of that app? Who will maintain it? Things can easily change when we create apps on top of cloud services. This can quickly become a workload that should not be underestimated, and we as business users probably won’t have the capacity to cover it. And even setting aside adjustments to things that have changed: Who will support our app? Who will answer questions? Who will implement new features?

Delivery of software should contain code (and yes, this applies to Power Apps) and proper documentation. It is essential to share knowledge about our app (what we use, inputs, outputs, dependencies, licensing, data model, accessibility, features, etc.) very early by writing it down, so that those who need to maintain and support our solution are able to do so. Making it a habit to keep a changelog, where we document which features we add, remove, or change, is crucial. If we think that this is too time-consuming, we should be aware that someone else will need to spend even more time compensating for our lack of documentation.

A lack of documentation will create technical debt

6- what is your minimal lovable product?

Yes, I mean it! Define your minimal lovable product. If you have only heard about a minimum viable product so far, please read this article How to Build a Minimum Loveable Product. In essence: which features will we need to make users fall in love with our app? We will build those. We will not fall into the rabbit hole of delivering the whole software in one piece but focus on the crucial parts. Once we have published our first version, we gather feedback on how we can improve it. Becoming comfortable with the mindset that software is never finished can be a good idea.

7- mock-up your app

Finally, let’s talk frontend: what should our app look like? It’s super tempting to start building screens and buttons and deciding on colors, but we will get a better impression of the big picture of our app if we first do a mock-up. We may choose to use a professional tool for that or simply draw something (I personally do this in OneNote). Defining which screens, buttons, forms, and galleries you will need will make the next steps easier.

8- componentize your app

Reinventing the wheel is always painful, and to avoid this, we can think about components in our apps so that we reuse what we (or even others in this environment, via the component library) have built before. While controls are an excellent way to start learning and understanding how everything works, components are the way to accelerate our process of making apps, make sure that we can easily adjust them, and avoid starting over for the next app we make. They are also the low-code equivalent of the principle ‘Don’t repeat yourself’. Thinking upfront about which controls we would use repeatedly and planning to componentize them will ensure that we don’t need to start all over again. You can watch April Dunnam’s video about componentizing Power Apps to get up to speed.

9- accessibility

Accessibility is not something that comes on top of a ready-to-publish app; it should be one of your core concepts straight from the beginning. The accessibility checker in Power Apps is a good start, but it’s always worth exploring more ideas. We can find lots of guidance on ensuring that more people can benefit from our apps; Microsoft Inclusive Design is an excellent go-to resource.

10- build in Microsoft Teams

As we increasingly work in Microsoft Teams, we should build applications not only for teams but for (Microsoft) Teams! Considering the context of apps gives you even more ways to satisfy user needs. Coming back to the scope of our app, we need to think carefully about whether this app is just for our team or whether we want to make it available to the whole organization. Staying in Teams also has an impact on licensing, as you can, for instance, use Dataverse for Teams, which is then seeded in your Microsoft 365 license.

Conclusion

As we have seen, there are quite a few things to do and think about before we hit make.powerapps.com, and I put these together as an approach to good practices. What do you do before you actually start developing? What would you add to my list?

Please comment below!

by Contributed | Mar 11, 2021 | Technology

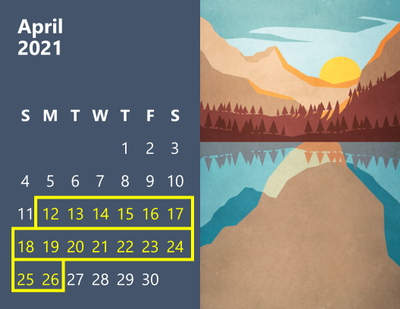

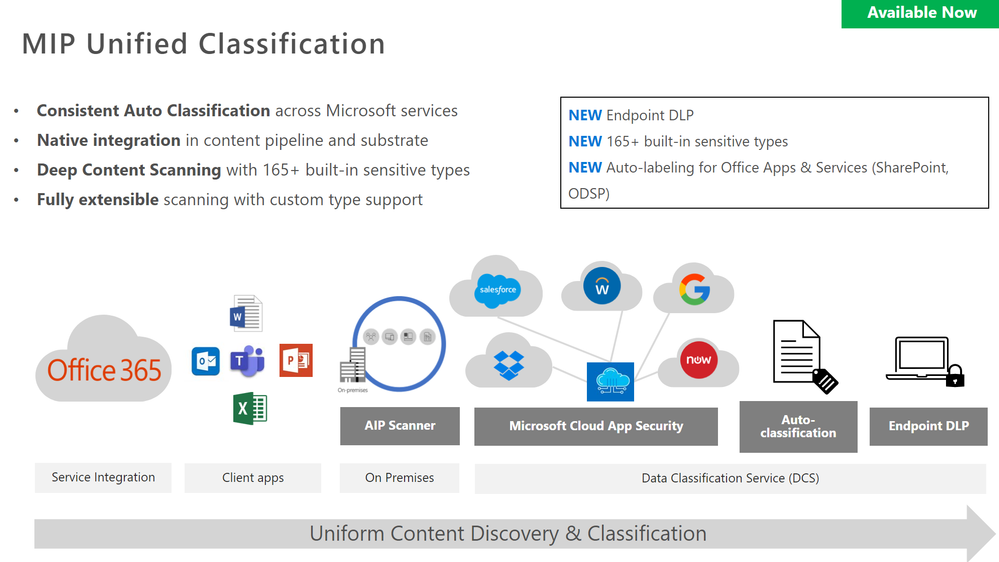

The Remote Workers DLP webinar provided an overview of Unified DLP, how to set up Teams DLP, the end user experience, securing Teams content with container labels, and securing Teams guest access.

Resources:

This webinar was presented on January 26, 2021, and the recording can be found here.

Attached to this post are:

- The FAQ document that summarizes the questions and answers that came up over the course of both webinars.

- A PDF copy of the presentation.

Thanks to those of you who participated in the two sessions, and if you haven’t already, don’t forget to check out our resources available on the Tech Community.

Thanks!

@Robin_Baldwin on behalf of the MIP and Compliance CXE team

by Contributed | Mar 11, 2021 | Technology

Let’s give GlobalCon a collective high-5 and make it GlobalCon5. Hey, don’t leave me hangin’! I’m pleased to be joining in the fun along with a wonderful lineup of speakers and depth of content.

Yes, the Collab 365 team is at it again. I don’t think they ever stopped. They have been paving the way forward for virtual events for some time, and this go-around won’t disappoint. They’re planning great, unique training, presented by world-class trainers, with new content across three days. It’s easy to plug in no matter where you live, with engaging Q&A throughout and plenty to take with you and learn at your own pace.

GlobalCon5: “I feel the need, the need for speed!” (that’s the kind of high-5 I’m talkin’ about) ;)

GlobalCon5 – March 16-18, 2021 (online training)

Microsoft 365 is big and changes often – the GC5 team could run a conference every week! Each session brings a fresh new perspective. You’ll learn the latest to keep your skills fresh. GlobalCon5 covers Teams, Power Platform, SharePoint, and everything else stacked into Microsoft 365.

Below is a quick view of the sessions by day – including my kickoff session:

- Day 1 sessions | March 16th

- Day 2 sessions | March 17th

- Day 3 workshops | March 18th

Shout out to community “high-5’ers” Helen Jones, Mark Jones, and the #GlobalCon5 crew who are navigating this conference by day and night, supporting, and promoting the knowledge and expertise that reaffirms this: Microsoft 365 has the best tech community in the world – one that spans the globe.

See you there, Mark

by Contributed | Mar 11, 2021 | Technology

Double Key Encryption (DKE) enables customers to protect their most confidential content using a key they control, thereby allowing them to comply with regulatory requirements. DKE ensures that Microsoft cannot access their data under any circumstances.

Most customers implementing DKE are trying to limit access to their most sensitive content to users of their own tenant. But some customers asked how DKE can also be used for B2B scenarios. This blog shows the additional steps for allowing Contoso to share DKE protected content with Fabrikam users.

Please observe that this blog post does not replace the official documentation for implementing DKE, it merely describes the additional steps required.

Prerequisites

This section defines the technical prerequisites.

- The URL of the DKE service https://dke.contoso.com needs to be accessible both for Contoso and Fabrikam users.

- The DKE URL needs to be based on a DNS domain registered in the Azure AD tenant of your organisation. For instance, if you plan to use the URL https://dke.contoso.com for the DKE service, the DNS domain contoso.com needs to be registered in your tenant. Please refer to our documentation for registering a custom domain.

Overview on the steps

Making DKE available for users of the Fabrikam tenant requires several steps:

- Adapt the app registration to allow «Multitenant» authentication, if that’s not already the case.

- Trust the Fabrikam Azure AD tenant as a valid token issuer and add the email addresses of the Fabrikam users to the configuration file.

- Grant permissions to the Fabrikam users in the sensitivity label protection settings.

- Have a Fabrikam user access a DKE protected document as a first step toward granting consent.

- Ask the Fabrikam Global Admin to grant consent for accessing the DKE service on behalf of all Fabrikam users.

Details on these steps are provided in the following sections.

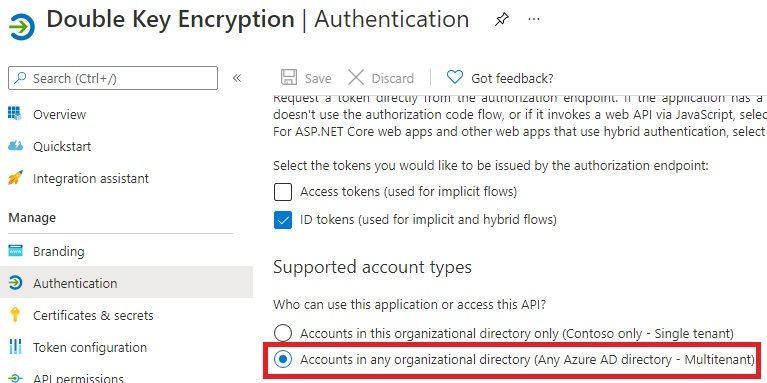

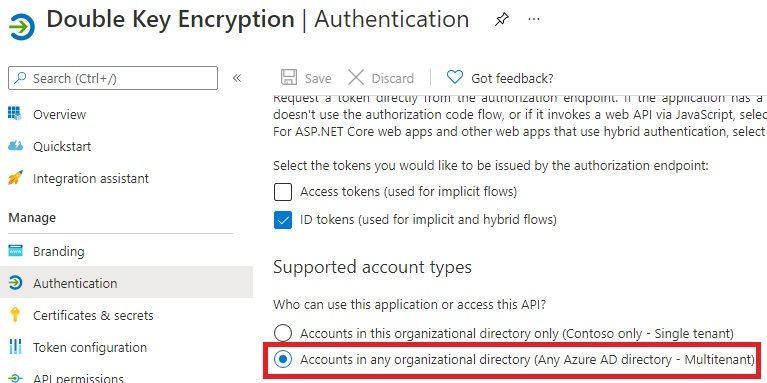

Make sure the app registration for DKE supports «Multitenant» authentication

If a DKE service were meant for users of your tenant exclusively, its app registration authentication may be limited to «single tenant».

But since the DKE content needs to be accessible to users from the Fabrikam tenant, you have to select the option «Accounts in any organizational directory (Any Azure AD directory – Multitenant)», as shown here:

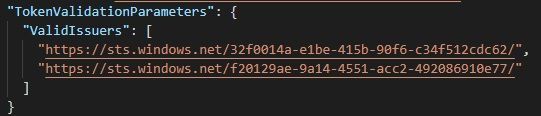

Changes required on the configuration file

You need to ensure both the home tenant and all tenants of your business partners are contained in the configuration file.

The following configuration file excerpt shows that both Contoso and Fabrikam tenants are trusted:

Email addresses of the Fabrikam users also need to be included in the configuration file. The following excerpt from the configuration file shows how Adele Wilber from Fabrikam is also allowed to access the DKE service:

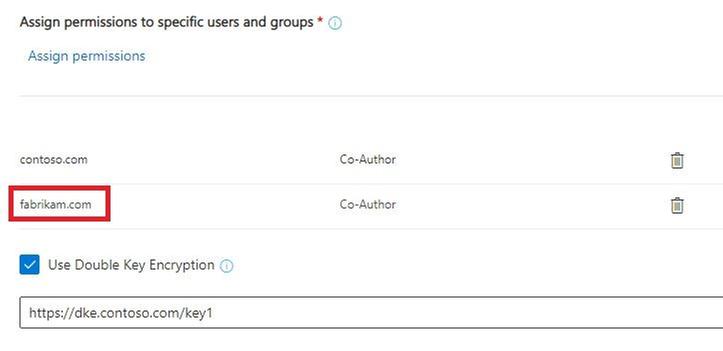
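As a hedged illustration of the decision these configuration entries drive, the Python sketch below checks two claims of an already-validated access token: the issuer (`iss`) must belong to a trusted tenant, and the user’s address must be on the allow-list. This is not the DKE service’s actual code. The tenant IDs and email addresses are hypothetical placeholders, the `https://sts.windows.net/{tenant-id}/` issuer format is that of Azure AD v1.0 tokens, and signature, audience, and lifetime validation are assumed to have happened earlier in a proper JWT library.

```python
from typing import AbstractSet, Mapping

# Hypothetical tenant IDs: replace with the directory (tenant) IDs of Contoso and Fabrikam.
CONTOSO_TENANT_ID = "00000000-0000-0000-0000-0000000000c0"
FABRIKAM_TENANT_ID = "00000000-0000-0000-0000-0000000000fa"

# Both the home tenant and the partner tenant are trusted as token issuers.
VALID_ISSUERS = frozenset({
    f"https://sts.windows.net/{CONTOSO_TENANT_ID}/",
    f"https://sts.windows.net/{FABRIKAM_TENANT_ID}/",
})

# Hypothetical allow-list that includes a Fabrikam user alongside a Contoso user.
AUTHORIZED_EMAILS = frozenset({"user1@contoso.com", "user1@fabrikam.com"})


def is_authorized(claims: Mapping[str, str],
                  valid_issuers: AbstractSet[str] = VALID_ISSUERS,
                  authorized_emails: AbstractSet[str] = AUTHORIZED_EMAILS) -> bool:
    """Return True if the token came from a trusted tenant and names an allowed user.

    Assumes the token's signature, audience, and lifetime were already validated.
    """
    issuer = claims.get("iss", "")
    # Azure AD v1.0 tokens carry the sign-in name in "upn"; fall back to "email".
    address = (claims.get("upn") or claims.get("email") or "").lower()
    return issuer in valid_issuers and address in authorized_emails
```

A token issued by a third tenant, such as Woodgrove Bank’s, fails the issuer check until that tenant is added, which matches the need to repeat these steps for every additional partner.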

Make sure the sensitivity label grants permission to Fabrikam users

Fabrikam users may only access content from your tenant if the respective label grants them access; this applies to DKE labels as well.

Here, all users from both contoso.com and fabrikam.com may access data protected by the DKE label:

Initial steps for granting consent for users of the Fabrikam tenant

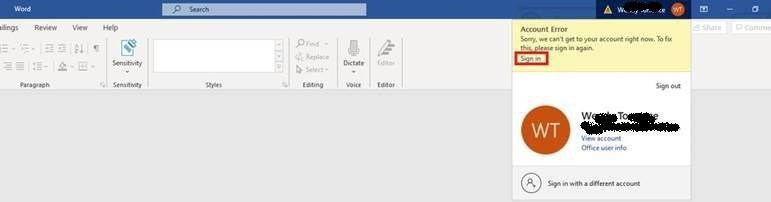

To initiate granting consent for Fabrikam users to the DKE service, a user of the Fabrikam tenant with normal privileges first needs to open a DKE protected document from Contoso.

This initial attempt is expected to fail: the user will see an exclamation mark beside the account in the title bar, indicating there’s an issue with the account. (Please observe that Contoso users opening content protected by their own DKE service do not get this experience.)

The user performs the following steps:

1. Click on the account in the title bar:

2. Select «Sign in» and re-authenticate as needed:

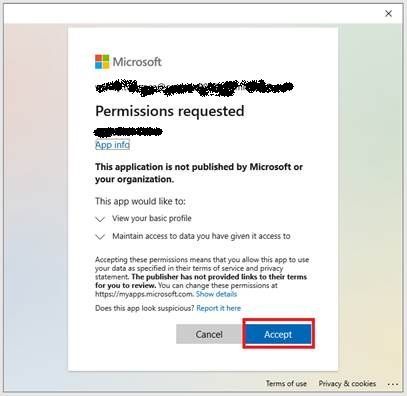

3. Accept requested permissions:

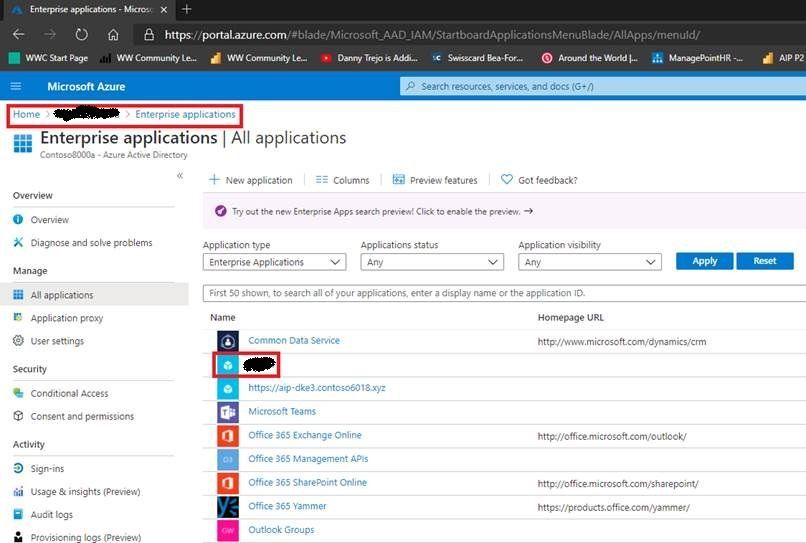

Global Admin of Fabrikam tenant grants consent for all tenant users

The following steps need to be performed by the Global Admin of the Fabrikam tenant in order to grant consent on behalf of all users in the tenant:

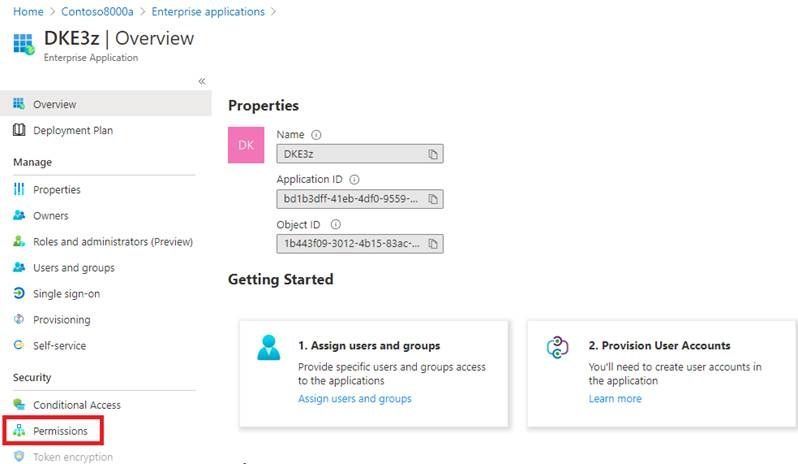

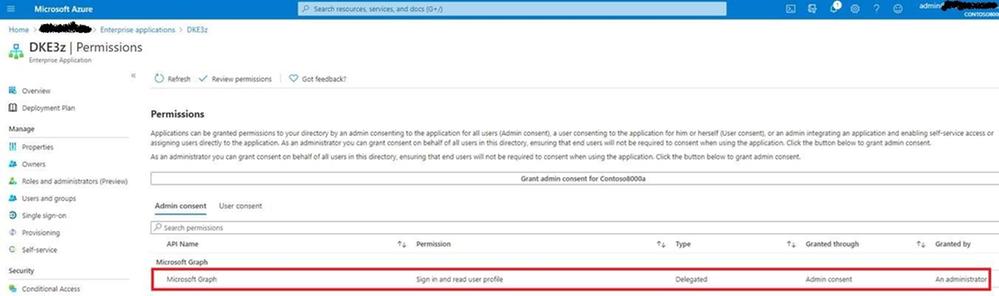

1. Sign in to the Azure portal, open “Azure Active Directory” and select “Enterprise applications”.

2. Select the Contoso DKE app:

3. Select «Permissions»:

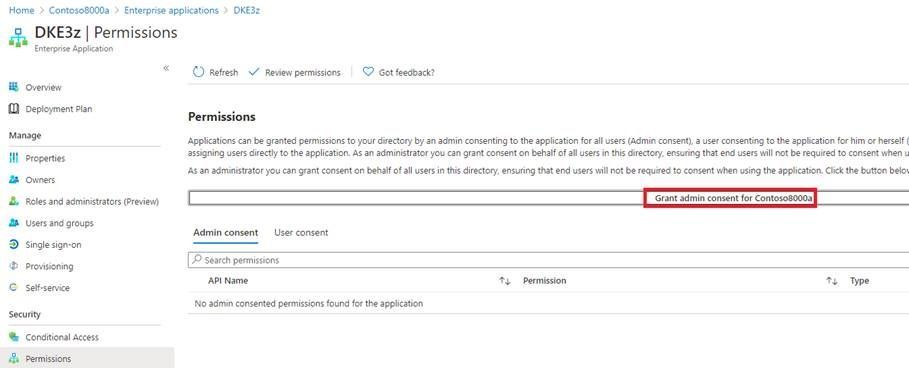

4. Select «Grant admin consent for Fabrikam»:

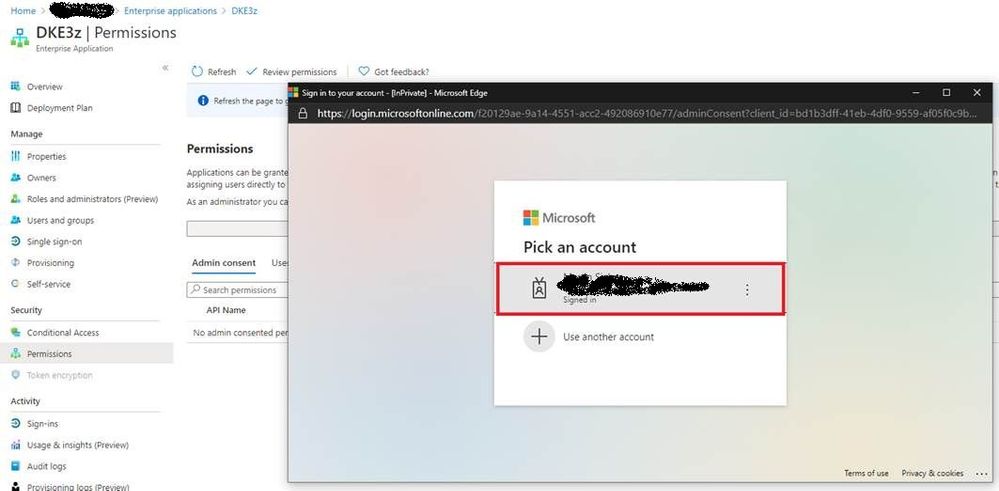

5. Re-authenticate as needed:

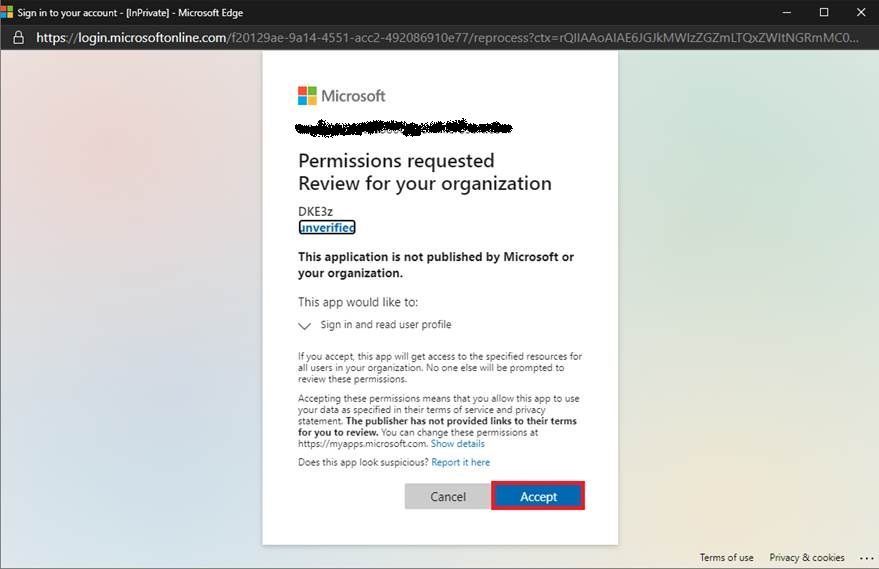

6. Accept permissions:

7. Refresh and verify the permissions are available:
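As an alternative to clicking through the portal, the Microsoft identity platform also documents a tenant-wide admin consent endpoint of the form `https://login.microsoftonline.com/{tenant}/adminconsent?client_id={client-id}`, which a Fabrikam Global Admin can open in a browser. The sketch below only builds that URL. The client ID shown is a hypothetical placeholder for the application (client) ID of the Contoso DKE app registration, and the portal steps above remain the route this post describes.

```python
from urllib.parse import quote, urlencode


def admin_consent_url(tenant: str, client_id: str) -> str:
    """Build the tenant-wide admin consent URL for a multitenant app registration.

    tenant: the consenting tenant's domain (e.g. "fabrikam.com") or tenant ID.
    client_id: the application (client) ID of the app being consented to.
    """
    query = urlencode({"client_id": client_id})
    return f"https://login.microsoftonline.com/{quote(tenant, safe='')}/adminconsent?{query}"


if __name__ == "__main__":
    # Hypothetical client ID standing in for the Contoso DKE app registration.
    print(admin_consent_url("fabrikam.com", "11111111-2222-3333-4444-555555555555"))
```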

Conclusion and next steps

After performing these steps, both Contoso and Fabrikam users may open content protected by the Contoso DKE service.

Please observe that Fabrikam users may not protect new content with the Contoso DKE service; they need to implement a DKE service of their own instead. If they intend to share DKE protected content with users from the Contoso tenant, they also need to go through the steps in this blog post.

If Contoso decides to share content with Woodgrove Bank as well, the steps described in this blog post need to be repeated with their tenant.

by Contributed | Mar 11, 2021 | Technology

In addition to my role as a Principal Cloud Advocate and Lead for IoT Advocacy at Microsoft, I act as a professor at the University of Houston, where I teach a course focused on Cloud-Powered App Development. As part of this course, we focus on AI @ the Edge scenarios backed by Microsoft Azure IoT Services. To teach these concepts, we have chosen to target the very affordable ($60 USD) NVIDIA Jetson Nano DevKit. This experience is catalogued in depth in a previous article on the Microsoft Educator Developer Blog.

We find that students’ most requested point of customization for AI @ Edge solutions is the AI models themselves. This can pose issues, as there are various formats for developing computer vision based models, including PyTorch, MXNet, Caffe, and TensorFlow. Oftentimes, the tooling to develop these formats results in vendor lock-in, meaning that future applications of your model may be limited by the tooling and runtime compatibility available for building and executing the model in question.

The Open Neural Network Exchange (ONNX) format is a model standard for exchanging deep learning models across platforms. Its portability across frameworks and even computer architectures makes it a prime candidate for AI model development without limitations. It can even adapt to the presence of, say, GPU acceleration on a given computational platform to enhance your model at runtime, without any need to redevelop your model to take advantage of those optimizations. Simply put, if you start with ONNX, you can go anywhere and optimize without any extra effort.
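In practice, this runtime adaptation is handled by ONNX Runtime through execution providers. The Python sketch below picks the best providers that the installed build reports via `onnxruntime.get_available_providers()` and passes them to `onnxruntime.InferenceSession`. The preference order (TensorRT, then CUDA, then CPU) and the `model.onnx` path are assumptions, and which providers exist on a given Jetson image depends on the onnxruntime build installed there.

```python
from typing import List, Sequence

# Assumed preference order: GPU-accelerated providers first, CPU as the universal fallback.
PREFERRED_PROVIDERS = (
    "TensorrtExecutionProvider",
    "CUDAExecutionProvider",
    "CPUExecutionProvider",
)


def choose_providers(available: Sequence[str],
                     preferred: Sequence[str] = PREFERRED_PROVIDERS) -> List[str]:
    """Keep the preferred providers this build supports, always ending with the CPU provider."""
    chosen = [p for p in preferred if p in available]
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen


def load_session(model_path: str = "model.onnx"):
    """Create an inference session that uses the GPU when the installed build allows it."""
    import onnxruntime as ort  # requires the onnxruntime package (or a GPU-enabled build)

    providers = choose_providers(ort.get_available_providers())
    return ort.InferenceSession(model_path, providers=providers)
```

The same `model.onnx` file then runs unchanged on a laptop CPU or on the Jetson’s GPU; only the provider list differs. Calling `get_providers()` on the returned session shows which providers were actually enabled.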

Combining this fact with our target NVIDIA Jetson hardware, we can develop course content rooted in the development of ONNX based AI models to provide an open platform for students to build and experiment on, with the added benefit of GPU accelerated inference on low-cost embedded hardware. This allows the students to apply concepts on a device that they can physically alter with the addition of cameras, microphones, or other sensors to aid in their solutioning.

As a basis for a formal understanding of how ONNX works with NVIDIA GPUs, we recommend starting with Manash Goswami’s presentation on the topic at the recent NVIDIA GTC 2020 conference (note: viewing this resource requires completing a free registration for NVIDIA’s Developer Program Membership).

After you have familiarized yourself with the fundamentals, we recommend the following resources as hands-on labs or supplemental material for applying the concepts to run ONNX models on NVIDIA Jetson hardware for development of AI @ Edge solutions:

With these resources, we hope that you can teach AI @ Edge scenarios without fear of encountering the limitations inherent in less adaptable model formats. ONNX based model development can ensure that your models are portable across architectures and adaptable to the existence of accelerators on compatible hardware. For more information on the ONNX model format, be sure to check out https://www.onnxruntime.ai/ and for details on how to acquire NVIDIA Jetson embedded devices, check out this link.

Until next time,

Paul

by Contributed | Mar 11, 2021 | Technology

Overview

In 2021, we will be releasing a blog each month covering the webinar of the month for the Low-code application development (LCAD) on Azure solution. LCAD on Azure is a new solution to demonstrate the robust development capabilities of integrating low-code Microsoft Power Apps and the Azure products you may be familiar with.

This month’s webinar is ‘Deliver Java Apps Quickly using Custom Connectors in Power Apps’. In this blog, I will briefly recap Low-code application development on Azure, how the app was built with Java on Azure, app deployment, and building the app’s front end and UI with Power Apps.

What is Low-code application development on Azure?

Low-code application development (LCAD) on Azure was created to help developers build business applications faster with less code, leveraging the Power Platform, and more specifically Power Apps, yet helping them scale and extend their Power Apps with Azure services.

For example, a pro developer who works for a manufacturing company might need to build a line-of-business (LOB) application to help warehouse employees track incoming inventory. That application would take months to build, test, and deploy; with Power Apps, it can take hours to build, saving time and resources.

However, say the warehouse employees want the application to automatically place procurement orders for additional inventory when current inventory hits a defined low. In the past, that would have required another heavy lift by the development team to rework the previous iteration of the application. Thanks to the integration of Power Apps and Azure, a professional developer can build an API in Visual Studio (VS) Code, publish it to their Azure portal, and export the API to Power Apps, integrating it into their application as a custom connector. Afterwards, that same API is reusable indefinitely in Power Apps Studio for other applications, saving the company and developers more time and resources. To learn more, visit the LCAD on Azure page, and to walk through the aforementioned scenario, try the LCAD on Azure guided tour.

Java on Azure Code

In this webinar, the sample application is a Spring Boot application (a Spring application on Azure) that is generated using JHipster and deployed with Azure App Service. The app’s purpose is to catalog products, product descriptions, ratings, and image links in a monolithic app. To learn how to build serverless Power Apps, please refer to last month’s Serverless Low-code application development on Azure blog for details. Sandra uses H2SQL during development of the API and MySQL in production. She then adds descriptions, ratings, and image links to the API in JDL Studio. Lastly, she commits the API to her GitHub repository before deploying to Azure App Service.

Deploying the Sample App

Sandra leverages the Maven plug-in in JHipster to deploy the app to Azure App Service. After she provides an Azure resource group name, and because she chose ‘split and deploy’ in GitHub Actions, she only has to deploy manually once; any new Git push from her master branch is then deployed automatically. Once the app is successfully deployed, it is available at myhispter.azurewebsites.net/V2APIdocs, where she copies the Swagger API definition into a JSON file, which will be imported into Power Apps as a custom connector.
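Before importing the copied Swagger JSON as a custom connector, it can help to check which operations it will expose. The sketch below lists every operation in a Swagger 2.0 (OpenAPI 2.0) document and flags those without an `operationId`. We assume here that the connector’s actions are identified by `operationId`, so this is worth confirming against the custom connector documentation. The sample paths and IDs are hypothetical, not the ones JHipster generated in the webinar.

```python
import json
from typing import Any, Dict, List, Tuple

# HTTP methods allowed as keys of a Swagger 2.0 path item; other keys (e.g. "parameters") are skipped.
HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch"}


def list_operations(spec: Dict[str, Any]) -> List[Tuple[str, str, str]]:
    """Return (METHOD, path, operationId) per operation; operationId is '' when missing."""
    operations = []
    for path, item in spec.get("paths", {}).items():
        for method, operation in item.items():
            if method.lower() in HTTP_METHODS:
                operations.append((method.upper(), path, operation.get("operationId", "")))
    return operations


def missing_operation_ids(spec: Dict[str, Any]) -> List[str]:
    """Describe operations that have no operationId, e.g. 'POST /api/products'."""
    return [f"{method} {path}" for method, path, op_id in list_operations(spec) if not op_id]


if __name__ == "__main__":
    # Hypothetical excerpt of a copied Swagger file.
    sample = json.loads("""
    {"swagger": "2.0",
     "paths": {"/api/products": {"get": {"operationId": "getAllProducts"}, "post": {}},
               "/api/products/{id}": {"parameters": [], "get": {"operationId": "getProduct"}}}}
    """)
    for op in list_operations(sample):
        print(op)
    print("Missing operationId:", missing_operation_ids(sample))
```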

Front-end Development

The goal of the front-end development is to build a user interface that end users will be satisfied with. To do so, the JSON must be brought into Power Apps as a custom connector so end users can access the API. The first step is to import the OpenAPI definition into Power Apps; note that much of this process has been streamlined by the tight integration of Azure API Management with Power Apps. To learn more about this tighter integration, watch a demo on integrating APIs via API Management into Power Apps.

After importing the API, you must create a custom connector and connect it with the OpenAPI definition the backend developer built. After creating the custom connector, Dawid uses the Power Apps formula language to collect data into a collection and then displays the collected data in a gallery. Dawid then shows the data in a finalized application and walks you through the process of sharing the app with a colleague or making them a co-owner. Lastly, once the app is shared, Dawid walks you through testing the app and soliciting user feedback via the app.

Conclusion

To conclude, professional developers can rapidly build the back and front ends of an application using Java, or any other programming language, together with Power Apps. Fusion development teams, made up of professional developers and citizen developers, can collaborate on apps together, reducing much of the lift for professional developers. Please watch the webinar and complete the survey so we can improve these blogs and webinars in the future.

Resources

Webinar

Low-code application development on Azure

Java on Azure resources

Power Apps resources

by Contributed | Mar 11, 2021 | Technology

Azure Data Factory now enables the Azure Database for MySQL connector in Data Flow, so you can build powerful ETL processes.

With Azure Database for MySQL as a source in data flows, you can pull data from a table or via a custom query, then apply data transformations or join it with other data. When using Azure Database for MySQL as a sink, you can perform inserts, updates, deletes, and upserts to publish the transformed result set for downstream consumption.
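In the data flow itself, upserts are configured in the designer (typically an Alter Row transformation plus key columns on the sink) rather than written as code. To illustrate only the semantics, the sketch below uses Python’s built-in `sqlite3` module as a stand-in for MySQL: rows whose key already exists are updated, and new keys are inserted. The `product` table is hypothetical, SQLite’s `ON CONFLICT ... DO UPDATE` clause requires SQLite 3.24 or later, and in MySQL the equivalent statement would use `INSERT ... ON DUPLICATE KEY UPDATE`.

```python
import sqlite3
from typing import Iterable, Tuple


def upsert_products(conn: sqlite3.Connection, rows: Iterable[Tuple[int, str, int]]) -> None:
    """Insert new products and update existing ones, matching on the primary key."""
    conn.executemany(
        "INSERT INTO product (id, name, rating) VALUES (?, ?, ?) "
        "ON CONFLICT(id) DO UPDATE SET name = excluded.name, rating = excluded.rating",
        rows,
    )
    conn.commit()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE product (id INTEGER PRIMARY KEY, name TEXT, rating INTEGER)")
    upsert_products(conn, [(1, "Lamp", 4), (2, "Desk", 5)])   # both rows are inserted
    upsert_products(conn, [(1, "Lamp", 5), (3, "Chair", 3)])  # row 1 is updated, row 3 is inserted
    print(conn.execute("SELECT id, name, rating FROM product ORDER BY id").fetchall())
```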

You can point to Azure Database for MySQL data using either a dataset or inline mode in data flow.

Learn more in the Azure Database for MySQL connector documentation.

by Contributed | Mar 11, 2021 | Technology

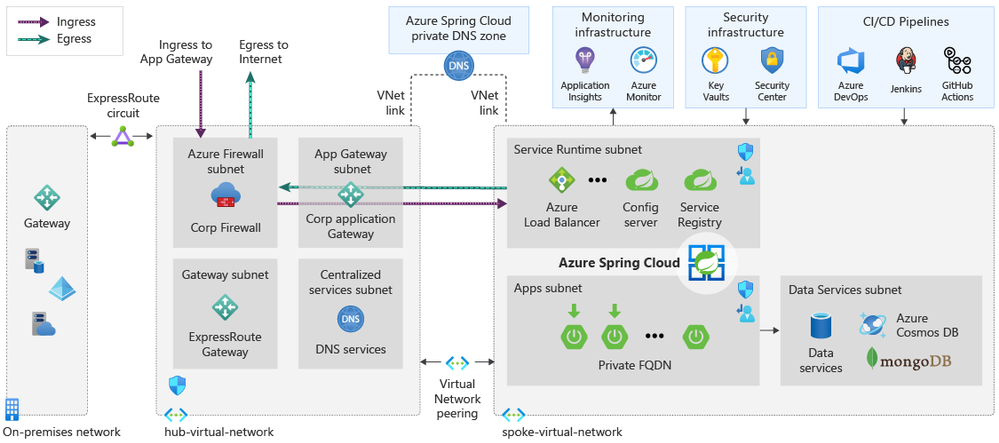

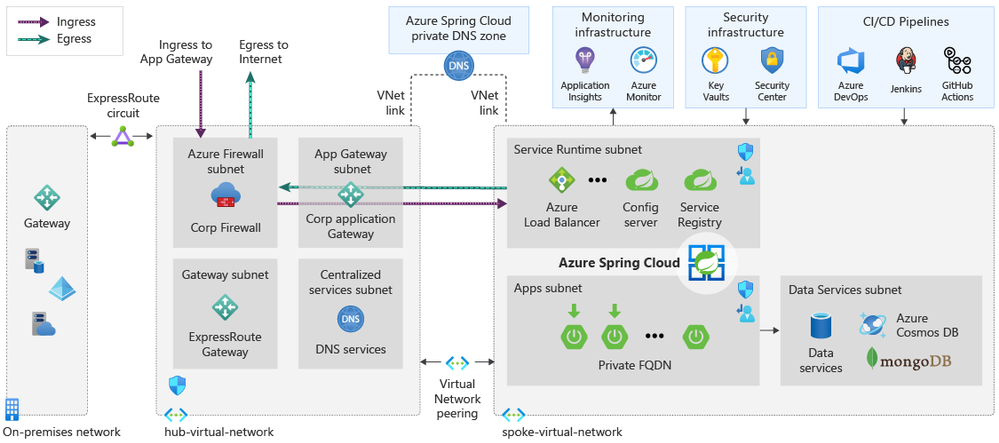

Today, we’re excited to announce the availability of the Azure Spring Cloud Reference Architecture. You can get started by deploying the Azure Spring Cloud Reference Architecture to accelerate and secure Spring Boot applications in the cloud at scale using validated best practices.

Over the past year, we worked with many enterprise customers to learn about their scenarios, including their requirements for scaling, security, deployment, and cost. Many of these customers have thousands of Spring Boot applications running in on-premises data centers. As they migrate these applications to the cloud, they need battle-tested architectures that instill confidence to meet the requirements set forth by their IT departments and/or regulatory bodies. In many customer environments, they also need to show direct mappings from architectures to industry-defined security controls and benchmarks. We thank these customers for the opportunity to work with them, and for helping us to build an Azure Spring Cloud Reference Architecture. Using this reference architecture, you can deploy it, customize it to meet your specific requirements, and showcase pre-defined mappings to security controls and benchmarks.

“The availability of Azure Spring Cloud Reference architecture reduced our internal cycles of researching architecture options and Spring Cloud feature sets, which allowed us to rapidly determine how we would want to implement and scale globally.” — Devon Yost, Enterprise Architect, Digital Realty Trust

“Congratulations to you and your team for creating and providing the Azure Spring Cloud Reference Architecture free to all customers. The reference architecture is a great way for users to compare their design to how the experts at Microsoft design deployments. It is incredible for the reference architecture to include deployments using multiple technologies. We were able to compare the reference Terraform implementation and quickly understand the architecture. We have even started testing Azure DNS as highlighted in the architecture to manage our DNS using Infrastructure as Code principles.” – Armando Guzman, Principal Software Engineer, Unified Commerce, Raley’s

Ease of deploying Java applications

Azure Spring Cloud is jointly built, operated, and supported by Microsoft and VMware. It is a fully managed service for Spring Boot applications that lets you focus on building the applications that run your business without the hassle of managing infrastructure. The service incorporates Azure compute, network, and storage services in a well-architected design, reducing the number of infrastructure decisions you need to make. The Azure Spring Cloud Reference Architecture provides a deployable design that is mapped to industry security benchmarks, giving you a head start on compliance approval. The implementation and configuration of each service referenced in the architecture were evaluated against security controls to ensure a secure design.

Security and Managed Virtual Network

Security is a key tenet of Azure Spring Cloud, and you can secure Spring Boot applications by deploying them to Azure Spring Cloud in managed virtual networks (VNets). With VNets, you can secure the perimeters around your Spring Boot applications and other dependencies by:

- Isolating Azure Spring Cloud from the Internet and placing your applications and Azure Spring Cloud in your private networks.

- Selectively exposing Spring Boot applications as Internet-facing applications.

- Enabling applications to interact with on-premises systems such as databases, messaging systems, and directories.

- Controlling inbound and outbound network communications for Azure Spring Cloud.

- Composing with Azure network resources such as Application Gateway, Azure Firewall, Azure Front Door, and ExpressRoute, and popular network products such as Palo Alto Firewall, F5 BIG-IP, Cloudflare, and Infoblox.

Reliable deployment patterns

When you deploy a collection of Azure resources, including Azure Spring Cloud, in your private network and interconnect these resources with on-premises systems, you may face questions such as:

- How do you manage costs to maximize the value delivered?

- How do you build operational processes to keep the system up and running in production?

- How do you account for performance efficiency where your system can adapt to changes in load?

- How can your system recover from failures and continue to function?

- How do you protect applications and data from threats and risks?

You could answer these questions through trial and error, but that takes time, and time spent getting it right is time not spent on your organizational objectives. A repeatable, tested deployment pattern can help you address these issues from the start.

The Azure Spring Cloud Reference Architecture addresses the following solution design components:

- Hub and spoke alignment. Aligns with the Azure landing zone, which enables application migrations and greenfield development at enterprise-scale in Azure. A landing zone is an environment for hosting your workloads, pre-provisioned through infrastructure as code.

- Well-Architected Framework. Incorporates the guiding pillars of the Azure Well-Architected Framework to improve the quality of a workload. The framework consists of five pillars of architecture excellence: Cost Optimization, Operational Excellence, Performance Efficiency, Reliability, and Security.

- Perimeter security. Secures the perimeter with full egress management and manages secrets and certificates using Azure Key Vault. Integrates with networking resources of your choice, including route tables with user-defined routes supplied by your IT team. It is also ready to interact with private links exposed by Azure resources or endpoints exposed by your on-premises systems.

- Authorized access to deployed environments. Secures and authorizes access to a deployed environment through a jump host with the necessary development tools, reached via Azure Bastion.

- Monitoring. Enables observability by wiring up Application Performance Monitoring (APM) and publishing logs and metrics for all resources through Azure Monitor, so you can aggregate logs and metrics in a tool of your choice, such as Azure Log Analytics, Elastic Stack, or Splunk.

- Smoke tests. Supplies scripts to deploy a line-of-business system and to smoke test the deployed environment.

Figure 1 – A well-architected hub-and-spoke design for applications selectively exposed as public applications.

Start here

The Azure Spring Cloud Reference Architecture provides a foundation for using Azure Spring Cloud, based on a typical enterprise hub-and-spoke design. In this design, Azure Spring Cloud is deployed in a single spoke that depends on shared services hosted in the hub.

For an implementation of this architecture, see the Azure Spring Cloud Reference Architecture repository on GitHub. Deployment options include ready-to-go Azure Resource Manager (ARM) templates, Terraform, and Azure CLI scripts. The artifacts in this repository provide groundwork that you can customize for your environment and your automated provisioning pipelines.

Meet the team

This Azure Spring Cloud Reference Architecture is created and maintained by Cloud Solution Architects, Java experts, and content authors at Microsoft, here in alphabetical order and row-wise from left to right:

- Armen Kaleshian – Cloud Solution Architect

- Arshad Azeem – Cloud Solution Architect

- Asir Selvasingh – Architect, Java on Azure

- Bowen Wan – Software Engineering Manager, Java on Azure

- Brendan Mitchell – Content Developer

- David Apolinar – Cloud Solution Architect

- Dylan Reed – Customer Engineer

- Karl Erickson – Content Developer

- Matt Felton – Cloud Solution Architect

- Ryan Hudson – Cloud Solution Architect

- Troy Ault – Cloud Solution Architect

Learn more about Azure Spring Cloud and start building today!

Azure Spring Cloud abstracts away the complexity of infrastructure management and Spring Cloud middleware management, so you can focus on building your business logic and let Azure take care of dynamic scaling, patches, security, compliance, and high availability. In a few steps, you can provision Azure Spring Cloud; create, deploy, and scale Spring Boot applications; and start monitoring them, all in minutes. We’ll continue to bring more developer-friendly and enterprise-ready features to Azure Spring Cloud.

We’d love to hear how you are building impactful solutions using Azure Spring Cloud. Get started with the Azure Spring Cloud Reference Architecture and these resources!

Resources

by Contributed | Mar 11, 2021 | Technology

This article is contributed. See the original author and article here.

The SQL Assessment API provides a mechanism to evaluate the configuration of your SQL Server against best practices. The API ships with a ruleset of best-practice rules suggested by the SQL Server team. This ruleset is enhanced with each new release, and the API is also designed to be highly customizable and extensible, so you can tune the default rules and create your own. The API can be used to assess SQL Server 2012 and later versions, as well as Azure SQL Managed Instance.

In this episode with Aaron Nelson, we’ll show you the basics of the SQL Assessment PowerShell commands and how you can run assessments for your Azure SQL Managed Instances as well as your on-premises SQL Server instances.

Watch on Data Exposed

Resources:
