by Contributed | Mar 16, 2021 | Technology

This article is contributed. See the original author and article here.

Now you can print Workbooks! The Azure portal supports printing workbooks and saving them as PDF files.

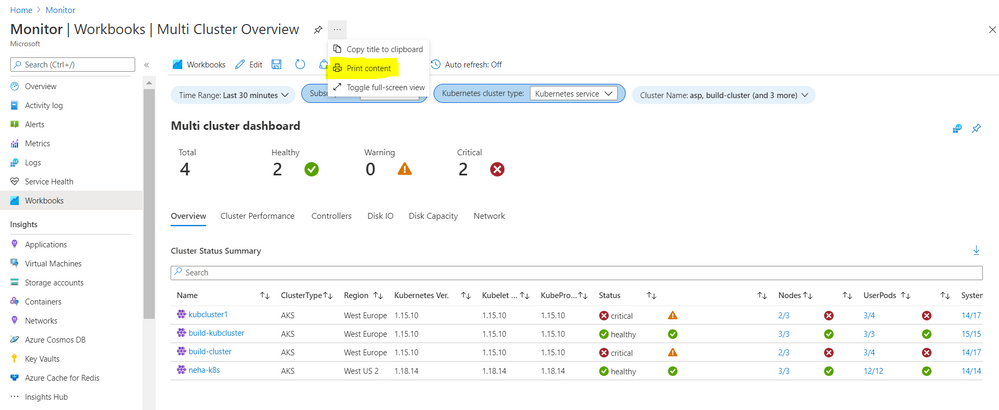

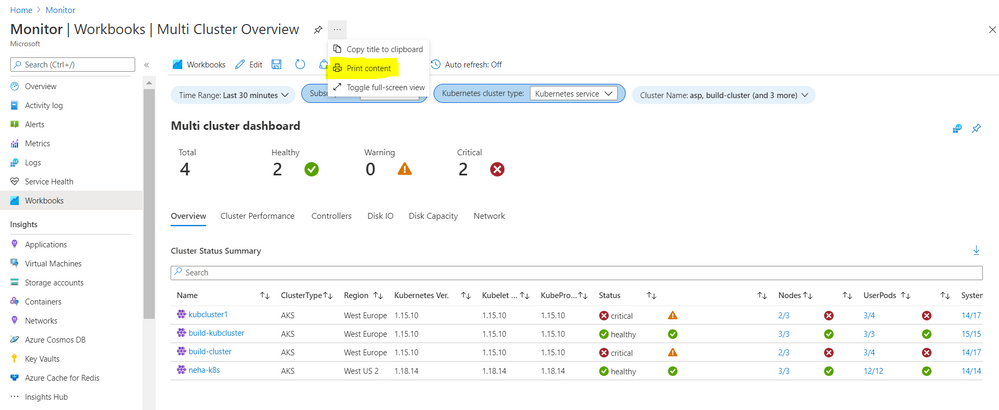

1. Print is available on the blade, under the ellipsis (…) actions menu next to the workbook name.

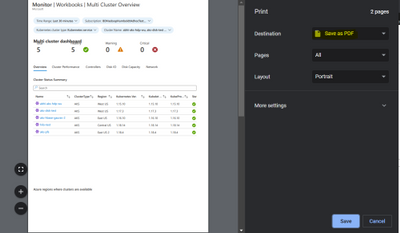

2. A print dialog opens, where you can set the destination to ‘Save as PDF’ and change the layout as needed for your workbook.

3. After you save, the workbook is available as a PDF file in the location you chose.

Happy printing!

by Contributed | Mar 16, 2021 | Technology

This month’s community call keeps to our schedule of meeting on the fourth Tuesday of every month and takes place on March 23rd! Join us at either 8:00 AM or 5:00 PM PT.

We will be covering topics around some of the top new features announced at Ignite, a demo of our champion management platform, as well as a preview of a storytelling toolkit for building good stories inside your groups.

If you aren’t a member of our champion community, sign up here to get the resource links that contain access to the call calendar, invites, and previous calls!

http://aka.ms/m365champions

We look forward to seeing you there!

/Josh

by Contributed | Mar 16, 2021 | Technology

Join us for this series of webinars to learn about all the announcements and updates from Ignite regarding Insider Risk Management & Communication Compliance, Information Governance & Records Management, and Compliance Manager. Though there weren’t any announcements made at Ignite relating to Advanced eDiscovery, we’ve scheduled a webinar to cover recent updates that you won’t want to miss!

Click the links below to download the calendar invitations!

US & EMEA Sessions

What’s New from Ignite regarding Insider Risk & Communication Compliance

March 24, 2021: 8:00 AM PDT, 11:00 AM EDT, 3:00 PM UK

Download invite: https://aka.ms/GetInvite-IRCCatIgnite-EMEA/NA

Join: https://aka.ms/Join-IRCCatIgnite-EMEA/NA

What’s New with Advanced eDiscovery Spring 2021

April 7, 2021: 8:00 AM PDT, 11:00 AM EDT, 4:00 PM UK

Download invite: https://aka.ms/GetInvite-eDiscoverySpring2021-EMEA/NA

Join: https://aka.ms/Join-eDiscoverySpring2021-EMEA/NA

What’s New from Ignite regarding Information Governance & Records Management

April 14, 2021: 8:00 AM PDT, 11:00 AM EDT, 4:00 PM UK

Download invite: https://aka.ms/GetInvite-MIGatIgnite-EMEA/NA

Join: https://aka.ms/Join-MIGatIgnite-EMEA/NA

What’s New from Ignite regarding Compliance Manager

April 20, 2021: 8:00 AM PDT, 11:00 AM EDT, 4:00 PM UK

Download invite: https://aka.ms/GetInvite-ComplianceMgratIgnite-EMEA/NA

Join: https://aka.ms/Join-ComplianceMgratIgnite-EMEA/NA

APAC Sessions

What’s New from Ignite regarding Insider Risk & Communication Compliance

March 24, 2021: 4:00 PM AEDT (Sydney), 10:30 AM IST (Delhi)

Download Invite: https://aka.ms/GetInvite-IRCCatIgnite-APAC

Join: https://aka.ms/Join-IRCCatIgnite-APAC

What’s New with Advanced eDiscovery Spring 2021

April 7, 2021: 4:00 PM AEST (Sydney), 11:30 AM IST (Delhi)

Download Invite: https://aka.ms/GetInvite-eDiscoverySpring2021-APAC

Join: https://aka.ms/Join-eDiscoverySpring2021-APAC

What’s New from Ignite regarding Information Governance & Records Management

April 14, 2021: 4:00 PM AEST (Sydney), 11:30 AM IST (Delhi)

Download Invite: https://aka.ms/GetInvite-MIGatIgnite-APAC

Join: https://aka.ms/Join-MIGatIgnite-APAC

What’s New from Ignite regarding Compliance Manager

April 20, 2021: 4:00 PM AEST (Sydney), 11:30 AM IST (Delhi)

Download Invite: https://aka.ms/GetInvite-ComplianceMgratIgnite-APAC

Join: https://aka.ms/Join-ComplianceMgratIgnite-APAC

![[Customer Story] Enabling an Event Driven Architecture with DevTest Labs](https://www.drware.com/wp-content/uploads/2021/03/large-1115)

by Contributed | Mar 16, 2021 | Technology

SharePoint Online (SPO) is Microsoft’s enterprise-class document management service for Microsoft 365. The SPO team at Microsoft has created a solution, built on Azure DevTest Labs (DTL), to make SPO testing environments readily available to SharePoint engineers, leveraging the strengths of DevTest Labs and Azure. This is the second in a series of blog entries enabling anyone to build, configure and run a similar system using Azure and DTL. For part 1, see How the SharePoint Online Team Leverages DevTest Labs.

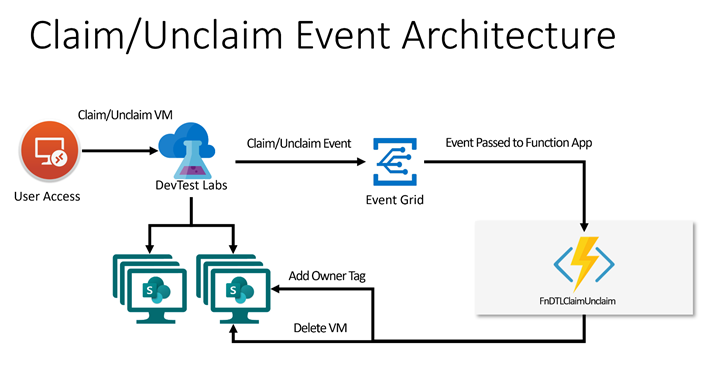

This blog post describes two use cases from the solution’s expected VM lifecycle and proposes a simple architecture to implement them: an Azure Function App hooked up to DevTest Lab events by way of Azure Event Grid. A brief walk-through of the implementation follows, intended to help other Azure customers build similar solutions. If you are building an event-driven DTL application, this article is for you: consider using Azure Functions and Event Grid as the SPO team did.

Scenario

As introduced in Part 1 of this blog series, environments in Azure DevTest Labs are a natural fit for SPO integration testing. SPO engineers can claim test environments that are miniaturized data centers, with all components shrunk down and interacting on a single Azure VM inside DTL. Pools of these VMs are maintained so that test environments are readily available to SPO engineers at any time. When finished, engineers unclaim their VMs.

There are two features that the SPO team has built on top of existing DevTest Labs Claim/Unclaim operations:

Feature 1 – Tag a Claimed VM

By default, DTL does not give lab admins an easy way to see who owns a claimed VM. A common way to do this is to add an “Owner” tag to the VM using Azure Tags. Tags are flexible and allow lab admins to organize Azure resources and query them easily, for example, to list the VMs assigned to a given user or set of users. In this solution, when a user claims a VM, an event is fired, and the handler attaches an “Owner” tag, with the associated user name as its value, to the VM. The tag shows up in the VM’s properties in the Azure Portal.

Feature 2 – Delete an Unclaimed VM

In SPO’s use case, VMs are not re-usable. Once a VM is claimed by a lab user, the state on the VM may change in such a way as to make the VM unsuitable for future users’ needs. For example, users can change the state of one of the SharePoint web applications, or introduce state into SharePoint by simply adding a document to a document library as part of a test. (For a better understanding of SharePoint’s document libraries, try: What is a document library?) The engineer may also update the code running on the VM, which can change behavior in a way undesirable for other engineers.

In general, once a VM is assigned to a lab user, its validity as a clean test environment can no longer be trusted. With this use case in mind, VMs in the SPO solution are single-use. Once unclaimed, they are deleted.

Note: Replenishment of the pool of available VMs is described in a future blog entry in this series, entitled “Maintaining a Pool of Claimable VMs”.

Both of these features can be added to DevTest Labs using Azure Event Grid, with an Azure Function listening and handling events. The solution is simple and elegant; please read on to see how this is configured.

Event Handling with Azure Functions

Azure Functions are a flexible, serverless computing platform in Azure. Functions can be configured to trigger when an Azure event fires, and DevTest Labs fires “Claim” and “Unclaim” events when VMs are claimed or unclaimed, respectively. When configured properly through Event Grid, events are dispatched to an Azure Function subscriber.

For simplicity and brevity, the solution described herein implements a single Azure function to handle both Claim and Unclaim events. The function is written in PowerShell and implements the tagging and delete business logic for these events. It is deployed in a Function App, triggered when events fire, in accordance with this table:

| DevTestLab Event | Event Name | Action |
| --- | --- | --- |
| Claim | microsoft.devtestlab/labs/virtualmachines/claim/action | Add an “Owner” tag to the VM |
| Unclaim | microsoft.devtestlab/labs/virtualmachines/unclaim/action | Delete the VM |

Note: You can find a complete list of DevTestLab events here: DevTest Lab Events. There are several useful ones that, when paired with the technique described in this post, can be used to solve other event-driven scenarios using the template given here.
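As a rough illustration of the event-to-action mapping above, the routing decision can be sketched as a small lookup in PowerShell. The two operation names come from the table; the action labels (`TagOwner`, `DeleteVm`, `Ignore`) and the function name `Get-DtlEventAction` are hypothetical names chosen for this sketch and are not part of the SPO solution.

```powershell
# Hypothetical lookup table: DevTest Labs operation name -> action label.
# The keys come from the table above; the labels are illustrative only.
$actionByOperation = @{
    'microsoft.devtestlab/labs/virtualmachines/claim/action'   = 'TagOwner'
    'microsoft.devtestlab/labs/virtualmachines/unclaim/action' = 'DeleteVm'
}

function Get-DtlEventAction {
    param([string]$OperationName)

    # Hashtable literals compare string keys case-insensitively,
    # so differently cased operation names still match.
    if ($OperationName -and $actionByOperation.ContainsKey($OperationName)) {
        return $actionByOperation[$OperationName]
    }

    # Any other DevTest Labs event is left alone in this sketch.
    return 'Ignore'
}
```

Keeping the mapping in one table would make it straightforward to handle further DevTest Labs events later by adding entries rather than more conditionals.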

Solution Architecture

This solution builds on the ideas presented in the article Use Azure Functions to extend DevTest Labs. A key difference is how the events are triggered: In the article, the Functions are triggered via an HTTP call. In this example, Claim/Unclaim events are generated from the DevTest Lab and routed via Event Grid to the function app. We will also be building on the coding concepts in Azure Event Grid trigger for Azure Functions.

Walkthrough

The following solution assumes a pre-created Azure DevTest Lab, such as one created using the steps in Create a lab in Azure DevTest Labs. In this example, the Lab has been freshly deployed with name “PetesDTL” and resource group “PetesDTL_rg” with no VMs yet created.

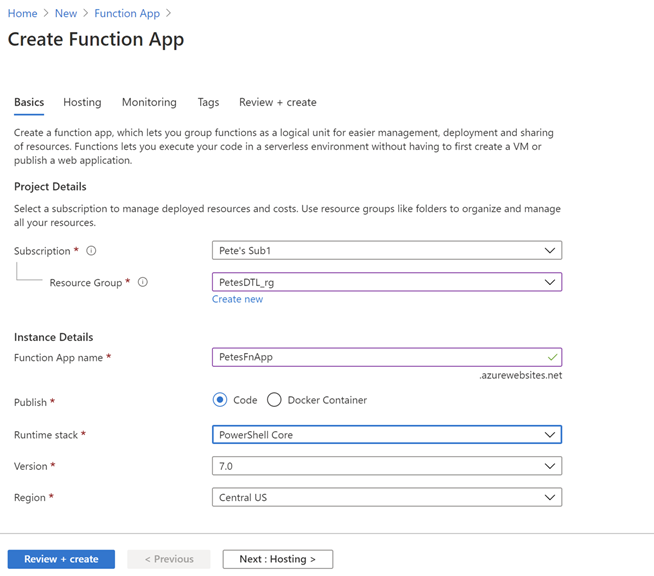

Step 1: Create a Function App

Use the “Create a Resource” UI to find “Function App” in your Azure subscription, and hit “Create”. Select the resource group for your DevTest Lab; this is optional, but it conveniently groups your app with the other resources for your Lab. In this example, the app is named “PetesFnApp” and lives in the “PetesDTL_rg” resource group, with PowerShell Core as the Runtime stack. PowerShell Core is a flexible, operating-system-independent control language that works well with Azure.

Leaving the majority of the settings with their defaults, hit “Review + create”, then “Create” to deploy the function app after the verification step.

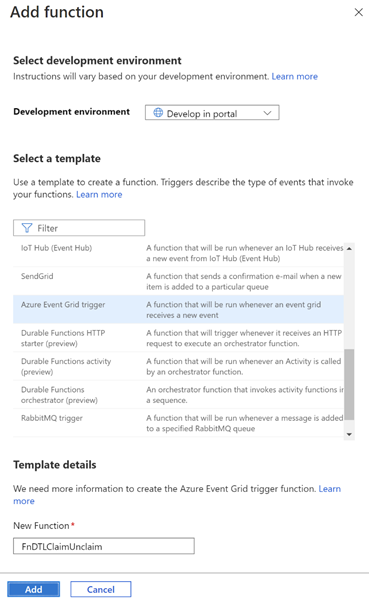

Step 2: Create the FnDTLClaimUnclaim Function

Navigate to the newly deployed Function App, hit “Functions” and then “+ Add” to add a new function to the App. Leave the “Development environment” set to “Develop in portal” and select “Azure Event Grid trigger” for the template. In this example, the new function is named “FnDTLClaimUnclaim”. The name indicates that this PowerShell Core function is dual-purpose and will handle both the “Claim” and “Unclaim” events from the DevTest Lab. Click “Add”.

Step 3: Add logic to the function

Navigate to the newly-created function, and hit “Code + Test”. There is some sample code in the edit window, which you can replace with the following code:

```powershell
param($eventGridEvent, $TriggerMetadata)

$operationName = $eventGridEvent.data["operationName"]

# Apply an Owner tag to a VM based on passed-in claims
function ApplyOwnerTag($vmId, $claims) {
    # Get the VM
    $dtlVm = Get-AzResource -ResourceId $vmId -ErrorAction SilentlyContinue

    # Fetch user.
    # Prefer user specified in claims, then owner, then createdBy
    $claimPropName = $claims.keys | Where-Object {$_ -like "*/identity/claims/name"}
    if ($claimPropName) {
        $user = $claims[$claimPropName]
    }
    if (-not $user) {
        $user = $dtlVm.Properties.ownerUserPrincipalName
    }
    if (-not $user) {
        $user = $dtlVm.Properties.createdByUser
    }

    $tags = $dtlvm.Tags
    if ($tags) {
        if (-not $tags.keys.Contains("Owner")) {
            $tags.Add("Owner", $user)
        }
    }
    else {
        $tags = @{ Owner=$user }
    }

    # Save the VM's tags
    Set-AzResource -ResourceId $vmId -Tag $tags -Force | Out-Null
}

# Event     Action
# ------------------------------------------
# Claim     Add "owner" tag to the VM
# Unclaim   Delete the VM
$claimAction = ($operationName -eq "microsoft.devtestlab/labs/virtualmachines/claim/action")
$unclaimAction = ($operationName -eq "microsoft.devtestlab/labs/virtualmachines/unclaim/action")

if ($claimAction -or $unclaimAction) {
    $vmId = $eventGridEvent.subject
    Connect-AzAccount -Identity
    $dtlVm = Get-AzResource -ResourceId $vmId -ErrorAction SilentlyContinue

    if ($claimAction) {
        if (-not $dtlVm.Properties.allowClaim) {
            ApplyOwnerTag $vmId $eventGridEvent.data.claims
        }
    }
    else {
        if ($dtlVm.Properties.allowClaim) {
            Remove-AzResource -ResourceId $vmId -Force -ErrorAction SilentlyContinue
        }
    }
}
```

Click “Save” to save the function.

The code snippet contains an ApplyOwnerTag function and a main body. The ApplyOwnerTag function gets the user from a variety of sources, preferring first the identity from the passed-in claims, then looking at the owner or created-by user from the DevTestLabs VM. Once it has a valid user name, it adds the “Owner” tag to the VM.

The main body of the Azure Function first determines which event is being handled. On a Claim event, the ApplyOwnerTag function is called to add the “Owner” tag to the given VM. On Unclaim, the VM is removed. This logic implements the behavior desired for the SharePoint Online application for these two events.
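The owner lookup order described above (claims first, then owner, then created-by) can be isolated into a small helper that is easy to try out without an Azure connection. This is a minimal sketch, not the SPO team's code: the function name `Resolve-VmOwner` and its parameters are hypothetical, and it assumes the claims arrive as a hashtable, as the `.keys` access in the function above suggests.

```powershell
function Resolve-VmOwner {
    param(
        [hashtable]$Claims,      # claims from the event payload (may be $null)
        [string]$OwnerUpn,       # e.g. $dtlVm.Properties.ownerUserPrincipalName
        [string]$CreatedByUser   # e.g. $dtlVm.Properties.createdByUser
    )

    $user = $null

    # 1. Prefer the name claim, if the payload carries one.
    if ($Claims) {
        $claimKey = $Claims.Keys |
            Where-Object { $_ -like '*/identity/claims/name' } |
            Select-Object -First 1
        if ($claimKey) { $user = $Claims[$claimKey] }
    }

    # 2. Fall back to the VM owner, then 3. to the user who created the VM.
    if (-not $user) { $user = $OwnerUpn }
    if (-not $user) { $user = $CreatedByUser }

    return $user
}
```

Separating the precedence logic from the `Get-AzResource` and `Set-AzResource` calls keeps the part most likely to change (where the user name comes from) testable on its own.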

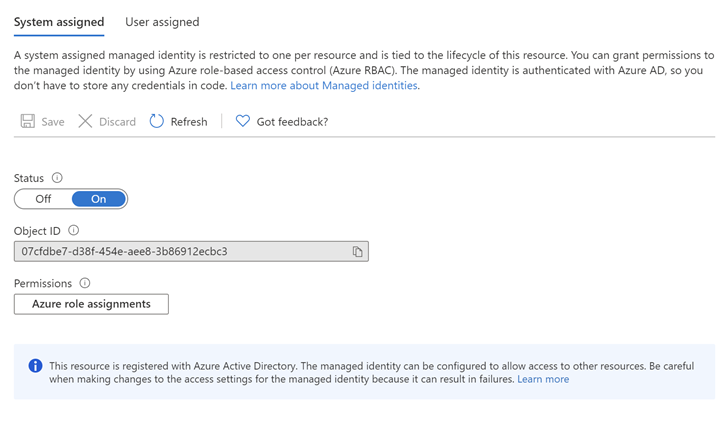

Step 4: Assign an Identity and Role for the Azure Function

The Azure Function calls Connect-AzAccount with the -Identity switch, which requires the Function App to have a managed identity. (User-assigned identities can also be configured; for simplicity, this example uses a System-assigned identity.) Further, the identity in question needs access to resources in the subscription in order to add tags and to delete VMs. To configure this, click on the Function App, then Identity. Change the Status to “On”.

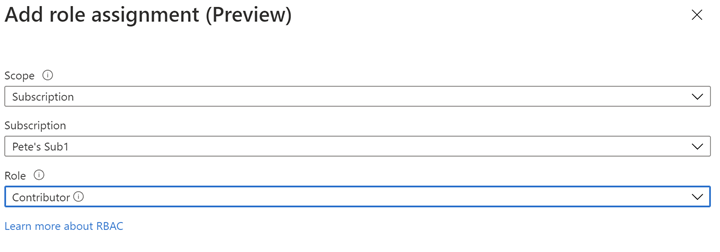

Next, click on “Azure role assignments” to configure role-based access control for this Function App. In this example, the Contributor role is assigned for the entire Azure subscription. While it’s possible to grant the identity more fine-grained access, this example uses the broader scope to keep things simple.
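For readers who prefer a narrower scope, the following sketch assigns Contributor on the lab's resource group only, using the Az PowerShell module. It is an assumption of this sketch, not something verified in the walkthrough, that resource-group scope is sufficient for the tagging and delete operations; the resource names are the ones used in this example.

```powershell
# Sketch only: requires the Az.Websites and Az.Resources modules and a signed-in session.
$resourceGroup = 'PetesDTL_rg'   # resource group used in this walkthrough
$functionApp   = 'PetesFnApp'    # Function App created in Step 1

# A Function App is a Microsoft.Web/sites resource, so Get-AzWebApp can read its identity.
$principalId = (Get-AzWebApp -ResourceGroupName $resourceGroup -Name $functionApp).Identity.PrincipalId

# Assign Contributor on the lab's resource group instead of the whole subscription.
# Assumption: this scope covers the DevTest Labs VM resources the function touches.
$scope = (Get-AzResourceGroup -Name $resourceGroup).ResourceId
New-AzRoleAssignment -ObjectId $principalId -RoleDefinitionName 'Contributor' -Scope $scope
```

The rest of this walkthrough continues with the subscription-wide assignment made in the portal.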

The resultant role assignment should look like this:

Step 5: Create an Event Subscription for the Function App

At this point we have logic ready to handle the DevTestLabs events, but the Function App is not configured to subscribe to those events. Azure Event Grid is infrastructure for mapping Azure events to logic and can be used to create rich applications. To get deeper into Azure Event Grid, please check out Azure Event Grid Overview.

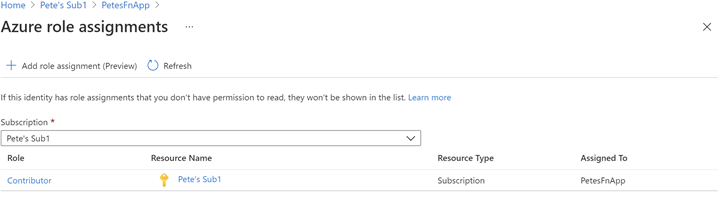

Navigate to the subscription, then click Events. Under “Get Started”, click on “Azure Function”:

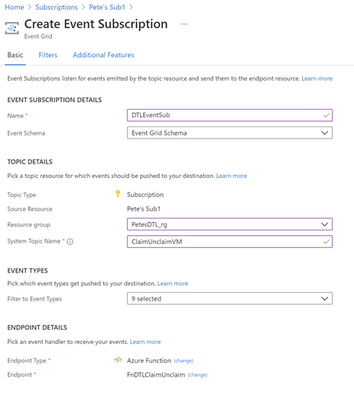

Enter “DTLEventSub” as the name. Use “ClaimUnclaimVM” for the System Topic Name. For Endpoint Type select “Azure Function” with Endpoint “FnDTLClaimUnclaim”.

Hit Create. This will create the ClaimUnclaimVM Topic and the Event Subscription.

Step 6: Test the Claim functionality

Navigate to the DevTest Lab in your subscription, “PetesDTL” in this example, and click “+ Add” to create a new VM. The type of VM you choose is not relevant for this example – you can select the operating system and resources that are appropriate for your application. In this example the VM is named “petesvm001”.

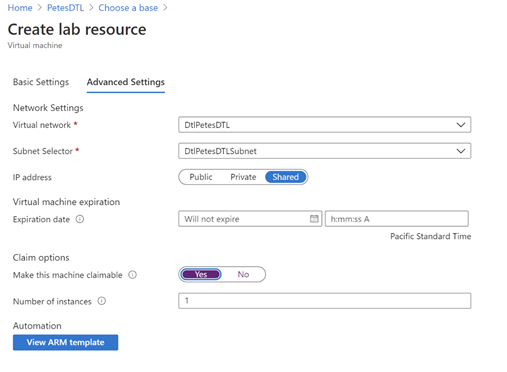

In “Advanced Settings”, select “Yes” for “Make this machine claimable” under “Claim options”, then in “Basic Settings” click “Create”. The VM needs to be claimable because we will claim it through the UI to trigger the tagging behavior. Later, when we unclaim the VM, we expect to see it deleted.

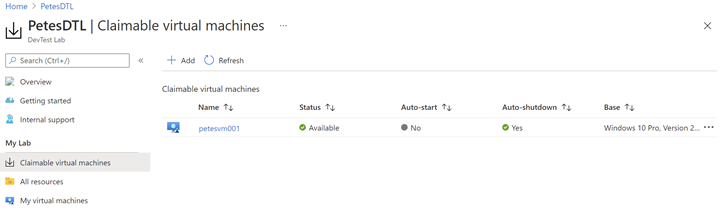

After the VM is created (this may take several minutes, perhaps tens of minutes, to complete) it should show in the “Claimable virtual machines” section of the DevTest Labs UI:

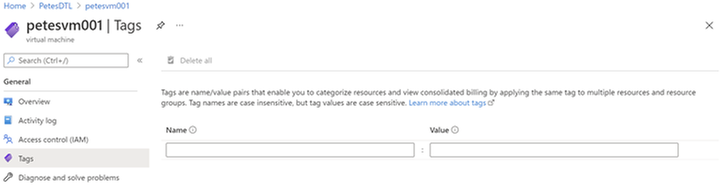

Note that this VM does not have an “Owner” tag yet, since it has not yet been claimed:

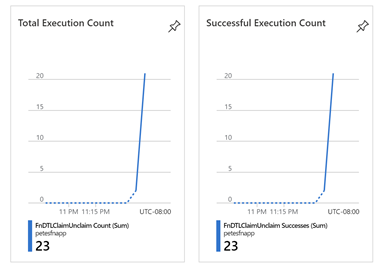

Now, click the ellipsis (…) on the VM in the “Claimable virtual machines” list, and select “Claim”. It can take several minutes, sometimes tens of minutes, before the VM is successfully claimed and the Azure event has made its way through the Event Grid infrastructure and called our Azure Function. You can monitor when your Function has been called by selecting the Function in the Function App UI and checking the Total and Successful Execution Count in the Overview section:

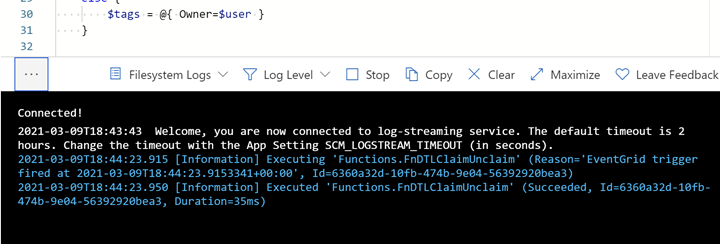

You can also see what the Azure Function logs in near real-time by using the “Code + Test” UI and opening the Logs popup at the bottom:

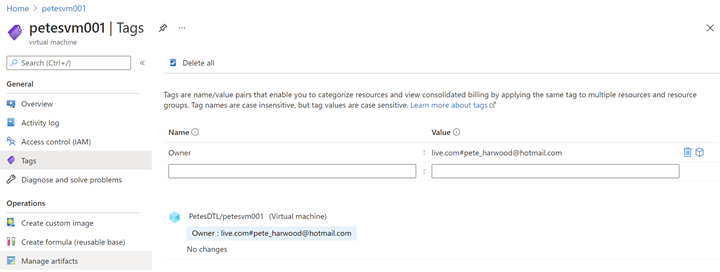

After several minutes, the Function is called, and the ApplyOwnerTag PowerShell function adds the current user’s name in the Owner tag, as expected:

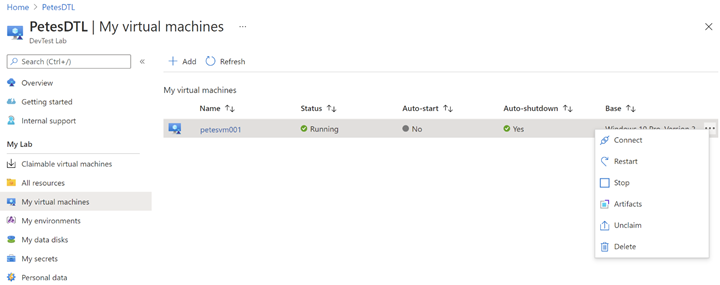

Now navigate to “My virtual machines” in the DevTest Labs UI, click the ellipsis (…) and select “Unclaim”:

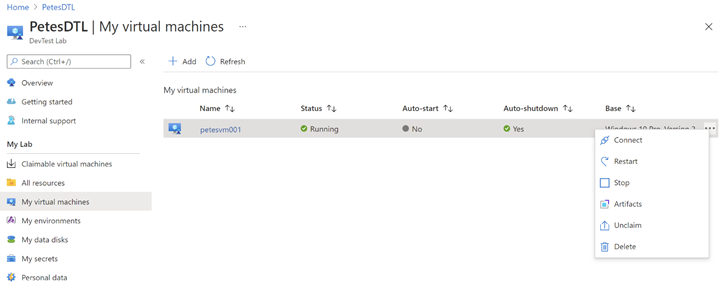

Once again, it will take several minutes for the event to make its way through Azure to call FnDTLClaimUnclaim, but when it does the Remove-AzResource call will ensure the VM gets deleted:

This completes the full Claim/Unclaim cycle that a SharePoint Online user would experience and demonstrates the two features built on top of DevTest Labs.

Some Notes on Performance

The Azure Function App used in this example uses the PowerShell Core runtime. PowerShell was chosen for its strength as a simple control language for Azure, and its amenability to concise code samples. However, there is overhead to the boot time and resource consumption for PowerShell-based Function Apps over, say, C#-based Apps that should not be overlooked for performance-sensitive applications.

Eventing in Azure has its own set of performance characteristics, and with these samples you will notice delays of minutes, and sometimes up to tens of minutes, for events to fire and be handled by the Function App. This can be improved somewhat by upgrading from a Consumption plan to a Dedicated App Service plan, but bear in mind that there is always some inherent and unavoidable latency due to Azure’s eventing model, and this should be accounted for in the design of the Azure application.

What’s Next?

The next blog post will describe how the SharePoint team built an Azure VPN that complements the DevTest Lab, securing the connection between lab users and the VMs they connect to. If you are interested in securing your environment, this is not one you will want to miss!

If you run into any problems with the content, or have any questions, please feel free to drop a comment below this post and we will respond. Your feedback is always welcome.

– Pete Harwood, Principal Engineering Manager, OneDrive and SharePoint Engineering Fundamentals at Microsoft

by Contributed | Mar 16, 2021 | Technology

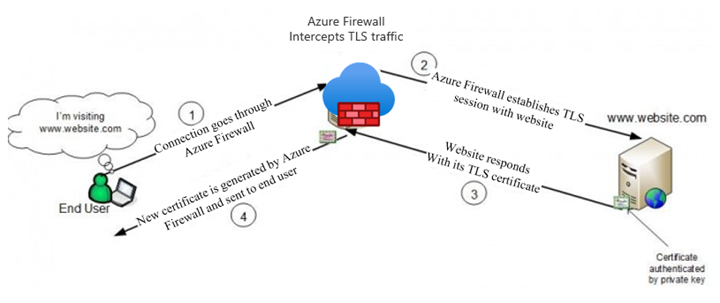

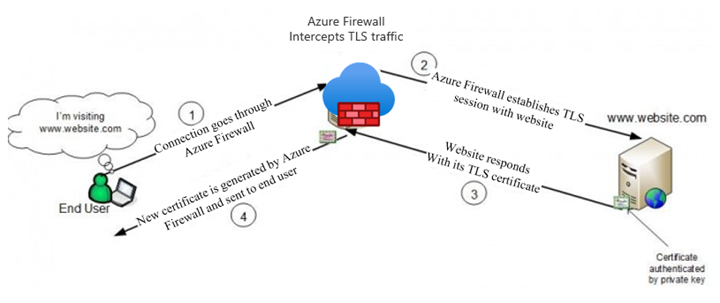

Azure Firewall Premium, which entered Public Preview on February 16th, introduces some important new security features, including IDPS, TLS termination, and more powerful application rules that now handle full URLs and categories. This blog will focus on TLS termination, and more specifically how to deal with the complexities of certificate management.

There is an overview of the TLS certificates used by clients, websites, and Azure Firewall in a typical web request that is subject to TLS termination in our documentation (diagram below). In summary, a Subordinate (Intermediate) CA certificate needs to be imported to a Key Vault for Azure Firewall to use. To ensure a seamless experience for clients, they all must trust the certificate issued by Azure Firewall.

The rough steps for enabling TLS Inspection are:

1. Issue and export a subordinate, or intermediate, CA certificate along with its private key.

2. Save the certificate and key in a Key Vault.

3. Create a Managed Identity for Firewall to use and allow it to access the Key Vault.

4. Configure your Firewall Policy for TLS Inspection.

5. Ensure that clients trust the certificate that will be presented by Azure Firewall.

The rest of the blog will walk through the different ways to accomplish steps 1 and 5.

General Certificate Requirements

From our docs, the certificate issued must conform to the following:

- It must be a single certificate, and shouldn’t include the entire chain of certificates.

- It must be valid for one year forward.

- It must use an RSA private key with a minimum size of 4096 bits.

- It must have the KeyUsage extension marked as Critical with the KeyCertSign flag (RFC 5280; 4.2.1.3 Key Usage).

- It must have the BasicConstraints extension marked as Critical (RFC 5280; 4.2.1.9 Basic Constraints).

- The CA flag must be set to TRUE.

- The Path Length must be greater than or equal to one.
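Before uploading a certificate to Key Vault, it can help to check it against the list above. The following is a minimal sketch using .NET certificate classes from PowerShell; the function name `Test-FirewallCaCertificate` is hypothetical, and the function is not an official validation tool. It does not check the validity period or the single-certificate requirement, and it treats a missing path length constraint as acceptable, which is an assumption.

```powershell
function Test-FirewallCaCertificate {
    param(
        [Parameter(Mandatory)]
        [System.Security.Cryptography.X509Certificates.X509Certificate2]$Certificate
    )
    $problems = @()

    # RSA key of at least 4096 bits.
    $rsa = [System.Security.Cryptography.X509Certificates.RSACertificateExtensions]::GetRSAPublicKey($Certificate)
    if (-not $rsa -or $rsa.KeySize -lt 4096) {
        $problems += 'RSA key is missing or smaller than 4096 bits'
    }

    # KeyUsage extension: critical, with the KeyCertSign flag.
    $ku = $Certificate.Extensions |
        Where-Object { $_ -is [System.Security.Cryptography.X509Certificates.X509KeyUsageExtension] }
    $certSign = [System.Security.Cryptography.X509Certificates.X509KeyUsageFlags]::KeyCertSign
    if (-not $ku -or -not $ku.Critical -or -not $ku.KeyUsages.HasFlag($certSign)) {
        $problems += 'KeyUsage must be critical and include KeyCertSign'
    }

    # BasicConstraints extension: critical, CA = TRUE, path length >= 1.
    $bc = $Certificate.Extensions |
        Where-Object { $_ -is [System.Security.Cryptography.X509Certificates.X509BasicConstraintsExtension] }
    if (-not $bc -or -not $bc.Critical -or -not $bc.CertificateAuthority) {
        $problems += 'BasicConstraints must be critical with the CA flag set'
    }
    elseif ($bc.HasPathLengthConstraint -and $bc.PathLengthConstraint -lt 1) {
        # Assumption: an absent constraint (unlimited path length) is accepted here.
        $problems += 'Path length must be greater than or equal to one'
    }

    return $problems
}
```

A call such as `@(Test-FirewallCaCertificate -Certificate $cert).Count -eq 0` indicates that none of the checked requirements failed; any returned strings describe what to fix.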

These requirements can be fulfilled by either generating self-signed certificates on any server, or by using an existing Certificate Authority, possibly as part of a Public Key Infrastructure (PKI). Public Certificate Authorities will not issue a certificate of this type because it will be used to issue other certificates on behalf of the root or issuing CA. Since most public CAs are trusted by default on client operating systems, allowing others to issue certificates on their behalf would be a major security risk.

Self-Signed Certificates

The quickest and easiest method of generating a certificate for use on Azure Firewall is to generate root and subordinate CA certs on any Windows, Linux, or macOS machine using OpenSSL. This is the recommended method to use for testing environments, due to its simplicity.

There are scripts in our documentation that make this process very easy. If you are using these certificates in a production environment, be sure to secure the root CA certificate by storing it in a Key Vault.

Establishing Trust

If the certificate used on Azure Firewall is not trusted by the client making a web request, they will be met with an error, which would disrupt normal operations. The best way to establish trust is to add the Root CA that issued the Firewall certificate as a Trusted Root CA on every client device that will be sending traffic through the Firewall. You will need an exported .cer file from your Root CA.

Using Ubuntu as the example for Linux, this can be done using update-ca-certificates.

On Windows, you can use the UI or import the certificate using PowerShell.

This process can be scripted and run remotely if the environment allows it.
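As a sketch of the Windows PowerShell route, the `Import-Certificate` cmdlet from the built-in PKI module can add an exported root CA file to the machine's Trusted Root store. The wrapper name `Add-TrustedRootCa` and the example path are hypothetical; the command needs to run from an elevated session on each client.

```powershell
function Add-TrustedRootCa {
    param(
        [Parameter(Mandatory)]
        [string]$CerPath   # path to the exported root CA .cer file
    )

    # Fail early with a clear message if the file is missing.
    if (-not (Test-Path -LiteralPath $CerPath -PathType Leaf)) {
        throw "Certificate file not found: $CerPath"
    }

    # Import-Certificate ships with the Windows PKI module.
    # Cert:\LocalMachine\Root is the machine-wide Trusted Root Certification Authorities store.
    Import-Certificate -FilePath $CerPath -CertStoreLocation 'Cert:\LocalMachine\Root'
}

# Example call (hypothetical path):
# Add-TrustedRootCa -CerPath 'C:\certs\rootCA.cer'
```

Wrapping the import in a function makes it easier to run remotely against many clients where the environment allows it.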

PKI

A Public Key Infrastructure can be used by organizations to manage trust within an enterprise. There are several advantages to using this approach rather than self-signed certificates, including:

- CA infrastructure may already be in place in some environments, especially hybrid ones.

- Enterprise Root CA is automatically trusted by all domain-joined Windows computers. No extra steps are needed to establish trust.

- Certificate rotation and revocation can be done centrally via Group Policy, so changes are more easily managed.

Using PKI, you will not have to import your certificate on your Windows clients, since they will all automatically trust your Enterprise Root CA. The full process of generating, exporting, and configuring Azure Firewall to use a PKI certificate is documented in a new article here.

Intune

Intune does not generate certificates, but it can be a great tool to manage them on clients. If your Azure VMs are managed by Intune, you can use certificate profiles to add your chosen CA as trusted.

Custom Images

If your environment is not connected to or managed by Active Directory, Intune, MEM, or any other client management tool, you still have an option to deploy certificates at scale. Using custom images, you can install the trusted Root CA certificate, capture an image, and use that image to deploy or re-deploy your VM instances.

This process works best in environments where servers are treated as “cattle” rather than “pets,” meaning that they are spun up and down often and automatically configured, rather than manually configured and maintained for long periods of time.

Summary

This has been an overview of some different methods available to create certificates for use on Azure Firewall Premium and establish trust for those certificates on your clients. This is certainly not an exhaustive list of the options out there, so we would like to hear more from you. Please leave a comment telling us what methods you are currently using or would like to use. We will use your feedback to create more documentation and other instructional content.

by Contributed | Mar 16, 2021 | Technology

(part 3 of my series of articles on security principles in Microsoft SQL Servers & Databases)

Security concept: Delegation of Authority

To be precise from the beginning: Delegation is a process or concept rather than a principle. But it is a particularly useful practice to keep in mind when designing any security concept and it is strongly connected to the discussed security principles like the Principle of Least Privilege and Separation of Duties as you will find out.

So, what is it about? Delegation (of authority) is the process to pass on certain permissions to other users, often temporarily, without raising their overall privileges to the same level as the delegating account.

There are two slightly different approaches. Delegation can be done either:

1) at the Identity level: allowing identity A to be used by identity B. Here B assumes A’s identity and powers/permissions. Examples in operating systems are the “runas” command in Windows and the “sudo” command in Unix/Linux.

Or

2) at the Authorization level: essentially by passing on a specified set of permissions via a form of Role Based Access Control (RBAC*) to another identity.

When you look at two-tier application architectures and their authentication flows, you will probably notice that delegation is a fairly common pattern.

OAuth and on-behalf-of token for authentication

For example, OAuth is an open standard for access delegation which is also utilized in Azure Active Directory (AAD). Using the OAuth 2.0 On-Behalf-Of flow (OBO) applications can invoke a service API, which in turn needs to call another service API – like SQL Server.

Instead of using the invoking application’s identity, the delegated user’s identity (and permissions) is propagated through the request chain. To do this, the second-tier application authenticates to the resource, for example a SQL database, with its own token: a so-called “on-behalf-of” token that originated from the first-tier application.

This is an example of delegation at identity level.

Delegation of Authority in the SQL realm

In SQL Server there are multiple options available to implement Delegation.

Azure AD User’s creation

One scenario where delegation is being used under the covers is the following:

Assume an AAD principal, such as the AAD admin account for Azure SQL, wants to create an AAD user in the database (statement: CREATE USER <AADPrincipalName> FROM EXTERNAL PROVIDER).

In this case, the managed service identity (MSI) which is assigned to Azure SQL server is required. Using this MSI, Azure SQL server sends the information about the AAD user which the AAD admin account wants to create as a user inside SQL to AAD graph (in future: MS graph) for verification. Therefore, the MSI (and not the AAD admin account) requires the proper permission in Azure AD (such as the “Directory Readers” role).

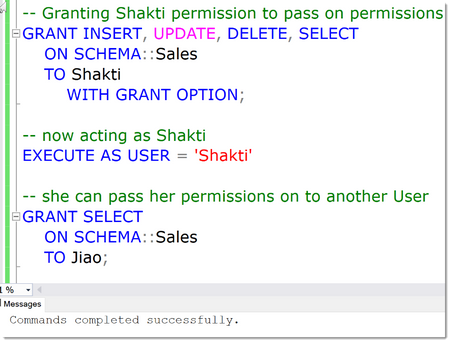

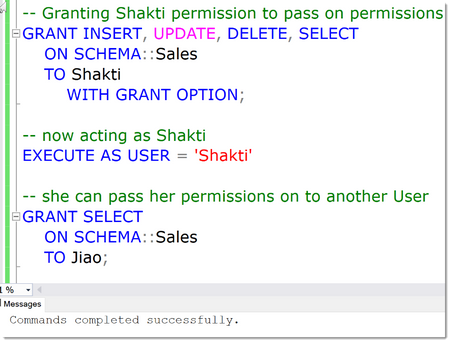

GRANT WITH GRANT OPTION

This one may be a less widely known possibility in SQL: it is possible to Grant a permission to Users and to allow them to pass on this permission. This is what the “WITH GRANT OPTION” of the GRANT statement is for.

In the example below, a user, Shakti, has been granted the privileges INSERT, UPDATE, DELETE and SELECT on a schema named “Sales”. On top of that, by using the WITH GRANT OPTION, she is allowed to pass on those permissions.

This is demoed by impersonating her account using the EXECUTE AS clause (btw: this is also an example of using delegation at the identity level) and now “being” Shakti we can grant permissions to anyone else, Jiao in this case.
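The scenario just described can be written out roughly as follows. The T-SQL is held in a PowerShell here-string so it can be passed to a tool such as `Invoke-Sqlcmd`; it assumes that the database users Shakti and Jiao and the schema Sales already exist, and the variable name `$grantDemoSql` is hypothetical. Treat it as a sketch rather than the original demo script.

```powershell
# T-SQL sketch held in a here-string; user and schema names follow the text above.
$grantDemoSql = @'
-- Shakti may use these permissions on the Sales schema and pass them on
GRANT SELECT, INSERT, UPDATE, DELETE ON SCHEMA::Sales TO Shakti WITH GRANT OPTION;

-- Impersonate Shakti (delegation at the identity level) ...
EXECUTE AS USER = 'Shakti';

-- ... and, as Shakti, grant one of those permissions to Jiao
GRANT SELECT ON SCHEMA::Sales TO Jiao;

-- Return to the original security context
REVERT;
'@

# Hypothetical invocation; needs the SqlServer module plus real server and database names:
# Invoke-Sqlcmd -ServerInstance '<server>' -Database '<database>' -Query $grantDemoSql
```

Without the WITH GRANT OPTION clause on the first statement, the GRANT issued while impersonating Shakti would be expected to fail, because she could use the permissions but not pass them on.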

EXECUTE AS and privilege bracketing for temporarily delegating permissions

A powerful and commonly used technique is the possibility to run stored procedures under a separate user account for just the task at hand, no matter who the original caller is, and by that means use the privileges of the impersonated account for the runtime of the stored procedure.

This is typically used to delegate tasks that otherwise require high privileges in SQL Server.

Strictly speaking, the delegation happens when access to the stored procedure is granted. The use of a stored procedure is technically a separate concept, referred to as “privilege bracketing” which is somewhat in the same space as just-in-time privileges (JIT). JIT is yet another technique which allows the use of certain elevated permissions for a certain period of time (aka “time bound”) only.

Privileged Identity Management in Azure offers these capabilities: What is Privileged Identity Management? – Azure AD | Microsoft Docs

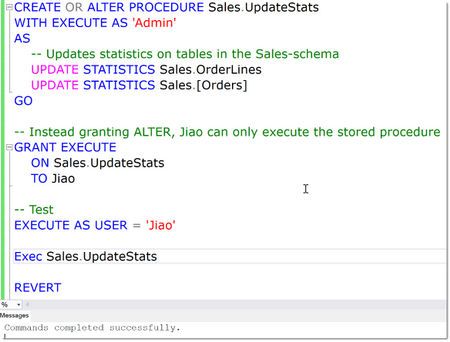

See the below example: ALTER is the minimal (“least”) permission necessary to Update Statistics on tables. But instead of granting ALTER on each table or the whole database, Jiao will only get permission to run this stored procedure which in turn runs under elevated permissions that are assumed for the runtime of the procedure only.
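A minimal sketch of this pattern is shown here, again as T-SQL in a PowerShell here-string. The procedure name `dbo.usp_UpdateSalesStatistics` and the table `Sales.Orders` are hypothetical, and the sketch assumes the procedure's owner holds ALTER on that table and that a database user Jiao exists.

```powershell
# T-SQL sketch; object names are illustrative and not taken from the original demo.
$executeAsDemoSql = @'
-- The procedure runs as its owner for the duration of the call only
CREATE PROCEDURE dbo.usp_UpdateSalesStatistics
WITH EXECUTE AS OWNER
AS
BEGIN
    UPDATE STATISTICS Sales.Orders;
END;
GO

-- Jiao receives EXECUTE on the procedure, not ALTER on the table
GRANT EXECUTE ON OBJECT::dbo.usp_UpdateSalesStatistics TO Jiao;
'@

# Hypothetical invocation; needs the SqlServer module plus real server and database names:
# Invoke-Sqlcmd -ServerInstance '<server>' -Database '<database>' -Query $executeAsDemoSql
```

Jiao can then run `EXEC dbo.usp_UpdateSalesStatistics;` while a direct `UPDATE STATISTICS Sales.Orders;` from her own session would still be denied.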

Note

If you are auditing users’ activities (which in general you should, but that is a topic for another article), be aware that impersonation means the “current user” is not the actual user who is acting, so looking only at server or database principal IDs does not give the right picture. Luckily, SQL Auditing by design always captures the session_server_principal_name. This contains the name of the principal who originally connected to the instance of SQL Server, no matter how many levels of impersonation are involved. This is the same as using the SQL function ORIGINAL_LOGIN(), which you should use when implementing custom logging solutions.

Signing Modules for temporarily delegating permissions

There is an alternative to EXECUTE AS when using modules for delegation: signing the module. In SQL Server, modules (such as stored procedures, functions and triggers) can be signed with an asymmetric key or a certificate (technically just another form of asymmetric key).

The trick is that this key or certificate can be mapped to a database user. And when the module is executed, and the signature has been verified, the module will inherit the permissions of the mapped user. And here is the difference to the EXECUTE AS-clause: the permissions of the certificate-mapped user will be added to the permissions of the original caller, not replace them. This is because the execution context actually does not change. Which leads to the second big difference: All the built-in functions that return login and user names return the name of the caller, not the certificate user name.

In the resources you will find a couple of links with various examples.

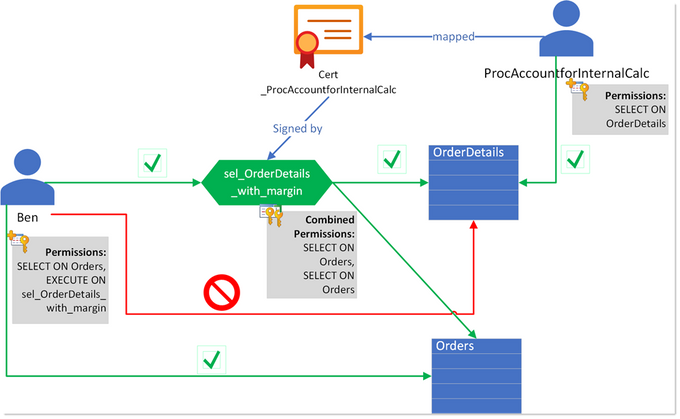

Here is a diagram of how this can be used in a simple example:

In this example, Ben alone only has SELECT on the table Orders, but does not have access to the OrderDetails table. Instead, the intention is that he can only access this table by using the specifically prepared stored procedure sel_OrderDetails_with_margin.

Only at runtime of this procedure are the permissions of the original caller, Ben, extended with the permissions of another user, ProcAccountforInternalCalc, which has been created specifically for this use case: to grant the SELECT permission on the table OrderDetails only when the stored procedure sel_OrderDetails_with_margin is being used. For that, this user is mapped to a certificate, Cert_ProcAccountforInternalCalc, which has been used to sign the stored procedure.

Now, anyone who has permission to execute this procedure, will inherit the additional permissions of ProcAccountforInternalCalc and can then see the data from the table – using the business logic from the procedure only.
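The difference between adding and replacing permissions can be sketched as simple set arithmetic. The Python below is a toy model under stated assumptions, not how SQL Server evaluates permissions (it ignores DENY, ownership chaining, and server-level permissions). The permission table is illustrative, and Ben's EXECUTE permission on the procedure is an assumption implied by the example.

```python
# Permissions are modelled as (permission, object) pairs; the data is illustrative.
PERMISSIONS = {
    "Ben": {
        ("SELECT", "Orders"),
        ("EXECUTE", "sel_OrderDetails_with_margin"),  # assumed, so Ben can call the procedure
    },
    "ProcAccountforInternalCalc": {("SELECT", "OrderDetails")},
}


def effective_with_signature(caller, cert_user):
    """Signed module: the certificate user's permissions are ADDED to the caller's."""
    return PERMISSIONS[caller] | PERMISSIONS[cert_user]


def effective_with_execute_as(impersonated):
    """EXECUTE AS: the impersonated user's permissions REPLACE the caller's."""
    return set(PERMISSIONS[impersonated])


signed = effective_with_signature("Ben", "ProcAccountforInternalCalc")
impersonated = effective_with_execute_as("ProcAccountforInternalCalc")

print("signed module:", sorted(signed))
print("EXECUTE AS:   ", sorted(impersonated))
```

Inside the signed procedure Ben keeps SELECT on Orders and gains SELECT on OrderDetails; under EXECUTE AS only the permissions of the impersonated user would apply.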

Note

Module signing should only ever be used to GRANT permissions, and not be used as a mechanism to enforce DENY, let alone REVOKE permissions.

Most SQL Server environments I have seen already use these concepts in one way or another. Delegation is a very powerful and useful technique that can also help ensure that the Principle of Least Privilege is adhered to and help implement Separation of Duties, which is why I felt it deserves a place in this article series.

Happy delegating

Andreas

Thank you to my Reviewer:

Mirek Sztajno, Senior Program Manager in SQL Security and expert in Authentication

Resources

by Contributed | Mar 16, 2021 | Technology

This article is contributed. See the original author and article here.

Welcome to the Bing Insiders Community. We’re excited to read about your experience with Bing! To make sure our space is a safe environment for all and that your comments don’t get lost, check out these guidelines before you post.

Community Guidelines

Be respectful to Microsoft employees, Microsoft Most Valuable Professionals (MVPs), moderators, and the other members of this community. Disrespectful behaviors include condescension, microaggressions, and disrespectful language (being rude, insulting, berating, or commenting upon anything personal).

Low quality or low effort posts, clickbait titles, and reposts are not allowed and will be removed. Topics with little discussion merit are considered low quality and may be removed. It is good etiquette to use the forum search feature for active discussions before posting a new topic.

Do not “bump” or double post in a topic unnecessarily or more than once in 48 hours. If you do this it will result in the bump post being deleted. Additionally, do not revive old threads. Instead of replying to an inactive conversation that was an issue in the past, create a new thread.

Post your comments, opinions, and ideas in a constructive and respectful way. Don’t be afraid to hop in and add to a conversation. Introduce yourself, meet people. If you think someone is wrong it may be because they are new; don’t jump on them, but try to be tactful and civil. Please do not try and provoke anyone into a fight. This includes following people to different sections of these forums and replying to them to fuel rivalry or continuing a discussion after it has gone downhill.

You should not post your or anyone else’s personal information on these forums including email or physical addresses, phone numbers, credit card numbers, or sensitive log-in information. Furthermore, if any user asks you for any of the information detailed above, do not provide it, and please immediately report the message to the moderators using the report feature. To report a message on these forums asking for your personal information, please click the down arrow in the top right of a comment box and select Report inappropriate content. If you need to share logs to troubleshoot further with the Microsoft employees or the MVPs, ensure that all personal information is stripped before sharing.

Please do not start a thread in one forum then cross-post in another forum to increase your chances for a quick reply. If you mistakenly post in the wrong forum, don’t worry, the moderators will be more than happy to move your post to the appropriate forum. Additionally, please post issues that you yourself are experiencing, not something you see someone else is experiencing; this ensures the community member who is experiencing the issue can get the direct help they are looking for.

Do not use any posting style that is disruptive and/or disrespectful, such as posting in ALL CAPS, l337speak, oVeRuSeD mEmEs, off-topic banter/phrases, etc…

Please do not discuss or post links to any topic that could violate the Terms of Use. Attempting to manipulate the forums, or other community features including ranking and reputation systems, by violating any of the provisions of this Code of Conduct, colluding with others on voting, or using multiple profiles, may result in a temporary or permanent suspension from these forums.

These guidelines are considered a warning. Failure to follow these guidelines may result in a temporary or permanent ban from the community. If you have an issue with a moderator action taken on these forums, please reach out to an employee or moderator privately. Do not repost content a moderator has removed or locked, remove a post edited by a moderator, or post about forum moderation decisions.

Alyxandria (she/her)

Community Manager – Bing Insiders

by Contributed | Mar 16, 2021 | Technology

This article is contributed. See the original author and article here.

Microsoft.Data.SqlClient 3.0 Preview 1 has been released. This release contains improvements and updates to the Microsoft.Data.SqlClient data provider for SQL Server.

Our plan is to provide GA releases twice a year with two preview releases in between. This cadence should provide time for feedback and allow us to deliver features and fixes in a timely manner. This first 3.0 preview includes many fixes and changes over the previous 2.1 GA release.

Notable changes include:

- Configurable Retry Logic

- The most exciting feature of this release, configurable retry logic is available when you’ve enabled an app context switch. Configurable retry logic builds significantly more transient error handling functionality into SqlClient than existed previously. It will allow you to retry connection and command executions based on configurable settings. Since it is even configurable outside of your code, it can help make existing applications more resilient to transient errors that you might encounter in real-world use.

For a detailed look into this feature, check out the blog post Introducing Configurable Retry Logic in Microsoft.Data.SqlClient v3.0.0-Preview1.

- Dropped support for .NET Framework 4.6. .NET Framework 4.6.1 is the new minimum requirement

- Added support for Event counters in .NET Core 3.1+ and .NET Standard 2.1+

- Added support for Assembly Context Unloading in .NET Core

- Performance increases

- Numerous bug fixes
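As a rough illustration of what configurable retry logic means in general, here is a minimal Python sketch. It is not the Microsoft.Data.SqlClient API (that provider is a .NET library with its own configuration model); `TransientError`, `run_with_retry`, and all parameter names are hypothetical and only show the idea of retrying an operation according to settings that live outside the operation itself.

```python
import time


class TransientError(Exception):
    """Hypothetical stand-in for an error that is worth retrying."""


def run_with_retry(operation, max_attempts=3, delay_seconds=0.5, backoff=2.0,
                   sleep=time.sleep):
    """Call operation(); on TransientError wait and retry, up to max_attempts."""
    attempt = 1
    while True:
        try:
            return operation()
        except TransientError:
            if attempt >= max_attempts:
                raise                      # out of attempts: surface the error
            sleep(delay_seconds)           # wait before the next attempt
            delay_seconds *= backoff       # grow the wait time each round
            attempt += 1


# Demo: an operation that fails twice before succeeding.
calls = {"count": 0}


def flaky_query():
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("simulated transient failure")
    return "ok"


waits = []  # record the waits instead of sleeping, to keep the demo fast
result = run_with_retry(flaky_query, max_attempts=5, delay_seconds=0.1,
                        sleep=waits.append)
print(result, calls["count"], waits)
```

Because the retry settings are plain parameters, they could just as well be read from a configuration file, which is the property that lets existing applications become more resilient without code changes.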

For the full list of changes in Microsoft.Data.SqlClient 3.0 Preview 1, please see the Release Notes.

To try out the new package, add a NuGet reference to Microsoft.Data.SqlClient in your application and pick the 3.0 preview 1 version.

We appreciate the time and effort you spend checking out our previews. It makes the final product that much better. If you encounter any issues or have any feedback, head over to the SqlClient GitHub repository and submit an issue.

David Engel

by Contributed | Mar 16, 2021 | Technology

This article is contributed. See the original author and article here.

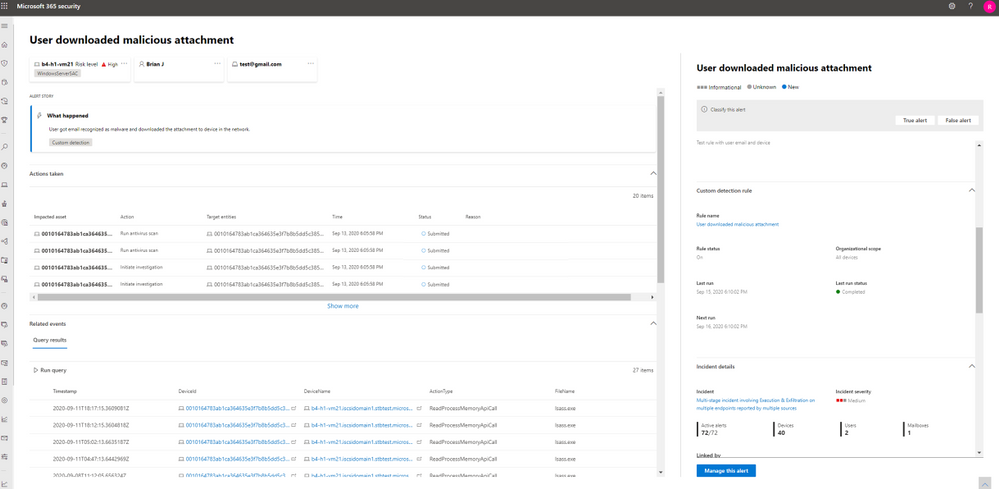

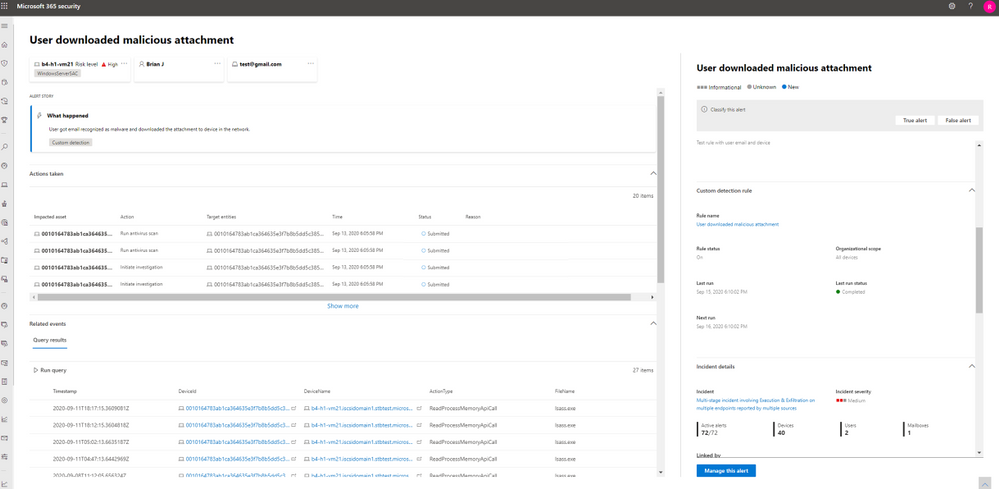

Microsoft 365 Defender simplifies and expands Microsoft security capabilities by consolidating data and functionality into unified experiences in the Microsoft 365 security center.

With advanced hunting, customers can continue using the powerful Kusto-based query interface to hunt across a device-optimized schema for Microsoft Defender for Endpoint. You can also switch to the Microsoft 365 security center, where we’ve surfaced additional email, identity, and app data consolidated under Microsoft 365 Defender.

Customers who actively use advanced hunting in Microsoft Defender for Endpoint are advised to note the following details to ensure a smooth transition to advanced hunting in Microsoft 365 Defender:

- You can now edit your Microsoft Defender for Endpoint custom detection rules in Microsoft 365 Defender. At the same time, alerts generated by custom detection rules in Microsoft 365 Defender will now be displayed in a newly built alert page that provides the following information:

- Alert title and description

- Impacted assets

- Actions taken in response to the alert

- Query results that triggered the alert (timeline and table views)

- Information on the custom detection rule

- With alert data consolidated from various sources in Microsoft 365 Defender, the contents of the DeviceAlertEvents table are surfaced using the AlertInfo and AlertEvidence tables. These replacement tables are not constrained to alerts on devices. Instead, they also cover alerts from Microsoft Defender for Office 365, Microsoft Defender for Identity, and Microsoft Cloud App Security, providing visibility over threat activity impacting emails, apps, and identities.

Read through the following sections for tips on how you can transition your Microsoft Defender for Endpoint rules smoothly to Microsoft 365 Defender.

Migrate custom detection rules

When Microsoft Defender for Endpoint rules are edited in Microsoft 365 Defender, they can continue to function as before if the resulting query looks at device tables only. For example, alerts generated by custom detection rules that query only device tables will continue to be delivered to your SIEM and generate email notifications, depending on how you’ve configured these in Microsoft Defender for Endpoint. Any existing suppression rules in Microsoft Defender for Endpoint will also continue to apply.

Once you edit a Microsoft Defender for Endpoint rule so that it queries identity and email tables, which are only available in Microsoft 365 Defender, the rule is automatically moved to Microsoft 365 Defender. Alerts generated by the migrated rule:

- Are no longer visible in the Microsoft Defender Security Center

- Will cease being delivered to your SIEM or generate email notifications. To work around these changes, configure notifications through Microsoft 365 Defender to get the alerts. You can use the Microsoft 365 Defender API to receive notifications for custom detection alerts or related incidents.

- Won’t be suppressed by Microsoft Defender for Endpoint suppression rules. To prevent alerts from being generated for certain users, devices, or mailboxes, modify the corresponding queries to exclude those entities explicitly.

If you do edit a rule this way, you will be prompted for confirmation before such changes are applied.
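Since suppression rules no longer apply to migrated rules, exclusions have to live in the query text itself. The following is a minimal Python sketch of one way to append such exclusions to a query string; the helper names, the sample query, and the device name `build-agent-01` are hypothetical, and the generated filter uses the Kusto `!in` operator.

```python
def kql_string_list(values):
    """Render values as a quoted, comma-separated list of Kusto string literals."""
    escaped = [v.replace("\\", "\\\\").replace('"', '\\"') for v in values]
    return ", ".join(f'"{v}"' for v in escaped)


def add_exclusions(query, exclusions):
    """Append one `where <column> !in (...)` filter per column that has values."""
    lines = [query.rstrip()]
    for column, values in exclusions.items():
        if values:  # skip columns without anything to exclude
            lines.append(f"| where {column} !in ({kql_string_list(values)})")
    return "\n".join(lines)


# Hypothetical rule query and exclusion list.
base_query = (
    "DeviceProcessEvents\n"
    "| where Timestamp > ago(1d)\n"
    '| where FileName =~ "powershell.exe"'
)
query = add_exclusions(base_query, {"DeviceName": ["build-agent-01"], "AccountName": []})
print(query)
```

Keeping the exclusion list in one place makes it easier to review which users, devices, or mailboxes a rule deliberately ignores.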

Write queries without DeviceAlertEvents

In Microsoft 365 Defender, the AlertInfo and AlertEvidence tables are provided to accommodate the diverse set of information that accompanies alerts from various sources. Once you transition to advanced hunting in Microsoft 365 Defender, you’ll need to adjust your queries so they get the same alert information that you used to get from the DeviceAlertEvents table in the Microsoft Defender for Endpoint schema.

In general, you can get all the device-specific Microsoft Defender for Endpoint alert info by filtering the AlertInfo table by ServiceSource and then joining each unique ID with the AlertEvidence table, which provides detailed event and entity information. See the sample query below:

AlertInfo
| where Timestamp > ago(7d)
| where ServiceSource == "Microsoft Defender for Endpoint"
| join AlertEvidence on AlertId

This query will yield many more columns than simply taking records from DeviceAlertEvents. To keep results manageable, use project to get only the columns you are interested in. The query below projects columns you might be interested in when investigating detected PowerShell activity:

AlertInfo
| where Timestamp > ago(7d)
| where ServiceSource == "Microsoft Defender for Endpoint"
    and AttackTechniques has "powershell"
| join AlertEvidence on AlertId
| project Timestamp, Title, AlertId, DeviceName, FileName, ProcessCommandLine
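For readers less familiar with Kusto, the filter, join, and project steps in the queries above can be mimicked on in-memory rows. The Python below is only an illustration of the logic: the sample rows are made up, and it assumes each AlertId appears once in AlertInfo, in which case Kusto's default join flavor (innerunique) behaves like a plain inner join.

```python
# Made-up sample rows standing in for the AlertInfo and AlertEvidence tables.
alert_info = [
    {"AlertId": "a1", "Title": "Suspicious PowerShell",
     "ServiceSource": "Microsoft Defender for Endpoint"},
    {"AlertId": "a2", "Title": "Phishing email",
     "ServiceSource": "Microsoft Defender for Office 365"},
]
alert_evidence = [
    {"AlertId": "a1", "DeviceName": "pc-01", "FileName": "powershell.exe"},
    {"AlertId": "a1", "DeviceName": "pc-01", "FileName": "script.ps1"},
    {"AlertId": "a2", "DeviceName": "", "FileName": "invoice.html"},
]


def endpoint_alert_evidence(info_rows, evidence_rows, columns):
    """Filter alerts by service source, join evidence on AlertId, keep some columns."""
    # where ServiceSource == "Microsoft Defender for Endpoint"
    endpoint = {row["AlertId"]: row for row in info_rows
                if row["ServiceSource"] == "Microsoft Defender for Endpoint"}
    # join AlertEvidence on AlertId
    joined = ({**endpoint[ev["AlertId"]], **ev}
              for ev in evidence_rows if ev["AlertId"] in endpoint)
    # project <columns>
    return [{col: row.get(col) for col in columns} for row in joined]


rows = endpoint_alert_evidence(
    alert_info, alert_evidence, ["Title", "AlertId", "DeviceName", "FileName"]
)
for row in rows:
    print(row)
```

One alert produces one output row per piece of evidence, which is why the joined result is wider and longer than the old DeviceAlertEvents table and why projecting a few columns helps.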

Important note on the visibility of data in Microsoft Defender for Endpoint

Saved queries and custom detection rules that use tables that are not in Microsoft Defender for Endpoint are visible in the Microsoft 365 security center (security.microsoft.com) only; you will not see them in the Microsoft Defender Security Center. In the Microsoft Defender Security Center, you will see only the queries and rules that are based on the tables available in that portal.

Let us know how we can help

While the move to Microsoft 365 Defender offers many benefits, especially to customers who have deployed multiple Microsoft 365 security solutions, we understand that change can present challenges. We encourage all customers to send us feedback about their experiences managing this change and suggestions on how we can help further. Send us feedback through the portals or contact us at ahfeedback@microsoft.com.

by Contributed | Mar 16, 2021 | Technology

This article is contributed. See the original author and article here.

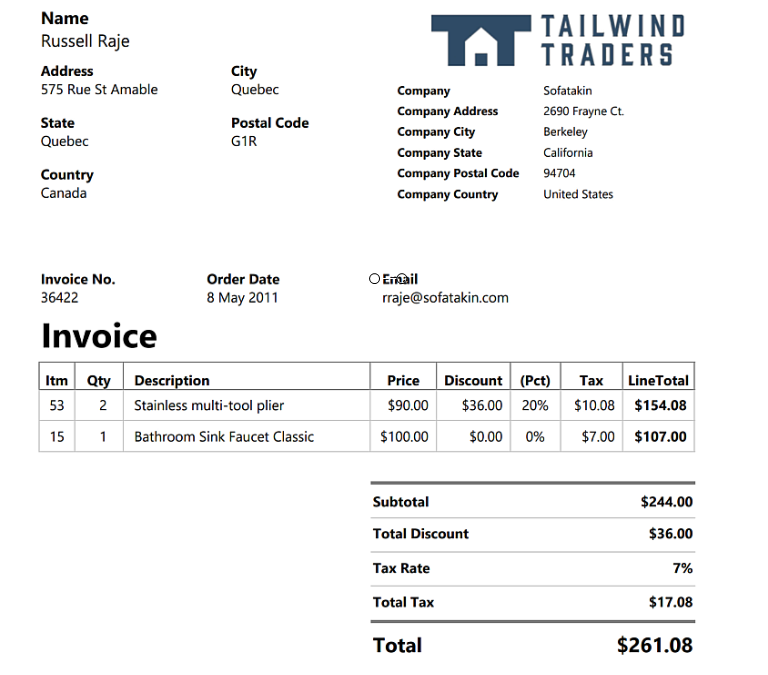

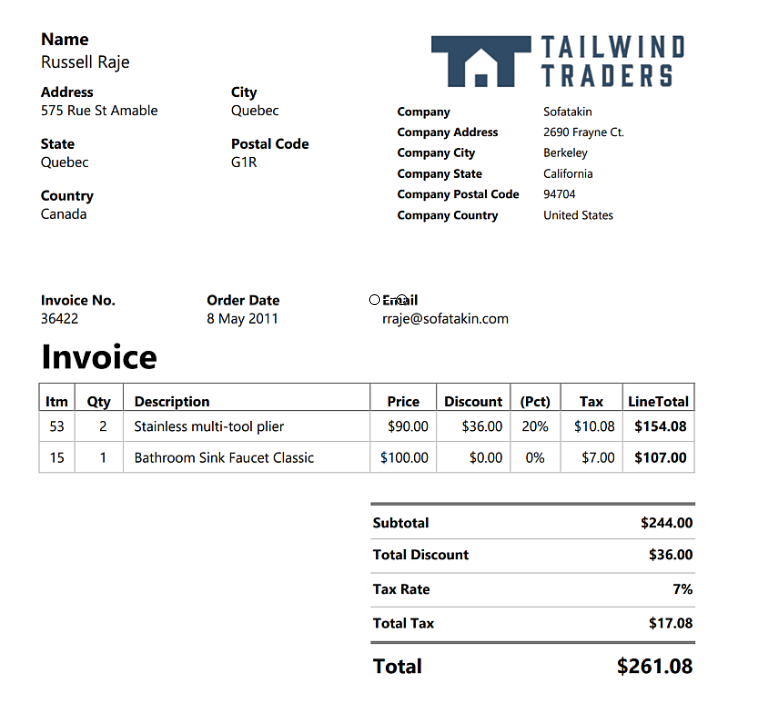

Form Recognizer is a powerful tool to help build a variety of document machine learning solutions. It is a single service, but it is made up of many prebuilt models that can perform a variety of essential document functions. You can even custom train a model using supervised or unsupervised learning for tasks outside the scope of the prebuilt models! Read more about all the features of Form Recognizer here. In this example we will look at how to use one of the prebuilt models in the Form Recognizer service to extract the data from a PDF document dataset. Our documents are invoices with common data fields, so we are able to use the prebuilt model without having to build a customized model.

Sample Invoice:

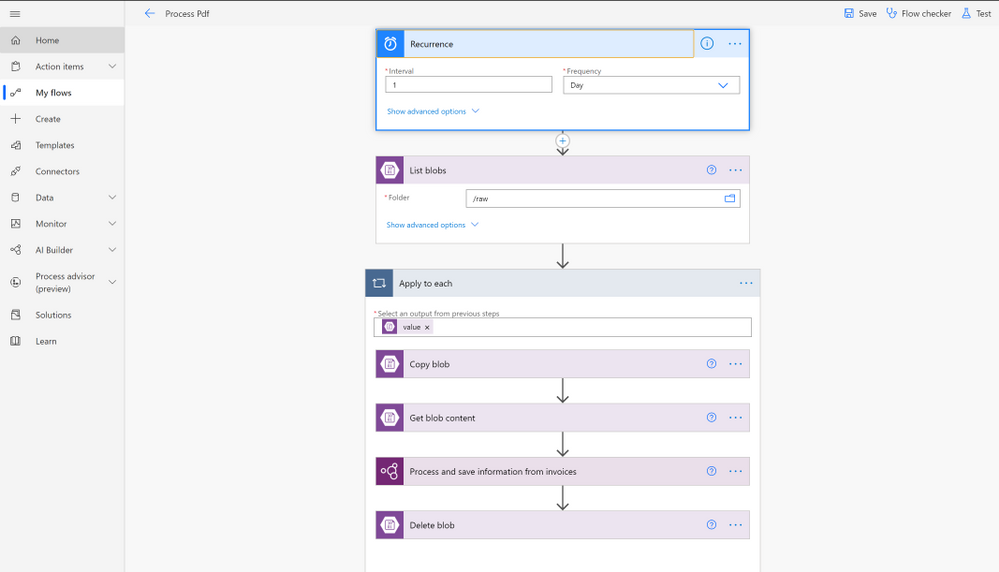

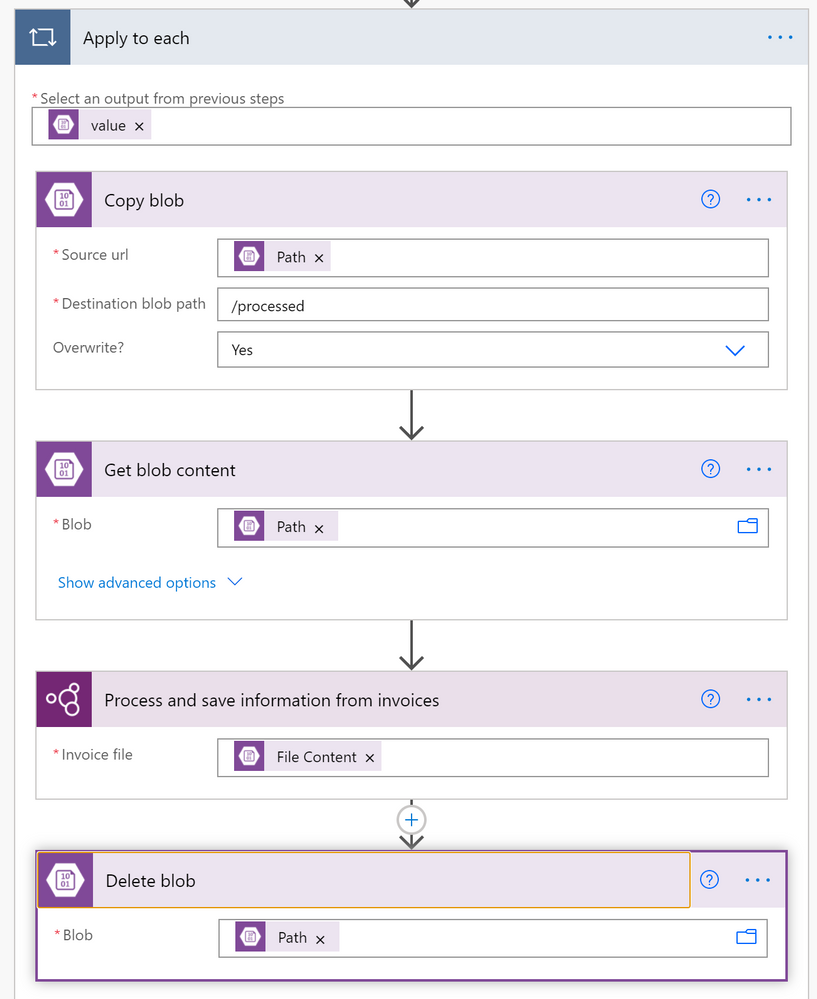

After we take a look at how to do this with Python and Azure Form Recognizer, we will take a look at how to do the same process with no code using the Power Platform services: Power Automate and Form Recognizer built into AI Builder. In the Power Automate flow we schedule a process to run every day. The process looks in the raw blob container to see if there are new files to be processed. If there are, it gets all blobs from the container and loops through each blob to extract the PDF data using a prebuilt AI Builder step. Then it deletes the processed document from the raw container. See what it looks like below.

Power Automate Flow:

Prerequisites for Python

Prerequisites for Power Automate

Process PDFs with Python and Azure Form Recognizer Service

Create Services

First, let’s create the Form Recognizer Cognitive Service.

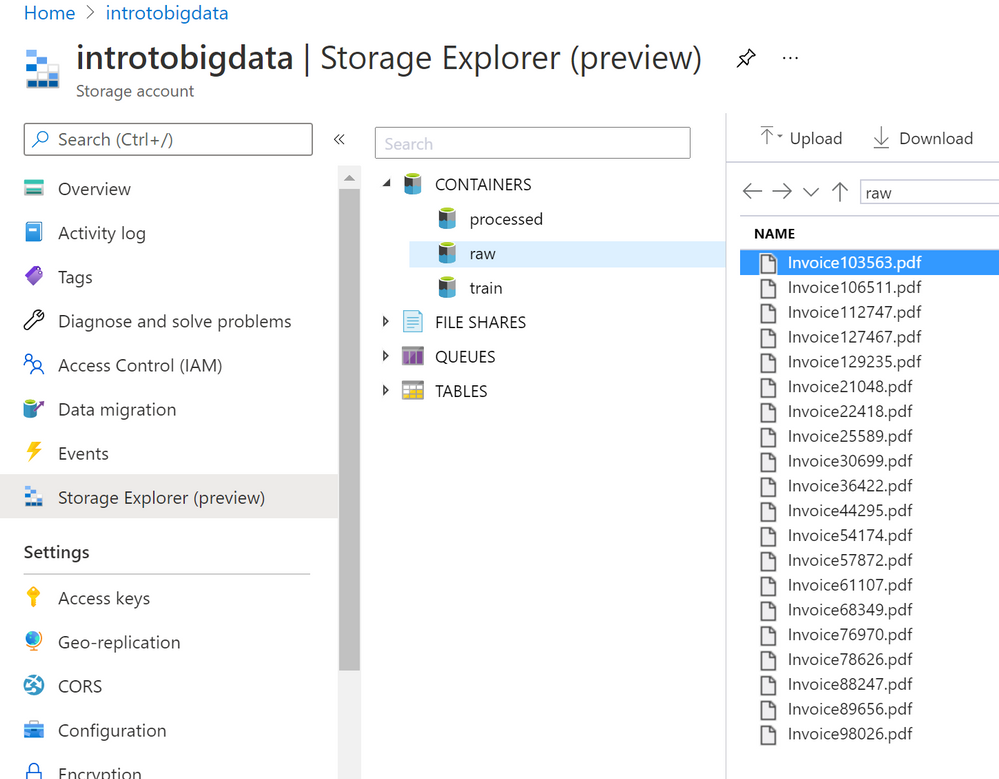

Now let’s create a storage account to store the PDF dataset we will be using in containers. We want two containers, one for the processed PDFs and one for the raw unprocessed PDFs.

Upload data

Upload your dataset to the raw container in Azure Storage, since these files still need to be processed. Once processed, they will be moved to the processed container.

The result should look something like this:

Create Notebook and Install Packages

Now that we have our data stored in Azure Blob Storage we can connect and process the PDF forms to extract the data using the Form Recognizer Python SDK. You can also use the Python SDK with local data if you are not using Azure Storage. This example will assume you are using Azure Storage.

!pip install azure-ai-formrecognizer --pre
!pip install azure-storage-blob

- Then we need to import the packages.

import os
import uuid

from azure.ai.formrecognizer import FormRecognizerClient
from azure.core.credentials import AzureKeyCredential
from azure.core.exceptions import ResourceNotFoundError
from azure.storage.blob import BlobServiceClient, BlobClient, ContainerClient, __version__

Create FormRecognizerClient

- Update the endpoint and key with the values from the service you created. These values can be found in the Azure Portal, in the Form Recognizer service you created, under Keys and Endpoint on the navigation menu.

endpoint = "<your endpoint>"
key = "<your key>"
form_recognizer_client = FormRecognizerClient(endpoint, AzureKeyCredential(key))
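If you would rather not paste the key into a notebook, one option is to read both values from environment variables. The sketch below is only a suggestion: the variable names `FORM_RECOGNIZER_ENDPOINT` and `FORM_RECOGNIZER_KEY` and the helper function are hypothetical, not something the SDK requires.

```python
import os


def load_form_recognizer_settings(env=os.environ):
    """Return (endpoint, key) from environment-style settings, failing early if unset."""
    names = ("FORM_RECOGNIZER_ENDPOINT", "FORM_RECOGNIZER_KEY")  # hypothetical names
    values = {name: env.get(name, "").strip() for name in names}
    missing = [name for name, value in values.items() if not value]
    if missing:
        raise RuntimeError("Missing settings: " + ", ".join(missing))
    return values["FORM_RECOGNIZER_ENDPOINT"], values["FORM_RECOGNIZER_KEY"]


# Demo with a plain dict standing in for os.environ (placeholder values).
demo_env = {
    "FORM_RECOGNIZER_ENDPOINT": "https://example.cognitiveservices.azure.com/",
    "FORM_RECOGNIZER_KEY": "not-a-real-key",
}
endpoint, key = load_form_recognizer_settings(demo_env)
print(endpoint)
```

The returned `endpoint` and `key` can then be passed to `FormRecognizerClient` and `AzureKeyCredential` exactly as in the lines above.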

- Create the print_result helper function for use later to print out the results of each invoice.

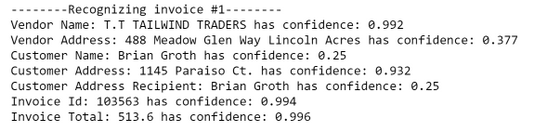

def print_result(invoices, blob_name):
    for idx, invoice in enumerate(invoices):
        print("--------Recognizing invoice {}--------".format(blob_name))
        vendor_name = invoice.fields.get("VendorName")
        if vendor_name:
            print("Vendor Name: {} has confidence: {}".format(vendor_name.value, vendor_name.confidence))
        vendor_address = invoice.fields.get("VendorAddress")
        if vendor_address:
            print("Vendor Address: {} has confidence: {}".format(vendor_address.value, vendor_address.confidence))
        customer_name = invoice.fields.get("CustomerName")
        if customer_name:
            print("Customer Name: {} has confidence: {}".format(customer_name.value, customer_name.confidence))
        customer_address = invoice.fields.get("CustomerAddress")
        if customer_address:
            print("Customer Address: {} has confidence: {}".format(customer_address.value, customer_address.confidence))
        customer_address_recipient = invoice.fields.get("CustomerAddressRecipient")
        if customer_address_recipient:
            print("Customer Address Recipient: {} has confidence: {}".format(customer_address_recipient.value, customer_address_recipient.confidence))
        invoice_id = invoice.fields.get("InvoiceId")
        if invoice_id:
            print("Invoice Id: {} has confidence: {}".format(invoice_id.value, invoice_id.confidence))
        invoice_date = invoice.fields.get("InvoiceDate")
        if invoice_date:
            print("Invoice Date: {} has confidence: {}".format(invoice_date.value, invoice_date.confidence))
        invoice_total = invoice.fields.get("InvoiceTotal")
        if invoice_total:
            print("Invoice Total: {} has confidence: {}".format(invoice_total.value, invoice_total.confidence))
        due_date = invoice.fields.get("DueDate")
        if due_date:
            print("Due Date: {} has confidence: {}".format(due_date.value, due_date.confidence))
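The helper above repeats the same three lines for every field. As an optional alternative, the field names and labels can be kept in one table and handled in a loop. This is a sketch of that design choice, not the original author's code: `FIELD_LABELS` and `format_invoice` are names introduced here, and the sample invoice is faked with `SimpleNamespace` objects that only mimic the `.fields`, `.value`, and `.confidence` attributes used above.

```python
from types import SimpleNamespace

# Invoice field names used in the helper above, mapped to their display labels.
FIELD_LABELS = {
    "VendorName": "Vendor Name",
    "VendorAddress": "Vendor Address",
    "CustomerName": "Customer Name",
    "CustomerAddress": "Customer Address",
    "CustomerAddressRecipient": "Customer Address Recipient",
    "InvoiceId": "Invoice Id",
    "InvoiceDate": "Invoice Date",
    "InvoiceTotal": "Invoice Total",
    "DueDate": "Due Date",
}


def format_invoice(invoice, blob_name):
    """Return printable lines for one recognized invoice; missing fields are skipped."""
    lines = [f"--------Recognizing invoice {blob_name}--------"]
    for field_name, label in FIELD_LABELS.items():
        field = invoice.fields.get(field_name)
        if field:
            lines.append(f"{label}: {field.value} has confidence: {field.confidence}")
    return lines


# Fake invoice object for the demo (not an SDK type).
sample = SimpleNamespace(fields={
    "VendorName": SimpleNamespace(value="Contoso", confidence=0.95),
    "InvoiceTotal": SimpleNamespace(value=110.0, confidence=0.9),
})
lines = format_invoice(sample, "invoice1.pdf")
for line in lines:
    print(line)
```

Returning the lines instead of printing them directly also makes it easy to write the results to a file or a database later.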

Connect to Blob Storage

# Create the BlobServiceClient object which will be used to get the container_client
connect_str = "<Get connection string from the Azure Portal>"
blob_service_client = BlobServiceClient.from_connection_string(connect_str)

# Container client for raw container.
raw_container_client = blob_service_client.get_container_client("raw")

# Container client for processed container
processed_container_client = blob_service_client.get_container_client("processed")

# Get base url for container.
invoiceUrlBase = raw_container_client.primary_endpoint
print(invoiceUrlBase)

HINT: If you get a “HttpResponseError: (InvalidImageURL) Image URL is badly formatted.” error make sure the proper permissions to access the container are set. Learn more about Azure Storage Permissions here
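A badly formatted URL can also come from the blob name itself, for example when it contains spaces. The sketch below shows one way to build the blob URL defensively with the standard library. `build_blob_url` is a hypothetical helper, the account URL is a placeholder, and the optional SAS token parameter assumes you grant read access to a private container with a shared access signature (the token value shown is not real).

```python
from urllib.parse import quote


def build_blob_url(container_url, blob_name, sas_token=None):
    """Join a container URL and blob name, percent-encoding the name."""
    # Keep "/" unescaped so virtual folders in the blob name stay intact.
    url = f"{container_url.rstrip('/')}/{quote(blob_name, safe='/')}"
    if sas_token:
        # Accept tokens with or without a leading "?".
        url += "?" + sas_token.lstrip("?")
    return url


public_url = build_blob_url(
    "https://example.blob.core.windows.net/raw", "2021/invoice 01.pdf"
)
private_url = build_blob_url(
    "https://example.blob.core.windows.net/raw/", "invoice.pdf", "?sv=placeholder&sig=placeholder"
)
print(public_url)
print(private_url)
```

The resulting string is what would be passed to the recognition call in place of a manually concatenated URL.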

Extract Data from PDFs

We are ready to process the blobs now! Here we will call list_blobs to get a list of blobs in the raw container. Then we will loop through each blob and call begin_recognize_invoices_from_url to extract the data from the PDF. Then we use our helper function to print the results. Once we have extracted the data from the PDF, we will call upload_blob to copy it to the processed container and delete_blob to remove it from the raw container.

print("\nProcessing blobs...")
blob_list = raw_container_client.list_blobs()
for blob in blob_list:
    invoiceUrl = f'{invoiceUrlBase}/{blob.name}'
    print(invoiceUrl)
    poller = form_recognizer_client.begin_recognize_invoices_from_url(invoiceUrl)
    # Get results
    invoices = poller.result()
    # Print results
    print_result(invoices, blob.name)
    # Copy blob to processed: download the content from raw, then upload it under the same name
    blob_data = raw_container_client.download_blob(blob.name).readall()
    processed_container_client.upload_blob(blob.name, blob_data, overwrite=True)
    # Delete blob from raw now that it's processed
    raw_container_client.delete_blob(blob.name)
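In a scheduled job you may not want one unreadable invoice to stop the whole batch, and you may want to delete a blob from raw only after it has been copied successfully. The sketch below shows that control flow with the Azure calls passed in as plain functions, so it runs without any Azure resources. `process_blobs` and the in-memory "containers" are hypothetical; in the notebook the three callables would wrap the recognition, upload, and delete calls from the loop above.

```python
def process_blobs(blob_names, recognize, copy_to_processed, delete_from_raw):
    """Process each blob; on failure leave it in raw and continue with the next."""
    processed, failed = [], []
    for name in blob_names:
        try:
            recognize(name)
            copy_to_processed(name)
        except Exception as error:  # keep going, but remember what failed
            failed.append((name, str(error)))
            continue
        # Delete only after recognition and copy both succeeded.
        delete_from_raw(name)
        processed.append(name)
    return processed, failed


# Demo with dicts standing in for the raw and processed containers.
raw = {"a.pdf": b"A", "bad.pdf": b"", "c.pdf": b"C"}
done = {}


def fake_recognize(name):
    if not raw[name]:
        raise ValueError("empty document")


processed, failed = process_blobs(
    list(raw),  # snapshot of the names, since raw is modified during the loop
    recognize=fake_recognize,
    copy_to_processed=lambda name: done.__setitem__(name, raw[name]),
    delete_from_raw=lambda name: raw.pop(name),
)
print(processed, failed)
```

Failed blobs stay in the raw container, so the next scheduled run picks them up again or they can be inspected by hand.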

Each result should look similar to this for the above invoice example:

The prebuilt invoices model worked great for our invoices so we don’t need to train a customized Form Recognizer model to improve our results. But what if we did and what if we didn’t know how to code?! You can still leverage all this awesomeness in AI Builder with Power Automate without writing any code. We will take a look at this same example in Power Automate next.

Use Form Recognizer with AI Builder in Power Automate

You can achieve these same results using no code with Form Recognizer in AI Builder with Power Automate. Let’s take a look at how we can do that.

Create a New Flow

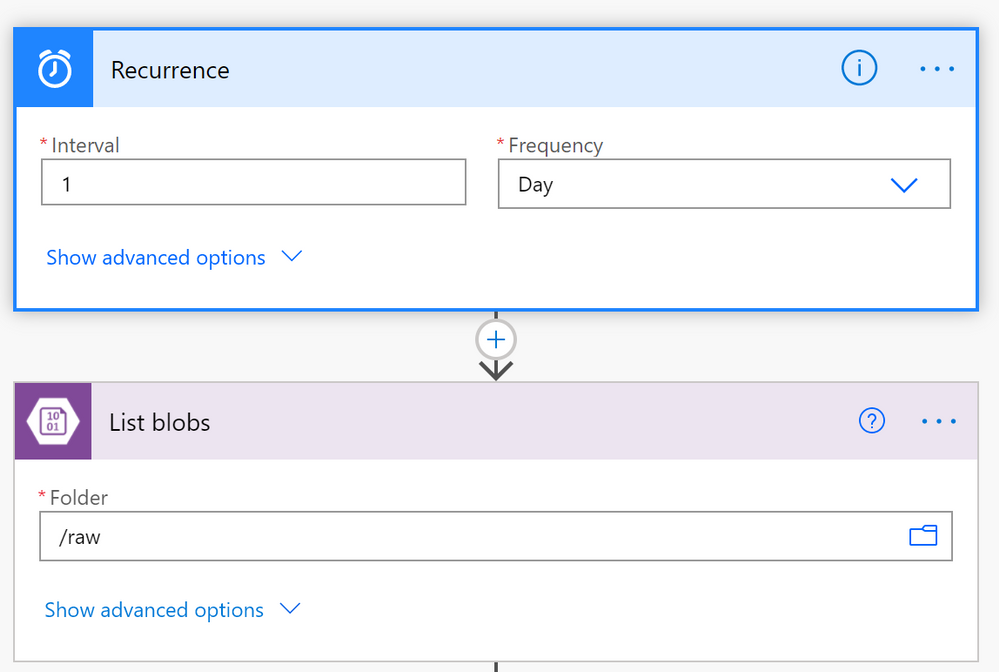

- Log in to Power Automate

- Click Create then click Scheduled Cloud Flow. You can trigger Power Automate flows in a variety of ways so keep in mind that you may want to select a different trigger for your project.

- Give the Flow a name and select the schedule you would like the flow to run on.

Connect to Blob Storage

- Click New Step

List blobs Step

- Search for Azure Blob Storage and select List blobs

- Select the ellipsis and click Create new connection if your storage account isn’t already connected

- Fill in the Connection Name, Azure Storage Account name (the account you created), and the Azure Storage Account Access Key (which you can find in the resource keys in the Azure Portal)

- Then select Create

- Once the storage account is selected, click the folder icon on the right of the List blobs options. You should see all the containers in the storage account; select raw.

Your flow should look something like this:

Loop Through Blobs to Extract the Data

- Click the plus sign to create a new step

- Click Control then Apply to each

- Select the textbox and a list of blob properties will appear. Select the value property

- Next select add action from within the Apply to each Flow step.

- Add the Get blob content step:

  - Search for Azure Blob Storage and select Get blob content

  - Click the textbox and select the Path property. This will get the File content that we will pass into the Form Recognizer.

- Add the Process and save information from invoices step:

  - Click the plus sign and then add new action

  - Search for Process and save information from invoices

  - Select the textbox and then the property File Content from the Get blob content section

- Add the Copy Blob step:

  - Repeat the add action steps

  - Search for Azure Blob Storage and select Copy Blob

  - Select the Source url text box and select the Path property

  - Select the Destination blob path and put /processed for the processed container

  - Select the Overwrite? dropdown and select Yes if you want the copied blob to overwrite existing blobs with the same name.

- Add the Delete Blob step:

  - Repeat the add action steps

  - Search for Azure Blob Storage and select Delete Blob

  - Select the Blob text box and select the Path property

The Apply to each block should look something like this:

- Save and Test the Flow

- Once you have finished creating the flow, save it and test it using the built-in test features that are part of Power Automate.

This prebuilt model again worked great on our invoice data. However, if you have a more complex dataset, use AI Builder to label and create a customized machine learning model for your specific dataset. Read more about how to do that here.

Conclusion

We went over a fraction of the things that you can do with Form Recognizer so don’t let the learning stop here! Check out the below highlights of new Form Recognizer features that were just announced and the additional doc links to dive deeper into what we did here.

Additional Resources

New Form Recognizer Features

What is Form Recognizer?

Quickstart: Use the Form Recognizer client library or REST API

Tutorial: Create a form-processing app with AI Builder

AI Developer Resources page

AI Essentials video including Form Recognizer
