by Contributed | May 3, 2021 | Technology

This article is contributed. See the original author and article here.

Below is the latest information on Project for the web. If you use on-premises Project and want to learn about Project 2021, please see below or go to the article.

New Features:

- Email assignment notifications ~ Your teammates will receive an email whenever they are assigned a task in Project for the web. Edit your notification settings by clicking the gear icon in the top Office ribbon and then clicking “Notification settings” under the Project heading.

- Actions on Project Home ~ Copy, rename, export, or delete your project directly from Project Home, without needing to open it in the browser.

- Open task from Project Power BI Report ~ You can now directly open task details in Project Board from the Project Power BI ‘Task Overview’ page. You can download the updated content pack available here.

Upcoming Features

- Import from Project desktop ~ Import your .mpp files from Project desktop to Project for the web.

- Filtering on Board & Timeline views ~ Find your tasks quickly on the Board and Timeline views by filtering by keyword or assignee.

Announcing Microsoft Office 2021

The entire Microsoft Office team is excited to announce the commercial preview of Microsoft Office Long Term Servicing Channel (LTSC) for Windows and Office 2021 for Mac. You can learn more about this preview by reading the Tech Community blog post here.

Microsoft Project Trivia

Last Month:

Thanks to everyone who put their guesses in the comments last month!

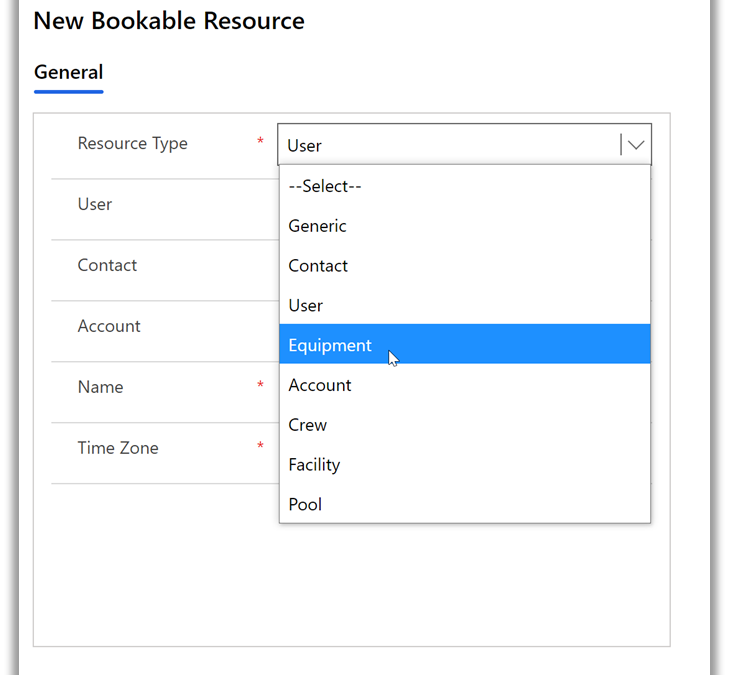

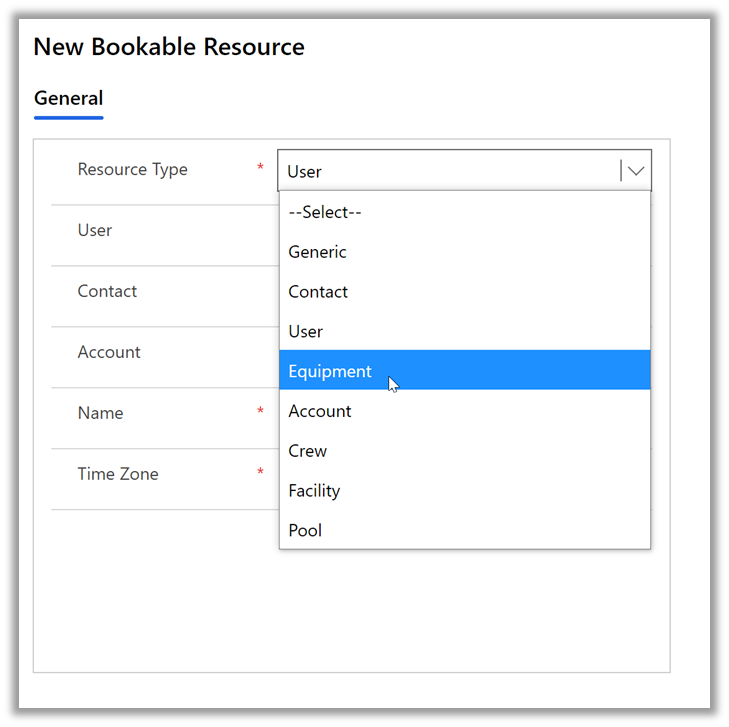

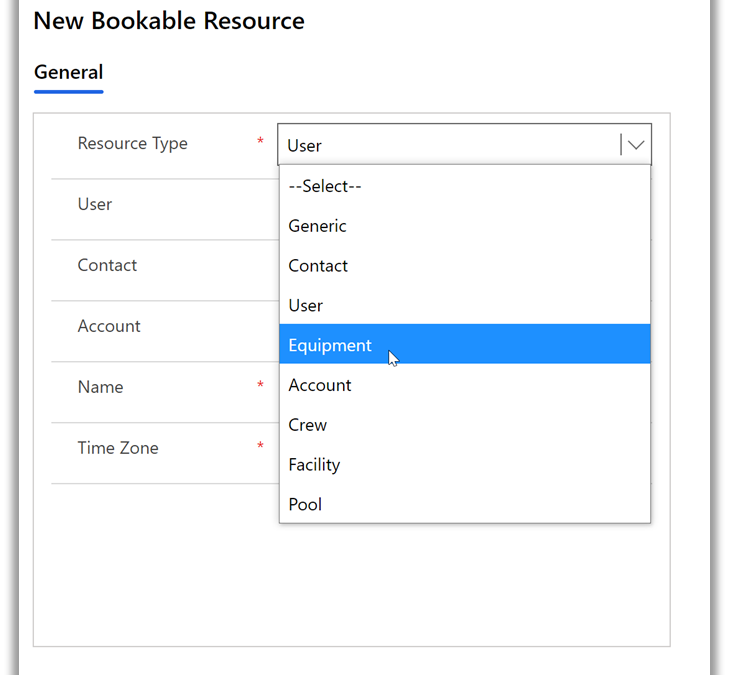

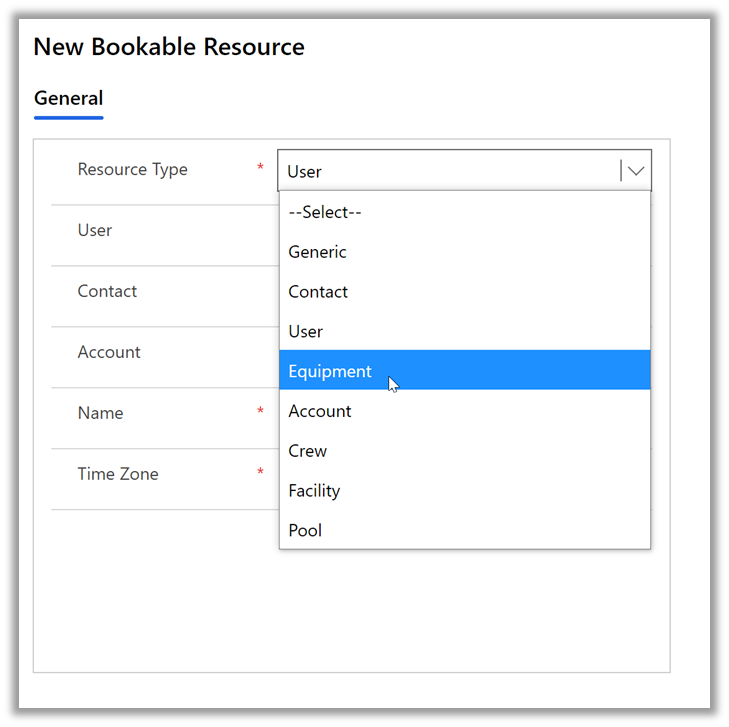

Question: Which type of resource is NOT supported by Project for the web?

A:

- Equipment

- Placeholder

- Crew

- Generic

The correct answer was Placeholder. You can create non-user resources in Project for the web through Power Apps.

Learn more about how to create non-user resources in Project for the web here.

This Month:

QUESTION: Users with Project Plan 3 or 5 can create roadmaps including all their project information. What year did Roadmap in Microsoft Project first become available to users?

Feedback

Microsoft Project loves feedback! Feel free to leave feedback in the comments of this blog or give us feedback through our in-app feedback button. For the latter option, be sure to leave your email address so we can reach out with any questions or updates we may have.

Call summary

April’s call, hosted by David Chesnut, featured the following presenters and topics:

• Richard Taylor discussed different support options available on Microsoft 365, and how to submit a new support ticket.

• Sudhi Ramamurthy shared news about updates to APIs, Recorder, and Admin-control for Office Scripts.

• Leslie Black (Analysis Cloud Limited) demonstrated a TICTACUFO application he created with Office Scripts.

• David Chesnut demonstrated a new PnP sample that shows how to handle event-based activation and set the signature in Outlook.

To watch the call, tap the following link.

Office Add-ins community call – April 2021 – YouTube

Q&A (Questions & Answers)

We welcome you to submit questions and topic suggestions prior to each call by using our short survey form.

Support options

When we try to open tickets in the admin center regarding SharePoint Development they are getting immediately closed with the comment “we don’t provide support for developer issues”. Is that different for Office Add-ins?

The comment you see applies if you don’t have a Premier account, or Enterprise SKU. If you do have a Premier account and are seeing this comment, please reach out to us on the next community call web chat so we can follow up with you.

What level of support can I get for my tenant from the Microsoft 365 Developer Program?

The Microsoft 365 Developer Program provides standard Office 365 support, and does not include developer support. You can get help with developer questions at Microsoft Q&A for Office Add-ins, or at Stack Overflow [office-js].

Office Scripts

ServiceHub is not enabled for my Enterprise ID. Is it possible for an admin to configure it without admin access to the rest of the Office 365 tenant?

If you have a Premier account, we can reach out to your ADC/TAM and ensure you have access. If you just have an Enterprise subscription, use the O365 admin instead. Either should work.

Are Office Scripts for Excel on the web available to Microsoft 365 family accounts or just Enterprise?

Currently, Office Scripts are only available on Enterprise, though we’re exploring expanding availability to other licenses.

Miscellaneous questions

When will the current Outlook preview requirement set, including event-based activation, be available in production?

We don’t have a specific date yet, but we hope to make it available soon.

The Outlook REST API is going to be decommissioned. Is it safe to continue using EWS (Exchange Web Services) with Outlook add-ins going forward or is it also at risk of being decommissioned?

At present, there is no plan to decommission EWS.

Is it possible to port Office.addin.showAsTaskpane common API function to Outlook JS?

This is a great suggestion! Can you please provide more details on this idea and your scenario at Microsoft 365 Developer Platform – Microsoft Tech Community?

One of our custom functions must access data (a table) not specified in the arguments. We’ve noticed that calling Excel.run to request that data through the Excel request context on every formula invocation leads to concurrency issues (RichApi.Error: Wait until the previous call completes.) Is there a flexible way to get this to work?

There is a code approach used in GitHub issue 483 in office-js that may help you. If that doesn’t work for your scenario, please post a question with more information at Microsoft Q&A: Office JavaScript API.
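One general way to avoid overlapping requests is to let concurrent invocations share a single in-flight load. The following is a minimal sketch of that idea, not necessarily the approach described in the GitHub issue. The helper name `createSharedLoader` is hypothetical, and `loadFn` stands in for whatever function wraps your single `Excel.run` call.

```javascript
// Hypothetical helper: wraps an async loader so that overlapping callers
// share one in-flight request instead of each starting their own.
function createSharedLoader(loadFn) {
  let inFlight = null;
  return function load() {
    if (inFlight === null) {
      inFlight = Promise.resolve()
        .then(loadFn)
        .finally(() => {
          inFlight = null; // allow a fresh load after this one settles
        });
    }
    return inFlight;
  };
}

// In an add-in, loadFn would be the only place that calls Excel.run, e.g.:
//   const loadTable = createSharedLoader(() => Excel.run(async (context) => { /* load the table */ }));
// Each custom function invocation then awaits loadTable() instead of calling Excel.run itself.
```

With this pattern, a burst of formula recalculations triggers one request for the table data, and a later recalculation starts a new request once the previous one has settled.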

In Excel custom functions, is there a way to pause the calculations and resume later (other than setting calculation mode to manual)? End users want to pass an additional flag to the custom function that indicates if it should run or not run.

We suggest switching to manual recalc mode, instead of using an argument to the function. Our goal is for Excel custom functions to run using the same paradigm as built-in functions. We don’t have a design pattern where we pause recalc based on an argument to a function. It’s clearer for users if you ask them to change to manual recalc, which you could implement in the task pane UI. Then, when they are finished, you can switch back to automatic recalculation and the custom functions will run.
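As a rough sketch of that suggestion, task pane code could switch the workbook to manual calculation, do its work, and then switch back. The helper names `setCalculationMode` and `runWithManualRecalc` are hypothetical, and `Excel` is passed in as a parameter only so the sketch can run outside an add-in; in a task pane you would use the global `Excel` object from Office.js.

```javascript
// Sets the workbook calculation mode through the Excel JavaScript API.
async function setCalculationMode(Excel, mode) {
  await Excel.run(async (context) => {
    context.workbook.application.calculationMode = mode;
    await context.sync();
  });
}

// Runs `work` while the workbook is in manual recalc mode, then restores
// automatic recalculation even if `work` throws.
async function runWithManualRecalc(Excel, work) {
  await setCalculationMode(Excel, Excel.CalculationMode.manual);
  try {
    return await work();
  } finally {
    await setCalculationMode(Excel, Excel.CalculationMode.automatic);
  }
}
```

A task pane button handler could call `runWithManualRecalc(Excel, ...)` around the user's edits, so the custom functions recalculate once when automatic mode is restored.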

In Excel custom functions, you can get the address of the calling cell from the invocation object. Is there a way to get the current value from the cell along with the address?

We suggest that you use the onCalculated event which is called when custom functions are calculated. Here’s an example:

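    async function onCalculated(event) {
      await Excel.run(async (context) => {
        console.log(event.address);
        // "A13" is an example of the cell that has the custom function.
        // Replace it with a real cell address.
        if (event.address === "A13") {
          // Get the calculated range on the worksheet that raised the event,
          // then load its values before reading them.
          const sheet = context.workbook.worksheets.getItem(event.worksheetId);
          const range = sheet.getRange(event.address);
          range.load("values");
          await context.sync();
          console.log(`The range value was "${range.values}".`);
        }
      });
    }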

Can the Microsoft Graph API be used by an add-in without a dedicated server to proxy requests to the Graph API? We’re trying to replace the usage of the Outlook REST API in an add-in that makes API calls from the client-side. We are struggling to find tutorials covering that case.

If your Outlook add-in is basically a single-page app (SPA), this tutorial will help you call the Microsoft Graph API from the add-in: Tutorial: Create a JavaScript single-page app that uses auth code flow – Microsoft identity platform | Microsoft Docs. There are also additional Microsoft Graph samples at https://github.com/Azure-Samples/active-directory-aspnetcore-webapp-openidconnect-v2/tree/master/2-WebApp-graph-user
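Once the SPA-style add-in has an access token, the Graph call itself is a plain HTTPS request from the client. The sketch below assumes the token was already acquired (for example with MSAL.js, as in the tutorial); the function name `getSignedInUser` and the injectable `fetchImpl` parameter are illustrative choices, not part of any Microsoft library.

```javascript
// Calls the Microsoft Graph /me endpoint directly from client-side code.
// `accessToken` is assumed to come from your sign-in flow.
async function getSignedInUser(accessToken, fetchImpl = globalThis.fetch) {
  const response = await fetchImpl("https://graph.microsoft.com/v1.0/me", {
    headers: { Authorization: `Bearer ${accessToken}` },
  });
  if (!response.ok) {
    throw new Error(`Microsoft Graph request failed with status ${response.status}`);
  }
  return response.json();
}
```

Passing `fetchImpl` explicitly makes the function easy to exercise with a stub in tests; in the add-in you would normally omit it and use the browser's `fetch`.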

When will Outlook sideload and debug from VS Code (as demoed in November community call) be available?

This feature is available, but there is an issue that prevents it from working in all scenarios. We’re working to get a fix out soon.

Resources

Microsoft 365 support

Office Scripts demo by Leslie Black

PnP: Outlook set signature

Office Add-ins community call

Office Add-ins feedback

The next Office Add-ins community call is on Wednesday, May 12, 2021 at 8:00AM PDT. You can download the calendar invite at https://aka.ms/officeaddinscommunitycall.

Please join Microsoft, Ardalyst, and featured defense experts Tuesday May 3rd, 2:00 – 3:00 PM EDT | 11:00 – 12:00 PDT, where we will discuss the critical components of a mature cyber defense program from the cradle to the grave of DoD systems. As most DoD warfighting capabilities are born and die in the DIB, we will explore the current art and science of IT and OT cyber defense and its implications from a full lifecycle perspective through a combined government and industry panel.

Defending commercial industry and the Defense Industrial Base (DIB) from both Information Technology and Operational Technology (IT and OT) perspectives underpins the challenges we have in a Joint fight. As the Cybersecurity Maturity Model Certification (CMMC) comes online, it parallels the need for the DoD to mature internal cyber defense requirements and the resilient supply chain necessary to support them.

During the webinar, we will help you better understand:

· Why can’t we fix the vulnerabilities in technologies, specifically OT?

· What is the best way to effectively sensor a system?

· How do we leverage threat intelligence to understand and adapt against adversaries?

· How can OT sensors deter adversaries?

· What is the right workforce to deter, detect, and engage adversaries?

Speakers

· Joe DiPietro, Principal Technical Specialist, Azure IoT Security, Microsoft

· Chris Cleary, Principal Cyber Advisor (PCA), Department of the Navy

· Josh O’Sullivan, Chief Technology Officer, Ardalyst

Register here today.

Update: Monday, 03 May 2021 17:51 UTC

We continue to investigate issues within Application Insights for South UK. Root cause is not fully understood at this time. Some customers continue to experience data latency and potential data gaps in Application Insights data. This could cause delayed or misfired alerts. We are working to establish the start time for the issue; initial findings indicate that the problem began at 5/03 17:11 UTC. We currently have no estimate for resolution.

- Work Around: none

- Next Update: Before 05/03 20:00 UTC

-Ian

Overview

In 2021, a monthly blog post will cover that month’s webinar for the Low-code application development (LCAD) on Azure solution.

LCAD on Azure is a solution to demonstrate the robust development capabilities of integrating low-code Microsoft Power Apps and the Azure products you may be familiar with.

This month’s webinar is ‘Unlock the Future of Azure IoT through Power Platform’.

In this blog I will briefly recap Low-code application development on Azure, provide an overview of IoT on Azure and Azure Functions, and explain how to pull an Azure Function into Power Automate and how to integrate your Power Automate flow into Power Apps.

What is Low-code application development on Azure?

Low-code application development (LCAD) on Azure was created to help developers build business applications faster with less code by leveraging the Power Platform, and more specifically Power Apps, while helping them scale and extend their Power Apps with Azure services.

For example, a pro developer who works for a manufacturing company might need to build a line-of-business (LOB) application to help warehouse employees track incoming inventory.

That application would take months to build, test, and deploy. Using Power Apps, it can take hours to build, saving time and resources.

However, say the warehouse employees want the application to place procurement orders for additional inventory automatically when current inventory hits a determined low.

In the past that would require another heavy lift by the development team to rework their previous application iteration.

Thanks to the integration of Power Apps and Azure, a professional developer can build an API in Visual Studio (VS) Code, publish it to their Azure portal, and export the API to Power Apps, integrating it into their application as a custom connector.

Afterwards, that same API is reusable indefinitely in Power Apps Studio for future use with other applications, saving the company and developers more time and resources.

IoT on Azure and Azure Functions

The goal of this webinar is to understand how to use Azure IoT Hub and Power Apps to control an IoT device.

To start, write code that sends commands directly to your IoT device through Azure IoT Hub. In the webinar, Samuel wrote this code in Node and implemented two basic commands: toggle fan on and toggle fan off.

The commands are sent via the Azure IoT Hub code, which at first runs locally. Once the code is tested and confirmed to be running properly, the next question is: how can you rapidly call the API from anywhere across the globe?

The answer is to create a flow in Power Automate, and connect that flow to a Power App, which will be a complete dashboard that controls the IoT device from anywhere in the world.

To accomplish this task, you first have to create an Azure Function, which will then be pulled into Power Automate using a “Get” function to create the flow.

Once you’ve built the Azure Function, run and test it locally first, then test the on and off states via the Function URL.
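The webinar's actual function code is not reproduced here, so the following is only a sketch of what an HTTP-triggered Node function for the two fan commands might look like. The `state` query parameter, the `fanOn` and `fanOff` method names, and the injected `invokeDeviceMethod` callback are all assumptions made for illustration; in a real function app the callback would wrap an IoT Hub service client.

```javascript
// Factory that builds an HTTP-triggered Azure Function handler (Node.js
// programming model with `context` and `req`). The IoT Hub call is injected
// so the handler can be tested locally without a real device.
function createFanToggleFunction(invokeDeviceMethod) {
  return async function (context, req) {
    const state = (req.query && req.query.state) || "";
    if (state !== "on" && state !== "off") {
      context.res = { status: 400, body: "Pass ?state=on or ?state=off" };
      return;
    }
    // Hypothetical direct method names understood by the device.
    const methodName = state === "on" ? "fanOn" : "fanOff";
    await invokeDeviceMethod(methodName);
    context.res = { status: 200, body: `Fan turned ${state}` };
  };
}
```

Testing the on and off states then amounts to requesting the Function URL once with `state=on` and once with `state=off` and checking the responses.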

To build a trigger for the Azure Function, in this case a Power Automate flow, you need to create an Azure resource group to check the Azure Function and test its local capabilities.

If the test fails, it may be because you did not create, or do not have, an access token for the IoT device. To connect a device, IoT or otherwise, to the cloud, you need an access token.

In the webinar Samuel added two application settings to his function for the on and off commands.

After adding these access tokens and adjusting the settings of the IoT device, Samuel was able to successfully run his Azure Function.

Azure Function automated with Power Automate

After building the Azure Function, you now can build your Power Automate flow to start building your globally accessible dashboard to operate your IoT device.

Samuel starts by building a basic Power Automate framework, then the flow, and demonstrates how to test the flow once it is complete.

He starts with an HTTP request and implements a “Get” command. From there, it is a straightforward process to test the flow and get the IoT device to run.
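The “Get” step boils down to an HTTP GET against the Function URL. As a small illustration, the helper below builds such a URL; the name `buildToggleUrl` and the `state` query parameter are hypothetical, while `code` is the query parameter Azure Functions accepts for a function key.

```javascript
// Builds the URL that an HTTP GET action would call to toggle the fan.
function buildToggleUrl(functionUrl, state, functionKey) {
  const url = new URL(functionUrl);
  url.searchParams.set("state", state);
  if (functionKey) {
    url.searchParams.set("code", functionKey); // function-level access key
  }
  return url.toString();
}

// Example with placeholder values:
// buildToggleUrl("https://example.azurewebsites.net/api/toggle-fan", "on", "abc")
// returns "https://example.azurewebsites.net/api/toggle-fan?state=on&code=abc"
```

The same URL can be pasted into the URI field of the flow's HTTP action or requested from a browser while testing.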

Power Automate flow into Power Apps

After building your Power Automate flow, you develop a simple UI to toggle the fan on and off. Do this by building a canvas Power App and importing the Power Automate flow into the app.

To start, create a blank canvas app and name it. In the Power Apps ribbon, select “button” and pick the button’s source by selecting “Power Automate” and “add a flow”.

Select the flow that is connected to the Azure IoT device; its name should appear in the selection menu.

If everything is running properly, your IoT device will turn on.

Note that in the webinar Samuel was running out of time, so he created a new Power Automate flow, which he imported into the canvas app.

Summary

Make sure to watch the webinar to learn more about Azure IoT and how to import Azure Functions into your Power Apps.

Additionally, there will be a Low-code application development on Azure ‘Learn Live’ session during Build on the new .NET x Power Apps learning path, which covers integrations with Azure Functions, Visual Studio, and API Management.

Lastly, tune in to the Power Apps x Azure featured session at Build on May 25th to learn more about the next Visual Studio extensions, Power Apps source code, and the ALM accelerator package. Register for Microsoft Build at Microsoft Build 2021.

We’re pleased to announce that the Microsoft Information Protection SDK version 1.9 is now generally available via NuGet and Download Center.

Highlights

In this release of the Microsoft Information Protection SDK, we’ve focused on quality updates, enhancing logging scenarios, and several internal updates.

- Full support for CentOS 8 (native only).

- When using a custom LoggerDelegate, you can now pass in a logger context. This context will be written to your log destination, enabling easier correlation and troubleshooting between your apps and services and the MIP SDK logs.

- The following APIs support providing the logger context:

  - LoggerDelegate::WriteToLogWithContext

  - TaskDispatcherDelegate::DispatchTask or ExecuteTaskOnIndependentThread

  - FileEngine::Settings::SetLoggerContext(const std::shared_ptr<void>& loggerContext)

  - FileProfile::Settings::SetLoggerContext(const std::shared_ptr<void>& loggerContext)

  - ProtectionEngine::Settings::SetLoggerContext(const std::shared_ptr<void>& loggerContext)

  - ProtectionProfile::Settings::SetLoggerContext(const std::shared_ptr<void>& loggerContext)

  - PolicyEngine::Settings::SetLoggerContext(const std::shared_ptr<void>& loggerContext)

  - PolicyProfile::Settings::SetLoggerContext(const std::shared_ptr<void>& loggerContext)

  - FileHandler::IsProtected()

  - FileHandler::IsLabeledOrProtected()

  - FileHandler::GetSerializedPublishingLicense()

  - PolicyHandler::IsLabeled()

Bug Fixes

- Fixed a memory leak when calling mip::FileHandler::IsLabeledOrProtected().

- Fixed a bug where failure in FileHandler::InspectAsync() called incorrect observer.

- Fixed a bug where SDK attempted to apply co-authoring label format to Office formats that don’t support co-authoring (DOC, PPT, XLS).

- Fixed a crash in the .NET wrapper related to FileEngine disposal. Native PolicyEngine object remained present for some period and would attempt a policy refresh, resulting in a crash.

- Fixed a bug where the SDK would ignore labels applied by older versions of AIP due to missing SiteID property.

For a full list of changes to the SDK, please review our change log.


Citus is an extension to Postgres that lets you distribute your application’s workload across multiple nodes. Whether you are using Citus open source or using Citus as part of a managed Postgres service in the cloud, one of the first things you do when you start using Citus is to distribute your tables. While distributing your Postgres tables you need to decide on some properties such as distribution column, shard count, colocation. And even before you decide on your distribution column (sometimes called a distribution key, or a sharding key), when you create a Postgres table, your table is created with an access method.

Previously you had to decide on these table properties up front, and then you went with your decision. Or if you really wanted to change your decision, you needed to start over. The good news is that in Citus 10, we introduced 2 new user-defined functions (UDFs) to make it easier for you to make changes to your distributed Postgres tables.

Before Citus 9.5, if you wanted to change any of the properties of the distributed table, you would have to create a new table with the desired properties and move everything to this new table. But in Citus 9.5 we introduced a new function, undistribute_table. With the undistribute_table function you can convert your distributed Citus tables back to local Postgres tables and then distribute them again with the properties you wish. But undistributing and then distributing again is … 2 steps. In addition to the inconvenience of having to write 2 commands, undistributing and then distributing again has some more problems:

- Moving the data of a big table can take a long time, and undistribution and distribution both require moving all the data of the table. So, you must move the data twice, which takes much longer.

- Undistributing moves all the data of a table to the Citus coordinator node. If your coordinator node isn’t big enough, and coordinator nodes typically don’t have to be, you might not be able to fit the table into your coordinator node.

So, in Citus 10, we introduced 2 new functions to reduce the steps you need to make changes to your tables:

alter_distributed_table

alter_table_set_access_method

In this post you’ll find some tips about how to use the alter_distributed_table function to change the shard count, distribution column, and the colocation of a distributed Citus table. And we’ll show how to use the alter_table_set_access_method function to change, well, the access method. An important note: you may not ever need to change your Citus table properties. We just want you to know, if you ever do, you have the flexibility. And with these Citus tips, you will know how to make the changes.

Altering the properties of distributed Postgres tables in Citus

When you distribute a Postgres table with the create_distributed_table function, you must pick a distribution column and set the distribution_column parameter. During the distribution, Citus uses a configuration variable called citus.shard_count to decide the shard count of the table. You can also provide the colocate_with parameter to pick a table to colocate with (or colocation will be done automatically, if possible).

However, after the distribution if you decide you need to have a different configuration, starting from Citus 10, you can use the alter_distributed_table function.

alter_distributed_table has three parameters you can change:

- distribution column

- shard count

- colocation properties

How to change the distribution column (aka the sharding key)

Citus divides your table into shards based on the distribution column you select while distributing. Picking the right distribution column is crucial for having a good distributed database experience. A good distribution column will help you parallelize your data and workload better by dividing your data evenly and keeping related data points close to each other. However, choosing the distribution column might be a bit tricky when you’re first getting started. Or perhaps later as you make changes in your application, you might need to pick another distribution column.

With the distribution_column parameter of the new alter_distributed_table function, Citus 10 gives you an easy way to change the distribution column.

Let’s say you have customers and orders that your customers make. You will create some Postgres tables like these:

CREATE TABLE customers (customer_id BIGINT, name TEXT, address TEXT);

CREATE TABLE orders (order_id BIGINT, customer_id BIGINT, products BIGINT[]);

When first distributing your Postgres tables with Citus, let’s say that you decided to distribute the customers table on customer_id and the orders table on order_id.

SELECT create_distributed_table ('customers', 'customer_id');

SELECT create_distributed_table ('orders', 'order_id');

Later you might realize distributing the orders table by the order_id column might not be the best idea. Even though order_id could be a good column to evenly distribute your data, it is not a good choice if you frequently need to join the orders table with the customers table on the customer_id. When both tables are distributed by customer_id you can use colocated joins, which are very efficient compared to joins on other columns.

So, if you decide to change the distribution column of the orders table to customer_id, here is how you do it:

SELECT alter_distributed_table ('orders', distribution_column := 'customer_id');

Now the orders table is distributed by customer_id. So, the customers and the orders of the customers are in the same node and close to each other, and you can have fast joins and foreign keys that include the customer_id.

You can see the new distribution column on the citus_tables view:

SELECT distribution_column FROM citus_tables WHERE table_name::text = 'orders';

How to increase (or decrease) the shard count in Citus

The shard count of a distributed Citus table is the number of pieces the distributed table is divided into. Choosing the shard count is a balance between the flexibility of having more shards and the overhead for query planning and execution across the shards. Like the distribution column, the shard count is also set while distributing the table. If you want to pick a different shard count than the default for a table, during the distribution process you can use the citus.shard_count configuration variable, like this:

CREATE TABLE products (id BIGINT, name TEXT);

SET citus.shard_count TO 20;

SELECT create_distributed_table ('products', 'id');

After distributing your table, you might decide the shard count you set was not the best option. Or your first decision on the shard count might be good for a while but your application might grow in time, you might add new nodes to your Citus cluster, and you might need more shards. The alter_distributed_table function has you covered in the cases that you want to change the shard count too.

To change the shard count you just use the shard_count parameter:

SELECT alter_distributed_table ('products', shard_count := 30);

After the query above, your table will have 30 shards. You can see your table’s shard count on the citus_tables view:

SELECT shard_count FROM citus_tables WHERE table_name::text = 'products';

How to colocate with a different Citus distributed table

When two Postgres tables are colocated in Citus, the rows of the tables that have the same value in the distribution column will be on the same Citus node. Colocating the right tables will help you with better relational operations. Like the shard count and the distribution column, the colocation is also set while distributing your tables. You can use the colocate_with parameter to change the colocation.

SELECT alter_distributed_table ('products', colocate_with := 'customers');

Again, like the distribution column and shard count, you can find information about your tables’ colocation groups on the citus_tables view:

SELECT colocation_id FROM citus_tables WHERE table_name IN ('products', 'customers');

You can also pass the keywords default and none to the colocate_with parameter: default moves the table to the default colocation group, and none breaks any colocation the table has.

To be colocated, distributed Citus tables need to have the same shard count. If the tables you want to colocate have different shard counts, worry not: alter_distributed_table detects this automatically and updates your table’s shard count to match the new colocation group’s shard count.
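Why do colocated tables need the same shard count? A small Python sketch can illustrate it. It assumes a simplified model: the hash space is split into equal ranges, and shards are placed on nodes round-robin. Both the placement rule and the function names are assumptions for illustration, not how Citus is implemented.

```python
INT32_MIN = -2**31
HASH_SPACE = 2**32


def shard_for_hash(hash_value, shard_count):
    """Index of the equal-width hash range that contains hash_value."""
    step = HASH_SPACE // shard_count
    # The last shard also takes the remainder left by integer division.
    return min((hash_value - INT32_MIN) // step, shard_count - 1)


def node_for_shard(shard_index, node_count):
    """Assumed placement rule: shards are spread over nodes round-robin."""
    return shard_index % node_count


def same_node(hash_value, count_a, count_b, node_count):
    """Do two tables with these shard counts keep this hash on one node?"""
    node_a = node_for_shard(shard_for_hash(hash_value, count_a), node_count)
    node_b = node_for_shard(shard_for_hash(hash_value, count_b), node_count)
    return node_a == node_b


# With equal shard counts, every hash value maps to the same node for both tables.
sample_hashes = range(INT32_MIN, 2**31, 2**24)
print(all(same_node(h, 20, 20, node_count=4) for h in sample_hashes))

# With different shard counts, the ranges no longer line up.
print(same_node(2**31 - 1, 2, 3, node_count=2))
```

The first check holds for any hash value because both tables cut the hash space in exactly the same places. The second shows one hash value that ends up on different nodes once the shard counts differ, which is the situation alter_distributed_table avoids by updating the shard count for you.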

How to change more than one Citus table property at a time

Here is a tip! If you want to change multiple properties of your distributed Citus tables at the same time, you can simply use multiple parameters of the alter_distributed_table function.

For example, if you want to change both the shard count and the distribution column of a table here’s how you do it:

SELECT alter_distributed_table ('products', distribution_column := 'name', shard_count := 35);

How to alter the Citus colocation group

If your table is colocated with some other tables and you want to change the shard count of all of the tables to keep the colocation, you might be wondering if you have to alter them one by one… which is multiple steps.

Yes (you can see a pattern here), the Citus tip is that you can use the alter_distributed_table function to change the properties of the whole colocation group.

If you decide the change you make with the alter_distributed_table function needs to be done to all the tables that are colocated with the table you are changing, you can use the cascade_to_colocated parameter:

SET citus.shard_count TO 10;

SELECT create_distributed_table ('customers', 'customer_id');

SELECT create_distributed_table ('orders', 'customer_id', colocate_with := 'customers');

-- when you decide to change the shard count

-- of all of the colocation group

SELECT alter_distributed_table ('customers', shard_count := 20, cascade_to_colocated := true);

You can see the updated shard count of both tables on the citus_tables view:

SELECT shard_count FROM citus_tables WHERE table_name IN ('customers', 'orders');

How to change your Postgres table’s access method in Citus

Another amazing feature introduced in Citus 10 is columnar storage. This Citus 10 columnar blog post walks you through how it works and how to use columnar tables (or partitions) with Citus—complete with a Quickstart. Oh, and Jeff made a short video demo about the new Citus 10 columnar functionality too—it’s worth the 13 minutes to watch IMHO.

With Citus columnar, you can optionally choose to store your tables grouped by columns, which gives you the benefits of compression, too. Of course, you don’t have to use the new columnar access method: the default access method is “heap”, and if you don’t specify an access method, your tables will be row-based tables (with the heap access method).

It would not be fair to introduce this cool new Citus columnar access method without also giving you a way to convert your tables to columnar. So Citus 10 also introduced a way to change the access method of tables.

SELECT alter_table_set_access_method('orders', 'columnar');

You can use alter_table_set_access_method to convert your table to any other access method too, such as heap, Postgres’s default access method. Your table doesn’t even need to be a distributed Citus table: alter_table_set_access_method also works with Citus reference tables and regular Postgres tables. You can even change the access method of a Postgres partition.

Under the hood: How do these new Citus functions work?

If you’ve read the blog post about undistribute_table, the function Citus 9.5 introduced for turning distributed Citus tables back to local Postgres tables, you mostly know how the alter_distributed_table and alter_table_set_access_method functions work, because we use the same underlying methodology as the undistribute_table function. Well, we improved upon it.

The alter_distributed_table and alter_table_set_access_method functions:

- Create a new table in the way you want (with the new shard count or access method etc.)

- Move everything from your old table to the new table

- Drop the old table and rename the new one

Dropping a table for the purpose of re-creating the same table with different properties is not a simple task. Dropping the table will also drop many things that depend on the table.

Just like the undistribute_table function, the alter_distributed_table and alter_table_set_access_method functions do a lot to preserve the properties of the table you didn’t want to change. The functions will handle indexes, sequences, views, constraints, table owner, partitions and more—just like undistribute_table.

alter_distributed_table and alter_table_set_access_method will also recreate the foreign keys on your tables whenever possible. For example, if you change the shard count of a table with the alter_distributed_table function and use cascade_to_colocated := true to change the shard count of all the colocated tables, then foreign keys within the colocation group and foreign keys from the distributed tables of the colocation group to Citus reference tables will be recreated.
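The create, move, drop, and rename steps above can be sketched in a few lines of Python. This sketch uses SQLite from the standard library as a stand-in database, and WITHOUT ROWID as a stand-in for "a different storage property"; it is not Citus code, and rebuild_table is a hypothetical helper. It also shows why preserving dependent objects takes extra work: the index disappears with the old table and has to be replayed.

```python
import sqlite3


def rebuild_table(conn, table, new_ddl_template):
    """Re-create `table` with a new definition while keeping rows and indexes."""
    # Remember index definitions first: DROP TABLE silently discards them.
    index_ddls = [row[0] for row in conn.execute(
        "SELECT sql FROM sqlite_master "
        "WHERE type = 'index' AND tbl_name = ? AND sql IS NOT NULL", (table,))]
    tmp = f"{table}_new"
    with conn:  # commit on success, roll back on error
        conn.execute(new_ddl_template.format(name=tmp))            # 1. new table
        conn.execute(f"INSERT INTO {tmp} SELECT * FROM {table}")   # 2. move rows
        conn.execute(f"DROP TABLE {table}")                        # 3. drop old
        conn.execute(f"ALTER TABLE {tmp} RENAME TO {table}")       #    and rename
        for ddl in index_ddls:                                     # 4. restore indexes
            conn.execute(ddl)


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)")
conn.execute("CREATE INDEX orders_customer_idx ON orders (customer_id)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, 10, 9.5), (2, 10, 20.0), (3, 11, 5.25)])
conn.commit()

rebuild_table(
    conn, "orders",
    "CREATE TABLE {name} (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL) "
    "WITHOUT ROWID")

print(conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0], "rows kept")
```

The real functions have far more to take care of (sequences, views, constraints, ownership, partitions, foreign keys), but the shape of the operation is the same.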

Making it easier to experiment with Citus—and to adapt as your needs change

If you want to learn more about the previous work that the alter_distributed_table and alter_table_set_access_method functions build on, go check out our blog post on undistribute_table.

In Citus 10 we worked to give you more tools and more capabilities for making changes to your distributed database. When you’re just starting to use Citus, the new alter_distributed_table and alter_table_set_access_method functions—along with the undistribute_table function—are all here to help you experiment and find the database configuration that works the best for your application. And in the future, if and when your application evolves, these three Citus functions will be ready to help you evolve your Citus database, too.

by Scott Muniz | May 3, 2021 | Security, Technology

This article is contributed. See the original author and article here.


by Contributed | May 3, 2021 | Technology

This article is contributed. See the original author and article here.

Deprecated accounts are accounts that were once deployed to a subscription for a trial or pilot initiative, or for some other purpose, and are no longer required. User accounts that have been blocked from signing in should be removed from your subscriptions, because attackers can target these accounts to access your data without being noticed.

Azure Security Center recommends identifying those accounts and removing any role assignments on them from the subscription. However, doing this by hand can be tedious when you have multiple subscriptions.

Prerequisites:

– The Az modules must be installed

– The service principal created in Step 1 must have Owner access to all subscriptions

Steps to follow:

Step 1: Create a service principal

After creating the service principal, retrieve the following values:

- Tenant Id

- Client Secret

- Client Id

Step 2: Create a PowerShell function to generate the authorization token

function Get-apiHeader{

[CmdletBinding()]

Param

(

[Parameter(Mandatory=$true)]

[System.String]

[ValidateNotNullOrEmpty()]

$TENANTID,

[Parameter(Mandatory=$true)]

[System.String]

[ValidateNotNullOrEmpty()]

$ClientId,

[Parameter(Mandatory=$true)]

[System.String]

[ValidateNotNullOrEmpty()]

$PasswordClient,

[Parameter(Mandatory=$true)]

[System.String]

[ValidateNotNullOrEmpty()]

$resource

)

$tokenresult=Invoke-RestMethod -Uri "https://login.microsoftonline.com/$TENANTID/oauth2/token?api-version=1.0" -Method Post -Body @{"grant_type" = "client_credentials"; "resource" = "https://$resource/"; "client_id" = "$ClientId"; "client_secret" = "$PasswordClient" }

$token=$tokenresult.access_token

$Header=@{

'Authorization'="Bearer $token"

'Host'="$resource"

'Content-Type'='application/json'

}

return $Header

}

Step 3: Call the function created in the previous step to retrieve the authorization token

Note: Replace $TenantId, $ClientId and $ClientSecret with the values captured in Step 1

$AzureApiheaders = Get-apiHeader -TENANTID $TenantId -ClientId $ClientId -PasswordClient $ClientSecret -resource "management.azure.com"

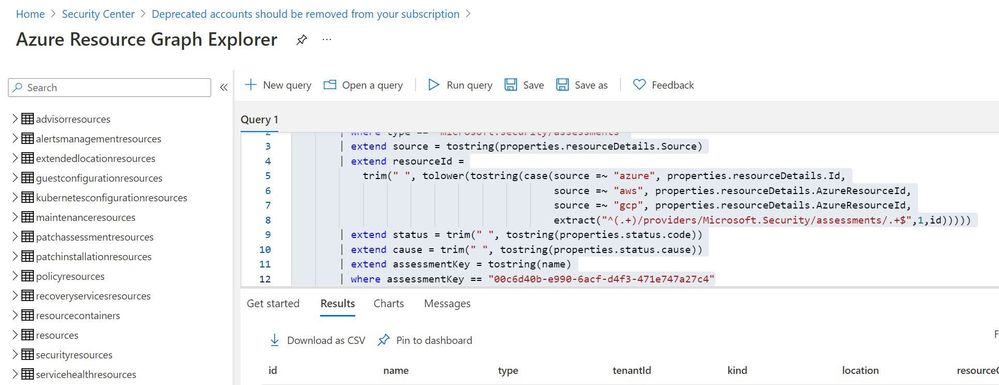

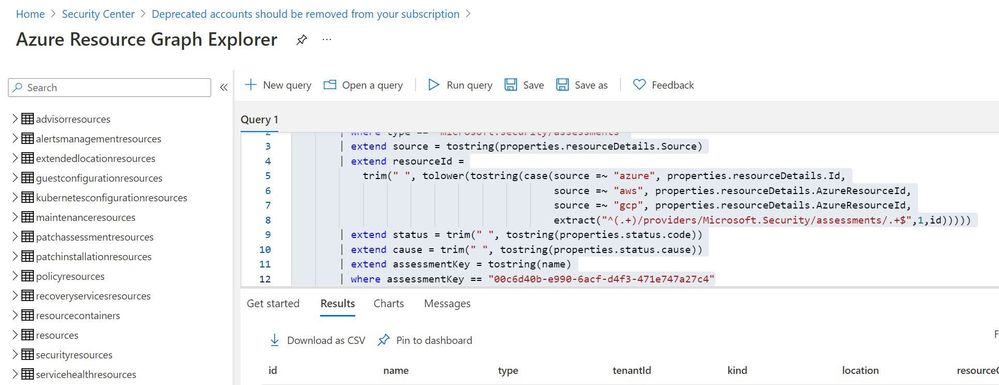

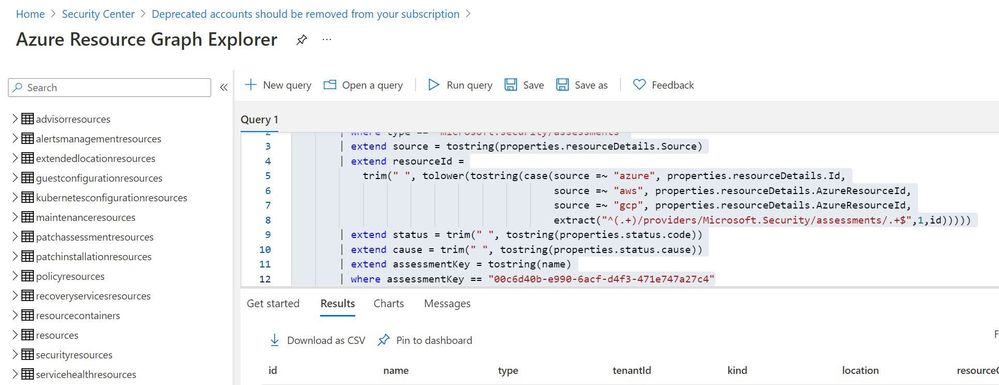

Step 4: Extract a CSV file containing the list of all deprecated accounts from Azure Resource Graph

Please refer: https://github.com/MicrosoftDocs/azure-docs/blob/master/articles/governance/resource-graph/first-query-portal.md

Azure Resource graph explorer: https://docs.microsoft.com/en-us/azure/governance/resource-graph/overview

Query:

securityresources

| where type == "microsoft.security/assessments"

| extend source = tostring(properties.resourceDetails.Source)

| extend resourceId =

trim(" ", tolower(tostring(case(source =~ "azure", properties.resourceDetails.Id,

extract("^(.+)/providers/Microsoft.Security/assessments/.+$",1,id)))))

| extend status = trim(" ", tostring(properties.status.code))

| extend cause = trim(" ", tostring(properties.status.cause))

| extend assessmentKey = tostring(name)

| where assessmentKey == "00c6d40b-e990-6acf-d4f3-471e747a27c4"

Click “Download as CSV” and save the file in the folder that contains the deprecated-account removal script. Rename the file to “deprecatedaccountextract”.

Set-Location $PSScriptRoot

$RootFolder = Split-Path $MyInvocation.MyCommand.Path

$ParameterCSVPath = Join-Path -Path $RootFolder -ChildPath "deprecatedaccountextract.csv"

if(Test-Path -Path $ParameterCSVPath)

{

$TableData = Import-Csv $ParameterCSVPath

}

foreach($Data in $TableData)

{

#Get resourceID for specific resource

$resourceid=$Data.resourceId

#Get control results column for specific resource

$controlresult=$Data.properties

$newresult=$controlresult | ConvertFrom-Json

$ObjectIdList=$newresult.AdditionalData.deprecatedAccountsObjectIdList

$regex="[^a-zA-Z0-9-]"

$splitres=$ObjectIdList -split(',')

$deprecatedObjectIds=$splitres -replace $regex

foreach($objectId in $deprecatedObjectIds)

{

#API to get role assignment details from Azure

$resourceURL="https://management.azure.com$($resourceid)/providers/microsoft.authorization/roleassignments?api-version=2015-07-01"

$resourcedetails=(Invoke-RestMethod -Uri $resourceURL -Headers $AzureApiheaders -Method GET)

if( $null -ne $resourcedetails )

{

foreach($value in $resourcedetails.value)

{

if($value.properties.principalId -eq $objectId)

{

$roleassignmentid=$value.name

$remidiateURL="https://management.azure.com$($resourceid)/providers/microsoft.authorization/roleassignments/$($roleassignmentid)?api-version=2015-07-01"

Invoke-RestMethod -Uri $remidiateURL -Headers $AzureApiheaders -Method DELETE

}

}

}

else

{

Write-Output "There are no Role Assignments in the subscription"

}

}

}
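Before deleting anything, it can help to preview what the loop above would remove. The following Python sketch mirrors the script's parsing and matching logic as a dry run: it only builds the DELETE URLs and sends no requests. The sample payloads in the usage lines are hypothetical, the exact shape of the exported properties column is an assumption based on how the script parses it, and the helper names are made up for illustration.

```python
import json
import re

ARM = "https://management.azure.com"
API_VERSION = "2015-07-01"  # same API version the script above uses


def get_ci(mapping, key):
    """Case-insensitive lookup, mimicking PowerShell property access."""
    for name, value in mapping.items():
        if name.lower() == key.lower():
            return value
    return None


def extract_object_ids(properties_json):
    """Pull the deprecated object IDs out of one row's properties column."""
    props = json.loads(properties_json)
    additional = get_ci(props, "additionalData") or {}
    raw = get_ci(additional, "deprecatedAccountsObjectIdList") or ""
    if not isinstance(raw, str):
        raw = json.dumps(raw)
    # Same cleanup as the script: split on commas, keep only [a-zA-Z0-9-].
    cleaned = (re.sub(r"[^a-zA-Z0-9-]", "", part) for part in raw.split(","))
    return [object_id for object_id in cleaned if object_id]


def plan_deletions(resource_id, role_assignments, object_ids):
    """Return the DELETE URLs for assignments held by the given object IDs."""
    wanted = {object_id.lower() for object_id in object_ids}
    urls = []
    for assignment in role_assignments.get("value", []):
        if assignment["properties"]["principalId"].lower() in wanted:
            urls.append(
                f"{ARM}{resource_id}/providers/microsoft.authorization/"
                f"roleassignments/{assignment['name']}?api-version={API_VERSION}")
    return urls


# Hypothetical sample data, shaped like the values the script reads.
sample_properties = json.dumps(
    {"additionalData": {"deprecatedAccountsObjectIdList": '["1111-aaaa","2222-bbbb"]'}})
sample_assignments = {"value": [
    {"name": "ra1", "properties": {"principalId": "1111-aaaa"}},
    {"name": "ra2", "properties": {"principalId": "9999-zzzz"}},
]}

ids = extract_object_ids(sample_properties)
for url in plan_deletions("/subscriptions/0000", sample_assignments, ids):
    print("would DELETE", url)
```

Reviewing the planned URLs first is a cheap safeguard, since a deleted role assignment has to be re-created by hand.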

References:

https://github.com/Azure/Azure-Security-Center/blob/master/Remediation%20scripts/Remove%20deprecated%20accounts%20from%20subscriptions/PowerShell/Remove-deprecated-accounts-from-subscriptions.ps1

https://docs.microsoft.com/en-us/azure/security-center/policy-reference#:~:text=Deprecated%20accounts%20are%20accounts%20that%20have%20been%20blocked%20from%20signing%20in.&text=Virtual%20machines%20without%20an%20enabled,Azure%20Security%20Center%20as%20recommendations.
