by Scott Muniz | Jul 23, 2020 | Alerts, Microsoft, Technology, Uncategorized

This article is contributed. See the original author and article here.

Monitoring your database is one of the most crucial tasks to ensure a continued healthy and steady workload. Azure Database for PostgreSQL, our managed database service for Postgres, provides a wealth of metrics to monitor your Postgres database on Azure. But what if the very metric that you are after is not yet available?

Worry not because there are ample options to easily create and monitor custom metrics with Azure Database for PostgreSQL. One solution you can use with Postgres on Azure is Datadog’s custom metrics.

If you are not familiar with Datadog, it is one of many solid 3rd party solutions that provide a set of canned metrics for various technologies, including PostgreSQL. Datadog also lets you poll your databases with custom queries and emit custom metrics to a central location, where you can monitor how well your workload is doing.

If you don’t yet have a Datadog account, no problem, you can use a free trial Datadog account to try out everything I’m going to show you in this post.

What is bloat in Postgres & why should you monitor it?

As the proud owner of a PostgreSQL database, you will inevitably have to deal with bloat, which is a by-product of how PostgreSQL stores data to implement multi-version concurrency control (MVCC). PostgreSQL does not modify rows in place: an UPDATE writes a new version of the tuple and marks the old one as expired, and a DELETE only marks the existing version as expired. Each transaction sees the versions that were committed as of its snapshot, so old versions must stay in place for as long as any running transaction might still need them. Once no transaction can see an old version any more, it becomes a dead tuple whose space later needs to be reclaimed.
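To make the mechanism concrete, here is a toy Python model of a single row's version chain. It is a simplification under our own assumptions, not how PostgreSQL is implemented: every transaction commits, transaction IDs never wrap around, and the hypothetical horizon_xid parameter stands in for the oldest transaction ID that some running snapshot might not yet see as committed.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class TupleVersion:
    """One physical version of a row (greatly simplified)."""
    value: str
    xmin: int                   # transaction that created this version
    xmax: Optional[int] = None  # transaction that expired it, if any


@dataclass
class ToyRow:
    """Version chain for a single logical row."""
    versions: List[TupleVersion] = field(default_factory=list)

    def insert(self, xid: int, value: str) -> None:
        self.versions.append(TupleVersion(value, xmin=xid))

    def update(self, xid: int, value: str) -> None:
        # An UPDATE does not overwrite in place: it expires the live
        # version and appends a new one.
        for v in self.versions:
            if v.xmax is None:
                v.xmax = xid
        self.versions.append(TupleVersion(value, xmin=xid))

    def dead_versions(self, horizon_xid: int) -> int:
        # Expired by a transaction that every running snapshot already
        # sees as committed, so nobody can see this version: it is bloat.
        return sum(1 for v in self.versions
                   if v.xmax is not None and v.xmax < horizon_xid)

    def vacuum(self, horizon_xid: int) -> int:
        # Reclaim dead versions and return how many were removed.
        before = len(self.versions)
        self.versions = [v for v in self.versions
                         if v.xmax is None or v.xmax >= horizon_xid]
        return before - len(self.versions)


row = ToyRow()
row.insert(100, "v1")
row.update(101, "v2")
row.update(102, "v3")
print(row.dead_versions(horizon_xid=102))  # 1: v2 may still be visible to an older snapshot
print(row.dead_versions(horizon_xid=103))  # 2
```

Every update leaves one more expired version behind, and only a vacuum pass after the horizon has moved past the expiring transaction can reclaim it.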

To keep your database humming, it’s important to understand how your table and index bloat values progress over time, and to make sure that garbage collection (VACUUM, usually triggered by autovacuum) keeps up with your workload. That’s why you need to monitor bloat in Postgres and act on it as needed.

Before you start, I should clarify that this post focuses on monitoring bloat on Azure Database for PostgreSQL – Single Server. Our managed Postgres service on Azure also has a built-in deployment option called Hyperscale (Citus), based on the Citus open source extension, which lets you scale out Postgres horizontally. Because the code snippets and instructions below differ a bit for monitoring a single Postgres server vs. a Hyperscale (Citus) server group, I plan to publish how-to instructions for using custom monitoring metrics on a Hyperscale (Citus) cluster in a future blog post. Stay tuned! Now, let’s get started.

First, prepare your monitoring setup for Azure Database for PostgreSQL – Single Server

If you do not already have an Azure Database for PostgreSQL server, you may create one as prescribed in our quickstart documentation.

Create a read-only monitoring user

As a best practice, you should allocate a read-only user to poll data from your database. Depending on what you want to collect, granting the pg_monitor role (available starting with Postgres 10, and a member of pg_read_all_settings, pg_read_all_stats, and pg_stat_scan_tables) could be sufficient.

For bloat tracking, we also need to GRANT SELECT on every table we want to track, because pg_stats, which the bloat query reads, only shows rows for tables the current user can read.

```sql
CREATE USER metrics_reader WITH LOGIN NOSUPERUSER NOCREATEDB NOCREATEROLE INHERIT NOREPLICATION CONNECTION LIMIT 1 PASSWORD 'xxxxxx';

GRANT pg_monitor TO metrics_reader;

-- Rights granted here as a blanket for simplicity.
-- This covers existing tables only; tables created later need their own GRANT.
GRANT SELECT ON ALL TABLES IN SCHEMA public TO metrics_reader;
```

Create your bloat monitoring function

To keep Datadog configuration nice and tidy, let’s first have a function to return the bloat metrics we want to track. Create the function below in the Azure Database for PostgreSQL – Single Server database you would like to track.

If you have multiple databases to track, you can consider an aggregation mechanism from different databases into a single monitoring database to achieve the same objective. This how-to post is designed for a single database, for the sake of simplicity.

The bloat tracking script used here is a popular choice and was created by Greg Sabino Mullane. There are other bloat tracking scripts out there in case you want to research a better fitting approach to track your bloat estimates and adjust your get_bloat function.

```sql
CREATE OR REPLACE FUNCTION get_bloat ()
RETURNS TABLE (
    database_name NAME,
    schema_name NAME,
    table_name NAME,
    table_bloat NUMERIC,
    wastedbytes NUMERIC,
    index_name NAME,
    index_bloat NUMERIC,
    wastedibytes DOUBLE PRECISION
)
AS $$
BEGIN
  RETURN QUERY
  SELECT current_database() AS databasename, schemaname, tablename,
    ROUND((CASE WHEN otta=0 THEN 0.0 ELSE sml.relpages::FLOAT/otta END)::NUMERIC,1) AS tbloat,
    CASE WHEN relpages < otta THEN 0 ELSE bs*(sml.relpages-otta)::BIGINT END AS wastedbytes,
    iname,
    ROUND((CASE WHEN iotta=0 OR ipages=0 THEN 0.0 ELSE ipages::FLOAT/iotta END)::NUMERIC,1) AS ibloat,
    CASE WHEN ipages < iotta THEN 0 ELSE bs*(ipages-iotta) END AS wastedibytes
  FROM (
    SELECT schemaname, tablename, cc.reltuples, cc.relpages, bs,
      CEIL((cc.reltuples*((datahdr+ma-(CASE WHEN datahdr%ma=0 THEN ma ELSE datahdr%ma END))+nullhdr2+4))/(bs-20::FLOAT)) AS otta,
      COALESCE(c2.relname,'?') AS iname, COALESCE(c2.reltuples,0) AS ituples, COALESCE(c2.relpages,0) AS ipages,
      COALESCE(CEIL((c2.reltuples*(datahdr-12))/(bs-20::FLOAT)),0) AS iotta
    FROM (
      SELECT ma, bs, schemaname, tablename,
        (datawidth+(hdr+ma-(CASE WHEN hdr%ma=0 THEN ma ELSE hdr%ma END)))::NUMERIC AS datahdr,
        (maxfracsum*(nullhdr+ma-(CASE WHEN nullhdr%ma=0 THEN ma ELSE nullhdr%ma END))) AS nullhdr2
      FROM (
        SELECT schemaname, tablename, hdr, ma, bs,
          SUM((1-null_frac)*avg_width) AS datawidth,
          MAX(null_frac) AS maxfracsum,
          hdr+(SELECT 1+COUNT(*)/8 FROM pg_stats s2
               WHERE null_frac<>0 AND s2.schemaname = s.schemaname AND s2.tablename = s.tablename) AS nullhdr
        FROM pg_stats s, (
          SELECT (SELECT current_setting('block_size')::NUMERIC) AS bs,
            CASE WHEN SUBSTRING(v,12,3) IN ('8.0','8.1','8.2') THEN 27 ELSE 23 END AS hdr,
            CASE WHEN v ~ 'mingw32' THEN 8 ELSE 4 END AS ma
          FROM (SELECT version() AS v) AS foo
        ) AS constants
        GROUP BY 1,2,3,4,5
      ) AS foo
    ) AS rs
    JOIN pg_class cc ON cc.relname = rs.tablename
    JOIN pg_namespace nn ON cc.relnamespace = nn.oid AND nn.nspname = rs.schemaname AND nn.nspname <> 'information_schema'
    LEFT JOIN pg_index i ON indrelid = cc.oid
    LEFT JOIN pg_class c2 ON c2.oid = i.indexrelid
  ) AS sml
  WHERE schemaname NOT IN ('pg_catalog')
  ORDER BY wastedbytes DESC;
END; $$
LANGUAGE plpgsql;
```
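The two table columns you will chart most often come from simple arithmetic. The Python sketch below restates the table_bloat and wastedbytes expressions of get_bloat() so you can sanity-check a row by hand. Here otta is the query’s estimate of how many pages the table would need if its tuples were tightly packed (reltuples times the estimated tuple width, divided by the usable space per block). The function name and the 8192-byte default block size are our assumptions; index_bloat and wastedibytes follow the same pattern using ipages and iotta, with the ratio also forced to 0.0 when an index has no pages.

```python
from decimal import Decimal, ROUND_HALF_UP
from typing import Tuple


def table_bloat(relpages: int, otta: float, block_size: int = 8192) -> Tuple[Decimal, int]:
    """Mirror of get_bloat()'s table_bloat and wastedbytes columns.

    relpages   -- pg_class.relpages (pages the table actually occupies)
    otta       -- estimated minimum pages (a whole number, since the SQL uses CEIL)
    block_size -- current_setting('block_size'); 8192 on a default build
    """
    if otta == 0:
        ratio = Decimal("0.0")
    else:
        # SQL: ROUND((relpages::FLOAT / otta)::NUMERIC, 1). Formatting with 15
        # significant digits approximates the float-to-NUMERIC cast, and
        # NUMERIC rounding goes half away from zero.
        ratio = Decimal(f"{relpages / otta:.15g}").quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)
    # SQL: CASE WHEN relpages < otta THEN 0 ELSE bs * (relpages - otta)::BIGINT END
    wasted = 0 if relpages < otta else block_size * int(relpages - otta)
    return ratio, wasted


print(table_bloat(1250, 1000))  # (Decimal('1.3'), 2048000)
```

A table_bloat of 1.3 therefore means the table occupies roughly 30% more pages than the estimate says it needs, and wastedbytes is that surplus expressed in bytes.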

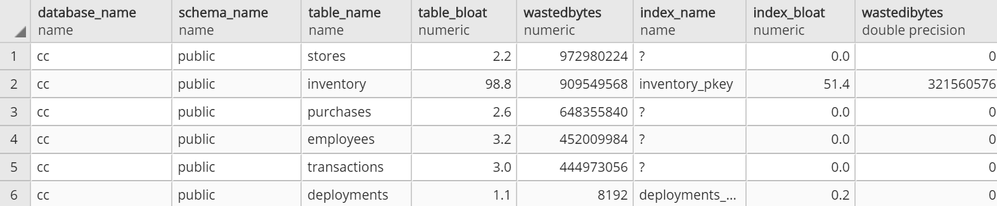

Confirm your read-only Postgres user can observe results

At this point, you should be able to connect to your Azure Database for PostgreSQL server with your read-only user and run SELECT * FROM get_bloat(); to observe results.

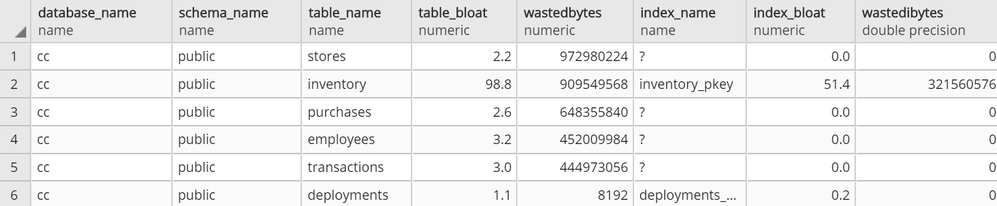

get_bloat function’s sample output


If you don’t get anything in the output, see if the following steps remedy this:

- Check your pg_stats rows with `SELECT * FROM pg_stats WHERE schemaname NOT IN ('pg_catalog','information_schema');`
- If you don’t see your table and its columns there, run `ANALYZE <your_table>;` and try again.
- If you still don’t see your table in the result set from the first step, your user very likely does not have SELECT privilege on a table that you expect to see in the output.
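Once get_bloat() returns rows for your read-only user, you can also spot-check them from a script before wiring up Datadog. The Python sketch below assumes the third-party psycopg2 driver, and the connection values passed to report() are placeholders (Single Server expects the user name in the user@servername form). The bloated_tables helper and its thresholds are hypothetical choices of ours, not part of the original setup; because get_bloat() returns one row per index, the helper reports each table only once.

```python
from typing import Iterable, List, Sequence, Tuple

# Column order of get_bloat()'s RETURNS TABLE clause.
COLUMNS = ("database_name", "schema_name", "table_name", "table_bloat",
           "wastedbytes", "index_name", "index_bloat", "wastedibytes")


def bloated_tables(rows: Iterable[Sequence], min_ratio: float = 1.5,
                   min_wasted_bytes: int = 10 * 1024 * 1024) -> List[Tuple[str, str, float, int]]:
    """Tables whose bloat ratio and wasted bytes both reach the (hypothetical)
    thresholds, largest waste first, one entry per table."""
    found = {}
    for row in rows:
        r = dict(zip(COLUMNS, row))
        ratio, wasted = float(r["table_bloat"]), int(r["wastedbytes"])
        if ratio >= min_ratio and wasted >= min_wasted_bytes:
            found[(r["schema_name"], r["table_name"])] = (
                r["schema_name"], r["table_name"], ratio, wasted)
    return sorted(found.values(), key=lambda t: t[3], reverse=True)


def report(host: str, dbname: str, user: str, password: str) -> None:
    """Connect as the read-only user and print the worst offenders."""
    import psycopg2  # third-party driver, e.g. pip install psycopg2-binary

    conn = psycopg2.connect(host=host, dbname=dbname, user=user,
                            password=password, sslmode="require")
    try:
        with conn.cursor() as cur:
            cur.execute("SELECT * FROM get_bloat();")
            for schema, table, ratio, wasted in bloated_tables(cur.fetchall()):
                print(f"{schema}.{table}: bloat ratio {ratio}, {wasted} bytes wasted")
    finally:
        conn.close()
```

For example: report("<server>.postgres.database.azure.com", "<database>", "metrics_reader@<server>", "<password>").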

Then, set up your 3rd party monitoring (in this case, with Datadog)

Once you confirm that your read-only user is able to collect the metrics you want to track on your Azure Postgres single server, you are now ready to set up your 3rd party monitoring!

For this you will need two things. First, a Datadog account. Second, a machine that will host your Datadog agent, to do the heavy lifting of connecting to your database to extract the metrics you want and to push the metrics into your Datadog workspace.

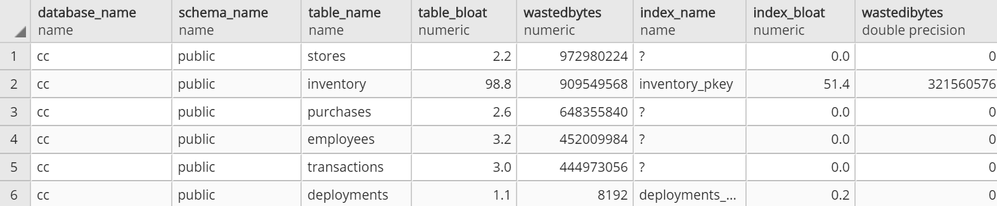

For this exercise, I had an Azure Linux virtual machine handy that I could use as the agent host, but you can follow the Azure Virtual Machines quickstart guides to create a new machine, or use an existing one. Datadog provides scripts to set up diverse environments, which you can find after you log in to your Datadog account and go to the Agents section of Datadog’s Postgres Integrations page. If you follow the instructions, you should get a message similar to the following.

datadog agent setup success state


The next step is to configure the Datadog agent for Postgres-specific collection. If you aren’t already working with an existing postgres.d/conf.yaml, copy conf.yaml.example in /etc/datadog-agent/conf.d/postgres.d/ to conf.yaml and adjust it to your needs.

Once you have followed the directions and set up your host, port, user, and password in /etc/datadog-agent/conf.d/postgres.d/conf.yaml, all that remains is to add the custom_queries section below to your instance configuration.

```yaml
custom_queries:
  - metric_prefix: azure.postgres.single_server.custom_metrics
    query: select database_name, schema_name, table_name, table_bloat, wastedbytes, index_name, index_bloat, wastedibytes from get_bloat();
    columns:
      - name: database_name
        type: tag
      - name: schema_name
        type: tag
      - name: table_name
        type: tag
      - name: table_bloat
        type: gauge
      - name: wastedbytes
        type: gauge
      - name: index_name
        type: tag
      - name: index_bloat
        type: gauge
      - name: wastedibytes
        type: gauge
```
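To see what the agent should report for this configuration, the Python sketch below maps one get_bloat() row to metrics the way we read Datadog’s custom query documentation: columns of type tag become name:value tags attached to every metric from that row, and each gauge column becomes a metric named after the metric_prefix plus the column name. It is an illustration only, not the agent’s code, and the sample row is made up.

```python
from typing import List, Sequence, Tuple

PREFIX = "azure.postgres.single_server.custom_metrics"

# Same order and types as the columns list in conf.yaml.
COLUMNS = [
    {"name": "database_name", "type": "tag"},
    {"name": "schema_name", "type": "tag"},
    {"name": "table_name", "type": "tag"},
    {"name": "table_bloat", "type": "gauge"},
    {"name": "wastedbytes", "type": "gauge"},
    {"name": "index_name", "type": "tag"},
    {"name": "index_bloat", "type": "gauge"},
    {"name": "wastedibytes", "type": "gauge"},
]


def to_metrics(prefix: str, columns: List[dict], row: Sequence) -> List[Tuple[str, float, List[str]]]:
    """Return (metric_name, value, tags) for every gauge column in the row."""
    tags = [f"{c['name']}:{v}" for c, v in zip(columns, row) if c["type"] == "tag"]
    return [(f"{prefix}.{c['name']}", float(v), tags)
            for c, v in zip(columns, row) if c["type"] == "gauge"]


sample = ("mydb", "public", "orders", 1.3, 2048000, "orders_pkey", 1.1, 81920.0)
for name, value, tags in to_metrics(PREFIX, COLUMNS, sample):
    print(name, value, tags)
```

Because index_name is a tag, a table with several indexes produces several series per table metric, one per index tag value; keep that in mind when you aggregate table_bloat in a dashboard.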

Once this step is done, all you need to do is restart the Datadog agent with `sudo systemctl restart datadog-agent` for your custom metrics to start flowing in.

Set up your new bloat monitoring dashboard for Azure Database for PostgreSQL – Single Server

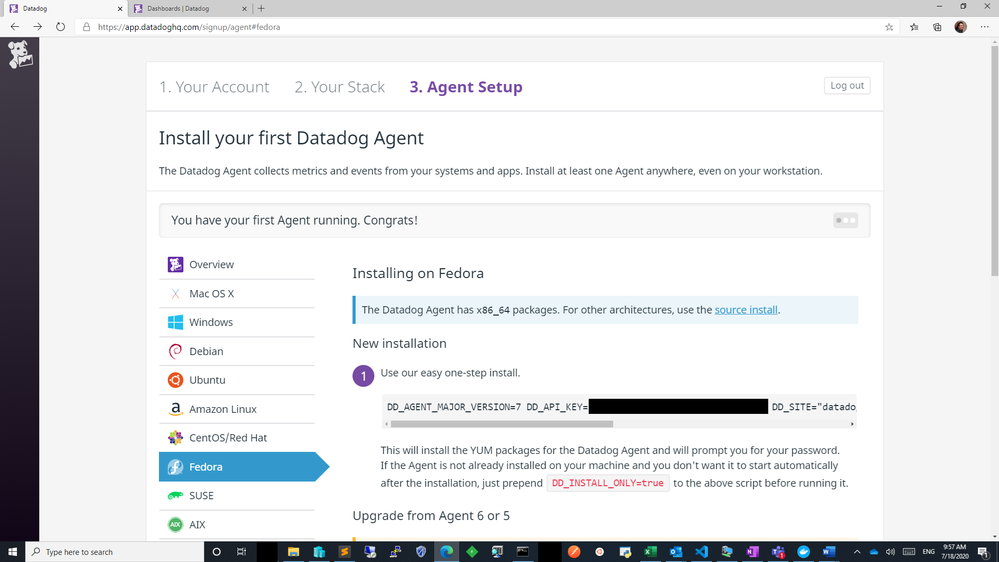

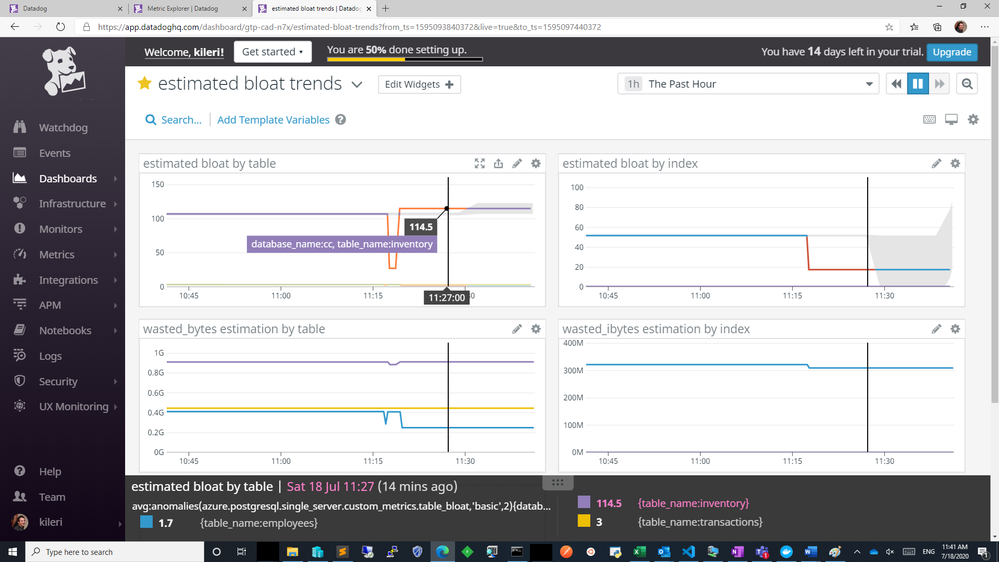

If all goes well, you should be able to see your custom metrics in Metrics Explorer shortly!

azure postgresql custom metrics flowing successfully into datadog workspace

From Metrics Explorer, you can export these charts to a new or existing dashboard and edit the widgets to show separate visuals by dimension, such as table or index, or you can simply overlay them as shown below. Datadog’s documentation covers dashboard editing in detail.

custom metrics added to a new dashboard


Knowing how your bloat metrics are trending will help you investigate performance problems and help you to identify if bloat is contributing to performance fluctuations. Monitoring bloat in Postgres will also help you evaluate whether your workload (or your Postgres tables) are configured optimally for autovacuum to perform its function.

Using custom metrics makes it easy to monitor bloat in Azure Database for PostgreSQL

You can and absolutely should track bloat. And with custom metrics and Datadog, you can easily track bloat in your workload for an Azure Database for PostgreSQL server. You can track other types of custom Postgres metrics easily in the same fashion.

One more thing to keep in mind: I recommend that you always be intentional about what you collect and how you collect it, as metric polling adds load to your database and can impact your workload.

If you have a much more demanding workload and are using Hyperscale (Citus) to scale out Postgres horizontally, I will soon have a post on how you can monitor bloat with custom metrics in Azure Database for Postgres – Hyperscale (Citus). I look forward to seeing you there!

by Scott Muniz | Jul 23, 2020 | Uncategorized


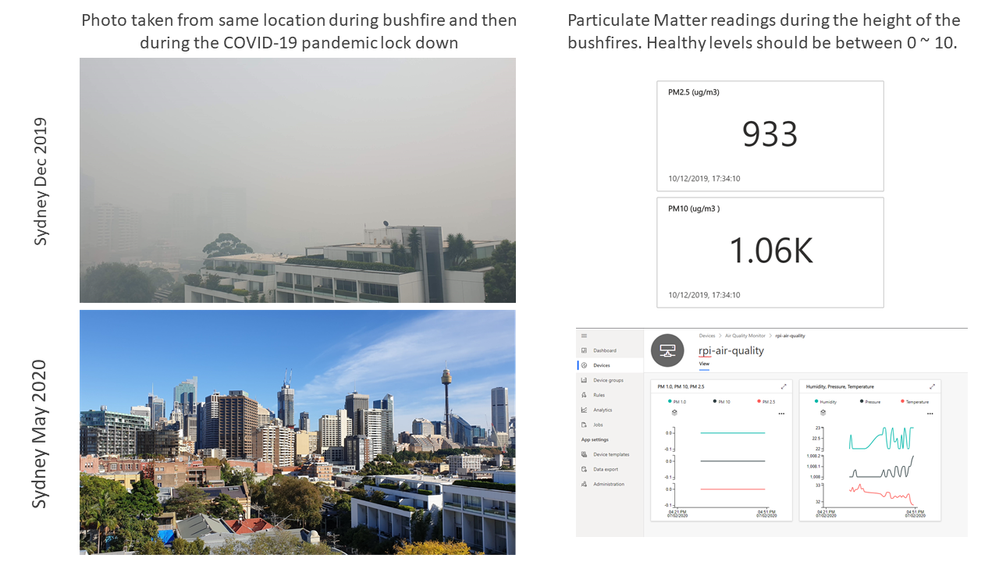

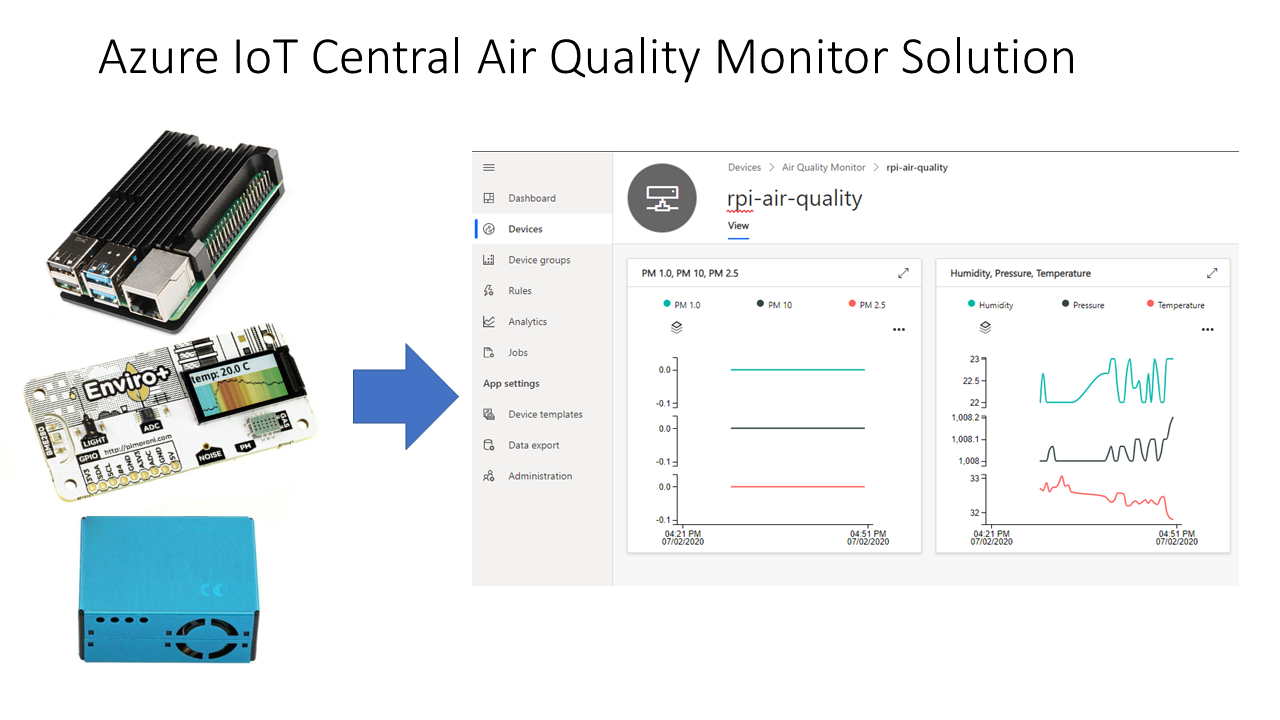

Monitor Air Pollution with a Raspberry Pi, a Particulate Matter sensor and IoT Central

Background

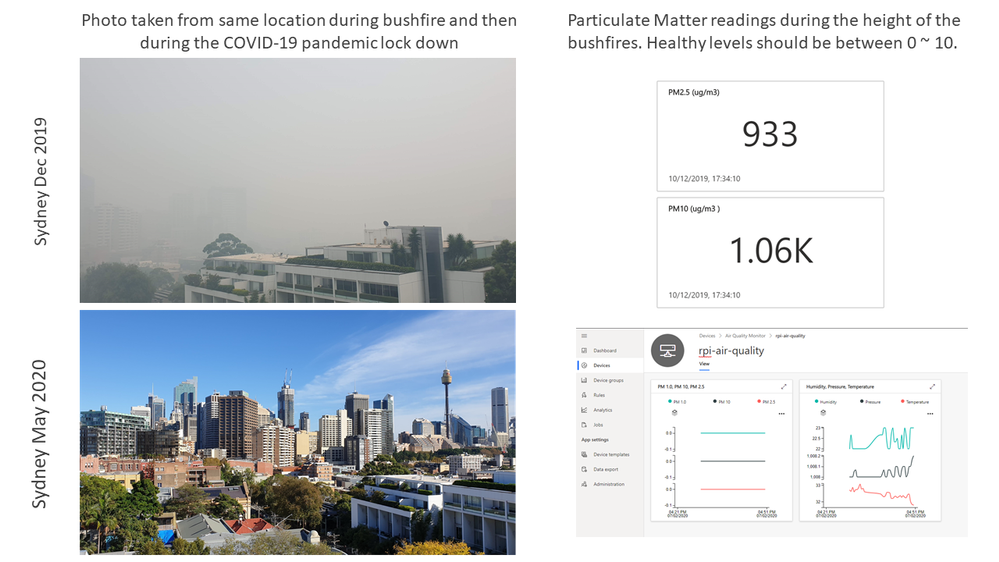

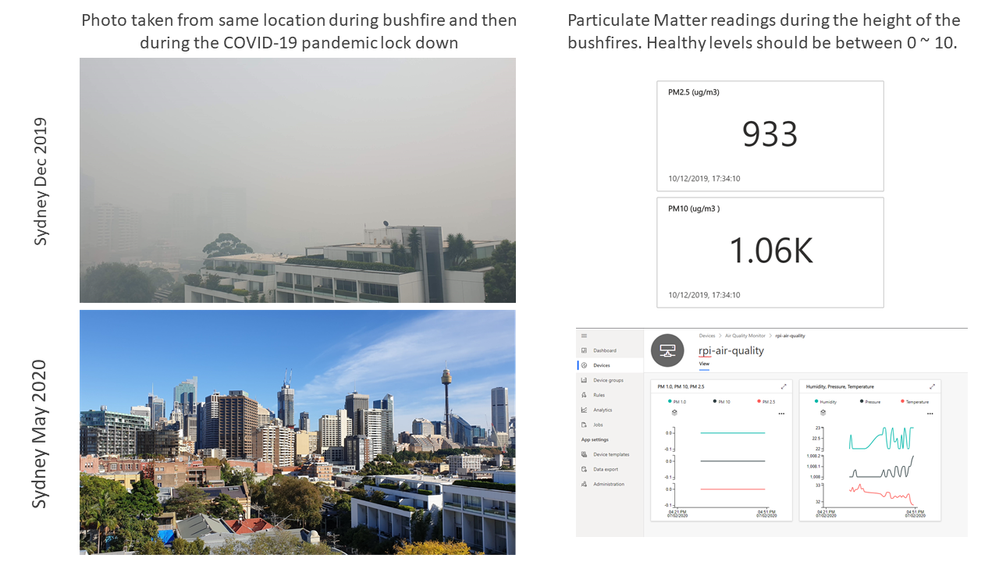

Born of necessity, this project tracks the air quality over Sydney during the height of the Australian bushfires. I wanted to gauge when it was safe to go outside, or when it was better to close up the apartment and stay in for the day.

#JulyOT

This is part of the #JulyOT IoT Tech Community series, a collection of blog posts, hands-on-labs, and videos designed to demonstrate and teach developers how to build projects with Azure Internet of Things (IoT) services. Please also follow #JulyOT on Twitter.

Introduction

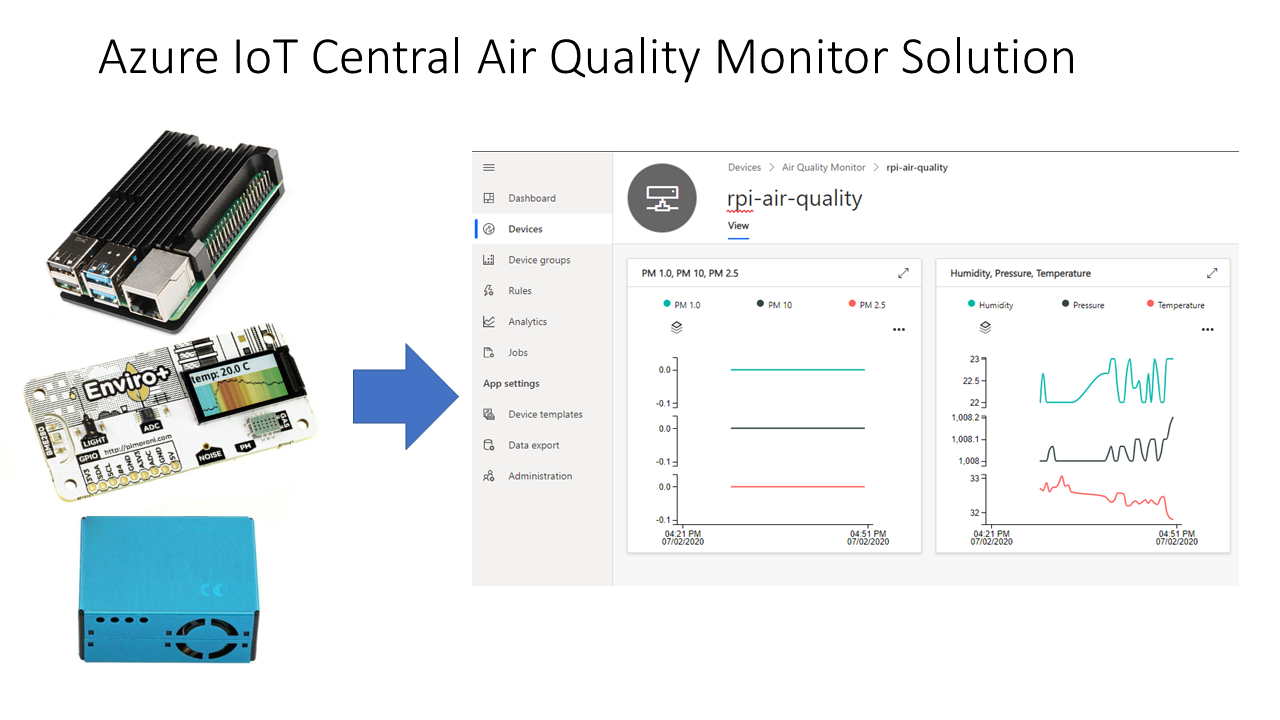

In this hands-on lab, you will learn how to create and debug a Python application on a Raspberry Pi with Visual Studio Code and the Remote SSH extension. The app requires the Pimoroni Enviro+ pHAT, and reads data from the PMS5003 particulate matter (PM) and BME280 sensors and streams the data to Azure IoT Central.
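For a feel of what the app does between the sensor and IoT Central, here is a minimal Python sketch that decodes one PMS5003 reading and turns it into a telemetry payload. It assumes the 32-byte frame layout from Plantower’s PMS5003 datasheet (start bytes 0x42 0x4D, a frame length, thirteen big-endian 16-bit data words, then a checksum equal to the sum of the preceding 30 bytes). It is not the lab’s code, which relies on Pimoroni’s libraries, and the telemetry field names are hypothetical; they must match the capabilities defined in your IoT Central device template.

```python
import json
import struct

FRAME_LEN = 32
START = b"\x42\x4d"
FIELDS = (
    "pm1_0_cf1", "pm2_5_cf1", "pm10_cf1",   # ug/m3, "standard particle" (CF=1)
    "pm1_0", "pm2_5", "pm10",               # ug/m3, atmospheric environment
    "gt0_3um", "gt0_5um", "gt1_0um",        # particles per 0.1 L of air
    "gt2_5um", "gt5_0um", "gt10um",
    "reserved",
)


def parse_pms5003_frame(frame: bytes) -> dict:
    """Decode one 32-byte PMS5003 frame into named readings."""
    if len(frame) != FRAME_LEN or frame[:2] != START:
        raise ValueError("not a PMS5003 frame")
    # Skip the 2 start bytes, then read 15 big-endian unsigned 16-bit words:
    # frame length, 13 data words, checksum.
    words = struct.unpack(">2x15H", frame)
    length, data, checksum = words[0], words[1:14], words[14]
    if length != 2 * len(FIELDS) + 2:
        raise ValueError("unexpected frame length")
    if checksum != sum(frame[:30]):
        raise ValueError("checksum mismatch")
    return dict(zip(FIELDS, data))


def telemetry_message(pm: dict, temperature_c: float, humidity_pct: float, pressure_hpa: float) -> str:
    """Build a JSON telemetry body (hypothetical field names)."""
    return json.dumps({
        "pm1": pm["pm1_0"],
        "pm25": pm["pm2_5"],
        "pm10": pm["pm10"],
        "temperature": round(temperature_c, 1),   # BME280 readings
        "humidity": round(humidity_pct, 1),
        "pressure": round(pressure_hpa, 1),
    })


# A synthetic frame, laid out the way the sensor sends it.
body = START + struct.pack(">14H", 28, 5, 9, 11, 5, 9, 11, 900, 300, 60, 8, 2, 1, 0)
frame = body + struct.pack(">H", sum(body))
print(telemetry_message(parse_pms5003_frame(frame), 21.43, 55.0, 1013.6))
```

Rejecting frames with a bad checksum matters in practice, because a serial read can start mid-frame.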

Parts required

- Raspberry Pi 2 or better, SD Card, and Raspberry Pi power supply

- Pimoroni Enviro+ pHAT

- PMS5003 Particulate Matter Sensor with cable (available from Pimoroni and eBay)

This lab depends on Visual Studio Code and Remote SSH development, which is supported on Raspberry Pis built on ARMv7 chips or better. The Raspberry Pi Zero, built on the ARMv6 architecture, can run the solution but does not support Remote SSH development.

Solution Architecture

Let’s get started

Head to Raspberry Pi Air Pollution Monitor

There are five modules covering the following topics:

- Module 1: Create an Azure IoT Central application

- Module 2: Set up your Raspberry Pi

- Module 3: Set up your development environment

- Module 4: Run the solution

- Module 5: Dockerize the Air Quality Monitor solution

Source code

All source code is available for the Raspberry Pi Air Pollution Monitor.

Acknowledgements

This tutorial builds on the Azure IoT Python SDK 2 samples.

Have fun and stay safe and be sure to follow us on #JulyOT.

by Scott Muniz | Jul 23, 2020 | Uncategorized


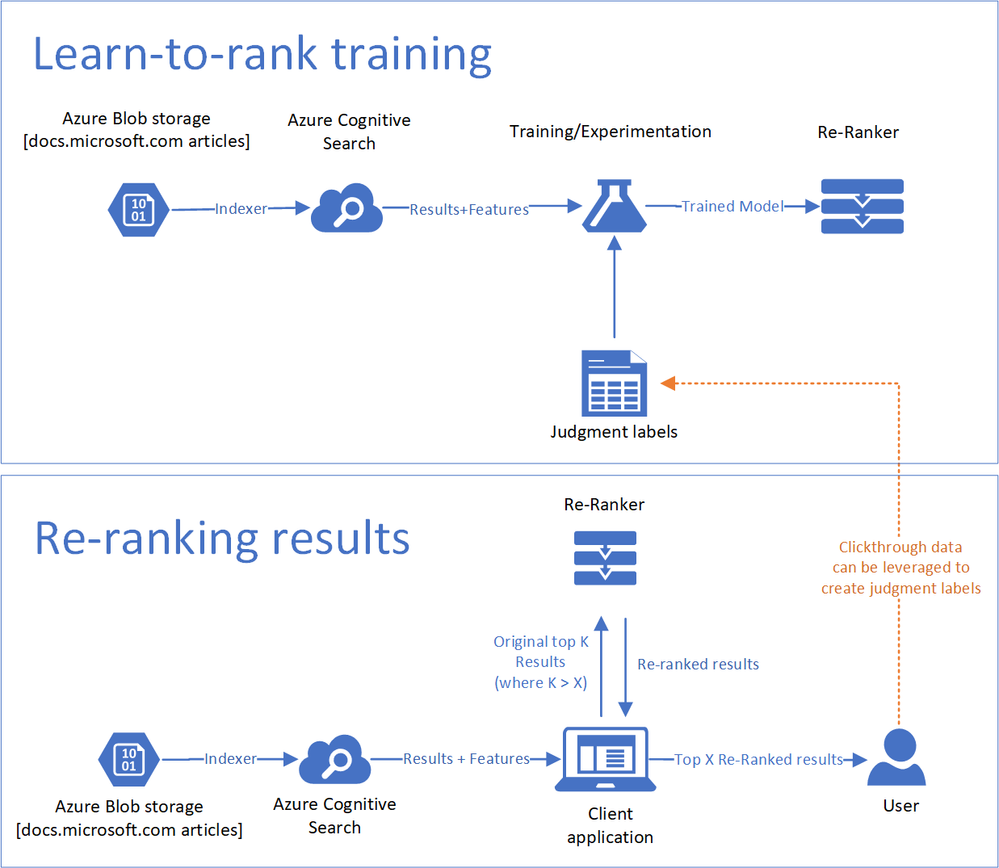

Are you looking for ways to fine-tune your model relevance? Sometimes developers create a customized ranking model to re-rank the results returned by Azure Cognitive Search. This allows them to use application-specific context as part of that model. To help facilitate this, Azure Cognitive Search is introducing a new query parameter called featuresMode. When this parameter is set, the response will contain information used to compute the search score of retrieved documents, which can be leveraged to train a re-ranking model using a Machine Learning approach.

We have created a new sample and tutorial that walks you through the learning to rank process end-to-end, with steps for designing, training, testing, and consuming a ranking model. The tutorial shows you how to extract features using the featuresMode parameter and train a ranking model to increase total search relevance as measured by the offline NDCG metric.
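NDCG (normalized discounted cumulative gain) rewards rankings that put the most relevant documents first. The Python sketch below uses one common formulation: exponential gain 2^rel - 1 with a log2(position + 1) discount, normalized by the DCG of the ideal ordering of the same judged results. The tutorial may use a different gain, cutoff, or ideal set (a strict IDCG uses every judged document for the query), so treat this as an illustration of the metric rather than its exact implementation.

```python
import math
from typing import Sequence


def dcg(relevances: Sequence[float], k: int) -> float:
    """Discounted cumulative gain of the top-k results, in ranked order."""
    return sum((2 ** rel - 1) / math.log2(pos + 1)
               for pos, rel in enumerate(relevances[:k], start=1))


def ndcg(relevances: Sequence[float], k: int) -> float:
    """DCG normalized by the DCG of the ideal (descending) ordering."""
    ideal = dcg(sorted(relevances, reverse=True), k)
    return dcg(relevances, k) / ideal if ideal > 0 else 0.0


# Judged relevance (0 = irrelevant, 3 = perfect) of the top 3 results, in ranked order.
print(round(ndcg([0, 3, 2], k=3), 3))  # 0.665
```

A re-ranker that moved the perfect match from second to first place would lift this query's score to 1.0, which is the kind of offline improvement the tutorial measures.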

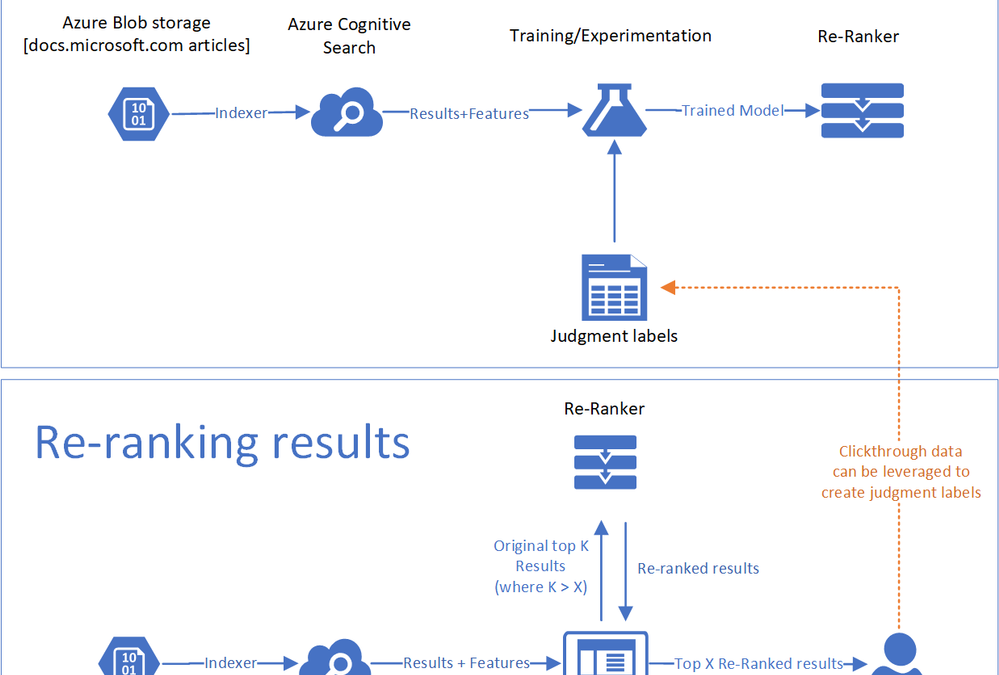

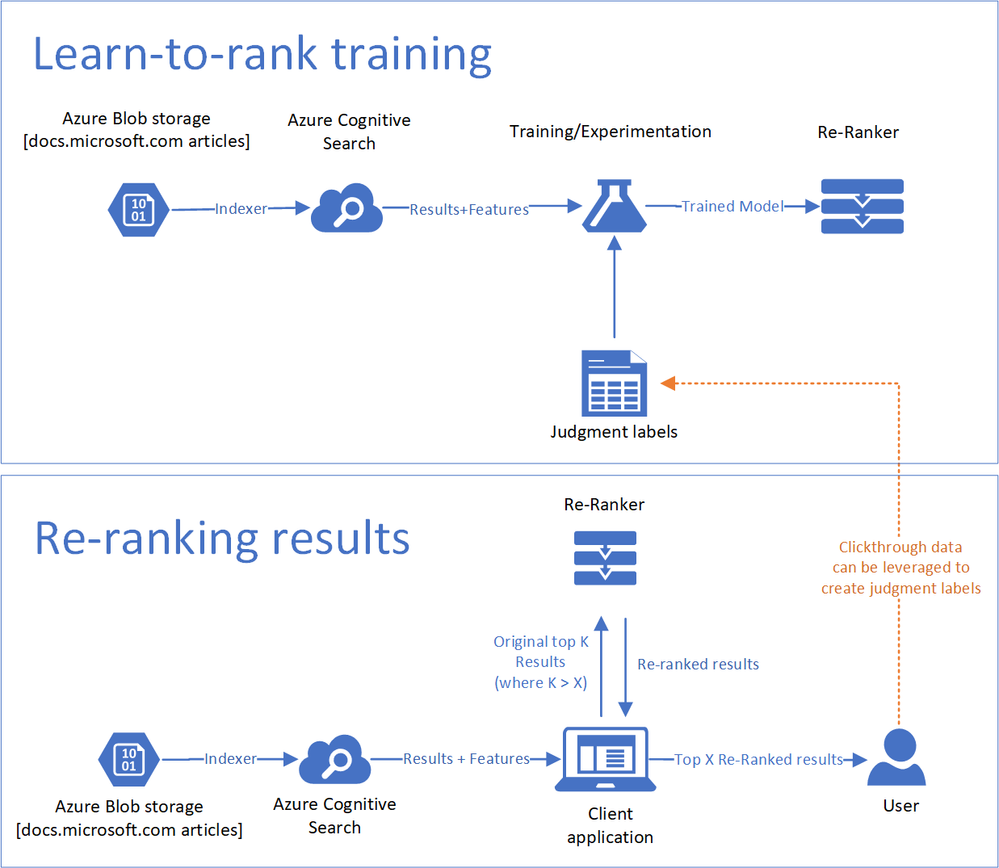

For customers who are less familiar with machine learning: a learn-to-rank method re-ranks the top results based on a machine learning model. The re-ranking process can incorporate clickthrough data or domain expertise as a reflection of what is truly relevant to users. The visualization above shows the components of the learn-to-rank method used in the tutorial.

| Legend | Description |
| --- | --- |
| Data | The articles and search statistics that reside in Azure Blob storage. |
| Search Index | Azure Cognitive Search ingests the data into a search index. |
| Re-ranker | Queries against the index produce scores and scoring features that are used to train a machine learning model based on labels derived from clickthrough data. After the model is trained, you can use it to re-rank your documents. |
| Judgement labels | To train the machine learning model, you need labeled data that signals which documents are most relevant for different queries. One way to get it is to collect clickthrough data to understand which documents are most popular. Another is to have human judges label the most relevant documents. |

The featuresMode parameter is currently in preview and can be accessed through the Azure Cognitive Search REST APIs.

Sample Request

```http
POST https://[service name].search.windows.net/indexes/[index name]/docs/search?api-version=[api-version]
Content-Type: application/json
api-key: [admin or query key]
```

Request Body

```json
{
  "search": ".net core",
  "featuresMode": "enabled",
  "select": "title_en_us, description_en_us",
  "searchFields": "body_en_us,description_en_us,title_en_us,apiNames,urlPath,searchTerms, keyPhrases_en_us",
  "scoringStatistics": "global"
}
```

Sample Response

```
{
  "value": [
    {
      "@search.score": document_score (if a text query was provided),
      "@search.highlights": {
        field_name: [ subset of text, … ],
        …
      },
      "@search.features": {
        "field_name_1": {
          "uniqueTokenMatches": 1.0,
          "similarityScore": 0.29541412,
          "termFrequency": 2
        },
        "field_name_2": {
          "uniqueTokenMatches": 3.0,
          "similarityScore": 1.75345345,
          "termFrequency": 6
        },
        …
      },
      …
    },
    …
  ]
}
```
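To turn a response like this into training data, each result’s @search.features object has to be flattened into a fixed-length vector. The Python sketch below does that, alongside building a request body like the sample above. The feature names come from the sample response; the helper names, the choice to prepend @search.score, and the zero default for a field with no feature entry (we assume such fields can be missing) are our own.

```python
import json
from typing import List, Sequence

FEATURE_NAMES = ("uniqueTokenMatches", "similarityScore", "termFrequency")


def search_body(query: str, search_fields: Sequence[str]) -> str:
    """Request body asking Azure Cognitive Search to return scoring features."""
    return json.dumps({
        "search": query,
        "featuresMode": "enabled",
        "searchFields": ",".join(search_fields),
        "scoringStatistics": "global",
    })


def feature_vector(result: dict, fields: Sequence[str]) -> List[float]:
    """[score, then uniqueTokenMatches, similarityScore, termFrequency per field]."""
    per_field = result.get("@search.features", {})
    vec = [float(result.get("@search.score", 0.0))]
    for f in fields:
        feats = per_field.get(f, {})
        vec.extend(float(feats.get(name, 0.0)) for name in FEATURE_NAMES)
    return vec


fields = ["title_en_us", "description_en_us"]
result = {
    "@search.score": 2.5,
    "@search.features": {
        "title_en_us": {"uniqueTokenMatches": 1.0, "similarityScore": 0.29541412, "termFrequency": 2},
    },
}
print(search_body(".net core", fields))
print(feature_vector(result, fields))  # [2.5, 1.0, 0.29541412, 2.0, 0.0, 0.0, 0.0]
```

Fixing the field order up front keeps every vector aligned, which any standard ranking learner needs.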

If you are interested in this new capability, contact us at azuresearchrelevance@microsoft.com.

References

Search Ranking Tutorial Github

FeaturesMode REST API Reference

by Scott Muniz | Jul 23, 2020 | Uncategorized


Summer has always been a well-deserved break, but none more so than this year. To all teachers and educators who’ve been supporting our students—thank you. You deserve this chance to recharge. While you might not know if you’ll be teaching in classrooms or adopting a hybrid model, here are some quick ways that Microsoft Edge can help you save time and stay organized for the new school year.

Ready to try these out? Download the new Microsoft Edge and read on.

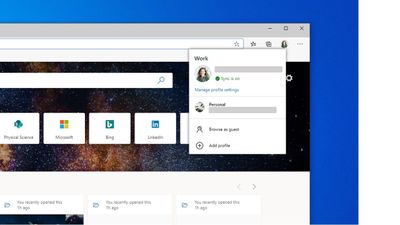

Keep teaching and personal browsing separate by creating your own teacher profile

Crafting a lesson on plants for your students and looking up how to make the perfect sourdough bread are two vastly different activities. Why mix the two in your browser? Keep your tabs, passwords, favorites, and extensions separate by creating a teacher profile for school and a personal profile for the rest of your day-to-day life. That way, you won’t mix (or lose) tabs as you go from exploring photosynthesis to learning how to bake bread.

How to set up profiles:

Step 1: Click the profile icon to the right of the address bar.

Step 2: From the flyout menu, click “add a new profile”.

Step 3: Enter your school email and password.

Step 4: Create a personal profile by repeating the previous steps but with your personal email and password.

Step 5: To switch between profiles, click on the profile icon and select the profile you want to use.

Access your files and lessons faster as you work from the browser

Remote teaching has meant going all-digital, and keeping track of all that content isn’t easy. In the new Microsoft Edge, you can set up an easy-to-use Office 365¹ dashboard so that every time you open a new tab, you can quickly find the files you need. You can customize this dashboard to pin files and websites you always use, or you can launch an Office 365 app from the app menu. By connecting Office 365 to Microsoft Edge, you get a fast, intelligent way to access your files that will save you time.

How to set up the new tab page:

Step 1: Sign in to Office 365 using your teacher account and profile.

Step 2: Open a new tab in the browser.

Step 3: Click on the gear icon in the top right corner of the frame (under where you see your profile picture).

Step 4: Select “Office 365” under Page Content.

Step 5: Choose which page layout you like by trying out Focused, Informational, or Inspirational.

Step 6: Explore the page to see what’s available!

Easily build a lesson plan (or collect anything) using Collections

The best lessons use great content but finding and organizing that content can be time-consuming. In the past, this has meant a lot of open tabs and a lot of copying and pasting from the web. With Collections, we hope to make lesson planning a little bit easier! Now you can easily grab the web content that you need and save it in one place without leaving the browser. You can save a link to an entire page or simply highlight pictures or text and drag them into your collection. Now, all your resources are in one, convenient location.

How to use Collections:

Step 1: Click the Collections icon next to your profile icon to open the Collections pane.

Step 2: Click the blue + sign to start a new collection.

Step 3: Start adding content! Find a web page with something you want to save and click “Add current page”.

Step 4: Just want something specific on the page? Highlight text or pictures you want and drag it over to the pane to add it to the collection.

Step 5: Save your collection to a Word doc so you can share it with your students: open the collection’s menu and click “Send to Word”. Voila!

We hope you found these tips helpful—we truly can’t thank you enough. If you found them useful, share them with your fellow teachers so they can save time and stay organized too!

Want to become even more familiar with the new Microsoft Edge? Check out our How To Get Started User Guide!

¹ Azure Active Directory (AAD) and Office 365 subscription required.

by Scott Muniz | Jul 23, 2020 | Alerts, Microsoft, Technology, Uncategorized


In my introductory post we saw that there are many different ways in which you can host a GraphQL service on Azure and today we’ll take a deeper look at one such option, Azure App Service, by building a GraphQL server using dotnet. If you’re only interested in the Azure deployment, you can jump forward to that section. Also, you’ll find the complete sample on my GitHub.

Getting Started

For our server, we’ll use the graphql-dotnet project, which is one of the most common GraphQL server implementations for dotnet.

First up, we’ll need an ASP.NET Core web application, which we can create with the dotnet cli:

Next, open the project in an editor and add the NuGet packages we’ll need:

```xml
<PackageReference Include="GraphQL.Server.Core" Version="3.5.0-alpha0046" />
<PackageReference Include="GraphQL.Server.Transports.AspNetCore" Version="3.5.0-alpha0046" />
<PackageReference Include="GraphQL.Server.Transports.AspNetCore.SystemTextJson" Version="3.5.0-alpha0046" />
```

At the time of writing, graphql-dotnet v3 is in preview. We’re going to use it for our server, but be aware that there may be changes when it is released.

These packages provide a GraphQL server, the middleware needed to wire it up with ASP.NET Core, and support for System.Text.Json as the JSON serializer/deserializer (you can use Newtonsoft.Json instead if you prefer).

We’ll also add a package for GraphQL Playground, a UI for exploring and testing GraphQL queries, but it’s not needed or recommended when deploying into production.

```xml
<PackageReference Include="GraphQL.Server.Ui.Playground" Version="3.5.0-alpha0046" />
```

With the packages installed, it’s time to set up the server.

Implementing a Server

We need a few things to implement the server: a GraphQL schema, some types that implement that schema, and routing configured to support GraphQL’s endpoints. We’ll start by defining the schema that’s going to support our server, and for the schema we’ll use a basic trivia app (which I’ve used for a number of GraphQL demos in the past). For the data, we’ll use Open Trivia DB.

.NET Types

First up, we’re going to need some generic .NET types that will represent the underlying data structure for our application. These would be the DTOs (Data Transfer Objects) that we might use in Entity Framework, but we’re just going to run in memory.

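```csharp
using System.Collections.Generic;
using System.Text.Json.Serialization;

public class Quiz
{
    // Derived from the question text, e.g. "Who wrote X" -> "who-wrote-x".
    public string Id
    {
        get
        {
            return Question.ToLower().Replace(" ", "-");
        }
    }

    public string Question { get; set; }

    [JsonPropertyName("correct_answer")]
    public string CorrectAnswer { get; set; }

    [JsonPropertyName("incorrect_answers")]
    public List<string> IncorrectAnswers { get; set; }
}
```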

As you can see, it’s a fairly generic C# class. We’ve added a few serialization attributes to help convert the JSON to .NET, but otherwise it’s nothing special. It’s also not usable with GraphQL yet. For that, we need to expose the type to a GraphQL schema by creating a new class that inherits from ObjectGraphType<Quiz>, which comes from the GraphQL.Types namespace:

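```csharp
using GraphQL.Types;

public class QuizType : ObjectGraphType<Quiz>
{
    public QuizType()
    {
        Name = "Quiz";
        Description = "A representation of a single quiz.";

        // Expression-based fields: the field name is inferred from the property.
        Field(q => q.Id, nullable: false);
        Field(q => q.Question, nullable: false);
        Field(q => q.CorrectAnswer, nullable: false);

        // Explicitly named field typed as a non-null list of non-null strings.
        Field<NonNullGraphType<ListGraphType<NonNullGraphType<StringGraphType>>>>("incorrectAnswers");
    }
}
```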

The Name and Description properties are used to provide the documentation for the type. Next, we use Field to define what we want exposed in the schema and how we want it marked up for the GraphQL type system. We do this for each field of the DTO that we want to expose, either with a lambda like q => q.Id or by giving an explicit field name (incorrectAnswers). This is also where you control the schema validation information, defining the nullability of the fields to match the way GraphQL expects it to be represented. This class would make a GraphQL type representation of:

```graphql
type Quiz {
  id: String!
  question: String!
  correctAnswer: String!
  incorrectAnswers: [String!]!
}
```

Finally, we want to expose a way to query the types in our schema, and for that we’ll need a query type that inherits from ObjectGraphType:

```csharp
using System;
using GraphQL.Types;

public class TriviaQuery : ObjectGraphType
{
    public TriviaQuery()
    {
        // Placeholder resolvers; they are implemented once a data store is available.
        Field<NonNullGraphType<ListGraphType<NonNullGraphType<QuizType>>>>("quizzes", resolve: context =>
        {
            throw new NotImplementedException();
        });

        Field<NonNullGraphType<QuizType>>("quiz", arguments: new QueryArguments() {
            new QueryArgument<NonNullGraphType<StringGraphType>> { Name = "id", Description = "id of the quiz" }
        },
        resolve: (context) => {
            throw new NotImplementedException();
        });
    }
}
```

Right now there is only a single type in our schema, but if you had multiple types, TriviaQuery would have more fields with resolvers to represent them. We’ve also not implemented the resolvers, which are how GraphQL gets the data to return; we’ll come back to that a bit later. This class produces the equivalent of the following GraphQL:

```graphql
type TriviaQuery {
  quizzes: [Quiz!]!
  quiz(id: String!): Quiz!
}
```

Creating a GraphQL Schema

With the DTO type, GraphQL type and Query type defined, we can now implement a schema to be used on the server:

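```csharp
using GraphQL.Types;

public class TriviaSchema : Schema
{
    public TriviaSchema(TriviaQuery query)
    {
        // Root query type; mutations and subscriptions would be assigned here too.
        Query = query;
    }
}
```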

This is also where we would register mutations and subscriptions, but we’re not using them in this demo.

Wiring up the Server

For the server, we integrate with the ASP.NET Core pipeline, which means we need to set up some services for the dependency injection framework. Open Startup.cs and update ConfigureServices:

```csharp
public void ConfigureServices(IServiceCollection services)
{
    services.AddTransient<HttpClient>();
    services.AddSingleton<QuizData>();
    services.AddSingleton<TriviaQuery>();
    services.AddSingleton<ISchema, TriviaSchema>();

    services.AddGraphQL(options =>
    {
        options.EnableMetrics = true;
        options.ExposeExceptions = true;
    })
    .AddSystemTextJson();
}
```

The most important part of the configuration is the AddGraphQL call, where the GraphQL server is set up and we define the JSON serializer, System.Text.Json. The registrations before it define dependencies that will be injected into other types, but there’s a new type we’ve not seen before, QuizData. This type just provides access to the data store that we’re using (in-memory storage populated with data queried from Open Trivia DB), so I’ll skip its implementation (you can see it on GitHub).

With the data store available, we can update TriviaQuery to consume the data store and use it in the resolvers:

```csharp
public class TriviaQuery : ObjectGraphType
{
    public TriviaQuery(QuizData data)
    {
        Field<NonNullGraphType<ListGraphType<NonNullGraphType<QuizType>>>>("quizzes", resolve: context => data.Quizzes);

        Field<NonNullGraphType<QuizType>>("quiz", arguments: new QueryArguments() {
            new QueryArgument<NonNullGraphType<StringGraphType>> { Name = "id", Description = "id of the quiz" }
        },
        resolve: (context) => data.FindById(context.GetArgument<string>("id")));
    }
}
```

Once the services are defined we can add the routing in:

```csharp
public void Configure(IApplicationBuilder app, IWebHostEnvironment env)
{
    if (env.IsDevelopment())
    {
        app.UseDeveloperExceptionPage();
        app.UseGraphQLPlayground();
    }

    app.UseRouting();
    app.UseGraphQL<ISchema>();
}
```

I’ve put the inclusion of GraphQL Playground within the development environment check, as that’s how you’d want to do it for a real app, but in the demo on GitHub I include it every time.

Now we can launch our application, navigate to https://localhost:5001/ui/playground, and run some queries to get data back.
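Besides the Playground, any HTTP client can query the server, since a GraphQL request is a plain JSON POST with query and variables members. The Python sketch below assumes the default /graphql endpoint that UseGraphQL<ISchema>() maps and a local run over HTTP (typically http://localhost:5000, which also avoids Python not trusting the ASP.NET Core development certificate). The quiz_id helper mirrors the Id property of the Quiz DTO for plain ASCII questions; all function names here are our own.

```python
import json
import urllib.request


def quiz_id(question: str) -> str:
    # Mirrors Quiz.Id in the C# DTO: Question.ToLower().Replace(" ", "-")
    return question.lower().replace(" ", "-")


QUIZ_QUERY = """
query GetQuiz($id: String!) {
  quiz(id: $id) {
    question
    correctAnswer
    incorrectAnswers
  }
}
"""


def graphql_request(url: str, query: str, variables: dict) -> urllib.request.Request:
    """Build a standard GraphQL-over-HTTP POST request."""
    body = json.dumps({"query": query, "variables": variables}).encode("utf-8")
    return urllib.request.Request(
        url, data=body, method="POST",
        headers={"Content-Type": "application/json"},
    )


def run(url: str = "http://localhost:5000/graphql") -> None:
    """List the quizzes, then fetch the first one by id (needs a running server)."""
    with urllib.request.urlopen(graphql_request(url, "{ quizzes { id question } }", {})) as resp:
        quizzes = json.load(resp)["data"]["quizzes"]
    req = graphql_request(url, QUIZ_QUERY, {"id": quizzes[0]["id"]})
    with urllib.request.urlopen(req) as resp:
        print(json.load(resp))
```

Call run() while the app is running locally. Sending variables separately, rather than splicing the id into the query string, keeps the query text constant and avoids escaping problems.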

Deploying to App Service

With all the code complete, let’s look at deploying it to Azure. For this, we’ll use a standard Azure App Service running the latest .NET Core (3.1 at time of writing) on Windows. We don’t need to do anything special for the App Service, it’s already optimised to run an ASP.NET Core application, which is all this really is. If we were using a different runtime, like Node.js, we’d follow the standard setup for a Node.js App Service.

To deploy, we’ll use GitHub Actions, and you’ll find docs on how to do that already written. You’ll find the workflow file I’ve used in the GitHub repo.

With a workflow committed and pushed to GitHub and our App Service waiting, the Action will run and our application will be deployed. The demo I created is here.

Conclusion

Throughout this post we’ve taken a look at how we can create a GraphQL server running on ASP.NET Core using graphql-dotnet and deploy it to an Azure App Service.

When it comes to the Azure side of things, there’s nothing different we have to do to run the GraphQL server in an App Service than any other ASP.NET Core application, as graphql-dotnet is implemented to leverage all the features of ASP.NET Core seamlessly.

Again, you’ll find the complete sample on my GitHub for you to play around with yourself.

This post was originally published on www.aaron-powell.com .
