Guest blog by Teaching Assistant Patrick Chao

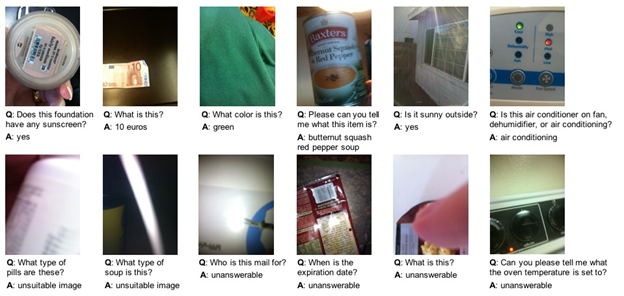

Applications that use machine learning have greatly improved human life in many fields. One such application helps people who are blind or have low vision learn about their visual surroundings using computer vision. These applications let people with visual impairments accomplish daily tasks more independently, such as recognizing the denomination of currency, checking whether their socks match, and identifying which flavor of yogurt they have picked for breakfast. To spur research on such applications, a series of VizWiz datasets was created to support several AI challenges. One of these challenges uses a dataset of images taken by visually impaired users, each paired with a question about the image.

In the spring 2021 semester of the course “Introduction to Machine Learning,” Professor Danna Gurari invited all 19 UT Austin graduate students in the class to join the visual question answering challenge. In this challenge, students analyzed images taken by visually impaired people, along with the question asked about each image, and trained a model to predict whether the question can be answered. In doing so, they learned how to process image and text data and combine the two to train models. Before the challenge, students had learned the fundamental theory of machine learning; however, they came from a variety of backgrounds, and only a few had programming experience. For most of them, this would be their first machine learning challenge.

In class, Professor Gurari introduced various methods of extracting features with Scikit-learn. During in-class labs, she also demonstrated how to use Azure APIs to extract more advanced features. After a brief introduction, even students without programming experience could apply Azure's services to practice advanced feature extraction on both images and text. When students ran into problems, Azure's documentation and learning resources let them explore on their own and quickly learn how to use the services. The services also gave students ideas for designing experiments and ultimately helped them train better models for the challenge.
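To make the task more concrete, here is a minimal sketch of the kind of pipeline a student might build. It is not the class's actual solution. Scikit-learn's `TfidfVectorizer` turns each question into text features, and a second vectorizer does the same for a string of image tags. The two feature sets are concatenated, and a logistic regression classifier predicts whether the question is answerable. The toy data, the tag strings (standing in for features an image-analysis service such as Azure's might return), and the helper `predict_answerable` are all hypothetical. A real submission would train on the VizWiz images and questions.

```python
from scipy.sparse import hstack
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression

# Hypothetical toy data: a question, a string of image tags (a stand-in for
# features an image-analysis service might return), and a label where
# 1 = answerable and 0 = unanswerable.
questions = [
    "what denomination is this bill",
    "do these socks match",
    "what flavor is this yogurt",
    "what is this",
    "can you read the label",
    "what color is this shirt",
]
image_tags = [
    "currency paper money text",
    "sock clothing textile",
    "yogurt food container text",
    "blurry dark",
    "blurry ceiling",
    "shirt clothing blue",
]
labels = [1, 1, 1, 0, 0, 1]

# Separate vectorizers keep question words and image tags in separate columns.
question_vec = TfidfVectorizer()
tag_vec = TfidfVectorizer()

# Combine text and image features side by side into one sparse matrix.
X = hstack([
    question_vec.fit_transform(questions),
    tag_vec.fit_transform(image_tags),
]).tocsr()

clf = LogisticRegression(max_iter=1000)
clf.fit(X, labels)


def predict_answerable(question, tags):
    """Hypothetical helper: return 1 if predicted answerable, else 0."""
    x = hstack([question_vec.transform([question]), tag_vec.transform([tags])]).tocsr()
    return int(clf.predict(x)[0])


print(predict_answerable("what denomination is this bill", "currency paper money text"))
```

Keeping one vectorizer per input means the same word can carry different weight depending on whether it came from the question or from the image, which is one simple way to combine the two modalities.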

Within two weeks, 70% of the students completed the challenge, and almost half of the models achieved an accuracy above 60%. Students from a range of majors, including User Experience, English Literature, and Library Science, were able to complete this machine learning challenge with the help of Azure services.

Additional Learning Resources

Interested in learning more about AI and computer vision? See the following:

Detect objects in images with the Custom Vision service – Learn | Microsoft Docs

Classify images with the Custom Vision service – Learn | Microsoft Docs

Explore computer vision in Microsoft Azure – Learn | Microsoft Docs

Process images with the Computer Vision service – Learn | Microsoft Docs

Classify images with the Microsoft Custom Vision Service – Learn | Microsoft Docs

Process and classify images with the Azure cognitive vision services – Learn | Microsoft Docs

Identify faces and expressions by using the Computer Vision API in Azure Cognitive Services – Learn | Microsoft Docs

Classify endangered bird species with Custom Vision – Learn | Microsoft Docs

Use AI to recognize objects in images by using the Custom Vision service – Learn | Microsoft Docs

Introduction to Computer Vision with PyTorch – Learn | Microsoft Docs
