by Contributed | May 2, 2024 | Technology

This article is contributed. See the original author and article here.

We are excited to announce the release of Single Sign-On (SSO) for the Defender for IoT Sensor Console! This powerful feature simplifies the login process, enhances security, and provides a seamless experience for all users. Let’s dive into the details:

What’s New?

SSO Support on the sensor console

With SSO, users can log in once and gain access to the sensor console without the hassle of re-entering credentials.

Figure 1: Defender for IoT login page

New integration with Microsoft Entra ID

By using Entra ID, your organization ensures consistent access controls across different sensors and sites. SSO simplifies onboarding and offboarding processes, reduces administrative overhead, and strengthens security.

Getting Started

Ready to set up SSO for your sensor console?

Follow this step-by-step guide by visiting our documentation: Set up single sign-on for Microsoft Defender for IoT sensor console.

Learn More

What’s new in Microsoft Defender for IoT?

Get ready to experience enhanced security and seamless access with SSO for the Sensor Console. If you have any questions, feel free to comment below!

by Contributed | May 1, 2024 | Technology

Get ready for the pinnacle of startup innovation as the Imagine Cup World Championship unfolds live at Microsoft Build on May 21! Three outstanding startups from across the globe are poised to showcase their AI solutions on the global stage, vying for the coveted title and a chance to win USD 100,000 and a mentorship session with Microsoft Chairman and CEO, Satya Nadella.

Since the start of the 2024 season back in October, the competition has been a journey of collaboration with expert mentors and growth for participating startups. From a pool of tens of thousands of applications, the field was narrowed to the elite semifinalists, and now, only three world finalists remain.

As the anticipation mounts for the grand finale, our esteemed panel of judges faces a daunting task. Drawing on their industry expertise and personal insights, they will meticulously evaluate each startup’s pitch and engage in Q&A sessions. Their evaluation criteria extend beyond mere innovation to encompass the responsible use of AI technology, accessibility for all users and the fundamental business viability of each startup.

The culmination of this journey promises to be nothing short of spectacular. Live on the global stage, the judges’ decision will be unveiled, determining the ultimate champion of the 2024 Imagine Cup!

But who are the discerning minds tasked with determining the 2024 World Champion?

Let’s meet the judges!

Ali Partovi

CEO, Neo; Co-founder of Code.org

Ali Partovi heads Neo, a startup accelerator, diverse mentorship community, and VC fund that helps tomorrow’s tech leaders maximize their potential. Ali invests in people smarter than himself and has backed Airbnb, Dropbox, Facebook, & Uber.

He grew up in Tehran during the Iran-Iraq war, attended Harvard, and sold his first startup, LinkExchange, in 1998. He co-founded Code.org (#HourOfCode) to bring Computer Science to classrooms. He’s passionate about education and loves climbing, guitar, puzzles, and family.

Annie Pearl

Microsoft Corporate Vice President of Ecosystems

As Microsoft Corporate Vice President of Ecosystems, Annie Pearl leads a globally-distributed organization that empowers current and future customers to discover and engage with AI capabilities on the Microsoft Cloud. Teams under her oversight develop and build on platforms, such as Founders Hub and Microsoft Learn, to reach new audiences, skill them on Microsoft’s technology, and help them build the most innovative and AI-driven solutions.

Annie joins Microsoft with 15+ years of tech leadership experience in both startup ventures and established enterprises. She served as the Chief Product Officer at Calendly, a premier scheduling automation platform. There, she led the end-to-end strategy and execution of the product vision and roadmap. Under her guidance, Calendly achieved remarkable growth, solidifying its position as the leading scheduling automation tool in the market.

Before her tenure at Calendly, Annie held the role of Chief Product Officer at Glassdoor, where she shaped the product vision and user experience for millions of job seekers and employers worldwide. Earlier in her career, she led Enterprise product teams at Box, contributing to its trajectory both before and after its 2015 IPO. Notably, Annie also played a pivotal role as the VP of Product and a founding team member at Xpert Financial, an early-stage financial services startup.

Annie started her career as a Lawyer and held roles in management consulting before transitioning to the tech industry.

Elnaz Sarraf

Founder & CEO ROYBI (Roybi Robot & RoybiVerse)

Elnaz is a successful entrepreneur and CEO, renowned for her innovations in the field of EdTech, AI, and Robotics. She is the founder of ROYBI® Robot, an AI-powered smart toy that teaches children language and STEM skills. This groundbreaking product has won several prestigious awards, including being named one of TIME Magazine’s Best Inventions in Education and winning the World Economic Forum smart toy award.

With over 15 years of experience as a serial entrepreneur, Elnaz has established herself as a leader in the industry. As the CEO of ROYBI, an investor-backed EdTech company, she has raised millions in funding to focus on early childhood education and self-guided learning through artificial intelligence.

Elnaz’s journey to success has been shaped by her early experiences growing up as a woman in Iran, where opportunities were limited. However, her drive and passion for entrepreneurship led her to the U.S., where she has significantly contributed to the tech industry. Her achievements include being selected as Inc. Top 100 Female Founders, Nasdaq Entrepreneurial Center Milestone Maker, named the Woman of Influence by Silicon Valley Business Journal, and Entrepreneur of The Year in Silicon Valley.

_________

Whether you’re a tech enthusiast, aspiring entrepreneur, or simply someone who loves to witness the inspiring passion and innovation of students – this is an event you won’t want to miss! Gain insights into cutting-edge use cases of AI technology and discover how these startups are shaping the future to make a real impact on the world.

Tune in, cheer for your favorites, follow along, and get inspired by the ingenuity of these student founders.

Mark your calendars for May 21 to witness this moment!

by Contributed | Apr 30, 2024 | Technology

Introduction

When migrating from Oracle to SQL Server, Azure SQL Database or Azure SQL Managed Instance, an application using the Microsoft JDBC Driver for SQL Server is often used to avoid re-writing the application. However, after migration it’s often discovered that performance is not the same as it was when the data was on Oracle. Optimizations are necessary for query tuning due to the distinct behaviors of the two database engines.

An unnoticed yet significant issue arises from implicit conversions due to JDBC driver settings, leading to performance degradation. This blog seeks to highlight this easily overlooked problem, offering solutions to ensure optimal performance with SQL backend while preserving the JDBC application.

How to Detect Implicit Conversion

Obtain execution plans for your most CPU-intensive queries by enabling the query store. Be aware that implicit conversions might be happening in smaller queries with high execution counts, even if they don’t individually consume significant resources. An easy way to identify the type conversion is given in this blog.

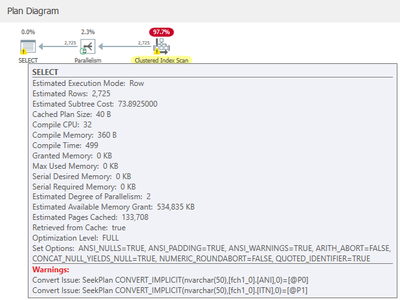

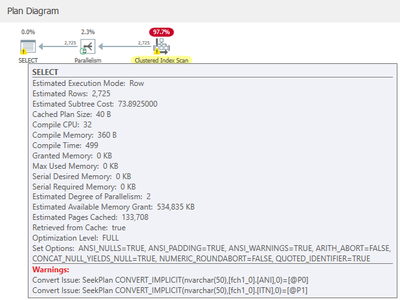

Looking at the execution plan you would see something like this:

While if you look at the statement text, you will see that the JDBC Driver presents the statement like this, which looks innocuous:

(@P0 nvarchar(4000),@P1 nvarchar(4000))select col1, col2 from table1 where col1 = @P0 ….

Nevertheless, implicit conversion will result in queries consuming more CPU resources than anticipated, hindering the scalability of your application. Implicit conversion occurs when the data types of the SQL Server columns differ from the parameter data types presented by the JDBC driver. Typically, SQL columns are configured as varchar(x) to conserve space compared to nvarchar(x), while the JDBC driver defaults to transmitting strings as Unicode.
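When you have many plans to review, a small helper can flag the ones that contain a conversion. The sketch below assumes you have already exported the plan text (for example the showplan XML from Query Store) into a string, and that the conversion shows up in the usual `CONVERT_IMPLICIT(type,column,style)` form. The class and method names are made up for this illustration, and the simple pattern does not handle target types that contain commas, such as decimal(10,2).

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class ImplicitConversionScan {
    // Matches text such as CONVERT_IMPLICIT(nvarchar(50),[dbo].[table1].[col1],0).
    // Assumption: the target type contains no comma (true for varchar/nvarchar).
    private static final Pattern CONVERT_IMPLICIT =
            Pattern.compile("CONVERT_IMPLICIT\\(([^,]+),([^,]+),");

    /** Returns one "column -> target type" entry per implicit conversion found. */
    static List<String> findImplicitConversions(String planText) {
        List<String> hits = new ArrayList<>();
        Matcher m = CONVERT_IMPLICIT.matcher(planText);
        while (m.find()) {
            hits.add(m.group(2).trim() + " -> " + m.group(1).trim());
        }
        return hits;
    }

    public static void main(String[] args) {
        // Hypothetical fragment of a plan; table and column names are placeholders.
        String plan = "<ScalarOperator ScalarString=\""
                + "CONVERT_IMPLICIT(nvarchar(50),[dbo].[table1].[col1],0)=[@P0]\"/>";
        findImplicitConversions(plan).forEach(System.out::println);
    }
}
```

A varchar column reported as being converted to nvarchar is the pattern described above: the column, not the parameter, is being converted for every row compared.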

Preventing Implicit Conversion with JDBC Driver

You have two options to choose from based on ease of implementation:

- Change the underlying SQL Server column types to align with the parameter data type. However, this may not be ideal, as nvarchar occupies more space and altering SQL column types entails significant design changes.

- For applications using JDBC, use the driver connection property “sendStringParametersAsUnicode.” This setting determines whether strings are sent to SQL as Unicode parameters or not. It’s the recommended option. If the SQL column data types involved in the implicit conversion are varchar, set the value to false.

Once this is implemented, check the query plan again. If the value of the “sendStringParametersAsUnicode” setting is false, the parameters presented by the driver will show up as follows:

(@P0 varchar(4000),@P1 varchar(4000))select col1, col2 from table1 where col1 = @P0 ….

As the underlying SQL column types are also varchar, there is no implicit conversion, leading to improved performance and reduced CPU usage!
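As a minimal sketch of what this looks like in an application, the property can be appended to the JDBC connection URL. The host, port and database name below are placeholders, and the helper class is hypothetical; only the `sendStringParametersAsUnicode` property name comes from the driver.

```java
final class VarcharFriendlyUrl {
    /** Builds a SQL Server JDBC URL that sends String parameters as non-Unicode. */
    static String buildUrl(String host, int port, String database) {
        return "jdbc:sqlserver://" + host + ":" + port
                + ";databaseName=" + database
                + ";sendStringParametersAsUnicode=false";
    }

    public static void main(String[] args) {
        // Placeholder server and database names, for illustration only.
        String url = buildUrl("myserver.example.com", 1433, "mydb");
        System.out.println(url);
        // With the Microsoft JDBC driver on the classpath, this URL would be passed to
        // java.sql.DriverManager.getConnection(url, user, password) as usual.
    }
}
```

Because the setting applies to the whole connection, it is worth checking whether any columns the application writes to are nvarchar and hold non-ASCII data before switching it off everywhere.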

You can find a list of all the JDBC driver settings here.

Feedback and suggestions

If you have feedback or suggestions for improving this data migration asset, please send an email to Databases SQL Engineering Team.

by Contributed | Apr 29, 2024 | Technology

Whether you are running a startup or an already thriving small business, harnessing AI-driven solutions will help you discover new opportunities, streamline operations, and make data-driven decisions with confidence. Understanding and exploring the possibilities of AI is essential for small businesses and key to unlocking growth, driving innovation, and maintaining a competitive edge.

The first step is understanding the potential of AI for your business. Microsoft has developed several online resources to help. In recognition of National Small Business Week, we have curated a list of those resources that may be helpful for small business professionals who want to get started with AI.

Establish an AI foundation

Start your AI journey by visiting the Microsoft WorkLab and examining a rich collection of content that addresses the real-world scenarios of how AI is impacting work today. New articles are regularly added that will help you understand not just AI’s high-level capabilities, but also the nuances of AI and how to directly apply AI to your day-to-day work. Key resources include:

Build your AI skills

When you’re ready to build deeper AI skills, explore the Microsoft AI Learning Hub. You’ll find a variety of tools to help you go from understanding AI to preparing for it. You can learn the mechanics of using the technology and even how to build it into your own apps and services.

Start with the learning journey for Business Users, which is foundational for getting an underlying understanding of AI, and then move into a more detailed guidance on how to use and implement its capabilities. If you’re an IT professional, look at the learning journey for IT Professionals, which provides a thorough grounding on the particulars of AI adoption, deployment, and small business concerns, like data classification and regulatory considerations.

To define your own path, get skilling recommendations based on your job responsibilities and objectives. No matter where you want to go, you can use the AI learning assessment to define a customized learning journey to get you there.

Put AI to work

To put your AI skills into practice or if you’re already using Copilot for Microsoft 365, visit the Microsoft Copilot Lab. This site provides easy, visual introductions into what Copilot is and how it helps you do more no matter which Microsoft 365 app you are using. These tools are designed for professionals that need a fast, tactical grounding so they can benefit from AI every day.

One example is the prompt writing guide, which explains how to write effective prompts so Copilot can deliver exactly what you need. This toolkit teaches the art and science of prompting. It walks through a series of easy initial prompting exercises like writing an AI-powered email or creating an image, so you’ll understand how to edit a prompt to tailor it to your scenario.

Microsoft Learn has a series of freely available, advanced courses to help you gain a deeper understanding of Copilot, how it works with the apps in Microsoft 365 and best practices for everyday use.

Get started

National Small Business Week may be an annual event, but you can build your AI skills year-round. Join the Microsoft SMB Tech Community to network with other professionals using Copilot. You can come here anytime to ask questions, get help, keep up with the latest AI news specific to small and medium-sized businesses and find out about upcoming online or local events.

by Contributed | Apr 26, 2024 | Technology

The domain name code.microsoft.com has an interesting story behind it. Today it’s not linked to anything but that wasn’t always true. This is the story of one of my most successful honeypot instances and how it enabled Microsoft to collect varied threat intelligence against a broad range of actor groups targeting Microsoft. I’m writing this now as we’ve decided to retire this capability.

In the past the domain ‘code.microsoft.com’ was an early domain used to host Visual Studio Code and some helpful documentation. The domain was active until around 2021, when this documentation was moved to a new home. The site behind the domain was an Azure AppService site that performed the redirection thus preventing existing links from being broken.

Sometime around mid-2021 the existing Azure AppService instance was shut down, leaving code.microsoft.com pointing to a service that no longer existed. This created a vulnerability.

This situation is what’s called a dangling subdomain: a subdomain that once pointed to a valid resource but now hangs in limbo. Imagine a subdomain like blog.somedomain.com that used to serve a blog application. When the underlying service (the blog engine) is deleted, you might update your page links and assume the service has been retired. However, the subdomain that pointed to the blog engine still exists; it is now “dangling” and can’t be resolved.

A malicious actor can discover the dangling subdomain and provision an Azure cloud resource with the same name. Visiting blog.somedomain.com will now redirect to the attacker’s resource, where they control the content.
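To make the idea concrete, here is a rough sketch of the kind of audit that catches this: compare the DNS records you publish against the cloud resources that still exist. Everything in it is an assumption for illustration, including the record names, the resource names and the idea that both lists are already available as in-memory collections. It is not a description of the tooling Microsoft uses.

```java
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.stream.Collectors;

final class DanglingSubdomainAudit {
    /**
     * Returns the subdomains whose CNAME target is not among the resources
     * that are still provisioned, sorted alphabetically.
     */
    static List<String> findDangling(Map<String, String> cnameRecords, Set<String> liveTargets) {
        return cnameRecords.entrySet().stream()
                .filter(e -> !liveTargets.contains(e.getValue()))
                .map(Map.Entry::getKey)
                .sorted()
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // Hypothetical DNS zone: subdomain -> CNAME target.
        Map<String, String> records = Map.of(
                "blog.somedomain.com", "old-blog.azurewebsites.net",
                "www.somedomain.com", "main-site.azurewebsites.net");
        // Hypothetical inventory of resources that still exist.
        Set<String> live = Set.of("main-site.azurewebsites.net");
        System.out.println(findDangling(records, live)); // [blog.somedomain.com]
    }
}
```

Any subdomain this reports should either have its DNS record removed or be pointed at a resource you control before someone else claims the old name.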

This happened in 2021 when the domain was temporarily used to host a malware C2 service. Thanks to multiple reports from our great community this was quickly spotted and taken down before it could be used. As a response to this Microsoft now has more robust tools in place to catch similar threats.

How did it become a honeypot?

Today it is relatively routine that MSTIC takes control of attacker-controlled resources and repurposes these for threat intelligence collection. Taking control of a malware C2 environment for example enables us to potentially discover new infected nodes.

At the time of the dangling code subdomain this process was relatively new. We wanted a good test case to show the value of taking over resources rather than taking them down. So instead of removing the dangling subdomain we pointed it to a node in our existing vast honeypot sensor network.

A honeypot is a decoy system designed to attract and monitor malicious activity. Honeypots can be used to collect information about the attackers, their tools, their techniques, and their intentions. Honeypots can also be used to divert the attackers from the real targets and to waste their time and resources.

Microsoft’s honeypot sensor network has been in development since 2018. It’s used to collect information on emerging threats to both our and our customers’ environments. The data we collect helps us be better informed when a new vulnerability is disclosed and gives us retrospective information on how, when and where exploits are deployed.

This data is enriched with other tools Microsoft has available, turning it from a source of raw threat data into threat intelligence. This is incorporated into a variety of our security products. Customers can also get access to this via Sentinel’s emerging threat feed.

The honeypot itself is a custom designed framework written in C#. It enables security researchers to quickly deploy anything from a single HTTP exploit handler in one or two lines of code all the way up to complex protocols like SSH and VNC. For even more complex protocols we can hand off to real systems when we detect exploit traffic and revert these shortly after.

It is our mission to deny threat actors access to resources and to prevent them from using our infrastructure to create further victims. That’s why in almost all scenarios the attacker is playing in a high-interaction, simulated environment. No code is run; everything is a trick or deception designed to get them to reveal their intentions to us.

Substantial engineering has gone into our simulation framework. Today over 300 vulnerabilities can be triggered through the same exploit proof-of-concepts available in places like GitHub and exploitdb. Threat actors can communicate with over 30 different protocols and can even ‘log in’, deploy scripts and execute payloads that look like they are operating on a real system. There is no real system and almost everything is being simulated.

Even so, when standing up a honeypot on an important domain like microsoft.com, it was important that attackers could not use it as an environment to perform other web attacks, such as attacks that rely on same-origin trust. To mitigate this further we added the sandbox policy to the pages, which prevents these kinds of attacks.
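For readers who have not used it, one common way to apply a sandbox policy to a page is the `sandbox` directive of the Content-Security-Policy response header. That is an assumption about what is meant here, since the exact mechanism is not spelled out. The sketch below uses the JDK's built-in HTTP server to return a decoy page with that header; it is not the C# honeypot framework, and the class name, port and page body are invented for the example.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;

final class SandboxedDecoyServer {
    /** Headers attached to every decoy response. */
    static Map<String, String> decoyHeaders() {
        Map<String, String> headers = new LinkedHashMap<>();
        // A bare "sandbox" value gives the page an opaque origin and disables scripts,
        // so content served here cannot borrow the trust of the parent domain.
        headers.put("Content-Security-Policy", "sandbox");
        headers.put("Content-Type", "text/html; charset=utf-8");
        return headers;
    }

    /** Starts a local server that answers every path with the sandboxed decoy page. */
    static HttpServer start(int port) throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress("127.0.0.1", port), 0);
        server.createContext("/", exchange -> {
            byte[] body = "<html><body>decoy</body></html>".getBytes(StandardCharsets.UTF_8);
            decoyHeaders().forEach((name, value) -> exchange.getResponseHeaders().set(name, value));
            exchange.sendResponseHeaders(200, body.length);
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(body);
            }
        });
        server.start();
        return server;
    }

    public static void main(String[] args) throws IOException {
        HttpServer server = start(8080); // port chosen arbitrarily for the example
        System.out.println("Decoy listening on " + server.getAddress());
    }
}
```

With the header in place, attacker-supplied HTML rendered from the honeypot is treated by the browser as coming from a unique origin rather than from the hosting domain.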

What have we learnt from the honeypot?

Our sensor network has contributed to many successes over the years. We’ve presented on these at computer security conferences in the past as well as shared our data with academia and the community. We incorporate this data into our security products to enable them to be aware of the latest threats.

In recent years this capability has been crucial to understanding the 0day and nDay ecosystem. During the Log4Shell incident we were able to use our sensor network to track each iteration of the underlying vulnerability and associated proof-of-concept all the way back to GitHub. This helped us understand the groups involved in productionising the exploit and where it was being targeted. Our data enables internal teams to be much better prepared to remediate and provides the analysis for detection authors to improve products like MDE in real time.

The team developing this capability also works closely with the MSRC, who track our own security issues. When the Exchange ProxyLogon vulnerability was announced we had already written a full exploit handler in our environment to track and understand not just the exploit but the groups deploying it. This situational awareness enables us to give clearer advice to industry, better protect our customers and integrate new threats we were seeing into Windows Defender and MDE.

The domain code.microsoft.com was often critical to the success of this as well as a useful early warning system. When new vulnerabilities are announced, threat actors are often too consumed with trying to use the vulnerability as quickly as possible to check for deception infrastructure like a honeypot. As a result, code.microsoft.com often saw exploits first, and many of these exploits were attributed to threat actors MSTIC already tracks.

What happened next?

The code subdomain had been known to bug bounty researchers for several years. Reports received for this domain were closed with a note letting the researchers know they had found a honeypot.

In the past we’ve asked these security professionals to refrain from publishing the details of this service in an effort to protect the value we received from it. We’ve also understood for a while that this subdomain would need to be retired once it became well known what was behind it.

That time is now.

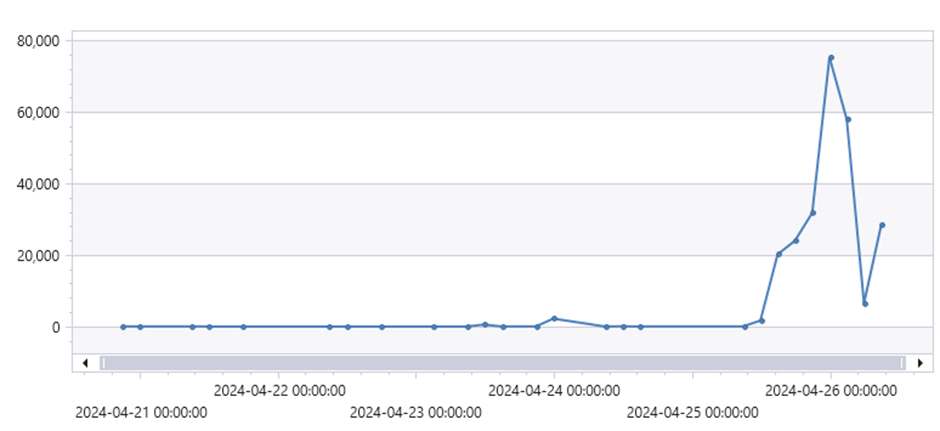

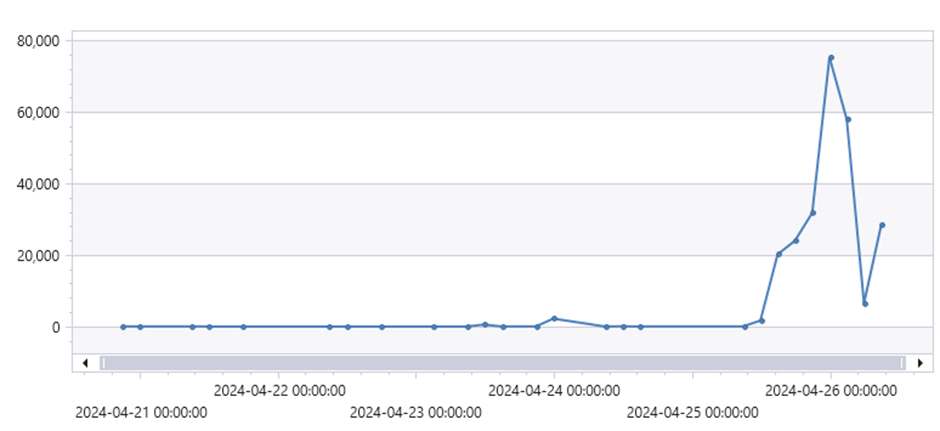

On the 25th April an uptick in traffic to the subdomain and posts on Twitter showed that the domain was being investigated by a broad group of individuals. We don’t want to waste the effort researchers put into finding issues with our production systems, so it was decided that the truth would finally be revealed and the system retired.

The timeline below gives the order of events from our perspective. It’s unknown exactly how the full exploit URL for our server ended up in Google’s search database, but it looks like this and the associated discovery on Twitter/X culminated in almost 80k WeChat exploits in a 3-hour period. It’s unlikely the Google crawler would have naturally found the URL. Our current theory is that a security researcher found this and submitted a report to Microsoft. As part of this process either the Chrome browser or another app found this URL and submitted it for indexing.

| Date | Event |
| --- | --- |
| March 2024 | WeChat exploit appears in Google search results for the first time |
| 15th April 2024 | Sumit Jain posts a redacted screenshot of an exploit mitigation; some debate occurs about whether the domain is the code subdomain. |
| 21st April 2024 | Google Trends shows that many people are now searching for this domain |
| 24th April 2024 | We start to notice a significant uptick in traffic to the subdomain |
| 26th April 2024 | We are handling 126 thousand times more requests than average |

By 26th April we were handling ~160k requests per day, up from the usual 5-100. Most of these requests were to a single endpoint handling a vulnerability in the WeChat Broadcast plugin for WordPress (CVE-2018-16283). This enabled anyone to ‘run’ a command from a parameter in the URI.

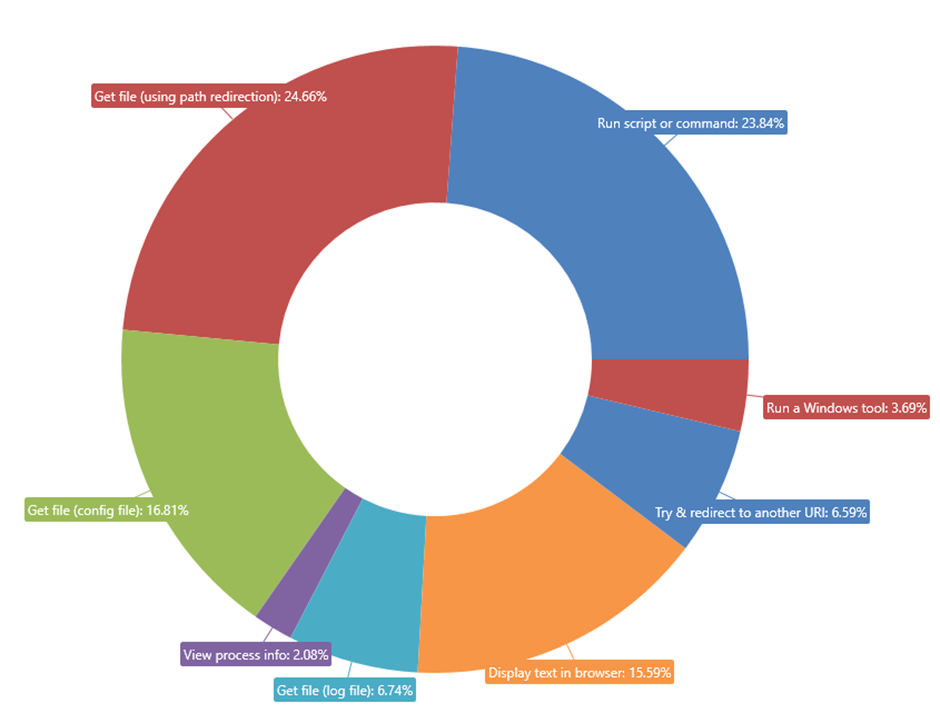

Looking at these URIs we found 11k different commands being run. Most of these pushed a message by some group or another stating that the site had been hacked by them. As this was a simulation, this did not happen.

Removing these messages gives a clearer picture of the kinds of commands people were entering.

Most commands entered were Linux recon commands. These attempted to find out what the system was, what files it contained and more broadly what value it was to Microsoft. The next biggest group was people running commands: these ranged from basic Linux commands like ‘whoami’ to scripts in various languages, which a few enterprising folks went on to run.

Most people who interacted didn’t get further than the WeChat exploit. Over the three days that infosec Twitter took interest, 63 different exploits in total were triggered. The biggest surprise to me was that most researchers stuck to HTTP; only three groups probed the other ports and even fewer logged into the other services that were available.
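The grouping described above can be sketched roughly as follows. This is an illustration, not the analysis pipeline actually used: the query parameter name `cmd`, the example URL, the keyword lists and the “hacked by” marker are all assumptions chosen to mirror the categories in the text.

```java
import java.net.URI;
import java.net.URLDecoder;
import java.nio.charset.StandardCharsets;
import java.util.Locale;
import java.util.Set;

final class CommandTriage {
    enum Category { DEFACEMENT, RECON, EXECUTION, OTHER }

    // Hypothetical parameter name and keyword lists, for illustration only.
    private static final String PARAM = "cmd";
    private static final Set<String> RECON = Set.of("uname", "ls", "cat", "find", "hostname", "pwd");
    private static final Set<String> EXECUTION = Set.of("whoami", "echo", "sh", "bash", "python", "perl", "php");

    /** Pulls the decoded command out of the request URI, or "" if it is absent. */
    static String extractCommand(String uri) {
        String query = URI.create(uri).getRawQuery();
        if (query == null) {
            return "";
        }
        for (String pair : query.split("&")) {
            String[] kv = pair.split("=", 2);
            if (kv.length == 2 && kv[0].equals(PARAM)) {
                return URLDecoder.decode(kv[1], StandardCharsets.UTF_8);
            }
        }
        return "";
    }

    /** Buckets a command by its first word, checking for defacement messages first. */
    static Category classify(String command) {
        String trimmed = command.trim();
        if (trimmed.isEmpty()) {
            return Category.OTHER;
        }
        if (trimmed.toLowerCase(Locale.ROOT).contains("hacked by")) {
            return Category.DEFACEMENT;
        }
        String first = trimmed.split("\\s+")[0];
        if (RECON.contains(first)) {
            return Category.RECON;
        }
        if (EXECUTION.contains(first)) {
            return Category.EXECUTION;
        }
        return Category.OTHER;
    }

    public static void main(String[] args) {
        String uri = "http://decoy.example.test/plugin?cmd=uname%20-a";
        String command = extractCommand(uri);
        System.out.println(command + " => " + classify(command));
    }
}
```

Checking for defacement text before looking at the first word matters, because most of those messages were themselves delivered through commands such as echo.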

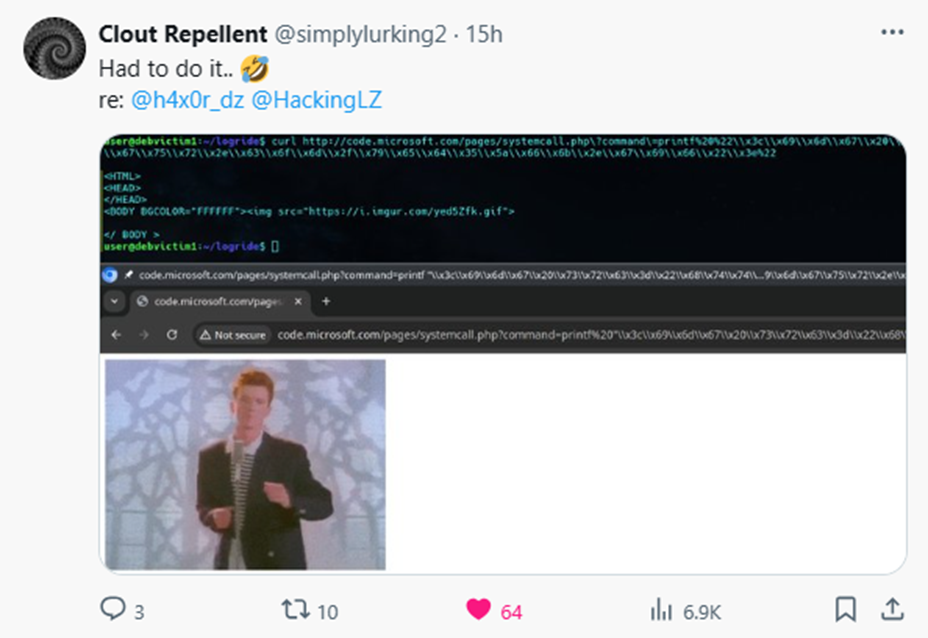

Some of the best investigation came from @simplylurking2 on Twitter/X who, after finding out the system was a honeypot, continued to analyse what we had in place, first constructing a rick roll and then a URL that when visited would display a message to right click and save a payload.

With such a lot of information now publicly available the usefulness of this subdomain has also diminished. On April 26th we replaced the site with a 404 message and are working on retiring the subdomain completely.

Our TI collection is undiminished; Microsoft runs many of these collection services across multiple datacentres. Our concept has been proved and we have rolled out similar capabilities at higher scales in many other locations worldwide. These continue to give us a detailed picture of emerging threats.
