Background and Overview

Azure Machine Learning (AML) natively supports deploying a model as a web service on Azure Kubernetes Service (AKS). According to the official AML documentation, deploying models to AKS offers the following benefits: fast response time, auto-scaling of the deployed service, logging, model data collection, authentication, TLS termination, and hardware acceleration options such as GPUs and field-programmable gate arrays (FPGAs). Please refer to the official documentation for directions on using the AML Python SDK, the Azure CLI, or Visual Studio Code to deploy models to AKS.

This blog article, as well as the accompanying GitHub repo, demonstrates an alternative option, which offers significant flexibility in model deployment. In particular, this solution template helps enable the following use cases:

- Enable multi-region deployment

- More flexibility in endpoint configuration and management

- Model-agnostic: one endpoint can invoke several models, provided the required environment is built beforehand. One environment can be reused across several models

- Controlled rollout of model inference deployment

- Enable higher automation across various AML workspaces for CI/CD purposes

- The solution can be customized to retrieve models directly from Azure Storage, without invoking the AML workspace at all, providing further flexibility

- The solution can be modified to include use cases beyond model inferencing. Data engineering via AKS endpoint without any specified model is also possible.

Contributors:

Han Zhang (Microsoft Data & AI Cloud Solution Architect)

Ganesh Radhakrishnan (Microsoft Senior App & Infra Cloud Solution Architect)

Prerequisites

Before you proceed, please complete the following prerequisites:

- Review and complete all modules in the Azure Fundamentals course.

- An Azure Resource Group with Owner Role permission. All Azure resources will be deployed into this resource group.

- A GitHub Account to fork and clone this GitHub repository.

- An Azure DevOps Services (formerly Visual Studio Team Services) Account. You can get a free Azure DevOps account by accessing the Azure DevOps Services web page.

- An Azure Machine Learning workspace. AML is an enterprise-grade machine learning service to build and deploy models faster. In this project, you will use AML to register and retrieve models.

- This project assumes readers/attendees are familiar with Azure Machine Learning, Git SCM, Linux containers (Docker engine), Kubernetes, DevOps (Continuous Integration/Continuous Deployment) concepts, and developing microservices in one or more programming languages. If you are new to any of these technologies, go through the resources below.

- Build AI solutions with Azure Machine Learning

- Introduction to Git SCM

- Git SCM Docs

- Docker Overview

- Kubernetes Overview

- Introduction to Azure DevOps Services

- (Optional) Download and install the Postman app, a REST API client used for testing the Web APIs.

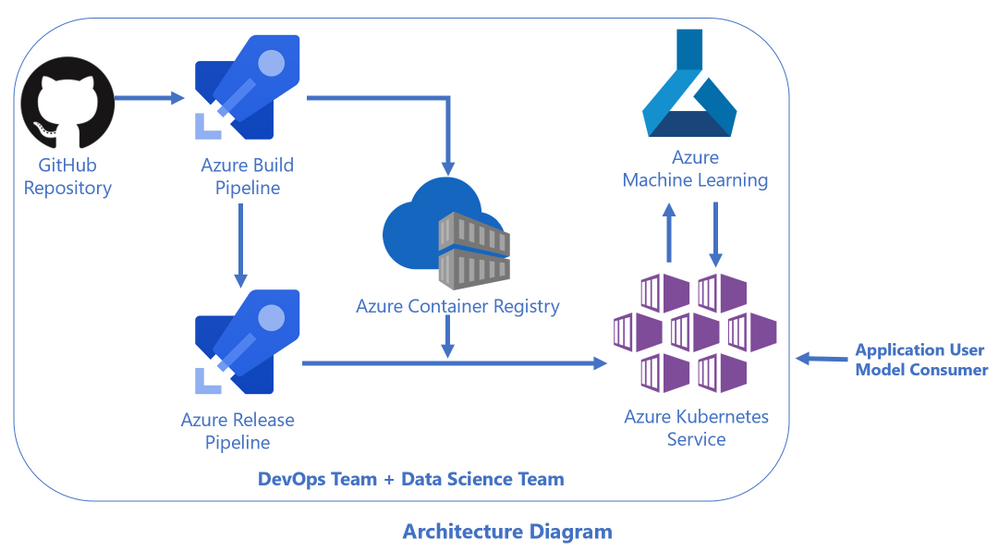

Architecture Diagram

Here is the architecture diagram for this solution template:

For easy and quick reference, readers can refer to the following online resources as needed.

- Docker Documentation

- Kubernetes Documentation

- Helm 3.x Documentation

- Azure Kubernetes Service (AKS) Documentation

- Azure Container Registry Documentation

- Azure DevOps Documentation

- Azure Machine Learning Documentation

Step by Step Instructions

Set up Azure DevOps Project

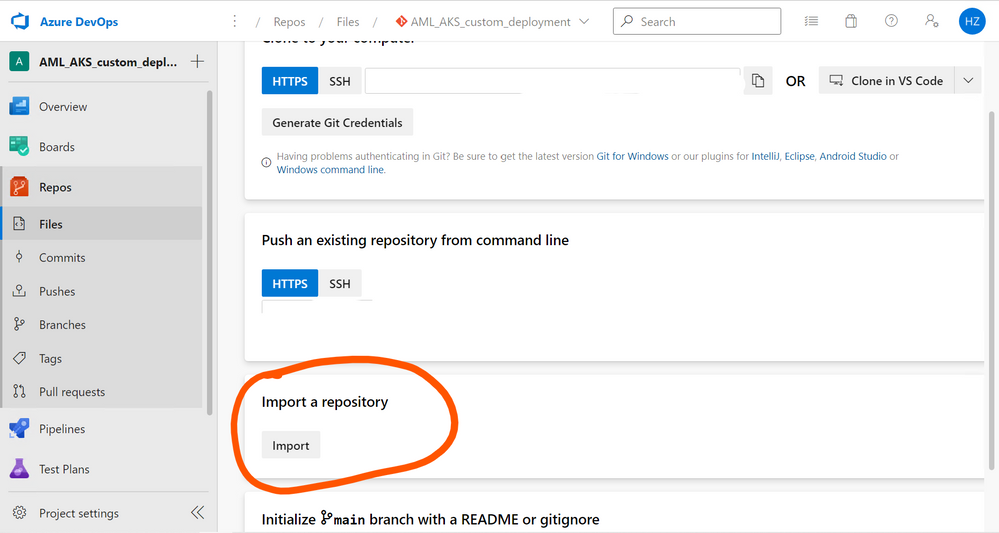

- Go to the Azure DevOps website and set up a project named AML_AKS_custom_deployment (substitute any name you see fit).

Set up Project

- Go to Repos on the left side, and find Import under Import a repository

Use https://github.com/HZ-MS-CSA/aml_aks_generic_model_deployment as clone URL

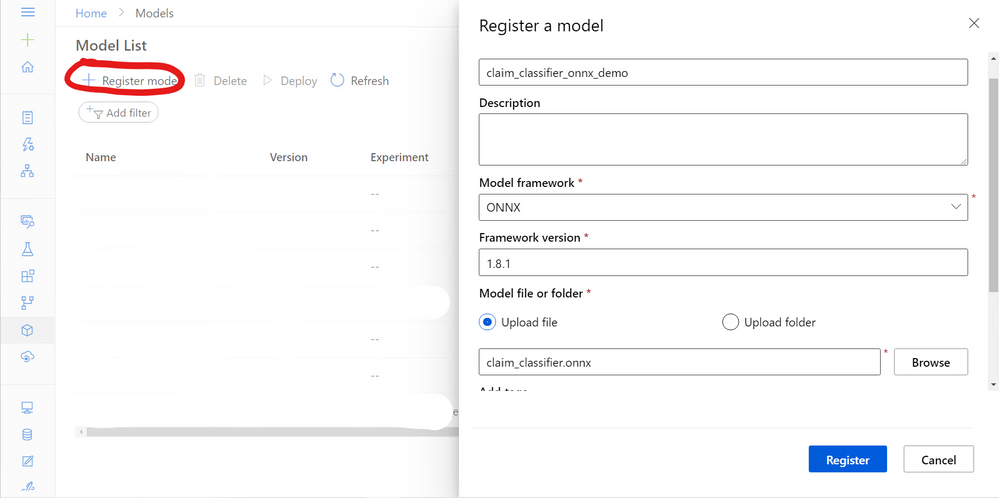

Upload AML Model

As a demonstration, we will use an ONNX model from a Microsoft Cloud Workshop (MCW) activity.

- “This is a classification model for claim text that will predict 1 if the claim is an auto insurance claim or 0 if it is a home insurance claim. The model will be built using a type of Deep Neural Network (DNN) called the Long Short-Term Memory (LSTM) recurrent neural network using TensorFlow via the Keras library.” Source here.

- For step-by-step guidance on how to create and train this model, please see the MCW workshop here.

- For your convenience, you can find the ONNX model under sample_model/claim_classifer.zip

- Download and unzip the file, and upload the ONNX model to the Azure ML workspace

Modify Azure DevOps Repo Content

There are two files that need to be modified to accommodate the ONNX model:

- ./main-generic.py: This is essentially a scoring entry script that calls the AML SDK, retrieves the model from the registry, and wraps it in a Flask API. The original main-generic.py is a template, and you can add any code needed to execute the model in this file. Please replace the content of this file with ./sample_model/main-generic.py (an example of how to customize this Python script)

- ./sample_model/main-generic.py is an adapted version of the original MCW-Cognitive services and deep learning Claim Classification Jupyter Notebook. Please see source here.

- ./project_env.yml: This specifies the dependencies required for the model to execute. Please replace the content of this file with ./sample_model/project_env.yml (An example of how to customize this yml file)
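To make the "one endpoint, several models" idea more concrete, here is a minimal sketch of the dispatch logic such a scoring script might contain. It is not the code from the repo: the payload fields (`model_name`, `text`), the `ModelCache` class, and the `demo_loader` stand-in are all hypothetical, and a real script would wrap `handle_score` in a Flask route and load the model through the AML SDK.

```python
from typing import Any, Callable, Dict

# Hypothetical type: a loaded model is anything callable on the request text.
Predictor = Callable[[str], Any]


class ModelCache:
    """Load each named model once and reuse it across requests."""

    def __init__(self, loader: Callable[[str], Predictor]) -> None:
        self._loader = loader  # in practice: fetch the model from the AML registry
        self._models: Dict[str, Predictor] = {}

    def get(self, model_name: str) -> Predictor:
        if model_name not in self._models:
            self._models[model_name] = self._loader(model_name)
        return self._models[model_name]


def handle_score(cache: ModelCache, payload: Dict[str, Any]) -> Dict[str, Any]:
    """Validate a request body and dispatch it to the requested model."""
    model_name = payload.get("model_name")
    text = payload.get("text")
    if not model_name or text is None:
        return {"status": 400, "error": "model_name and text are required"}
    try:
        predictor = cache.get(model_name)
    except KeyError:
        return {"status": 404, "error": f"unknown model: {model_name}"}
    return {"status": 200, "model_name": model_name, "prediction": predictor(text)}


def demo_loader(model_name: str) -> Predictor:
    """Stand-in loader (assumption); a real one would download the model from AML."""
    if model_name != "claim_classifier_onnx_demo":
        raise KeyError(model_name)
    # Toy rule in place of the ONNX model: 1 = auto claim, 0 = home claim.
    return lambda text: 1 if "car" in text.lower() else 0
```

Because the cache is keyed by model name, the same container image can serve any registered model whose dependencies are covered by project_env.yml.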

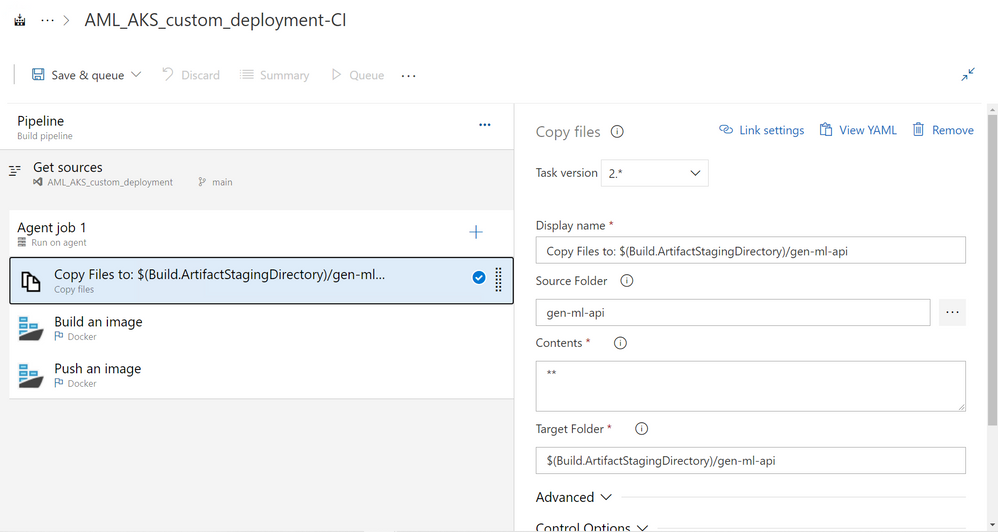

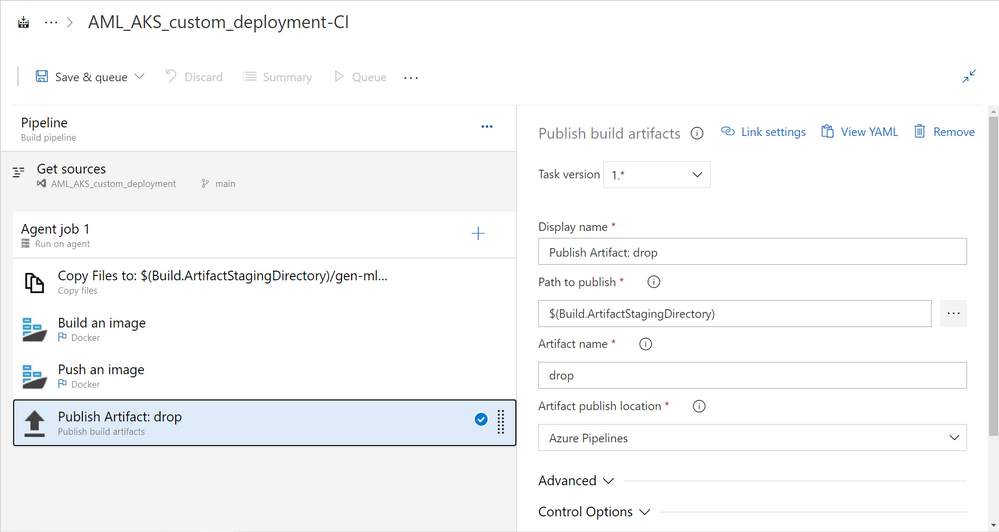

Set up Build Pipeline

- Create a pipeline by using the classic editor. Select your Azure Repos Git as the source. Then start with an empty job.

- Change the agent specification to ubuntu-18.04 (same for the release pipeline as well)

- Copy Files Activity: Configure the activity based on the screenshot below

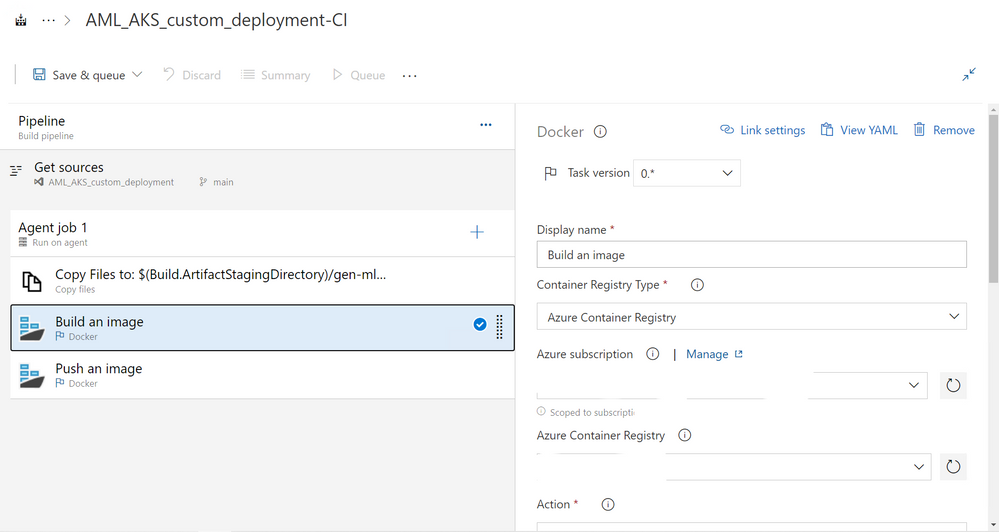

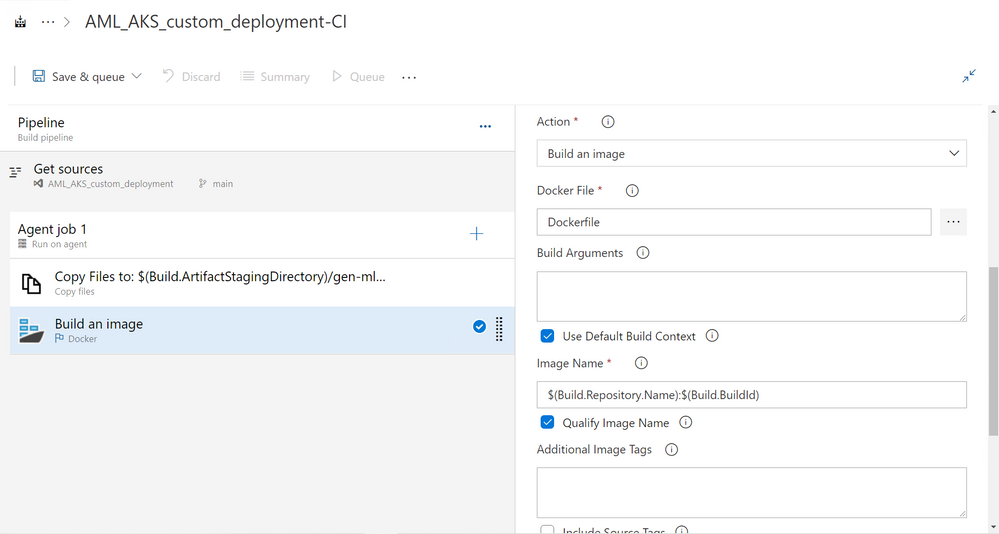

Docker-Build an Image: Configure the activity based on the notes and screenshot below

- Change task version to be 0.*

- Select an Azure container registry, and authorize Azure DevOps’s Azure connection

- In the “Docker File” section, select the Dockerfile in the Azure DevOps repo

- Leave everything else as default

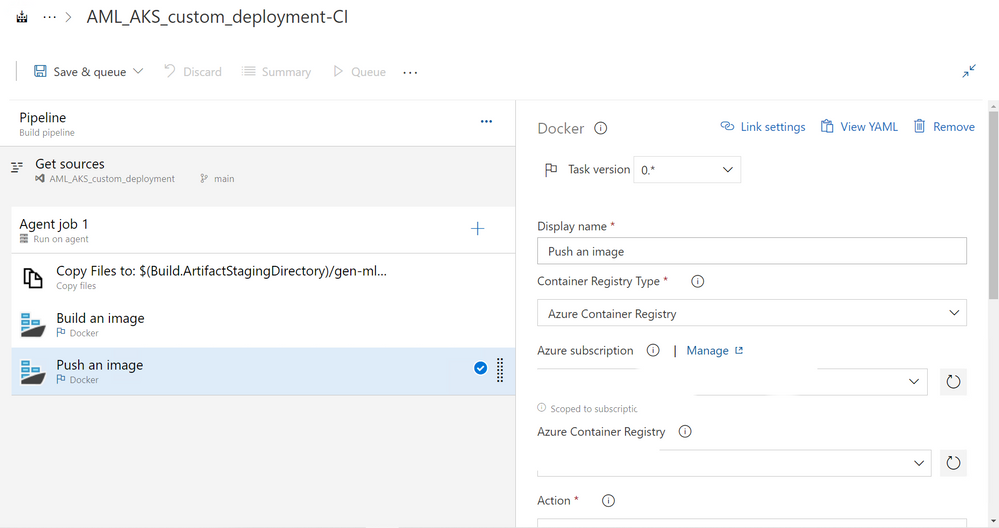

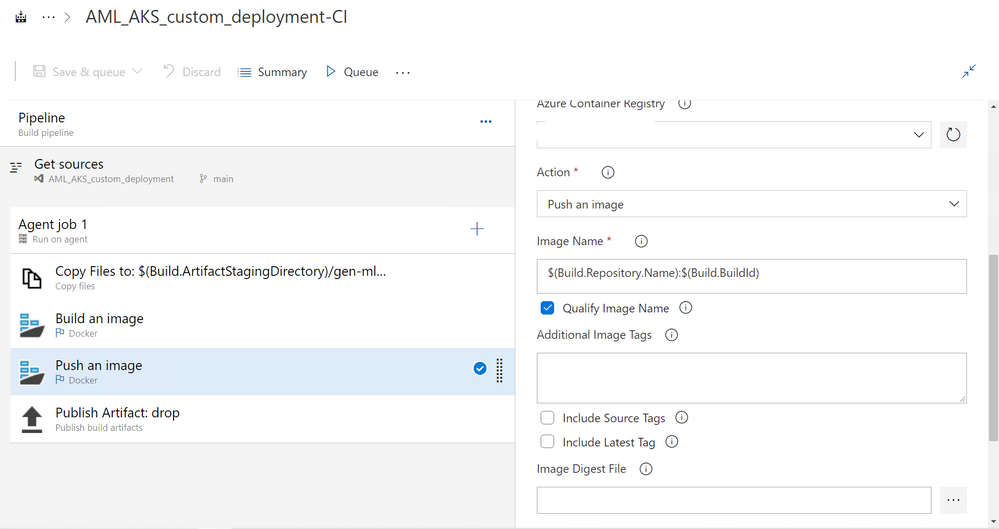

- Docker-Push an Image: Configure the activity based on the notes and screenshot below

- Change task version to be 0.*

- Select the same ACR as Build an Image step above

- Leave everything else as default

Publish Build Artifact: Leave everything as default

Save and queue the build pipeline.

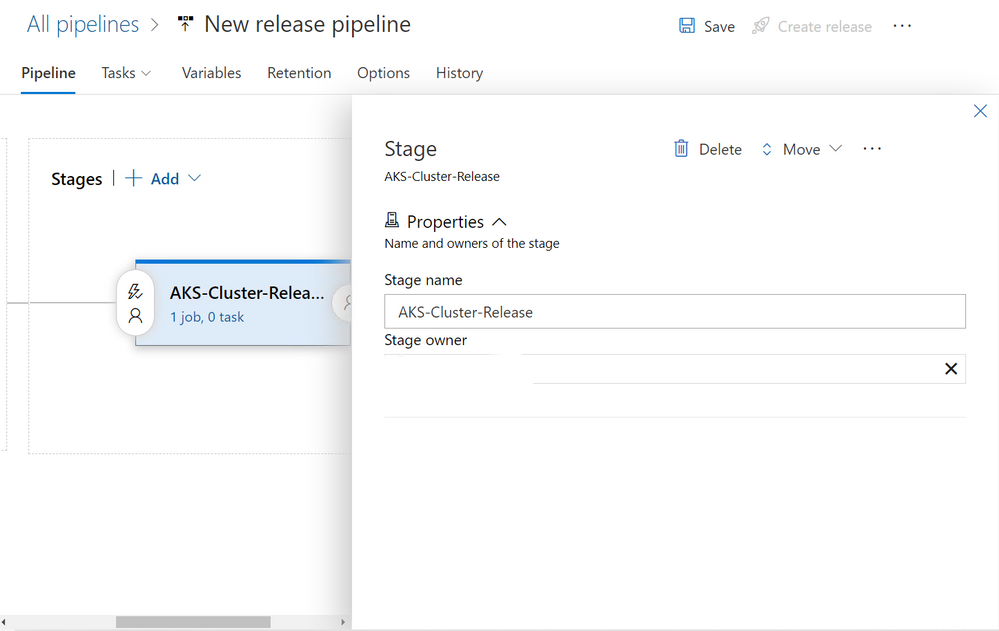

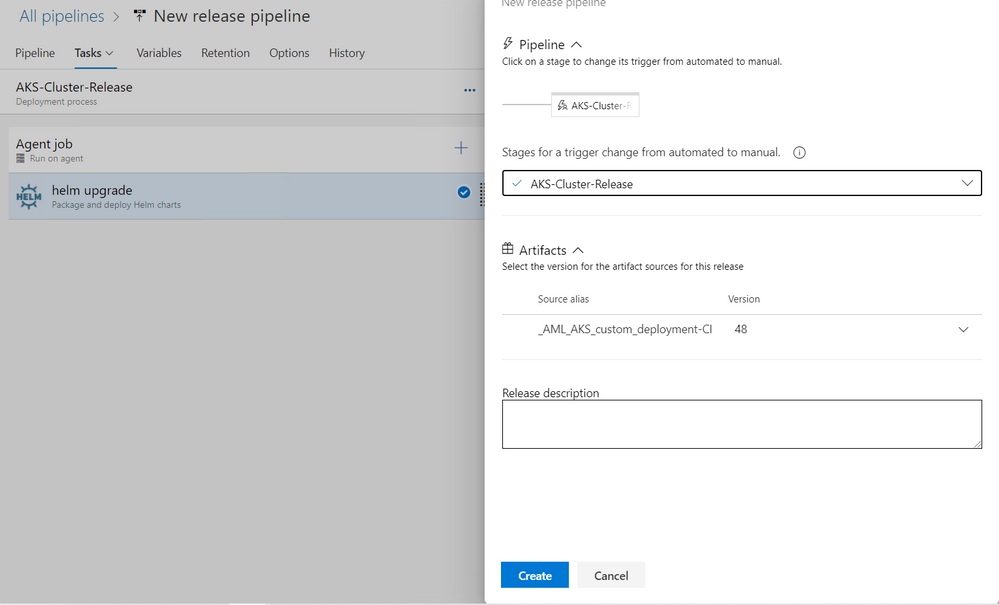

Set up Release Pipeline

Start with an empty job

Change Stage name to be AKS-Cluster-Release

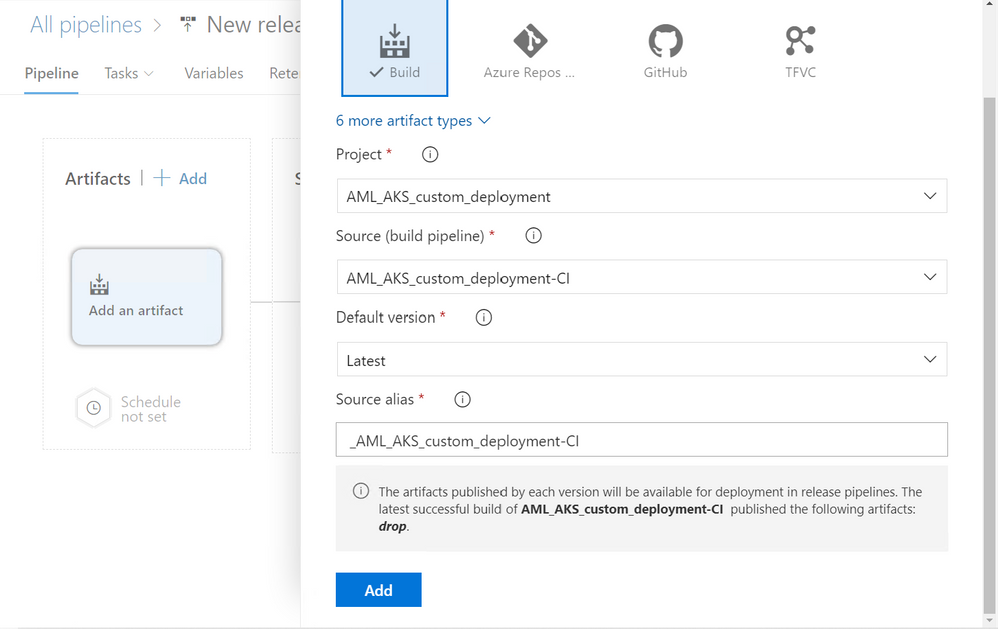

Add build artifact

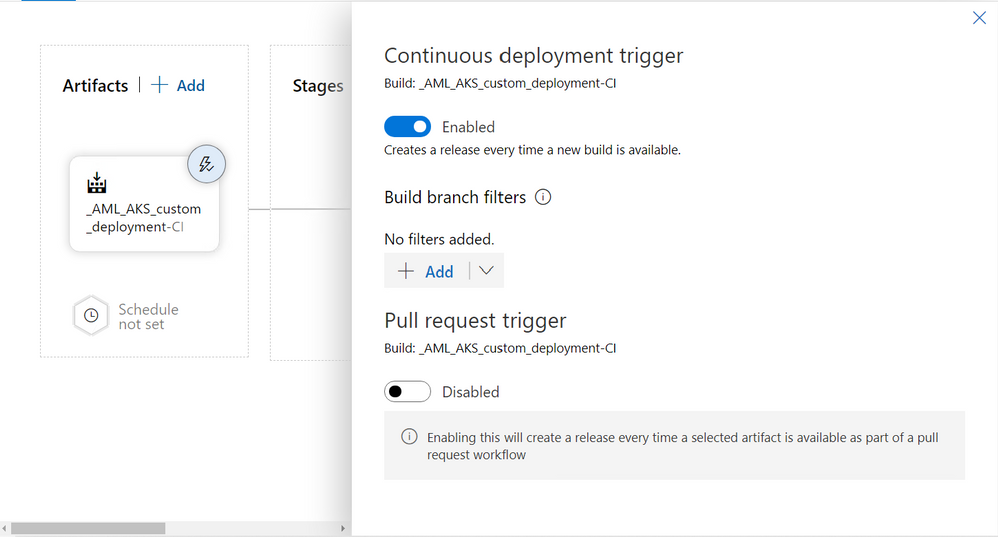

Set up the continuous deployment trigger: the release pipeline will be kicked off automatically every time a new build is available

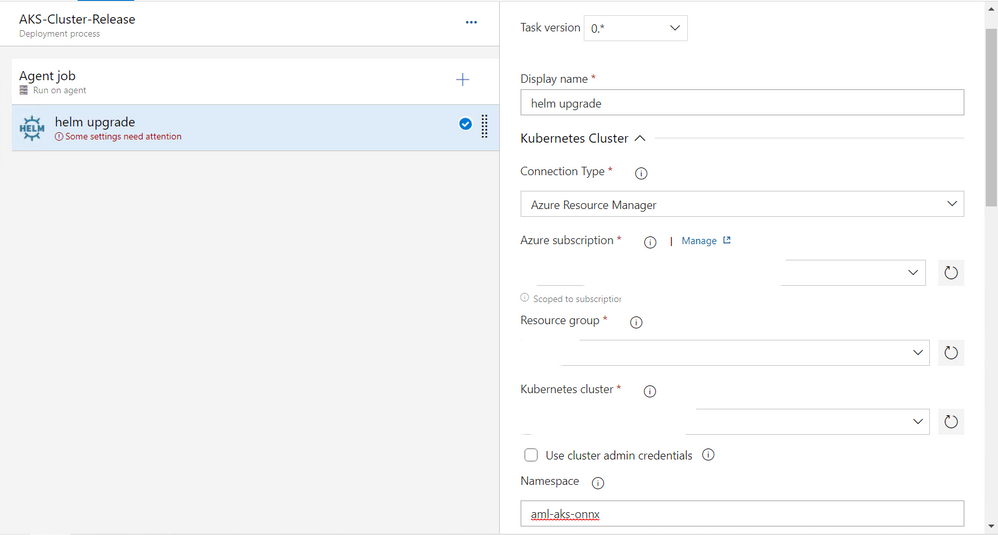

helm upgrade: Package and deploy helm charts activity.

- Select an appropriate AKS cluster

- Enter a custom namespace for this release. For this demo, the namespace is aml-aks-onnx

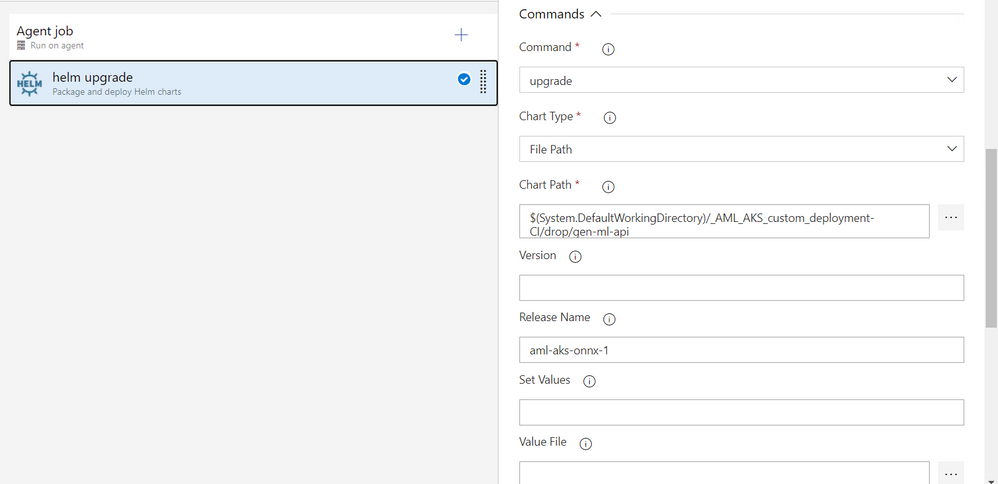

- Command is “upgrade”

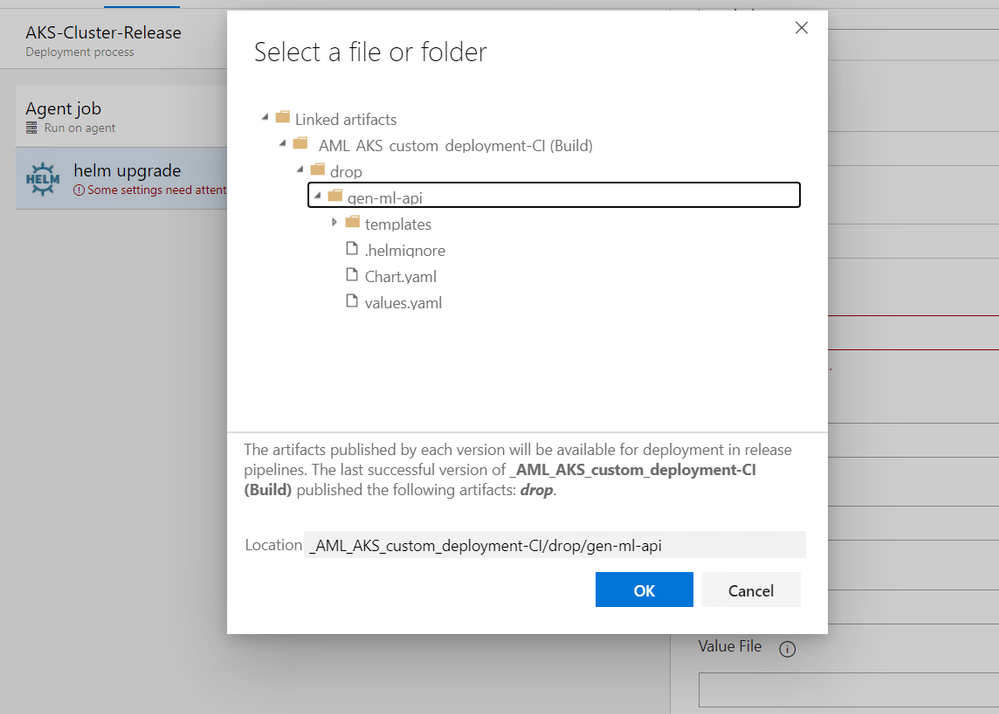

- Chart type is “File path”. Chart path is shown in the screenshot below

- Set release name as aml-aks-onnx-1

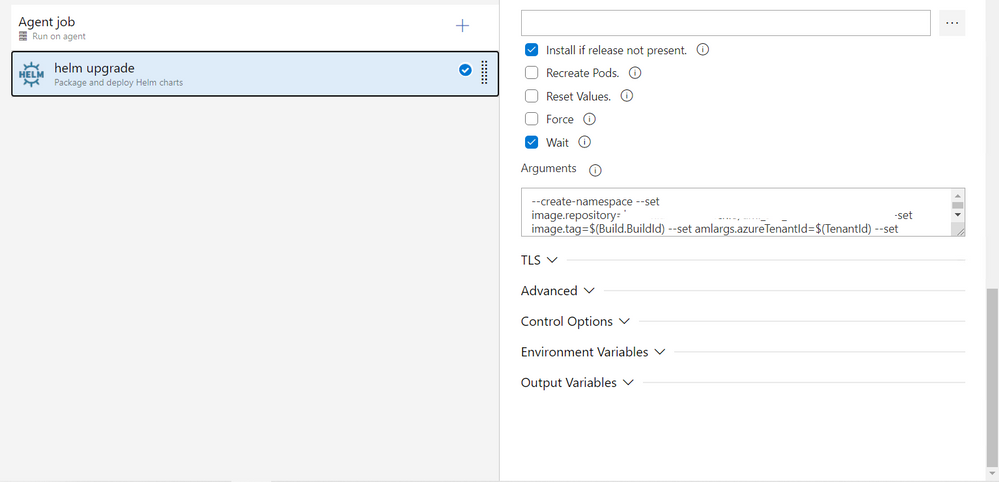

- Make sure to select Install if not present and wait

- Go to your Azure Container Registry, and find Login server URL. Your Image repository path is LOGIN_SERVER_URL/REPOSITORY_NAME.

- In arguments, enter the following content:

--create-namespace --set image.repository=IMAGE_REPOSITORY_PATH --set image.tag=$(Build.BuildId) --set amlargs.azureTenantId=$(TenantId) --set amlargs.azureSubscriptionId=$(SubscriptionId) --set amlargs.azureResourceGroupName=$(ResourceGroup) --set amlargs.azureMlWorkspaceName=$(WorkspaceName) --set amlargs.azureMlServicePrincipalClientId=$(ClientId) --set amlargs.azureMlServicePrincipalPassword=$(ClientSecret)
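Since the arguments string is long and easy to mistype (each flag needs two plain hyphens, not a typographic dash), the following sketch shows one way to assemble it programmatically. The helper name `build_helm_arguments` and the example registry path are hypothetical; the chart keys and pipeline variable names are the ones listed in this article.

```python
from typing import Dict, List

# Chart value (under amlargs.) -> Azure DevOps pipeline variable, as used in this article.
AML_VALUES: Dict[str, str] = {
    "azureTenantId": "TenantId",
    "azureSubscriptionId": "SubscriptionId",
    "azureResourceGroupName": "ResourceGroup",
    "azureMlWorkspaceName": "WorkspaceName",
    "azureMlServicePrincipalClientId": "ClientId",
    "azureMlServicePrincipalPassword": "ClientSecret",
}


def build_helm_arguments(image_repository: str, values: Dict[str, str]) -> str:
    """Return the text to paste into the Arguments box of the helm upgrade task."""
    parts: List[str] = ["--create-namespace"]
    parts.append(f"--set image.repository={image_repository}")
    # $(Build.BuildId) is left as-is; Azure DevOps expands it at release time.
    parts.append("--set image.tag=$(Build.BuildId)")
    for chart_key, pipeline_variable in values.items():
        parts.append(f"--set amlargs.{chart_key}=$({pipeline_variable})")
    return " ".join(parts)


if __name__ == "__main__":
    # Hypothetical image repository path: LOGIN_SERVER_URL/REPOSITORY_NAME.
    print(build_helm_arguments("myregistry.azurecr.io/aml-aks-onnx", AML_VALUES))
```

Running the script prints a single line that can be copied into the task configuration.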

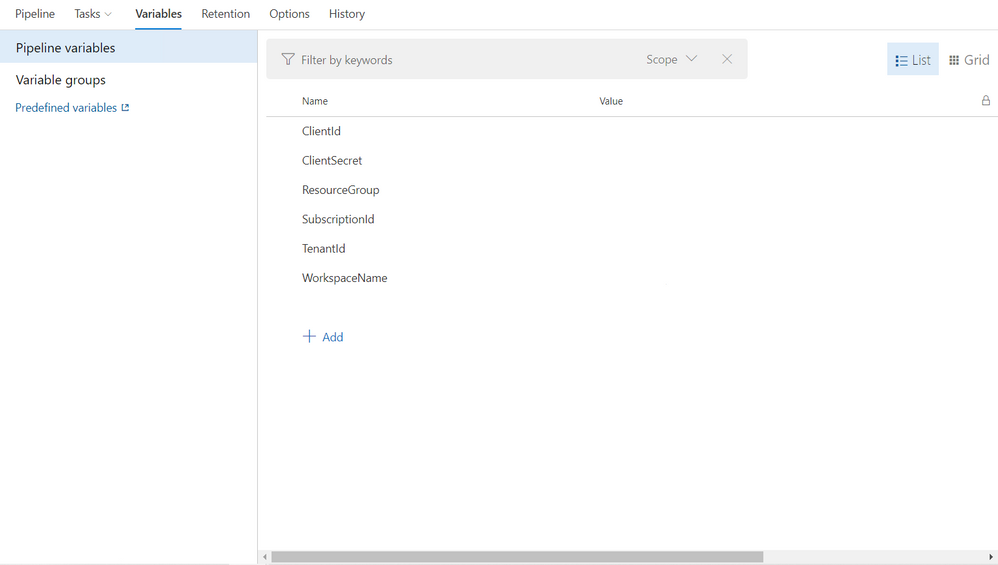

In Variables/Pipeline Variables, create and enter the following required values

- ClientId: Follow “How to: Use the portal to create an Azure AD application and service principal that can access resources” to create a service principal that can access the Azure ML workspace

- ClientSecret: See the instructions for ClientId

- ResourceGroup: Resource group of the AML workspace

- SubscriptionId: Can be found on the AML workspace overview page.

- TenantId: Can be found in Azure Active Directory

- WorkspaceName: AML workspace name
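For orientation, the sketch below shows how a scoring container might consume these six values to connect to the workspace with the service principal. The environment variable names are assumptions (the Helm chart may pass the values differently, for example as command-line arguments); `ServicePrincipalAuthentication` and `Workspace` are from the AML Python SDK (azureml-core), imported lazily so the validation step can run without the SDK installed.

```python
import os
from typing import Dict, Mapping

# Assumed environment variable names; check the chart for the actual mechanism.
REQUIRED_SETTINGS: Dict[str, str] = {
    "tenant_id": "AZURE_TENANT_ID",
    "subscription_id": "AZURE_SUBSCRIPTION_ID",
    "resource_group": "AZURE_RESOURCE_GROUP_NAME",
    "workspace_name": "AZURE_ML_WORKSPACE_NAME",
    "client_id": "AZURE_ML_SP_CLIENT_ID",
    "client_secret": "AZURE_ML_SP_PASSWORD",
}


def load_aml_settings(env: Mapping[str, str] = os.environ) -> Dict[str, str]:
    """Collect the six values and fail early if any is missing or empty."""
    missing = [var for var in REQUIRED_SETTINGS.values() if not env.get(var)]
    if missing:
        raise ValueError("missing settings: " + ", ".join(sorted(missing)))
    return {key: env[var] for key, var in REQUIRED_SETTINGS.items()}


def connect_workspace(settings: Dict[str, str]):
    """Authenticate as the service principal and return the AML workspace."""
    # Lazy imports: requires the azureml-core package at call time only.
    from azureml.core import Workspace
    from azureml.core.authentication import ServicePrincipalAuthentication

    auth = ServicePrincipalAuthentication(
        tenant_id=settings["tenant_id"],
        service_principal_id=settings["client_id"],
        service_principal_password=settings["client_secret"],
    )
    return Workspace(
        subscription_id=settings["subscription_id"],
        resource_group=settings["resource_group"],
        workspace_name=settings["workspace_name"],
        auth=auth,
    )
```

Validating the settings before touching the SDK gives a clear error message when a pipeline variable was left empty, rather than an authentication failure at request time.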

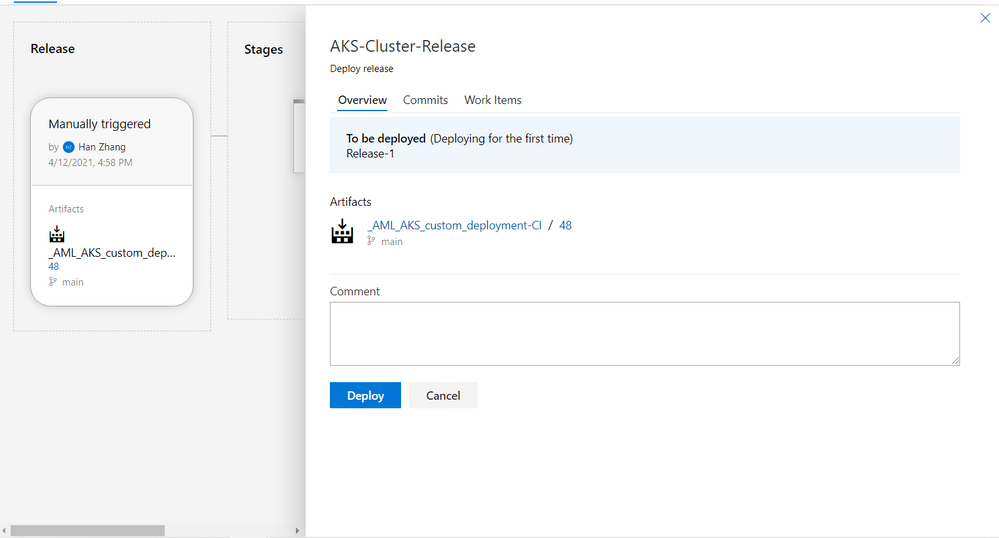

Save, create, and deploy release

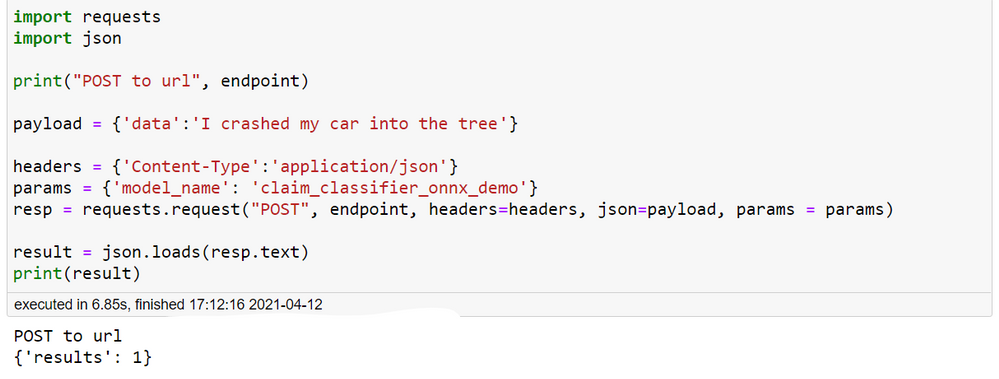

Testing

- Retrieve external IP for deployed service

- Open PowerShell and run the following commands:

az account set --subscription SUBSCRIPTION_ID

az aks get-credentials --resource-group RESOURCE_GROUP_NAME --name AKS_CLUSTER_NAME

kubectl get deployments --all-namespaces=true

- Find the aml-aks-onnx namespace and make sure it’s ready

kubectl get svc --namespace aml-aks-onnx

- External IP will be listed there
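If you prefer to look up the external IP from a script rather than reading it off the terminal, a sketch along these lines could work. It assumes kubectl is on the PATH and already pointed at the cluster; the function names are hypothetical, while the JSON layout (`status.loadBalancer.ingress[].ip`) is the standard Kubernetes Service status.

```python
import json
import subprocess
from typing import List


def external_ips(kubectl_json: str) -> List[str]:
    """Extract LoadBalancer IPs from `kubectl get svc -o json` output."""
    ips: List[str] = []
    for service in json.loads(kubectl_json).get("items", []):
        # While the load balancer is still provisioning, "ingress" is absent.
        ingress = service.get("status", {}).get("loadBalancer", {}).get("ingress") or []
        ips.extend(entry["ip"] for entry in ingress if "ip" in entry)
    return ips


def fetch_external_ips(namespace: str = "aml-aks-onnx") -> List[str]:
    """Run kubectl for the given namespace and return any external IPs."""
    result = subprocess.run(
        ["kubectl", "get", "svc", "--namespace", namespace, "-o", "json"],
        capture_output=True,
        text=True,
        check=True,
    )
    return external_ips(result.stdout)
```

An empty list usually means the service has not been assigned an IP yet, so it is worth retrying after a short wait.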

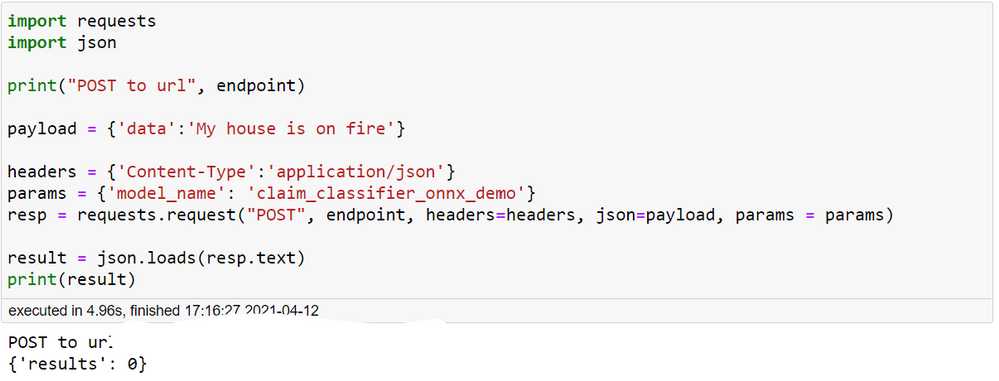

- Use test.ipynb to test it out

- The endpoint is http://EXTERNAL_IP:80/score. You can optionally set it to http://EXTERNAL_IP:80/healthcheck and then use the GET method to do a quick health check

- In the POST method section, make sure to enter the model name. In this demo, the model name is claim_classifier_onnx_demo. Enter any potential insurance claim text, and see the model classify it as an auto or home insurance claim in real time.
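Outside the notebook, the same calls can be sketched with the Python standard library. The JSON field names (`model_name`, `text`) are assumptions, since the actual payload format is defined by main-generic.py and test.ipynb; the /score and /healthcheck paths are the ones given above.

```python
import json
import urllib.request


def health_url(external_ip: str) -> str:
    """URL for a quick GET health check."""
    return f"http://{external_ip}:80/healthcheck"


def build_score_request(external_ip: str, model_name: str, text: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for the /score endpoint."""
    # Field names are hypothetical; match them to what the scoring script expects.
    body = json.dumps({"model_name": model_name, "text": text}).encode("utf-8")
    return urllib.request.Request(
        url=f"http://{external_ip}:80/score",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 10.0) -> str:
    """Send the request and return the response body as text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")
```

For example, `send(build_score_request(EXTERNAL_IP, "claim_classifier_onnx_demo", "A tree fell on my roof"))` would post one claim to the deployed service, with EXTERNAL_IP replaced by the address found in the previous step.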

License

MIT License

Copyright (c) 2021 HZ-MS-CSA

Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the “Software”), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED “AS IS”, WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.
