
Detecting and recognizing faces in videos is a common task. Models are trained to detect the general attributes of the human face, as well as the finer details that make each of us one of a kind. With the right algorithms and sufficient training, models can detect a face in a video and, when trained for it, even assign the correct name to each face.

What about an animated character? Can models built for human faces detect a cartoon version of a human, or even a talking fork? In most cases, no. Animated characters have their own unique features: not only are they different from humans, they also differ greatly from one another.

Detecting and recognizing animated characters is a big challenge for the data science community. The Video Indexer Data Science team has been working on this task for the past year and a half, and today we are releasing a major milestone in improving these models. The final goal is to recognize each animated character by name and identify all of its appearances in the video. This is a big task, split into three sub-models.

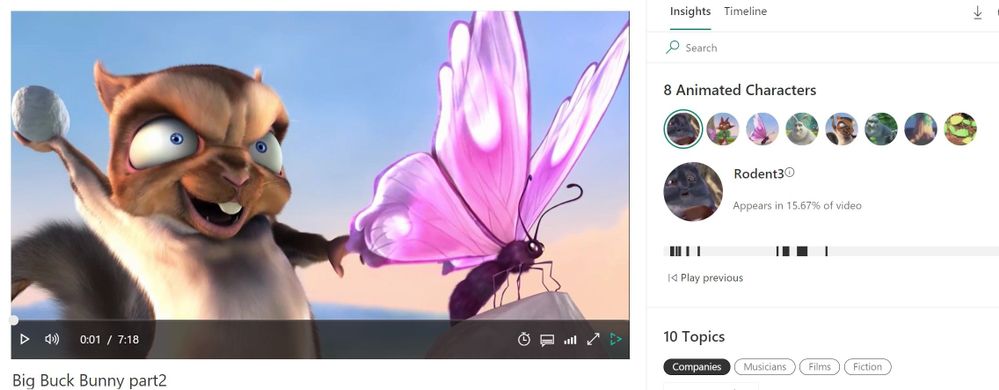

Example of Animated Characters Recognition

We would like to create a model that recognizes the characters in the video [1]. Since there is only one episode of this video, I split it into two parts to demonstrate that recognition works on footage that was not used to train the model: part 1 (about 3 minutes) and part 2 (the rest of the video).

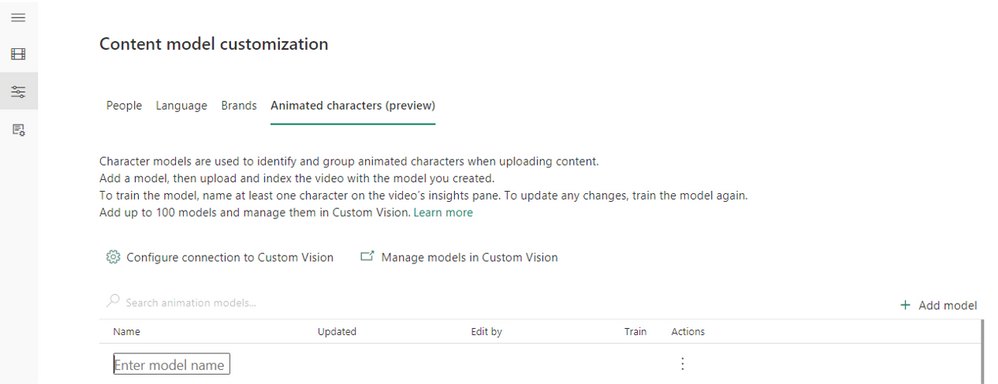

We start by creating an empty animation model:

Go to Model Customization (left arrow) and open the “Animated characters (preview)” tab. Click “Add model” (red arrow) and enter a model name.

Pic 1: screenshot from the Video Indexer website, creating a custom model for animated characters.

Pic 2: screenshot from the Video Indexer website after creating a custom model for animated characters.

Next, we index the first part of the video using the “Animation models (preview)” option and our new model:

Pic 3: screenshot from the Video Indexer website, uploading a video and selecting a custom animated characters model

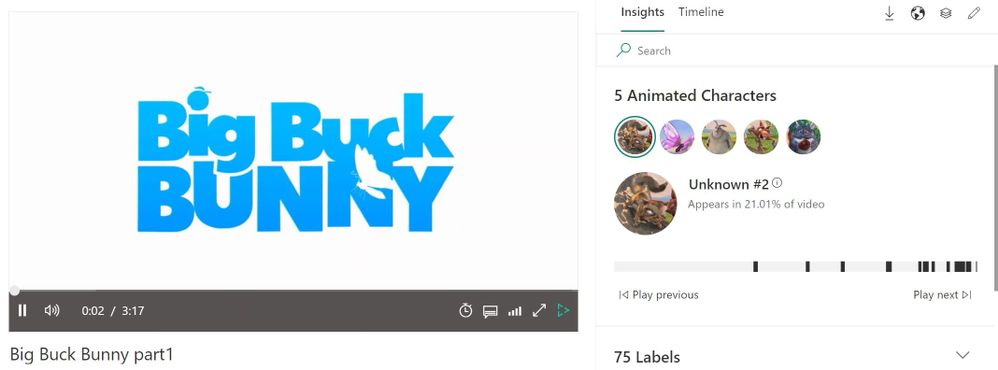

This is how the video looks after indexing:

Pic 4: screenshot of the Video Indexer player page, showing the detected animated characters of the indexed video [1]

We see that 5 characters were detected in the video, and I’m interested in creating a model for the characters Big Buck Bunny, Rodent1, Rodent2, Rodent3 and Butterfly:

Pic 5: Representative images of the characters that were detected and grouped when indexing the video [1]

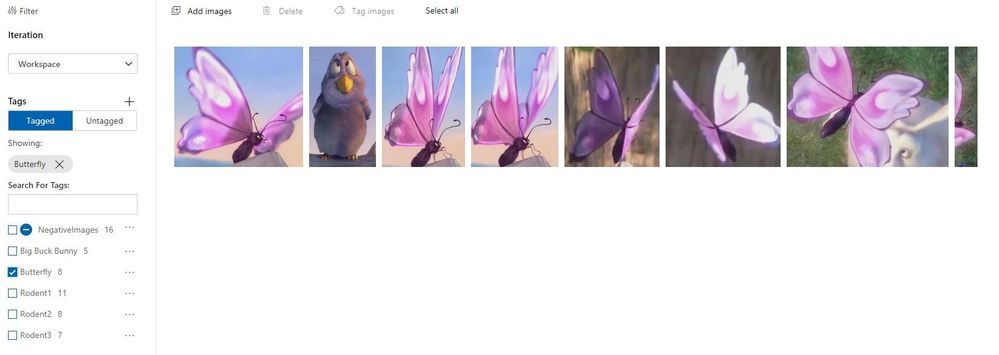

I edit the relevant group names in the Video Indexer website, and they automatically appear in the Custom Vision website for the model, along with all the images behind each character.

Pic 6: screenshot of the Custom Vision website showing the group of one of the characters [1]

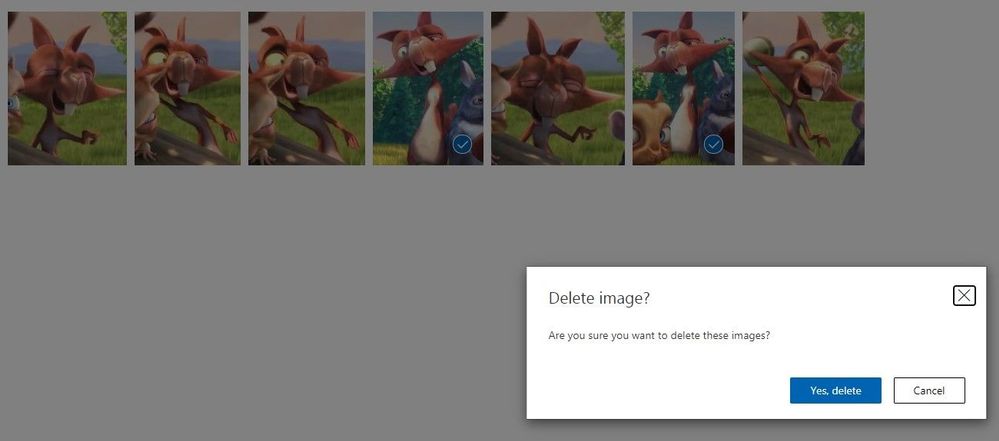

I review the groups in the Custom Vision website and delete images that were grouped incorrectly or that contain multiple characters:

Pic 7: screenshot of the Custom Vision website showing the group of one of the characters while images are being deleted [1]

I then train the model from the Video Indexer website. It is important to train the model from Video Indexer, not from the Custom Vision website.

Next, I index the second part of the video with the trained model:

Pic 8: screenshot of the player page of the Video Indexer website. Rodent3 was recognized. [1]

We see that all 5 characters were recognized:

Pic 9: screenshot of the insight panel of the Video Indexer website, showing that all 5 characters were recognized successfully. [1]

The black lines below each recognized character mark the places in the video where that character appears.
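The post does not describe how these timeline marks are computed. As a rough sketch, assuming the recognizer yields a list of timestamps (in seconds) at which a character was seen, nearby detections can be merged into continuous appearance segments. The helper `merge_appearances` and its `max_gap` parameter are hypothetical, not part of Video Indexer.

```python
def merge_appearances(timestamps, max_gap=1.0):
    """Merge detection timestamps (seconds) into (start, end) appearance segments.

    Hypothetical helper: two detections at most max_gap seconds apart are
    treated as the same continuous appearance of the character.
    """
    segments = []
    for t in sorted(timestamps):
        if segments and t - segments[-1][1] <= max_gap:
            # Close enough to the previous detection: extend the current segment.
            segments[-1][1] = t
        else:
            # Gap too large (or first detection): start a new segment.
            segments.append([t, t])
    return [tuple(s) for s in segments]


# Example: one character detected at these times (seconds).
print(merge_appearances([0.0, 0.5, 1.0, 5.0, 5.4, 12.0]))
# [(0.0, 1.0), (5.0, 5.4), (12.0, 12.0)]
```

A larger `max_gap` produces fewer, longer segments; a smaller one splits an appearance whenever detections briefly drop out.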

Getting the best results from your animation model

To get good results, we recommend following these guidelines:

- For the initial training, each episode should preferably be longer than 15 minutes. If you have shorter episodes, we recommend uploading at least 30 minutes of video content before training, to ensure variability in the training data. Multiple videos contribute more angles, versions, backgrounds, and outfits of the different characters, and enable better recognition later.

- Before naming a group, view its images in the Custom Vision website to make sure it’s a good group.

- Remove images that were mistakenly placed in a group. For example, an image that contains multiple characters should not be left in the group. Images that are very blurred, or that contain an object other than the intended character, should also be deleted. You can do this in the Custom Vision website by selecting the images and pressing “Delete”.

- If an image is grouped with the wrong character, move it to the correct group: select the image, press “Tag Images”, enter the correct group’s name, and remove the incorrect one.

- Merge groups of the same character by giving them the same name in Video Indexer.

- Do not leave groups that are smaller than 5 images.
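These checks are simple enough to automate before training. The sketch below is a hypothetical pre-training checklist, not a Video Indexer or Custom Vision feature. It assumes you have the episode lengths in minutes and a list of `(name, image_count)` pairs for your groups, merges groups that share a name (as Video Indexer does when you give them the same name), and flags the thresholds from the guidelines above.

```python
from collections import defaultdict

# Thresholds taken from the guidelines above.
MIN_EPISODE_MINUTES = 15   # preferred length of each episode
MIN_TOTAL_MINUTES = 30     # recommended total content when episodes are shorter
MIN_GROUP_SIZE = 5         # do not leave groups smaller than 5 images


def check_training_data(episode_minutes, groups):
    """Return a list of warnings; an empty list means the guidelines are met.

    episode_minutes: lengths of the uploaded episodes, in minutes.
    groups: (character_name, image_count) pairs, one per group (hypothetical format).
    """
    warnings = []
    total_minutes = sum(episode_minutes)
    has_short_episode = any(m < MIN_EPISODE_MINUTES for m in episode_minutes)
    if has_short_episode and total_minutes < MIN_TOTAL_MINUTES:
        warnings.append(
            f"only {total_minutes} min of short episodes; aim for at least {MIN_TOTAL_MINUTES} min"
        )

    # Groups that share a name are merged into a single character.
    images_per_character = defaultdict(int)
    for name, count in groups:
        images_per_character[name] += count

    for name, count in sorted(images_per_character.items()):
        if count < MIN_GROUP_SIZE:
            warnings.append(f"{name}: only {count} images (minimum {MIN_GROUP_SIZE})")
    return warnings


# Example: two short episodes; Rodent1 was split into two groups that get merged.
for w in check_training_data(
    [12, 10], [("Big Buck Bunny", 40), ("Rodent1", 3), ("Rodent1", 4), ("Butterfly", 2)]
):
    print(w)
```

Here the two `Rodent1` groups together reach 7 images and pass, while `Butterfly` and the 22 minutes of content are flagged.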

Once you have completed this initial work on the model’s output, you are ready to train the model and start using it for recognition.

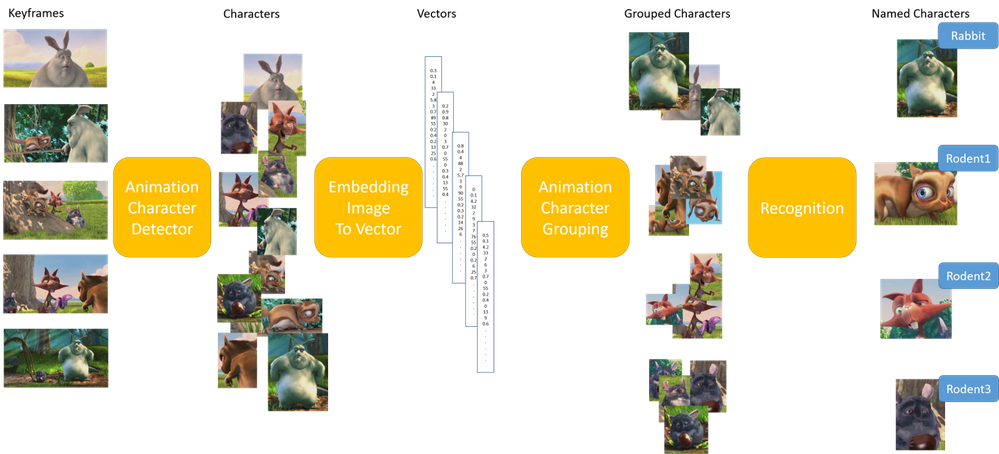

How does it work?

The first step is detecting that a specific object in the video is indeed an animated character and not a static object. A character in an animated video can be anything from a girl to a talking car, so the detector faces a difficult and broad task. The animation detector was built a year ago and has been successfully incorporated into Video Indexer.

The second step is grouping all instances of the same animated character together. This is a clustering problem, which is an unsupervised task. Our work here included analyzing many animated videos of different types, manually tagging the characters’ images to their assigned groups, and searching for the algorithmic solution that fits the widest range of animation types. The input to the clustering is an embedding of each detected animated character: a numeric vector representing the image, produced by the first step, the detector. We take the embeddings, adapt them to our needs, and apply the clustering algorithm to find the groups of images that represent the same character. Our solution relies on an algorithm from the density-based clustering family, which we trained and extended for animation-specific needs.
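The post names only the algorithm family, not the specific algorithm. To illustrate what density-based clustering does with embeddings, here is a minimal textbook DBSCAN, assuming Euclidean distance between vectors. The toy 2-D "embeddings" and the `eps` and `min_samples` values are made up; this is not Video Indexer's implementation.

```python
import math

NOISE = -1


def dbscan(points, eps, min_samples):
    """Textbook DBSCAN. Returns one cluster label per point; -1 marks noise."""
    labels = [None] * len(points)  # None = not yet visited

    def neighbors(i):
        # Indices of all points within eps of point i (including i itself).
        return [j for j, q in enumerate(points) if math.dist(points[i], q) <= eps]

    cluster = 0
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_samples:
            labels[i] = NOISE  # may later be relabeled as a border point
            continue
        labels[i] = cluster  # i is a core point: start a new cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point reached from a core point
            if labels[j] is not None:
                continue  # already assigned; do not expand again
            labels[j] = cluster
            j_neighbors = neighbors(j)
            if len(j_neighbors) >= min_samples:
                queue.extend(j_neighbors)  # j is also a core point: keep expanding
        cluster += 1
    return labels


# Toy embeddings: two dense groups (two "characters") and one isolated image.
embeddings = [(0, 0), (0, 0.1), (0.1, 0), (5, 5), (5, 5.1), (5.1, 5), (20, 20)]
print(dbscan(embeddings, eps=0.5, min_samples=2))
# [0, 0, 0, 1, 1, 1, -1]
```

Each cluster label corresponds to a candidate character group, and points labeled `-1` are images that are not assigned to any group. A density-based method does not need the number of characters in advance, which matters because that number is unknown for a new video.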

Finally comes the recognition of each character group, which allows the group to be named. You might know this step from Video Indexer, which can recognize hundreds of thousands of celebrities by name, or any other person you train your own custom models to recognize. The same can be done with animated characters. After indexing one or a few videos of the same series, the character groups that were found can be named and, if necessary, merged. You can then train your own animation model and index new episodes of the same series. The known (trained) characters will be recognized and named automatically.
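In Video Indexer, recognition is done by a Custom Vision model trained on the named groups, and the post does not describe its internals. As a much simpler stand-in, the idea of "train on named groups, then name the groups of a new episode" can be illustrated with a nearest-centroid classifier over embeddings, plus a distance threshold so that unfamiliar characters stay unnamed. The function names, the embedding format, and the threshold are all hypothetical.

```python
import math


def centroid(vectors):
    """Component-wise mean of equal-length vectors."""
    n = len(vectors)
    return tuple(sum(components) / n for components in zip(*vectors))


def train(named_groups):
    """named_groups: {character name: [embedding, ...]} -> {name: centroid}."""
    return {name: centroid(vecs) for name, vecs in named_groups.items()}


def recognize(model, group_embeddings, max_distance):
    """Name a new group after its closest trained centroid, or None if too far."""
    c = centroid(group_embeddings)
    name, dist = min(
        ((n, math.dist(c, m)) for n, m in model.items()), key=lambda pair: pair[1]
    )
    return name if dist <= max_distance else None


# "Training" on groups named after indexing the first part of the video.
model = train({
    "Big Buck Bunny": [(0.0, 0.0), (0.2, 0.0)],
    "Butterfly": [(5.0, 5.0), (5.0, 5.2)],
})
print(recognize(model, [(0.1, 0.1), (0.0, 0.1)], max_distance=1.0))  # Big Buck Bunny
print(recognize(model, [(20.0, 20.0)], max_distance=1.0))            # None
```

The threshold is what keeps a character that was never trained from being forced onto the closest known name.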

The recognition step has also been made more precise in this new release.

Pic 10: Illustration of the algorithmic process [1]

Learn More

Review the animated characters model customization concept to learn how to upload animated videos, tag the characters’ names, train the model, and then use it for recognition, or follow the how-to guide. We look forward to your feedback.

For those of you who are new to our technology, we encourage you to get started today with these helpful resources:

- Use https://vi.microsoft.com/en-us to access Video Indexer Website and get a free trial experience.

- Take a quick tour of Video Indexer on the Video Indexer website

- Use the Stack Overflow community for technical questions.

- To report an issue with Video Indexer (paid account customers), go to Azure portal Help + support and create a new support request. Your request will be tracked within SLA.

- Read our recent blogs in the Azure Tech Community

- Visit Video Indexer Developer Portal

- Search the Video Indexer GitHub repository

- Review our product documentation.

- Get to know the recent features using Video Indexer release notes

[1] Big Buck Bunny is licensed under the Creative Commons Attribution 3.0 Unported license. © copyright Blender Foundation | www.bigbuckbunny.org
