Azure Stream Analytics is a fully managed service for real-time analytics. In addition to the experience in the Azure portal, we have developer tools that make development and debugging easier. This blog post introduces a new debugging feature in the Azure Stream Analytics tools extension for Visual Studio Code.

Have you ever faced a situation where your streaming job produces no results or unexpected results, and you don’t know which part went wrong? We are happy to announce the rollout of our newest debugging feature: job diagram debugging in the Visual Studio Code extension for Azure Stream Analytics. This feature brings together job diagrams, metrics, diagnostic logs, and intermediate results to help you quickly isolate the source of a problem. You can not only test your query on your local machine but also connect it to live sources such as Event Hub or IoT Hub. The job diagram helps you understand how data flows between steps, and you can view the intermediate result set and metrics of each step to debug issues. You can iterate fast because each local test of the job takes only seconds.

This blog post uses a real example to show you how to debug an Azure Stream Analytics job using the job diagram in Visual Studio Code.

Note

This job diagram only shows the data and metrics for local testing in a single node on your own machine. It should not be used for performance and scalability tuning.

Step 1 Install the tools

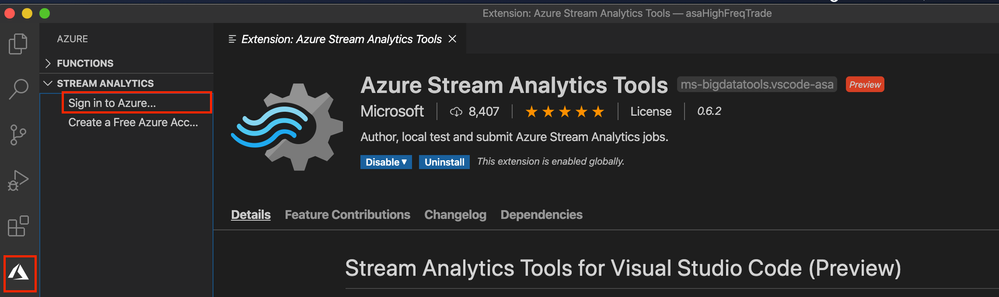

- Install Visual Studio Code and the Azure Stream Analytics tools extension for Visual Studio Code.

- Install the .NET Core SDK and restart Visual Studio Code.

- Sign in to Azure from Visual Studio Code using your Azure account.

Step 2 Open your job in Visual Studio Code

Skip to step 3 if you already have your Azure Stream Analytics project open in Visual Studio Code.

In the Azure portal, open the Azure Stream Analytics job you want to debug in the Query Editor. From the Open in Visual Studio drop-down, choose Visual Studio Code, then choose Open job in Visual Studio Code. The job is exported as an Azure Stream Analytics project in Visual Studio Code.

Step 3 Run job locally

Since the credentials are already auto-populated, the only thing you need to do is open the script and select Run locally. Make sure that input data is being sent to your job's input sources.

Step 4 Debug using job diagram

The job diagram, shown in the right pane of the editor, shows how data flows from input sources, like Event Hub or IoT Hub, through multiple query steps to output sinks. You can also view the data and metrics of each query step in its intermediate result set to find the source of an issue.

Now, let’s look at a real example job. We have a job that receives quotes for different stocks. The query filters on a few stocks, but one output does not receive any data.

We run the job locally against a live input stream from Event Hub. In the job diagram we can see that the step ‘msftquotes’ does not have any data flowing in.

To troubleshoot that, let’s zoom in on the diagram and select the upstream step ‘typeconvertedquotes’ to see if it produces any output. The node shows 3135 output events. The Result tab below also makes it easy to see that this step outputs data with the symbol ‘MSFT’.

Then we select the step ‘msftquotes’ and jump to the corresponding part of the script to take a closer look.
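The same upstream check can also be expressed as a query. The following is only a sketch and not part of the original job: it assumes a hypothetical extra output named `debugoutput` and an arbitrary 10-second tumbling window, and it would have to be appended after the job's existing `WITH` steps. It counts the events that ‘typeconvertedquotes’ emits per symbol, which is roughly what the node's event count and the Result tab show at a glance.

```sql
-- Sketch only: `debugoutput` is a hypothetical output alias, and the
-- 10-second window is an arbitrary choice for illustration.
-- Counts events per symbol coming out of the upstream step.
SELECT
    symbol,
    COUNT(*) AS eventCount
INTO debugoutput
FROM typeconvertedquotes
GROUP BY symbol, TumblingWindow(second, 10)
```

If ‘MSFT’ appears in this result but the downstream step stays empty, the problem is most likely in the downstream filter rather than in the input.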

Now we find the root cause: there is a typo in the script. ‘%MSFT%’ is mistakenly typed as ‘%MSFA%’, so the filter matches none of the incoming quotes.

    MSFTQuotes AS (
        SELECT typeconvertedquotes.* FROM typeconvertedquotes
        WHERE symbol LIKE '%MSFA%'
        AND bidSize > 0
    ),

Let’s fix the typo, stop the job and run again.
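For reference, the corrected step would look like the following. Only the `LIKE` pattern changes; the rest is taken from the step as it appears in the job.

```sql
MSFTQuotes AS (
    SELECT typeconvertedquotes.* FROM typeconvertedquotes
    -- Corrected pattern: matches any symbol that contains 'MSFT'
    WHERE symbol LIKE '%MSFT%'
    AND bidSize > 0
),
```

Because `%` matches any sequence of characters, the pattern still accepts symbols with extra text before or after ‘MSFT’.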

Now data is flowing into the step ‘msftquotes’ and the other downstream steps.

Other than checking the result for each step, you can also view logs and metrics for the job.

Step 5 Submit to Azure

When local testing is done, submit the job to Azure to run it in the cloud and further validate its behavior in a distributed environment.

Hope you find these new features helpful, and please let us know what capabilities you’re looking for with regard to job debugging in Azure Stream Analytics!
