by Contributed | Mar 5, 2021 | Technology

This article is contributed. See the original author and article here.

Numerous announcements to cover following a very busy Microsoft Ignite. News this week includes New capabilities for Azure Automanage now in public preview, Governance and management tools for Azure Machine Learning made generally available, Assessments for migration to Azure VMware Solution, Low code tools and the enablement Power Platform can offer, March 2021 Exchange Server Security Update and the Microsoft Learn module of the week.

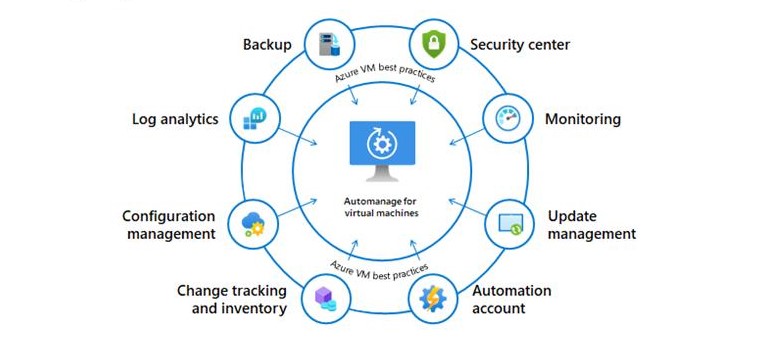

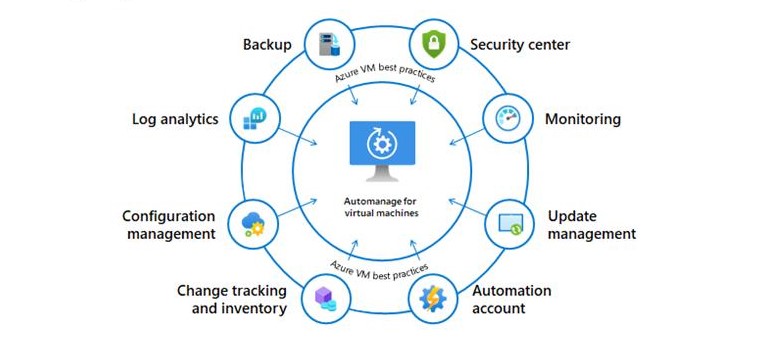

New Capabilities for Azure Automanage Now in Public Preview

Azure Automanage helps reduce day-to-day management tasks with automated operations across the entire lifecycle of Windows Server virtual machines in Azure. IT admins can manage their VMs with point-and-click simplicity, individually or at scale. Azure Automanage also monitors machines to ensure that they continue to comply with best practices across their entire lifecycle. New capabilities were introduced into the public preview at MS Ignite, and they include:

- Azure Automanage for Windows Server – helps enable rebootless security patching for new Windows Server VMs

- Expansion of Azure Automanage to Linux – will now support CentOS, RHEL, Ubuntu, and SLES with more Linux distributions planned in the future

Check out the following product group blog post to learn more: Azure Automanage for virtual machines – Public Preview update

Azure Machine Learning announcements at Ignite

There were a lot of announcements made at MS Ignite about new functionality being added to Azure Machine Learning. Of most interest to us, however, was the set of governance tools now available to system administrators, which make it easier to get a handle on data scientists’ resource use. These services include:

- Role-based access control (RBAC) – now generally available, allows creation of custom roles that grant granular actions on workspace assets and resources. Creating a custom role requires using a template to define the role definition and then assigning the role to users at a specific scope.

- Arc enabled machine learning – use any Kubernetes cluster and extend machine learning to run close to where the data lives. Manage all the resources through a single pane via Azure Arc.

- Setup scripts (coming soon) – provides an automated way to customize and configure compute at provisioning time.

Check out What’s new in Azure Machine Learning – hybrid with Azure Arc to learn more.
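To make the custom role template idea concrete, here is a minimal sketch that builds a role definition as JSON from Python. The role name, description, and the placeholder scope are made up for illustration. The property names follow the Azure custom role definition format, and the action strings are quoted from memory of the Azure Machine Learning docs, so verify them before use. The resulting JSON could then be saved to a file and passed to `az role definition create --role-definition <file>`.

```python
import json


def build_custom_role(name, description, actions, not_actions, scopes):
    """Return an Azure custom role definition as a plain dict."""
    if not scopes:
        raise ValueError("a custom role needs at least one assignable scope")
    return {
        "Name": name,
        "IsCustom": True,
        "Description": description,
        "Actions": list(actions),
        "NotActions": list(not_actions),
        "AssignableScopes": list(scopes),
    }


# Hypothetical scope: replace the placeholders with real IDs.
scope = "/subscriptions/<subscription-id>/resourceGroups/<resource-group>"

role = build_custom_role(
    name="Data Scientist Custom",  # illustrative name
    description="Can do everything in the workspace except manage compute.",
    actions=["*"],
    # Assumed action strings; check them against the Azure ML documentation.
    not_actions=[
        "Microsoft.MachineLearningServices/workspaces/computes/*/write",
        "Microsoft.MachineLearningServices/workspaces/computes/*/delete",
        "Microsoft.Authorization/*/write",
    ],
    scopes=[scope],
)

role_json = json.dumps(role, indent=2)
print(role_json)
```

Keeping the definition in code (or in a checked-in JSON file) makes it easier to review which actions a role excludes before it is assigned at a subscription, resource group, or workspace scope.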

Assessments for migration to Azure VMware Solution now Generally Available

Azure Migrate assessment for Azure VMware Solution is now generally available. Use Azure Migrate to analyze readiness for migrating to Azure VMware Solution, assess suitability, plan costs, and apply performance-based rightsizing. The Azure VMware Solution assessment now supports more customizable properties to help you with better node sizing and utilization. Azure VMware Solution empowers organizations to seamlessly extend or migrate their existing on-premises VMware applications to Azure without the cost, effort, or risk of re-architecting applications or retooling operations.

Low code / No code enablement via Power Platform

“People no longer need to be coders to solve today’s problems with technology” was a big theme shared at MS Ignite. Microsoft’s announcements around Power Automate (formerly Microsoft Flow) and Power Fx (the formula language that originated in Power Apps canvas apps) aimed to address this opportunity. Here is a breakdown of the new Power Platform offerings:

- Power Fx – a low-code language that makers can work with directly in an Excel-like formula bar or Visual Studio Code text window. It enables the full spectrum of development from “no-code” for those who have never programmed before to “pro code” for the seasoned professional, with no learning or rewriting cliffs in between, enabling diverse teams to collaborate.

- Power Automate – helps create automated workflows between apps and services to synchronize files, get notifications, collect data, and more. Anyone from a basic business user to an IT professional can create automated processes using Power Automate’s no-code/low-code platform.

Exchange Server Security Updates for March 2021

Microsoft has released a set of out-of-band security updates for vulnerabilities in Exchange Server 2013, 2016 and 2019. Microsoft recommends installing these updates immediately to protect against attacks, as it is aware of active exploits of related vulnerabilities in the wild. The vulnerabilities affect Microsoft Exchange Server. Exchange Online is not affected.

More information regarding this can be found here: March 2021 Exchange Server Security Updates

Community Events

- Patch and Switch – It’s been a fortnight and it conveniently lands on the Friday after MS Ignite. Tune in to hear their recap of the event.

MS Learn Module of the Week

Automate processes using Power Automate

This learning path introduces you to Power Automate, teaches you how to build workflows, and how to administer flows.

This learning path can help you prepare for the Microsoft Certified: Power Platform App Maker Associate certification.

Let us know in the comments below if there are any news items you would like to see covered in the next show. Be sure to catch the next AzUpdate episode and join us in the live chat.

by Contributed | Mar 4, 2021 | Technology

You spoke, We Listened!

MRTK 2.6 is available for download through the Mixed Reality Feature Tool, a new way to discover, update and add Mixed Reality packages to Unity projects. To get started, see Welcome to the Mixed Reality Feature Tool.

If you prefer to manually import packages, you can download the MRTK from GitHub.

A New Docs Experience

We’ve migrated our documentation for MRTK-Unity from GitHub to a new MRTK docs website! Starting with MRTK Unity 2.6, new docs will only be available on Microsoft’s official docs website, docs.microsoft.com. MRTK APIs are also now discoverable through the .NET API browser. This puts information alongside other Microsoft Mixed Reality content so you can stay closer to the action while learning about new tools. Please note that feature documentation and API references on GitHub will no longer be updated.

Release Highlights

MRTK 2.6 is full of great new features, bug fixes and updates. These changes include:

- Additional support for the OpenXR preview

- Scene Understanding as an experimental spatial awareness observer

- HP Motion Controller Support

- Teleport gesture now supported on all platforms

- Runtime profile switching

- Reading Mode

- New Solver Graduations

- Support for 3D App Launchers

- Hand Coach as an Experimental Feature

- and more!

Looking for more info? Keep reading below or check out the release notes.

Don’t forget to tell us what you think. You can file bugs and feature requests on the MRTK GitHub.

Additional support for OpenXR

Additional support for Unity’s OpenXR preview package and Microsoft’s Mixed Reality OpenXR package has been added. See the MRTK/XRSDK getting started page, Unity’s forum post, or Microsoft’s documentation for more information.

OpenXR in Unity is only supported on Unity 2020.2 and higher.

Currently, it also only supports x64 and ARM64 builds.

HP Motion Controllers now supported with MRTK

Controllers for the HP Reverb G2 now work natively with MRTK.

Teleportation with the teleport gesture now supported on all platforms

Users can now use the teleport gesture to move around their play space across all platforms. To teleport with a controller on MR devices with default configurations, use the thumbstick. To teleport with articulated hands, make a gesture with your palm facing up with the index and thumb sticking outwards, completing the teleport by curling the index finger. To teleport with input simulation, please see our updated Input Simulation Service documentation.

Scene Understanding now available in MRTK as an experimental spatial awareness observer

Experimental support of Scene Understanding is introduced in MRTK 2.6. Users can incorporate the scene understanding capabilities of HoloLens 2 as a spatial awareness observer in MRTK based projects. Please read the Scene Understanding documentation for more information.

Note: Scene Understanding is only supported on HoloLens 2 and Unity 2019.4 and higher.

This feature requires the Scene Understanding package, which is now available via the Mixed Reality Feature Tool.

Runtime profile switching support

MRTK now allows profile switching both before the initialization of the MRTK instance (i.e. Pre MRTK initialization profile switch) and after a profile has been in active use (i.e. Active profile switch). The former can be used to enable select components based on the capabilities of the hardware, while the latter can be used to modify the experience as the user enters a subpart of the application. Please read the documentation on profile switching for more information and code samples.

Directional Indicator and Follow Solvers Graduated from Experimental

Two new solvers are ready for use with mainline MRTK.

Hand Coach Graduated from Experimental

The Hand Coach feature is now ready for use with mainline MRTK.

Input Recording Service improvements

InputRecordingService and InputPlaybackService can now record and play back eye gaze input. Recording has been optimized to ensure a consistent framerate throughout the recording period, while recording file size and save time are also reduced by about 50%. Saving and loading of recording files can now be performed asynchronously. Note that the file format of the recording has changed in this MRTK version; please see here for more information on the new version 1.1 specifications.

Reading mode

Added support for reading mode on HoloLens 2. Reading mode reduces the system’s field of view but eliminates a scaling of Unity’s output. A pixel rendered by Unity will correspond to a projected pixel on HoloLens 2. Application authors should do tests with multiple individuals to be sure this is a tradeoff they want in their app.

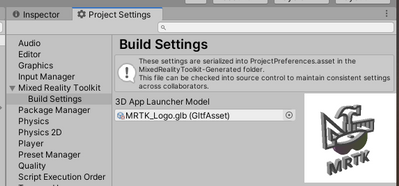

Support for 3D app launchers on UWP

Adds the ability to set a 3D app launcher for UWP. This setting is exposed both in the MRTK Build Window and the MRTK Project Settings, under Build Settings. It’s automatically written into the project during the build in Unity.

Want more information? Check out the full release notes.

Try it out!

Want to use the MRTK? Visit aka.ms/mrtkdocs and get started today.

Don’t forget to tell us what you think. You can file bugs and feature requests on the MRTK GitHub.

Thanks,

The MRTK Team :smiling_face_with_smiling_eyes:

by Contributed | Mar 4, 2021 | Technology

Introduction

In this post we’ll demonstrate how the NVIDIA® Jetson Nano™ device, running AI on the IoT edge, can be combined with the power of the Azure platform to create an end-to-end AI on edge solution. We are going to use a custom AI model that was developed on an NVIDIA® Jetson Nano™ device, but you can use any AI model that fits your needs. We will see how we can leverage the new Azure SDKs to create a complete Azure solution.

This post has been divided into three sections. The Architecture overview section discusses the overall architecture at a high level. The Authentication Front-end section discusses the starting and ending points of the system flow. The Running AI on the Edge section details how the NVIDIA® Jetson Nano™, as an IoT Edge device, can run AI and leverage the Azure SDK to communicate with the Azure platform.

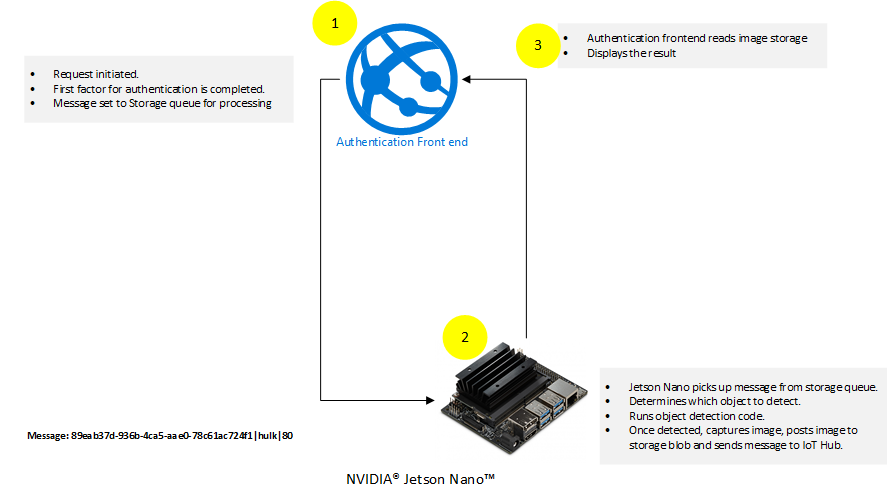

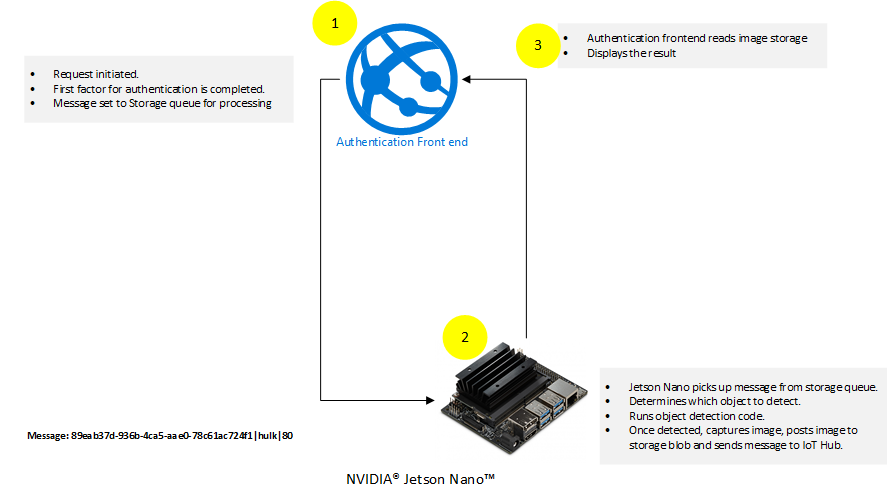

Architecture overview

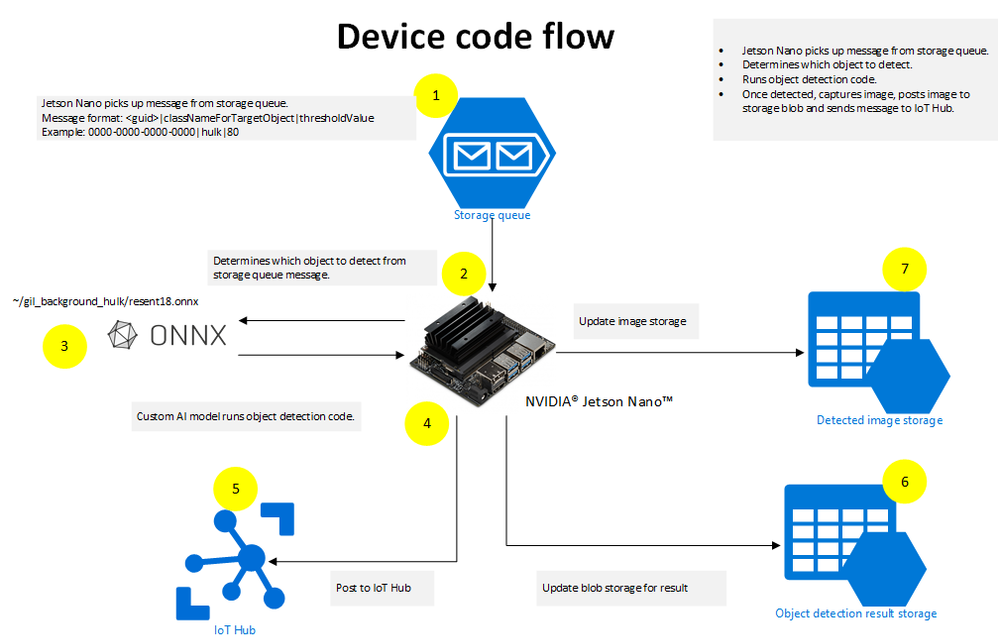

There are two main components of the architecture:

- The Authentication Front-end, which is responsible for creating a request that is added to an Azure Storage Queue.

- The AI on the edge, run by device-side Python code that constantly listens to the Azure Storage Queue for new requests. It picks up each request and runs the AI model according to that request. Once the device-side code detects the object, it captures an image of the detected object and posts the captured image to Azure Storage Blob.

The underlying core of the architecture is the use of the new Azure SDKs by both the Authentication Front-end and the AI running on the edge. The Authentication Front-end adds requests to the Azure Storage Queue for AI processing, and the device-side Python code uploads the captured image to Azure Storage Blob.

Control flow

At a high-level, the following actions are taking place:

- Authentication Front-end initiates flow by completing the first factor authentication. Once first factor authentication is complete the flow is passed to NVIDIA® Jetson Nano™ device.

- NVIDIA® Jetson Nano™ device runs custom AI model using code mentioned in following sections. The result of this step is completion of the second factor of authentication.

- Control is passed back to the Authentication Front-end, which validates the results that came from the NVIDIA® Jetson Nano™ device.
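The control flow above can be sketched end to end without any Azure resources. The snippet below is a toy simulation, not the implementation used in this post: an in-memory `deque` stands in for the Azure Storage Queue, a `dict` stands in for the blob container, and `fake_classify` is a made-up placeholder for the model running on the device.

```python
import uuid
from collections import deque

request_queue = deque()  # stands in for the Azure Storage Queue
blob_container = {}      # stands in for the Azure Storage Blob container


def front_end_login(user_name, password, threshold=70):
    """First factor, simulated as in this post: username must equal password."""
    if user_name.lower() != password.lower():
        return None
    request_id = str(uuid.uuid4())
    # Request format: RequestId|ClassName|ThresholdPercentage
    request_queue.append(f"{request_id}|{user_name}|{threshold}")
    return request_id


def fake_classify():
    """Hypothetical stand-in for the on-device model: (class name, confidence)."""
    return "hulk", 0.93


def device_process_one():
    """Second factor: take one request, 'detect' the object, store the image."""
    if not request_queue:
        return False
    request_id, class_name, threshold = request_queue.popleft().split("|")
    detected, confidence = fake_classify()
    if detected == class_name and confidence * 100 >= int(threshold):
        blob_container[f"{request_id}/imageWithDetection.jpg"] = b"<jpeg bytes>"
        return True
    return False


def front_end_validate(request_id):
    """Back on the front-end: the flow is complete once the image exists."""
    return f"{request_id}/imageWithDetection.jpg" in blob_container


request_id = front_end_login("hulk", "hulk")
device_process_one()
print(front_end_validate(request_id))
```

The only coupling between the two sides is the request text on the queue and the blob name derived from the request ID, which is what lets the front-end and the device run as independent processes.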

Authentication Front-end

The role of the Authentication Front-end is to initiate the two-factor flow and interact with Azure using the new Azure SDKs.

Code running on Authentication Front-end

The code running on the Authentication Front-end mainly comprises two controllers.

The following describes the code for each of those controllers.

SendMessageController.cs

The main job of SendMessageController.cs is to complete the first factor of the authentication. The code simulates the completion of the first factor by simply checking that the username and password are the same. In a real-world implementation, this should be done by a valid, secure authentication mechanism. An example of how to implement a secure authentication mechanism is given in the article Authentication and authorization in Azure App Service and Azure Functions. The second task of SendMessageController.cs is to queue up the messages for the second factor. This is done using the new Azure SDKs.

Here is the code snippet for SendMessageController.cs:

[HttpPost("sendmessage")]
public IActionResult Index()
{
    string userName = string.Empty;
    string password = string.Empty;

    if (!string.IsNullOrEmpty(Request.Form["userName"]))
    {
        userName = Request.Form["userName"];
    }

    if (!string.IsNullOrEmpty(Request.Form["password"]))
    {
        password = Request.Form["password"];
    }

    // Simulation of first factor authentication presented here.
    // For real world example visit: https://docs.microsoft.com/azure/app-service/overview-authentication-authorization
    if (!userName.Equals(password, StringComparison.InvariantCultureIgnoreCase))
    {
        return View(null);
    }

    var objectClassificationModel = new ObjectClassificationModel()
    {
        ClassName = userName,
        RequestId = Guid.NewGuid(),
        ThresholdPercentage = 70
    };

    _ = QueueMessageAsync(objectClassificationModel, storageConnectionString);

    return View(objectClassificationModel);
}

public static async Task QueueMessageAsync(ObjectClassificationModel objectClassificationModel, string storageConnectionString)
{
    string requestContent = $"{objectClassificationModel.RequestId}|{objectClassificationModel.ClassName}|{objectClassificationModel.ThresholdPercentage.ToString()}";

    // Instantiate a QueueClient which will be used to create and manipulate the queue
    QueueClient queueClient = new QueueClient(storageConnectionString, queueName);

    // Create the queue
    var createdResponse = await queueClient.CreateIfNotExistsAsync();
    if (createdResponse != null)
    {
        Console.WriteLine($"Queue created: '{queueClient.Name}'");
    }

    await queueClient.SendMessageAsync(requestContent);
}

In the code snippet above, the first factor is simulated by comparing the username and password. After that, the code sends a message to an Azure Storage Queue using the new Azure SDK.
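The request placed on the queue is a pipe-delimited string of the form `RequestId|ClassName|ThresholdPercentage`. As a rough Python counterpart, the sketch below builds the same string and adds two sanity checks that are not in the original controller (they are assumptions about what a stricter producer might enforce). The `send_request` helper is illustrative: it uses `QueueClient.from_connection_string` and `send_message` from the `azure-storage-queue` package, imported lazily so the rest of the example runs without it.

```python
import uuid


def build_request_message(class_name, threshold_percentage, request_id=None):
    """Build the pipe-delimited request: RequestId|ClassName|ThresholdPercentage."""
    if "|" in class_name:
        raise ValueError("class name must not contain the '|' delimiter")
    if not 0 <= threshold_percentage <= 100:
        raise ValueError("threshold must be a percentage between 0 and 100")
    request_id = request_id or uuid.uuid4()
    return f"{request_id}|{class_name}|{threshold_percentage}"


def send_request(connection_string, queue_name, content):
    """Send the request text to a storage queue (needs azure-storage-queue)."""
    from azure.storage.queue import QueueClient

    queue_client = QueueClient.from_connection_string(connection_string, queue_name)
    queue_client.send_message(content)


message = build_request_message("hulk", 70)
print(message)
```

Rejecting a class name that contains the delimiter matters because the device side splits the message on `|` and reads the fields by position.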

ObjectClassificationController.cs

The ObjectClassificationController.cs is invoked after the custom AI code on the edge has completed. The code checks whether the request has been completed by the NVIDIA® Jetson Nano™ device and then shows the captured image of the detected object.

Here is the code snippet:

public IActionResult Index(string requestId, string className)
{
    string imageUri = string.Empty;
    Guid requestGuid = default(Guid);

    if (Guid.TryParse(requestId, out requestGuid))
    {
        BlobContainerClient blobContainerClient = new BlobContainerClient(storageConnectionString, containerName);

        // Look for the image that the device uploaded for this request
        foreach (BlobItem blobItem in blobContainerClient.GetBlobs(BlobTraits.All))
        {
            if (string.Equals(blobItem?.Name, $"{requestId}/{imageWithDetection}", StringComparison.InvariantCultureIgnoreCase))
            {
                imageUri = $"{blobContainerClient.Uri.AbsoluteUri}/{blobItem.Name}";
            }
        }

        ObjectClassificationModel objectClassificationModel = new ObjectClassificationModel()
        {
            ImageUri = new Uri(imageUri),
            RequestId = requestGuid,
            ClassName = className
        };

        return View(objectClassificationModel);
    }

    return View(null);
}

[HttpGet("HasImageUploaded")]
[Route("objectclassification/{imageContainerGuid}/hasimageuploaded")]
public async Task<IActionResult> HasImageUploaded(string imageContainerGuid)
{
    BlobContainerClient blobContainerClient = new BlobContainerClient(storageConnectionString, "jetson-nano-object-classification-responses");

    await foreach (BlobItem blobItem in blobContainerClient.GetBlobsAsync(BlobTraits.All))
    {
        if (string.Equals(blobItem?.Name, $"{imageContainerGuid}/{imageWithDetection}", StringComparison.InvariantCultureIgnoreCase))
        {
            // Controller.Json wraps the value in a JsonResult
            return Json($"{blobContainerClient.Uri.AbsoluteUri}/{blobItem.Name}");
        }
    }

    return Json(string.Empty);
}

The code snippet above shows two methods that use the new Azure SDK. The HasImageUploaded method queries Azure Storage Blob to find out whether the image has been uploaded. The Index method simply gets the image reference from Azure Storage Blob. For more information on how to read Azure Blob Storage using the new Azure SDK, visit Quickstart: Azure Blob Storage client library v12 for .NET.
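The lookup that both methods perform boils down to a case-insensitive comparison of each blob name against `<requestId>/<imageWithDetection>`. The sketch below shows that matching logic in Python over a plain list of blob names. The value of `imageWithDetection` is not shown in the controller, so `imageWithDetection.jpg` is assumed here because that is the file name the device code saves; the container URL in the usage lines is a made-up placeholder.

```python
def find_image_url(blob_names, container_url, request_id,
                   image_name="imageWithDetection.jpg"):
    """Return the URL of the captured image for request_id, or "" if absent."""
    expected = f"{request_id}/{image_name}".lower()
    for name in blob_names:
        if name.lower() == expected:
            return f"{container_url.rstrip('/')}/{name}"
    return ""


# Hypothetical container contents and URL, for illustration only.
names = [
    "1111/imageWithDetection.jpg",
    "2222/imagewithdetection.JPG",
]
container = "https://example.blob.core.windows.net/responses"

print(find_image_url(names, container, "2222"))
print(repr(find_image_url(names, container, "3333")))
```

Listing every blob on each poll gets slower as the container grows. If the Python `azure-storage-blob` package were used for this check, `ContainerClient.list_blobs(name_starts_with=...)` could restrict the listing to the request's prefix.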

The following steps are taken on the Authentication Front-end:

- User initiates login by supplying username and password.

- User is authenticated on the first factor using the combination of username and password.

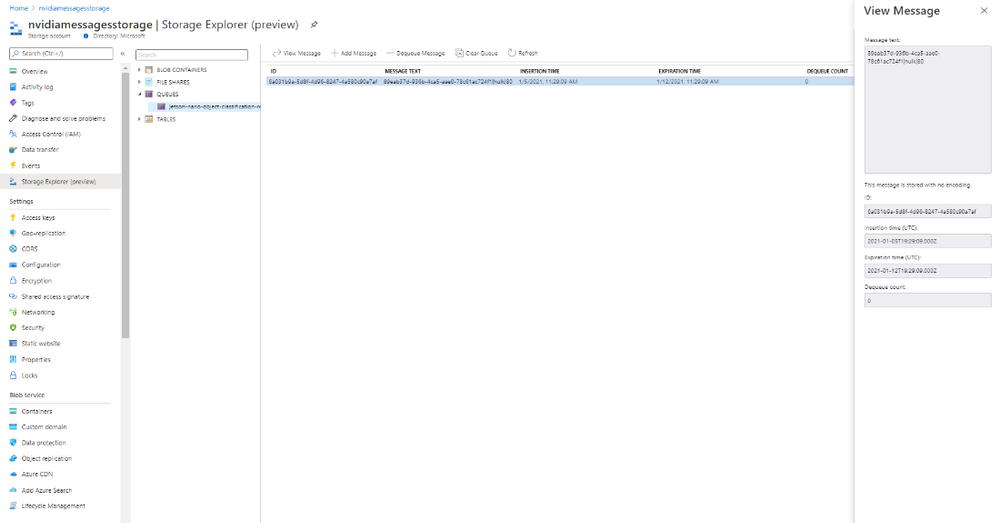

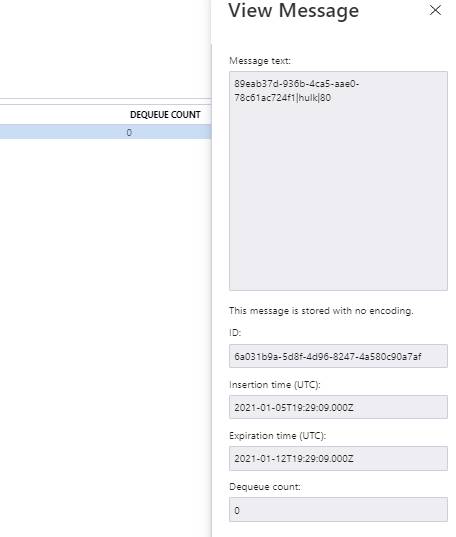

- On successful completion of the first factor, the web interface creates a request and sends that to Azure Storage as shown below:

- The NVIDIA® Jetson Nano™ device, which is listening to Azure Storage Queue, initiates the second factor and completes the second factor.

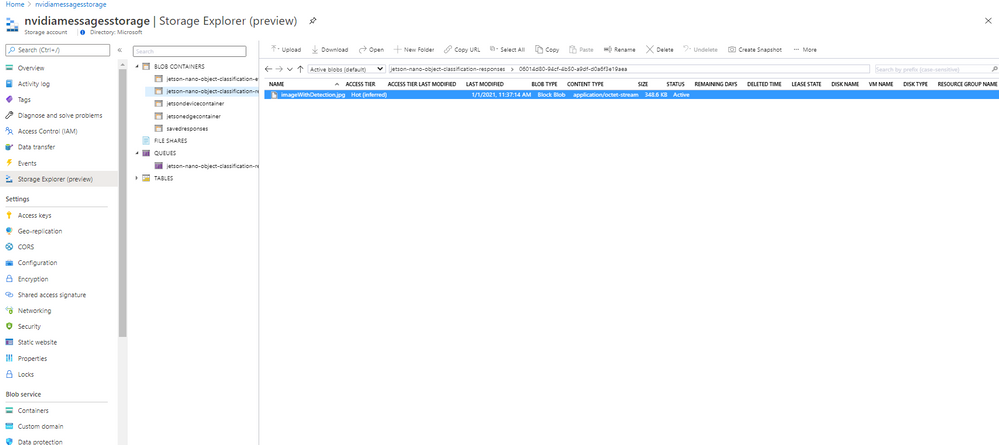

- Once the second factor is completed, the NVIDIA® Jetson Nano™ device posts the captured image for the second factor to Azure Storage Blob as shown below:

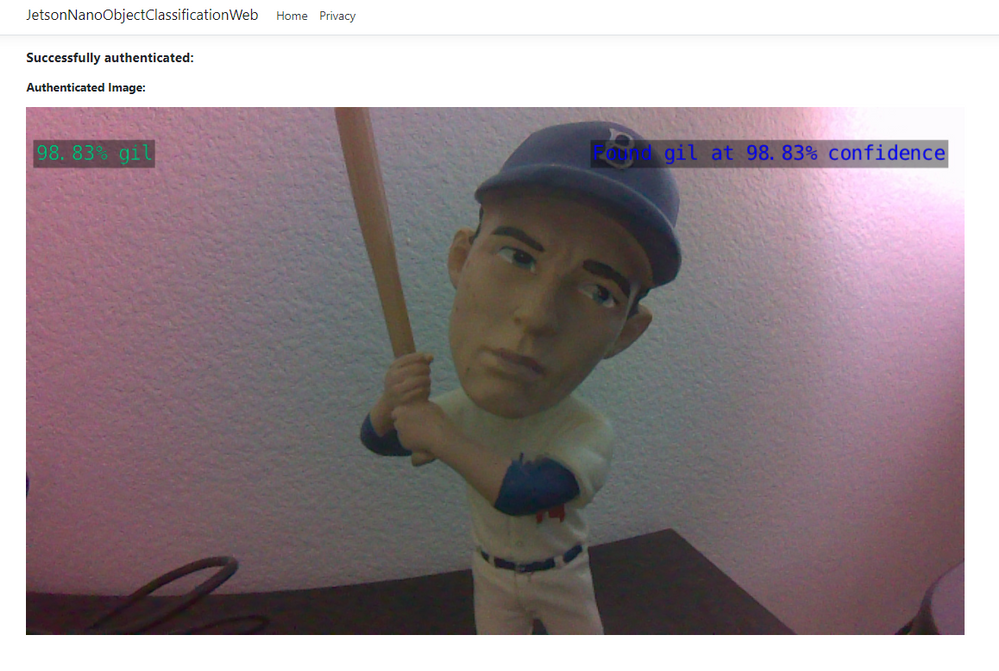

The web interface shows the captured image, completing the flow as shown below:

Running AI on the Edge

Device pre-requisites

- NVIDIA® Jetson Nano™ device with camera attached to capture video image.

- Custom pre-trained model deployed on the device.

- Location path to the custom model file (.onnx file). This information is passed as the --model parameter to the command mentioned in the Steps section. For this tutorial we have prepared a custom model and saved it as “~/gil_background_hulk/resnet18.onnx”.

- Location path to the classification text file (labels.txt). This information is passed as the --labels parameter to the command mentioned in the Steps section.

- Class name of the target object that needs to be detected. This is passed as the --classNameForTargetObject parameter.

- Azure IoT Hub libraries for Python. Install the azure-iot-device package for IoTHubDeviceClient.

pip3 install azure-iot-device
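The device code in the next section also imports `azure.storage.queue` and `azure.storage.blob`, and reads two environment variables, `STORAGE_CONNECTION_STRING` and `IOTHUB_DEVICE_CONNECTION_STRING`. Presumably that means the `azure-storage-queue` and `azure-storage-blob` packages need to be installed as well. A small preflight check like the sketch below (the helper names are illustrative, not part of any SDK) can catch a missing package or variable before the camera and model are loaded.

```python
import importlib.util
import os

REQUIRED_ENV_VARS = ("STORAGE_CONNECTION_STRING", "IOTHUB_DEVICE_CONNECTION_STRING")
REQUIRED_MODULES = ("azure.iot.device", "azure.storage.queue", "azure.storage.blob")


def missing_env_vars(environ=os.environ):
    """Return the required environment variables that are unset or empty."""
    return [name for name in REQUIRED_ENV_VARS if not environ.get(name)]


def missing_modules():
    """Return the required modules that cannot be found on this machine."""
    missing = []
    for module in REQUIRED_MODULES:
        try:
            found = importlib.util.find_spec(module) is not None
        except ModuleNotFoundError:
            # Raised when a parent package (for example "azure") is absent.
            found = False
        if not found:
            missing.append(module)
    return missing


if __name__ == "__main__":
    for name in missing_env_vars():
        print(f"Environment variable not set: {name}")
    for module in missing_modules():
        print(f"Python module not installed: {module}")
```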

Code running AI on the Edge

If we look at the technical specifications for the NVIDIA® Jetson Nano™ device, we will notice that it is based on the ARM architecture and runs Ubuntu (in my case, release 18.04 LTS). With that knowledge it became clear that Python would be a good choice of language for the device side. The device-side code is shown below:

#!/usr/bin/python

import jetson.inference
import jetson.utils

import argparse
import sys
import os
import asyncio

from azure.iot.device.aio import IoTHubDeviceClient
from azure.storage.queue.aio import QueueClient
from azure.storage.blob.aio import BlobServiceClient, BlobClient, ContainerClient


# A helper class to support async blob and queue actions.
class StorageHelperAsync:
    async def block_blob_upload_async(self, upload_path, savedFile):
        blob_service_client = BlobServiceClient.from_connection_string(
            os.getenv("STORAGE_CONNECTION_STRING")
        )
        container_name = "jetson-nano-object-classification-responses"

        async with blob_service_client:
            # Instantiate a new ContainerClient
            container_client = blob_service_client.get_container_client(container_name)

            # Instantiate a new BlobClient
            blob_client = container_client.get_blob_client(blob=upload_path)

            # Upload content to block blob
            with open(savedFile, "rb") as data:
                await blob_client.upload_blob(data)
            # [END upload_a_blob]

    # Code for listening to Storage queue
    async def queue_receive_message_async(self):
        # from azure.storage.queue.aio import QueueClient
        queue_client = QueueClient.from_connection_string(
            os.getenv("STORAGE_CONNECTION_STRING"),
            "jetson-nano-object-classification-requests",
        )

        async with queue_client:
            response = queue_client.receive_messages(messages_per_page=1)
            async for message in response:
                queue_message = message
                await queue_client.delete_message(message)
                # Return the first message; an empty queue falls through and returns None
                return queue_message


async def main():
    # Code for object detection
    # parse the command line
    parser = argparse.ArgumentParser(
        description="Classifying an object from a live camera feed and once successfully classified a message is sent to Azure IoT Hub",
        formatter_class=argparse.RawTextHelpFormatter,
        epilog=jetson.inference.imageNet.Usage(),
    )
    parser.add_argument(
        "input_URI", type=str, default="", nargs="?", help="URI of the input stream"
    )
    parser.add_argument(
        "output_URI", type=str, default="", nargs="?", help="URI of the output stream"
    )
    parser.add_argument(
        "--network",
        type=str,
        default="googlenet",
        help="Pre-trained model to load (see below for options)",
    )
    parser.add_argument(
        "--camera",
        type=str,
        default="0",
        help="Index of the MIPI CSI camera to use (e.g. CSI camera 0)\nor for V4L2 cameras, the /dev/video device to use.\nby default, MIPI CSI camera 0 will be used.",
    )
    parser.add_argument(
        "--width",
        type=int,
        default=1280,
        help="Desired width of camera stream (default is 1280 pixels)",
    )
    parser.add_argument(
        "--height",
        type=int,
        default=720,
        help="Desired height of camera stream (default is 720 pixels)",
    )
    parser.add_argument(
        "--classNameForTargetObject",
        type=str,
        default="",
        help="Class name of the object that is required to be detected. Once object is detected and threshold limit has crossed, the message would be sent to Azure IoT Hub",
    )
    parser.add_argument(
        "--detectionThreshold",
        type=int,
        default=90,
        help="The threshold value 'in percentage' for object detection",
    )

    try:
        opt = parser.parse_known_args()[0]
    except:
        parser.print_help()
        sys.exit(0)

    # load the recognition network
    net = jetson.inference.imageNet(opt.network, sys.argv)

    # create the camera and display
    font = jetson.utils.cudaFont()
    camera = jetson.utils.gstCamera(opt.width, opt.height, opt.camera)
    display = jetson.utils.glDisplay()
    input = jetson.utils.videoSource(opt.input_URI, argv=sys.argv)

    # Fetch the connection string from an environment variable
    conn_str = os.getenv("IOTHUB_DEVICE_CONNECTION_STRING")
    device_client = IoTHubDeviceClient.create_from_connection_string(conn_str)
    await device_client.connect()

    counter = 1
    still_looking = True

    # process frames until user exits
    while still_looking:
        storage_helper = StorageHelperAsync()
        queue_message = await storage_helper.queue_receive_message_async()
        print("Waiting for request queue_messages")
        print(queue_message)

        if queue_message:
            has_new_message = True
            # Message format: correlation id | class name | threshold percentage
            queue_message_array = queue_message.content.split("|")
            request_content = queue_message.content
            correlation_id = queue_message_array[0]
            class_for_object_detection = queue_message_array[1]
            threshold_for_object_detection = int(queue_message_array[2])

            while has_new_message:
                # capture the image
                # img, width, height = camera.CaptureRGBA()
                img = input.Capture()

                # classify the image
                class_idx, confidence = net.Classify(img)

                # find the object description
                class_desc = net.GetClassDesc(class_idx)

                # overlay the result on the image
                font.OverlayText(
                    img,
                    img.width,
                    img.height,
                    "{:05.2f}% {:s}".format(confidence * 100, class_desc),
                    15,
                    50,
                    font.Green,
                    font.Gray40,
                )

                # render the image
                display.RenderOnce(img, img.width, img.height)

                # update the title bar
                display.SetTitle(
                    "{:s} | Network {:.0f} FPS | Looking for {:s}".format(
                        net.GetNetworkName(),
                        net.GetNetworkFPS(),
                        opt.classNameForTargetObject,
                    )
                )

                # print out performance info
                net.PrintProfilerTimes()

                if (
                    class_desc == class_for_object_detection
                    and (confidence * 100) >= threshold_for_object_detection
                ):
                    message = request_content + "|" + str(confidence * 100)
                    font.OverlayText(
                        img,
                        img.width,
                        img.height,
                        "Found {:s} at {:05.2f}% confidence".format(
                            class_desc, confidence * 100
                        ),
                        775,
                        50,
                        font.Blue,
                        font.Gray40,
                    )
                    display.RenderOnce(img, img.width, img.height)

                    savedFile = "imageWithDetection.jpg"
                    jetson.utils.saveImageRGBA(savedFile, img, img.width, img.height)

                    # Create the BlobServiceClient object which will be used to create a container client
                    blob_service_client = BlobServiceClient.from_connection_string(
                        os.getenv("STORAGE_CONNECTION_STRING")
                    )
                    container_name = "jetson-nano-object-classification-responses"

                    # Create a blob client using the local file name as the name for the blob
                    folderMark = "/"
                    upload_path = folderMark.join([correlation_id, savedFile])

                    await storage_helper.block_blob_upload_async(upload_path, savedFile)
                    await device_client.send_message(message)

                    still_looking = True
                    has_new_message = False

    await device_client.disconnect()


if __name__ == "__main__":
    # asyncio.run(main())
    loop = asyncio.get_event_loop()
    loop.run_until_complete(main())
    loop.close()

Here is the link for code to try out: https://gist.github.com/nabeelmsft/f079065d98d39f271b205b71bc8c48bc

Here is the command line to execute the code:

export DATASET=~/IoT/datasets/gil_background_hulk

python3 detect-post-object.py --model=gil_background_hulk/resnet18.onnx --input_blob=input_0 --output_blob=output_0 --labels=$DATASET/labels.txt --camera=csi://0 --classNameForTargetObject=hulk --detectionThreshold=95

Code flow

The following actions take place in the Python code running on the device side:

- The device code constantly reads requests arriving on the Azure Storage Queue.

- Once a request is received, the code extracts which object to detect and what threshold to use for object detection. The example mentioned in the diagram shows the message as: 0000-0000-0000-0000|hulk|80. The code will extract “hulk” as the object that needs to be detected and “80” as the threshold value. This format is just an example that is used to provide input values to the device-side code.

- Using the custom AI model (example: ~/gil_background_hulk/resnet18.onnx) running on the Jetson Nano device, the object is searched for based on the request.

- As soon as the object is detected, the Python code running on the Jetson Nano device posts the captured image to Azure Blob storage.

- In addition, the code running on the Jetson Nano device sends a message to Azure IoT Hub reporting the correct match for the request.
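The request parsing and the detection check described above can be isolated into two small functions. This is a sketch rather than the code used on the device: `DetectionRequest`, `parse_request`, and `is_match` are illustrative names, and the field-count check is an addition that the device code does not perform.

```python
from typing import NamedTuple


class DetectionRequest(NamedTuple):
    correlation_id: str
    class_name: str
    threshold: int  # percentage, 0..100


def parse_request(content):
    """Parse 'correlation_id|class_name|threshold' into a DetectionRequest."""
    parts = content.split("|")
    if len(parts) != 3:
        raise ValueError(f"expected 3 '|'-separated fields, got {len(parts)}")
    correlation_id, class_name, threshold = parts
    return DetectionRequest(correlation_id, class_name, int(threshold))


def is_match(request, class_desc, confidence):
    """True when the classifier result satisfies the request.

    confidence is the 0..1 score returned by the classifier.
    """
    return class_desc == request.class_name and confidence * 100 >= request.threshold


request = parse_request("0000-0000-0000-0000|hulk|80")
print(request)
print(is_match(request, "hulk", 0.85))
print(is_match(request, "hulk", 0.75))
```

Keeping these steps free of camera and network calls means they can be tested on any machine, without a Jetson Nano attached.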

Once the device side code completes the flow, the object detected image is posted to Azure Storage blob along with posting to Azure IoT Hub. The web interface then takes control and completes the rest of the steps.
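The hand-off back to the web interface depends on two strings the device produces: the blob path, which the front-end later looks up by request ID, and the IoT Hub message, which is the original request with the confidence appended. The sketch below reproduces both with illustrative helper names and the same string operations the device code uses.

```python
def build_upload_path(correlation_id, saved_file="imageWithDetection.jpg"):
    """Blob path written by the device: <correlation_id>/<saved_file>."""
    return "/".join([correlation_id, saved_file])


def build_hub_message(request_content, confidence):
    """IoT Hub payload: the original request plus the confidence percentage."""
    return request_content + "|" + str(confidence * 100)


print(build_upload_path("0000-0000-0000-0000"))
print(build_hub_message("0000-0000-0000-0000|hulk|80", 0.5))
```

Because `str(confidence * 100)` prints the raw floating-point value, a consumer of the IoT Hub message may find a fixed format such as `"{:.2f}".format(confidence * 100)` easier to parse. That would be a change from the device code shown in this post.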

Conclusion

In this post we have seen how simple it is to run AI on the edge using an NVIDIA® Jetson Nano™ device together with the Azure platform. The Azure SDKs are designed to work well with Python on Linux-based IoT devices. We have also seen how the Azure SDK stitches different components together into a complete end-to-end solution.
