by Contributed | May 12, 2023 | Technology

This article is contributed. See the original author and article here.

OpenFOAM (Open Field Operation and Manipulation) is an open-source computational fluid dynamics (CFD) software package. It provides a comprehensive set of tools for simulating and analyzing complex fluid flow and heat transfer phenomena. It is widely used in academia and industry for a range of applications, such as aerodynamics, hydrodynamics, chemical engineering, environmental simulations, and more.

Azure offers services like Azure Batch and Azure CycleCloud that can help individuals or organizations run OpenFOAM simulations effectively and efficiently. Both services allow users to create and manage clusters of VMs, enabling parallel processing and scaling of OpenFOAM simulations. CycleCloud provides an experience similar to on-premises thanks to its support for common schedulers like OpenPBS or SLURM, while Azure Batch provides a cloud-native resource scheduler that simplifies the configuration, maintenance, and support of the required infrastructure.

This article is a step-by-step guide to a minimal Azure Batch setup for running OpenFOAM simulations. Further analysis should be performed to identify the right sizing in terms of both compute and storage. A previous article on How to identify the recommended VM for your HPC workloads could be helpful.

Step 1: Provisioning required infrastructure

To get started, create a new Azure Batch account. A pool, job, or task is not required at this point. In this scenario, the pool allocation method is configured as “User Subscription” and public network access is set to “All Networks”.

Shared storage across all nodes is also required to share the input model and store the outputs. This guide uses an Azure Files NFS share. Alternatives like Azure NetApp Files or Azure Managed Lustre could also be an option based on your scalability and performance needs.

Step 2: Customizing the virtual machine image

OpenFOAM provides pre-compiled binaries packaged for Ubuntu that can be installed through its official APT repositories. If Ubuntu is your distribution of choice, you can follow the official documentation on how to install it; a pool start task is a good way to do so. As an alternative, you can create a custom image with everything pre-configured.

This article covers the second option, using CentOS 7.9 as the base image to show the end-to-end configuration and compilation of the software from source code. To simplify the process, it relies on the available HPC images, which come with the required prerequisites already installed. The reference URN for those images is: OpenLogic:CentOS-HPC:s7_9-gen2:latest. The VM SKU used both to create the custom image and to run the simulations is HBv3.

Start the configuration by creating a new VM. After the VM is up and running, execute the following script to download and compile the OpenFOAM source code.

## Downloading OpenFoam

sudo mkdir /openfoam

sudo chmod 777 /openfoam

cd /openfoam

wget https://dl.openfoam.com/source/v2212/OpenFOAM-v2212.tgz

wget https://dl.openfoam.com/source/v2212/ThirdParty-v2212.tgz

tar -xf OpenFOAM-v2212.tgz

tar -xf ThirdParty-v2212.tgz

module load mpi/openmpi

module load gcc-9.2.0

## OpenFOAM requires CMake 3. CentOS 7.9 comes with an earlier version.

sudo yum install epel-release.noarch -y

sudo yum install cmake3 -y

sudo yum remove cmake -y

sudo ln -s /usr/bin/cmake3 /usr/bin/cmake

source OpenFOAM-v2212/etc/bashrc

foamSystemCheck

cd OpenFOAM-v2212/

./Allwmake -j -s -q -l

The last command compiles with all cores (-j), reduced output (-s, -silent), with queuing (-q, -queue) and logs (-l, -log) the output to a file for later inspection. After the initial compilation, review the output log or re-run the last command to make sure that everything was compiled without errors. Output is so verbose that errors could be missed in a quick review of the logs.
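Because the build output is so verbose, a small filter can help with that review. The following is a minimal sketch in Python and is not part of the original walkthrough. The function name `find_error_lines` is hypothetical, and the patterns it searches for are assumptions about what a failed compiler or make step typically prints, not an exhaustive list of OpenFOAM build errors.

```python
import re

# Assumed failure markers: compiler "error", make's "***", and linker
# "undefined reference". Adjust to what your toolchain actually prints.
ERROR_PATTERN = re.compile(r"(\berror\b|\*\*\*|undefined reference)", re.IGNORECASE)


def find_error_lines(log_text):
    """Return (line_number, line) pairs that look like build errors."""
    hits = []
    for number, line in enumerate(log_text.splitlines(), start=1):
        if ERROR_PATTERN.search(line):
            hits.append((number, line.strip()))
    return hits


# Small illustrative sample; a real run would read the build log from disk.
sample_log = (
    "wmake libso foo\n"
    "foo.C:10:5: error: 'x' was not declared in this scope\n"
    "make: *** [foo.o] Error 1\n"
    "ld: warning only\n"
)
for number, line in find_error_lines(sample_log):
    print(f"{number}: {line}")
```

To use it on a real build, read the log file written by the compilation step into a string and pass it to `find_error_lines`; an empty result is a good sign but does not replace re-running the build command.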

It would take a while before the compilation process finishes. After that, you can delete the installers and any other folder not required in your scenario and capture the image into a Shared Image Gallery.

Step 3. Batch pool configuration

Add a new pool to your previously created Azure Batch account. You can create the pool using the standard wizard (Add) and filling in the required fields with the values from the following JSON, or you can copy and paste this file into Add (JSON editor).

Make sure you customize the properties that are left empty in the JSON ("id", "source", and "subnetId").

{

"properties": {

"vmSize": "STANDARD_HB120rs_V3",

"interNodeCommunication": "Enabled",

"taskSlotsPerNode": 1,

"taskSchedulingPolicy": {

"nodeFillType": "Pack"

},

"deploymentConfiguration": {

"virtualMachineConfiguration": {

"imageReference": {

"id": ""

},

"nodeAgentSkuId": "batch.node.centos 7",

"nodePlacementConfiguration": {

"policy": "Regional"

}

}

},

"mountConfiguration": [

{

"nfsMountConfiguration": {

"source": "",

"relativeMountPath": "data",

"mountOptions": "-o vers=4,minorversion=1,sec=sys"

}

}

],

"networkConfiguration": {

"subnetId": "",

"publicIPAddressConfiguration": {

"provision": "BatchManaged"

}

},

"scaleSettings": {

"fixedScale": {

"targetDedicatedNodes": 0,

"targetLowPriorityNodes": 0,

"resizeTimeout": "PT15M"

}

},

"targetNodeCommunicationMode": "Simplified"

}

}
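The pool definition above leaves three string values empty (the image `id`, the NFS `source`, and the `subnetId`), and they must be filled in before the pool is created. As a hedged illustration, the Python sketch below walks a JSON document and reports any property that is still an empty string. The helper name `find_empty_values` is hypothetical, and the embedded JSON is a trimmed-down copy of the definition used only for demonstration.

```python
import json


def find_empty_values(node, path=""):
    """Recursively collect the paths of string properties that are still empty."""
    empty = []
    if isinstance(node, dict):
        for key, value in node.items():
            empty.extend(find_empty_values(value, f"{path}.{key}" if path else key))
    elif isinstance(node, list):
        for index, value in enumerate(node):
            empty.extend(find_empty_values(value, f"{path}[{index}]"))
    elif node == "":
        empty.append(path)
    return empty


# Trimmed-down version of the pool definition, for illustration only.
pool = json.loads("""
{
  "properties": {
    "vmSize": "STANDARD_HB120rs_V3",
    "deploymentConfiguration": {
      "virtualMachineConfiguration": {"imageReference": {"id": ""}}
    },
    "mountConfiguration": [
      {"nfsMountConfiguration": {"source": "", "relativeMountPath": "data"}}
    ],
    "networkConfiguration": {"subnetId": ""}
  }
}
""")

for missing in find_empty_values(pool):
    print("still empty:", missing)
```

Running the same check on your customized file before pasting it into the JSON editor should print nothing.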

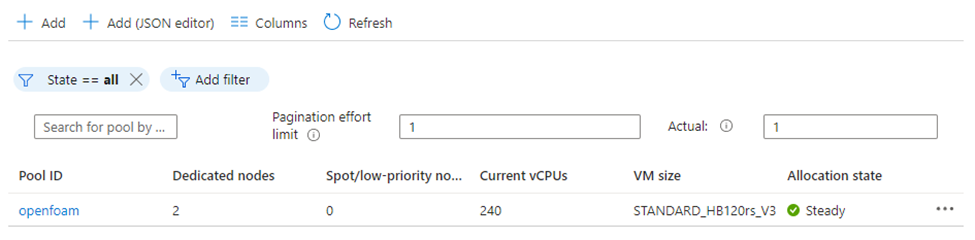

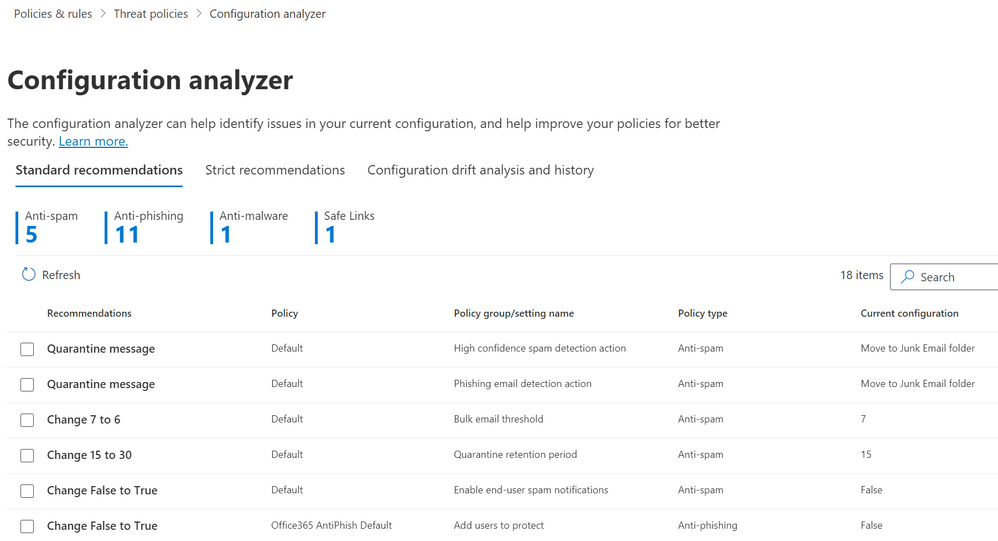

Wait till the pool is created and the nodes are available to accept new tasks. Your pool view should look similar to the following image.

Step 4. Batch Job Configuration

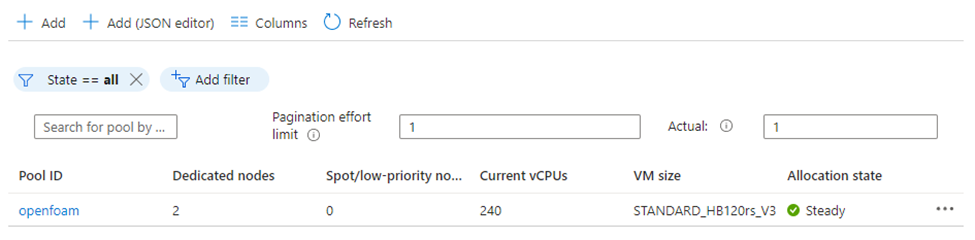

Once the pool allocation state value is “Ready”, continue with the next step: create a new job. The default configuration is enough in this case. Here the job is called “flange” because it uses the flange example from the OpenFOAM tutorials.

Step 5. Task Pool Configuration

Once the job state value changes to “Active”, it is ready to admit new tasks. You can create a new task using the standard wizard (Add) and filling in the required fields with the values from the following JSON, or you can copy and paste this file into Add (JSON editor).

Make sure you customize the properties that are left empty in the JSON (the task "id").

{

"id": "",

"commandLine": "/bin/bash -c '$AZ_BATCH_NODE_MOUNTS_DIR/data/init.sh'",

"resourceFiles": [],

"environmentSettings": [],

"userIdentity": {

"autoUser": {

"scope": "pool",

"elevationLevel": "nonadmin"

}

},

"multiInstanceSettings": {

"numberOfInstances": 2,

"coordinationCommandLine": "echo \"Coordination completed!\"",

"commonResourceFiles": []

}

}

The task’s commandLine parameter is configured to execute a Bash script stored in the Azure Files share, which Batch mounts automatically at ‘$AZ_BATCH_NODE_MOUNTS_DIR/data’. First copy the following scripts and the flange example mentioned above into a folder called flange inside that directory.

Command Line Task Script

This script configures the environment variables and pre-processes the input files before launching the mpirun command to execute the solver in parallel across all the available nodes: in this case, 2 nodes with 120 cores each, 240 cores in total.

#! /bin/bash

source /etc/profile.d/modules.sh

module load mpi/openmpi

# Azure Files is mounted automatically in this directory based on the pool configuration

DATA_DIR="$AZ_BATCH_NODE_MOUNTS_DIR/data"

# OpenFoam was installed on this folder

OF_DIR="/openfoam/OpenFOAM-v2212"

# The case folder is created and the input data is extracted there.

CASE_DIR="$DATA_DIR/flange"

mkdir -p "$CASE_DIR"

unzip -o "$DATA_DIR/flange.zip" -d "$CASE_DIR"

# Configures OpenFOAM environment

source "$OF_DIR/etc/bashrc"

source "$OF_DIR/bin/tools/RunFunctions"

# Preprocessing of the files

cd "$CASE_DIR"

runApplication ansysToFoam "$OF_DIR/tutorials/resources/geometry/flange.ans" -scale 0.001

runApplication decomposePar

# Configure the host file

echo "$AZ_BATCH_HOST_LIST" | tr "," "\n" > hostfile

sed -i 's/$/ slots=120/g' hostfile

# Launching the secondary script to perform the parallel computation.

mpirun -np 240 --hostfile hostfile "$DATA_DIR/run.sh" > solver.log
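The host file step in the script above turns the comma-separated list in AZ_BATCH_HOST_LIST into one line per node with a slot count, and the total number of MPI ranks is the node count times the slots per node. The same logic is sketched below in Python purely as an illustration. The function name `build_hostfile` and the sample addresses are hypothetical.

```python
def build_hostfile(host_list, slots_per_node):
    """Turn a comma-separated host list into hostfile lines plus the total rank count."""
    hosts = [host.strip() for host in host_list.split(",") if host.strip()]
    lines = [f"{host} slots={slots_per_node}" for host in hosts]
    return "\n".join(lines) + "\n", len(hosts) * slots_per_node


# Hypothetical addresses; on a real node the value comes from AZ_BATCH_HOST_LIST.
content, total_ranks = build_hostfile("10.0.0.4,10.0.0.5", 120)
print(content, end="")
print("mpirun -np", total_ranks)
```

With two nodes and 120 slots each, the computed rank count matches the 240 passed to mpirun in the script. Deriving it this way avoids editing the number by hand when the pool size changes.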

Mpirun Processing Script

This script is launched by mpirun on every available node. It configures the environment variables and folders the solver needs to access. If the solver is invoked directly in the mpirun command instead of through this script, only the primary task node has the right configuration applied, and the rest of the nodes fail with file-not-found errors.

#! /bin/bash

source /etc/profile.d/modules.sh

module load gcc-9.2.0

module load mpi/openmpi

DATA_DIR="$AZ_BATCH_NODE_MOUNTS_DIR/data"

OF_DIR="/openfoam/OpenFOAM-v2212"

source "$OF_DIR/etc/bashrc"

source "$OF_DIR/bin/tools/RunFunctions"

# Execute the code across the nodes.

laplacianFoam -parallel > solver.log

Step 6. Checking the results

The mpirun output is redirected to a file called solver.log in the directory where the model is stored inside the Azure Files share. The first lines of the log confirm that the execution started properly and is running on two HBv3 nodes with 240 processes.

/*---------------------------------------------------------------------------*\

| =========                 |                                                 |

| \\      /  F ield         | OpenFOAM: The Open Source CFD Toolbox           |

|  \\    /   O peration     | Version:  2212                                  |

|   \\  /    A nd           | Website:  www.openfoam.com                      |

|    \\/     M anipulation  |                                                 |

\*---------------------------------------------------------------------------*/

Build : _66908158ae-20221220 OPENFOAM=2212 version=v2212

Arch : "LSB;label=32;scalar=64"

Exec : laplacianFoam -parallel

Date : May 04 2023

Time : 15:01:56

Host : 964d5ce08c1d4a7b980b127ca57290ab000000

PID : 67742

I/O : uncollated

Case : /mnt/resource/batch/tasks/fsmounts/data/flange

nProcs : 240

Hosts :

(

(964d5ce08c1d4a7b980b127ca57290ab000000 120)

(964d5ce08c1d4a7b980b127ca57290ab000001 120)

)
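The same check can be automated. The Python sketch below is a rough illustration that assumes the header layout shown above (an `nProcs` line followed by one `(host count)` line per node). The function name `parse_header` is hypothetical, and the sample text is copied from the log excerpt.

```python
import re


def parse_header(log_text):
    """Extract nProcs and the (host, process count) pairs from a solver log header."""
    nprocs_match = re.search(r"^nProcs\s*:\s*(\d+)", log_text, re.MULTILINE)
    nprocs = int(nprocs_match.group(1)) if nprocs_match else None
    hosts = [
        (name, int(count))
        for name, count in re.findall(
            r"^[ \t]*\((\S+)[ \t]+(\d+)\)[ \t]*$", log_text, re.MULTILINE
        )
    ]
    return nprocs, hosts


sample = """nProcs : 240
Hosts :
(
(964d5ce08c1d4a7b980b127ca57290ab000000 120)
(964d5ce08c1d4a7b980b127ca57290ab000001 120)
)
"""
nprocs, hosts = parse_header(sample)
# The per-host counts should add up to the total number of processes.
print(nprocs, len(hosts), sum(count for _, count in hosts) == nprocs)
```

If the per-host counts do not add up to `nProcs`, or fewer hosts are listed than nodes in the pool, the host file or the mpirun arguments are the first things to check.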

Conclusion

By leveraging Azure Batch’s scalability and flexible infrastructure, you can run OpenFOAM simulations at scale, achieving faster time-to-results and increased productivity. This guide demonstrated the process of configuring Azure Batch, customizing the CentOS 7.9 image, installing dependencies, compiling OpenFOAM, and running simulations efficiently on Azure Batch. With Azure’s powerful capabilities, researchers and engineers can unleash the full potential of OpenFOAM in the cloud.

by Contributed | May 11, 2023 | Technology


A spear phishing campaign is a type of attack where phishing emails are tailored to a specific organization, a department within it, or even a specific person. Spear phishing is by definition a targeted attack and relies on preliminary reconnaissance, so attackers are ready to spend more time and resources to achieve their goals. In this blog post, we will discuss steps that can be taken to respond to such a malicious mailing campaign using Microsoft 365 Defender.

What makes phishing “spear”

Some of the attributes of such attacks are:

- Using the local language for the subject, body, and sender’s name to make it harder for users to identify the email as phishing.

- Email topics correspond to the recipient’s responsibilities in the organization, e.g., sending invoices and expense reports to the finance department.

- Using real compromised mail accounts for sending phishing emails to successfully pass email domain authentication (SPF, DKIM, DMARC).

- Using a large number of distributed mail addresses to avoid bulk mail detections.

- Using various methods to make it difficult for automated scanners to reach malicious content, such as encrypted ZIP-archives or using CAPTCHA on phishing websites.

- Using polymorphic malware with varying attachment names to complicate detection and blocking.

In addition to the reasons listed above, misconfigured mail filtering or transport rules can also lead to malicious emails reaching users’ inboxes, where some of them may eventually be executed.

Understand the scope of attack

After receiving the first user reports or endpoint alerts, we need to understand the scope of the attack to provide an adequate response. To do so, we need to try to answer the following questions:

- How many users are affected? Is there anything common between those users?

- Is there anything shared across already identified malicious emails, e.g. mail subject, sender address, attachment names, sender domain, sender mail server IP address?

- Are there similar emails delivered to other users within the same timeframe?

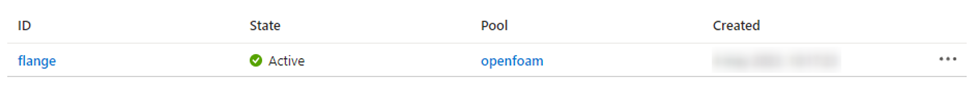

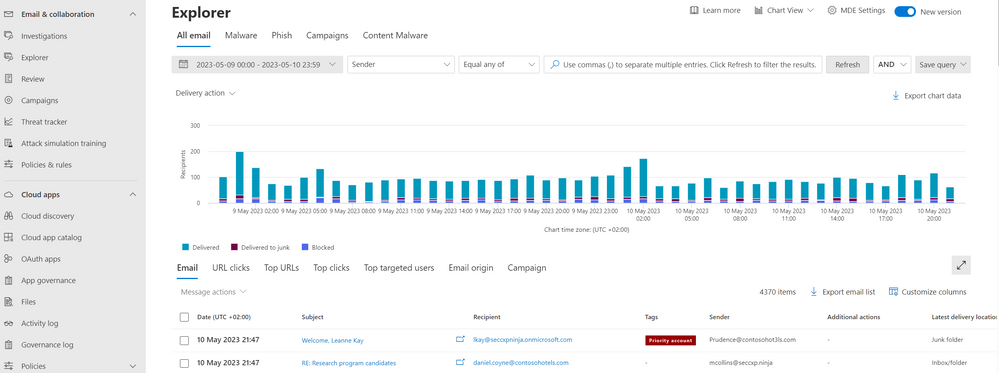

Basic hunting will need to be done at this point, starting with the information we have on the reported malicious email. Luckily, Microsoft 365 Defender provides extensive tools to do that. For those who prefer an interactive UI, Threat Explorer is an ideal place to start.

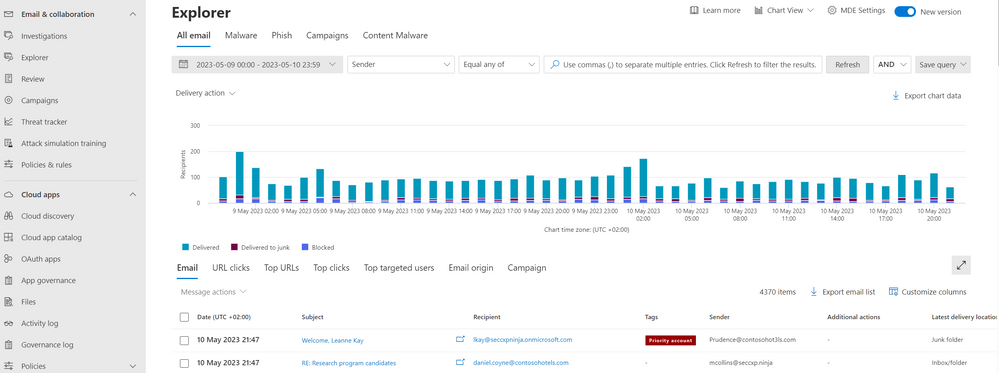

Figure 1: Threat Explorer user interface


Using the filter at the top, identify the reported email and try to locate similar emails sent to your organization with the same parameters, such as links, sender addresses/domains, or attachments.

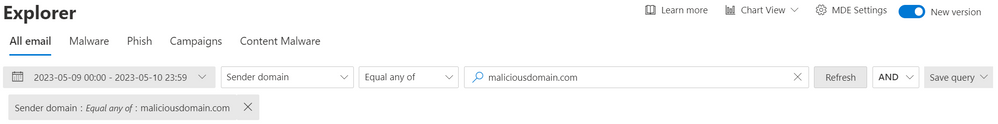

Figure 2: Sample mail filter query in Threat Explorer


For even more flexibility, the Advanced Hunting feature can be used to search for similar emails in the environment. There are five tables in the Advanced Hunting schema that contain email-related data:

- EmailEvents – contains general information about events involving the processing of emails.

- EmailAttachmentInfo – contains information about email attachments.

- EmailUrlInfo – contains information about URLs on emails and attachments.

- EmailPostDeliveryEvents – contains information about post-delivery actions taken on email messages.

- UrlClickEvents – contains information about Safe Links clicks from email messages

For our purposes we will be interested in the first three tables and can start with simple queries such as the one below:

EmailAttachmentInfo

| where Timestamp > ago(4h)

| where FileType == "zip"

| where SenderFromAddress has_any (".br", ".ru", ".jp")

This sample query shows all emails with ZIP attachments received from senders in the same list of TLDs as the identified malicious email, which are TLDs associated with countries where your organization does not operate. In a similar way, we can hunt for any other attributes associated with malicious emails.
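When the same hunt has to be repeated with different time windows, file types, or sender terms, the query text can be generated instead of edited by hand. The Python sketch below only assembles the text of the query shown above. It does not call any Microsoft API. The function name `build_attachment_query` is hypothetical, and it assumes its inputs are trusted values that contain no double quotes.

```python
def build_attachment_query(hours, file_type, sender_terms):
    """Compose the hunting query text from its three variable parts."""
    quoted = ", ".join(f'"{term}"' for term in sender_terms)
    return "\n".join([
        "EmailAttachmentInfo",
        f"| where Timestamp > ago({hours}h)",
        f'| where FileType == "{file_type}"',
        f"| where SenderFromAddress has_any ({quoted})",
    ])


# Reproduces the sample query from the text.
print(build_attachment_query(4, "zip", [".br", ".ru", ".jp"]))
```

The generated text can then be pasted into the Advanced Hunting query editor.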

Check mail delivery and mail filtering settings

Once we have some understanding of what the attack looks like, we need to ensure that these emails are not being delivered to user inboxes because of a misconfiguration in mail filtering settings.

Check custom delivery rules

For every mail delivered to your organization, Defender for Office 365 provides delivery details, including raw message headers. Continuing from the previous section, whether you used Threat Explorer or Advanced Hunting, you can select an email item and click the Open email entity button to pivot to the email entity page. There you can view all the message delivery details, including any potential delivery overrides, such as safe lists or Exchange transport rules.

Figure 3: Sample email with delivery override by user’s safe senders list


It might be the case that an email was properly detected as suspicious but was still delivered to the mailbox due to an override, as in the figure above, where the sender is on the user’s Safe Senders list. Other delivery override types are:

- Allow entries for domains and email addresses (including spoofed senders) in the Tenant Allow/Block List.

- Mail flow rules (also known as transport rules).

- Outlook Safe Senders (the Safe Senders list that’s stored in each mailbox that affects only that mailbox).

- IP Allow List (connection filtering)

- Allowed sender lists or allowed domain lists (anti-spam policies)

If a delivery override has been identified, it should be removed accordingly. The good news is that messages identified as malware or high-confidence phishing are always quarantined, regardless of the safe sender list option in use.

Check phishing mail header for on-prem environment

One more reason for malicious emails to be delivered to users’ inboxes can be found in hybrid Exchange deployments, where on-premises Exchange environment is not configured to handle phishing mail header appended by Exchange Online Protection.

Check threat policies settings

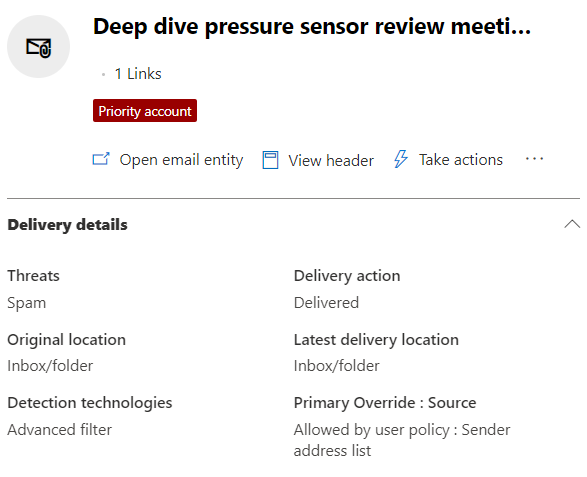

If no specific overrides were identified, it is always a good idea to double-check the mail filtering settings in your tenant. The easiest way to do that is to use the configuration analyzer, which can be found in Email & Collaboration > Policies & Rules > Threat policies > Configuration analyzer:

Figure 4: Defender for Office 365 Configuration analyzer


Configuration analyzer will quickly help to identify any existing misconfigurations compared to recommended security baselines.

Make sure that Zero-hour auto purge is enabled

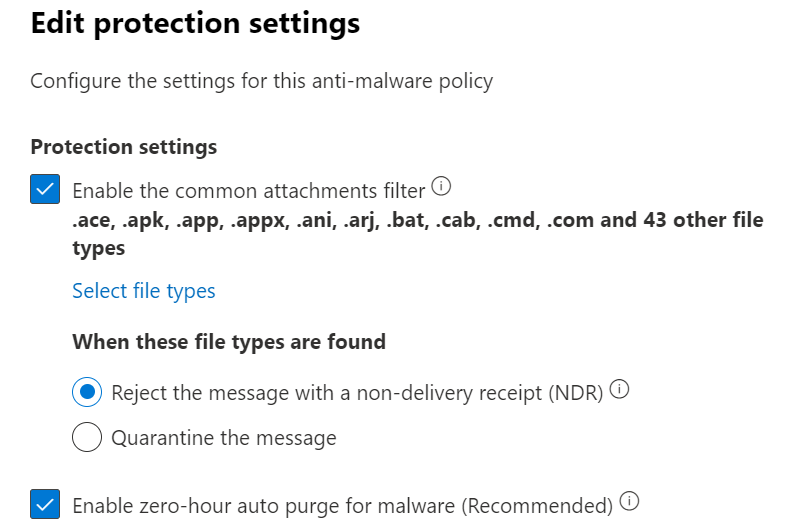

In Exchange Online mailboxes and in Microsoft Teams (currently in preview), zero-hour auto purge (ZAP) is a protection feature that retroactively detects and neutralizes malicious phishing, spam, or malware messages that have already been delivered to Exchange Online mailboxes or over Teams chat, which fits the scenario discussed here exactly. This setting for email with malware can be found in Email & Collaboration > Policies & rules > Threat policies > Anti-malware. A similar setting for spam and phishing messages is located under Anti-spam policies. It is important to note that ZAP doesn’t work for on-premises Exchange mailboxes.

Figure 5: Zero-hour auto purge configuration setting in Anti-malware policy


Performing response steps

Once we have identified malicious emails and confirmed that all the mail filtering settings are in order, but emails are still coming through to users’ inboxes (see the introduction part of this article for reasons for such behavior), it is time for manual response steps:

Report false negatives to Microsoft

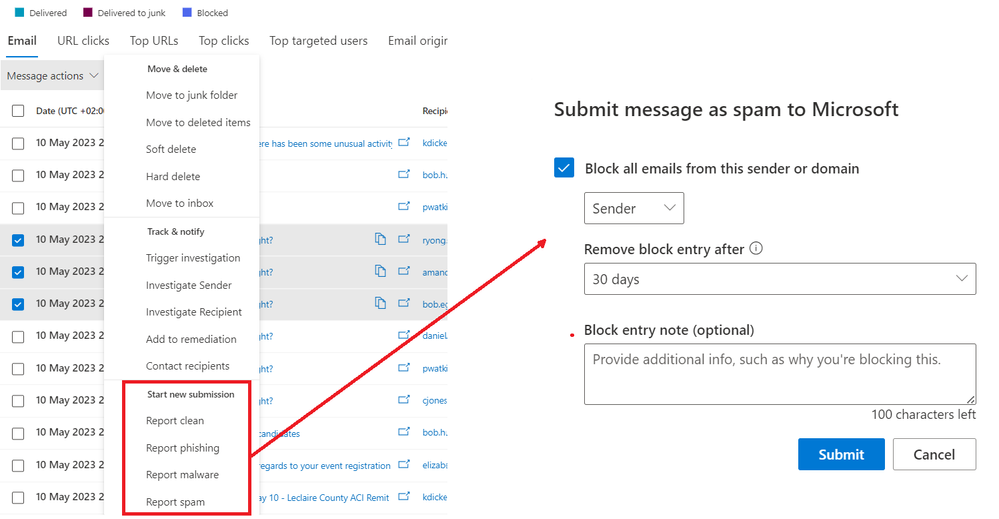

In Email & Collaboration > Explorer, actions can be performed on emails, including reporting emails to Microsoft for analysis:

Figure 6: Submit file to Microsoft for analysis using Threat Explorer


Actions can be performed on emails in bulk and during the submission process, corresponding sender addresses can also be added to Blocked senders list.

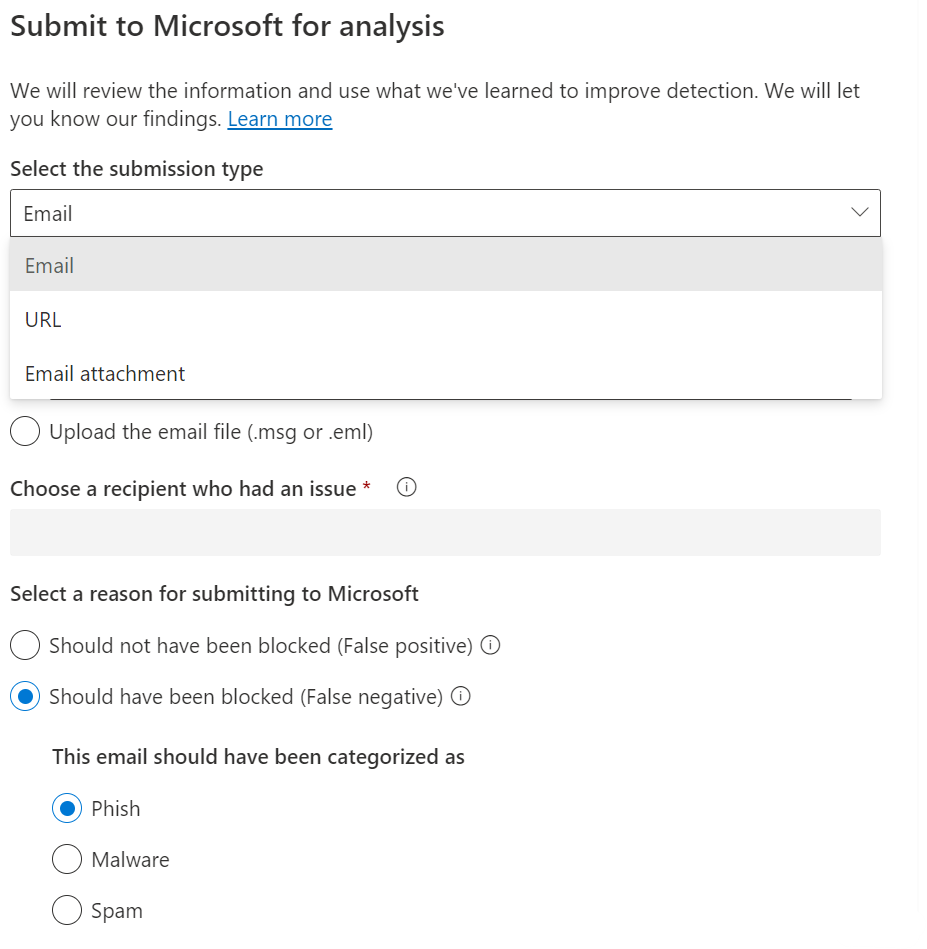

Alternatively, emails, specific URLs, or attached files can be manually submitted through the Actions & Submissions > Submissions section of the portal. Files can also be submitted using the public website.

Figure 7: Submit file to Microsoft for analysis using Actions & submissions


Timely reporting is critical: the sooner researchers get their hands on unique samples from your environment and start their analysis, the sooner those malicious emails will be detected and blocked automatically.

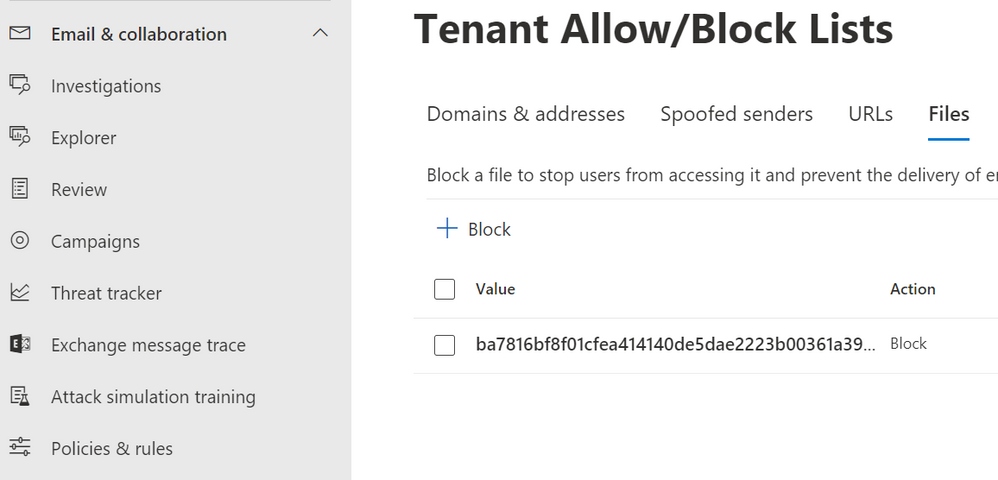

Block malicious senders/files/URLs on your Exchange Online tenant

While you have the option to block senders, files, and URLs during the submission process, this can also be done without submitting, using Email & Collaboration > Policies & rules > Threat policies > Tenant Allow/Block List. That UI also supports bulk operations and provides more flexibility.

Figure 8: Tenant Allow/Block Lists


The best way to obtain data for block lists is an Advanced Hunting query. For example, the following query can be used to return a list of hashes:

EmailAttachmentInfo

| where Timestamp > ago(8h)

| where FileType == "zip"

| where FileName contains "invoice"

| distinct SHA256, FileName

Note: such a simple query might be too broad and include some legitimate attachments. Make sure to adjust it further to get an accurate list and avoid false-positive blocks.

Block malicious files/URLs/IP addresses on endpoints

Following the defense-in-depth principle, even when a malicious email slips through mail filters, we still have a good chance of detecting and blocking it on endpoints using Microsoft Defender for Endpoint. As an extra step, identified malicious attachments and URLs can be added as custom indicators to ensure they are blocked on endpoints.

EmailUrlInfo

| where Timestamp > ago(4h)

| where Url contains "malicious.example"

| distinct Url

Results can be exported from Advanced Hunting and later imported on the Settings > Endpoints > Indicators page (Note: Network Protection needs to be enabled on devices to block URLs/IP addresses). The same can be done for malicious files using the SHA256 hashes of attachments from the EmailAttachmentInfo table.

Some other steps that can be taken to better prepare your organization for similar incidents:

- Ensure that EDR Block Mode is enabled for machines where AV might be running in passive mode.

- Enable Attack Surface Reduction (ASR) rules to mitigate some of the risks associated with mail-based attacks on endpoints.

- Train your users to identify phishing emails with the Attack simulation feature in Microsoft Defender for Office 365.


by Contributed | May 10, 2023 | Technology


Azure Virtual Machines are an excellent solution for hosting both new and legacy applications. However, as your services and workloads become more complex and demand increases, your costs may also rise. Azure provides a range of pricing models, services, and tools that can help you optimize the allocation of your cloud budget and get the most value for your money.

Let’s explore Azure’s various cost-optimization options to see how they can significantly reduce your Azure compute costs.

The major Azure cost optimization options can be grouped into three categories: VM services, pricing models and programs, and cost analysis tools.

Let’s have a quick overview of these 3 categories:

VM services – Several VM services give you various options to save, depending on the nature of your workloads. These can include things like dynamically autoscaling VMs according to demand or utilizing spare Azure capacity at up to 90% discount versus pay-as-you-go rates.

Pricing models and programs – Azure also offers various pricing models and programs that you can take advantage of, depending on your needs and how you plan to spend on Azure. For example, committing to purchase compute capacity for a certain time period can lower your average costs per VM by up to 72%.

Cost analysis tools – This category of optimization features various tools available for you to calculate, track, and monitor costs of your Azure spend. This deep insight and data into your spending allows you to make better decisions about where your compute costs are being spent and how to allocate them in a way that best suits your needs.

When it comes to VMs, the various VM services are probably the first place to look when you want to save costs. While this blog will focus mostly on VM services, stay tuned for blogs about pricing models & programs and cost analysis tools!

Spot Virtual Machines

Spot Virtual Machines provide compute capacity at drastically reduced costs by leveraging compute capacity that isn’t currently being used. While it’s possible to have your workloads evicted, this compute capacity is charged at a greatly reduced price, with discounts of up to 90% versus pay-as-you-go rates. This makes Spot Virtual Machines ideal for workloads that are interruptible and not time-sensitive, like machine learning model training, financial modeling, or CI/CD.

Incorporating Spot VMs can undoubtedly play a key role in your cost savings strategy. Azure provides significant pricing incentives to utilize any current spare capacity. The opportunity to leverage Spot VMs should be evaluated for every appropriate workload to maximize cost savings. Let’s learn more about how Spot Virtual Machines work and if they are right for you.

Deployment Scenarios

There are a variety of cases for which Spot VMs can be ideal. Let’s look at some examples:

- CI/CD – CI/CD is one of the easiest places to get started with Spot Virtual Machines. The temporary nature of many development and test environments makes them well suited for Spot VMs. A difference of a couple of minutes to a couple of hours when testing an application is often not business-critical. Thus, deploying CI/CD workloads and build environments with Spot VMs can drastically lower the cost of operating your CI/CD pipeline.

- Financial modeling – creating financial models is also compute-intensive, but often transient in nature. Researchers often struggle to test all the hypotheses they want with non-flexible infrastructure. With Spot VMs, they can add extra compute resources during periods of high demand without having to commit to purchasing a higher amount of dedicated VM resources, creating more and better models faster.

- Media rendering – media rendering jobs like video encoding and 3D modeling can require lots of computing resources but may not demand them consistently throughout the day. These workloads are also often computationally similar, independent of each other, and do not require immediate responses. These attributes make media rendering another ideal case for Spot VMs. For rendering infrastructure that is often at capacity, Spot VMs are also a great way to add extra compute resources during periods of high demand without committing to a larger number of dedicated VM resources, lowering the overall TCO of running a render farm.

Generally speaking, if a workload is stateless, scalable, or flexible in time, location, and hardware, then it may be a good fit for Spot VMs. While Spot VMs can offer significant cost savings, they are not suitable for all workloads. Workloads that require high availability or consistent performance, or that consist of long-running tasks, may not be a good fit for Spot VMs.

Features & Considerations

Now that you have learned more about Spot VMs and may be considering using them for your workloads, let’s talk a bit more about how Spot VMs work and the controls available to you to optimize cost savings even further.

Spot VMs are priced according to demand. With this flexible pricing model, Spot VMs also give you the ability to set a price limit for the Spot VMs that you use. If demand is high enough that the price for a Spot VM exceeds what you’re willing to pay, you can use this limit to opt out of running your workloads at that time and wait for demand to decrease. If you anticipate that the Spot VMs you want to use are in a region with high utilization at certain times of the day or month, you may want to choose another region or plan for higher price limits for workloads that run during those times. If the time when the workload runs isn’t important, you may opt to set the price limit low, so that your workloads only run when Spot capacity is cheapest, minimizing your Spot VM costs.
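The effect of a price limit can be illustrated with a small calculation. The Python sketch below is a simplified model, not a description of how Azure evaluates Spot prices. The function name `plan_run` and the hourly prices are made up for illustration, and real Spot prices may change on a very different cadence.

```python
def plan_run(hourly_prices, price_limit):
    """Return the hours whose Spot price is within the limit, and their average price."""
    allowed = [
        (hour, price)
        for hour, price in enumerate(hourly_prices)
        if price <= price_limit
    ]
    hours = [hour for hour, _ in allowed]
    average = sum(price for _, price in allowed) / len(allowed) if allowed else None
    return hours, average


# Hypothetical per-hour prices (currency units per VM hour), for illustration only.
prices = [0.30, 0.25, 0.20, 0.45, 0.60, 0.35]
hours, average = plan_run(prices, price_limit=0.30)
print("hours within the limit:", hours)
print("average price paid:", average)
```

Lowering the limit shrinks the set of hours in which the workload would run but also lowers the average price paid, which is the trade-off described above.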

While using Spot VMs with price limits, we must also look at the different eviction types and policies, which are options you can set to determine what happens to your Spot VMs when they are to be reclaimed by a pay-as-you-go customer. To maximize cost savings, it’s best to prioritize the Delete eviction policy: VMs can be redeployed faster, meaning less downtime waiting for Spot capacity, and you do not pay for disk storage. However, if your workload is region- or size-specific and requires some level of persistent data in the event of an eviction, then the Deallocate policy is a better option.

These things may only be a small slice of all the considerations to best utilize Spot VMs. Learn more about best practices for building apps with Spot VMs here.

So how can we actually deploy and manage Spot VMs at scale? Using Virtual Machine Scale Sets is likely your best option. Virtual Machine Scale Sets, in addition to Spot VMs, offer a plethora of cost savings features and options for your VM deployments and easily allow you to deploy your Spot VMs in conjunction with standard VMs. In our next section, we’ll look at some of these features in Virtual Machine Scale Sets and how we can use them to deploy Spot VMs at scale.

Virtual Machine Scale Sets

Virtual Machine Scale Sets enable you to manage and deploy groups of VMs at scale with a variety of load balancing, resource autoscaling, and resiliency features. While a variety of these features can indirectly save costs like making deployments simpler to manage or easier to achieve high availability, some of these features contribute directly to reducing costs, namely autoscaling and Spot Mix. Let’s dive deeper into how these two features can optimize costs.

Autoscaling

Autoscaling is a critical feature included within Virtual Machine Scale Sets that gives you the ability to dynamically increase or decrease the number of virtual machines running within the scale set. This allows you to scale out your infrastructure to meet demand when it is required, and scale it in when compute demand lowers, reducing the likelihood that you’ll be paying to have extra VMs running when you don’t have to.

VMs can be autoscaled according to rules that you can define yourself from a variety of metrics. These rules can be based off host-based metrics available from your VM like CPU usage or memory demand or application-level metrics like session counts and page load performance. This flexibility gives you the option to scale in or out your workload to very specific requirements, and it is with this specificity that you can control your infrastructure scaling to optimally meet your compute demand without extra overhead.
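A rule of this kind boils down to comparing a metric against thresholds and adjusting the instance count within fixed bounds. The Python sketch below shows that decision logic only. It is not the Azure autoscale engine, and the function name, thresholds, and instance limits are illustrative assumptions.

```python
def desired_instances(current, cpu_percent, scale_out_at=70, scale_in_at=30,
                      minimum=2, maximum=10):
    """Apply one CPU threshold rule and clamp the result to the allowed range."""
    if cpu_percent > scale_out_at:
        current += 1  # demand is high: add one instance
    elif cpu_percent < scale_in_at:
        current -= 1  # demand is low: remove one instance
    return max(minimum, min(maximum, current))


# Walk through a few hypothetical CPU readings, starting from 4 instances.
count = 4
for cpu in [85, 90, 50, 20, 10]:
    count = desired_instances(count, cpu)
    print(f"cpu={cpu}% -> instances={count}")
```

The minimum and maximum keep the scale set from shrinking below a safe baseline or growing past a budget ceiling, whatever the metric does.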

You can also scale in or out according to a schedule, for cases in which you can anticipate cyclical changes to VM demand throughout certain times of the day, month, or year. For example, you can automatically scale out your workload at the beginning of the workday when application usage increases, and then scale in the number of VM instances to minimize resource costs overnight when application usage lowers. It’s also possible to scale out on certain days when events occur such as a holiday sale or marketing launch. Additionally, for more complex workloads, Virtual Machine Scale Sets also provide the option to leverage machine learning to predictively autoscale workloads according to historical CPU usage patterns.

These autoscaling policies make it easy to adapt your infrastructure usage to many variables, and leveraging autoscale rules to best fit your application demand will be critical to reducing cost.

Spot Mix

With Spot Mix in Virtual Machine Scale Sets, you can configure your scale-in or scale-out policy to specify a ratio of standard to Spot VMs to maintain as the number of VMs increases or decreases. For example, if you specify a ratio of 50%, then for every 10 new VMs the scale-out policy adds to the scale set, 5 will be standard VMs and the other 5 will be Spot. To maximize cost savings, you may want a low ratio of standard to Spot VMs, meaning more Spot VMs are deployed instead of standard VMs as the scale set grows. This can work well for workloads that don’t need much guaranteed capacity at larger scales. However, for workloads that need greater resiliency at scale, you may want to increase the ratio to ensure adequate baseline standard capacity.
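The ratio arithmetic from the example can be written out as a short calculation. The Python sketch below uses a hypothetical helper, `split_new_vms`, that rounds the standard share down. How the service itself rounds fractional results is not stated in the text, so that choice is an assumption.

```python
def split_new_vms(new_vms, standard_percent):
    """Split newly added VMs into (standard, spot) counts for a given standard share."""
    # Rounding down is an assumption made for this illustration.
    standard = (new_vms * standard_percent) // 100
    return standard, new_vms - standard


for percent in (50, 20):
    standard, spot = split_new_vms(10, percent)
    print(f"{percent}% standard: {standard} standard VMs, {spot} Spot VMs")
```

A ratio of 50% reproduces the 5 and 5 split from the example, while a lower standard share shifts more of the growth to Spot capacity.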

You can learn more about choosing the VM families and sizes that might be right for you with the VM selector and the Spot Advisor, which we will cover in more depth in a later post of this VM cost optimization series.

Wrapping up

We’ve learned how Spot VMs and Virtual Machine Scale Sets, especially when combined, give you features and options to control how your VMs behave, and how you can use those controls to maximize your cost savings.

Next time, we’ll go in depth on the various pricing models and programs available in Azure that can further optimize your cost, allowing you to do more with less with Azure VMs. Stay tuned for more blogs!

by Contributed | May 9, 2023 | AI, Business, Microsoft 365, Technology, Work Trend Index

In March, we introduced Microsoft 365 Copilot—your copilot for work. Today, we’re announcing that we’re bringing Microsoft 365 Copilot to more customers with an expanded preview and new capabilities.

The post Introducing the Microsoft 365 Copilot Early Access Program and new capabilities in Copilot appeared first on Microsoft 365 Blog.

by Contributed | May 8, 2023 | Technology

This blog post has been co-authored by Microsoft and by Dhiraj Sehgal and Reza Ramezanpur from Tigera.

Container orchestration pushes the boundaries of containerized applications by preparing the necessary foundation to run containers at scale. Today, customers can run Linux and Windows containerized applications in a container orchestration solution, such as Azure Kubernetes Service (AKS).

This blog post will examine how to set up a Windows-based Kubernetes environment to run Windows workloads and secure them using Calico Open Source. By the end of this post, you will see how simple it is to apply your current Kubernetes skills and knowledge to manage a hybrid environment.

Container orchestration at scale with AKS

After creating a container image, you will need a container orchestrator to deploy it at scale. Kubernetes is a modular container orchestration software that will manage the mundane parts of running such workloads, and AKS abstracts the infrastructure on which Kubernetes runs, so you can focus on deploying and running your workloads.

In this blog post, we will share all the commands required to set up a mixed Kubernetes cluster (Windows and Linux nodes) in AKS – you can open up your Azure Cloud Shell window from the Azure Portal and run the commands if you want to follow along.

If you don’t have an Azure account with a paid subscription, don’t worry—you can sign up for a free Azure account to complete the following steps.

Resource group

To run a Kubernetes cluster in Azure, you must create multiple resources that share the same lifespan and assign them to a resource group. A resource group is a way to group related resources in Azure for easier management and accessibility. Keep in mind that each resource group name must be unique within your subscription.

The following command creates a resource group named calico-win-container in the australiaeast location. Feel free to adjust the location to a different region.

az group create --name calico-win-container --location australiaeast

Cluster deployment

Note: Azure free accounts cannot create any resources in busy locations. Feel free to adjust your location if you face this problem.

A Linux control plane is necessary to run the Kubernetes system workloads, and Windows nodes can only join a cluster as participating worker nodes.

az aks create --resource-group calico-win-container --name CalicoAKSCluster --node-count 1 --node-vm-size Standard_B2s --network-plugin azure --network-policy calico --generate-ssh-keys --windows-admin-username <your-windows-admin-username>

Windows node pool

Now that we have a running control plane, it is time to add a Windows node pool to our AKS cluster.

Note: Use `Windows` as the value for the `--os-type` argument.

az aks nodepool add --resource-group calico-win-container --cluster-name CalicoAKSCluster --os-type Windows --name calico --node-vm-size Standard_B2s --node-count 1

Calico for Windows

Calico for Windows is officially integrated into the Azure platform. Every time you add a Windows node in AKS, it will come with a preinstalled version of Calico. To check this, use the following command to ensure EnableAKSWindowsCalico is in a Registered state:

az feature list -o table --query "[?contains(name, 'Microsoft.ContainerService/EnableAKSWindowsCalico')].{Name:name,State:properties.state}"

Expected output:

Name                                               State
-------------------------------------------------  ----------
Microsoft.ContainerService/EnableAKSWindowsCalico  Registered

If your query returns a NotRegistered state or no items, use the following command to enable AKS Calico integration for your account:

az feature register --namespace "Microsoft.ContainerService" --name "EnableAKSWindowsCalico"

After EnableAKSWindowsCalico becomes registered, you can use the following command to add the Calico integration to your subscription:

az provider register --namespace Microsoft.ContainerService

Exporting the cluster key

Kubernetes implements an API Server that provides a REST interface to maintain and manage cluster resources. Usually, to authenticate with the API server, you must present a certificate, username, and password. The Azure command-line interface (Azure CLI) can export these cluster credentials for an AKS deployment.

Use the following command to export the credentials:

az aks get-credentials --resource-group calico-win-container --name CalicoAKSCluster

After exporting the credential file, we can use the kubectl binary to manage and maintain cluster resources. For example, we can check which operating system is running on our nodes by using the OS labels.

kubectl get nodes -L kubernetes.io/os

You should see a similar result to:

NAME                                STATUS   ROLES   AGE     VERSION   OS
aks-nodepool1-64517604-vmss000000   Ready    agent   6h8m    v1.22.6   linux
akscalico000000                     Ready    agent   5h57m   v1.22.6   windows

Windows workloads

If you recall, Kubernetes API Server is the interface that we can use to manage or maintain our workloads.

We can use the same syntax to create a deployment, pod, service, or any other Kubernetes resource for our new Windows nodes. For example, we can use the OS label shown above as a node selector in our deployments to ensure Windows and Linux workloads are deployed to their respective nodes:

kubectl apply -f https://raw.githubusercontent.com/frozenprocess/wincontainer/main/Manifests/00_deployment.yaml
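For readers who want to see what OS-based scheduling looks like in a manifest, the snippet below writes a minimal deployment to a local file. It is only a sketch and not the contents of the manifest applied above: the file name, the resource names, and the sample image are assumptions made for illustration. The part that matters is the nodeSelector on the kubernetes.io/os label.

```shell
# Write a minimal, hypothetical deployment manifest to a local file.
# Names and image are placeholders; the nodeSelector is the point of the sketch.
cat > win-deployment-sketch.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: win-sketch
spec:
  replicas: 1
  selector:
    matchLabels:
      app: win-sketch
  template:
    metadata:
      labels:
        app: win-sketch
    spec:
      # Schedule these pods only on nodes labeled as Windows.
      nodeSelector:
        kubernetes.io/os: windows
      containers:
      - name: web
        # Assumed sample image; replace with your own Windows container image.
        image: mcr.microsoft.com/dotnet/framework/samples:aspnetapp
        ports:
        - containerPort: 80
EOF
```

A file like this could then be applied with kubectl apply -f win-deployment-sketch.yaml. Changing the label value to linux would pin the pods to the Linux node pool instead.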

Since our workload is a web server built with Microsoft’s .NET technology, the deployment YAML file also packages a service load balancer to expose the HTTP port to the Internet.

Use the following command to verify that the load balancer successfully acquired an external IP address:

kubectl get svc win-container-service -n win-web-demo

You should see a similar result:

NAME                    TYPE           CLUSTER-IP     EXTERNAL-IP    PORT(S)        AGE
win-container-service   LoadBalancer   10.0.203.176   20.200.73.50   80:32442/TCP   141m
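If you want to script this check rather than read the table by eye, a small helper can pull the address out of the output. This is a sketch under the assumption that the table layout matches the one shown above; both function names are hypothetical.

```shell
# Hypothetical helper: read `kubectl get svc` table output on stdin and
# print the EXTERNAL-IP column (4th field) of the first data row.
external_ip_from_table() {
  awk 'NR == 2 { print $4 }'
}

# Hypothetical helper: succeed only when the value looks like an assigned address.
ip_is_assigned() {
  case "$1" in
    ""|"<pending>"|"<none>") return 1 ;;
    *) return 0 ;;
  esac
}

# Intended usage against a live cluster (needs kubectl and cluster access):
#   ip=$(kubectl get svc win-container-service -n win-web-demo | external_ip_from_table)
#   ip_is_assigned "$ip" && echo "Service is reachable at http://$ip"
```

Parsing the table is fragile if columns change; asking kubectl for the field directly with -o jsonpath='{.status.loadBalancer.ingress[0].ip}' is usually the more robust option.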

Use the “EXTERNAL-IP” value in a browser, and you should see a page with the following message:

Perfect! Our pod can communicate with the Internet.

Securing Windows workloads with Calico

The default security behavior for the Kubernetes NetworkPolicy resource permits all traffic. While this is a great way to set up a lab environment, in a real-world scenario it can severely impact your cluster’s security.

First, use the following manifest to enable the Calico API server:

kubectl apply -f https://raw.githubusercontent.com/frozenprocess/wincontainer/main/Manifests/01_apiserver.yaml

Use the following command to get the API Server deployment status:

kubectl get tigerastatus

You should see a similar result to:

NAME        AVAILABLE   PROGRESSING   DEGRADED   SINCE
apiserver   True        False         False      10h
calico      True        False         False      10h

Calico offers two security policy resources, the namespaced NetworkPolicy and the cluster-wide GlobalNetworkPolicy, that can cover every corner of your cluster. We will implement a global policy since it can restrict Internet addresses without the daunting procedure of explicitly writing every IP/CIDR in a policy.

kubectl apply -f https://raw.githubusercontent.com/frozenprocess/wincontainer/main/Manifests/02_default-deny.yaml
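To give a feel for the shape of such a policy, the snippet below writes a minimal Calico GlobalNetworkPolicy to a local file. It is a sketch and not the contents of the manifest applied above: the policy name, the file name, and the choice to select pods by the win-web-demo namespace are assumptions made for illustration. A policy that lists Egress under types but defines no egress rules leaves the selected pods with no permitted outbound traffic.

```shell
# Write a minimal, hypothetical egress default-deny policy to a local file.
# This is an illustration only, not the manifest used in this post.
cat > default-deny-sketch.yaml <<'EOF'
apiVersion: projectcalico.org/v3
kind: GlobalNetworkPolicy
metadata:
  name: default-deny-egress-sketch
spec:
  # Assumed scope: only pods in the demo namespace.
  selector: projectcalico.org/namespace == 'win-web-demo'
  # Egress is listed with no rules, so no outbound traffic is allowed.
  types:
  - Egress
EOF
```

A file like this could then be applied with kubectl apply -f default-deny-sketch.yaml once the Calico API server is available. Note that a policy this strict also blocks DNS lookups from the selected pods, so real policies usually add explicit allow rules for the traffic they need.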

If you go back to your browser and click the Try again button, you will see that the container is isolated and cannot initiate communication to the Internet.

Note: The source code for the workload is available here.

Clean up

If you have been following this blog post and did the lab section in Azure, please make sure that you delete the resources, as cloud providers will charge you based on usage.

Use the following command to delete the resource group:

az group delete --name calico-win-container

Conclusion

While network policy may not matter much in lab scenarios, production workloads have a different level of security requirements to meet. Calico offers a simple and integrated way to apply network policies to Windows workloads on Azure Kubernetes Service. In this blog post, we covered the basics of applying a network policy to a simple web server. You can find more information on how Calico works with Windows on AKS on our documentation page.
