by Contributed | Apr 19, 2021 | Technology

This article is contributed. See the original author and article here.

Unreal Engine allows developers to create industry-leading visuals for a wide array of real-time experiences. Compared to other platforms, mixed reality provides some new visual challenges and opportunities. MRTK-Unreal’s Graphics Tools empowers developers to make the most of these visual opportunities.

Graphics Tools is an Unreal Engine plugin with code, blueprints, and example assets created to help improve the visual fidelity of mixed reality applications while staying within performance budgets.

When talking about performance in mixed reality, questions normally arise around “what is visually possible on HoloLens 2?” The device is a self-contained computer that sits on your head and renders to a stereo display. The mobile graphics processing unit (GPU) on the HoloLens 2 supports a wide gamut of features, but it is important to play to the strengths and avoid the weaknesses of the GPU.

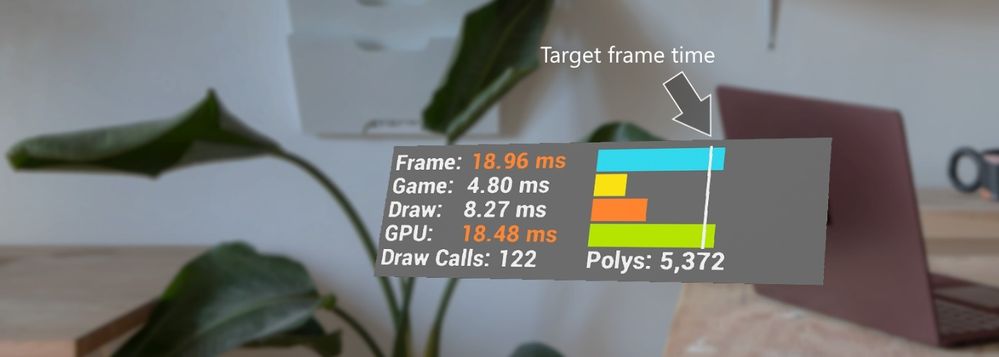

On HoloLens 2 the target frame rate is 60 frames per second (roughly 16.67 milliseconds for your application to present a new frame). Applications that do not hit this frame rate can deliver a degraded user experience, with worse hologram stabilization, hand tracking, and world tracking.
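The per-frame budget quoted above is simply 1000 ms divided by the target frame rate. The short sketch below works through that arithmetic; the function names and the 20 ms sample measurement are illustrative assumptions, not part of Graphics Tools.

```python
# Frame budget arithmetic for a fixed target frame rate.
TARGET_FPS = 60  # HoloLens 2 target stated in the article


def frame_budget_ms(fps: float) -> float:
    """Return the time available per frame, in milliseconds."""
    return 1000.0 / fps


def over_budget_ms(frame_time_ms: float, fps: float = TARGET_FPS) -> float:
    """Return how far a measured frame time exceeds the budget (0 if within it)."""
    return max(0.0, frame_time_ms - frame_budget_ms(fps))


if __name__ == "__main__":
    print(f"budget at {TARGET_FPS} fps: {frame_budget_ms(TARGET_FPS):.2f} ms")
    # 20 ms is a hypothetical measured frame time, not a figure from the article.
    print(f"a 20 ms frame is over budget by {over_budget_ms(20.0):.2f} ms")
```

Comparing the CPU and GPU times of a frame against this budget is a quick way to see which side is the bottleneck.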

One common bottleneck in “what is possible” is how to achieve efficient real-time lighting techniques in mixed reality. Let’s outline how Graphics Tools solves this problem below.

Lighting, simplified

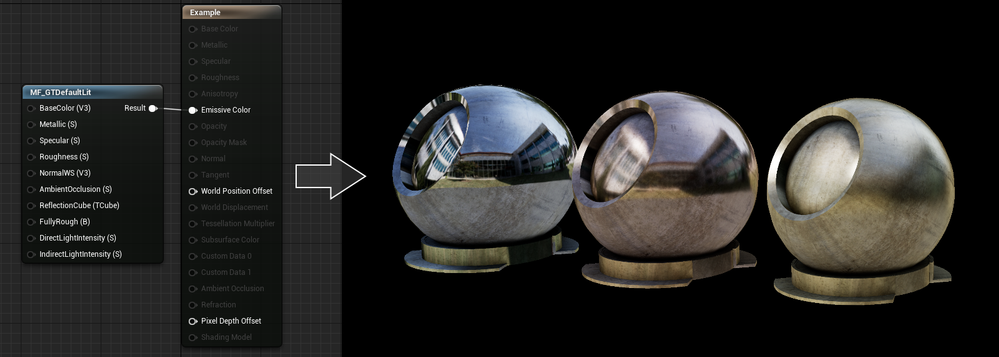

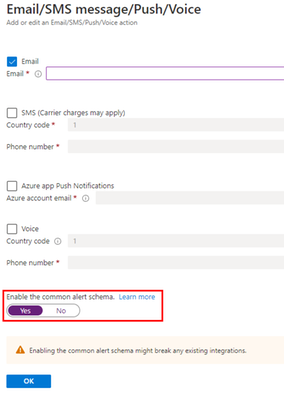

By default, Unreal uses the mobile lighting rendering path for HoloLens 2. This lighting path is well suited for mobile phones and handhelds, but is often too costly for HoloLens 2. To ensure developers have access to a lighting path that is performant, Graphics Tools includes a simplified physically based lighting system accessible via the MF_GTDefaultLit material function. This lighting model restricts the number of dynamic lights and ensures that some costly graphics features are disabled.

If you are familiar with Unreal’s default lighting material setup, the inputs to the MF_GTDefaultLit function should look very similar. For example, changing the values to the Metallic and Roughness inputs can provide convincing looking metal surfaces as seen below.

If you are interested in learning more about the lighting model it is best to take a peek at the HLSL shader code that lives with the Graphics Tools plugin and read the documentation.

What is slow?

More often than not, you are going to get to a point in your application development where your app isn’t hitting your target framerate and you need to figure out why. This is where profiling comes into the picture. Unreal Engine has tons of great resources for profiling (one of our favorites is Unreal Insights which works on HoloLens 2 as of Unreal Engine 4.26).

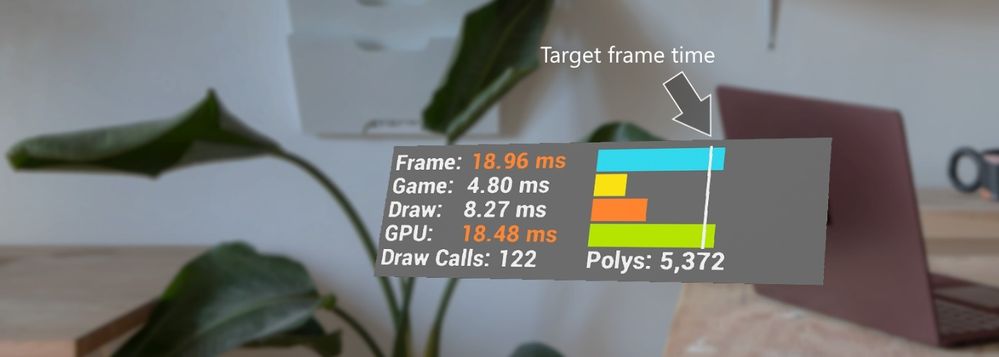

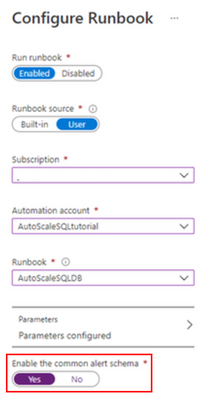

Most of the aforementioned tools require connecting your HoloLens 2 to a development PC, which is great for fine grained profiling, but often you just need a high-level overview of performance within the headset. Graphics Tools provides the GTVisualProfiler actor which gives real-time information about the current frame times represented in milliseconds, draw call count, and visible polygon count in a stereo friendly view. A snapshot of the GTVisualProfiler is demonstrated below.

In the above image a developer can, at a glance, see their application is limited by GPU time. It is highly recommended to always show a framerate visual while running & debugging an application to continuously track performance.

Performance can be an ambiguous and constantly changing challenge for mixed reality developers, and the spectrum of knowledge to rationalize performance is vast. There are some general recommendations for understanding how to approach performance for an application in the Graphics Tools profiling documentation.

Peering inside holograms

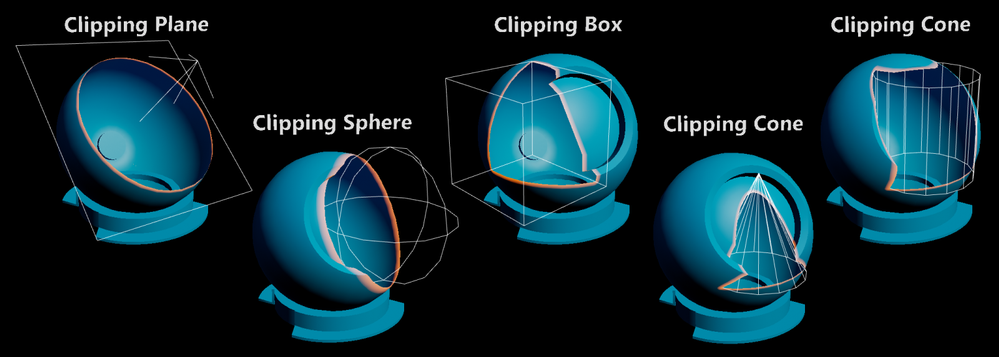

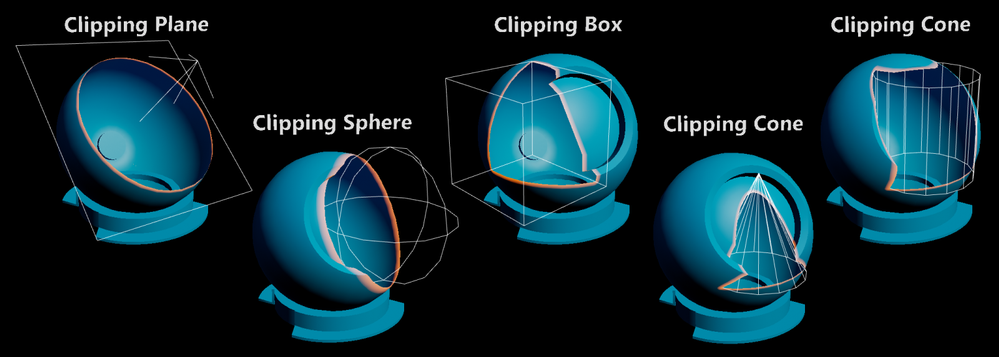

Many developers ask for tools to “peer inside” a hologram. When reviewing complex assemblies, it’s helpful to cut away portions of a model to see parts which are normally occluded. To solve this scenario Graphics Tools has a feature called clipping primitives.

A clipping primitive represents an analytic shape that passes its state and transformation data into a material. Material functions can then take this primitive data and perform calculations, such as returning the signed distance from the shape’s surface. Included with Graphics Tools are the following clipping primitive shapes.

Note, the clipping cone can be adjusted to also represent a capped cylinder. Clipping primitives can be configured to clip pixels within their shape or outside of their shape. Some other use cases for clipping primitives are as a 3D stencil test, or as a mechanism to get the distance from an analytical surface. Being able to calculate the distance from a surface within a shader allows one to do effects like the orange border glow in the above image.
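To make the signed-distance idea concrete, here is a minimal sketch for a sphere, written in Python rather than as a material function. The `ClippingSphere` type, its field names, and the glow falloff are assumptions chosen for illustration; they are not the plugin's actual types or shader code.

```python
import math
from dataclasses import dataclass


@dataclass
class ClippingSphere:
    """Hypothetical stand-in for a sphere clipping primitive (not the plugin's type)."""
    center: tuple
    radius: float
    clip_inside: bool = True  # True: discard points inside the shape


def signed_distance(sphere, point):
    """Negative inside the sphere, zero on the surface, positive outside."""
    return math.dist(point, sphere.center) - sphere.radius


def is_clipped(sphere, point):
    """Decide whether a point would be discarded by the primitive."""
    d = signed_distance(sphere, point)
    return d < 0.0 if sphere.clip_inside else d > 0.0


def border_glow(sphere, point, width=0.05):
    """Glow intensity in [0, 1]: 1 at the surface, fading to 0 at `width` away."""
    d = abs(signed_distance(sphere, point))
    return max(0.0, 1.0 - d / width)
```

The same distance value drives both decisions: its sign selects which side is clipped, and its magnitude can shade a border near the cut.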

To learn more about clipping primitives please see the associated documentation.

Making things “look good”

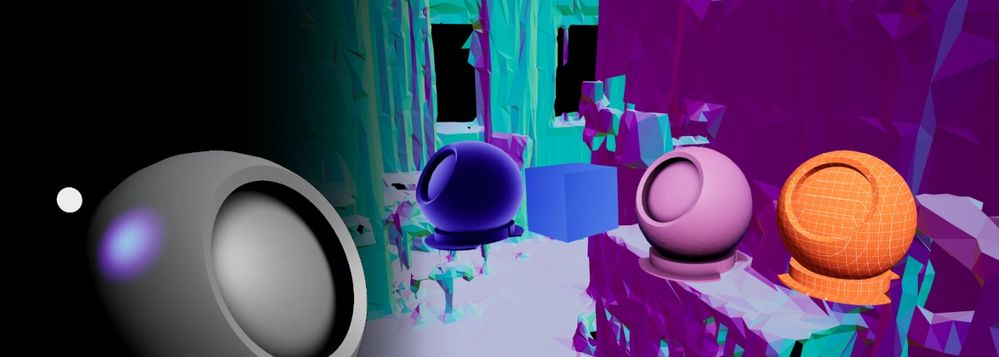

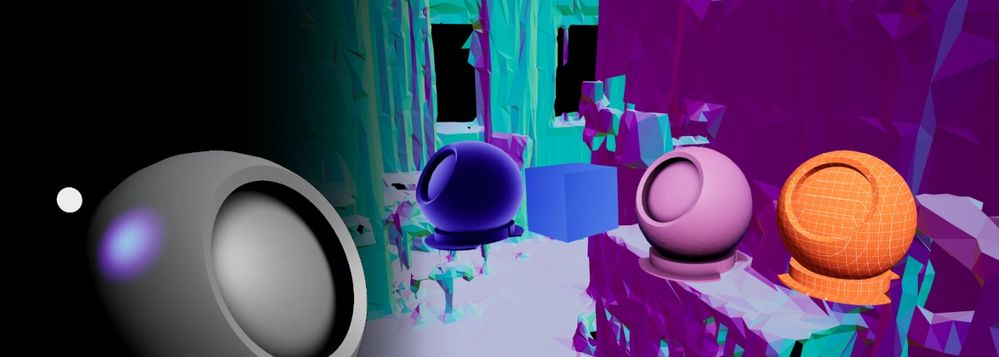

The world is our oyster when it comes to creating visual effects for HoloLens 2. Unreal Engine has a powerful material editor that allows people without shader experience to create and explore. To bootstrap developers, Graphics Tools contains a few out-of-the-box effects.

Effects include:

- Proximity based lighting (docs)

- Procedural mesh texturing & lighting (docs)

- Simulated iridescence, rim lighting, + more (docs)

To see all of these effects, plus everything described above in action, Graphics Tools includes an example plugin which can be cloned directly from GitHub or downloaded from the releases page. If you have a HoloLens 2, you can also download and sideload a pre-built version of the example app from the releases page.

Questions?

We are always eager to hear more from the community for ways to improve the toolkit. Please feel free to share your experiences and suggestions below, or on our newly created GitHub discussions page.

by Contributed | Apr 19, 2021 | Technology

Since we announced in 2019 that we would be retiring Basic Authentication for legacy protocols we have been encouraging our customers to switch to Modern Authentication. Modern Authentication, based on OAuth2, has a lot of advantages and benefits as we have covered before, and we’ve yet to meet a customer who doesn’t think it is a good thing. But the ‘getting there’ part might be the hard part, and that’s what this blog post is about.

This post is specifically about enabling Modern Authentication for Outlook for Windows. This is the client most widely used by many of our customers, and the client that huge numbers of people spend their day in. Any change that might impact those users is never to be taken lightly.

As an admin, you know you need to get those users switched from Basic to Modern Auth, and you know all it takes is one PowerShell command. You took a look at our docs, found the article called Enable or disable Modern Authentication for Outlook in Exchange Online | Microsoft Docs and saw that all you need to do is read the article (which it says will take just 2 minutes) and then run:

Set-OrganizationConfig -OAuth2ClientProfileEnabled $true

That sounds easy enough. So why didn’t you do it already?

Is it because it all sounds too easy? Or because there is a fear of the unknown? Or spiders? (We’re all scared of spiders, it’s ok.)

We asked some experts at Microsoft who have been through this with some of our biggest customers for their advice. And here it comes!

Expert advice and things to know

“Once Exchange Online Modern Authentication is enabled for Outlook for Windows, wait a few minutes.”

That was the first response we got. It was certainly encouraging, but wasn’t exactly a lot of information we realized, so we dug in some more, and here’s what we found.

One thing to remember is that enabling Modern Authentication for Exchange Online using the Set-OrganizationConfig parameter only impacts Outlook for Windows. Outlook on the Web, Exchange ActiveSync, Outlook Mobile, Outlook for Mac, and so on will continue to authenticate as they do today and will not be impacted by this change.

Once Modern Authentication is turned on in Exchange Online, a version of Outlook for Windows that supports Modern Authentication will start using it after a restart of Outlook. Users will get a browser-based pop-up asking for their UPN and password or, if SSO is set up and they are already logged in to other services, the switch should be seamless.

If the login domain is set up as Federated, the user will be redirected to log in to the identity provider (ADFS, Ping, Okta, etc.) that was set up. If the domain is managed by Azure or set up for Pass Through Authentication, the user won’t be redirected but will authenticate with Azure directly or with Azure on behalf of your Active Directory Domain Service respectively.

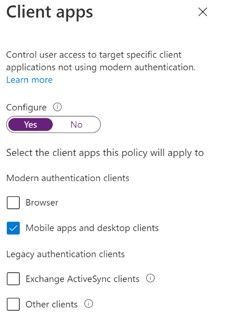

Take a look at your Multi-Factor Authentication (MFA)/Conditional Access (CA) settings. If MFA has been enabled for the user and/or a Conditional Access policy requiring MFA has been set up for the user account for Exchange Online (or other workloads that have a dependency on Exchange Online), then the user/computer will be evaluated against the Conditional Access Policy.

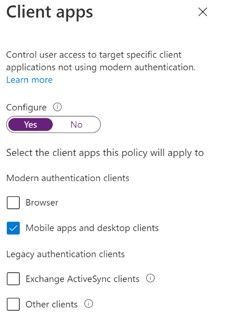

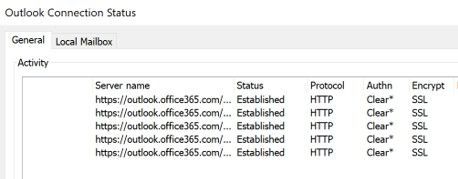

- Here is an example of a CA policy with Condition of Client App “Mobile apps and desktop clients”. This will impact Outlook for Windows with Modern Authentication whereas “Other Clients” would impact Outlook for Windows using Basic Authentication, for example.

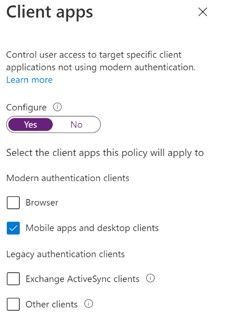

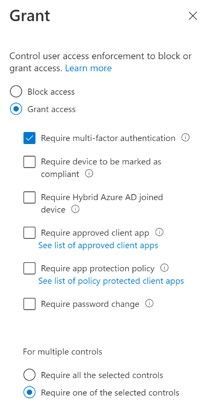

Next is Access Control Grant in CA requiring MFA. If Outlook for Windows was using Basic Authentication, this would not apply since MFA depends on Modern Authentication. But once you enable Modern Authentication, users in the scope of this CA policy would be required to use MFA to access Exchange Online.
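The interaction described here can be summarized as a small decision function. This is a deliberately simplified model of the statements in this post, not a reproduction of the Conditional Access policy engine; the function and parameter names are hypothetical.

```python
def client_app_category(uses_modern_auth: bool) -> str:
    """Map the authentication type to the CA client-app condition named in the post."""
    return "Mobile apps and desktop clients" if uses_modern_auth else "Other clients"


def mfa_required(uses_modern_auth: bool, policy_client_apps: set,
                 policy_requires_mfa: bool, user_in_scope: bool) -> bool:
    """Return True when this simplified policy would prompt the Outlook session for MFA."""
    if not (user_in_scope and policy_requires_mfa):
        return False
    # Per the post, the MFA grant does not apply to a Basic Auth session,
    # because MFA depends on Modern Authentication.
    if not uses_modern_auth:
        return False
    return client_app_category(uses_modern_auth) in policy_client_apps
```

Running your own policies through this kind of checklist before flipping the tenant setting shows which users will start seeing MFA prompts after the change.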

The Modern Authentication setting for Exchange Online is tenant-wide. It’s not possible to enable it per-user, group or any such structure. For this reason, we recommend turning this on during a maintenance period, testing, and if necessary, rolling back by changing the setting back to False. A restart of Outlook is required to switch from Basic to Modern Auth and vice versa if roll back is required.

It may take 30 minutes or longer for the change to be replicated to all servers in Exchange Online, so don’t panic if your clients don’t switch immediately. It’s a very big infrastructure.

Be aware of other apps that authenticate with Exchange Online using Modern Authentication like Skype for Business. Our recommendation is to enable Modern Authentication for both Exchange and Skype for Business.

Here is something rare, but we have seen it… After you enable Modern Authentication in an Office 365 tenant, Outlook for Windows cannot connect to a mailbox if the user’s primary Windows account is a Microsoft 365 account that does not match the account they use to log in to the mailbox. The mailbox shows “Disconnected” in the status bar.

This is due to a known issue in Office which creates a miscommunication between Office and Windows that causes Windows to provide the default credential instead of the appropriate account credential that is required to access the mailbox.

This issue most commonly occurs if more than one mailbox is added to the Outlook profile, and at least one of these mailboxes uses a login account that is not the same as the user’s Windows login.

The most effective solution to this issue is to re-create your Outlook profile. The fix was shipped in the following builds:

- For Monthly Channel Office 365 subscribers, the fix to prevent this issue from occurring is available in builds 16.0.11901.20216 and later.

- For Semi-Annual Customers, the fix is included in builds 16.0.11328.20392 (Version 1907) and later.

You can find more info on this issue here and here.

That’s a list of issues we got from the experts. Many customers have made the switch with little or no impact.

How do you know Outlook for Windows is now using Modern Auth?

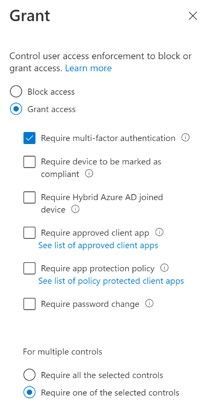

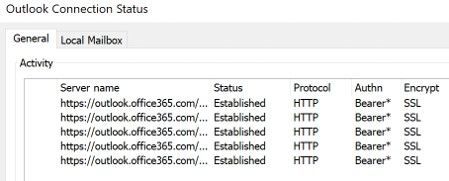

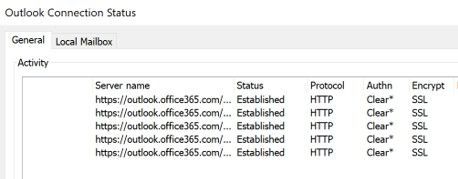

When using Basic Auth, the “Authn” column in the Outlook Connection Status dialog shows “Clear*”.

Once you switch to Modern Auth, the “Authn” column in the Connection Status dialog shows “Bearer*”.
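If you collect these values from several machines, the rule of thumb above can be written as a tiny classifier. The helper name is hypothetical, and how the “Authn” value is read out of Outlook is left out; only the two values named in this post are recognized.

```python
def auth_mode_from_authn(authn_value: str) -> str:
    """Classify an Outlook Connection Status "Authn" value."""
    value = authn_value.strip().lower()
    if value.startswith("bearer"):
        return "modern"
    if value.startswith("clear"):
        return "basic"
    # Any other value is outside what this post describes.
    return "unknown"
```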

And that’s it!

The biggest thing to check prior to making the change is your CA/MFA settings, to make sure nothing will block access, and to make sure your users know there will be a change that might require them to re-authenticate.

Now you know what to expect, there is no need to be afraid of enabling Modern Auth. (Spiders, on the other hand… are still terrifying, but that’s not something we can do much about.)

Huge thanks to Smart Kamolratanapiboon, Rob Whaley and Denis Vilaca Signorelli for the effort it took to put this information into a somewhat readable form.

If you are aware of some other issues that might be preventing you from turning this setting on, let us know in comments below!

The Exchange Team

by Contributed | Apr 19, 2021 | Technology

The Microsoft 365 compliance center provides easy access to solutions to manage your organization’s compliance needs and delivers a modern user experience that conforms to the latest accessibility standards (WCAG 2.1). From the compliance center, you can access popular solutions such as Compliance Manager, Information Protection, Information Governance, Records Management, Insider Risk Management, Advanced eDiscovery, and Advanced Audit.

Over the coming months, we will begin automatically redirecting users from the Office 365 Security & Compliance Center (SCC) to the Microsoft 365 compliance center for the following solutions: Audit, Data Loss Prevention, Information Governance, Records Management, and Supervision (now Communication Compliance). This is a continuation of our migration to the Microsoft 365 compliance center, which began in September 2020 with the redirection of the Advanced eDiscovery solution.

We are continuing to innovate and add value to solutions in the Microsoft 365 compliance center, with the goal of enabling users to view all compliance solutions within one portal. While redirection is enabled by default, should you need additional transition time, Global admins and Compliance admins can enable or disable redirection in the Microsoft 365 compliance center by navigating to Settings > Compliance Center and using the Automatic redirection toggle switch under Portal redirection.

We will eventually retire the Security & Compliance Center experience, so we encourage you to explore and transition to the new Microsoft 365 compliance center experience. Learn more about the Microsoft 365 compliance center.

by Contributed | Apr 19, 2021 | Technology

Azure SQL Database lets you scale a database instance in a few clicks when more resources are needed. This is one of the strengths of PaaS: you pay only for what you use, and if you need more or less, the change is easy to make. A current limitation, however, is that the scaling operation is a manual one. The service doesn’t support auto-scaling as some of us would expect.

Having said that, using the power of Azure we can set up a workflow that auto-scales an Azure SQL Database instance to the next immediate tier when a specific condition is met. For example: what if you could auto-scale the database as soon as it goes over 85% CPU usage for a sustained period of 5 minutes? Using this tutorial we will achieve just that.

Supported SKUs: because there is no automatic way to get the list of available tiers at script runtime, these must be hard-coded into it. For this reason, the script below only supports DTU and vCore (provisioned compute) databases. Hyperscale, Serverless, Fsv2, DC and M series are not supported. Having said that, the logic is the same no matter the tier, so feel free to modify the script to suit your particular SKU needs.
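The "next immediate tier" logic is just a lookup in an ordered, hard-coded list. The sketch below restates it in Python using the same tier lists as the runbook in this tutorial; the function names are illustrative, and the `tier_generation_cores` naming pattern for vCore objectives (for example `GP_Gen5_4`) is an assumption inferred from how the runbook slices the name.

```python
DTU_TIERS = ["Basic", "S0", "S1", "S2", "S3", "S4", "S6", "S7", "S9", "S12",
             "P1", "P2", "P4", "P6", "P11", "P15"]
VCORES = {
    "Gen4": [1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 16, 24],
    "Gen5": [2, 4, 6, 8, 10, 12, 14, 16, 18, 20, 24, 32, 40, 80],
}


def _next_in(ladder, current):
    """Return the entry after `current`, or None if `current` is the last one."""
    i = ladder.index(current)  # ValueError if the SKU is not in the hard-coded list
    return ladder[i + 1] if i + 1 < len(ladder) else None


def next_service_objective(current: str):
    """Return the next service objective name, or None when already at the top."""
    if current in DTU_TIERS:
        return _next_in(DTU_TIERS, current)
    # vCore names are assumed to look like "GP_Gen5_4": tier, generation, core count.
    tier, generation, cores = current.split("_")
    nxt = _next_in(VCORES[generation], int(cores))
    return None if nxt is None else f"{tier}_{generation}_{nxt}"
```

Supporting another SKU family then comes down to adding one more ordered list and a rule for recognizing its names.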

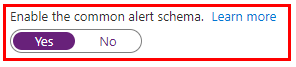

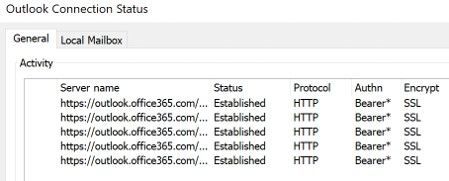

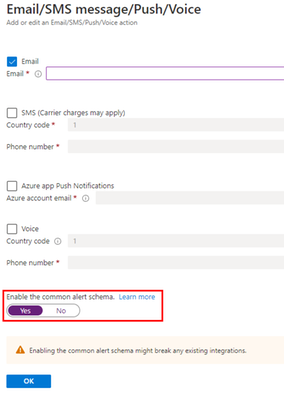

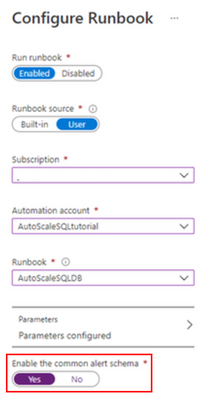

Important: every time any part of the setup asks if the Common Alert Schema (CAS) should be enabled, select Yes. The script used in this tutorial assumes the CAS will be used for the alerts triggering it.

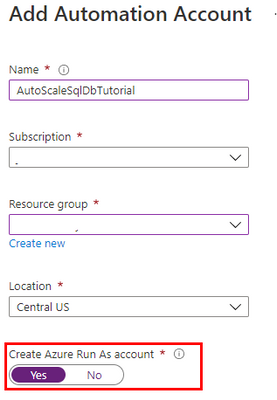

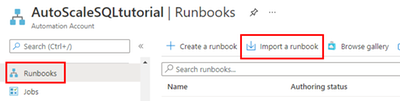

Step #1: deploy Azure Automation account and update its modules

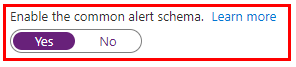

The scale operation will be executed by a PowerShell runbook inside of an Azure Automation account. Search Automation in the Azure Portal search bar and create a new Automation account. Make sure to create a Run As Account while doing this:

Once the Automation account has been created, we need to update the PowerShell modules in it. The runbook relies on PowerShell cmdlets, and a newly provisioned Automation account ships with old versions of them. To update the modules:

- Save the PowerShell script here to your computer with the name Update-AutomationAzureModulesForAccountManual.ps1. The word Manual is added to the file name so that, once imported, it does not overwrite the default internal runbook the account uses to update other modules.

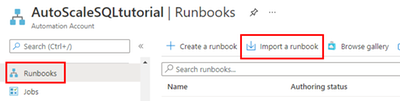

- Import a new module and select the file you saved on step #1:

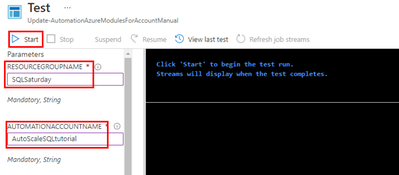

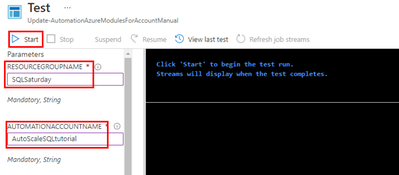

- When the runbook has been imported, click Test Pane, fill in the details for the Resource Group and the Azure Automation account name we are using and click Start:

- When it finishes, the cmdlets will be fully updated. This benefits not only the SQL cmdlets used below but any other cmdlets any other runbook may use on this same Automation account.

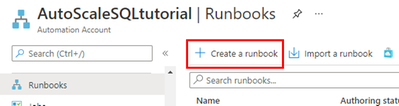

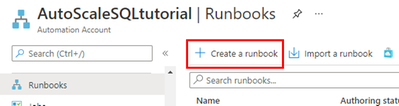

Step #2: create scaling runbook

With our Automation account deployed and updated, we are now ready to create the script. Create a new runbook and copy the code below:

The script below uses Webhook data passed from the alert. This data contains useful information about the resource the alert gets triggered from, which means the script can auto-scale any database and no parameters are needed; it only needs to be called from an alert using the Common Alert Schema on an Azure SQL database.

param

(

[Parameter (Mandatory=$false)]

[object] $WebhookData

)

# If there is webhook data coming from an Azure Alert, go into the workflow.

if ($WebhookData){

# Get the data object from WebhookData

$WebhookBody = (ConvertFrom-Json -InputObject $WebhookData.RequestBody)

# Get the info needed to identify the SQL database (depends on the payload schema)

$schemaId = $WebhookBody.schemaId

Write-Verbose "schemaId: $schemaId" -Verbose

if ($schemaId -eq "azureMonitorCommonAlertSchema") {

# This is the common Metric Alert schema (released March 2019)

$Essentials = [object] ($WebhookBody.data).essentials

Write-Output $Essentials

# Get the first target only as this script doesn't handle multiple

$alertTargetIdArray = (($Essentials.alertTargetIds)[0]).Split("/")

$SubId = ($alertTargetIdArray)[2]

$ResourceGroupName = ($alertTargetIdArray)[4]

$ResourceType = ($alertTargetIdArray)[6] + "/" + ($alertTargetIdArray)[7]

$ServerName = ($alertTargetIdArray)[8]

$DatabaseName = ($alertTargetIdArray)[-1]

$status = $Essentials.monitorCondition

}

else{

# Schema not supported

Write-Error "The alert data schema - $schemaId - is not supported."

}

# If the alert that triggered the runbook is Activated or Fired, it means we want to autoscale the database.

# When the alert gets resolved, the runbook will be triggered again but because the status will be Resolved, no autoscaling will happen.

if (($status -eq "Activated") -or ($status -eq "Fired"))

{

Write-Output "resourceType: $ResourceType"

Write-Output "resourceName: $DatabaseName"

Write-Output "serverName: $ServerName"

Write-Output "resourceGroupName: $ResourceGroupName"

Write-Output "subscriptionId: $SubId"

# Because Azure SQL tiers cannot be obtained programmatically, we need to hardcode them as below.

# The 3 arrays below make this runbook support the DTU tier and the provisioned compute tiers, on Generation 4 and 5 and

# for both General Purpose and Business Critical tiers.

$DtuTiers = @('Basic','S0','S1','S2','S3','S4','S6','S7','S9','S12','P1','P2','P4','P6','P11','P15')

$Gen4Cores = @('1','2','3','4','5','6','7','8','9','10','16','24')

$Gen5Cores = @('2','4','6','8','10','12','14','16','18','20','24','32','40','80')

# Here, we connect to the Azure Portal with the Automation Run As account we provisioned when creating the Automation account.

$connectionName = "AzureRunAsConnection"

try

{

# Get the connection "AzureRunAsConnection "

$servicePrincipalConnection=Get-AutomationConnection -Name $connectionName

"Logging in to Azure..."

Add-AzureRmAccount `

-ServicePrincipal `

-TenantId $servicePrincipalConnection.TenantId `

-ApplicationId $servicePrincipalConnection.ApplicationId `

-CertificateThumbprint $servicePrincipalConnection.CertificateThumbprint

}

catch {

if (!$servicePrincipalConnection)

{

$ErrorMessage = "Connection $connectionName not found."

throw $ErrorMessage

} else{

Write-Error -Message $_.Exception

throw $_.Exception

}

}

# Gets the current database details, from where we'll capture the Edition and the current service objective.

# With this information, the below if/else will determine the next tier that the database should be scaled to.

# Example: if DTU database is S6, this script will scale it to S7. This ensures the script continues to scale up the DB in case CPU keeps pegging at 100%.

$currentDatabaseDetails = Get-AzureRmSqlDatabase -ResourceGroupName $ResourceGroupName -DatabaseName $DatabaseName -ServerName $ServerName

if (($currentDatabaseDetails.Edition -eq "Basic") -Or ($currentDatabaseDetails.Edition -eq "Standard") -Or ($currentDatabaseDetails.Edition -eq "Premium"))

{

Write-Output "Database is DTU model."

if ($currentDatabaseDetails.CurrentServiceObjectiveName -eq "P15") {

Write-Output "DTU database is already at highest tier (P15). Suggestion is to move to Business Critical vCore model with 32+ vCores."

} else {

for ($i=0; $i -lt $DtuTiers.length; $i++) {

if ($DtuTiers[$i].equals($currentDatabaseDetails.CurrentServiceObjectiveName)) {

Set-AzureRmSqlDatabase -ResourceGroupName $ResourceGroupName -DatabaseName $DatabaseName -ServerName $ServerName -RequestedServiceObjectiveName $DtuTiers[$i+1]

break

}

}

}

} else {

Write-Output "Database is vCore model."

$currentVcores = ""

$currentTier = $currentDatabaseDetails.CurrentServiceObjectiveName.SubString(0,8)

$currentGeneration = $currentDatabaseDetails.CurrentServiceObjectiveName.SubString(6,1)

$coresArrayToBeUsed = ""

# Everything after the 8-character prefix (e.g. "GP_Gen5_") is the core count, 1 or 2 digits.

$currentVcores = $currentDatabaseDetails.CurrentServiceObjectiveName.SubString(8)

Write-Output $currentGeneration

if ($currentGeneration -eq "5") {

$coresArrayToBeUsed = $Gen5Cores

} else {

$coresArrayToBeUsed = $Gen4Cores

}

# Index -1 is the last (largest) entry; indexing with .length is out of range and returns $null.

if ($currentVcores -eq $coresArrayToBeUsed[-1]) {

Write-Output "vCore database is already at highest number of cores. Suggestion is to optimize workload."

} else {

for ($i=0; $i -lt $coresArrayToBeUsed.length; $i++) {

if ($coresArrayToBeUsed[$i] -eq $currentVcores) {

$newvCoreCount = $coresArrayToBeUsed[$i+1]

Set-AzureRmSqlDatabase -ResourceGroupName $ResourceGroupName -DatabaseName $DatabaseName -ServerName $ServerName -RequestedServiceObjectiveName "$currentTier$newvCoreCount"

break

}

}

}

}

}

}
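For readers who want to see the webhook parsing in isolation, the sketch below mirrors the first part of the runbook in Python: it checks the schema, takes the first alert target, and splits the resource ID by position. The function name and the returned dictionary keys are illustrative choices; the payload field names are the ones the runbook itself reads.

```python
import json


def parse_alert(request_body: str) -> dict:
    """Extract the database identity and alert state from a Common Alert Schema payload."""
    body = json.loads(request_body)
    if body.get("schemaId") != "azureMonitorCommonAlertSchema":
        raise ValueError(f"unsupported alert schema: {body.get('schemaId')}")
    essentials = body["data"]["essentials"]
    # Only the first target is used, as in the runbook.
    # ID shape: /subscriptions/<sub>/resourceGroups/<rg>/providers/
    #           Microsoft.Sql/servers/<server>/databases/<db>
    parts = essentials["alertTargetIds"][0].split("/")
    return {
        "subscription_id": parts[2],
        "resource_group": parts[4],
        "resource_type": f"{parts[6]}/{parts[7]}",
        "server": parts[8],
        "database": parts[-1],
        # Scale only when the alert fires, not when it resolves.
        "should_scale": essentials["monitorCondition"] in ("Activated", "Fired"),
    }
```

Because the positions depend on the resource ID layout, an alert scoped to anything other than a single SQL database would need different indexes.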

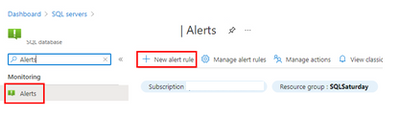

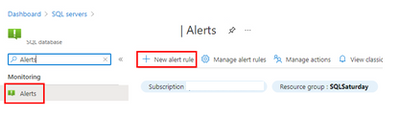

Step #3: create Azure Monitor Alert to trigger the Automation runbook

On your Azure SQL Database, create a new alert rule:

The next blade will require several different setups:

- Scope of the alert: this will be auto-populated if +New Alert Rule was clicked from within the database itself.

- Condition: when the alert should be triggered, defined by selecting a signal and its logic.

- Actions: when the alert gets triggered, what will happen?

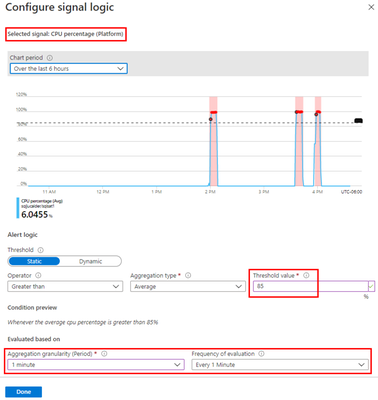

Condition

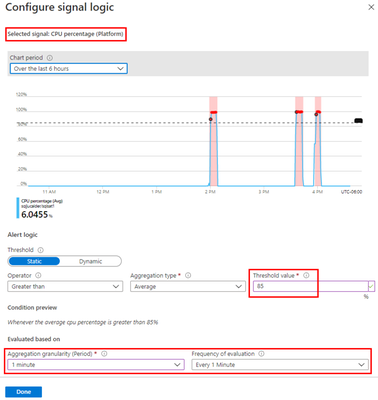

For this example, the alert will monitor the CPU consumption every 1 minute. When the average goes over 85%, the alert will be triggered:
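As a mental model of this condition, the sketch below averages the most recent per-minute CPU samples and compares the result to the threshold. It is a simplification for illustration, not how Azure Monitor evaluates rules internally; the 5-sample window reflects the 5-minute period mentioned in the introduction, and the function name is hypothetical.

```python
from statistics import mean


def alert_fires(cpu_samples, threshold=85.0, window=5):
    """Return True when the average of the last `window` samples exceeds `threshold`.

    cpu_samples: per-minute CPU percentages, oldest first.
    """
    if len(cpu_samples) < window:
        return False  # not enough data to evaluate the window yet
    return mean(cpu_samples[-window:]) > threshold
```

Averaging over a window keeps a single short spike from triggering a scale operation.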

Actions

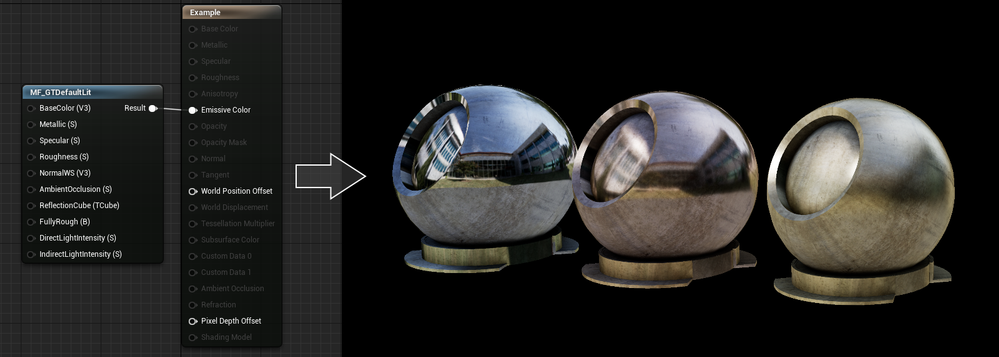

After the signal logic is created, we need to tell the alert what to do when it gets fired. We will do this with an action group. When creating a new action group, two tabs will help us configure sending an email and triggering the runbook:

Notifications

Actions

After saving the action group, add the remaining details to the alert.

That’s it! The alert is now enabled and will auto-scale the database when fired. The runbook is executed twice per alert, once when the alert fires and once when it resolves, but it performs a scale operation only when fired.

by Contributed | Apr 19, 2021 | Technology

We are pleased to announce an update to the Azure HPC Cache service!

HPC Cache helps customers enable High Performance Computing workloads in Azure Compute by providing low-latency, high-throughput access to Network Attached Storage (NAS) environments. HPC Cache runs in Azure Compute, close to customer compute, but has the ability to access data located in Azure as well as in customer datacenters.

Preview Support for Blob NFS 3.0

The Azure Blob team introduced preview support for the NFS 3.0 protocol this past fall. This change enables the use of both NFS 3.0 and REST access to storage accounts, moving cloud storage further along the path to a multi-tiered, multi-protocol storage platform. It empowers customers to run their file-dependent workloads directly against blob containers using the NFS 3.0 protocol.

There are certain situations where caching NFS data makes good sense. For example, your workload might run across many virtual machines and require lower latency than what the NFS endpoint provides. Adding the HPC Cache in front of the container will provide sub-millisecond latencies and improved client scalability. This makes the joint NFS 3.0 endpoint and HPC Cache solution ideal for scale-out read-heavy workloads such as genomic secondary analysis and media rendering.

Also, certain applications might require NLM interoperability, which is unsupported for NFS-enabled blob storage. HPC Cache responds to client NLM traffic and manages lock requests as the NLM service. This capability further enables file-based applications to go all-in to the cloud.

Using HPC Cache’s Aggregated Namespace, you can build a file system that incorporates your NFS 3.0-enabled containers into a single directory structure – even if you have multiple storage accounts and containers that you want to operate against. And you can also add your on-premises NAS exports into the namespace, for a truly hybrid file system!

HPC Cache support for NFS 3.0 is in preview. To use it, simply configure a Storage Target of type “ADLS-NFS” and point it at your NFS 3.0-enabled container.

Customer-Managed Key Support

HPC Cache has had support for CMK-enabled cache disks since mid-2020, but it was limited to specific regions. As of now, you can use CMK-enabled cache disks in all regions where CMK is supported.

Zone-Redundant Storage (ZRS) Blob Containers Support for Blob-As-POSIX

Blob-as-POSIX is a 100% POSIX compliant file system overlaid on a container. Using Blob-as-POSIX, HPC Cache can provide NAS support for all POSIX file system behaviors, including hard links. As of April 2nd, you can use both ZRS and LRS container types.

Custom DNS and NTP Server Support

Typically, HPC Cache will use the built-in Azure DNS and NTP services. When using HPC Cache and your on-premises NAS environment, there are some situations where you might want to use your own DNS and NTP servers. This special configuration is now supported in HPC Cache. Note that using your own servers in this case requires additional network configuration and you should consult with your Azure technical partners for further information. You can find more documentation here.

Client Access Policies

Traditional NAS environments support export policies that restrict access to an export based on networks or host information. Further, they typically allow the remapping of root to another UID, known as root squash. HPC Cache now offers the ability to configure such policies, called client access policies, on the junction path of your namespace. Further, you will be able to squash root to both a unique UID and GID value.
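The behavior of such a policy can be pictured as a list of rules matched against the client address, with root optionally remapped. The sketch below is a generic model of export-policy evaluation, not HPC Cache's configuration format: the `AccessRule` type, first-match semantics, and the default of 65534 (the conventional "nobody" ID) are all assumptions.

```python
import ipaddress
from dataclasses import dataclass


@dataclass
class AccessRule:
    """Hypothetical rule: which network it covers and how root is treated."""
    network: str
    root_squash: bool = True
    anon_uid: int = 65534
    anon_gid: int = 65534


def effective_ids(rules, client_ip, uid, gid):
    """Return the (uid, gid) the server would use, or None if access is denied."""
    addr = ipaddress.ip_address(client_ip)
    for rule in rules:  # assumed first-match semantics
        if addr in ipaddress.ip_network(rule.network):
            if rule.root_squash and uid == 0:
                return rule.anon_uid, rule.anon_gid
            return uid, gid
    return None  # no rule covers this client
```

For example, with a single rule for `10.0.0.0/24`, a root user on `10.0.0.5` is mapped to the anonymous IDs while a regular user keeps their own.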

Extended Groups Support

HPC Cache now supports the use of NFS auxiliary groups, which are additional GIDs that might be configured for a given UID. Any group count above 16 falls into the auxiliary, or extended, group definition. HPC Cache now supports the use of such group integration with your existing directory mechanisms (such as Active Directory or LDAP, or even a recurring file upload of these definitions). Using HPC Cache in combination with Azure NetApp Files, for example, allows you to leverage your extended groups.
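The 16-group boundary comes from the NFS AUTH_SYS credential, which carries at most 16 group IDs per request. The sketch below shows how a user's group list splits at that boundary; the function name is hypothetical, and how HPC Cache resolves the overflow from a directory service is not modeled.

```python
AUTH_SYS_MAX_GROUPS = 16  # group IDs an AUTH_SYS credential can carry


def split_groups(gids):
    """Split a user's GIDs into those sent inline and those needing a directory lookup."""
    unique = list(dict.fromkeys(gids))  # drop duplicates, keep order
    return unique[:AUTH_SYS_MAX_GROUPS], unique[AUTH_SYS_MAX_GROUPS:]
```

A user whose second list is non-empty is one who depends on extended groups support to be authorized correctly.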

Get Started

To create storage cache in your Azure environment, start here to learn more about HPC Cache. You also can explore the documentation to see how it may work for you.

Tell Us About It!

Building features in HPC Cache that help support hybrid HPC architectures in Azure is what we are all about! Try HPC Cache, use it, and tell us about your experience and ideas. You can post them on our feedback forum.
