
Mithun Prasad, PhD, Senior Data Scientist at Microsoft, and Manprit Singh, CSA at Microsoft

Speech is an essential form of communication that generates a lot of data. As more systems support speech as an interface modality, it becomes critical to be able to analyze human-to-computer interactions. Market trends suggest that voice is the future of UI, a claim further bolstered by people's growing preference for contactless surfaces since the recent pandemic.

Interactions between agents and customers in a contact center remain dark data that is often untapped. We believe the ability to transcribe speech in local dialects and slang should be central to a call center's advanced analytics road map, such as the one proposed in this McKinsey recommendation. To enable this, we want to bring together the best of the current speech transcription landscape and present it in a coherent platform that businesses can leverage to get a head start on local speech-to-text adaptation use cases.

There is tremendous interest in Singapore to understand Singlish.

Singlish is a local form of English in Singapore that blends words borrowed from the cultural mix of communities.

An example of what Singlish looks like

A speech recognition system that can interpret and process the unique vocabulary used by Singaporeans (including Singlish and dialects) in daily conversations would be very valuable. Such an automatic speech transcription system could be deployed at government agencies and companies to help frontline officers capture relevant, actionable information while they focus on interacting with customers or service users and addressing their queries and concerns.

Efforts are under way to transcribe emergency calls made to the Singapore Civil Defence Force (SCDF), and AI Singapore has launched Speech Lab to channel efforts in this direction. With the release of the IMDA National Speech Corpus, local AI developers can now customize AI solutions with locally accented speech data.

IMDA National Speech Corpus

The Infocomm Media Development Authority (IMDA) of Singapore has released a large dataset comprising:

• A three-part speech corpus, each part with 1,000 hours of recordings of phonetically balanced scripts from ~1,000 local English speakers.

• Audio recordings of words describing people, daily life, food, locations and brands commonly found in Singapore. These were recorded in quiet rooms using a combination of microphones and mobile phones to add acoustic variety.

• Text files containing the transcripts. Of note, these include Singlish terms such as ‘ar’ and ‘lor’.

This is a boon for the open AI community in accelerating speech adaptation. With such efforts, the local AI community and businesses are poised for major breakthroughs in Singlish in the coming years.

We used the IMDA National Speech Corpus as a starting point to see how adding customized audio snippets from locally accented speakers improves transcription accuracy. The chart below summarizes the uptick. Without any customization, transcription accuracy on the holdout set was 73%. As more data snippets were added, accuracy rose, confirming that with the right datasets of human-annotated speech snippets we can drive accuracy up.

Left: the uplift in accuracy. Right: the corresponding drop in Word Error Rate as more audio snippets are added.

Keeping human in the loop

Our speech recognition models learn from humans through “human-in-the-loop learning”. Human-in-the-loop machine learning is when humans and machine learning processes interact to achieve one or more of the following:

- Making Machine Learning more accurate

- Getting Machine Learning to the desired accuracy faster

- Making humans more accurate

- Making humans more efficient

An illustration of what a human in the loop looks like is as follows.

In a nutshell, human-in-the-loop learning gives AI the right calibration at appropriate junctures. An AI model starts learning a task, and its performance can eventually plateau. Timely interventions by a human in this loop can give the model the right nudge.

“Transfer learning will be the next driver of ML success.” (Andrew Ng, in his Neural Information Processing Systems (NIPS) 2016 tutorial)
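One hypothetical way to decide when that human nudge is due is to track the model's WER across evaluation rounds and flag a plateau. The sketch below is not the authors' method: the function name `needs_human_nudge`, its `window` and `min_improvement` thresholds, and the WER values are all made up for illustration.

```python
from typing import Sequence


def needs_human_nudge(wer_history: Sequence[float],
                      window: int = 3,
                      min_improvement: float = 0.005) -> bool:
    """Return True when WER has stopped improving meaningfully.

    Hypothetical heuristic: compare the latest WER with the WER `window`
    evaluation rounds earlier. If the absolute drop is smaller than
    `min_improvement`, treat the model as plateaued so that a human
    annotator can be asked for new labelled snippets.
    """
    if len(wer_history) <= window:
        return False  # not enough rounds yet to judge a plateau
    improvement = wer_history[-1 - window] - wer_history[-1]
    return improvement < min_improvement


# Made-up WER values, one per evaluation round.
history = [0.27, 0.22, 0.19, 0.188, 0.187, 0.186]
print(needs_human_nudge(history))  # True: WER fell only 0.004 over 3 rounds
```

A fixed look-back window keeps the rule simple; a real pipeline might instead use a validation-loss schedule or annotation budget, which the article does not specify.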

Not everybody has access to large volumes of call center logs and conversation recordings from local speakers, which are key sources of data for training localized speech transcription AI. Given the lack of significant amounts of locally accented data with ground-truth annotations, and our belief that transfer learning is a powerful driver in accelerating AI development, we leverage existing models and maximize their ability to understand local accents.

The framework leaves extensive room for human-in-the-loop learning and can connect with AI models from both cloud providers and open-source projects. Its components include:

- The speech-to-text model can be any Automatic Speech Recognition (ASR) engine or Custom Speech API, running in the cloud or on premises. The platform is designed to be agnostic to the ASR technology being used.

- Search for ground-truth snippets. When a transcription result is available, a quick search of the training records can show, for example, how many training records contain a given term.

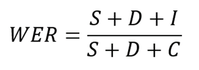
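As a minimal sketch of such a search, assuming the training transcripts are stored as tab-separated `audio file name<TAB>transcript` lines (a layout chosen here for illustration, not necessarily the platform's), the hypothetical helper below lists which training records contain a given single word; the length of that list is the record count.

```python
import io
from typing import List, TextIO


def search_training_records(records: TextIO, term: str) -> List[str]:
    """Return the audio file names whose transcript contains `term`.

    Assumes a hypothetical layout of one record per line:
    <audio file name>\t<human-labelled transcript>
    Matching is case-insensitive and on whole (single) words.
    """
    term = term.lower()
    hits = []
    for line in records:
        line = line.rstrip("\n")
        if not line:
            continue  # skip blank lines
        filename, _, transcript = line.partition("\t")
        if term in transcript.lower().split():
            hits.append(filename)
    return hits


# Hypothetical training records for illustration only.
training = io.StringIO(
    "a001.wav\tdont be so kaypoh lah\n"
    "a002.wav\tthe food here is shiok\n"
    "a003.wav\twhy you so kaypoh\n"
)
matches = search_training_records(training, "kaypoh")
print(len(matches), matches)  # 2 ['a001.wav', 'a003.wav']
```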

- Breakdown of Word Error Rates (WER): The industry-standard measure for Automatic Speech Recognition (ASR) systems is the Word Error Rate, defined as

$$\mathrm{WER} = \frac{S + D + I}{N}$$

where S is the number of words substituted, D the number of words deleted, and I the number of words inserted by the ASR engine, and N is the number of words in the human-labelled (reference) transcript.

A simple example is shown below, with 1 deletion, 1 insertion and 1 substitution against a human-labelled transcript of 5 words.

Word Error Rate comparison between ground truth and transcript (Source: https://docs.microsoft.com/en-us/azure/cognitive-services/speech-service/how-to-custom-speech-evaluate-data)

The WER of this result is therefore 3/5 = 0.6. Most ASR engines return the overall WER, and some also return the split between insertions, deletions and substitutions.

In our platform, we provide a detailed split between insertions, substitutions and deletions.
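The split can be computed by aligning the reference and the hypothesis word by word with the standard dynamic-programming (Levenshtein) edit distance and then walking back through the table. This is a minimal sketch, not the platform's implementation; the function name `wer_breakdown` and the example sentences are hypothetical. When several alignments share the same minimum cost, other tools may break ties differently and report a different S/D/I split for the same total.

```python
from typing import Dict, List


def wer_breakdown(reference: str, hypothesis: str) -> Dict[str, float]:
    """Align two transcripts word by word and count S, D and I.

    Returns the counts plus WER = (S + D + I) / N, where N is the
    number of reference words.
    """
    ref: List[str] = reference.split()
    hyp: List[str] = hypothesis.split()
    n, m = len(ref), len(hyp)
    # cost[i][j] = minimum edits turning ref[:i] into hyp[:j]
    cost = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        cost[i][0] = i  # delete all i reference words
    for j in range(1, m + 1):
        cost[0][j] = j  # insert all j hypothesis words
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            sub = 0 if ref[i - 1] == hyp[j - 1] else 1
            cost[i][j] = min(cost[i - 1][j - 1] + sub,  # match or substitution
                             cost[i - 1][j] + 1,        # deletion
                             cost[i][j - 1] + 1)        # insertion
    # Walk back from the bottom-right cell, attributing each edit.
    s = d = ins = 0
    i, j = n, m
    while i > 0 or j > 0:
        mismatch = i > 0 and j > 0 and ref[i - 1] != hyp[j - 1]
        if i > 0 and j > 0 and cost[i][j] == cost[i - 1][j - 1] + mismatch:
            s += mismatch
            i, j = i - 1, j - 1
        elif i > 0 and cost[i][j] == cost[i - 1][j] + 1:
            d += 1
            i -= 1
        else:
            ins += 1
            j -= 1
    wer = (s + d + ins) / n if n else 0.0  # guard against an empty reference
    return {"S": s, "D": d, "I": ins, "N": n, "WER": wer}


result = wer_breakdown("the cat sat on mat", "cat set on the mat")
print(result)  # {'S': 1, 'D': 1, 'I': 1, 'N': 5, 'WER': 0.6}
```

This reproduces the shape of the example above: 1 deletion ("the"), 1 substitution ("sat" to "set") and 1 insertion ("the") over 5 reference words.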

- The platform has ready-made interfaces that let human annotators plug in audio files with their labelled transcriptions to augment the training data.

- It ships with dashboards that show detailed substitutions, such as how often the term ‘kaypoh’ was transcribed as ‘people’.
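A substitution dashboard of this kind needs a tally of (reference word, transcribed word) pairs across many utterances. The sketch below assumes Python's standard-library `difflib.SequenceMatcher` as a lightweight word aligner; its matching heuristic is not a minimum-edit-distance alignment, so counts can differ slightly from a WER alignment. The helper `substitution_counts` and the example transcripts are hypothetical.

```python
from collections import Counter
from difflib import SequenceMatcher
from typing import Iterable, Tuple


def substitution_counts(pairs: Iterable[Tuple[str, str]]) -> Counter:
    """Tally (reference word, transcribed word) substitutions over utterances.

    Inside each 'replace' block, words are paired position by position;
    leftover words in an unequal-length block are deletions or insertions
    and are not counted here.
    """
    counts: Counter = Counter()
    for reference, hypothesis in pairs:
        ref, hyp = reference.lower().split(), hypothesis.lower().split()
        matcher = SequenceMatcher(a=ref, b=hyp, autojunk=False)
        for tag, i1, i2, j1, j2 in matcher.get_opcodes():
            if tag == "replace":
                counts.update(zip(ref[i1:i2], hyp[j1:j2]))
    return counts


# Hypothetical (ground truth, ASR output) pairs for illustration only.
pairs = [
    ("dont be so kaypoh lah", "dont be so people la"),
    ("why you so kaypoh", "why you so people"),
    ("can or not", "can or not"),
]
counts = substitution_counts(pairs)
print(counts[("kaypoh", "people")])  # 2
print(counts.most_common(1))  # [(('kaypoh', 'people'), 2)]
```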

The crux of the platform is the ability to steer transcription accuracy: it gives a detailed overview of how often the engine struggles to transcribe certain vocabulary and allows humans to give the model the right nudges.

References and useful links

- https://yourstory.com/2019/03/why-voice-is-the-future-of-user-interfaces-1z2ue7nq80

- https://www.mckinsey.com/business-functions/operations/our-insights/how-advanced-analytics-can-help-contact-centers-put-the-customer-first

- https://www.straitstimes.com/singapore/automated-system-transcribing-995-calls-may-also-recognise-singlish-shanmugam

- https://www.aisingapore.org/2018/07/ai-singapore-harnesses-advanced-speech-technology-to-help-organisations-improve-frontline-operations/

- https://livebook.manning.com/book/human-in-the-loop-machine-learning/chapter-1/v-6/17

- https://www.youtube.com/watch?v=F1ka6a13S9I

- https://ruder.io/transfer-learning/

- https://www.imda.gov.sg/programme-listing/digital-services-lab/national-speech-corpus

*** This work was performed in collaboration with Avanade Data & AI and Microsoft.

