
Introduction

The Azure platform lets you scale SAP applications on demand. Broadly, scaling can be achieved in two ways:

- Vertical Scaling – Also referred to as Scale Up/Scale Down, this is achieved by resizing the virtual machine up or down as required. This approach is mostly suitable for SAP databases.

- Horizontal Scaling – Also referred to as Scale Out/Scale In, this is achieved by adding or removing virtual machine instances. This approach is better suited for SAP application servers.

In this blog we will look at an approach to autoscaling SAP application servers in Azure using the horizontal scaling method.

Overview

An autoscaling strategy typically involves the following pieces:

- Telemetry collection – This involves capturing the key application metrics to gauge the performance of the application.

- Decision making component – The component that evaluates the metrics against set thresholds and decides whether scaling needs to be triggered.

- Scaling Components – Components involved in scaling the application.

For detailed guidance on autoscaling best practices in general, refer to this document.

1. Telemetry Collection

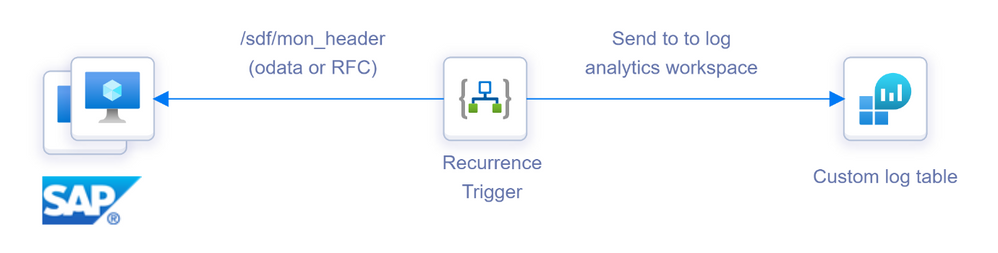

Azure Monitor provides out-of-the-box capability to capture key performance metrics of a VM, which are then stored in a Log Analytics workspace. However, accurately measuring the load on an SAP application server requires SAP-specific performance metrics such as work process utilization, user sessions, and SAP application memory usage. SAP provides a snapshot monitoring utility, SMON (or /SDF/MON), which collects this information and stores it in a transparent table (header table) within the SAP database. One way of getting this data into a Log Analytics workspace is to use a Logic App for ingestion.

In this architecture, a Logic App ingests the SAP performance metrics from /SDF/MON_HEADER (or /SDF/SMON_HEADER, depending on what is scheduled) into a custom log table in the Log Analytics workspace. The Logic App can be scheduled with a recurrence trigger to periodically pull data from SAP using a time filter and push it into the Log Analytics workspace. In addition to forming the basis for autoscaling, getting /SDF/MON data into Log Analytics has other advantages:

- The data can be used to create real-time performance dashboards of the SAP systems.

- It enables longer retention of SAP performance data in cheaper storage rather than within the SAP database.

A sample implementation of this can be found in this GitHub repo: https://github.com/karthikvenkat17/sapautoscaling
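To make the "pull with a time filter, then push" step more concrete, here is a minimal Python sketch of the same idea. It is not taken from the repo above and it is not how a Logic App is authored. The row shape, the function names, and the record keys are assumptions chosen so that the keys line up with the custom log columns used later in this post (Log Analytics appends the `_s` and `_d` type suffixes). The actual SAP read and the Log Analytics write are deliberately left out.

```python
import json
from datetime import datetime, timedelta, timezone


def select_new_rows(rows, last_seen):
    """Return rows newer than last_seen plus the new high-water mark.

    rows      -- list of dicts with a timezone-aware 'timestamp' (hypothetical shape)
    last_seen -- timestamp of the newest row ingested by the previous run
    """
    fresh = sorted(
        (r for r in rows if r["timestamp"] > last_seen),
        key=lambda r: r["timestamp"],
    )
    new_mark = fresh[-1]["timestamp"] if fresh else last_seen
    return fresh, new_mark


def to_payload(rows):
    """Serialize rows as a JSON array for a custom log ingestion step."""
    records = [
        {
            "Timestamp": r["timestamp"].isoformat(),
            "Servername": r["server"],
            "Active_Dia_WPs": r["active_dia_wps"],
            "No__of_free_RFC_WPs": r["free_rfc_wps"],
            "Users": r["users"],
        }
        for r in rows
    ]
    return json.dumps(records)


if __name__ == "__main__":
    # Made-up snapshot rows, one minute apart, for illustration only.
    start = datetime(2024, 1, 1, 10, 0, tzinfo=timezone.utc)
    rows = [
        {"timestamp": start + timedelta(minutes=i), "server": "app1",
         "active_dia_wps": 3 + i, "free_rfc_wps": 5, "users": 20}
        for i in range(3)
    ]
    fresh, mark = select_new_rows(rows, last_seen=start)
    print(to_payload(fresh))
```

Persisting the returned high-water mark between runs is what keeps each scheduled run from re-ingesting rows it has already pushed.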

2. Decision making component

Once the required performance data is collected, the next step is to set up a detection mechanism on one or more of the collected attributes. Because the required telemetry is available in the Log Analytics workspace, Azure Monitor can initiate actions using log alerts. Log alerts let users run a query to evaluate resource logs at a set frequency and fire an alert based on the results. Rules can trigger one or more actions using Action Groups.

For example, for an SAP application server with 10 dialog work processes and user sessions capped at 60, a sample query for high load would look like this:

SAPPerfmon_CL

| where No__of_free_RFC_WPs_d <= 1 or Active_Dia_WPs_d >= 8 or Users_d > 50

| summarize count() by Servername_s

Similarly, a sample query for low load would look like this:

SAPPerfmon_CL

| where No__of_free_RFC_WPs_d >= 7 and Active_Dia_WPs_d <= 2 and Users_d < 50

| summarize count() by Servername_s
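For readers less familiar with Kusto, the two conditions above can be mirrored in a few lines of Python. This is only an illustration of the logic, not something Azure Monitor runs. The dictionary keys are hypothetical stand-ins for the `_d` and `_s` columns, and the thresholds are copied from the sample queries.

```python
from collections import Counter


def is_high_load(sample):
    """Same condition as the high-load query: any one signal is enough."""
    return (
        sample["free_rfc_wps"] <= 1
        or sample["active_dia_wps"] >= 8
        or sample["users"] > 50
    )


def is_low_load(sample):
    """Same condition as the low-load query: all signals must agree."""
    return (
        sample["free_rfc_wps"] >= 7
        and sample["active_dia_wps"] <= 2
        and sample["users"] < 50
    )


def count_by_server(samples, predicate):
    """Rough equivalent of `summarize count() by Servername_s`."""
    return Counter(s["server"] for s in samples if predicate(s))
```

Note the asymmetry: high load uses `or`, so a single saturated resource is enough to count a row, while low load uses `and`, so a server only counts as idle when every signal agrees.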

Once you have defined the query, use Threshold, Frequency, and Period to control when alerts fire. Note that log alerts are stateless: an alert fires each time the condition is met, even if it fired previously. Use the Suppress Alert feature in Azure Monitor to prevent alerts from being triggered continuously for a period after the first occurrence.
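The interaction between a stateless threshold check and a suppression window can be sketched as follows. This is a conceptual model only, not Azure Monitor's implementation. The class name, the "strictly greater than threshold" comparison, and the 30-minute window are assumptions made for the example.

```python
from datetime import datetime, timedelta


class AlertRule:
    """Stateless threshold check wrapped with a suppression window."""

    def __init__(self, threshold, suppress_for):
        self.threshold = threshold        # matching rows needed in one period
        self.suppress_for = suppress_for  # quiet time after an alert fires
        self._last_fired = None

    def evaluate(self, match_count, now):
        """Return True if an alert should fire for this evaluation."""
        if match_count <= self.threshold:
            return False
        if (self._last_fired is not None
                and now - self._last_fired < self.suppress_for):
            return False  # condition met, but still inside the quiet window
        self._last_fired = now
        return True


rule = AlertRule(threshold=3, suppress_for=timedelta(minutes=30))
start = datetime(2024, 1, 1, 10, 0)
first = rule.evaluate(5, start)                          # fires
repeat = rule.evaluate(5, start + timedelta(minutes=5))  # suppressed
```

Without the suppression check, every evaluation that meets the threshold would trigger another scaling action while the previous one is still in progress.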

3. Scaling Components

Now that the alert-triggering mechanism is in place, the next step is to carry out the scaling action (Scale Out or Scale In) as required. There are a couple of ways of approaching this.

- Use pre-built SAP instances that are snoozed, and start or stop them based on the alert criteria. This can be achieved using an Azure Automation runbook.

- Create or delete application servers and add them to or remove them from the required groups dynamically. The high-level architecture of this option is shown below.

Scale Out Architecture

Scale In Architecture

A detailed explanation of this architecture and a sample implementation can be found in this GitHub repo: https://github.com/karthikvenkat17/sapautoscaling
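For the first option (pre-built, snoozed instances), the decision a runbook has to make can be sketched as a small pure function. This is an illustrative sketch rather than the implementation in the repo. The alert labels, the function name, and the `min_running` baseline are hypothetical, and the actual start/stop calls against Azure and SAP are omitted.

```python
def plan_action(alert, running, stopped, min_running=1):
    """Pick the next action for a pool of pre-built application servers.

    alert   -- "scale_out" or "scale_in" (hypothetical alert labels)
    running -- names of instances currently serving load
    stopped -- names of snoozed instances available to start
    """
    if alert == "scale_out":
        if stopped:
            return ("start", stopped[0])
        return ("none", None)  # pool exhausted; nothing left to start
    if alert == "scale_in":
        if len(running) > min_running:
            return ("stop", running[-1])
        return ("none", None)  # never drop below the static baseline
    raise ValueError(f"unknown alert type: {alert!r}")
```

Keeping the decision separate from the side effects makes it easy to check the edge cases (empty pool, baseline reached) before wiring it to real start and stop operations.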

Things to Consider

- As you scale out SAP application servers, ensure that the SAP database has enough capacity to handle the load generated by the maximum application server count.

- If there are known patterns of workload spikes, consider autoscaling on a schedule rather than on metrics. Reserve metric-based autoscaling for unforeseen spikes in workload.

- Keep an adequate margin between the Scale Out and Scale In thresholds to avoid continuous flapping. For example, if Scale Out happens at 90% dialog work process utilization, set Scale In at a low value such as 20% utilization.

- For dynamic application server creation, only addition to and removal from Dialog/RFC server groups is considered. If application servers need to be added to or removed from Batch/Update groups, this requires a custom RFC that can then be called from the Logic App (as there are no standard RFCs available for this functionality).

- Tune the SAP Soft shutdown timeout settings to fit the kind of traffic the dynamic SAP instances are going to serve. See here for details.

- Consider a mechanism to handle any batch jobs that may start on the dynamically added application servers. The soft shutdown command waits for batch or update tasks to finish until the timeout is reached, but if there are known long-running jobs or jobs that impact business processes, consider creating a separate batch group for them that includes only the static instances.

- If a message server ACL file is used, it needs to be updated when new application servers are added dynamically.

- For new VMs created as part of autoscaling, ensure that any additional monitoring required is set up as part of the post-deployment steps.
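Two of the considerations above, the margin between thresholds and the cap imposed by database capacity, can be combined in a short sketch. The 90% and 20% thresholds come from the example in the list. The function name, the one-server step size, and the `min_count`/`max_count` defaults are assumptions for illustration.

```python
def desired_count(current, utilization, scale_out_at=0.90, scale_in_at=0.20,
                  min_count=1, max_count=4):
    """Return the target number of application servers.

    utilization -- dialog work process utilization as a fraction (0.0 to 1.0)
    max_count   -- cap sized to what the database can serve (assumed value)
    """
    if utilization >= scale_out_at:
        return min(current + 1, max_count)
    if utilization <= scale_in_at:
        return max(current - 1, min_count)
    return current  # inside the dead band: hold steady to avoid flapping
```

The wide band between the two thresholds is what prevents flapping: after a Scale Out, utilization per server drops, but it would have to fall all the way to 20% before a Scale In is considered.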
