
Tracking your project’s software dependencies is an integral part of the machine learning lifecycle. But managing these dependencies and ensuring reproducibility can be challenging, which leads to delays in the training and deployment of models. Azure Machine Learning Environments capture the Python packages and Docker settings that are used in machine learning experiments, including data preparation, training, and deployment to a web service. We are excited to announce the following feature releases:

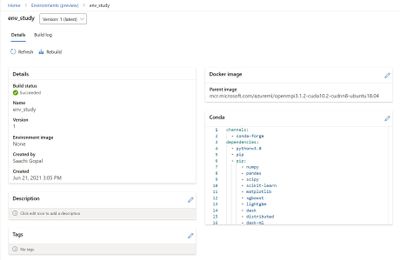

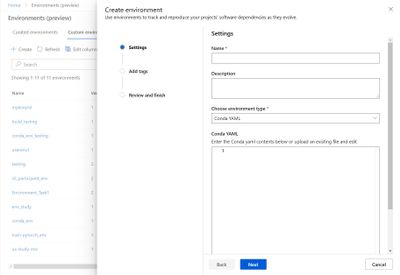

Environments UI in Azure Machine Learning studio

The new Environments UI in Azure Machine Learning studio is now in public preview.

- Create and edit environments through the Azure Machine Learning studio.

- Browse custom and curated environments in your workspace.

- View details about properties, dependencies (Docker and Conda layers), and image build logs.

- Edit tags and descriptions, and rebuild existing environments.

Curated Environments

Curated environments are provided by Azure Machine Learning and are available in your workspace by default. They are backed by cached Docker images that use the latest version of the Azure Machine Learning SDK and support popular machine learning frameworks and packages, which reduces the run preparation cost and shortens deployment time. Environment details and their Dockerfiles can be viewed through the Environments UI in the studio. Use these environments to quickly get started with PyTorch, TensorFlow, scikit-learn, and more.
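
As a rough illustration of browsing curated and custom environments from code rather than the UI, the sketch below separates environment names into the two groups. It assumes the Python SDK v1 (`azureml-core`), a workspace `config.json` on disk, and the convention that curated environment names start with `AzureML`; none of these details come from this article, and the environment names in the usage note are hypothetical.

```python
from typing import Iterable, List, Tuple

# Assumption: curated environment names start with this prefix.
CURATED_PREFIX = "AzureML"


def split_curated(names: Iterable[str]) -> Tuple[List[str], List[str]]:
    """Split environment names into (curated, custom) by name prefix."""
    curated: List[str] = []
    custom: List[str] = []
    for name in names:
        if name.startswith(CURATED_PREFIX):
            curated.append(name)
        else:
            custom.append(name)
    return curated, custom


def list_workspace_environment_names() -> List[str]:
    """Return the names of all environments in the workspace.

    Assumption: azureml-core is installed and a workspace config.json is
    available. The import is local so the helper above works without the SDK.
    """
    from azureml.core import Environment, Workspace

    ws = Workspace.from_config()
    # Environment.list returns a dictionary keyed by environment name.
    return list(Environment.list(workspace=ws).keys())
```

For example, `split_curated(["AzureML-example-cpu", "my-team-env"])` places the first (hypothetical) name in the curated group and the second in the custom group.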

Inference Prebuilt Docker Images

At Microsoft Build 2021 we announced the public preview of prebuilt Docker images and curated environments for inferencing workloads. These Docker images come with popular machine learning frameworks and Python packages. They are optimized for inferencing only and are provided for both CPU-based and GPU-based scenarios. They are published to Microsoft Container Registry (MCR). Customers can pull the images directly from MCR or use Azure Machine Learning curated environments. The complete list of inference images is documented here: List of Prebuilt images and curated environments.

The differences between the current base images and the inference prebuilt Docker images:

- The prebuilt Docker images run as non-root.

- The inference images are smaller than the current base images, which improves model deployment latency.

- If you want to add extra Python dependencies on top of our prebuilt images, you can do so without triggering an image build during model deployment. Our Python package extensibility solution provides two ways to install these packages:

  - Dynamic installation: This method is recommended for rapid prototyping. In this solution, we dynamically install extra Python packages at container boot time.

    - Create a requirements.txt file alongside your score.py script.

    - Add all your required packages to the requirements.txt file.

    - Set the AZUREML_EXTRA_REQUIREMENTS_TXT environment variable in your Azure Machine Learning environment to the location of the requirements.txt file.

  - Pre-installed Python packages: This method is recommended for production deployments. In this solution, we mount the directory containing the packages.

    - Set the AZUREML_EXTRA_PYTHON_LIB_PATH environment variable and point it to the correct site-packages directory.

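
The two extensibility options above can be sketched in plain Python. This is a minimal sketch, not the official tooling: the helper names are hypothetical, the package list is only an example, and it assumes the requirements.txt location is given relative to the scoring source directory. Only the two environment variable names come from this article.

```python
import tempfile
from pathlib import Path
from typing import Dict, List


def write_requirements(source_dir: str, packages: List[str]) -> Path:
    """Write requirements.txt into the directory that holds score.py."""
    source = Path(source_dir)
    source.mkdir(parents=True, exist_ok=True)
    requirements = source / "requirements.txt"
    requirements.write_text("\n".join(packages) + "\n", encoding="utf-8")
    return requirements


def dynamic_install_vars(requirements_file: str = "requirements.txt") -> Dict[str, str]:
    """Environment variable for dynamic installation (rapid prototyping).

    Assumption: the value is a path relative to the scoring source directory.
    """
    return {"AZUREML_EXTRA_REQUIREMENTS_TXT": requirements_file}


def preinstalled_vars(site_packages_dir: str) -> Dict[str, str]:
    """Environment variable for pre-installed packages (production)."""
    return {"AZUREML_EXTRA_PYTHON_LIB_PATH": site_packages_dir}


if __name__ == "__main__":
    # Demo in a throwaway directory standing in for the scoring folder.
    with tempfile.TemporaryDirectory() as scoring_dir:
        path = write_requirements(scoring_dir, ["scikit-learn", "pandas"])
        print(path.read_text(encoding="utf-8"))
        print(dynamic_install_vars(path.name))
```

If you use the Python SDK v1, a dictionary like the ones returned here could be assigned to the `environment_variables` attribute of an `Environment` object before deployment; treat that wiring as an assumption to verify against the extensibility documentation linked below.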

https://channel9.msdn.com/Shows/Docs-AI/Prebuilt-Docker-Images-for-Inference/player

Dynamic installation:

https://docs.microsoft.com/en-us/azure/machine-learning/how-to-prebuilt-docker-images-inference-python-extensibility#dynamic-installation

Summary

Use the environments to track and reproduce your projects’ software dependencies as they evolve.

- Environments in Azure Machine Learning.

- Manage software environments in Azure Machine Learning studio.

- Curated Environments.

- Prebuilt Docker Images and curated environments for Inference.

- Python Package Extensibility solution

- Extend a Prebuilt docker image

