Help us shape Kusto data exploration experience

This article is contributed. See the original author and article here.

Please take this short survey (8 questions, 4 mins) ADX short survey to help us shape ADX data exploration experiences.

This article is contributed. See the original author and article here.

Please take this short survey (8 questions, 4 mins) ADX short survey to help us shape ADX data exploration experiences.

This article is contributed. See the original author and article here.

| Before implementing data extraction from SAP systems please always verify your licensing agreement. |

Over the last five episodes, we’ve built quite a complex Synapse Pipeline that allows extracting SAP data using OData protocol. Starting from a single activity in the pipeline, the solution grew, and it now allows to process multiple services on a single execution. We’ve implemented client-side caching to optimize the extraction runtime and eliminate short dumps at SAP. But that’s definitely not the end of the journey!

Today we will continue to optimize the performance of the data extraction. Just because the Sales Order OData service exposes 40 or 50 properties, it doesn’t mean you need all of them. One of the first things I always mention to customers, with who I have a pleasure working, is to carefully analyze the use case and identify the data they actually need. The less you copy from the SAP system, the process is faster, cheaper and causes fewer troubles for SAP application servers. If you require data only for a single company code, or just a few customers – do not extract everything just because you can. Focus on what you need and filter out any unnecessary information.

Fortunately, OData services provide capabilities to limit the amount of extracted data. You can filter out unnecessary data based on the property value, and you can only extract data from selected columns containing meaningful data.

Today I’ll show you how to implement two query parameters: $filter and $select to reduce the amount of data to extract. Knowing how to use them in the pipeline is essential for the next episode when I explain how to process only new and changed data from the OData service.

ODATA FILTERING AND SELECTION

There is a GitHub repository with source code for each episode. Learn more: https://github.com/BJarkowski/synapse-pipelines-sap-odata-public |

To filter extracted data based on the field content, you can use the $filter query parameter. Using logical operators, you can build selection rules, for example, to extract only data for a single company code or a sold-to party. Such a query could look like this:

/API_SALES_ORDER_SRV/A_SalesOrder?$filter=SoldToParty eq 'AZ001'

The above query will only return records where the field SoldToParty equals AZ001. You can expand it with logical operators ‘and’ and ‘or’ to build complex selection rules. Below I’m using the ‘or’ operator to display data for two Sold-To Parties:

/API_SALES_ORDER_SRV/A_SalesOrder/?$filter=SoldToParty eq 'AZ001' or SoldToParty eq 'AZ002'

You can mix and match fields we’re interested in. Let’s say we would like to see orders for customers AZ001 and AZ002 but only where the total net amount of the order is lower than 10000. Again, it’s quite simple to write a query to filter out data we’re not interested in:

/API_SALES_ORDER_SRV/A_SalesOrder?$filter=(SoldToParty eq 'AZ001' or SoldToParty eq 'AZ002') and TotalNetAmount le 10000.00

Let’s be honest, filtering data out is simple. Now, using the same logic, you can select only specific fields. This time, instead of the $filter query parameter, we will use the $select one. To get data only from SalesOrder, SoldToParty and TotalNetAmount fields, you can use the following query:

/API_SALES_ORDER_SRV/A_SalesOrder?$select=SalesOrder,SoldToParty,TotalNetAmount

There is nothing stopping you from mixing $select and $filter parameters in a single query. Let’s combine both above examples:

/API_SALES_ORDER_SRV/A_SalesOrder?$select=SalesOrder,SoldToParty,TotalNetAmount&$filter=(SoldToParty eq 'AZ001' or SoldToParty eq 'AZ002') and TotalNetAmount le 10000.00

By applying the above logic, the OData response time reduced from 105 seconds to only 15 seconds, and its size decreased by 97 per cent. That, of course, has a direct impact on the overall performance of the extraction process.

FILTERING AND SELECTION IN THE PIPELINE

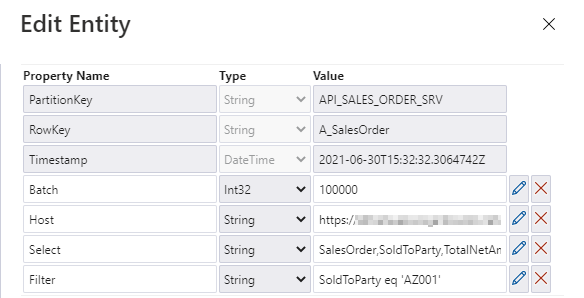

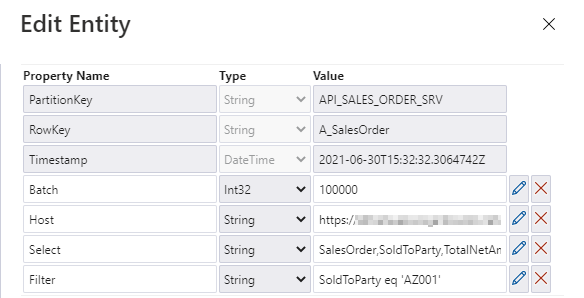

The filtering and selection options should be based on the entity level of the OData service. Each entity has a unique set of fields, and we may want to provide different filtering and selection rules. We will store the values for query parameters in the metadata store. Open it in the Storage Explorer and add two properties: filter and select.

I’m pretty sure that based on the previous episodes of the blog series, you could already implement the logic in the pipeline without my help. But there are two challenges we should be mindful of. Firstly, we should not assume that $filter and $select parameters will always contain a value. If you want to extract the whole entity, you can leave those fields empty, and we should not pass them to the SAP system. In addition, as we are using the client-side caching to chunk the requests into smaller pieces, we need to ensure that we pass the same filtering rules in the Lookup activity where we check the number of records in the OData service.

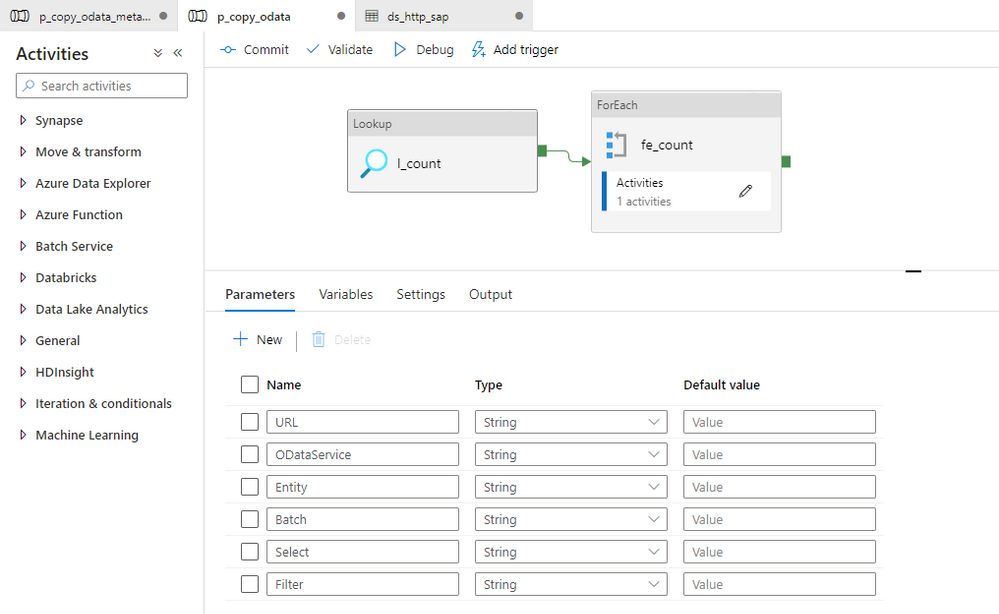

Let’s start by defining parameters in the child pipeline to pass filter and select values from the metadata table. We’ve done that already in the third episode, so you know all steps.

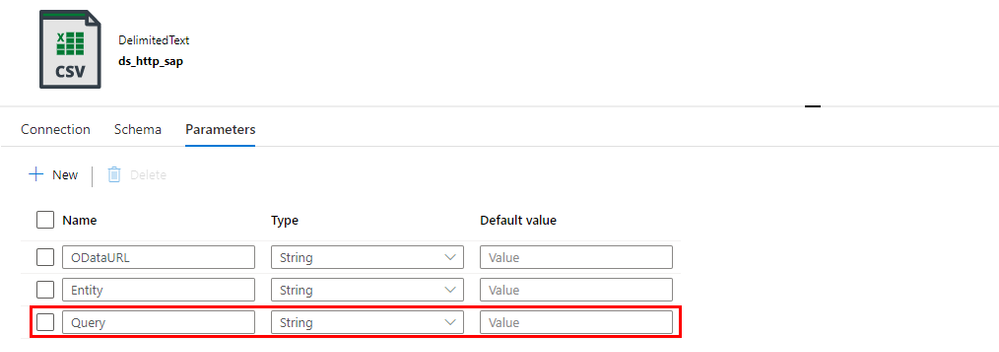

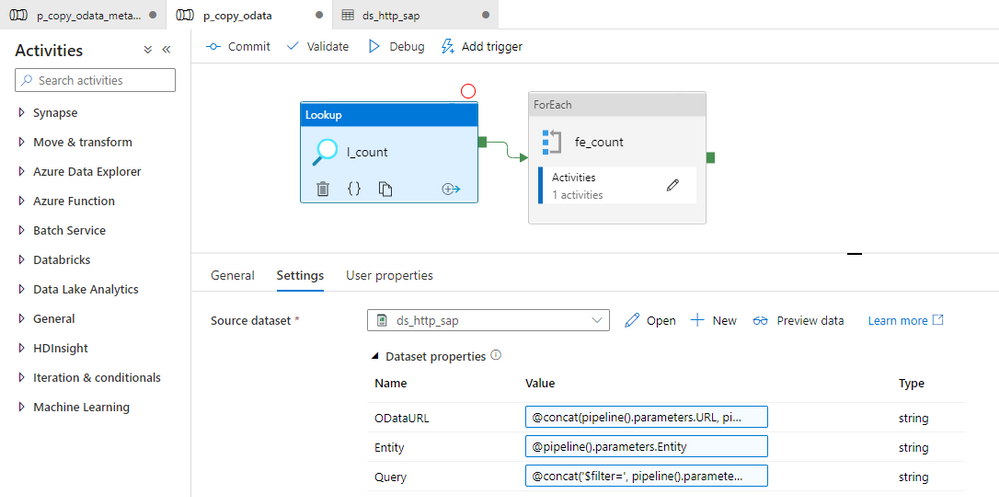

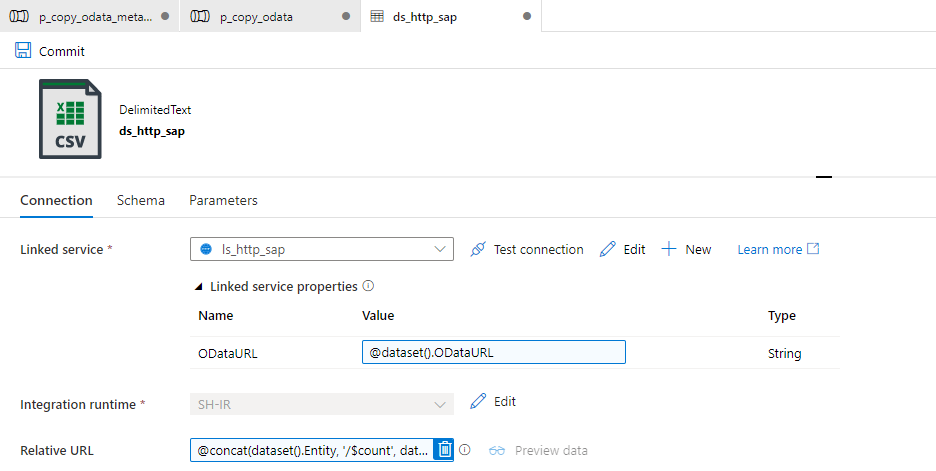

To correctly read the number of records, we have to consider how to combine these additional parameters with the OData URL in the Lookup activity. So far, the dataset accepts two dynamic fields: ODataURL and Entity. To pass the newly defined parameters, you have to add the Query one.

You can go back to the Lookup activity to define the expression to pass the $filter and $query values. It is very simple. I check if the Filter parameter in the metadata store contains any value. If not, then I’m passing an empty string. Otherwise, I concatenate the query parameter name with the value.

@if(empty(pipeline().parameters.Filter), '', concat('?$filter=', pipeline().parameters.Filter))

Finally, we can use the Query field in the Relative URL of the dataset. We already use that field to pass the entity name and the $count operator, so we have to slightly extend the expression.

@concat(dataset().Entity, '/$count', dataset().Query)

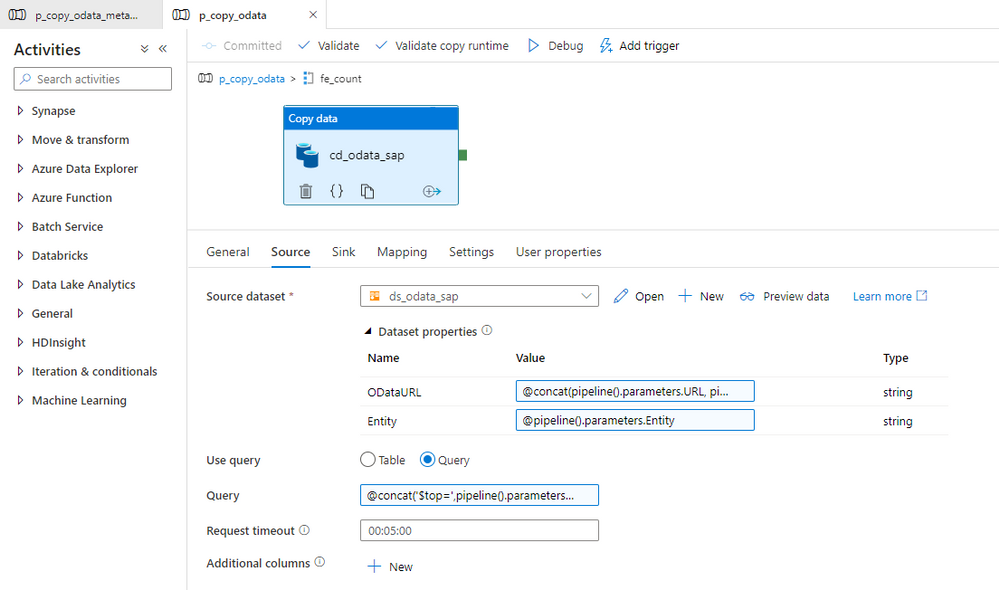

Changing the Copy Data activity is a bit more challenging. The Query field is already defined, but the expression we use should include the $top and $skip parameters, that we use for the client-side paging. Unlike at the Lookup activity, this time we also have to include both $select and $filter parameters and check if they contain a value. It makes the expression a bit lengthy.

@concat('$top=',pipeline().parameters.Batch, '&$skip=',string(mul(int(item()), int(pipeline().parameters.Batch))), if(empty(pipeline().parameters.Filter), '', concat('&$filter=',pipeline().parameters.Filter)), if(empty(pipeline().parameters.Select), '', concat('&$select=',pipeline().parameters.Select)))

With above changes, the pipeline uses filter and select values to extract only the data you need. It reduces the amount of processed data and improves the execution runtime.

IMPROVING MONITORING

As we develop the pipeline, the number of parameters and expressions grows. Ensuring that we haven’t made any mistakes becomes quite a difficult task. By default, the Monitoring view only gives us basic information on what the pipeline passes to the target system in the Copy Data activity. At the same time, parameters influence which data we extract. Wouldn’t it be useful to get a more detailed view?

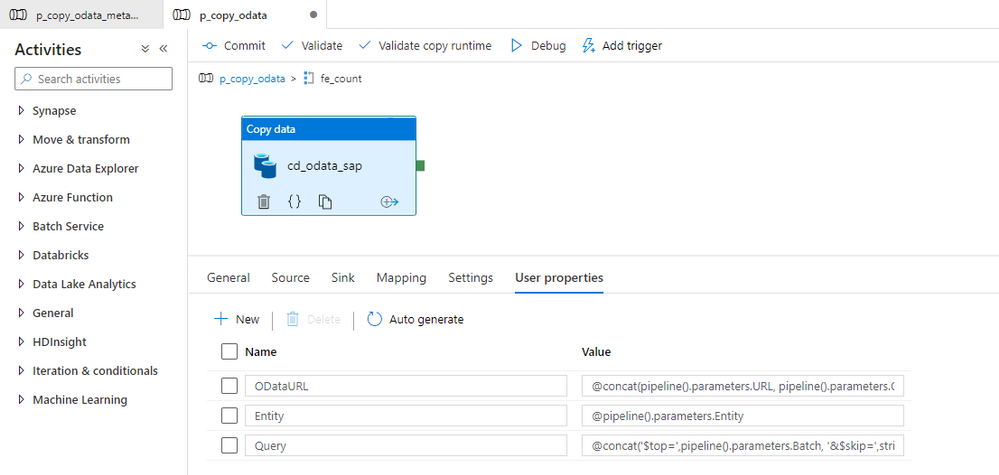

There is a way to do it! In Azure Synapse Pipelines, you can define User Properties, which are highly customizable fields that accept custom values and expressions. We will use them to verify that our pipeline works as expected.

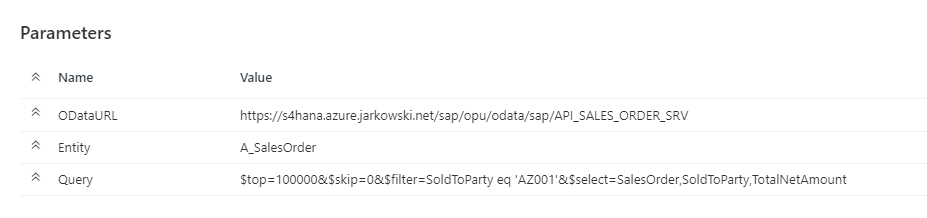

Open the Copy Data activity and select the User Properties tab. Add three properties we want to monitor – the OData URL, entity name, and the query passed to the SAP system. Copy expression from the Copy Data activity. It ensures the property will have the same value as is passed to the SAP system.

Once the user property is defined we start the extraction job.

MONITORING AND EXECUTION

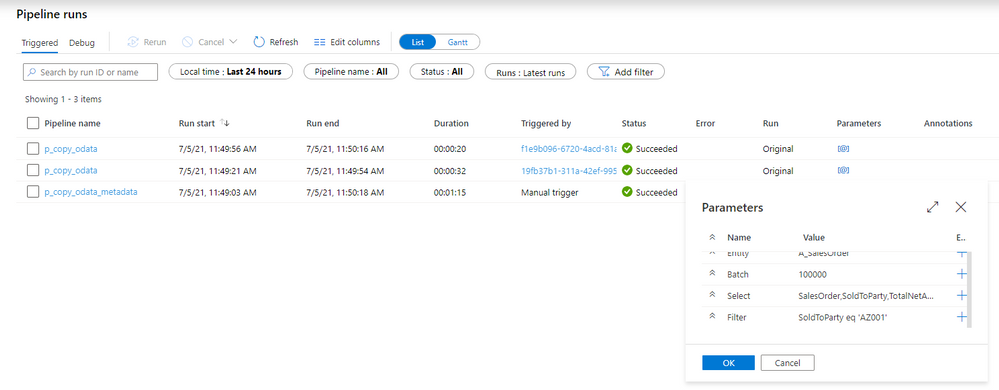

Let’s start the extraction. I process two OData services, but I have defined Filter and Select parameters to only one of them.

Once the processing has finished, open the Monitoring area. To monitor all parameters that the metadata pipeline passes to child one, click on the [@] sign:

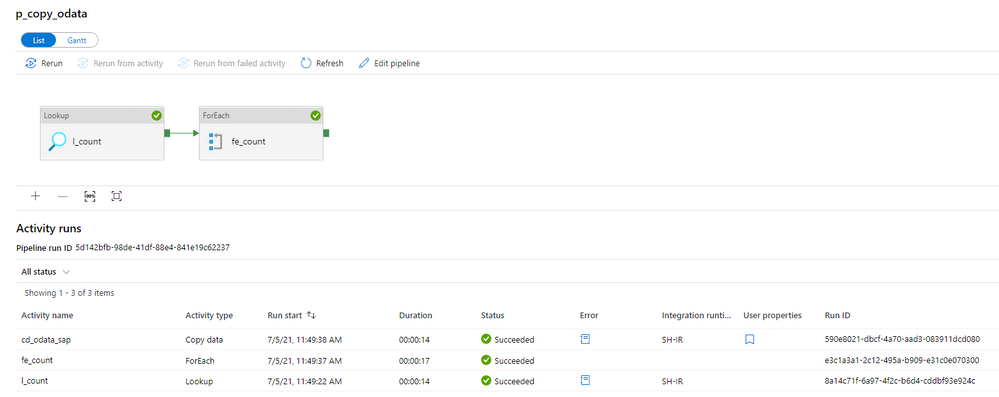

Now, enter the child pipeline to see the details of the Copy Data activity.

When you click on the icon in the User Properties column, you can display the defined user properties. As we use the same expressions as in the Copy Activity, we clearly see what was passed to the SAP system. In case of any problems with the data filtering, this is the first place to start the investigation.

The above parameters are very useful when you need to troubleshoot the extraction process. Mainly, it shows you the full request query that is passed to the OData service – including the $top and $skip parameters that we defined in the previous episode.

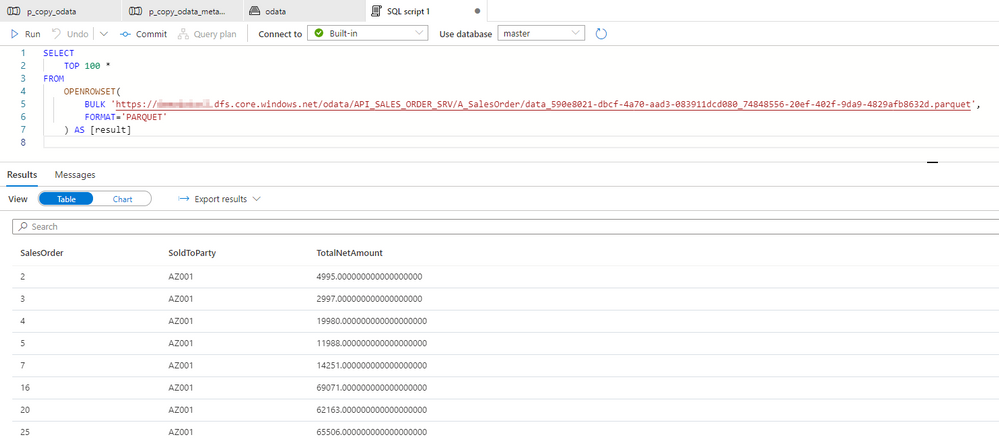

The extraction was successful, so let’s have a look at the extracted data in the lake.

There are only three columns, which we have selected using the $select parameter. Similarly, we can only see rows with SoldToParty equals AZ001 and the TotalNetAmount above 1000. It proves the filtering works fine.

I hope you enjoyed this episode. I will publish the next one in the upcoming week, and it will show you one of the ways to implement delta extraction. A topic that many of you wait for!

This article is contributed. See the original author and article here.

CISA has added thirteen new vulnerabilities to its Known Exploited Vulnerabilities Catalog, based on evidence that threat actors are actively exploiting the vulnerabilities listed in the table below. These types of vulnerabilities are a frequent attack vector for malicious cyber actors of all types and pose significant risk to the federal enterprise.

|

CVE Number |

CVE Title |

Remediation Due Date |

|

Apache Log4j2 Remote Code Execution Vulnerability |

12/24/2021 |

|

|

CVE-2021-44515 |

Zoho Corp. Desktop Central Authentication Bypass Vulnerability |

12/24/2021 |

|

CVE-2021-44168 |

Fortinet FortiOS Arbitrary File Download Vulnerability |

12/24/2021 |

|

Realtek Jungle SDK Remote Code Execution Vulnerability |

12/24/2021 |

|

|

Pi-Hole AdminLTE Remote Code Execution Vulnerability |

6/10/2022 |

|

|

Fuel CMS SQL Injection Vulnerability |

6/10/2022 |

|

|

Sonatype Nexus Repository Manager Incorrect Access Control Vulnerability |

6/10/2022 |

|

|

Linux Kernel Improper Privilege Management Vulnerability |

6/10/2022 |

|

|

MongoDB mongo-express Remote Code Execution Vulnerability |

6/10/2022 |

|

|

Apache Solr DataImportHandler Code Injection Vulnerability |

6/10/2022 |

|

|

Embedthis GoAhead Remote Code Execution Vulnerability |

6/10/2022 |

|

|

Red Hat Jboss Application Server Remote Code Execution Vulnerability |

6/10/2022 |

|

|

Red Hat Linux JBoss Seam 2 Remote Code Execution Vulnerability |

6/10/2022 |

Binding Operational Directive (BOD) 22-01: Reducing the Significant Risk of Known Exploited Vulnerabilities established the Known Exploited Vulnerabilities Catalog as a living list of known CVEs that carry significant risk to the federal enterprise. BOD 22-01 requires FCEB agencies to remediate identified vulnerabilities by the due date to protect FCEB networks against active threats. See the BOD 22-01 Fact Sheet for more information.

Although BOD 22-01 only applies to FCEB agencies, CISA strongly urges all organizations to reduce their exposure to cyberattacks by prioritizing timely remediation of Catalog vulnerabilities as part of their vulnerability management practice. CISA will continue to add vulnerabilities to the Catalog that meet the meet the specified criteria.

This article is contributed. See the original author and article here.

The Azure PowerShell team is proud to announce a new major version of the Az PowerShell module. Following our release cadence, this is the second breaking change release for 2021. Because this release includes updates related to security and the switch to MS Graph, we recommend that you review the release notes before upgrading.

Azure PowerShell offers a set of cmdlets allowing basic management of AzureAD resources (Applications, Service Principal, Users, and Groups). Through Az 6.x, those cmdlets were using the AzureAD Graph API. Starting with Az 7, those cmdlets are now using the Microsoft Graph API. Because the AzureAD Graph API has announced its retirement, we highly recommend that you consider upgrading to Az 7 at your earliest convenience.

The parameters required depend on the API definition and so does the object returned on the response of the API. Our north star in this effort has been to minimize the breaking changes exposed by the cmdlets. Because of the behavior differences between MS Graph API and AzureAD Graph API, some breaking changes could not be avoided. For example, the MS Graph API does not allow setting the password when creating a Service Principal. We removed this parameter from the new cmdlets.

In some cases, cmdlets of a service transparently execute Azure AD operations. For example, when creating an AKS cluster, a service principal will be created if it is not provided. Az.KeyVault, Az.AKS, Az.SQL have been updated and now use Microsoft Graph for those transparent operations. Az.HDInsights, Az.StorageSync, Az.Synapse and Authorization cmdlets in Az.Resources will be updated shortly, this will be transparent.

For your convenience, we have compiled the breaking changes in the article: AzureAD to Microsoft Graph migration changes in Azure PowerShell.

Should you face issues with the Graph cmdlets, please consult our troubleshooting guide or open an issue on GitHub.

The purpose of the `Invoke-AzRestMethod` cmdlet is to offer a backup solution for when a native cmdlet does not exist for a given resource.

Our initial implementation of this cmdlet supported only management plane operations for Azure Resource Manager. With the support for MS Graph, we updated this cmdlet so it could also serve as a backup to manage MS Graph resources. From the module implementation, the MS Graph API is considered like a data plane API so we added support for MS Graph any Azure data plane.

For example, the following command will retrieve information about the current signed in user via the MS Graph API:

Invoke-AzRestMethod –Uri https://graph.microsoft.com/v1.0/me

When connecting with a service principal, we identified that the secret associated with a service principal or the certificate password would be exposed in a nested property of the object returned by `Connect-AzAccount`. We removed the properties named `ServicePrincipalSecret` and `CertificatePassword` from this object.

Since this property could be exposed in logs or debugging traces of scripts running in automation environments like ADO, we highly recommend that you consider upgrading to the most recent version of Az.Accounts or Az.

We are continuing our efforts to improve the support of container-based services. In this release, we focused on AKS and ACI.

`Invoke-AzAksRunCommand` has been added to run a shell command using kubectl or helm against an AKS cluster. The response is available as a property of the returned object. This cmdlet greatly simplifies the management of the resources in a cluster. Since the cmdlet also supports file attachment, it is possible to manage Kubernetes clusters and associated applications (for example via a helm chart) directly from PowerShell.

We have greatly improved networking support of AKS clusters. We’ve added support for the following parameters: ‘NetworkPolicy’, ‘PodCidr’, ‘ServiceCidr’, ‘DnsServiceIP’, ‘DockerBridgeCidr’, ‘NodePoolLabel’, ‘AksCustomHeader’, ‘EnableNodePublicIp’, and ‘NodePublicIPPrefixID’.

We also improved the manageability of nodes in an AKS cluster using Azure PowerShell. It is now possible to perform the following operations:

We made two additions to the ACI (Azure Container Instance) module:

We will continue to improve the PowerShell experience with services running cloud native applications.

The Azure PowerShell team is listening to your feedback on the following channels:

Thank you!

Damien,

on behalf of the Azure PowerShell team

This article is contributed. See the original author and article here.

The Apache Software Foundation has released a security advisory to address a remote code execution vulnerability (CVE-2021-44228) affecting Log4j versions 2.0-beta9 to 2.14.1. A remote attacker could exploit this vulnerability to take control of an affected system. Log4j is an open-source, Java-based logging utility widely used by enterprise applications and cloud services.

CISA encourages users and administrators to review the Apache Log4j 2.15.0 Announcement and upgrade to Log4j 2.15.0 or apply the recommended mitigations immediately.

This article is contributed. See the original author and article here.

CISA has released an Industrial Controls Systems Medical Advisory (ICSMA) detailing a vulnerability in multiple Hillrom Welch Allyn cardiology products. An attacker could exploit this vulnerability to take control of an affected system.

CISA encourages technicians and administrators to review ICSMA-21-343-01: Hillrom Welch Allyn Cardio Products for more information and apply the necessary mitigations.

Recent Comments