by Contributed | May 12, 2021 | Technology

This article is contributed. See the original author and article here.

We are announcing the general availability of Azure AD user creation support in Azure SQL Database on behalf of Azure AD applications (service principals). See Azure Active Directory service principal with Azure SQL.

What does support for Azure AD user creation on behalf of Azure AD applications mean?

Azure SQL Database and SQL Managed Instance support the following Azure AD objects:

- Azure AD users (managed, federated and guest)

- Azure AD groups (managed and federated)

- Azure AD applications

For more information on Azure AD applications, see Application and service principal objects in Azure Active Directory and Create an Azure service principal with Azure PowerShell.

Previously, only SQL Managed Instance supported creating these Azure AD object types on behalf of an Azure AD application (using a service principal). Support for this functionality in Azure SQL Database is now generally available.

This functionality is useful for automated processes in which Azure AD applications create and maintain Azure AD objects in Azure SQL Database without human interaction. A service principal can be the Azure AD admin for SQL DB, either as an individual principal or as a member of an Azure AD group. It can therefore create Azure AD objects in SQL DB automatically, which allows database user creation to be fully automated. This functionality also supports Azure AD system-assigned and user-assigned managed identities, which can be created as users in SQL Database on behalf of service principals (see the article, What are managed identities for Azure resources?).

Prerequisites

To enable this feature, the following steps are required:

1) Assign a server identity (a system-assigned managed identity) during SQL logical server creation or after the server is created.

See the PowerShell example below:

- To create a server identity during the Azure SQL logical server creation, execute the following command:

```powershell
New-AzSqlServer -ResourceGroupName <resource group> `
    -Location <Location name> -ServerName <Server name> `
    -ServerVersion "12.0" -SqlAdministratorCredentials (Get-Credential) `
    -AssignIdentity
```

(See the New-AzSqlServer command for more details)

- For existing Azure SQL logical servers, execute the following command:

```powershell
Set-AzSqlServer -ResourceGroupName <resource group> -ServerName <Server name> -AssignIdentity
```

(See the Set-AzSqlServer command for more details)

To check whether a server identity is assigned to the Azure SQL logical server, execute the following command:

```powershell
Get-AzSqlServer -ResourceGroupName <resource group> -ServerName <Server name>
```

(See the Get-AzSqlServer command for more details)

2) Grant the Azure AD “Directory Readers” permission to the server identity created above.

(For more information, see Provision Azure AD admin (SQL Managed Instance).)

How to use it

Once steps 1 and 2 are completed, an Azure AD application with the right permissions can create an Azure AD object (user, group, or service principal) in Azure SQL DB. For more information, see the step-by-step tutorial (Tutorial: Create Azure AD users using Azure AD applications).

Example

Using a system-assigned managed identity (SMI) set up as the Azure AD admin for SQL DB, create an Azure AD application as a SQL DB user.

Preparation

Complete steps 1 and 2 above for the Azure SQL logical server.

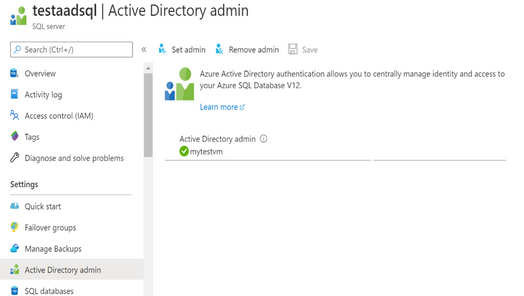

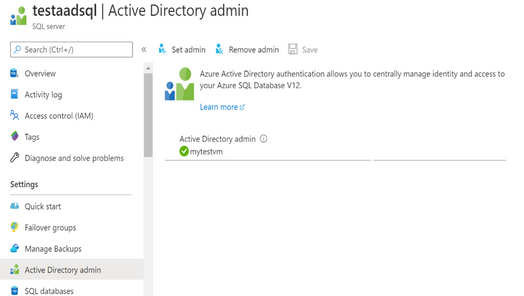

- In the example below, the server name is ‘testaadsql’.

- The user database created under this server is ‘testdb’.

- Copy the display name of the application.

- In the example below, the app name is ‘myapp’.

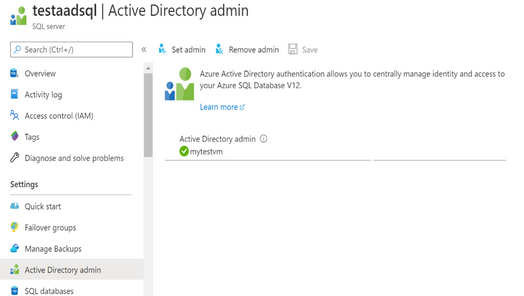

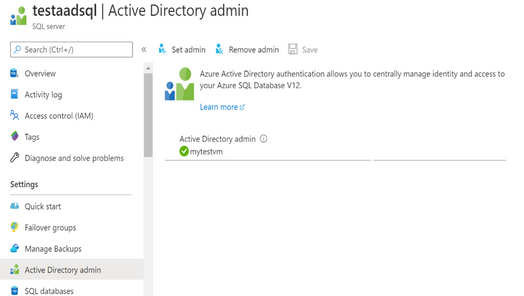

- Using the Azure portal, assign your SMI (display name mytestvm) as an Azure AD admin for the Azure SQL logical server (see the screenshot below).

- Create the Azure AD application user in SQL DB on behalf of the SMI.

- To check that the user ‘myapp’ was created in the database ‘testdb’, you can execute the T-SQL command SELECT * FROM sys.database_principals.
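When an automation script generates these statements instead of a person typing them, the application's display name should be quoted safely. The following is a minimal Python sketch. It assumes bracket quoting that mirrors T-SQL's QUOTENAME function, where a closing bracket inside the name is doubled. The helper names and the ODBC-style `?` parameter placeholder are hypothetical choices for illustration, not part of the Azure SQL tooling.

```python
from typing import Tuple


def quote_name(name: str) -> str:
    """Bracket-quote a T-SQL identifier, doubling any ']' the way QUOTENAME does."""
    return "[" + name.replace("]", "]]") + "]"


def create_external_user_stmt(display_name: str) -> str:
    """DDL that creates a database user for an Azure AD principal (user, group, or app)."""
    return f"CREATE USER {quote_name(display_name)} FROM EXTERNAL PROVIDER;"


def verify_user_query(display_name: str) -> Tuple[str, Tuple[str]]:
    """Parameterized query (ODBC-style '?' placeholder) to confirm the user now exists."""
    sql = "SELECT name, type_desc FROM sys.database_principals WHERE name = ?;"
    return sql, (display_name,)


print(create_external_user_stmt("myapp"))
# prints: CREATE USER [myapp] FROM EXTERNAL PROVIDER;
```

Passing the name to the verification query as a parameter, rather than splicing it into the SQL text, avoids a second round of quoting.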

PowerShell Script

```powershell
# PS script creating a SQL user myapp from an Azure AD application on behalf of SMI "mytestvm",
# which is also set as the Azure AD admin for SQL DB.
# Execute this script from the Azure VM with the SMI name 'mytestvm'.
# Azure AD application display name: 'myapp'.
# This is the user name that is created in SQL DB 'testdb' in the server 'testaadsql'.

# Request a token for Azure SQL from the metadata service endpoint for SMI,
# which is accessible only from within the VM:
$response = Invoke-WebRequest -Uri 'http://169.254.169.254/metadata/identity/oauth2/token?api-version=2018-02-01&resource=https%3A%2F%2Fdatabase.windows.net%2F' `
    -Method GET -Headers @{Metadata = "true"}
$content = $response.Content | ConvertFrom-Json
$AccessToken = $content.access_token

# Specify server name and database name.
# For the server name, the server identity must be assigned and the "Directory Readers"
# permission granted to the identity.
$SQLServerName = "testaadsql"
$DatabaseName = 'testdb'

$conn = New-Object System.Data.SqlClient.SqlConnection
$conn.ConnectionString = "Data Source=$SQLServerName.database.windows.net;Initial Catalog=$DatabaseName;Connect Timeout=30"
# Authenticate with the SMI access token instead of a SQL login
$conn.AccessToken = $AccessToken
$conn.Open()

# Create SQL DB user [myapp] in the 'testdb' database
$ddlstmt = 'CREATE USER [myapp] FROM EXTERNAL PROVIDER;'
$command = New-Object -TypeName System.Data.SqlClient.SqlCommand -ArgumentList $ddlstmt, $conn

# Execute the statement first, then report it; ExecuteNonQuery returns -1 for DDL statements
$result = $command.ExecuteNonQuery()
Write-Host " "
Write-Host "SQL DDL command was executed:"
Write-Host $ddlstmt
Write-Host "results: $result"

$conn.Close()
```
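For automation written in Python rather than PowerShell, the token request in the script above can be sketched as follows. This minimal sketch uses only the standard library. It reuses the IMDS endpoint, api-version, resource and connection-string format from the script. The helper names are hypothetical.

```python
import json
import urllib.parse
import urllib.request

# Same metadata service endpoint as the PowerShell script; reachable only inside the VM
IMDS_TOKEN_ENDPOINT = "http://169.254.169.254/metadata/identity/oauth2/token"


def build_imds_token_url(resource: str = "https://database.windows.net/",
                         api_version: str = "2018-02-01") -> str:
    """Build the token URL; urlencode percent-encodes the resource URI."""
    query = urllib.parse.urlencode({"api-version": api_version, "resource": resource})
    return f"{IMDS_TOKEN_ENDPOINT}?{query}"


def fetch_access_token(timeout: float = 10.0) -> str:
    """Request an Azure SQL token for the VM's managed identity (works only on the VM)."""
    request = urllib.request.Request(build_imds_token_url(), headers={"Metadata": "true"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))["access_token"]


def build_connection_string(server_name: str, database_name: str) -> str:
    """Same connection string as the script; the token is supplied separately."""
    return (f"Data Source={server_name}.database.windows.net;"
            f"Initial Catalog={database_name};Connect Timeout=30")
```

`fetch_access_token` performs the network call and succeeds only inside the Azure VM. The other two helpers are pure string builders and can be checked anywhere.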

For feedback or questions on this feature, please reach out to the SQL AAD team at SQLAADFeedback@Microsoft.com.

by Contributed | May 12, 2021 | Technology

Final Update: Wednesday, 12 May 2021 18:29 UTC

We’ve confirmed that all systems are back to normal with no customer impact as of 05/12, 17:45 UTC. Our logs show that the incident started on 05/12, 17:18 UTC. During the ~27 minutes it took to resolve the issue, customers in EastUS2 using Azure Log Analytics may have encountered data access issues and/or delayed or missing Log Search Alerts for resources hosted in this region.

- Root Cause: The failure was due to one of the backend services becoming unhealthy

- Incident Timeline: 27 minutes – 05/12, 17:18 UTC through 05/12, 17:45 UTC.

We understand that customers rely on Azure Log Analytics as a critical service and apologize for any impact this incident caused.

-Anupama

by Contributed | May 12, 2021 | Technology

As we continue our journey to provide world-class threat protection for our customers, we are announcing general availability of our cloud-native breadth threat protection capabilities. We are also delivering better integration with Microsoft’s threat protection portfolio and expanding our threat protection for multi-cloud scenarios.

At RSA this year, we are happy to announce general availability of our cloud breadth threat protection solutions: Azure Defender for DNS and Azure Defender for Resource Manager. These cloud-native, agentless solutions detect suspicious management operations and DNS queries. This helps organizations protect all their cloud resources connected to the Azure DNS and Azure management layers from attacks. Together, these new solutions provide breadth protection for your entire Azure environment, complementing our existing Azure Defender in-depth protection for popular Azure workloads.

We are also announcing general availability of built-in and custom reports in Security Center. You can use built-in reports, created as Azure Workbooks, for tasks like tracking your Secure Score over time, vulnerability management, and monitoring missing system updates. In addition, you can create your own custom reports on top of Security Center data using Azure Workbooks, or pick up workbook templates created by our community. You can share these reports across your organization and use them to relay security status and insights. Learn more in Create rich, interactive reports of Security Center data.

At RSA, we are also introducing new capabilities that create a seamless experience between Azure Defender and Azure Sentinel. The enhanced Azure Defender connector makes it easier to connect to Azure Sentinel by allowing you to turn on Azure Defender for some subscriptions or for the entire organization from within the connector. We are also combining alerts from Azure Defender with the new raw log connectors for Azure resources in Azure Sentinel, which allows security teams to investigate Azure Defender alerts using raw logs in Azure Sentinel. We also added new recommendations in Azure Security Center to help deploy these log connectors at scale for an entire organization.

Today’s hybrid work environment spans multiple platforms, multiple clouds, and on-premises infrastructure. According to Gartner, two-thirds of customers are multi-cloud. We recently extended the multi-cloud support in Azure Defender to include not just servers and SQL but also Kubernetes, all using Azure Arc. Azure Security Center remains the only security portal from a cloud vendor with multi-cloud support, including AWS and GCP.

As always, don’t forget to enable Azure Defender for your cloud services, especially for virtual machines, storage, and SQL databases. Make sure you are actively working to improve your security posture, and please continue to reach out with feedback.

by Contributed | May 12, 2021 | Technology

MidDay Cafe Episode 9 – MBAS, Single Platform, VIVA Connections

In this episode of MidDay Cafe, host Michael Gannotti is joined by Microsoft’s Kendra Burgess and Sue Vencill as they discuss the Microsoft Business Applications Summit (MBAS), Why I Came to Microsoft/Single Platform, and Next Generation Intranets with Microsoft VIVA Connections.

Thanks for visiting – Michael Gannotti LinkedIn | Twitter

by Contributed | May 12, 2021 | Technology

We are happy to announce that the REST APIs for the scanning data plane are now released. Software engineers and developers in your organization can now call these APIs to register data sources and to set up scans and classifications programmatically. This lets you integrate them with other systems or products in your company.

Purview Scanning Data Plane Endpoints

You need your Purview account name to call the scanning APIs. The endpoint looks like this:

https://{your-purview-account-name}.scan.purview.azure.com
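Every scanning call is built from this endpoint, a resource path and the api-version query parameter used throughout this post, so a small helper keeps the URLs consistent. This is a minimal Python sketch. The function names and the account name `contoso` are hypothetical.

```python
from urllib.parse import quote, urlencode

# api-version used by the examples in this post
API_VERSION = "2018-12-01-preview"


def scan_endpoint(account_name: str) -> str:
    """Scanning data plane endpoint for a Purview account."""
    return f"https://{account_name}.scan.purview.azure.com"


def scan_api_url(account_name: str, *segments: str) -> str:
    """Join percent-encoded path segments under the endpoint and append api-version."""
    path = "/".join(quote(segment, safe="") for segment in segments)
    query = urlencode({"api-version": API_VERSION})
    return f"{scan_endpoint(account_name)}/{path}?{query}"


print(scan_api_url("contoso", "datasources", "myStorage"))
# prints: https://contoso.scan.purview.azure.com/datasources/myStorage?api-version=2018-12-01-preview
```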

Set up authentication using a service principal

To call the scanning APIs, you first need to register an application and create a client secret for it in Azure Active Directory. When you register an application, a service principal is automatically created in your tenant. For more information on how to create a service principal (application) and client secret, please refer here.

Once the service principal is created, you need to assign it the ‘Data Source Admin’ role for your Purview account. Follow the steps below to assign the role and establish trust between the service principal and the Purview account.

- Navigate to your Purview account.

- On the Purview account page, select the tab Access control (IAM).

- Click + Add.

- Select Add role assignment.

- For Role, select Purview Data Source Administrator from the drop-down.

- For Assign access to, leave the default, User, group, or service principal.

- For Select, enter the name of the previously created service principal you wish to assign, and then select its name in the results pane.

- Click on Save.

You’ve now assigned the Purview Data Source Administrator role to the service principal, which enables it to call the scanning APIs. Learn about roles here.

Get Token

You can send a POST request to the following URL to get an access token.

https://login.microsoftonline.com/{your-tenant-id}/oauth2/token

The following parameters need to be passed to the above URL in the body of the request:

- client_id: the client ID of the application that is registered in Azure Active Directory and assigned the ‘Data Source Admin’ role for the Purview account.

- client_secret: client secret created for the above application.

- grant_type: This should be ‘client_credentials’.

- resource: This should be ‘https://purview.azure.net’

Figure 1: Screenshot showing a sample response in Postman.
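The token request above can be sketched in Python with only the standard library. This minimal sketch assumes the four parameters are sent form-encoded in the POST body, which is the usual client-credentials convention. The function names and sample IDs are hypothetical. In practice, load the client secret from a secure store rather than from source code.

```python
import json
import urllib.parse
import urllib.request

TOKEN_URL_TEMPLATE = "https://login.microsoftonline.com/{tenant_id}/oauth2/token"
PURVIEW_RESOURCE = "https://purview.azure.net"


def build_token_request(tenant_id: str, client_id: str,
                        client_secret: str) -> urllib.request.Request:
    """Build the client-credentials POST carrying the four parameters listed above."""
    form = urllib.parse.urlencode({
        "client_id": client_id,
        "client_secret": client_secret,
        "grant_type": "client_credentials",
        "resource": PURVIEW_RESOURCE,
    }).encode("utf-8")
    return urllib.request.Request(
        TOKEN_URL_TEMPLATE.format(tenant_id=tenant_id),
        data=form,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


def get_access_token(tenant_id: str, client_id: str, client_secret: str,
                     timeout: float = 30.0) -> str:
    """Send the request and return the access_token field of the JSON response."""
    request = build_token_request(tenant_id, client_id, client_secret)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))["access_token"]
```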

Scanning Data Plane REST APIs

Once you have followed all the above steps and received an access token, you can call the various scanning APIs programmatically. The types of entities you can interact with are listed below:

- Classification Rules

- Data Sources

- Key Vault Connections

- Scans and scan-related functionality, like triggers and scan rule sets.

The examples below explain the APIs you need to call to configure a data source and to set up and run a scan for it. For complete information on all the REST APIs supported by the scanning data plane, refer here.

1. To create or update a data source, use the following REST API:

PUT {Endpoint}/datasources/{dataSourceName}?api-version=2018-12-01-preview

You can register an Azure storage data source named ‘myStorage’ by sending a PUT request to the following URL with the request body below:

{Endpoint}/datasources/myStorage?api-version=2018-12-01-preview

```json
{
  "name": "myStorage",
  "kind": "AzureStorage",
  "properties": {
    "endpoint": "https://azurestorage.core.windows.net/"
  }
}
```
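Registering the data source can be scripted in the same way. The following is a minimal Python sketch. It assumes the access token from the previous section is sent as an `Authorization: Bearer` header, which is the usual convention for Azure data-plane APIs but is not stated in this post. `contoso`, `data_source_put` and `authorized_put` are hypothetical names. Sending the request is left to `urllib.request.urlopen`.

```python
import json
import urllib.request
from urllib.parse import quote

API_VERSION = "2018-12-01-preview"


def data_source_put(endpoint: str, name: str, storage_endpoint: str):
    """Return (url, json_body) for registering an Azure Storage data source."""
    url = f"{endpoint}/datasources/{quote(name, safe='')}?api-version={API_VERSION}"
    body = {
        "name": name,
        "kind": "AzureStorage",
        "properties": {"endpoint": storage_endpoint},
    }
    return url, json.dumps(body)


def authorized_put(url: str, body: str, access_token: str) -> urllib.request.Request:
    """Wrap a JSON body in a PUT request that carries the bearer token."""
    return urllib.request.Request(
        url,
        data=body.encode("utf-8"),
        headers={"Authorization": f"Bearer {access_token}",
                 "Content-Type": "application/json"},
        method="PUT",
    )
```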

2. To create a scan for a data source already registered in Purview, use the following REST API:

PUT {Endpoint}/datasources/{dataSourceName}/scans/{scanName}?api-version=2018-12-01-preview

You can create a scan ‘myStorageScan’ that uses the credential ‘CredentialAKV’ and the system scan rule set ‘AzureStorage’ for the already registered data source ‘myStorage’. To do so, send a PUT request to the following URL with the request body below:

{Endpoint}/datasources/myStorage/scans/myStorageScan?api-version=2018-12-01-preview

```json
{
  "kind": "AzureStorageCredential",
  "properties": {
    "credential": {
      "referenceName": "CredentialAKV",
      "credentialType": "AccountKey"
    },
    "connectedVia": null,
    "scanRulesetName": "AzureStorage",
    "scanRulesetType": "System"
  }
}
```

The above call will return the following response:

```json
{
  "name": "myStorageScan",
  "id": "datasources/myStorage/scans/myStorageScan",
  "kind": "AzureStorageCredential",
  "properties": {
    "credential": {
      "referenceName": "CredentialAKV",
      "credentialType": "AccountKey"
    },
    "connectedVia": null,
    "scanRulesetName": "AzureStorage",
    "scanRulesetType": "System",
    "workers": null
  },
  "scanResults": null
}
```
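The scan definition can be generated from a few parameters, and the response can be checked against what was sent. This is a minimal Python sketch. The function names are hypothetical. The comparison only checks the fields that appear in both the request and the response above.

```python
import json
from urllib.parse import quote

API_VERSION = "2018-12-01-preview"


def scan_put(endpoint, data_source, scan_name, credential_name, ruleset_name="AzureStorage"):
    """Return (url, json_body) for a credential-based scan using a system rule set."""
    url = (f"{endpoint}/datasources/{quote(data_source, safe='')}"
           f"/scans/{quote(scan_name, safe='')}?api-version={API_VERSION}")
    body = {
        "kind": "AzureStorageCredential",
        "properties": {
            "credential": {"referenceName": credential_name, "credentialType": "AccountKey"},
            "connectedVia": None,  # serialized as JSON null
            "scanRulesetName": ruleset_name,
            "scanRulesetType": "System",
        },
    }
    return url, json.dumps(body)


def response_matches(response_json: str, scan_name: str, ruleset_name: str) -> bool:
    """Check that the service echoed back the scan name and rule set that were sent."""
    response = json.loads(response_json)
    return (response.get("name") == scan_name
            and response.get("properties", {}).get("scanRulesetName") == ruleset_name)
```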

3. Once the scan is created, you need to add filters to it. Filters scope the scan, that is, they determine which objects are included in it. To create a filter, use the following REST API:

PUT {Endpoint}/datasources/{dataSourceName}/scans/{scanName}/filters/custom?api-version=2018-12-01-preview

You can create a filter for the above scan ‘myStorageScan’ by sending a PUT request to the following URL with the request body below. This creates a scope that includes the folders /share1/user and /share1/aggregated and excludes the folder /share1/user/temp/ from the scan.

{Endpoint}/datasources/myStorage/scans/myStorageScan/filters/custom?api-version=2018-12-01-preview

```json
{
  "properties": {
    "includeUriPrefixes": [
      "https://myStorage.file.core.windows.net/share1/user",
      "https://myStorage.file.core.windows.net/share1/aggregated"
    ],
    "excludeUriPrefixes": [
      "https://myStorage.file.core.windows.net/share1/user/temp"
    ]
  }
}
```

The above call will return the following response:

```json
{
  "name": "custom",
  "id": "datasources/myStorage/scans/myStorageScan/filters/custom",
  "properties": {
    "includeUriPrefixes": [
      "https://myStorage.file.core.windows.net/share1/user",
      "https://myStorage.file.core.windows.net/share1/aggregated"
    ],
    "excludeUriPrefixes": [
      "https://myStorage.file.core.windows.net/share1/user/temp"
    ]
  }
}
```
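To reason about which objects a filter like this covers, the following minimal Python sketch builds the filter body and evaluates a URI against it. The matching rule is an assumption that this post does not specify: a URI is treated as in scope if it starts with some include prefix and with no exclude prefix, so exclusions win. The helper names are hypothetical.

```python
import json
from typing import List

INCLUDE = [
    "https://myStorage.file.core.windows.net/share1/user",
    "https://myStorage.file.core.windows.net/share1/aggregated",
]
EXCLUDE = ["https://myStorage.file.core.windows.net/share1/user/temp"]


def filter_body(include: List[str], exclude: List[str]) -> str:
    """Request body for PUT .../filters/custom."""
    return json.dumps({"properties": {"includeUriPrefixes": include,
                                      "excludeUriPrefixes": exclude}})


def in_scope(uri: str, include: List[str], exclude: List[str]) -> bool:
    """Assumed semantics: included by some prefix and not excluded by any prefix."""
    if any(uri.startswith(prefix) for prefix in exclude):
        return False
    return any(uri.startswith(prefix) for prefix in include)
```

A plain string prefix also matches siblings such as `/share1/username`. This post does not say whether Purview applies path-segment-aware matching.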

4. To run a scan, use the following REST API:

PUT {Endpoint}/datasources/{dataSourceName}/scans/{scanName}/runs/{runId}?api-version=2018-12-01-preview

You can now trigger the above scan ‘myStorageScan’ by sending a PUT request to the URL below. The runId is a GUID.

{Endpoint}/datasources/myStorage/scans/myStorageScan/runs/138301e4-f4f9-4ab5-b734-bac446b236e7?api-version=2018-12-01-preview

The above call will return the following response:

```json
{
  "scanResultId": "138301e4-f4f9-4ab5-b734-bac446b236e7",
  "startTime": "2019-05-16T17:01:37.3089193Z",
  "endTime": null,
  "status": "Accepted",
  "error": null
}
```
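The caller supplies the runId, so a client typically generates a fresh GUID for each run. In the example response, scanResultId echoes that GUID. This minimal Python sketch builds the run URL and reads the status from the response. The function names are hypothetical.

```python
import json
import uuid
from typing import Optional, Tuple
from urllib.parse import quote

API_VERSION = "2018-12-01-preview"


def scan_run_url(endpoint: str, data_source: str, scan_name: str,
                 run_id: Optional[str] = None) -> Tuple[str, str]:
    """Return (run_id, url); a new random GUID is generated when run_id is not given."""
    run_id = run_id or str(uuid.uuid4())
    url = (f"{endpoint}/datasources/{quote(data_source, safe='')}"
           f"/scans/{quote(scan_name, safe='')}/runs/{run_id}?api-version={API_VERSION}")
    return run_id, url


def run_status(response_json: str) -> str:
    """Extract the status field, for example 'Accepted' right after the run is triggered."""
    return json.loads(response_json)["status"]
```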

To learn more about Azure Purview, check out our full documentation today.

by Contributed | May 12, 2021 | Technology

As the retirement of Skype for Business Online approaches, we want to help customers with hybrid deployments of Skype for Business (Server + Online) successfully plan for the changes ahead. This post provides guidance for upgrade readiness, post-retirement experiences for hybrid deployments, and transitioning on-premises users to Teams after Skype for Business Online retires.

How can hybrid customers prepare for Skype for Business Online retirement?

Hybrid customers must upgrade Skype for Business Online users to Teams Only or move them on-premises by July 31, 2021. For any users homed in Skype for Business Online, you’ll need to ensure the user’s mode is set to TeamsOnly, as some may be using Teams while homed in Skype for Business Online.

What if an organization needs to maintain an on-premises instance of Skype for Business?

We encourage organizations to adopt Teams to fully benefit from an expanded set of communication and collaboration experiences. However, organizations that require an on-premises deployment of Skype for Business may continue to use Skype for Business Server, because its support lifecycle is not affected by the retirement of Skype for Business Online.

Post-retirement, hybrid organizations can have:

- Users homed on-premises that use Teams, but not in TeamsOnly mode, and

- Users that have been upgraded to Teams Only, whether from Skype for Business Server or Skype for Business Online

What can customers with hybrid Skype for Business configurations expect as Skype for Business Online retires?

If all Skype for Business Online users have already been upgraded to Teams Only, their experiences will not change as interop with Skype for Business Server will continue to work as it currently does.

If your organization still has users homed in Skype for Business Online, you may be scheduled for a Microsoft-assisted upgrade to transition remaining Skype for Business Online users to Teams. Scheduling notifications will be sent to customers with users homed in Skype for Business Online 90 days before these users are upgraded to Teams. Assisted upgrades will begin in August 2021.

Even if you have been scheduled for a Microsoft-assisted upgrade, we recommend that you upgrade your remaining Skype for Business Online users to Teams Only yourself before the scheduled date, so that you can better control the timing of the upgrade.

Once a user has been upgraded to Teams Only, they:

- Will receive all calls and chats in Teams.

- Can only initiate calls and chats, and schedule new meetings in Teams. Attempts to open the Skype for Business client will be redirected to Teams.

- Will be able to interoperate with other users who use Skype for Business Server.

- Will be able to communicate with users in federated organizations.

- Can still join Skype for Business meetings.

- Will have their online meetings and contacts migrated to Teams.

Users homed online will now be in TeamsOnly mode, while users homed on Skype for Business Server will remain on-premises. Please see this blog post for more details about Microsoft-assisted upgrades.

After Skype for Business Online retires, what is the path from Skype for Business Server to Teams?

After Skype for Business Online retires, organizations that plan to transition users from on-premises to the Teams cloud can still do so by following the Teams upgrade guidance. Skype for Business Server customers who haven’t done so must plan hybrid connectivity. Hybrid connectivity enables customers to move on-premises users to Teams and take advantage of Microsoft 365 cloud services. After establishing hybrid connectivity, on-premises users can be moved to Teams Only.

We are working to simplify how organizations move to Teams. When you move a user from Skype for Business Server to Teams, you will no longer need to specify the ‘-MoveToTeams’ switch in ‘Move-CsUser’ to move the user directly from on-premises to Teams Only. Currently, if this switch is not specified, users transition from being homed in Skype for Business Server to Skype for Business Online, and their mode remains unchanged. After retirement, users moved from on-premises to the cloud with ‘Move-CsUser’ will automatically be assigned TeamsOnly mode. Their meetings will also be automatically converted to Teams meetings, even if ‘-MoveToTeams’ is not specified. We expect to release this functionality before July 31, 2021.

Enable a full migration to the cloud

As the timing, technical requirements and economics make sense, Skype for Business Server customers may choose to make a full migration to Microsoft 365. But before decommissioning the on-premises Skype for Business deployment and removing any hardware, all users should be upgraded to Teams Only, and the on-premises deployment must be separated from Microsoft 365 by disabling hybrid. After this three-step process is complete, customers may decommission their Skype for Business Server.

Still need help?

Leverage resources including Microsoft Teams admin documentation, online upgrade guidance, and Teams upgrade planning workshops to help plan your path to Teams Only.

You can also reach out to your Microsoft account team, FastTrack (as eligible) or a Microsoft Solution Partner to assist with the process.

See you in Teams!

by Contributed | May 12, 2021 | Technology

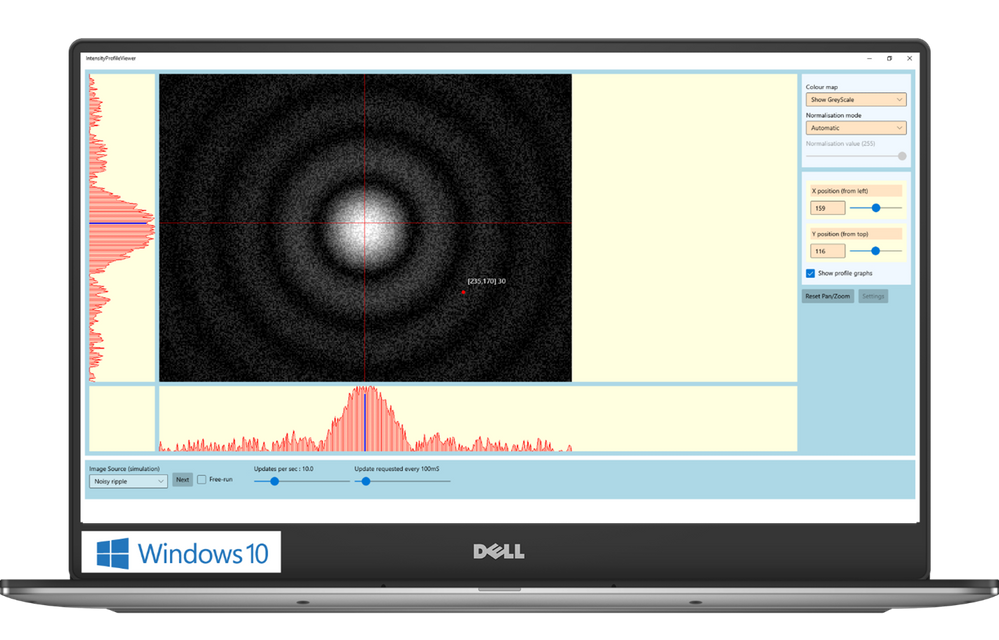

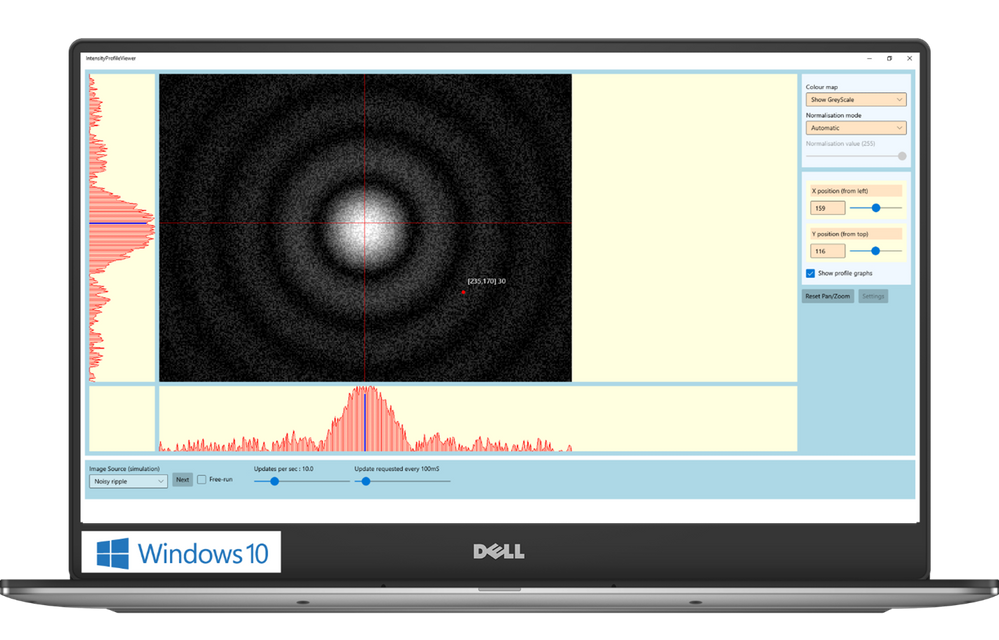

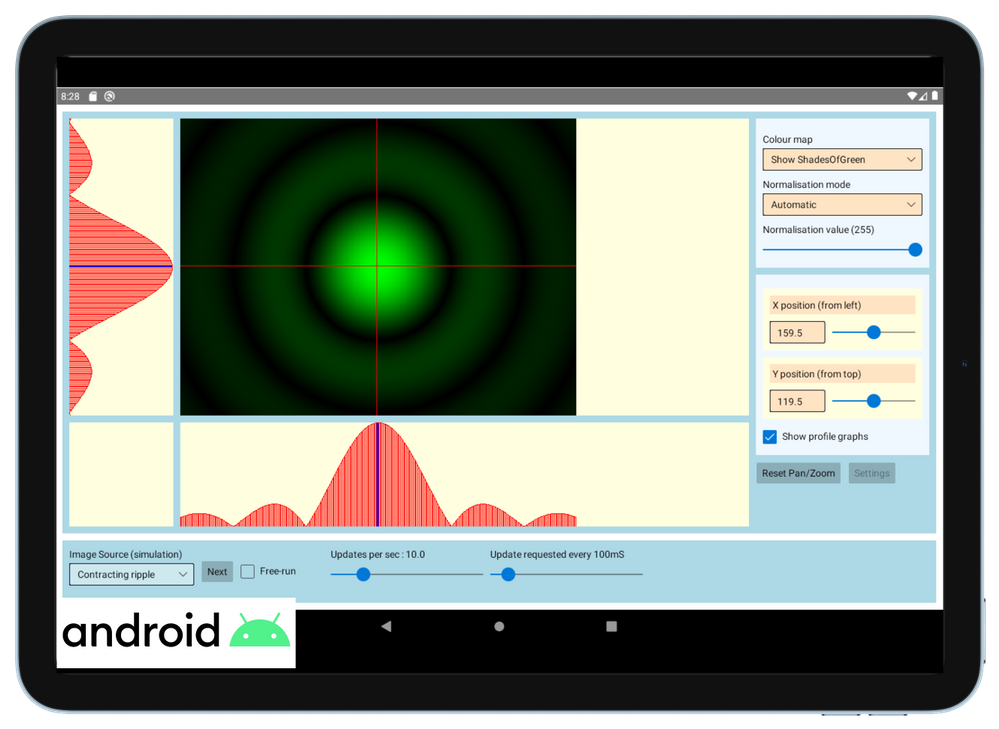

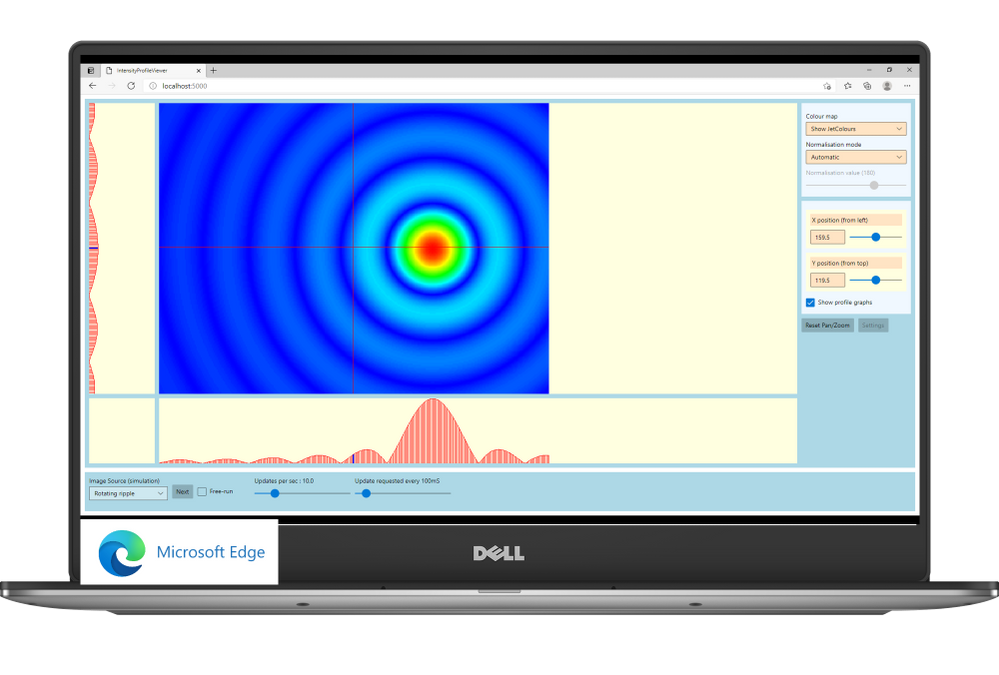

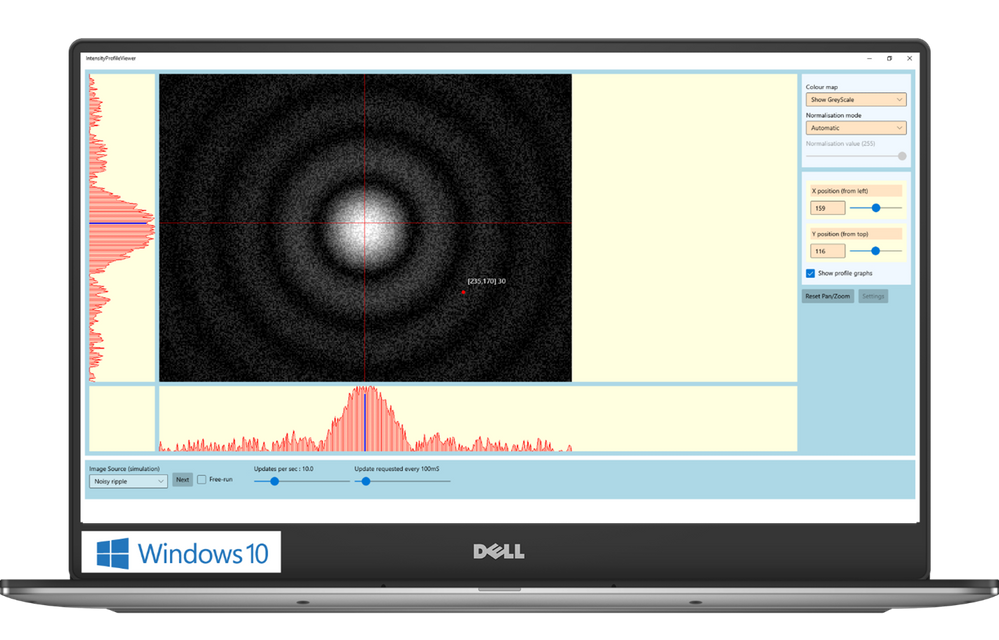

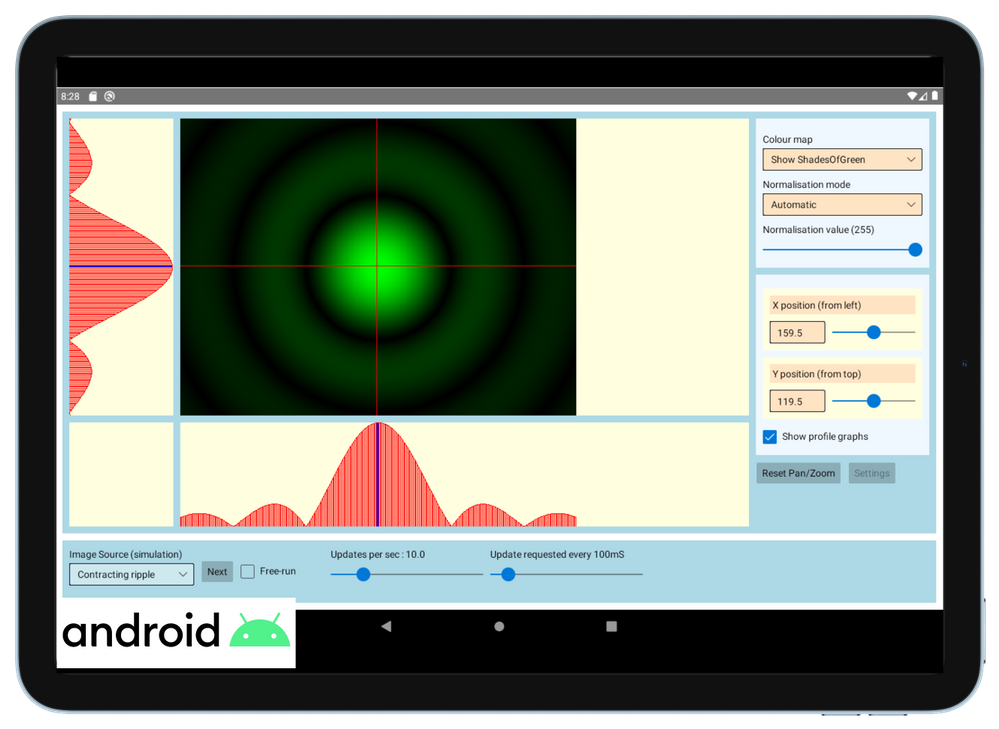

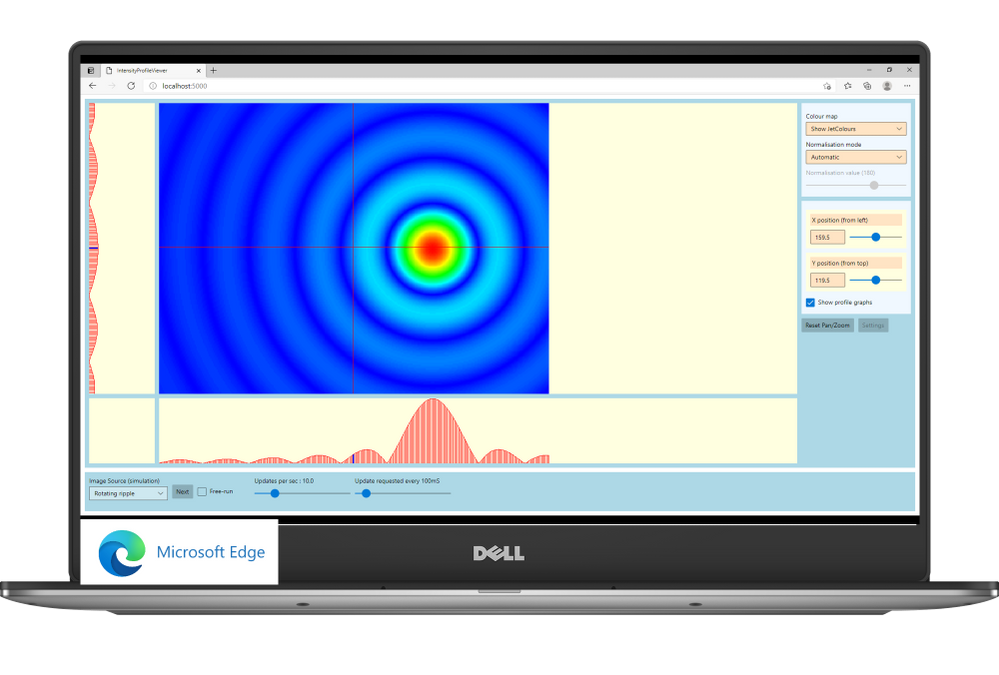

The Central Laser Facility (CLF) carries out research using lasers to investigate a broad range of science areas, spanning physics, chemistry, and biology. Their suite of laser systems allows them to focus light to extreme intensities, to generate exceptionally short pulses of light, and to image extremely small features.

The Central Laser Facility is currently building the Extreme Photonics Applications Centre (EPAC) in Oxfordshire, UK. EPAC is a new national facility to support UK science, technology, innovation and industry. It will bring together world-leading interdisciplinary expertise to develop and apply novel, laser based, non-conventional accelerators and particle sources which have unique properties.

The software control team inside Central Laser Facility develops applications that enable scientists to monitor and communicate with a wide range of scientific instruments. For example, the application can be used to move a motorised mirror to direct the laser beam toward a target, to watch a camera feed showing the current status of the system, or to configure and record data from a suite of cutting-edge scientific instruments such as x-ray cameras or electron spectrometers. These applications aggregate data and controls for specific tasks that a user needs to undertake – say, point the laser at a new target – and present them in a single screen to avoid the need to individually access all the different hardware necessary to make that happen.

The Challenge: Moving forward the control system for EPAC

As CLF started planning the design of a new control system for EPAC, their main goal was to tackle some of the challenges they were facing with the existing set of applications:

- Minimise the adaptations needed to run on multiple operating systems. CLF currently supports Windows and Linux, with other platforms like Android and Web in planning.

- Maximize code reuse while, at the same time, creating a scalable user interface. Their applications need to scale from mobile devices to large displays placed around the facility.

- Support advanced graphical features, like themes for easily changing colour schemes. For example, the palette needed for viewing a screen through laser goggles in a laboratory is different from the one used in a control room, where no goggles are necessary.

The Solution

WinUI and Uno Platform were a perfect combination to tackle these challenges.

WinUI provides a state-of-the-art UI platform with the powerful rendering capabilities the application needs. It can show the real-time feed coming from the cameras, generate complex graphs that display the data captured by the instruments in real time, and adapt to different layouts and form factors. Ultimately, it makes it easy to create intuitive experiences thanks to a wide range of modern controls with full support for accessibility and multiple input types. Uno Platform enables the Central Laser Facility to take the experience built for Windows with these features and run it with no or minimal code changes on all the other platforms the facility targets: Linux, Android and Web.

“Thanks to WinUI and Uno Platform, we were able to leverage the excellent set of developer tools that exists in .NET, and provides access to the reusable content in the Windows Community Toolkit and the XAML controls gallery.” shared Chris Gregory, Software Control Engineer. “The primary attraction for us was the ability to deploy applications cross-platform. This will allow us to visualise what’s happening with our instrumentation on the Windows machines in the control room, the Linux systems running the back end, on a tablet inside the laboratory, or on a mobile device for off-site monitoring.

This flexibility means that scientists and engineers can see a uniform presentation of the information they need no matter where they are in our facility, with minimal extra developer effort. Added to this, the availability of such a rich set of controls will result in the development of applications that are much more intuitive to use”.

by Contributed | May 12, 2021 | Dynamics 365, Microsoft 365, Technology

Identity authentication is a crucial part of any fraud protection and access management service. That is why Microsoft Dynamics 365 Fraud Protection and Microsoft Azure Active Directory work well together to provide customers a comprehensive and seamless authentication and access experience. In this blog of our fraud trend series, we explore how proper authentication prevents fraud and loss before they happen by blocking unauthorized or illegitimate access to the information and services provided. Check out our previous blogs in this series, where we explore fraud in the food service industry, holiday fraud, and account takeover.

While most places still have some degree of lockdown in place, people must rely on online services more than ever before, from streaming and ordering takeout to mobile banking and remote connection. Users today have to manage more accounts than ever before, and any of these online services can be compromised and users’ identities stolen. Total combined fraud losses climbed to $56 billion in 2020, and identity fraud scams accounted for $43 billion of that cost, according to Business Wire. Businesses need a way to protect their users even when their identities have been compromised.

A good identity and access management (IAM) solution protects users; a great one does it without being seen. Customers today already must deal with too many MFA, 2FA, CAPTCHA, and other hurdles to prove their identity. While these are important tools for differentiating humans from bots, they can also be a pain to deal with. That is why leading IAM companies are working to stay ahead of the competition by enabling inclusive security with Azure Active Directory and Dynamics 365 Fraud Protection.

These capabilities will help you protect your users without burdening them:

- Device fingerprinting. Our first line of defense, before users attempt an account creation or login event. Using device telemetry and attributes from online actions we can identify the device that is being used to a high degree of accuracy. This information includes hardware information, browser information, geographic information, and the Internet Protocol (IP) address.

- Risk assessment. Dynamics 365 Fraud Protection uses AI models to generate risk assessment scores for account creation and account login events. Merchants can apply this score in conjunction with the rules they’ve configured to approve, challenge, reject, or review these account creation and account login attempts based on custom business needs.

- Bot detection. An advanced, adaptive artificial intelligence (AI) model quickly generates a score that is mapped to the probability that a bot is initiating the event. This helps detect automated attempts to use compromised credentials, as well as brute-force and DDoS attacks.

- Velocities. The frequency of events from a user or entity (such as a credit card) might indicate suspicious activity and potential fraud. For example, after fraudsters try a few individual orders, they often use a single credit card to quickly place many orders from a single IP address or device. They might also use many different credit cards to quickly place many orders. Velocity checks help you identify these types of event patterns. By defining velocities, you can watch incoming events for these types of patterns and use rules to define thresholds beyond which you want to treat the patterns as suspicious.

- External calls. External calls let you ingest data from APIs outside Dynamics 365 Fraud Protection. This enables you to use your own or a partner’s authentication and verification service and use that data to make informed decisions in real time. For example, third-party address and phone verification services, or your own custom scoring models, might provide critical input that helps determine the risk level for some events.

- Azure Active Directory External Identities. Your customers can use their preferred social, enterprise, or local account identities to get single sign-on access to your services. Customize your user experience with your brand so that it blends seamlessly with your web and mobile applications. Explore common use cases for External Identities.

- Risk-based Authentication. Most users have a normal behavior that can be tracked. When they fall outside of this norm, it could be risky to allow them to sign in. Instead, you may want to block that user or ask them to perform multi-factor authentication. Azure Active Directory B2C risk-based authentication will only challenge login attempts that are over your risk threshold while allowing normal logins to proceed unhampered.
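The velocity checks described above come down to counting events per entity, such as a credit card, IP address or device, within a sliding time window and comparing the count with a threshold. The following Python sketch illustrates only that idea. It is not the Dynamics 365 Fraud Protection API, where velocities are defined inside the product. The class name, window and threshold are hypothetical, and the sketch assumes events arrive in non-decreasing timestamp order.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class VelocityCheck:
    """Flag an entity whose event count within a sliding window exceeds a threshold."""

    def __init__(self, window_seconds: float, max_events: int) -> None:
        self.window_seconds = window_seconds
        self.max_events = max_events
        self._events: Dict[str, Deque[float]] = defaultdict(deque)

    def record(self, entity: str, timestamp: float) -> bool:
        """Record one event and return True if the entity is now over the threshold."""
        events = self._events[entity]
        events.append(timestamp)
        # Evict events that have fallen out of the sliding window
        while events and events[0] <= timestamp - self.window_seconds:
            events.popleft()
        return len(events) > self.max_events


check = VelocityCheck(window_seconds=60, max_events=3)
flags = [check.record("card-A", t) for t in (0, 10, 20, 30)]
print(flags)  # [False, False, False, True]
```

The fourth order on the same card within 60 seconds crosses the threshold of three. A production system would also need shared state across servers and eviction of idle entities; this sketch keeps everything in memory.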

Next steps

Learn more about Dynamics 365 Fraud Protection and other capabilities including how purchase protection helps protect your revenue by improving the acceptance rate of e-commerce transactions and how loss prevention helps protect revenue by identifying anomalies on returns and discounts. Check out our e-book “Protecting Customers, Revenue, and Reputation from Online Fraud” for a more in-depth look at Dynamics 365 Fraud Protection.

The post Fraud trends part 4: balancing identity authentication with user experience appeared first on Microsoft Dynamics 365 Blog.

Brought to you by Dr. Ware, Microsoft Office 365 Silver Partner, Charleston SC.

by Contributed | May 12, 2021 | Technology

This article is contributed. See the original author and article here.

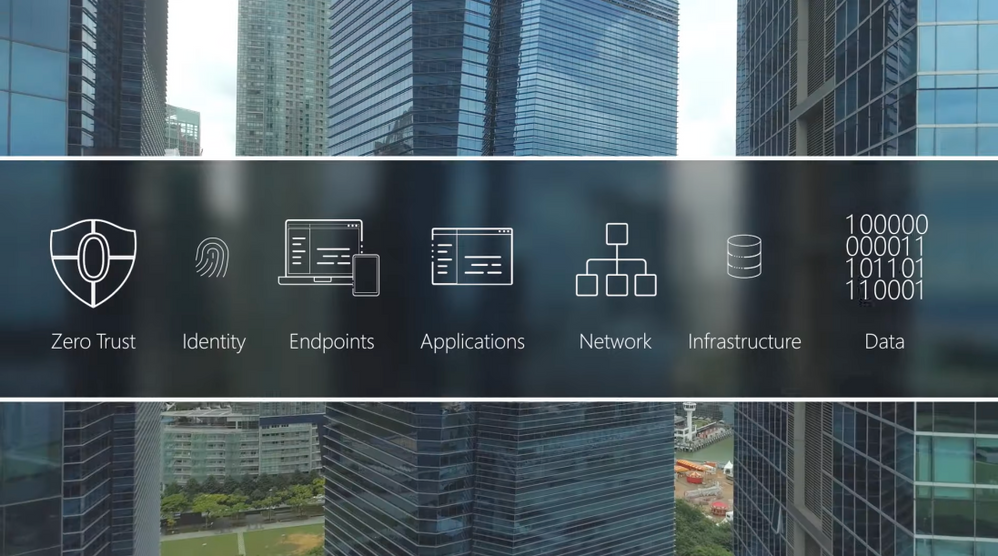

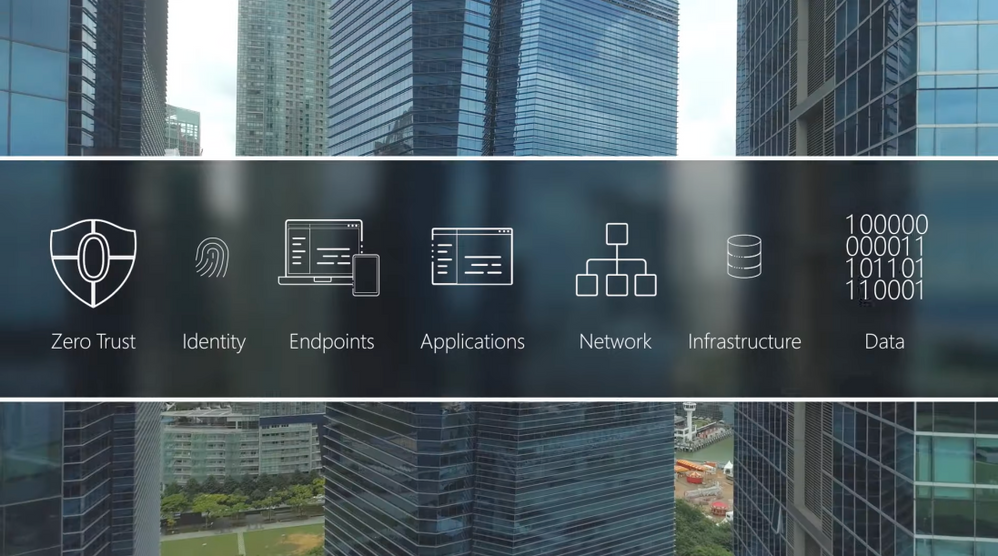

Adopt a Zero Trust approach for security and benefit from the core ways in which Microsoft can help. In the past, your defenses may have focused on protecting network access with on-premises firewalls and VPNs, assuming everything inside the network was safe. But as corporate data footprints have expanded to sit outside your corporate network, to live in the cloud or in a hybrid of both, the Zero Trust security model has evolved to address a more holistic set of attack vectors.

Based on the principles of “verify explicitly”, “apply least privileged access” and “always assume breach”, Zero Trust establishes a comprehensive control plane across multiple layers of defense:

Identity

Azure Active Directory assigns identity and conditional access controls for your people, the service accounts used for apps and processes, and your devices.

Endpoints

Microsoft Endpoint Manager ensures that devices and their installed apps meet your security and compliance policy requirements.

Applications

Microsoft Endpoint Manager can be used to configure and enforce policy management. Microsoft Cloud App Security can discover and manage Shadow IT services in use.

Network

Apply a range of controls, including network segmentation, threat protection, and encryption.

Infrastructure

Azure landing zones, Blueprints and Policies can ensure newly deployed infrastructure meets compliance requirements for cloud resources. Azure Security Center and Log Analytics help with configuration and software update management for on-premises, cross-cloud and cross-platform infrastructure.

Data

Limit data access to only the people and processes that need it.

QUICK LINKS:

00:37 — Six layers of defense

02:31 — Identity

03:48 — Endpoints

04:48 — Applications

05:46 — Network

06:36 — Infrastructure

07:18 — Data

08:11 — Wrap Up

Link References:

Learn more at https://aka.ms/zerotrust

For tips and demonstrations, check out https://aka.ms/ZeroTrustMechanics

Unfamiliar with Microsoft Mechanics?

We are Microsoft’s official video series for IT. You can watch and share valuable content and demos of current and upcoming tech from the people who build it at Microsoft.


Video Transcript:

-Welcome to Microsoft Mechanics and our new series on Zero Trust Essentials. In the next few minutes, I’ll break down what you can do to adopt a Zero Trust approach for security and how Microsoft can help. In the past, you may have focused your defenses on protecting network access with on-premises firewalls and VPNs, assuming that everything inside the network was safe. But as corporate data footprints have expanded to sit outside your corporate network, to live in the cloud or in a hybrid of both, the Zero Trust security model has evolved to address a more holistic set of attack vectors.

-Based on the principles of verify explicitly, apply least privileged access, and always assume breach, Zero Trust establishes a comprehensive control plane across multiple layers of defense. This starts with identity, verifying that only the people, devices and processes that have been granted access to your resources can access them. Next come device endpoints, including IoT systems at the edge, where the security compliance of the hardware accessing your data is assessed. This oversight applies to your applications too, whether local or in the cloud, as the software-level entry points to your information. Then there are protections at the network layer for access to resources, especially those within your corporate perimeter. Next is the infrastructure hosting your data, whether on premises or in the cloud. This can be physical or virtual, including containers and microservices, and the underlying operating systems and firmware. And finally, there is the data itself, across your files and content, as well as structured and unstructured data wherever it resides.

-Now, each of these layers is an important link in the end-to-end chain of Zero Trust, and each can be exploited by malicious actors, or inadvertently by users, as an entry point or channel to leak sensitive information. That said, core to Microsoft’s approach for Zero Trust is not to disrupt end users but to work behind the scenes to keep users secure and in their flow as they work. The key here is end-to-end visibility, and then bringing all of this together with threat intelligence, risk detection and conditional access policies to reason over access requests and automate response.

-Here, as we’ll explore in the series, the good news is that both Microsoft 365 and Azure are designed with Zero Trust as a core architectural principle and have built-in and best-in-class controls to help deliver a Zero Trust environment. And you can then use these tools to extend Zero Trust to hybrid or even multi-cloud.

-In fact, let me walk you through some highlights for how Microsoft can help you implement Zero Trust starting with identity. Here, Azure Active Directory is the underlying service that assigns identity and conditional access controls for your people, the service accounts used for apps and processes, and your devices. Importantly, beyond Microsoft services, Azure AD can provide a single identity control plane with common authentication and authorization services for your cloud-based services and your on-premises resources. This prevents the use of multiple credentials and weak passwords spread across different services and helps you to universally apply strong authentication methods, like passwordless multifactor authentication for your users.

-Also, to make the authentication process significantly less intrusive for users, you can take advantage of real-time intelligence at sign-in with conditional access in Azure AD. You can set policies that assess the risk level of the user or sign-in, the device platform and the sign-in location to make point-of-logon decisions and enforce access policies in real time: either block access outright, grant access but require an additional authentication factor such as a biometric or a FIDO2 key, or limit access, for example to view-only privileges.

-Moving on to endpoints: because not all devices accessing corporate data are managed or owned by your organization, they can represent another weak link in establishing Zero Trust. They may not be up to date or protected, and they run the risk of data exfiltration from unknown apps or services. Using Microsoft Endpoint Manager, you can make sure that devices and their installed apps meet your security and compliance policy requirements, regardless of whether the device is corporate owned or personally owned, and wherever it is connecting from, whether that’s within the network perimeter including over a VPN, on a home network or on the public internet. Also, Microsoft Defender, with its extended detection and response or XDR controls, can identify and contain breaches discovered on an endpoint and then force the device back into a trustworthy state before it’s allowed to reconnect to resources.

-Next, we’ve already touched on the benefits of Azure AD as the single identity provider for authenticated sign-in along with the use of conditional access, and these recommendations also apply to cloud apps and to local apps that connect to cloud-based resources. For your local apps, Microsoft Endpoint Manager can be used to configure and enforce policy management for both desktop and mobile apps, including browsers. For example, you can prevent work-related data from being copied and used in personal apps. That said, on the SaaS side of the house, knowing what apps and services are in use within your organization, including those acquired by other teams, known as Shadow IT, is critical to mitigating new vulnerabilities. Microsoft Cloud App Security, with its catalog of more than 17,000 apps, can discover and manage Shadow IT services in use. You can then set policies against your security requirements to scope how information may be accessed or shared within those services. For example, you can use policies to block actions within a cloud app, such as downloading confidential files or discussing sensitive topics, while using unmanaged devices.

-And this brings us to our fourth layer, the network. With modern architectures and hybrid services spanning on-premises and multiple cloud services, virtual networks or VNets, and VPNs, we give you a number of controls, starting with network segmentation to limit the blast radius and lateral movement of attacks on your network. We also enable threat protection to harden the network perimeter against things like DDoS or brute-force attacks, along with the ability to quickly detect and respond to incidents, and encryption for all network traffic, whether internal, inbound, or outbound. Microsoft offers several solutions to help secure networks, such as Azure Firewall and Azure DDoS Protection to protect your Azure VNet resources. And Microsoft’s XDR and SIEM solution, comprising Microsoft Defender and Azure Sentinel, helps you quickly identify and contain security incidents.

-Next, for your infrastructure, the most important consideration is configuration management and software updates, so that all deployed infrastructure meets your security and policy requirements. For cloud resources, Azure landing zones, blueprints and policies can ensure that newly deployed infrastructure meets compliance requirements, and Azure Security Center, along with Log Analytics, helps with configuration and software update management for your on-premises, cross-cloud and cross-platform infrastructure. Monitoring is also critical for detecting vulnerabilities, attacks and anomalies. Here again, Microsoft Defender plus Azure Sentinel provide threat protection for multi-cloud workloads, enabling automated detection and response.

-Of course, at the end of the day, Zero Trust is all about understanding your data and then applying the right controls to protect it. We give you the controls to limit data access to only the people and processes that need it. The policies that you set, along with real-time monitoring, can then restrict or block the unwanted sharing of sensitive data and files. For example, with Microsoft Information Protection, you can automate labeling and classification of files and content. Policies are then assigned to the labels to trigger protective actions, such as encryption or limiting access, restricting third-party apps and services and much more. Additionally, for data outside of Microsoft 365, Azure Purview automatically discovers and maps data across your Azure, on-premises and SaaS data sources, and works with Microsoft Information Protection to help you classify your sensitive information.

-So that was a quick overview of the Zero Trust security model and some of the core ways that Microsoft can help. Moving to Zero Trust doesn’t have to be all or nothing. You can use a phased approach and close the most exploitable vulnerabilities first. Of course, keep checking back at aka.ms/ZeroTrustMechanics for more in our series, where I’ll share tips and hands-on demonstrations of the tools for implementing the Zero Trust security model across the six layers of defense that I covered today. You can also learn more at aka.ms/zerotrust. Thanks for watching.

by Contributed | May 12, 2021 | Technology

This article is contributed. See the original author and article here.

Today we released an exciting new feature in Microsoft Endpoint Manager that we call “Filters”. The feature adds greater flexibility for assigning apps and policies to groups of users or devices. Using filters, you can now combine a group assignment with characteristics of a device to achieve the right targeting outcome. For example, you can use filters to ensure that an assignment to a user group only targets corporate devices and doesn’t touch the personal ones. Read more about the announcement here and review the feature documentation here.

The feature is enabled with the service-side update of the 2105 service release, so you can expect to see it in the Microsoft Endpoint Manager admin center starting May 7, with availability continuing as the service-side update rolls out.

Here is an overview of the feature: Use Microsoft Endpoint Manager filters to target apps and policies to specific users | YouTube.

The feature is released in public preview and is supported by Microsoft for use in production environments. The following known issues apply; we will remove items from this list as issues are resolved.

Known issues:

- Compliance policy for “Risk score” and “Threat Level”: If your tenant is connected to a Mobile Threat Detection (MTD) partner service or Microsoft Defender for Endpoint (MDE), compliance policies for Windows 10, iOS or Android can include the optional setting “Require the device to be at or under the machine risk score” or “Require the device to be at or under the Device Threat Level”. Using this setting configures the Microsoft Endpoint Manager compliance calculation engine to include signals from external services in the overall compliance state for the device. Using filters on assignments for compliance policies with these settings is not currently supported.

- Available apps on Android device administrator (DA) enrolled devices: Filter evaluation for available apps requires Company Portal app version 5.0.4868.0 (released to the Google Play store in August 2020) or higher. If a device does not have this version (or higher) installed, apps will incorrectly show as available in the Company Portal but will be blocked from installing on the device.

- OSversion property for macOS devices: macOS version numbers are reflected in Intune as a string that combines the version number and the build version. For example, “11.2.3 (20D91)” includes version number 11.2.3 along with build version 20D91. When creating a filter based on OS version for macOS devices, you can specify the full string including both components. There is a known issue where the device check-in gateway does not gather the full build version for evaluation, resulting in an incorrect evaluation result. For example, if you specified a filter (Device.OSversion -eq "11.2.3 (20D91)") and a device of this version was evaluated, the result would be “Not match” because only the first part of the version string is evaluated.
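The mismatch behind the macOS known issue comes down to an exact string comparison between two different strings. This minimal Python sketch assumes, per the description above, that the check-in gateway reports only the version number while the filter rule holds the full string. `split_macos_version` is a hypothetical helper for inspecting such strings; it is not an Intune API, and the sketch does not model Intune's actual evaluation engine.

```python
import re
from typing import Optional, Tuple

# Matches strings such as "11.2.3 (20D91)": a version number, then an optional build in parentheses.
_MACOS_VERSION = re.compile(r"^(?P<version>[\d.]+)(?:\s+\((?P<build>[^)]+)\))?$")


def split_macos_version(os_version: str) -> Tuple[str, Optional[str]]:
    """Split a macOS OS version string into (version number, build version or None)."""
    match = _MACOS_VERSION.match(os_version.strip())
    if match is None:
        raise ValueError(f"unrecognized macOS version string: {os_version!r}")
    return match.group("version"), match.group("build")


# Value in the filter rule (Device.OSversion -eq "11.2.3 (20D91)").
filter_value = "11.2.3 (20D91)"
# Assumption: the gateway gathers only the version part, as the known issue describes.
reported_value = "11.2.3"

print(filter_value == reported_value)  # False, so an exact comparison yields "Not match"
print(split_macos_version(filter_value))  # ('11.2.3', '20D91')
```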

Known issues for reporting:

- Filter evaluation reports for Available Apps: Evaluation results are currently not collected for apps assigned to groups with the “Available” intent. The “Managed Apps” (Device > [Devicename] > Managed Apps) “Resolved intent” column incorrectly shows a status of “Available for install” even if the device should be excluded by a Filter.

- Delay in filter evaluation report data: In one specific case, filter evaluation reports (Devices > All Devices > [Devicename] > Filter evaluation) are not updated with the most recent evaluation results: a filter is used in an assignment, some evaluation results are produced, and then the filter is later removed from that assignment. In this scenario, the report may take up to 48 hours to reflect the change. Note that this occurs only if the filter is removed from the assignment, not if the assignment is recreated by removing the group and then re-adding it without a filter.

- Win32 apps reporting: There is a known issue where Win32 app reports (Apps > All apps > [App Name] > Device install status) for a device may be incomplete when a filter was used for any of the apps targeted at that device. Apps that were filtered out during a check-in may fail to report evaluation status events back to the MEM admin center. This impacts the app that used the filter, along with other Win32 apps in the same check-in session.

- Unexpected policies for other platform types show up in filter evaluation report: The Filter evaluation report for a single device (Devices > All Devices > [DeviceName] > Filter evaluation (preview)) shows all policies and apps that were targeted at the device, or at the primary user of the device, for which a filter evaluation was performed, even if the policy type is not applicable to the platform of that device. For example, if you assign a Windows 10 configuration policy with a filter to a user (a group that contains the user), the filter evaluation reports for that user’s iOS, Android and macOS devices will also show the Windows 10 policy and its evaluation results. Note: The evaluation results will always be “Not Match” due to platform.

- No way to see which policies and apps are using a filter: When editing or deleting an existing filter, there is no way in the UX to see where that filter is currently being used. We’re working on adding this (see “Features in development” below). As a workaround, you can use this PowerShell script that will walk through all assignments in your tenant and return the policies and apps where the filter has been used. See Get-AssociatedFilter.ps1 script here.

Features in development:

- Pre-deployment reporting – We’re working on adding reporting experiences that make it possible to know the impact of a Filter before using it in a workload assignment.

- Associated assignments – We’re working on an improvement to select a filter and be able to identify all the associated policies and apps where that filter is being used.

- More workloads – We’re adding filters to the assignment pages of more MEM workloads including Endpoint Security, Proactive remediation scripts, Windows update policies and more.

Frequently Asked Questions

Do filters replace group assignments?

No. Filters are used on top of groups when you assign apps and policies and give you more granular targeting options. Assignments still require you to target a group and then refine that scope using a filter. In some scenarios, you may wish to target “All users” or “All devices” virtual groups and further refine using filters in include or exclude mode.

What about “Excluded groups”? Can I use a filter on these assignments?

While filters cannot be added on top of an “Excluded group” assignment, the desired outcome can be achieved by combining Included groups with filters. Filters provide greater flexibility than Excluded groups because the “Excluded groups” feature does not support mixing group types (user and device groups). See the supportability matrix to learn more.

Excluded groups are still a great option for user exception management. For example, you deploy to “All Users” and exclude “VIP Users”.

Now with filters, you can build on top of this existing capability by mixing user and device targeting. For example, you can deploy to “All Users”, exclude “VIP Users”, and use a filter so that the deployment only installs on “Corporate-owned” devices.
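The “All Users” minus “VIP Users”, corporate devices only, scenario can be modeled as group scoping followed by a per-device filter predicate. This Python sketch is a simplified model under our own assumptions: the `Device` type, its `ownership` field, and `resolve_targets` are hypothetical, and the model ignores Intune's check-in timing, reporting, and conflict handling.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Set


@dataclass(frozen=True)
class Device:
    device_id: str
    primary_user: str
    ownership: str  # hypothetical field: "Corporate" or "Personal"


def resolve_targets(
    devices: Iterable[Device],
    included_users: Set[str],
    excluded_users: Set[str],
    device_filter: Callable[[Device], bool],
    mode: str = "include",
) -> Set[str]:
    """Return the IDs of devices targeted by one assignment (simplified model)."""
    if mode not in ("include", "exclude"):
        raise ValueError("mode must be 'include' or 'exclude'")
    targeted = set()
    for device in devices:
        # Group scoping: the user must be in the included group and not in the excluded group.
        if device.primary_user not in included_users or device.primary_user in excluded_users:
            continue
        # Filter: include mode keeps matching devices, exclude mode drops them.
        matches = device_filter(device)
        if (mode == "include" and matches) or (mode == "exclude" and not matches):
            targeted.add(device.device_id)
    return targeted


def is_corporate(device: Device) -> bool:
    return device.ownership == "Corporate"


devices = [
    Device("d1", "alice", "Corporate"),
    Device("d2", "alice", "Personal"),
    Device("d3", "bob", "Corporate"),  # bob is in "VIP Users"
]
all_users = {"alice", "bob"}
vip_users = {"bob"}
print(resolve_targets(devices, all_users, vip_users, is_corporate))  # {'d1'}
```

Only alice's corporate device is targeted: her personal device fails the filter, and bob's device is removed by the excluded group before the filter is considered.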

Here is summary guidance on how to use Groups, Exclude groups and Filters:

- Filters complement Azure AD groups for scenarios where you want to target a user group but filter ‘in’/’out’ devices from that group. For example: assign a policy to “All Finance Users” but then only apply it on corporate devices.

- Filters provide the ability to target assignments to the ‘All Users’ and ‘All Devices’ virtual groups while filtering in/out specific devices. These groups are not Azure AD groups but Intune “virtual” groups with improved performance and latency characteristics. For latency-sensitive scenarios, admins can use these groups and then further refine the targeted devices using filters.

- The “Excluded groups” option for Azure AD groups is supported, but you should use it mainly for excluding user groups. When it comes to excluding devices, we recommend using filters, because they are evaluated faster than dynamic device groups.

General recommendations on groups and assignment:

- Think of Include/Exclude groups as an initial starting point for deploying. The AAD group is the limiting group so use the smallest group scope possible.

- Assigned (also known as static) Azure AD groups can be used for Included or Excluded groups. However, it usually is not practical to statically assign devices into an AAD group unless they are pre-registered in AAD (e.g., via Windows Autopilot) or you want to collect them for a one-off, ad-hoc deployment.

- Dynamic Azure AD user groups can be used for Include/Exclude groups.

- Dynamic AAD device groups can be used for Include groups but there may be latency in populating group membership. In latency-sensitive scenarios where it is critical for targeting to occur instantly upon enrollment, consider using an assignment to User groups and then combine with filters to target the intended set of devices. If the scenario is userless, consider using the “All devices” group assignment in combination with filters.

- Avoid using Dynamic Azure AD device groups for Excluded groups. Latency in dynamic device group calculation at enrollment time can cause undesirable results such as unwanted apps and policy being delivered before the excluded group membership can be populated.

Are Intune Roles (RBAC) and scope tags supported?

Yes. During the filter creation wizard, you can add scope tags to the filter. (Note: The “Scope Tags” wizard screen only shows if your tenant has configured scope tags). There are four new privileges available for filters (Read, Create, Update, Delete). These permissions exist for built-in roles (Policy admin, Intune admin, School admin, App admin). To use a filter when assigning a workload, you must have the right permissions: You must have permission to the filter, permission to the workload and permission to assign to the group you chose.

Is the Audit Logs feature supported?

Yes. Any action performed by an admin on assignment filter objects is recorded in audit logs (Tenant administration -> Audit logs). This also includes the action of enabling the Filters feature in your account.

Can I use filters with user group assignments?

Yes. This is a good scenario for using filters. For example, you can assign a policy to “All finance users” and then apply an assignment filter to only include “Surface Laptop” devices.

Can I create a filter based on any device property I can see in MEM?

Not yet, but we plan to add more filter properties over time. The list of supported properties is here. Please let us know which properties would help in your scenarios at aka.ms/MEMfiltersfeedback.

Can I use filters in any assignment in MEM?

While in preview, filters are available in a core set of workload types (Apps, Compliance policies and Configuration profiles). The list of supported workloads is here. Please let us know which workloads would help in your scenarios at aka.ms/MEMFiltersfeedback.

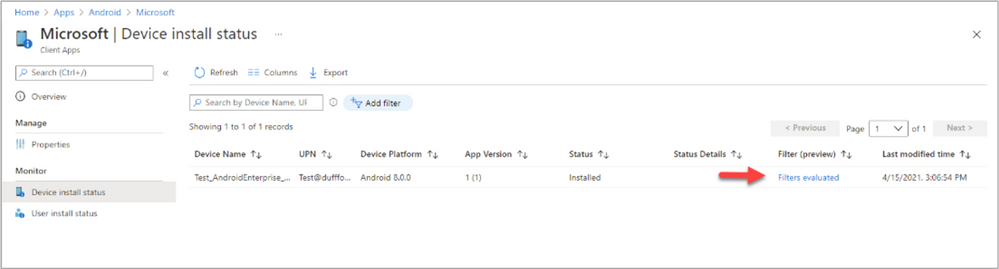

How does assignment filtering get reflected in device status and device install status reports in the MEM admin center?

Filter reporting information exists for each device under a new stand-alone report area called “Filter evaluation” and we’re working to further integrate reporting information into existing reports such as the “Device status” and “Device install” reports. As an example of where this is going, the apps report has a new column called “Filter (preview)” under Device install status. Over time you will see further integration of the filter information into other workload type reports.

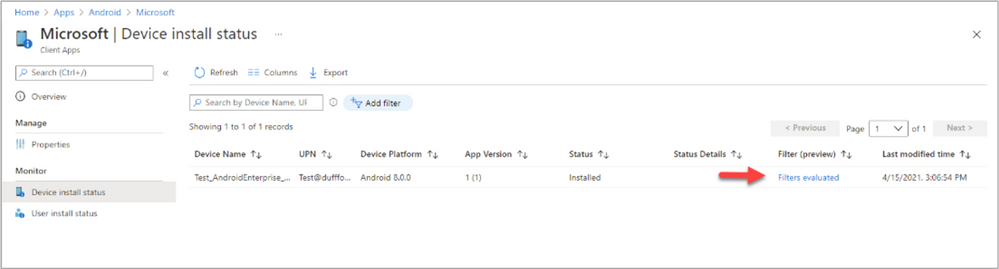

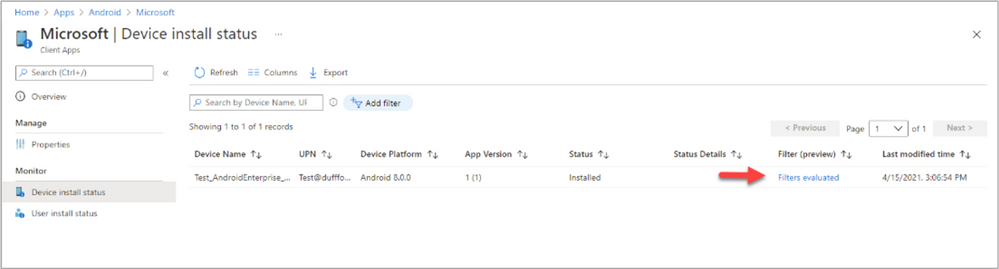

If you deploy a policy (Compliance or Configuration) to a group and navigate to the “Device status” report, there is a row in the report for each targeted device. When each targeted device checks in, it is evaluated against the associated filter and its status is updated (for example, the status shows “Not Applicable” if the assignment filter filtered the policy out). For apps, the experience in the Device install status report is similar, except that you can view details on the filter evaluation by clicking the “Filters evaluated” link.

Example of filter evaluation under the Device install status report


How many filters can I create?

There is a limit of 50 filters per customer tenant.

How many expressions can I have in a filter?

There is a limit of 3072 characters per filter.
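If filters are created through automation, a script could check these two limits before submitting a new filter. The sketch below is hypothetical: the two constants come from the answers above, while `validate_new_filter` is our own name and not an Intune or Microsoft Graph API. The example rule reuses the syntax shown in the macOS known issue.

```python
from typing import List

MAX_FILTERS_PER_TENANT = 50  # documented limit per customer tenant
MAX_FILTER_RULE_LENGTH = 3072  # documented limit in characters per filter


def validate_new_filter(rule: str, existing_filter_count: int) -> List[str]:
    """Return a list of limit violations; an empty list means the new filter fits."""
    problems = []
    if existing_filter_count >= MAX_FILTERS_PER_TENANT:
        problems.append(
            f"tenant already has {existing_filter_count} filters (limit {MAX_FILTERS_PER_TENANT})"
        )
    if len(rule) > MAX_FILTER_RULE_LENGTH:
        problems.append(f"rule is {len(rule)} characters (limit {MAX_FILTER_RULE_LENGTH})")
    return problems


rule = '(Device.OSversion -eq "11.2.3 (20D91)")'
print(validate_new_filter(rule, existing_filter_count=12))  # []
```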

Can I use more than one filter in an assignment?

No. An assignment is the combination of Group + Filter + other deployment settings. While you can’t use more than one filter per assignment, you can certainly use more than one assignment per policy or app. For example, you can deploy an iOS device restriction policy to the “Finance users” and “HR users” groups and link a different assignment filter to each of those assignments. However, be careful not to create overlaps or conflicts. We don’t recommend it, but we have documented the behavior here.

How do Filters work with the Windows 10 “Applicability Rules” feature?

Filters are a superset of the functionality of “Applicability rules”, so we recommend that you use filters instead. We do not recommend combining the two, and we don’t know of a reason to, but if you do have a policy assigned with both, the expected result is that both will apply: the filter is processed first, and then the applicability rules feature performs a second applicability evaluation.

Documentation

Let us know if you have any questions by replying to this post or reaching out to @IntuneSuppTeam on Twitter.
