This article is contributed. See the original author and article here.

Those responsible for data will tell you that no matter what they do, at the end of the day, their value is only seen when the customer can get to the data they want. As much as we want to say this comes down to the data architecture, the design, the platform and the code, it also has to include the backup, retention and recovery of that data.

Oracle on Azure is a lesser-known option for our beloved cloud, and we spend considerable time on this topic for our customers. Again, data professionals are often judged by their ability to get the data back in times of crisis. With this in mind, we’re going to talk about backup and recovery for Oracle on Azure, along with key concepts and what to keep in mind when designing and implementing a solution.

Backup Pressure Points

A customer’s RPO and RTO can really impact the design when going to the cloud. Customers assume that everything will work the same way as it did on-prem, and for most things it really does, but backups are one of the big leaps that have to be made. It’s essential to embrace newer snapshot technologies over older, more archaic backup utilities, and Oracle is no different. We’re already pressured by IO demands, and adding RMAN backups and Data Pump exports on top of nightly statistics gathering jobs is asking for a perfect storm of IO demand, so this all has to be taken into consideration when architecting the solution.

As we size out the database using extended window AWR reports, we expect backups and any exports to be part of the workload, but if they aren’t, either due to differences in design or products used, this needs to be discussed from the very beginning of the project.

Using all of this information, we identify the VM series not just by the DTU (a combined calculation for CPU and memory), but also by the VM throughput allowed, and then by the disk configuration needed to handle the workload. There are considerable disk options available for backups: not just blob storage as an inexpensive backup dump location, but locally managed disks that can be allocated for faster and more immediate access.
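To make the throughput part of that sizing concrete, here is a minimal Python sketch. The function name and all of the figures are hypothetical, chosen only for illustration; real caps come from the published limits for the VM size under consideration, and real workload numbers come from the AWR data.

```python
def io_headroom_mb_per_s(vm_cap: float, workload_peak: float, backup_rate: float) -> float:
    """Throughput left under the VM cap once the backup runs alongside the workload.

    All values are in MB/s. A negative result means the combined demand
    exceeds the VM limit and IO would be throttled.
    """
    return vm_cap - (workload_peak + backup_rate)


# Hypothetical figures, not taken from any real VM series or AWR report.
headroom = io_headroom_mb_per_s(vm_cap=1000.0, workload_peak=700.0, backup_rate=400.0)
if headroom < 0:
    print(f"Over the VM cap by {-headroom:.0f} MB/s: resize the VM or throttle the backup")
else:
    print(f"{headroom:.0f} MB/s of headroom remains")
```

If the backup and export workload was not captured in the AWR window, the `backup_rate` input is exactly the number that is missing from the sizing exercise.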

The Discussion

One of the first discussions to have when anyone is moving to the cloud is about backup and recovery: clearly identifying the Recovery Point Objective (RPO) and Recovery Time Objective (RTO), which for Oracle centers around RMAN, Data Pump (imports/exports) and third-party tools.

Recovery Point Objective (RPO) is the maximum interval of time over which data can be lost in a disruption before the loss exceeds what the business can tolerate. Having a clear understanding of this allowable threshold is essential to determining RPO. Recovery Time Objective (RTO) is how much time it can take to recover and return to business. This allowable duration, along with any SLA, defines when a database must be restored after an outage or disaster in order to avoid unacceptable consequences associated with a break in business continuity.
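As a rough illustration of how these two objectives turn into checks, the Python sketch below compares a backup schedule against them. It assumes the worst-case data loss is about the gap between archive log backups, and that time to recover is the restore time plus the time to apply logs; the function names and sample durations are hypothetical.

```python
from datetime import timedelta


def meets_rpo(log_backup_interval: timedelta, rpo: timedelta) -> bool:
    """Worst-case data loss is roughly the gap between recoverable points."""
    return log_backup_interval <= rpo


def meets_rto(restore_time: timedelta, log_apply_time: timedelta, rto: timedelta) -> bool:
    """Time back to business is the restore plus the recovery (log apply) time."""
    return restore_time + log_apply_time <= rto


# Hypothetical targets and measurements, for illustration only.
print(meets_rpo(timedelta(minutes=15), rpo=timedelta(minutes=30)))
print(meets_rto(timedelta(hours=3), timedelta(hours=2), rto=timedelta(hours=4)))
```

The inputs should be measured values from test restores rather than estimates, since the second check is the one most often missed.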

Specific questions around expectations for RPO and RTO revolve around:

- How long does the database take to complete a full or incremental backup, and how often does it run?

- How quickly must a restore complete to meet SLAs?

- Are there any dependencies on archive log backups that will impact the first two scenarios?

- Is there an option to restore the entire VM vs. database-level recovery?

- Is it a multi-tier system where more than the database or VM must be considered in the recovery?

When customers use less-modern practices for development and refreshes, it’s important to help them evolve:

- Are there requirements for table-level refreshes?

  - Is there a subset of table data that can satisfy the request?

  - Can objects be exported as needed during the workday?

  - Consider snapshot restores.

    - Once a snapshot is restored to a secondary VM, a Data Pump export can be used to copy the table to the destination.

  - Consider flashback database on a secondary working database that could be used for a remote table copy to the primary database.

    - The size of the Fast Recovery Area (FRA) must be considered when implementing this solution, and archiving must be enabled.

    - The idea is that flashback could be used to take a copy of the table and even use this table to copy over to a secondary database.

- Are database refreshes for secondary environments used for developers and testing?

  - Consider using snapshots vs. RMAN clones or full recoveries.

    - The latter are both time and resource intensive.

    - Snapshot restores to secondary servers can be created with a very short turnaround, saving considerable time.
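For the table-level refresh from a restored snapshot, the Data Pump step could look something like the Python sketch below, which only builds the `expdp` and `impdp` command lines. The connect strings, table name, directory object and dump file name are all hypothetical, and the sketch assumes the dump file is made reachable from the destination server between the two steps.

```python
from typing import List


def table_export_cmd(connect: str, table: str, directory: str, dumpfile: str) -> List[str]:
    """Data Pump export of a single table from the restored (secondary) database."""
    return [
        "expdp", connect,
        f"tables={table}",
        f"directory={directory}",
        f"dumpfile={dumpfile}",
        f"logfile={dumpfile}.exp.log",
    ]


def table_import_cmd(connect: str, table: str, directory: str, dumpfile: str) -> List[str]:
    """Data Pump import of that table into the destination, replacing the existing copy."""
    return [
        "impdp", connect,
        f"tables={table}",
        f"directory={directory}",
        f"dumpfile={dumpfile}",
        f"logfile={dumpfile}.imp.log",
        "table_exists_action=replace",
    ]


# Hypothetical names; nothing is executed here, the commands are only printed.
print(" ".join(table_export_cmd("system@CLONE", "HR.EMPLOYEES", "DATA_PUMP_DIR", "emp.dmp")))
print(" ".join(table_import_cmd("system@PROD", "HR.EMPLOYEES", "DATA_PUMP_DIR", "emp.dmp")))
```

Because the export runs against the secondary VM, the IO cost of the refresh lands there rather than on the primary database.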

These types of changes can make a world of difference for the customer’s future, allowing them to not just migrate to the cloud, but completely evolve the way they work today.

RMAN on Azure

RMAN (Recovery Manager) is still our go-to tool for Oracle backups on Azure, but it can add undue pressure on a cloud environment, and this has to be recognized. Following best practices can help eliminate some of these challenges.

- Ensure that Redo is separated from Data

- Stripe disks and use the correct storage for the IO performance

- Size the VM appropriately, taking VM IO throughput limits into sizing considerations.

- Incorporate optimization features in RMAN, such as parallelism matched to the number of channels and RATE for throttling backups.

- Compress the backup set AFTER and outside of RMAN if time is a consideration.

- Turn on optimization:

RMAN> CONFIGURE BACKUP OPTIMIZATION ON;

By default this parameter is set to OFF, which means RMAN does not skip files, such as read-only datafiles or archived redo logs, that are identical to copies already backed up to the same device type. Why back up something that’s already backed up and retained?
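The channel and RATE settings from the list above can be combined in a single RMAN command file. The Python sketch below generates one under a few assumptions: channels are allocated manually (so the degree of parallelism equals the number of channels), RATE is applied per channel, and the backup command itself is `BACKUP DATABASE PLUS ARCHIVELOG`. The channel names and the sample values are hypothetical.

```python
def rman_backup_script(channels: int, rate_mb_per_channel: int) -> str:
    """Build RMAN commands with one throttled disk channel per degree of parallelism."""
    if channels < 1 or rate_mb_per_channel < 1:
        raise ValueError("channels and rate must be positive")
    lines = ["CONFIGURE BACKUP OPTIMIZATION ON;", "RUN {"]
    for n in range(1, channels + 1):
        # RATE caps the read rate of this channel (per second).
        lines.append(f"  ALLOCATE CHANNEL c{n} DEVICE TYPE DISK RATE {rate_mb_per_channel}M;")
    lines.append("  BACKUP DATABASE PLUS ARCHIVELOG;")
    lines.append("}")
    return "\n".join(lines)


# Hypothetical values: 4 channels at 50 MB/s each, about 200 MB/s in total.
print(rman_backup_script(channels=4, rate_mb_per_channel=50))
```

Choosing the channel count and rate so that their product stays under the VM throughput headroom is the point of the exercise; the numbers above are placeholders, not recommendations.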

If you want to write backups to blob storage, blobfuse will still write the backup to locally managed disk and then move it to the less expensive blob storage. The time taken to perform this copy is what most are concerned about. If time isn’t a large concern for the customer, we choose this route, but for those with a stricter RPO/RTO we’re less likely to, choosing locally managed, striped disk instead.

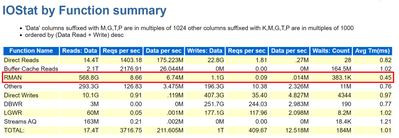
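A quick estimate of the extra time the copy to blob storage adds can help with that decision. The Python sketch below is simple arithmetic; the backup size and sustained throughput are hypothetical and should be replaced with measured values.

```python
def copy_minutes(backup_gb: float, throughput_mb_per_s: float) -> float:
    """Minutes needed to move a backup of the given size at a sustained rate."""
    if throughput_mb_per_s <= 0:
        raise ValueError("throughput must be positive")
    return backup_gb * 1024 / throughput_mb_per_s / 60


# Hypothetical: a 500 GB backup set copied at a sustained 200 MB/s.
print(f"{copy_minutes(500, 200):.1f} minutes")
```

If that figure pushes the end-to-end backup past the window implied by the RPO/RTO, locally managed striped disk is the safer target.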

As we’re still dependent on limits per VM on IO throughput, it’s important to take this all into consideration when sizing out the VM series and storage choices. We may size up from an E-series to an M-series that offers higher thresholds and write acceleration, along with ultra disk for our redo logs to assist with archiving. Identifying not only the IO but also the latency at the Oracle database level is important as these decisions are made. Don’t assume; gather the information from an AWR report covering the window when a backup is taken (or a Data Pump export, or whatever scenario is being researched) to identify the focal point.

For many customers, following storage best practices around disk striping and mirroring is essential to improving the performance of RMAN on Azure IaaS.

Using Azure Site Recovery Snapshots with Oracle

Azure Site Recovery isn’t “Oracle aware” like it is for Azure SQL, SQL Server or other managed database platforms, but that doesn’t mean that you can’t use it with Oracle. I just assumed that it was evident how to use it with Oracle but discovered that I shouldn’t assume. The key is putting the database in hot backup mode before the snapshot is taken and then taking it back out of backup mode once finished. The snapshot takes a matter of seconds, so the database spends very little time in backup mode before it is released to proceed.

This relies on the following steps being performed in a script run from a jump box (not on the database server):

- Main script executes an “az vm run-command invoke” against the Oracle database server to run a script.

- Script on the database server receives two arguments: Oracle SID, begin. This places the database into hot backup mode:

alter database begin backup;

- Main script then executes an “az snapshot create” against each of the disks it finds for the Resource Group and VM Name. Each snapshot only takes a matter of seconds to complete.

- Main script executes an “az vm run-command invoke” against the Oracle database server to run a script.

- Script on the database server receives two arguments: Oracle SID, end. This takes the database out of hot backup mode:

alter database end backup;

- Main script checks the log for any errors. If any are found, it sends a notification; if none, it exits.

This script would run once per day to take a snapshot that could then be used to recover the VM and the database.
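The main script described above could be sketched in Python roughly as follows. This is not the original script, only an outline under stated assumptions: the remote script path `/home/oracle/hot_backup.sh` is hypothetical, the list of disk IDs is assumed to have been looked up already, snapshots are created with `az snapshot create`, and the command runner is passed in so the flow can be tried without touching Azure.

```python
from typing import Callable, List, Sequence

Command = List[str]

# Hypothetical script on the database server; it takes the Oracle SID and begin/end.
REMOTE_SCRIPT = "/home/oracle/hot_backup.sh"


def backup_mode_cmd(resource_group: str, vm_name: str, sid: str, action: str) -> Command:
    """Run the hot-backup script on the database server via run-command."""
    return ["az", "vm", "run-command", "invoke",
            "--resource-group", resource_group, "--name", vm_name,
            "--command-id", "RunShellScript",
            "--scripts", f"{REMOTE_SCRIPT} {sid} {action}"]


def snapshot_cmd(resource_group: str, disk_id: str, snapshot_name: str) -> Command:
    """Snapshot one managed disk."""
    return ["az", "snapshot", "create",
            "--resource-group", resource_group,
            "--name", snapshot_name, "--source", disk_id]


def run_snapshot_cycle(resource_group: str, vm_name: str, sid: str,
                       disk_ids: Sequence[str], run: Callable[[Command], None]) -> None:
    """Begin backup, snapshot every disk, and always end backup afterwards."""
    run(backup_mode_cmd(resource_group, vm_name, sid, "begin"))
    try:
        for n, disk_id in enumerate(disk_ids):
            run(snapshot_cmd(resource_group, disk_id, f"{vm_name}-disk{n}-snap"))
    finally:
        # The database must not be left in hot backup mode if a snapshot fails.
        run(backup_mode_cmd(resource_group, vm_name, sid, "end"))
```

To execute it for real, a runner such as `lambda cmd: subprocess.run(cmd, check=True)` could be passed as `run`; error logging and notification would sit around the call to `run_snapshot_cycle`.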

Third Party Backup Utilities

As much as backup with RMAN is part of the following backup utilities, I’d like to push again for snapshots at the VM level that are also database-enforced, allowing for a smaller footprint on the system and quicker recovery times. Note that we are again moving away from full backups in RMAN and towards RMAN-aware snapshots, which give us a database-consistent snapshot to recover from and eliminate extra IO workload on the virtual machine.

Some popular services in the Azure Marketplace that are commonly used by customers for backups and snapshots are:

- Commvault

- Veeam

- Pure Storage Backup

- Rubrik

I’m starting to see a larger variety of tools available that once were only available on-prem. If the customer is comfortable with a specific third-party tool, I’d highly recommend researching whether it’s feasible to also use it in Azure to ease the transition. One of the biggest complaints from customers going to the cloud is the learning curve, so why not make it simpler by keeping the tools that can remain the same?

