This article is contributed. See the original author and article here.

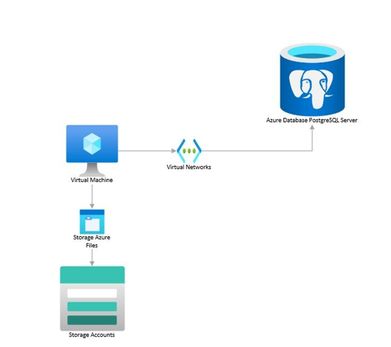

You can perform logical backups of your database from Azure Database for PostgreSQL to azure storage accounts for long term retention. These backups can be restored to your on-prem environment or to another PostgreSQL instance on a Virtual Machine.

Follow the steps below to extract a backup from Azure Database for PostgreSQL to a Storage Account.

In an nutshell, what we will need to do is the following:

- Use an existing VM or spin a Linux VM in the same region as the database (we use Ubuntu Server for this example).

- Mount your storage account as file share in the VM.

- Create a bash script that uses pg_dump to create a backup from your database.

- Schedule a task to run this script using crontab and to deallocate the VM when completed.

- Schedule the VM to start before the selected backup time using Logic Apps.

Prerequisites:

- Blob storage account with a File Share

- A Virtual Machine

- A Logic Apps instance.

Let’s break down each step.

- Spin up or use an existing Virtual Machine and configure it:

- Create a VM from the Azure portal. Refer to this QuickStart guide if needed.

- Start a remote session to your VM and install the following required packages:

sudo apt install postgresql-client-10 # installs PostgreSQL client utilities sudo apt install cifs-utils # installs the Common Internet File System utilities curl -sL https://aka.ms/InstallAzureCLIDeb | sudo bash # installs Azure CLI - If your PostgreSQL server is version 11 or above, please install the client tools with the following commands:

sudo apt install wget ca-certificates wget --quiet -O - https://www.postgresql.org/media/keys/ACCC4CF8.asc | sudo apt-key add - sudo sh -c 'echo "deb http://apt.postgresql.org/pub/repos/apt/ `lsb_release -cs`-pgdg main" >> /etc/apt/sources.list.d/pgdg.list' sudo apt update sudo apt-get install postgresql-client-11 #Or the required version above 10

- Mount your storage account as a File Share.

- Create a File Share in your storage account

If needed you can refer to this document on how to create an Azure File Share.

- Collect the following details from the Azure Portal: Resource Group, Storage Account Name and File Share Name.

- Log into your VM and declare the following variables:

resourceGroupName="myResourceGroup" storageAccountName="myStorageAccount" fileShareName="myFileShare" mntPath="/home/azureuser/myfolder/"#File share needs to be mounted in the home directory rather than the mnt directory. Otherwise, folder will be deleted after VM deallocation. - Create a folder where the storage account will be mounted:

mkdir /home/azureuser/myfolder - Login to your Azure subscription from the VM:

az login - Check that connection to the storage account through port 445 is possible:

httpEndpoint=$(az storage account show --resource-group $resourceGroupName --name $storageAccountName --query "primaryEndpoints.file" | tr -d '"') smbPath=$(echo $httpEndpoint | cut -c7-$(expr length $httpEndpoint)) fileHost=$(echo $smbPath | tr -d "/") nc -zvw3 $fileHost 445 - Storage account credentials are stored in the VM:

sudo mkdir /etc/smbcredentials storageAccountKey=$(az storage account keys list --resource-group $resourceGroupName --account-name $storageAccountName --query "[0].value" | tr -d '"') smbCredentialFile="/etc/smbcredentials/$storageAccountName.cred" if [ ! -f $smbCredentialFile ]; then echo "username=$storageAccountName" | sudo tee $smbCredentialFile > /dev/null echo "password=$storageAccountKey" | sudo tee -a $smbCredentialFile > /dev/null else echo "The credential file $smbCredentialFile already exists, and was not modified." fi - Change permissions so only root can read and modify the password file:

sudo chmod 600 $smbCredentialFile - Append mount point to /etc/fstab:

httpEndpoint=$(az storage account show --resource-group $resourceGroupName --name $storageAccountName --query "primaryEndpoints.file" | tr -d '"') smbPath=$(echo $httpEndpoint | cut -c7-$(expr length $httpEndpoint))$fileShareName if [ -z "$(grep $smbPath $mntPath /etc/fstab)" ]; then echo "$smbPath $mntPath cifs $nofail,vers=3.0,credentials=$smbCredentialFile,serverino,dir_mode=0777,file_mode=0$777" | sudo tee -a /etc/fstab > /dev/null else echo "/etc/fstab was not modified to avoid conflicting entries as this Azure file share was already present. You may want to double check /etc/fstab to ensure the configuration is as desired." fi - Mount the storage account:

sudo mount -a

3. Create a bash script that uses pg_dump to create a backup from your database. You can write something like this:

#!bin/bash

cd /home/azureuser/<folder to mount storage account>/

export PGPASSWORD="password"

date=$(date +%s)

echo $date

pg_dump -Fc -v --host=dbservername.postgres.database.azure.com --dbname=dbname --username=user@dbservername -f dbtest$date.dump

az vm deallocate -g MyResourceGroup -n MyVm #This deallocates the VM after the backup has been saved to the storage account

4. Schedule a task to run this script using crontab and to deallocate the VM when completed.

crontab -e

For example, use the following line if you would like to have the backup run every Friday at midnight (VM time zone is UTC):

0 0 * * 5 /home/azureuser/backup_script.sh

5. Schedule the VM to start before the selected backup time using Logic Apps.

Brought to you by Dr. Ware, Microsoft Office 365 Silver Partner, Charleston SC.

Recent Comments