by Contributed | Jun 15, 2021 | Technology

This article is contributed. See the original author and article here.

We just added new APIs in preview in the Azure IoT SDK for C# that will make it easier for device developers to implement IoT Plug and Play, and we’d love to have your feedback.

Since we released IoT Plug and Play in September 2020, we have provided PnP-specific helper functions in the form of samples to demonstrate the PnP conventions, e.g. how to format a PnP telemetry message, how to acknowledge property updates, etc.

As IoT Plug and Play is becoming the “new normal” for connecting devices to Azure IoT, it is important that there is a solid set of APIs in the C# client SDK to help you efficiently implement IoT Plug and Play in your devices. PnP models expose components, telemetry, commands, and properties. These new APIs format and send messages for telemetry and property updates. They can also help the client receive and ack writable property update requests and incoming command invocations.

These additions to the C# SDK can become the foundation of your solution, taking the load off your plate when it comes to formatting data and making your devices future-proof. We introduced these functions in preview for now, so you can test them in your scenarios and give us feedback on the design. As long as we are in preview, it is easy to change these APIs, so this is the right time to give them a try and let us know whether they fit your needs or whether you see possible improvements.

The NuGet package can be found here: https://www.nuget.org/packages/Microsoft.Azure.Devices.Client/1.38.0-preview-001

We have created a couple of samples to help you get started with these new APIs. Have a look at:

https://github.com/Azure/azure-iot-sdk-csharp/blob/preview/iothub/device/samples/convention-based-samples/readme.md

In these new APIs, we introduced a couple of new types to help with convention operations. For telemetry, we expose TelemetryMessage that simplifies message formatting for the telemetry:

// Send telemetry "serialNumber".
string serialNumber = "SR-1234";
using var telemetryMessage = new TelemetryMessage
{
    MessageId = Guid.NewGuid().ToString(),
    Telemetry = { ["serialNumber"] = serialNumber },
};
await _deviceClient.SendTelemetryAsync(telemetryMessage, cancellationToken);

And in the case of a component (here thermostat1), you can prepare the telemetry message this way:

using var telemetryMessage = new TelemetryMessage("thermostat1")
{
    MessageId = Guid.NewGuid().ToString(),
    Telemetry = { ["serialNumber"] = serialNumber },
};
await _deviceClient.SendTelemetryAsync(telemetryMessage, cancellationToken);
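To make concrete what this formatting amounts to, here is a minimal Python sketch (not the C# SDK) of the IoT Plug and Play telemetry convention: the body is UTF-8 encoded JSON, and telemetry sent from a component carries the component name in the `$.sub` message property. The helper name `build_pnp_telemetry` and the dictionary layout are assumptions made for illustration only; they are not part of any SDK.

```python
import json
import uuid
from typing import Any, Dict, Optional


def build_pnp_telemetry(values: Dict[str, Any],
                        component: Optional[str] = None) -> Dict[str, Any]:
    """Describe a PnP telemetry message in a transport-agnostic way.

    Hypothetical helper: a real client would pass these fields to the
    message type of whichever SDK it uses.
    """
    message: Dict[str, Any] = {
        "message_id": str(uuid.uuid4()),
        "content_type": "application/json",
        "content_encoding": "utf-8",
        "body": json.dumps(values).encode("utf-8"),
        "properties": {},
    }
    if component is not None:
        # Telemetry from a non-default component is tagged with "$.sub".
        message["properties"]["$.sub"] = component
    return message


# Telemetry from the default (root) component, then from "thermostat1".
root = build_pnp_telemetry({"serialNumber": "SR-1234"})
comp = build_pnp_telemetry({"serialNumber": "SR-1234"}, component="thermostat1")
print(root["properties"], comp["properties"])
```

The only difference between the two messages is the `$.sub` property, which is the detail the component-aware TelemetryMessage constructor is taking care of.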

For properties, we introduced the type ClientProperties, which exposes the method TryGetValue.

With this new type, accessing a device twin property becomes:

// Retrieve the client's properties.
ClientProperties properties = await _deviceClient.GetClientPropertiesAsync(cancellationToken);

// To fetch the value of client reported property "serialNumber" under component "thermostat1".
bool isSerialNumberReported = properties.TryGetValue("thermostat1", "serialNumber", out string serialNumberReported);

// To fetch the value of service requested "targetTemperature" value under component "thermostat1".
bool isTargetTemperatureUpdateRequested = properties.Writable.TryGetValue("thermostat1", "targetTemperature", out double targetTemperatureUpdateRequest);

Note that in this case we have a component named thermostat1. We first read the reported serialNumber property; then, for a writable property, we go through properties.Writable.

Reporting properties follows the same pattern. The new ClientPropertyCollection type helps you update properties in batches, since it is a collection, and it exposes the method AddComponentProperty:

// Update the property "serialNumber" under component "thermostat1".
var propertiesToBeUpdated = new ClientPropertyCollection();
propertiesToBeUpdated.AddComponentProperty("thermostat1", "serialNumber", "SR-1234");

ClientPropertiesUpdateResponse updateResponse = await _deviceClient
    .UpdateClientPropertiesAsync(propertiesToBeUpdated, cancellationToken);
long updatedVersion = updateResponse.Version;

With this, it becomes much easier to respond to top-level property update requests, even for a component model:

await _deviceClient.SubscribeToWritablePropertiesEventAsync(
    async (writableProperties, userContext) =>
    {
        // React only when the service requested a new "targetTemperature" for "thermostat1".
        if (writableProperties.TryGetValue("thermostat1", "targetTemperature", out double targetTemperature))
        {
            // Build the acknowledgment for the requested value and version.
            IWritablePropertyResponse writableResponse = _deviceClient
                .PayloadConvention
                .PayloadSerializer
                .CreateWritablePropertyResponse(targetTemperature, CommonClientResponseCodes.OK, writableProperties.Version, "The operation completed successfully.");

            var propertiesToBeUpdated = new ClientPropertyCollection();
            propertiesToBeUpdated.AddComponentProperty("thermostat1", "targetTemperature", writableResponse);

            ClientPropertiesUpdateResponse updateResponse = await _deviceClient.UpdateClientPropertiesAsync(propertiesToBeUpdated, cancellationToken);
        }
    },
    null, // userContext, unused here (the original snippet was truncated; these trailing arguments are assumed)
    cancellationToken);
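For reference, the acknowledgment that such a response serializes to can be sketched in a few lines of Python. This is an illustration of the IoT Plug and Play convention, not the C# SDK: the reported value is wrapped with an ack code (`ac`), the acknowledged version (`av`), and an optional description (`ad`), and properties of a component are nested under the component name with the `"__t": "c"` marker. The function name `build_writable_property_ack` and the sample values are hypothetical.

```python
import json
from typing import Any, Dict, Optional


def build_writable_property_ack(name: str,
                                value: Any,
                                ack_code: int,
                                ack_version: int,
                                description: str = "",
                                component: Optional[str] = None) -> Dict[str, Any]:
    """Build the reported-properties patch that acknowledges a writable property.

    Hypothetical helper for illustration; it only assembles the JSON shape.
    """
    ack: Dict[str, Any] = {"value": value, "ac": ack_code, "av": ack_version}
    if description:
        ack["ad"] = description
    patch: Dict[str, Any] = {name: ack}
    if component is not None:
        # Component properties are nested and flagged with the "__t": "c" marker.
        patch = {component: {"__t": "c", **patch}}
    return patch


patch = build_writable_property_ack(
    "targetTemperature", 21.5, 200, 3,
    description="The operation completed successfully.",
    component="thermostat1",
)
print(json.dumps(patch, indent=2))
```

Passing `component=None` yields the flat shape used by a model without components, which is the difference between the Thermostat and TemperatureController style of device.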

As long as these APIs stay in preview, you'll find the usual set of PnP samples, migrated to use the new APIs, in the preview branch of the code repository:

https://github.com/Azure/azure-iot-sdk-csharp/tree/preview/iothub/device/samples/convention-based-samples

See project Thermostat for the non-component sample and TemperatureController for the component sample.

Again, this is the right time to share your feedback and comments on these APIs. Contact us, open an issue, and help us provide the PnP API you need.

Happy testing,

Eric for the Azure IoT Managed SDK team

by Contributed | Jun 15, 2021 | Technology


Summary

As previously announced, we are deprecating all /microsoft org container images hosted in Docker Hub repositories on June 30th, 2021. This includes some old azure-cli images (pre v2.12.1), all of which are already available on Microsoft Container Registry (MCR).

In preparation for the deprecation, we are removing the latest tag from the microsoft/azure-cli container image in Docker Hub. If you are referencing microsoft/azure-cli:latest in your automation or Dockerfiles, you will see failures.

What should I do?

To avoid any impact on your development, deployment, or automation scripts, you should update docker pull commands, FROM statements in Dockerfiles, and other references to microsoft/azure-cli container images to explicitly reference mcr.microsoft.com/azure-cli instead.
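If you have many scripts or Dockerfiles to update, the substitution can be automated. The following Python sketch rewrites references to the Docker Hub image so that they point at MCR, keeping any tag as written. The function name `migrate_azure_cli_references` is hypothetical, and the pattern is an assumption about how references typically appear (optionally prefixed with `docker.io/`); review the result before committing it.

```python
import re

# Matches "microsoft/azure-cli", optionally prefixed with "docker.io/", but not
# when it is part of a longer name such as "myorg/microsoft/azure-cli" or
# "microsoft/azure-cli-extra". References already on MCR do not match.
_OLD_IMAGE = re.compile(
    r"(?<![\w./-])(?:docker\.io/)?microsoft/azure-cli(?![\w./-])"
)
NEW_IMAGE = "mcr.microsoft.com/azure-cli"


def migrate_azure_cli_references(text: str) -> str:
    """Return text with Docker Hub azure-cli references pointed at MCR."""
    return _OLD_IMAGE.sub(NEW_IMAGE, text)


dockerfile = "FROM microsoft/azure-cli:2.9.1\nRUN az --version\n"
print(migrate_azure_cli_references(dockerfile))
```

Tags are left untouched on purpose, so a pinned version stays pinned after the move.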

What’s next?

On June 30th, we will remove all version tags from Docker Hub for microsoft/azure-cli. After that date, the only way to consume the container images will be via MCR.

How to get additional help?

We understand that there may be unanswered questions. You can get additional help by submitting an issue on GitHub.

by Contributed | Jun 15, 2021 | Technology


Today, I got a very interesting question about whether it is possible to use external tables to connect to Azure SQL Managed Instance, SQL Database, or Synapse. In this article, I would like to explain how.

Besides the Linked Server option, my first choice was to use SQL Server 2019 with PolyBase. After installing PolyBase and running the following T-SQL statements, I was able to connect to Managed Instance, SQL Database, and Synapse from my on-premises server or Azure virtual machine.

CREATE MASTER KEY ENCRYPTION BY PASSWORD = 'Password';

CREATE DATABASE SCOPED CREDENTIAL AzureSQLExternalTableCredentials
    WITH IDENTITY = 'UserName', SECRET = 'Password';

CREATE EXTERNAL DATA SOURCE AzureSQLExternalTableDataSource
    WITH (LOCATION = 'sqlserver://servername.database.windows.net', PUSHDOWN = ON, CREDENTIAL = AzureSQLExternalTableCredentials);

CREATE EXTERNAL TABLE [dbo].[AzureSQLExternalTable_MyTable] ([id] [int] NOT NULL)
    WITH (DATA_SOURCE = AzureSQLExternalTableDataSource, LOCATION = 'databasename.schemaname.TableName');

Running the query SELECT * FROM AzureSQLExternalTable_MyTable, I was able to obtain the data.

Unfortunately, it is not possible to insert data into the table AzureSQLExternalTable_MyTable, because running DML commands against external tables is not supported in Azure SQL, Synapse, or on-premises SQL Server.

Enjoy!

by Contributed | Jun 15, 2021 | Technology


ORCA is an open-source software solution which helps academic institutions assess the effectiveness of online learning by analyzing data on students’ attendance and engagement with online platforms and content. As a group of students at University College London (UCL), we had the chance to develop ORCA through UCL’s Industry Exchange Network (IXN) programme in collaboration with Microsoft as part of a course within our degree.

Guest post by team leads Lydia Tsami and Omar Beyhum

Although we’re currently busy working on our dissertation projects as part of our degrees, two of our developers – Lydia Tsami and Omar Beyhum – are joining Ayca Bas from the Microsoft 365 Advocacy team in this webinar to talk about how we designed, developed, and delivered ORCA.

What is ORCA?

ORCA is designed to complement the online learning and collaboration tools of schools and universities, most notably Moodle and Microsoft Teams. In brief, it can generate visual reports based on student attendance and engagement metrics, and then provide them to the relevant teaching staff. To accomplish this, it leverages Microsoft Graph to cross-reference student identities across different platforms and listen to events such as participants joining meetings. Data can be synthesized via templated SharePoint lists or Power BI dashboards, then shared with the relevant staff members based on an institution’s Azure Active Directory.

You can check the full webinar below if you’re interested in seeing a demo of ORCA in use, how it was implemented, and how to get started with installing it or contributing to the project!

Make your own apps

Keen on developing your own applications on top of services like Teams, SharePoint, and Microsoft Graph? Make sure to check out these resources. We found them pretty useful when we first got started:

by Contributed | Jun 15, 2021 | Technology


I’m excited to announce the second step in our normalization journey. Following our networking schema, we now extend our Azure Sentinel Information Model (ASIM) guidance and release our DNS schema. We expect to follow suit with additional schemas in the coming weeks.

Special thanks to Yaron Fruchtmann and Batami Gold, who made all this possible.

This release includes additional artifacts to ensure easier use of ASIM:

- All the normalizing parsers can be deployed in a click using an ARM template. The initial release contains normalizing parsers for Infoblox, Cisco Umbrella, and Microsoft DNS server.

- We have migrated analytic rules that worked on a single DNS source to use the normalized template. Those are available in GitHub and will be available in the in-product gallery in the coming days. You can find the list at the end of this post.

With a single-click deployment and support for normalized content in analytic rules, we believe we will see accelerated adoption of the Azure Sentinel Information Model.

Join us to learn more about the Azure Sentinel Information Model in two webinars:

- The Information Model: Understanding Normalization in Azure Sentinel

- Deep Dive into Azure Sentinel Normalizing Parsers and Normalized Content

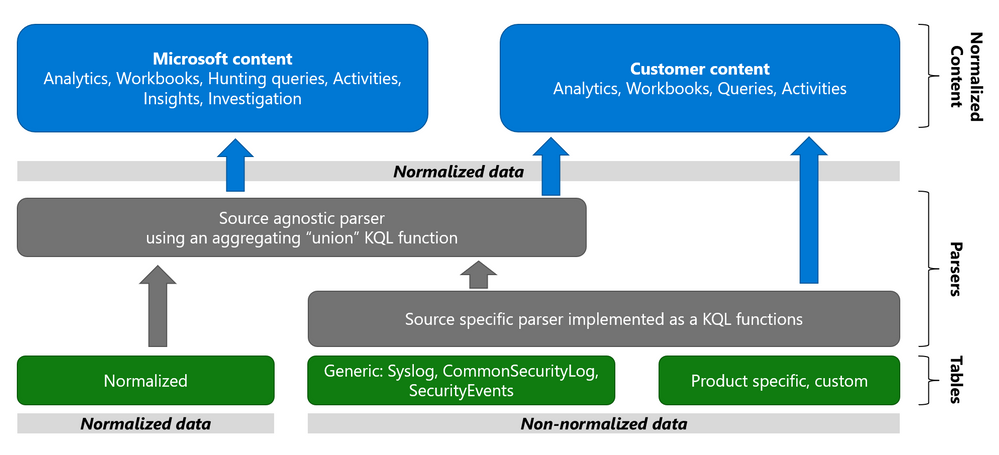

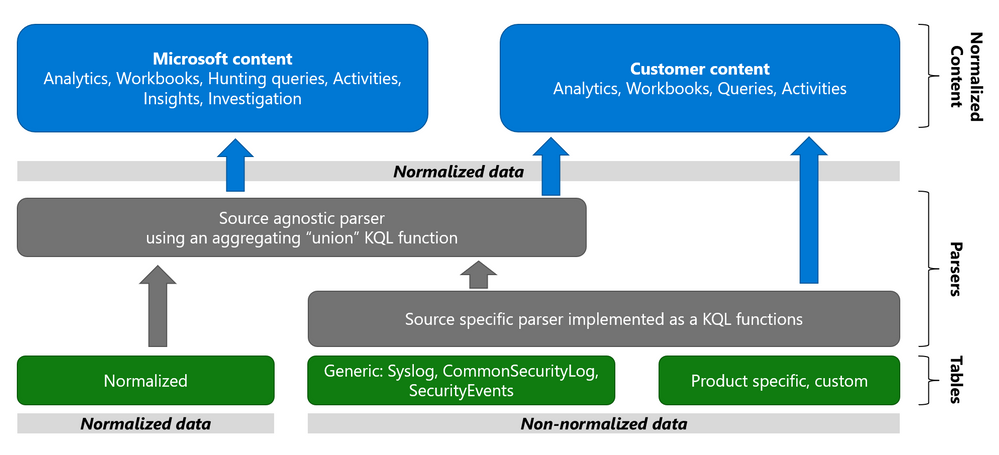

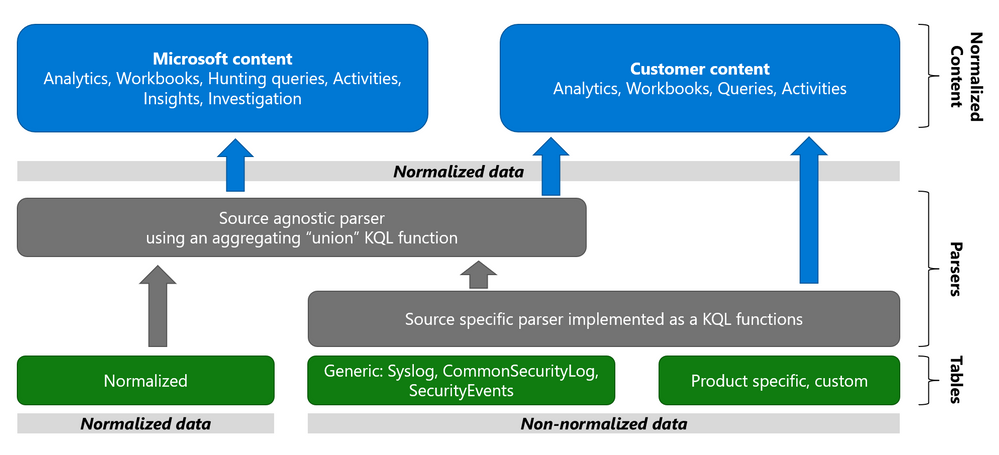

Why normalization, and what is the Azure Sentinel Information Model?

Working with various data types and tables together presents a challenge. You must become familiar with many different data types and schemas, write and use a unique set of analytics rules, workbooks, and hunting queries for each, even for those that share commonalities (for example, DNS servers). Correlation between the different data types necessary for investigation and hunting is also tricky.

The Azure Sentinel Information Model (ASIM) provides a seamless experience for handling various sources in uniform, normalized views. ASIM aligns with the Open-Source Security Events Metadata (OSSEM) common information model, promoting vendor-agnostic, industry-wide normalization. ASIM:

- Allows source-agnostic content and solutions

- Simplifies analyst use of the data in Sentinel workspaces

The current implementation is based on query-time normalization using KQL functions and includes the following:

- Normalized schemas cover standard sets of predictable event types that are easy to work with and to build unified capabilities on. The schema defines which fields should represent an event, a normalized column naming convention, and a standard format for the field values.

- Parsers map existing data to the normalized schemas. Parsers are implemented using KQL functions.

- Content for each normalized schema includes analytics rules, workbooks, hunting queries, and additional content. This content works on any normalized data without the need to create source-specific content.

Why normalize DNS data?

ASIM is especially useful for DNS. Different DNS servers and DNS security solutions, such as Infoblox, Cisco Umbrella, and Microsoft DNS server, produce highly non-standard logs even though they all represent similar information, namely DNS protocol activity. With normalization, standard, source-agnostic content can apply to all DNS servers without being customized for each one. In addition, an analyst investigating an incident can query the DNS data in the system without specific knowledge of the source providing it.
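The idea can be illustrated outside of KQL with a small Python sketch: one parser per source maps raw events to a shared set of columns, and a single detection then runs on the normalized records regardless of where they came from. The raw field names, the `vendor_a` and `vendor_b` sources, and the threshold are hypothetical; the normalized column names are chosen to resemble ASIM naming and should be treated as illustrative rather than as the schema definition.

```python
from collections import Counter
from typing import Any, Callable, Dict, Iterable, List, Tuple

Record = Dict[str, Any]


def parse_vendor_a(raw: Record) -> Record:
    # Hypothetical source A: lower-case response codes, trailing dot on names.
    return {
        "SrcIpAddr": raw["client_ip"],
        "DnsQuery": raw["qname"].rstrip(".").lower(),
        "DnsResponseCodeName": raw["rcode"].upper(),
    }


def parse_vendor_b(raw: Record) -> Record:
    # Hypothetical source B: different field names for the same information.
    return {
        "SrcIpAddr": raw["InternalIp"],
        "DnsQuery": raw["Domain"].rstrip(".").lower(),
        "DnsResponseCodeName": raw["ResponseCode"].upper(),
    }


PARSERS: Dict[str, Callable[[Record], Record]] = {
    "vendor_a": parse_vendor_a,
    "vendor_b": parse_vendor_b,
}


def normalize(events: Iterable[Tuple[str, Record]]) -> List[Record]:
    """Apply the matching parser to each (source, raw event) pair."""
    return [PARSERS[source](raw) for source, raw in events]


def excessive_nxdomain(records: Iterable[Record], threshold: int) -> List[str]:
    """Source-agnostic rule: clients with at least `threshold` NXDOMAIN answers."""
    counts = Counter(
        r["SrcIpAddr"] for r in records if r["DnsResponseCodeName"] == "NXDOMAIN"
    )
    return sorted(ip for ip, n in counts.items() if n >= threshold)


events = [
    ("vendor_a", {"client_ip": "10.0.0.5", "qname": "Bad.Example.", "rcode": "nxdomain"}),
    ("vendor_b", {"InternalIp": "10.0.0.5", "Domain": "other.example", "ResponseCode": "NXDOMAIN"}),
    ("vendor_b", {"InternalIp": "10.0.0.9", "Domain": "ok.example", "ResponseCode": "NOERROR"}),
]
records = normalize(events)
noisy = excessive_nxdomain(records, threshold=2)
print(noisy)  # ['10.0.0.5']
```

Note that the client at 10.0.0.5 is only flagged because its failures from two different sources are counted together, which is the kind of correlation that is hard to do without a common schema.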

Analytic Rules added or updated to work with ASIM DNS

- Added:

  - Excessive NXDOMAIN DNS Queries (Normalized DNS)

  - DNS events related to mining pools (Normalized DNS)

  - DNS events related to ToR proxies (Normalized DNS)

- Updated to include normalized DNS:

  - Known Barium domains

  - Known Barium IP addresses

  - Exchange Server Vulnerabilities Disclosed March 2021 IoC Match

  - Known GALLIUM domains and hashes

  - Known IRIDIUM IP

  - NOBELIUM – Domain and IP IOCs – March 2021

  - Known Phosphorus group domains/IP

  - Known STRONTIUM group domains – July 2019

  - Solorigate Network Beacon

  - THALLIUM domains included in DCU takedown

  - Known ZINC Comebacker and Klackring malware hashes
