Video Transcript:

– Up next, I’m joined by Lead Program Manager, CJ Tan, to go deeper on Viva Topics, which uses AI to organize information into accessible knowledge, within the apps and services you use every day. And we’re going to walk through the admin experience for setup, as well as the controls available to publish and curate topic pages and ensure content accuracy. So CJ, welcome to Microsoft Mechanics.

– Thank you for having me.

– Thanks for joining us today. So of course, before we get into Viva Topics, if you’re new to Microsoft Viva, it comprises four modules that deliver new employee experiences across knowledge, communications, resources, learning, and insights, all in the context of your work. Now, these leverage the foundational technologies of Microsoft 365, Microsoft Graph and AI to deliver a modern employee experience platform. In fact, you can learn more by watching our Essentials episode at aka.ms/VivaEssentials. And one of the foundational experiences in the new Viva platform is Topics. So CJ, can you explain what we’re solving for here?

– Of course, so the premise of Topics is to build a system that transforms information into knowledge and actively delivers it to you in the context of your work. This is particularly important, as many of us, especially now, are working remotely or in more hybrid office environments, so it can be harder to stay informed. In any work environment, there are really two currencies. On one side, there is the data and information itself, which you want to discover when you need it. On the other side, there are the people who have worked on content with their expertise, knowledge, and skills. So with Topics, we bring these two currencies together, so no matter where you are or whatever relationships you have, we can connect you to the knowledge and the people closest to it.

– Right, and one of the key things here is that the knowledge comes to you without having to search for it all in the context of what you’re doing every day. So can you show us an example?

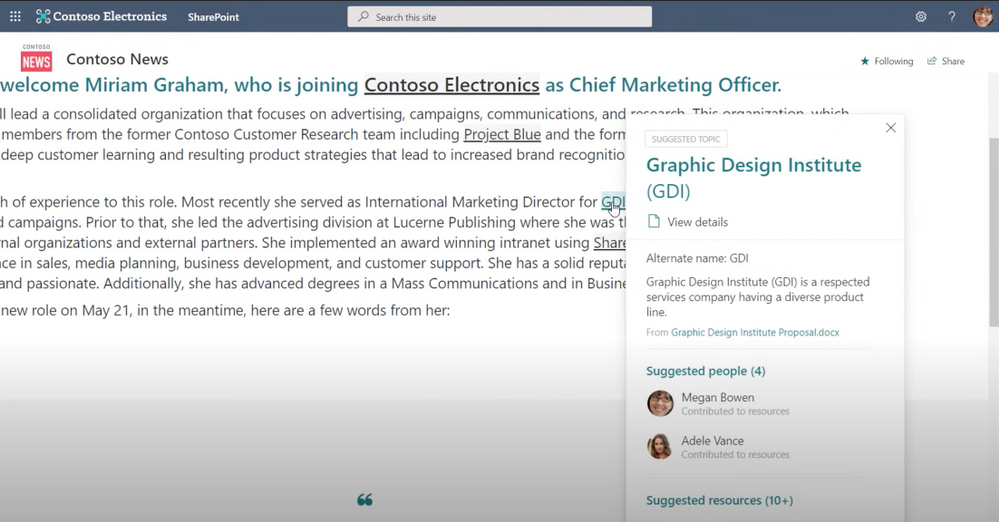

– Yes, so we can all relate to the experience of either being in a new job or a new role, and you have to get up to speed quickly. And there are terms, project names, or acronyms that feel like a foreign language. For example, here’s a news post on a SharePoint site that includes internal project names and terms like Project Blue and GDI, without any context.

– Right, and we’ve all been there where it takes time to acclimate. And some of this might be tribal knowledge, because no one’s really thinking about or explaining some of the terms and acronyms especially if you’re new to a role. So what you’ve got to do in these cases is a bunch of homework and really everything you can to avoid looking uninformed to your peers.

– Right, so now you don’t need to chase things down like an outsider. You can directly get insider knowledge to save you time and without needing to even search in many cases. So for example, I’ll hover over GDI and you’ll see a nice summary of what it is, who’s connected to it and suggested resources, right in the context of where that term came up. What I see here might be enough context and information, but if I want to go deeper, I can simply click in and find a topic page. And here you’ll see a lot more detail like the description, people who are domain experts related to this topic, and in suggested files and pages, I can see a lot of great documents here and sites related to the topic. One important thing to note is that it is only showing me resources that I have access to based on my individual permissions. This means there are no dead links and I don’t need to request permissions to anything I see. And conversely, I don’t see anything I shouldn’t see. All the content you see in this case was automatically discovered with the built-in AI and I was able to get up to speed quickly. That said, if I wanted to, I could also search for a topic. You’ll remember the other topic on the news post was called Project Blue. So here on office.com, I’ll search for that. And you’ll see, it brings up an answer card for that topic. And I can click in to see the topic page.
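To make the permission trimming described above concrete, here is a minimal Python sketch. It is not how Viva Topics is implemented; the `Resource` class, the `allowed_users` field, and the user and file names are all hypothetical, chosen only to show the idea that a topic's suggested resources are filtered down to what the current viewer can already open.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    """A file or page suggested on a topic (hypothetical model)."""
    title: str
    allowed_users: frozenset  # hypothetical stand-in for real permissions


def visible_resources(resources, user):
    """Return only the resources the given user is allowed to open."""
    return [r for r in resources if user in r.allowed_users]


# Hypothetical resources attached to the "GDI" topic.
topic_resources = [
    Resource("GDI overview.docx", frozenset({"alex", "sam"})),
    Resource("GDI budget.xlsx", frozenset({"alex"})),
]

for viewer in ("alex", "sam"):
    titles = [r.title for r in visible_resources(topic_resources, viewer)]
    print(viewer, "sees", titles)
```

Because the filter runs per viewer, two people opening the same topic page can see different resource lists, which matches the "no dead links" behavior described in the demo.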

– Okay, so what’s happening behind the scenes to make all this possible?

– Yeah, there’s definitely a lot of magic through AI going on behind the scenes. So Viva Topics looks for nouns or acronyms being mentioned through the work that people are doing. The AI builds connections and inferences to content that lives inside of Microsoft 365. For example, it’s looking at SharePoint profiles. Microsoft Graph can see activity around the content and associated people to determine who may be connected to a given topic. So using natural language understanding with entity recognition, Viva is able to extract a summary description of the topic, and AI infers the suggested files, pages, and sites for the topic. Additionally, the people who have collaborated on these resources are selected and ranked based on their contribution as well. And you can think of the output as equivalent to posting to a Wikipedia page on the topic.

– What controls exist then to ensure both the accuracy and appropriateness of the information and the knowledge that’s associated with that topic?

– Yeah, so here AI takes the first draft of bringing this material together and your domain knowledge experts review and edit the content on those topic pages, ensuring its accuracy while AI continues to suggest content to keep it up to date.

– So in other words, for this to work well, you need experts close to the content to review those topic pages, versus say just the people in the IT department?

– Yeah, right, you’ll want to assign knowledge managers who can guide the crowdsourcing efforts of your experts. In fact, let me walk you through the experience that we give you as a knowledge manager. So in the Viva Topics center, under Manage Topics, you can see the AI has suggested 98 topics. Next to each of these is a quality score, which is a rough gauge of how complete a job the AI has done to start building that page; whether it has a topic summary, found resources, or related people. Second, you’ll see impressions, which measures how frequently the topic has appeared to users. These two metrics combined can help you to prioritize where you want to place your review and curation efforts, or reach out to one of the suggested people listed to review and curate. Again, this is permission-based. So even as a knowledge manager, I can only see topics that I personally have access to. For example, a knowledge manager in the human resources team would see different suggested topics than a knowledge manager in the research and development team. Now, if I click into one of these topics, for example, Rainier Project, you’ll see the AI has started a basic page with the information it could extract. From here, I can edit all of these web parts on the page. Here for example, I can edit the topic description. I’ll just add a word here, modern. Then I can add people. So I’ll go ahead and add Nester since he’s Lead Project Manager. You’ll see suggested people on the page discovered by AI as contributors to the topic. And the same thing goes for files. I can add additional files directly by hitting add or pin a file so it appears at the top for those who see the topic. I can also remove content by clicking on the X for each of the items. AI isn’t perfect, so this easy action makes it simple to guide the AI. I’ll remove this one. Next I can add related sites and resources for people. I’ll add the landing site in this case. 
And one of the things I love as a knowledge manager is I can also connect a topic to related topics that AI may not already have found. So here I’ll add Project Mu and say it is the precursor to the Rainier project. Next, comments are enabled by default. This is great for people to provide feedback and can create more engagement, but I can also disable them if I want to. And then once I’m happy, I can publish the topic page. And that will take just a few moments. Once I’m back in the knowledge manager experience, you’ll see the topic now appears in the published tab. Of course, as with any other SharePoint page, I can go back and review these entries at any time to make sure everything stays up to date, or unpublish and remove entries if I need to.
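As a rough illustration of using the two Manage Topics metrics together, the Python sketch below orders suggested topics for review. The transcript does not say how the metrics should be combined, so the rule here (most impressions first, then lowest quality score) is an assumption, as are the `SuggestedTopic` class, the 0 to 100 score range, and the sample numbers.

```python
from dataclasses import dataclass


@dataclass
class SuggestedTopic:
    """One row of a suggested-topics list (hypothetical model)."""
    name: str
    quality_score: float  # assumed range 0-100; higher means a more complete page
    impressions: int      # how often the topic has appeared to users


def review_order(topics):
    """Order topics so that widely seen, least complete pages come first.

    This ranking rule is an assumption for illustration, not a product rule.
    """
    return sorted(topics, key=lambda t: (-t.impressions, t.quality_score))


# Hypothetical sample data.
suggested = [
    SuggestedTopic("Rainier Project", quality_score=80, impressions=120),
    SuggestedTopic("Project Mu", quality_score=40, impressions=120),
    SuggestedTopic("GDI", quality_score=90, impressions=15),
]

for topic in review_order(suggested):
    print(topic.name, topic.impressions, topic.quality_score)
```

A knowledge manager could just as reasonably weight the two metrics differently; the point is only that a topic many people see but that has a thin page is a natural first candidate for curation.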

– And because the topic page is like a wiki, as you showed, and can benefit from user contribution, is there anything that users themselves can do to really add to the accuracy of your information?

– Yes, absolutely. As a user, you play a very important role here. For example, in the Viva Topics Center in SharePoint, as a topic contributor, you can see the topics where you’ve been suggested as a person who is knowledgeable about the topic. You can tap through to the page to contribute directly to the topic content, or you can quickly elect to remove yourself if you are not the right person to be listed. I’ll do that here for service revitalization. Also, you can manually create topics, as you can see here, with the new Topic Page, where if you have the right permissions, you can contribute topics from scratch. Let me show you something else. I’ll jump into SharePoint. You can manually use hashtags as you edit content to insert a topic highlight onto your page. This highlight will then show a topic card for your reader. Here I’m editing a page. And if I paste in a few words, then start typing a hashtag, it will list matching topics. So here I want to add Rainier Project. And after typing R-A-I, it finds it, and I can choose it from the dropdown. And one important thing to mention: as Viva Topics is a knowledge system, it’s important to take the time to make sure that as you create content in SharePoint, you aren’t default sharing with everyone and that you are setting the right permissions for who can view your content as you create it. That way, people can only see what they should have permissions to.

– Great, so the AI is saving a ton of time and it’s using the graph to discover and then build out those baseline topic pages. But ultimately it’s the knowledge managers who have control over the content on those pages. So what steps then does IT need to take in order to get the service up and running?

– Well, if you have Microsoft 365 running, it’s pretty straightforward to set up. First, you need to have access to the service itself. So here you can start with a trial. To find it, just go to billing, search for Viva and click on details. Here you’ll find the link to the free trial. So I’ll click on that, then I’ll select try now. So now Viva Topics is available to my tenant, but off by default. As soon as I configure the settings in admin set up, the topic discovery and experiences will be activated. So I’ll go to setup and I’ll scroll down to files and content, and then I’ll choose connect people to knowledge and click get started to get to our setup wizard. You’ll see the first page configures how Viva Topics finds topics using its built-in AI. Here I can choose all sites or all sites except the sites I want to opt out of. Here you’ll see there’s an option to upload a CSV for cases when you have dozens or hundreds of sites you want to exclude here. CSVs are found throughout setup to help with bulk entry. Moving down the other options, I can specifically choose the sites I want or choose no sites. I’ll stick with the recommendation of all sites.
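For the bulk opt-out case mentioned above, a small script can assemble the list of sites to exclude before uploading it. The Python sketch below is only an illustration: the video does not describe the CSV template, so the one-URL-per-row layout without a header is an assumption, and the `contoso.sharepoint.com` URLs and the `build_exclusion_csv` helper are hypothetical. Check the template offered in the setup wizard before relying on any particular layout.

```python
import csv
import io


def build_exclusion_csv(site_urls):
    """Return CSV text with one site URL per row, duplicates removed.

    Assumed layout: a single column and no header row.
    """
    unique_urls = list(dict.fromkeys(site_urls))  # de-duplicate, keep order
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    for url in unique_urls:
        writer.writerow([url])
    return buffer.getvalue()


# Hypothetical sites to opt out of topic discovery.
sites_to_exclude = [
    "https://contoso.sharepoint.com/sites/hr",
    "https://contoso.sharepoint.com/sites/legal",
    "https://contoso.sharepoint.com/sites/hr",  # duplicate entry
]

print(build_exclusion_csv(sites_to_exclude))
```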

– Great, and this step here, by the way, is foundational to AI then being able to find all that relevant content?

– Right, and something else coming soon: if you’ve invested in managed metadata services, you’ll be able to select term sets and use them to seed topics in your knowledge base.

– Nice, but that said, I know a lot of people are wondering what controls exist over what information then ultimately gets indexed?

– Yeah, we get that question a lot. And here’s where you can work with team leaders and knowledge managers to protect sensitive information. This next control allows you to exclude specific keywords or topic names. These could be private code names that need to remain confidential. Once you exclude them, knowledge indexing will not identify this as a topic. You can also choose if the keyword needs to exactly or partially match the topic name you enter. Here again, working with your knowledge managers, you can figure out what needs to be excluded. For now, I’ll stick with don’t exclude any topics and hit next. Now I need to choose who can see topics. Importantly, if I choose only selected people or security groups, I can target this rollout. I might start with a pilot group then expand it over time and I can do that here. But in my case, I’ll keep the default, everyone in my organization, then hit next.
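The difference between an exact and a partial match on an excluded name can be sketched in a few lines of Python. This is an illustration of the concept only, not the service's matching logic: the `is_excluded` helper, the `(term, match_type)` pairs, the case-insensitive comparison, and the sample code names are all assumptions.

```python
def is_excluded(candidate_name, exclusions):
    """Return True if a candidate topic name matches any exclusion rule.

    `exclusions` is a list of (term, match_type) pairs, where match_type is
    "exact" or "partial". Comparison is case-insensitive (an assumption).
    """
    name = candidate_name.casefold()
    for term, match_type in exclusions:
        term = term.casefold()
        if match_type == "exact" and name == term:
            return True
        if match_type == "partial" and term in name:
            return True
    return False


# Hypothetical confidential code names.
rules = [
    ("Project Blue", "exact"),
    ("Falcon", "partial"),
]

for candidate in ("Project Blue", "Project Blue Phase 2", "Falcon Launch Plan", "GDI"):
    print(candidate, "->", "excluded" if is_excluded(candidate, rules) else "allowed")
```

In this sketch an exact rule blocks only the name as entered, while a partial rule also blocks longer names that contain the term, which is why a broad partial rule deserves a quick review with your knowledge managers.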

– So in addition then to protecting sensitive information, can you scope if people are able to contribute to the topics themselves, and how do you designate knowledge managers in this case?

– Yeah, you definitely can scope who can contribute. The whole idea around Viva Topics is to build this knowledge platform for your organization, but you have the full spectrum of control under permissions for topic management. Here, for example, if my organization favors a crowdsourcing approach, I can allow everyone to create and edit new topics. I can also choose specific groups of people and only allow them to create and edit topics. And to answer your question on assigning knowledge managers to manage topics, this next control is where you would do that. This lights up the Manage Topics experience that I just showed you in the topic center. In my case though, I’ll keep the defaults and hit next. Now the last step is to create the topic center in SharePoint. Here, I’ll give it a name and I will call this Infopedia, but I’ll leave the description and hit next. Finally, I can review these settings or make further edits. It’s important to point out that these initial settings can be modified as new sites are built, new people join the organization, or new code names are generated. So you’re not bound to what was just configured. The last step you’ll do is hit activate, and now the Topics service is live and running in my tenant.

– Okay, so now the AI can kind of start to do its thing, and then find all the topics in your Microsoft 365 environment. But how long does it take then before the service starts to find topics?

– It can take a few days, depending on the scope you’ve set, before you’ll see this initial set of AI-discovered topics. You can, however, manually create new topics immediately. And then once everything is set up, back on the Connect People to Knowledge page in the admin center, you can go in and change your settings at any time here with the manage button, and it also links you directly to your topic center in SharePoint right here with the Viva Topics dashboard link. And of course, Viva Topics will abide by the policies you have in place for information protection within your organization. So you have everything you need to safely connect people to knowledge for your organization. And we showed you the user experience in SharePoint and search today. We’ll be lighting up integrated experiences for Viva Topics across Microsoft 365, like Yammer, Outlook, and Microsoft Teams soon. And by the way, today you can already experience Viva Topics in Office clients by selecting a term and searching.

– Okay, so now we’ve covered the overall experience for users, knowledge managers, and the admin experience, and we’ve shown how you’re in complete control over your information and how it’s discovered. So what’s the best way then to get started?

– Yeah, so Viva Topics is generally available and ready for production use today. You can go ahead and activate the trial and try it out. Also, to help you adopt Viva Topics in your organization, under the Get Started tab in the topic center there are also best practices to help you to identify the right stakeholders in your organization and the workflow across executive sponsors, knowledge managers, and IT. And you can find additional tutorials and guidance at aka.ms/vivatopics.

– Thanks again CJ for joining us today and stay tuned also to the next episode in our series on Microsoft Viva. Of course, keep watching Microsoft Mechanics for the latest updates. Subscribe if you haven’t yet and we’ll see you soon.
