by Contributed | Nov 30, 2020 | Technology

This article is contributed. See the original author and article here.

SCCM (System Center Configuration Manager) management points are hosted on IIS. If the Web Management Service (WMSvc) doesn’t start, these management points may run into issues.

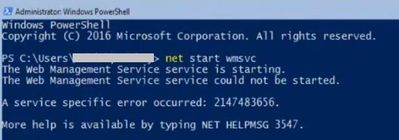

Here is the PowerShell error I saw while trying to start the Web Management Service:

Solution

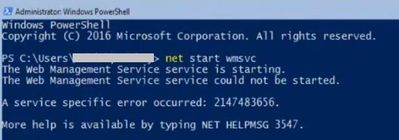

Open IIS Manager and go to Management Service Delegation. Open the Edit Feature Settings window and check the “Allow administrators to bypass rules” option.

Then go to “Management Service” and select the self-signed certificate. (If there is no self-signed certificate, you can create one in “Server Certificates”.) Try to start the Management Service again. It should start without issues.
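To confirm the service state before and after the certificate fix, you can query the Web Management Service from a script. The following is a hedged Python sketch, not part of the original post: it assumes the service name WMSvc and parses the text that the Windows `sc query` command prints. `parse_service_state` and `wmsvc_state` are illustrative helper names.

```python
import platform
import re
import subprocess
from typing import Optional

# Matches the "STATE : 4  RUNNING" line of `sc query` output and captures the state name.
_STATE_RE = re.compile(r"^\s*STATE\s*:\s*\d+\s+(\w+)", re.MULTILINE)


def parse_service_state(sc_output: str) -> Optional[str]:
    """Return the state name (for example 'RUNNING') from `sc query` output, or None."""
    match = _STATE_RE.search(sc_output)
    return match.group(1) if match else None


def wmsvc_state() -> Optional[str]:
    """Query the Web Management Service (WMSvc) on the local Windows machine."""
    if platform.system() != "Windows":
        raise OSError("sc.exe is only available on Windows")
    result = subprocess.run(
        ["sc", "query", "WMSvc"], capture_output=True, text=True, check=False
    )
    return parse_service_state(result.stdout)
```

On the IIS server, `print(wmsvc_state())` should report `RUNNING` once the service has started; `STOPPED` suggests the fix has not taken effect yet, and `None` means the state line was not found (for example, if the service is not installed).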

by Contributed | Nov 30, 2020 | Azure, Microsoft, Technology


The Azure Sphere 20.11 SDK feature release includes the following features:

- Updated Azure Sphere SDK for both Windows and Linux

- Updated Azure Sphere extension for Visual Studio Code

- New and updated samples

The 20.11 release does not contain an updated extension for Visual Studio or an updated OS.

To install the 20.11 SDK and Visual Studio Code extension, see the installation Quickstart for Windows or Linux.

New features in the 20.11 SDK

The 20.11 SDK introduces the first Beta release of the azsphere command line interface (CLI) v2. The v2 CLI is designed to match the Azure CLI more closely in functionality and usage. On Windows, you can run it in PowerShell or in a standard Windows command prompt; the Azure Sphere Developer Command Prompt is not required.

The Azure Sphere CLI v2 Beta supports all the commands that the original azsphere CLI supports, along with additional features:

- Tab completion for commands.

- Additional and configurable output formats, including JSON.

- Clear separation between stdout for command output and stderr for messages.

- Additional support for long item lists without truncation.

- Simplified object identification so that you can use either a name or an ID (a GUID) to identify tenants, products, and device groups.

For a complete list of additional features, see Azure Sphere CLI v2 Beta.
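Because CLI v2 writes command output to stdout and messages to stderr, a script can parse results without first filtering out warnings. The following is a minimal Python sketch under stated assumptions: it assumes that `azsphere` is on the PATH and that the v2 Beta accepts an Azure CLI-style `--output json` argument. `run_json_command` is a hypothetical helper, so check the azsphere reference documentation for the exact commands and output shape.

```python
import json
import subprocess
from typing import Any, Callable, List

# The runner is injectable so the helper can be exercised without the CLI installed.
Runner = Callable[..., subprocess.CompletedProcess]


def run_json_command(args: List[str], runner: Runner = subprocess.run) -> Any:
    """Run a CLI command that prints JSON on stdout and messages on stderr."""
    result = runner(args, capture_output=True, text=True, check=False)
    if result.returncode != 0:
        # Error details go to stderr, so surface them in the exception.
        raise RuntimeError(f"{' '.join(args)} failed: {result.stderr.strip()}")
    # Only stdout is parsed; warnings or progress messages on stderr cannot corrupt the JSON.
    return json.loads(result.stdout)
```

A call such as `run_json_command(["azsphere", "tenant", "list", "--output", "json"])` would then return parsed Python objects (the command line itself is an assumption about the v2 Beta). Under v1, where messages and output share one stream, the same parsing approach would be less reliable.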

The CLI v2 Beta is installed alongside the existing CLI on both Windows and Linux, so you have access to either interface. We encourage you to use CLI v2 and to report any problems with the azsphere feedback command in the CLI v2 Beta.

IMPORTANT: The azsphere reference documentation has been updated to note the differences between the two versions and to include examples of both. However, examples elsewhere in the documentation still reflect the original azsphere CLI v1. We will update those examples when CLI v2 is promoted out of the Beta phase.

We do not yet have a target date for promotion of the CLI v2 Beta or the deprecation of the v1 CLI. However, we expect to support both versions for at least two feature releases.

New and updated samples for 20.11

The 20.11 release includes the following new and updated sample hardware designs and applications:

In addition to these changes, we have begun making Azure Sphere samples available for download through the Microsoft Samples Browser. The selection is currently limited but will expand over time. To find Azure Sphere samples, search for Azure Sphere on the Browse code samples page.

Updated extension for Visual Studio Code

The 20.11 Azure Sphere extension for Visual Studio Code supports the new Tenant Explorer, which displays information about devices and tenants. For details, see “View device and tenant information in the Azure Sphere Explorer.”

If you encounter problems

For self-help technical inquiries, please visit Microsoft Q&A or Stack Overflow. If you require technical support and have a support plan, please submit a support ticket in Microsoft Azure Support or work with your Microsoft Technical Account Manager. If you would like to purchase a support plan, please explore the Azure support plans.

by Contributed | Nov 30, 2020 | Azure, Microsoft, Technology


One of the most important questions customers ask when deploying Azure DDoS Protection Standard for the first time is how to manage the deployment at scale. A DDoS Protection Plan represents an investment in protecting the availability of resources, and this investment must be applied intentionally across an Azure environment.

Creating a DDoS Protection Plan and associating a few virtual networks using the Azure portal takes a single administrator just minutes, making it one of the easiest resources to deploy in Azure. In larger environments, however, the task becomes more difficult, especially when it comes to managing the deployment as network assets multiply.

Azure DDoS Protection Standard is deployed by creating a DDoS Protection Plan and associating VNets with that plan. The VNets can be in any subscription in the same tenant as the plan. While the deployment is done at the VNet level, both the protection and the billing are based on the public IP address resources associated with the VNets. For instance, if an Application Gateway is deployed in a VNet, its public IP becomes a protected resource, even though the virtual network itself directly contains only private addresses.

Cost is also worth considering: a DDoS Protection Plan starts at $3,000 USD per month for up to 100 protected public IPs, plus $30 per public IP beyond 100. Once you have committed to this investment, it is very important to ensure that it is applied across all required assets.
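The pricing rule above reduces to a simple formula: a fixed monthly base that covers the first 100 public IPs, plus a per-IP charge for every address beyond that. The Python sketch below encodes the 2020 prices quoted in this post as default arguments; actual prices can vary by region and change over time, so treat the defaults as assumptions and check current Azure pricing.

```python
def monthly_ddos_cost(
    protected_public_ips: int,
    base_price: float = 3000.0,  # USD per month, covers the first `included_ips`
    included_ips: int = 100,
    price_per_extra_ip: float = 30.0,  # USD per month for each IP beyond `included_ips`
) -> float:
    """Estimate the monthly DDoS Protection Plan cost for a number of protected public IPs."""
    if protected_public_ips < 0:
        raise ValueError("protected_public_ips must be non-negative")
    extra_ips = max(0, protected_public_ips - included_ips)
    return base_price + extra_ips * price_per_extra_ip
```

For example, `monthly_ddos_cost(150)` returns `4500.0`: the $3,000 base plus 50 extra IPs at $30 each.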

Azure Policy to Audit and Deploy

We just posted an Azure Policy sample to the Azure network security GitHub repository that audits whether a DDoS Protection Plan is associated with VNets and can optionally create a remediation task that adds the association to protect each VNet.

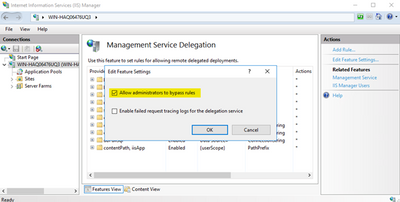

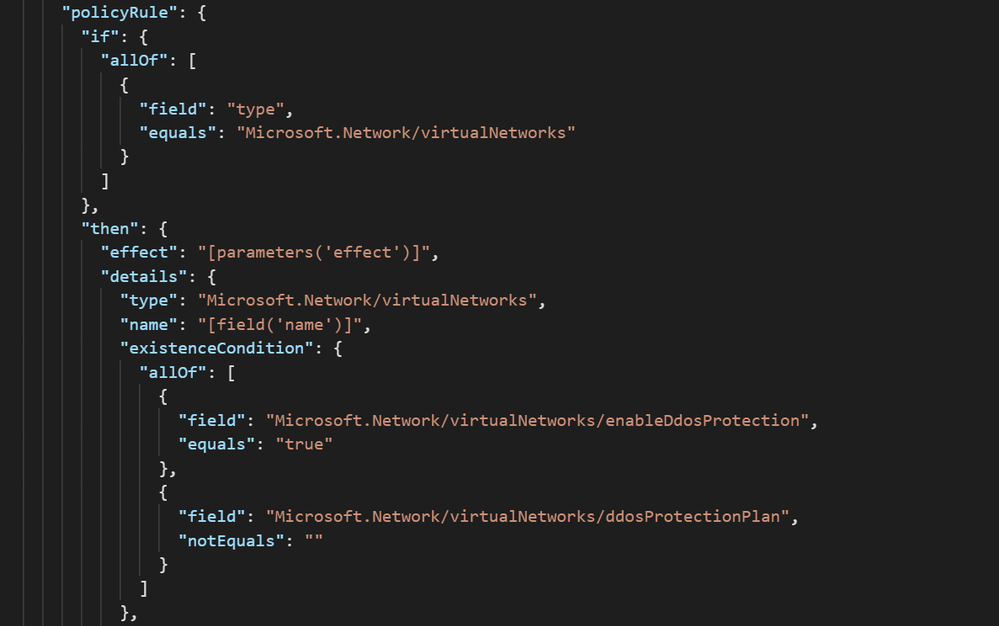

The logic of the policy can be seen in the screenshot below. All virtual networks in the assignment scope are evaluated against the criteria of whether DDoS Protection is enabled and has a plan attached:
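The evaluation described above can be approximated in Python against a VNet's ARM JSON representation. This is a sketch, not the sample's actual rule: it assumes the standard `Microsoft.Network/virtualNetworks` properties `enableDdosProtection` and `ddosProtectionPlan.id`, and `is_ddos_compliant` is a hypothetical name.

```python
from typing import Any, Dict


def is_ddos_compliant(vnet: Dict[str, Any]) -> bool:
    """Return True if the VNet has DDoS Protection enabled and a plan attached.

    Approximates the two checks described for the policy: enableDdosProtection
    is true and ddosProtectionPlan.id is present.
    """
    props = vnet.get("properties") or {}
    plan = props.get("ddosProtectionPlan") or {}
    return props.get("enableDdosProtection") is True and bool(plan.get("id"))
```

Both conditions must hold: a VNet with protection enabled but no plan reference, or a plan reference with protection disabled, is reported as non-compliant.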

Further down in the definition, there is a template that creates the association of the DDoS Protection Plan to the VNets in scope. Let’s look at what it takes to use this sample in a real environment.
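Conceptually, the remediation template redeploys each non-compliant VNet with protection switched on and the plan referenced. The Python sketch below shows only that property change on a VNet's ARM JSON. It is not the template from the sample, `attach_ddos_plan` is a hypothetical helper, and applying the result to Azure (for example through an ARM deployment) is left out.

```python
import copy
from typing import Any, Dict


def attach_ddos_plan(vnet: Dict[str, Any], plan_id: str) -> Dict[str, Any]:
    """Return a copy of the VNet definition associated with the given DDoS Protection Plan.

    The input is left untouched, so the original and desired states can be compared.
    """
    updated = copy.deepcopy(vnet)
    props = updated.setdefault("properties", {})
    props["enableDdosProtection"] = True
    props["ddosProtectionPlan"] = {"id": plan_id}
    return updated
```

Deep-copying keeps the rest of the definition (address space, subnets, and so on) unchanged in the result, so only the two DDoS-related properties differ from the input.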

Creating a Definition

To create an Azure Policy Definition:

- Navigate to Azure Policy > Definitions and select ‘+ Policy Definition.’

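- For the Definition Location field, select a management group or subscription. A definition saved to a subscription can be assigned only within that subscription, so to assign the policy to other subscriptions through a Management Group, save the definition to that Management Group.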

- Define an appropriate Name, Description, and Category for the Policy.

- In the Policy Rule box, replace the example text with the contents of VNet-EnableDDoS.json.

- Save.

Assigning the Definition

Once the Policy Definition has been created, it must be assigned to a scope. You can either apply the policy broadly, using a Management Group or Subscription as the scope, or choose which resources receive DDoS Protection Standard by scoping the assignment to a Resource Group.

To assign the definition:

- From the Policy Definition, click Assign.

- On the Basics tab, choose a scope and exclude resources if necessary.

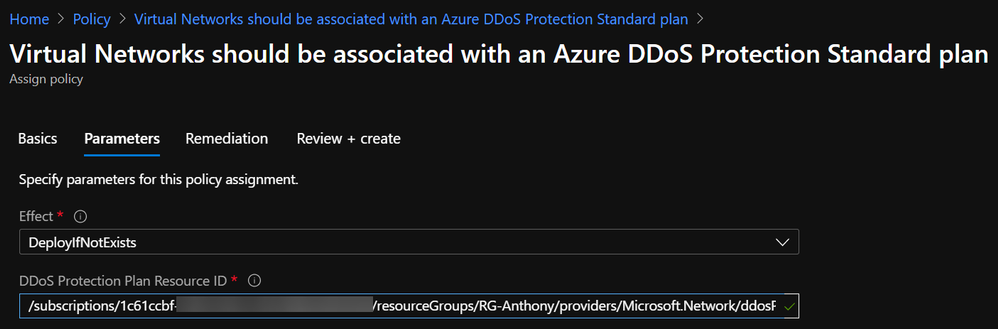

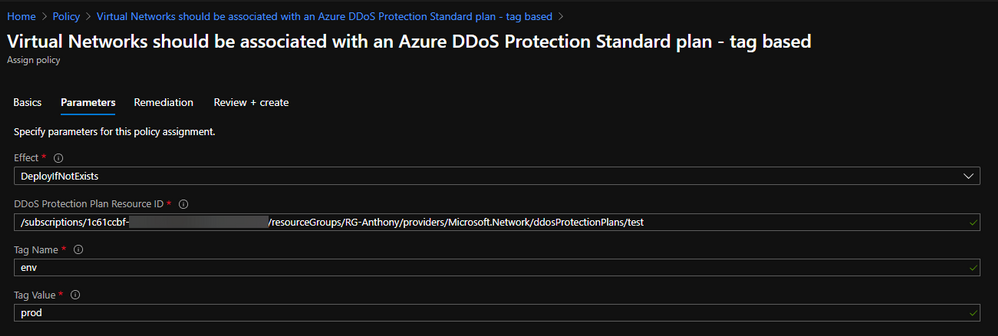

- On the Parameters tab, choose the Effect (DeployIfNotExists if you want to remediate) and paste in the Resource ID of the DDoS Protection Plan in the tenant:

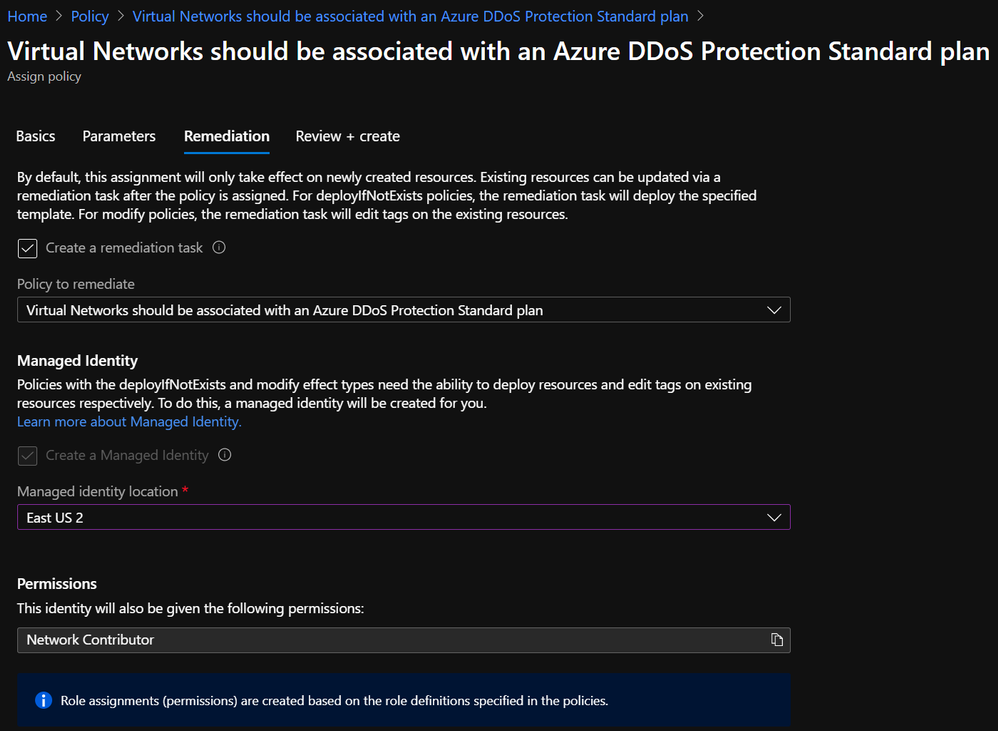

- On the Remediation tab, check the box to create a remediation task and choose a location for the managed identity to be created. Network Contributor is an appropriate role:

- Create.
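The plan's Resource ID, which the Parameters tab asks for, follows the standard Azure resource ID layout. A small Python helper can build the ID or sanity-check a pasted value before you create the assignment. The function names below are hypothetical, and the check covers only the ID's shape, not whether the plan exists.

```python
from typing import Dict

_PROVIDER = "Microsoft.Network"
_RESOURCE_TYPE = "ddosProtectionPlans"


def ddos_plan_resource_id(subscription_id: str, resource_group: str, plan_name: str) -> str:
    """Build the Azure resource ID of a DDoS Protection Plan."""
    return (
        f"/subscriptions/{subscription_id}/resourceGroups/{resource_group}"
        f"/providers/{_PROVIDER}/{_RESOURCE_TYPE}/{plan_name}"
    )


def parse_ddos_plan_resource_id(resource_id: str) -> Dict[str, str]:
    """Split a DDoS Protection Plan resource ID into its parts, or raise ValueError."""
    parts = resource_id.strip().strip("/").split("/")
    # Expected: subscriptions/<sub>/resourceGroups/<rg>/providers/Microsoft.Network/ddosProtectionPlans/<name>
    # Fixed segments are compared case-insensitively.
    expected = {0: "subscriptions", 2: "resourcegroups", 4: "providers",
                5: _PROVIDER.lower(), 6: _RESOURCE_TYPE.lower()}
    if (len(parts) != 8 or not all(parts)
            or any(parts[i].lower() != seg for i, seg in expected.items())):
        raise ValueError(f"not a DDoS Protection Plan resource ID: {resource_id!r}")
    return {"subscription_id": parts[1], "resource_group": parts[3], "plan_name": parts[7]}
```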

Modifying the Policy Definition

The process outlined above can be used to apply DDoS Protection to collections of resources as defined by the boundaries of management groups, subscriptions, and resource groups. However, these boundaries do not always represent an exhaustive list of where DDoS Protection should or should not be applied. Sure, some customers want to attach a DDoS Protection Plan to every VNet, but most will want to be more selective.

Even if resource groups are granular enough to determine whether DDoS Protection should be applied, each Policy Assignment is limited to a single resource group, so creating an assignment for every resource group is prohibitively tedious.

One solution to the problem of policy scoping is to modify the definition rather than the assignment. Let’s use the example of an environment where DDoS Protection is required for all production resources. Production environments could exist in many different subscriptions and resource groups, and this could change as new environments are stood up.

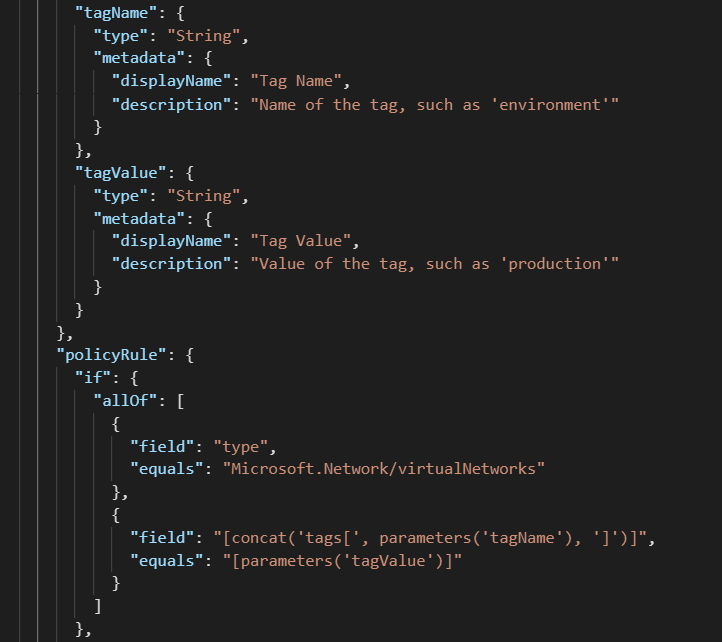

The solution here is to use tags to identify production resources. To use tags to scope Azure Policy Assignments, you must modify the definition: add a short snippet to the policy rule, along with corresponding parameters (or copy them from VNet-EnableDDoS-Tags.json).

After modifying a definition to look for tag values, the corresponding assignment will look slightly different:

In this configuration, a single Policy Definition can be assigned to a wide scope, such as a Management Group, and every tagged resource within will be in scope.
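The tag snippet effectively adds one more condition: a VNet is evaluated only if it carries the expected tag. The Python sketch below approximates that combined logic. It is not the contents of VNet-EnableDDoS-Tags.json, and the `tag_name`/`tag_value` parameters (for example `Environment` = `Production`) are assumptions. Because Azure Policy string comparisons such as `equals` are case-insensitive, the sketch compares tag names and values without regard to case.

```python
from typing import Any, Dict


def has_tag(resource: Dict[str, Any], tag_name: str, tag_value: str) -> bool:
    """Case-insensitive check that the resource carries tag_name = tag_value."""
    tags = resource.get("tags") or {}
    for name, value in tags.items():
        if name.lower() == tag_name.lower() and str(value).lower() == tag_value.lower():
            return True
    return False


def needs_ddos_remediation(vnet: Dict[str, Any], tag_name: str, tag_value: str) -> bool:
    """True when a tagged (in-scope) VNet lacks DDoS Protection or a plan."""
    if not has_tag(vnet, tag_name, tag_value):
        return False  # untagged VNets fall outside the policy's effective scope
    props = vnet.get("properties") or {}
    plan = props.get("ddosProtectionPlan") or {}
    return not (props.get("enableDdosProtection") is True and bool(plan.get("id")))
```

Because the tag check comes first, the assignment scope can stay as wide as a Management Group while only tagged VNets are ever flagged for remediation.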

Verifying Compliance

When a Policy Assignment is created with a remediation action, the effect of the policy should guarantee compliance with requirements. To gain visibility into the auditing and remediation done by the policy, go to Azure Policy > Compliance and select the assignment to monitor:

A successful remediation task denotes that the VNet is now protected by Azure DDoS Protection Standard.

End-to-End Management with Azure Policy

Moving beyond plan association to VNets, there are some other requirements of DDoS Protection that Azure Policy can help with.

On the Azure network security GitHub repo, you can find a policy to restrict creation of more than one DDoS Protection Plan per tenant, which helps to ensure that those with access cannot inadvertently drive up costs.

Another sample is available to keep diagnostic logs enabled across all Public IP Addresses, which keeps valuable data flowing to the teams that care about such data.

The key takeaway from this post is that Azure Policy is a great mechanism for auditing and enforcing compliance with DDoS Protection requirements, and that it can control most other aspects of Azure security and compliance as well.

by Contributed | Nov 30, 2020 | Dynamics 365, Microsoft 365, Technology


Effective December 1, 2020, for all versions 10.0 and later of the Dynamics 365 Financial Dimension Framework, we are preventing deletion from the following dimension tables:

- DimensionAttribute

- DimensionAttributeValue

- DimensionAttributeSet

- DimensionAttributeSetItem

- DimensionAttributeValueSet

- DimensionAttributeValueSetItem

- DimensionAttributeValueCombination

- DimensionAttributeValueGroupCombination

- DimensionAttributeValueGroup

- DimensionAttributeLevelValue

This change is applied directly in the database and follows the same business logic that is used within the application.

These changes protect the integrity of the financial accounting data. Any customizations or scripts must be updated immediately to comply with these changes. Note that updating data to blank values is not a valid workaround, because doing so corrupts data. In the future, we will also block updates to these tables.
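To find scripts that need updating, a rough first pass is to scan your SQL files for DELETE or TRUNCATE statements that target the protected tables. The Python sketch below is a hypothetical helper, not a Microsoft tool. It relies on a regular expression, so it catches only the simple statement forms described in its comments (it misses, for example, `DELETE alias FROM ... JOIN ...`), and X++ customizations need a separate review.

```python
import re
from typing import List

# Tables listed in the announcement as protected from deletion.
PROTECTED_TABLES = {
    "DimensionAttribute",
    "DimensionAttributeValue",
    "DimensionAttributeSet",
    "DimensionAttributeSetItem",
    "DimensionAttributeValueSet",
    "DimensionAttributeValueSetItem",
    "DimensionAttributeValueCombination",
    "DimensionAttributeValueGroupCombination",
    "DimensionAttributeValueGroup",
    "DimensionAttributeLevelValue",
}
_PROTECTED_LOWER = {name.lower() for name in PROTECTED_TABLES}

# Matches "DELETE [FROM] [schema.]table" and "TRUNCATE TABLE [schema.]table",
# with optional square brackets around the schema and table names.
_DELETE_RE = re.compile(
    r"\b(?:DELETE\s+(?:FROM\s+)?|TRUNCATE\s+TABLE\s+)"
    r"(?:\[?\w+\]?\.)?"
    r"\[?(\w+)\]?",
    re.IGNORECASE,
)


def find_protected_deletes(sql: str) -> List[str]:
    """Return the protected table names targeted by DELETE/TRUNCATE statements in sql."""
    return [
        match.group(1)
        for match in _DELETE_RE.finditer(sql)
        if match.group(1).lower() in _PROTECTED_LOWER
    ]
```

Run it over each .sql file in a customization repository and review every hit; an empty result does not prove that a script is safe.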

Why the changes were necessary

These tables were originally designed to be immutable data structures, which means that they are completely controlled by Microsoft or the official X++ APIs designed for extenders. This design allowed for high throughput and durable references that could stand the test of time when performing historical regulatory reporting.

Unfortunately, many extenders have worked around these guidelines to delete records from the tables directly, leading to data corruption and downstream impact. Examples of such issues include:

- Duplicate account rows in financial reports that are frequently used in year-end account reconciliation processes.

- Transactional processing failures that prevent the ability to post documents like Sales order invoices or Purchase order invoices, among others.

- Historical reporting errors and/or changes resulting in the inability to meet auditing and/or regulatory reporting requirements.

- Year-end processing that may be blocked due to out-of-balance errors.

Fixing these issues is extremely error-prone and time-consuming for both customers and Microsoft. Potential fixes also put the accounting data at risk and greatly limit the ability to focus on go-forward improvements.

Product support

Microsoft does not provide support for customizations or direct SQL queries to the above-mentioned tables. This means that front-line support and engineering teams will not be able to help with issues caused by these customizations. If you encounter data corruption due to customizations or direct SQL queries, contact our services team or delivery partners for assistance. It is very important that you update all customizations or direct SQL queries that delete records from these tables. In the future, Microsoft will provide a tool for self-service correction of data in targeted scenarios.

Next steps

For detailed information about financial dimensions and the risks of changing data directly, see Part 6: Advanced topics in the “Ledger account combinations” topic. To address one of the known requests of hiding or inactivating values that are no longer relevant, we will prioritize the ability to support “soft-deletions” and the corresponding removal of unused references. If you have additional requests, please share them in the Dynamics 365 Application Ideas portal.

The post Prepare for dimension table changes in Financial Dimension Framework appeared first on Microsoft Dynamics 365 Blog.


by Contributed | Nov 30, 2020 | Technology


December 9th | 09:00 AM – 10:45 AM (GMT) London, UK

In this free webinar, we bring together researchers and Research Technology Professionals to discuss how the cloud can accelerate your time to insights and breakthrough discoveries. Join Andrew Fitzgibbon and Pashmina Cameron from Microsoft Research as they discuss cloud-powered research facilitated by massive training and data acquisition, and how to leverage Azure’s current capabilities to build the next generation of Azure.

Hear from Tania Allard about how cloud-powered research can support FAIR principles and from Laura Redfern about real HPC in the cloud.

Brian Gibson will be launching a new Research Technology Professionals community created by Microsoft with a host of benefits.

Agenda

09:00 – 09:30: Cloud-powered training for Edge devices with Andrew Fitzgibbon, Partner Researcher, Microsoft Research

In this session we will explore the delivery of state-of-the-art computer vision technology on power-efficient machines, facilitated by massive training and data acquisition.

09:30 – 10:00: Building tomorrow’s Azure with today’s Azure with Pashmina Cameron, Principal RSE Lead, Microsoft Research

This talk will showcase how we leverage the current capabilities of the Azure platform to build the next-gen Azure platform, across the life cycle of a few different Azure research projects.

10:00 – 10:20: Cloud-powered research with Tania Allard, Senior Cloud Advocate, Microsoft Cloud and AI

As research has become more and more reliant on software and powerful compute, the need to ensure that it is reproducible and FAIR (findable, accessible, interoperable, and reusable) has grown. In this session we will explore the importance of reproducible research and how cloud computing offers mechanisms for sharing code, data, and environments that are critical for research reproducibility.

10:20 – 10:40: Cloud-powered HPC with Laura Redfern, Global Blackbelt HPC Specialist

HPC traditionally meant large, on-premises supercomputers requiring specialized networking, power, and cooling. With cloud-powered HPC, the landscape is starting to change. This session will provide an overview of the services Azure provides to make the transition to cloud-powered HPC as smooth as possible.

10:40 – 10:45: Research Technology Professional Community with Brian Gibson, EMEA Director, Higher Education & Research

What Research Software Engineers (RSEs), Data Scientists, Data Stewards, and System Administrators can expect and gain by joining a community designed around them and their professional needs.
