by Contributed | Nov 17, 2023 | Technology

This article is contributed. See the original author and article here.

Digital Marketing Content (DMC) OnDemand works as a personal digital marketing assistant, delivering fresh, relevant, and customized content that you can share on social media, email, your website, or your blog. It runs 3-to-12-week digital campaigns that include to-customer content and to-partner resources. It also includes an interactive dashboard that lets partners track both campaign performance and leads generated in real time and schedule campaigns in advance.

TIPS AND TRICKS

DMC campaigns were created to assist you with your marketing strategies in an automated way. However, we understand that you want to make sure the focus remains on your business as customers and prospects discover your posts. There are several ways you can customize campaigns to put the focus on your business and offerings:

- Customize the pre-written copy | Although we provide you with copy for your social posts, emails, and blog posts, pivoting this copy to highlight your unique value can help ensure customers and prospects understand more about your business and how you can help solve their current pain points.

- Upload your own content throughout the campaigns | If you have access to the Partner GTM Toolbox co-branded assets, you can create your own content quickly and easily through customizable templates. Choose your colors, photography, and copy to help customers and prospects understand more about your business. Alternatively, you can learn more about how to create your own content by reading the following blog posts: one-pagers and case studies. Once complete, click on “Add new content” within any campaign under “Content to share”.

- Engage with your audience | Are people replying to your LinkedIn, Facebook, and X (formerly Twitter) posts? Take some time to respond to build a rapport.

- Access customizable content | Many campaigns in PMC contain content that was designed for you to customize. Microsoft copy is included, but designated sections are left blank for your copy and placeholders are added to ensure you are following co-branding guidelines. You can find examples here.

- Upload your logos | Co-branded content is being added on a regular basis, so make sure you’re taking advantage of this recently added functionality to extend your reach.

NEW CAMPAIGNS

NOTE: To access localized versions, click the product area link, then select the language from the drop-down menu.

- Product Area: Microsoft Security

- Data Security

- Now available in Dutch, English, French, German, Brazilian Portuguese, Spanish

- Defend against cybersecurity threats

- Now available in Dutch, English, French, German, Brazilian Portuguese, Spanish

- Modernize Security Operations

- Product Area: Microsoft 365

- Product Area: Microsoft Dynamics 365 & Power Platform

- Product Area: Microsoft Azure

by Contributed | Nov 16, 2023 | Technology

While there are multiple methods for obtaining explicit outbound connectivity to the internet from your virtual machines on Azure, there is also one method for implicit outbound connectivity – default outbound access. When virtual machines (VMs) are created in a virtual network without any explicit outbound connectivity, they are assigned a default outbound public IP address. These IP addresses may seem convenient, but they have a number of issues and therefore are only used as a “last resort”:

- These implicit IPs are subject to change which can result in issues later on if any dependency is taken on them.

- Their dynamic nature also makes them challenging to use for logs or filtering with network security groups (NSGs).

- Because these IPs are not associated with your subscription, they are very difficult to troubleshoot.

- This type of open egress does not adhere to Microsoft’s “secure-by-default” model which ensures customers have a strong security policy without having to take additional steps.

Because of these factors, we recently announced the retirement of this type of implicit connectivity, which will be removed for all VMs in subnets created after September 2025.

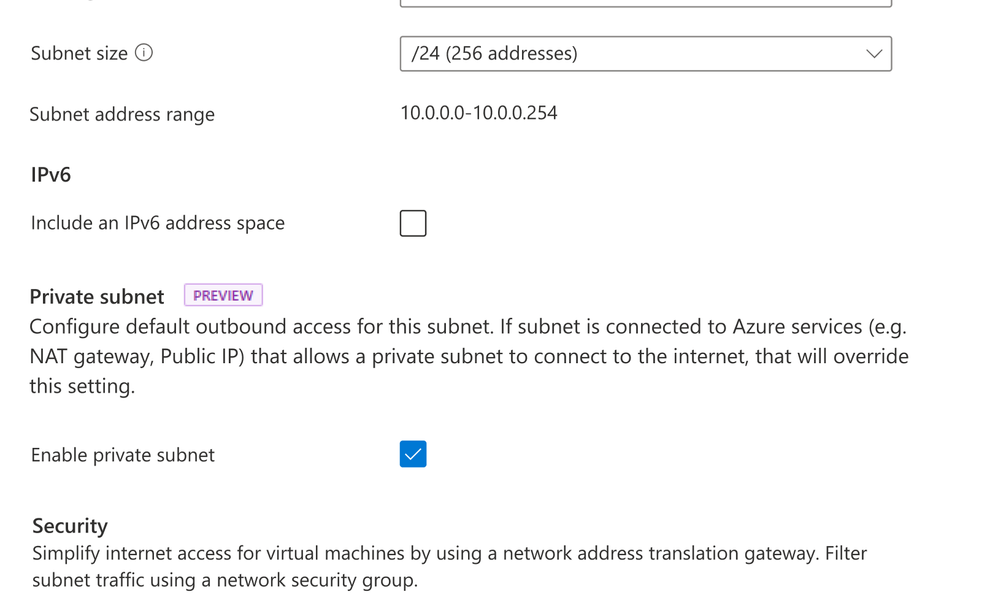

Private subnet

At Ignite, a new feature—private subnet—was released in preview. This feature will let you prevent this type of insecure implicit connectivity for all subnets with the “default outbound access” parameter set to false. Any virtual machines created on this subnet will be prevented from connecting to the Internet without an explicit outbound method specified. To enable this feature, simply ensure the option is selected when a new subnet is created as shown below.

View of Azure portal with Private subnet selection

Note that currently:

- A subnet must be created as private; this parameter cannot be changed following creation.

- Certain services will not function on a virtual machine in a private subnet without an explicit method of egress (examples are Windows Activation and Windows Updates).

- Both CLI and ARM templates can also be used; PowerShell is in development.
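As a rough illustration of the ARM template route, the sketch below builds a subnet resource with default outbound access turned off and prints it as JSON. It assumes the subnet property is named `defaultOutboundAccess`; the API version, resource names, and address prefix are placeholders chosen for the example, not values from this article, so check them against the current template reference before use.

```python
import json


def private_subnet_resource(vnet_name: str, subnet_name: str, address_prefix: str) -> dict:
    """Build an ARM-style subnet resource with default outbound access disabled."""
    return {
        "type": "Microsoft.Network/virtualNetworks/subnets",
        # Assumption: any API version that supports the property will do;
        # this value is a placeholder.
        "apiVersion": "2023-09-01",
        "name": f"{vnet_name}/{subnet_name}",
        "properties": {
            "addressPrefix": address_prefix,
            # Assumed property name for the "default outbound access" parameter.
            # It has to be set at creation time, since it cannot be changed later.
            "defaultOutboundAccess": False,
        },
    }


# Hypothetical names and address range, for illustration only.
resource = private_subnet_resource("example-vnet", "private-subnet", "10.0.1.0/24")
print(json.dumps(resource, indent=2))
```

Because the setting cannot be changed after creation, keeping it in a template makes the choice explicit and reviewable for every new subnet.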

Azure’s recommended explicit outbound connectivity methods

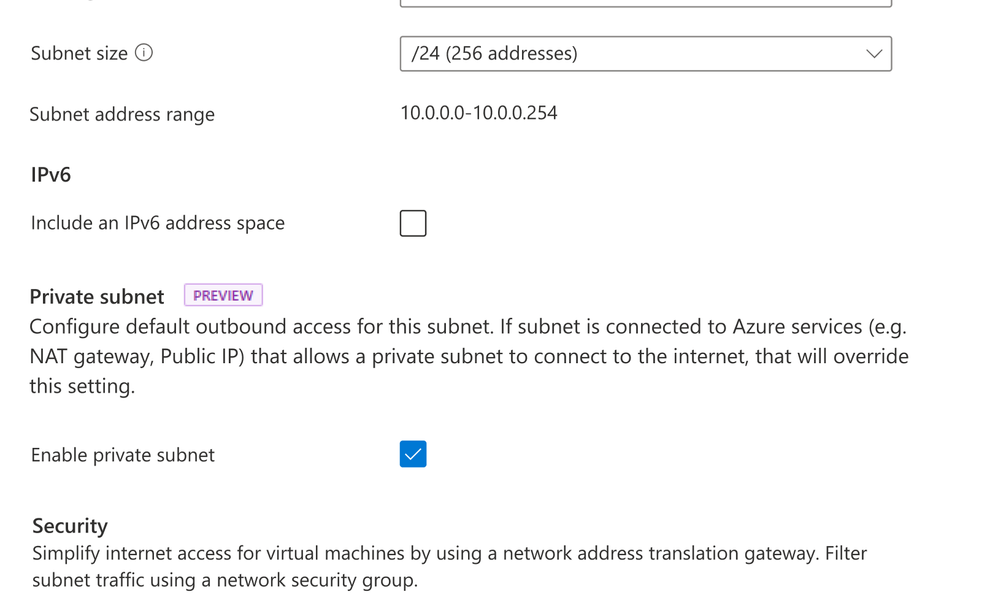

The good news is that there are much better, more scalable, and secure methods for having your VMs access the Internet. The three recommended options—in order of preference—are a NAT gateway, using outbound rules with a public load balancer, or placing a public IP directly on the VM network interface card (NIC).

Diagram of multiple explicit outbound methods

Azure NAT Gateway

NAT Gateway is the best option for connecting outbound to the internet as it is specifically designed to provide highly secure and scalable outbound connectivity. NAT Gateway enables all instances within private subnets of Azure virtual networks to remain fully private and source network address translate (SNAT) to a static public IP address. No connections sourced directly from the internet are permitted through a NAT gateway. NAT Gateway also provides on-demand SNAT port allocation to all virtual machines in its associated subnets. Since ports are not allocated in fixed amounts to each VM, you don’t need to know the exact traffic patterns of each VM. To learn more, take a look at our documentation on SNATing with NAT Gateway or our blog that explores a specific outbound connectivity failure scenario where NAT Gateway came to the rescue.

Azure Load Balancer with outbound rules

Another method for explicit outbound connectivity is using public Azure load balancers with outbound rules. To provide outbound connectivity with Azure Load Balancer, you assign one or more dedicated public IP addresses as the frontend IP of the outbound rule. Private instances in the backend pool of the load balancer then use the frontend IP of the outbound rule to connect outbound in a secure manner, similar to NAT Gateway. However, unlike NAT Gateway, SNAT ports are not allocated dynamically with outbound rules. Rather, using a load balancer requires manual allocation of SNAT ports in fixed amounts to each instance of your backend pool prior to deployment. This manual SNAT port allocation gives you full declarative control over outbound connectivity, since you decide the exact number of ports each VM is allowed. However, it also creates more management overhead, because you must ensure that you have assigned the correct number of SNAT ports needed by your backend instances for connecting outbound. While you can scale your load balancer by adding more frontend IPs to your outbound rule, this scaling requires you to then re-allocate the assigned SNAT ports per backend instance to ensure that you are utilizing the full inventory of SNAT ports available.
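The arithmetic behind manual SNAT port allocation can be sketched as follows. This is a minimal illustration, not a sizing tool: it assumes each frontend public IP contributes 64,000 SNAT ports and that per-instance allocations must be a multiple of 8. Neither figure is stated in this article, and the function name is hypothetical.

```python
PORTS_PER_FRONTEND_IP = 64_000  # assumption: SNAT ports provided by one frontend IP
ALLOCATION_STEP = 8             # assumption: allocations must be a multiple of 8


def ports_per_instance(frontend_ips: int, backend_instances: int) -> int:
    """Largest even split of the SNAT port inventory across backend instances."""
    if frontend_ips < 1 or backend_instances < 1:
        raise ValueError("need at least one frontend IP and one backend instance")
    raw = frontend_ips * PORTS_PER_FRONTEND_IP // backend_instances
    # Round down to the nearest allowed allocation size.
    return raw - raw % ALLOCATION_STEP


# Adding a second frontend IP doubles the inventory, but each instance only
# benefits once its fixed allocation is raised to match.
print(ports_per_instance(frontend_ips=1, backend_instances=10))
print(ports_per_instance(frontend_ips=2, backend_instances=10))
```

Under these assumptions, the per-instance figure has to be recomputed and re-applied whenever the number of frontend IPs or backend instances changes, which is the overhead that NAT Gateway's on-demand allocation avoids.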

Public IP address assignment to virtual machine NICs

Another option for providing explicit outbound connectivity from an Azure virtual network is the assignment of a public IP address directly to the NIC of a virtual machine. Customers that want to have control over which public IP address their VMs use for connecting to specific destination endpoints may benefit from assigning public IPs directly to the VM NIC. However, customers with more complex and dynamic workloads that need a many-to-one SNAT relationship that scales with their traffic volume should look to NAT Gateway or Load Balancer.

To learn more about each of these explicit outbound connectivity methods, see our public documentation on using source network address translation for outbound connections.

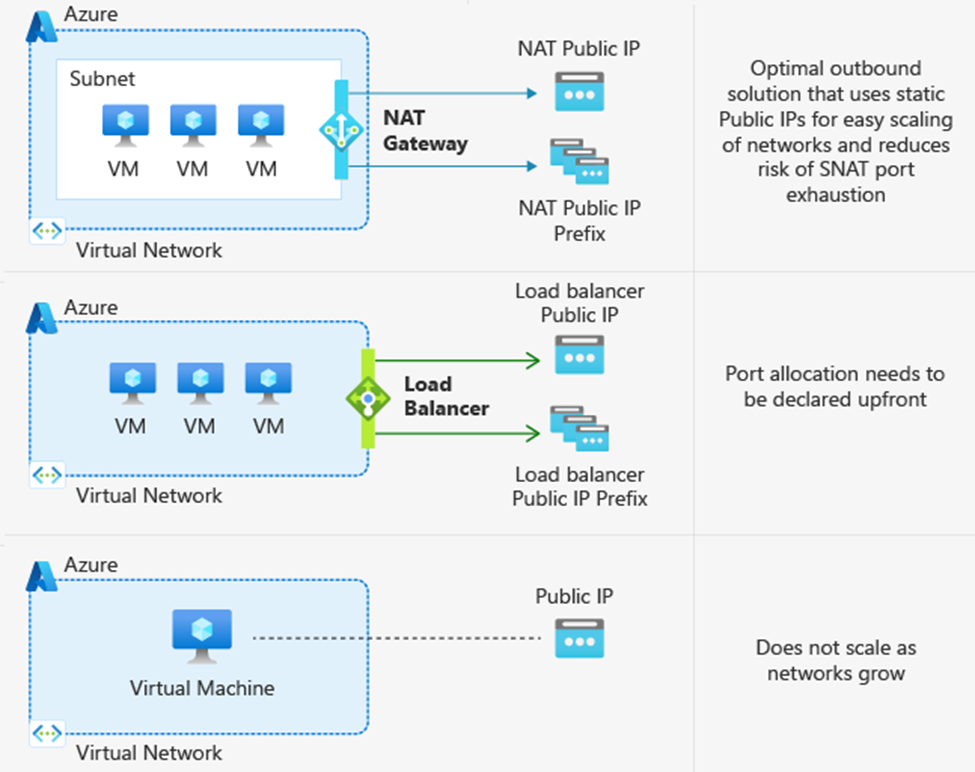

What outbound method am I currently using?

As mentioned earlier, default outbound access is enabled only when no explicit outbound connectivity method exists. The explicit methods themselves follow an order of priority: if your VM has multiple forms of outbound connectivity defined, the higher-priority one is used (e.g. NAT Gateway takes precedence over a public IP address attached to the VM NIC).

To check which form of outbound connectivity your deployments are using, refer to the flowchart below, which lists the methods in order of precedence.

Flowchart showing priority order for different outbound methods
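The precedence logic can also be expressed as a small lookup. The sketch below is illustrative only: the article confirms that NAT Gateway outranks a public IP on the NIC and that default outbound access is the last resort, while the position of load balancer outbound rules below the NIC public IP is an assumption made here. The enum, function name, and `private_subnet` flag are all hypothetical.

```python
from enum import IntEnum
from typing import Iterable, Optional


class OutboundMethod(IntEnum):
    """Lower value means higher precedence (ordering partly assumed, see above)."""
    NAT_GATEWAY = 1
    NIC_PUBLIC_IP = 2
    LOAD_BALANCER_OUTBOUND_RULES = 3  # assumed position in the order
    DEFAULT_OUTBOUND_ACCESS = 4


def effective_method(
    configured: Iterable[OutboundMethod], private_subnet: bool = False
) -> Optional[OutboundMethod]:
    """Return the method a VM would use, or None if it has no outbound path."""
    explicit = [m for m in configured if m != OutboundMethod.DEFAULT_OUTBOUND_ACCESS]
    if explicit:
        return min(explicit)
    # With no explicit method, the implicit default applies unless the subnet is private.
    return None if private_subnet else OutboundMethod.DEFAULT_OUTBOUND_ACCESS


both = [OutboundMethod.NIC_PUBLIC_IP, OutboundMethod.NAT_GATEWAY]
print(effective_method(both).name)
print(effective_method([]).name)
print(effective_method([], private_subnet=True))
```

Modeling the private subnet case as `None` reflects the behavior described earlier: a VM in a private subnet with no explicit method has no path to the internet at all.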

To read more about default outbound access and private subnets, please refer to Default outbound access in Azure – Azure Virtual Network | Microsoft Learn.

To learn more about how to deploy a NAT Gateway, please use Quickstart: Create a NAT Gateway – Azure portal | Microsoft Learn.

by Contributed | Nov 15, 2023 | Technology

By Quentin Miller

We are excited to announce the public preview of Liveness Detection, an addition to the existing Azure AI Face API service. Facial recognition technology has long been used to verify a user’s identity for device and online account login because of its convenience and efficiency. However, these systems face escalating challenges as malicious actors persist in their attempts to manipulate and deceive them through various spoofing techniques. This issue is expected to intensify with the emergence of generative AI models such as DALL-E and ChatGPT. Many online services, e.g., LinkedIn and Microsoft Entra, now support “Verified Identities” that attest to there being a real human behind the identity.

Face Liveness Detection

Liveness detection aims to verify that the system engages with a physically present, living individual during the verification process. This is achieved by differentiating between a real (live) and fake (spoof) representation which may include photographs, videos, masks, or other means to mimic a real person.

Face Liveness Detection has been an integrated part of Windows Hello Face for nearly a decade. “Every day, people around the world get access to their device in a convenient, personal and secure way using Windows Hello face recognition”, said Katharine Holdsworth, Principal Group Program Manager, Windows Security. The new Face API liveness detection is a combination of mobile SDK and Azure AI service. It is conformant to ISO/IEC 30107-3 PAD (Presentation Attack Detection) standards as validated by iBeta level 1 and level 2 conformance testing. It successfully defends against a wide range of spoof types, from paper printouts and 2D/3D masks to spoof presentations on phones and laptops. Liveness detection is an active area of research, and improvements will continue to be rolled out to both the client and the service components as the overall solution is hardened against new and increasingly sophisticated spoofing attacks.

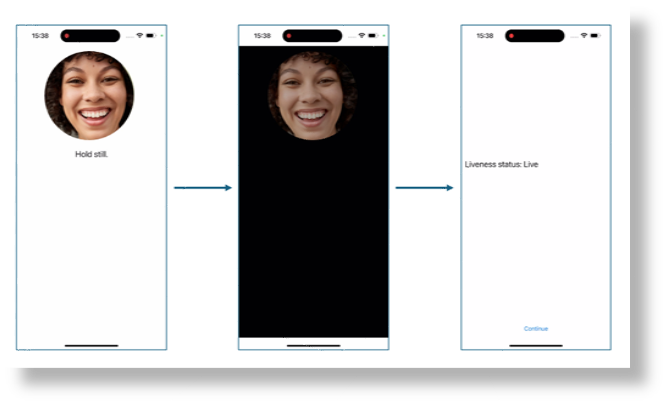

A simple illustration of the user experience from the SDK sample is shown below, where the screen background goes from white to black before the final liveness detection result is shown on the last page.

The following video demonstrates how the product works under different spoof conditions.

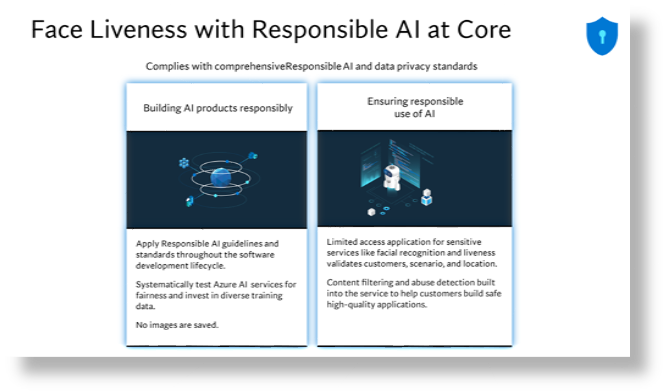

While blocking spoof attacks is the primary focus of the liveness solution, paramount importance is also given to allowing real users to successfully pass the liveness check with low friction. Additionally, the liveness solution complies with the comprehensive responsible AI and data privacy standards to ensure fair usage across demographics around the world through extensive fairness testing.

For more information, please visit: Empowering responsible AI practices | Microsoft AI.

Implementation

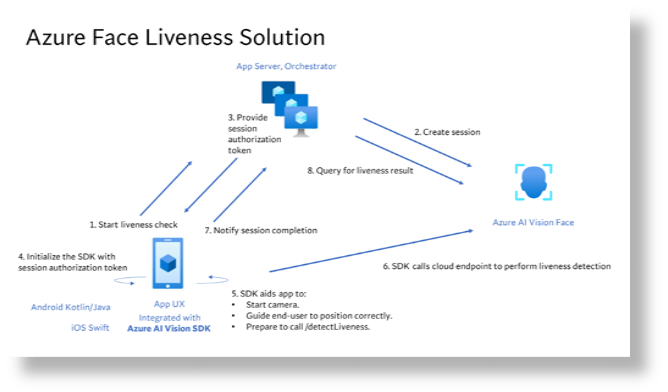

The overall liveness solution integration involves 2 different components: a mobile application and an app server/orchestrator.

Prepare mobile application to perform liveness detection

The newly released Azure AI Vision Face SDK for mobile (iOS and Android) allows for ease-of-integration of the liveness solution on front-end applications. To get started, you need to apply for the Face API Limited Access Features to get access to the SDK. For more information, see the Face Limited Access page.

Once you have access to the SDK, follow the instructions and samples included in the github-azure-ai-vision-sdk to integrate the UI and the code needed into your native mobile application. The SDK supports both Java/Kotlin for Android and Swift for iOS mobile applications.

Once integrated into your application, the SDK starts the camera, guides the end user to adjust their position, composes the liveness payload, and then calls the Face API to process the liveness payload.

Orchestrating the liveness solution

The high-level steps involved in the orchestration are illustrated below:

For more details, refer to the tutorial.

Face Liveness Detection with Face Verification

Integrating face verification with liveness detection enables biometric verification of a specific person of interest while ensuring an additional layer of assurance that the individual is physically present.

For more details, refer to the tutorial.

by Contributed | Nov 15, 2023 | Business, Microsoft 365, Technology, Work Trend Index

At Microsoft Ignite 2023, we are announcing new innovations across Microsoft Copilot—one copilot experience that runs across all our surfaces, understanding your context on the web, on your PC, and at work to bring the right skills to you when you need them across work and life.

The post Introducing Microsoft Copilot Studio and new features in Copilot for Microsoft 365 appeared first on Microsoft 365 Blog.

Brought to you by Dr. Ware, Microsoft Office 365 Silver Partner, Charleston SC.

by Contributed | Nov 15, 2023 | AI, Azure, Business, Microsoft 365, Technology

At Microsoft Ignite 2023, we’re excited to announce Microsoft Copilot Studio, a low-code tool to customize Microsoft Copilot for Microsoft 365 and build standalone copilots.

The post Announcing Microsoft Copilot Studio: Customize Copilot for Microsoft 365 and build your own standalone copilots appeared first on Microsoft 365 Blog.
