by Contributed | Jun 19, 2024 | Technology

This article is contributed. See the original author and article here.

Have you ever gone toe to toe with the threat actor known as Octo Tempest? This increasingly aggressive threat actor group has evolved their targeting, outcomes, and monetization over the past two years to become a dominant force in the world of cybercrime. But what exactly defines this entity, and why should we proceed with caution when encountering them?

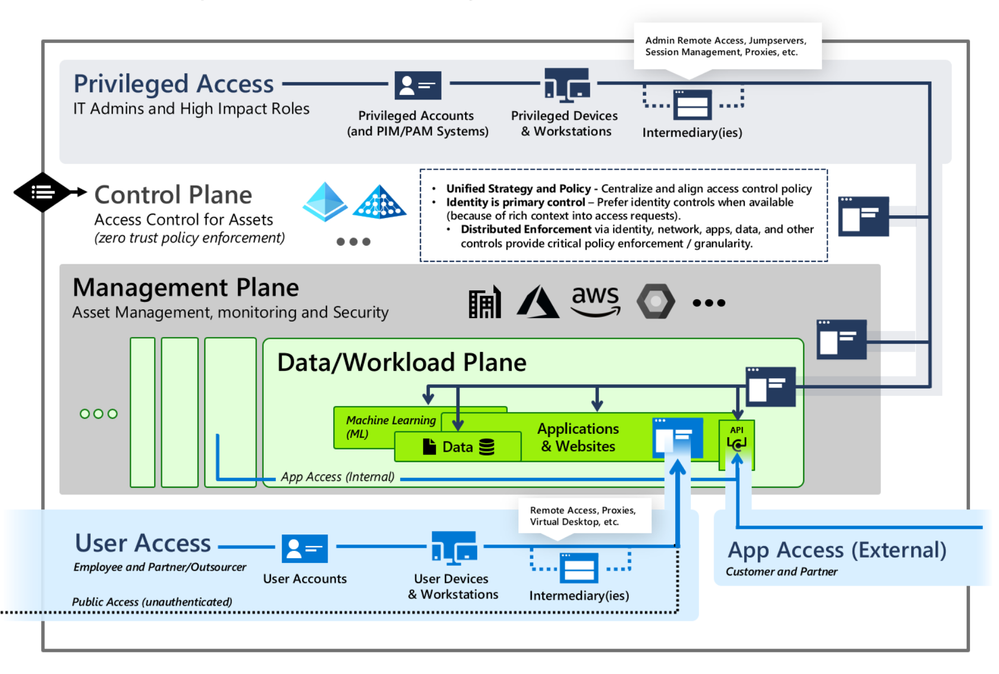

Octo Tempest (formerly DEV-0875) is a group known for employing social engineering, intimidation, and other human-centric tactics to gain initial access into an environment, granting themselves privilege to cloud and on-premises resources before exfiltrating data and unleashing ransomware across an environment. Their ability to penetrate and move around identity systems with relative ease encapsulates the essence of Octo Tempest and is the purpose of this blog post. Their activities have been closely associated with:

- SIM swapping scams: Seize control of a victim’s phone number to circumvent multifactor authentication.

- Identity compromise: Initiate password spray attacks or phishing campaigns to gain initial access and create federated backdoors to ensure persistence.

- Data breaches: Infiltrate the networks of organizations to exfiltrate confidential data.

- Ransomware attacks: Encrypt a victim’s data and demand primary, secondary, or tertiary ransom payments in exchange for not disclosing stolen information or for releasing the decryption key needed for recovery.

Figure 1: The evolution of Octo Tempest’s targeting, actions, outcomes, and monetization.

Some key considerations to keep in mind for Octo Tempest are:

- Language fluency: Octo Tempest purportedly operates predominantly in native English, heightening the risk for unsuspecting targets.

- Dynamic: They have been known to pivot quickly and change their tactics depending on the target organization’s response.

- Broad attack scope: They target diverse businesses ranging from telecommunications to technology enterprises.

- Collaborative ventures: Octo Tempest may forge alliances with other cybercrime cohorts, such as ransomware syndicates, amplifying the impact of their assaults.

As our adversaries adapt their tactics to match the changing defense landscape, it’s essential for us to continually define and refine our response strategies. This requires us to promptly utilize forensic evidence and efficiently establish administrative control over our identity and access management services. In pursuit of this goal, Microsoft Incident Response has developed a response playbook that has proven effective in real-world situations. Below, we present this playbook to empower you to tackle the challenges posed by Octo Tempest, ensuring the smooth restoration of critical business services such as Microsoft Entra ID and Active Directory Domain Services.

Cloud eviction

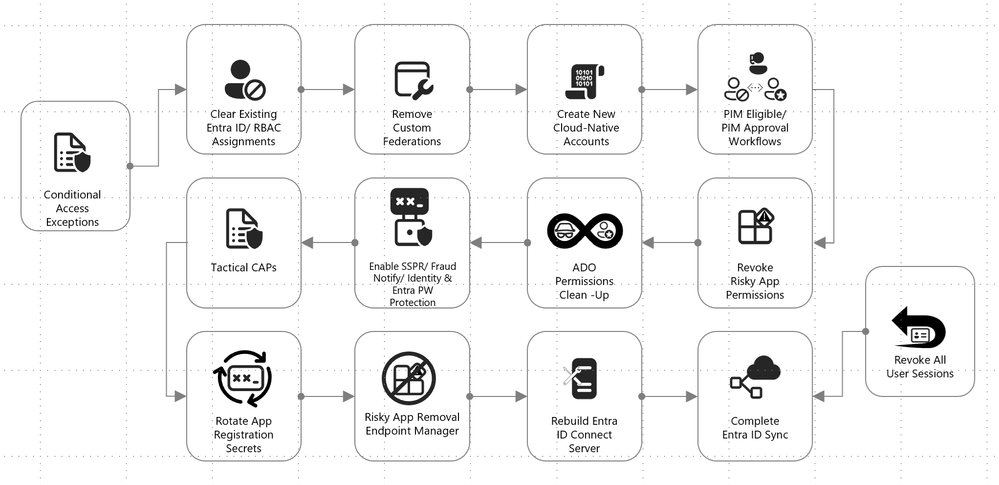

We begin with the cloud eviction process. If any actor takes control of the identity plane in Microsoft Entra ID, a set of steps should be followed to hit reset and take back administrative control of the environment. Here are some tactical measures employed by the Microsoft Incident Response team to ensure the security of the cloud identity plane:

Figure 2: Cloud response playbook.

Break glass accounts

Emergency scenarios require emergency access. For this purpose, one or two administrative accounts should be established. These accounts should be exempted from Conditional Access policies to ensure access in critical situations and monitored to verify their non-use, and their passwords should be stored securely offline whenever feasible.

More information on emergency access accounts can be found here: Manage emergency access admin accounts – Microsoft Entra ID | Microsoft Learn.

Federation

Octo Tempest leverages cloud-born federation features to take control of a victim’s environment, allowing for the impersonation of any user inside the environment, even if multifactor authentication (MFA) is enabled. While this is a damaging technique, it is relatively simple to mitigate by logging in via the Microsoft Graph PowerShell module and setting the domain back from Federated to Managed. Doing so breaks the relationship and prevents the threat actor from minting further tokens.

Connect to your Azure/Office 365 tenant by running the following PowerShell cmdlet and entering your Global Admin Credentials:

Connect-MgGraph -Scopes "Domain.ReadWrite.All"

Change the domain’s authentication type from Federated to Managed by running this cmdlet:

Update-MgDomain -DomainId "test.contoso.com" -BodyParameter @{AuthenticationType="Managed"}
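Before converting anything, it can help to list which domains are currently federated. The following is a minimal Python sketch, assuming the domain records have already been exported (for example from the Microsoft Graph domains collection) as dictionaries with `id` and `authenticationType` keys. The `federated_domains` helper and the sample records are illustrative and not part of the playbook.

```python
from typing import Iterable


def federated_domains(domains: Iterable[dict]) -> list[str]:
    """Return the IDs of domains whose authentication type is Federated."""
    return sorted(
        d["id"]
        for d in domains
        if d.get("authenticationType", "").lower() == "federated"
    )


# Hypothetical sample data shaped like exported domain objects.
sample = [
    {"id": "contoso.com", "authenticationType": "Managed"},
    {"id": "test.contoso.com", "authenticationType": "Federated"},
    {"id": "rogue-idp.example", "authenticationType": "Federated"},
]

for domain_id in federated_domains(sample):
    print(f"Review and convert to Managed: {domain_id}")
```

Any federated domain that the organization did not configure itself is a candidate for the conversion described above.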

Service principals

Service principals have their own identities, credentials, roles, and permissions, and can be used to access resources or perform actions on behalf of the applications or services they represent. These have been used by Octo Tempest for persistence in compromised environments. Microsoft Incident Response recommends reviewing all service principals and removing or reducing permissions as needed.

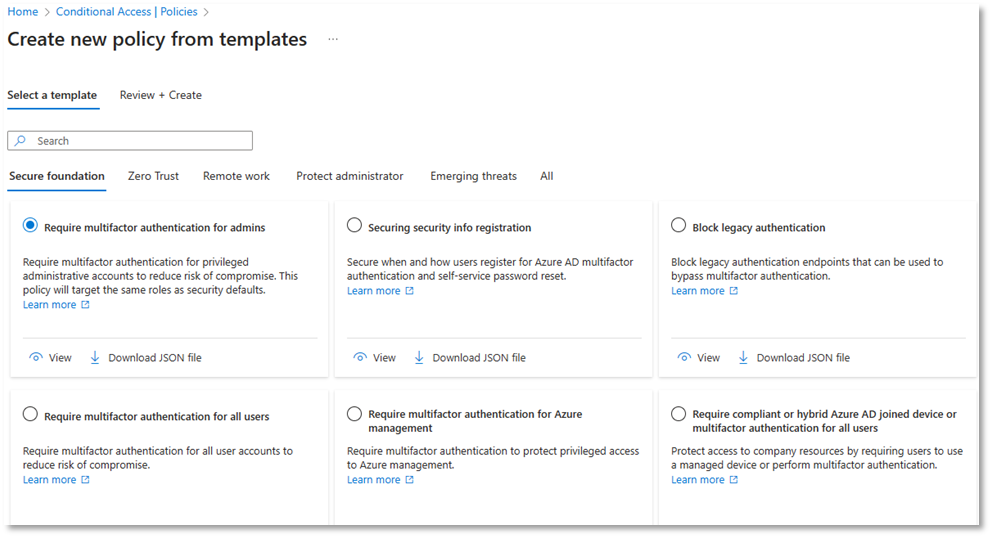

Conditional Access policies

These policies govern how an application or identity can access Microsoft Entra ID or your organization’s resources. Configuring them appropriately ensures that only authorized users access company data and services. Microsoft provides template policies that are simple to implement. Microsoft Incident Response recommends using the following set of policies to secure any environment.

Note: Any administrative account used to make a policy will be automatically excluded from it. These accounts should be removed from exclusions and replaced with a break glass account.

Figure 3: Conditional Access policy templates.

Conditional Access policy: Require multifactor authentication for all users

This policy is used to enhance the security of an organization’s data and applications by ensuring that only authorized users can access them. Octo Tempest is often seen performing SIM swapping and social engineering attacks, and MFA is now more of a speed bump than a roadblock to many threat actors. This step is essential.

Conditional Access policy: Require phishing-resistant multifactor authentication for administrators

This policy is used to safeguard access to portals and admin accounts. It is recommended to use a modern phishing-resistant MFA method that requires an interaction between the authentication method and the sign-in surface, such as a passkey, Windows Hello for Business, or certificate-based authentication.

Note: Exclude the Entra ID Sync account. This account is essential for the synchronization process to function properly.

Conditional Access policy: Block legacy authentication

Implementing a Conditional Access policy to block legacy access prohibits users from signing in to Microsoft Entra ID using vulnerable protocols. Keep in mind that this could block valid connections to your environment. To avoid disruption, follow the steps in this guide.
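As an illustration, the sketch below builds a policy body for blocking legacy authentication, shaped like the Microsoft Graph `conditionalAccessPolicy` resource. It assumes the policy is first created in report-only state so that valid legacy connections can be identified before enforcement. The `block_legacy_auth_policy` helper and the break glass object ID are placeholders, not values from the playbook.

```python
import json


def block_legacy_auth_policy(break_glass_ids: list[str]) -> dict:
    """Build a report-only policy body that blocks legacy authentication clients."""
    return {
        "displayName": "Block legacy authentication",
        # Report-only first, so valid legacy connections surface before enforcement.
        "state": "enabledForReportingButNotEnabled",
        "conditions": {
            "users": {"includeUsers": ["All"], "excludeUsers": list(break_glass_ids)},
            "applications": {"includeApplications": ["All"]},
            "clientAppTypes": ["exchangeActiveSync", "other"],
        },
        "grantControls": {"operator": "OR", "builtInControls": ["block"]},
    }


# Placeholder object ID for a break glass account (hypothetical value).
policy = block_legacy_auth_policy(["00000000-0000-0000-0000-000000000000"])
print(json.dumps(policy, indent=2))
```

Once the sign-in logs show no legitimate traffic matching the policy, the state can be switched to `enabled`.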

Conditional Access policy: Require password change for high-risk users

By implementing a user risk Conditional Access policy, administrators can tailor access permissions or security protocols based on the assessed risk level of each user. Read more about user risk here.

Conditional Access policy: Require multifactor authentication for risky sign-ins

This policy can be used to block or challenge suspicious sign-ins and prevent unauthorized access to resources.

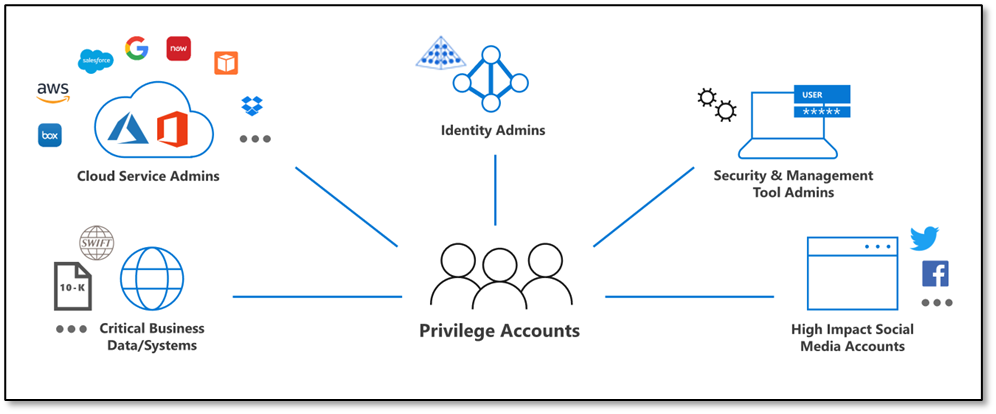

Segregate Cloud admin accounts

Administrative accounts should always be segregated to ensure proper isolation of privileged credentials. This is particularly true for cloud admin accounts to prevent the vertical movement of privileged identities between on-premises Active Directory and Microsoft Entra ID.

In addition to the enforced controls provided by Microsoft Entra ID for privileged accounts, organizations should establish process controls to restrict password resets and manipulation of MFA mechanisms to only authorized individuals.

During a tactical takeback, it’s essential to revoke permissions from old admin accounts, create entirely new accounts, and ensure that the new accounts are secured with modern MFA methods, such as device-bound passkeys managed in the Microsoft Authenticator app.

Figure 4: CISO series: Secure your privileged administrative accounts with a phased roadmap | Microsoft Security Blog.

Review Azure resources

Octo Tempest has a history of manipulating resources such as Network Security Groups (NSGs) and Azure Firewall, and of granting themselves privileged roles within Azure Management Groups and Subscriptions using the ‘Elevate Access’ option in Microsoft Entra ID.

It’s imperative to conduct regular, thorough reviews of these services, carefully evaluating every change, to effectively remove Octo Tempest from a cloud environment.

Of particular importance are the Azure SQL Server local admin accounts and the corresponding firewall rules. These areas warrant special attention to mitigate any potential risks posed by Octo Tempest.

Intune Multi-Administrator Approval (MAA)

Intune access policies can be used to implement two-person control of key changes to prevent a compromised admin account from maliciously using Intune, causing additional damage to the environment while mitigation is in progress.

Access policies are supported by the following resources:

- Apps – Applies to app deployments but doesn’t apply to app protection policies.

- Scripts – Applies to deployment of scripts to devices that run Windows.

Octo Tempest has been known to leverage Intune to deploy ransomware at scale. This risk can be mitigated by enabling the MAA functionality.

Review of MFA registrations

Octo Tempest has a history of registering MFA devices on behalf of standard users and administrators, enabling account persistence. As a precautionary measure, review all MFA registrations during the suspected compromise window and prepare for the potential re-registration of affected users.
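A simple way to scope this review is to filter the registration records down to the suspected compromise window. The Python sketch below assumes the registrations have been exported into dictionaries with a timezone-aware `registered_at` timestamp. The record layout, the sample data, and the `registrations_in_window` helper are hypothetical.

```python
from datetime import datetime, timezone


def registrations_in_window(registrations, start, end):
    """Return registrations whose timestamp falls inside [start, end]."""
    return [r for r in registrations if start <= r["registered_at"] <= end]


# Hypothetical export of MFA method registrations.
registrations = [
    {"user": "alice@contoso.com", "method": "phone",
     "registered_at": datetime(2024, 5, 2, 9, 30, tzinfo=timezone.utc)},
    {"user": "admin@contoso.com", "method": "authenticator app",
     "registered_at": datetime(2024, 6, 3, 23, 15, tzinfo=timezone.utc)},
    {"user": "bob@contoso.com", "method": "phone",
     "registered_at": datetime(2024, 6, 5, 1, 5, tzinfo=timezone.utc)},
]

window_start = datetime(2024, 6, 1, tzinfo=timezone.utc)
window_end = datetime(2024, 6, 10, tzinfo=timezone.utc)

suspect = registrations_in_window(registrations, window_start, window_end)
for r in suspect:
    print(f"{r['user']}: {r['method']} registered {r['registered_at']:%Y-%m-%d %H:%M} UTC")
```

Each flagged registration still needs to be confirmed with the account owner before it is removed.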

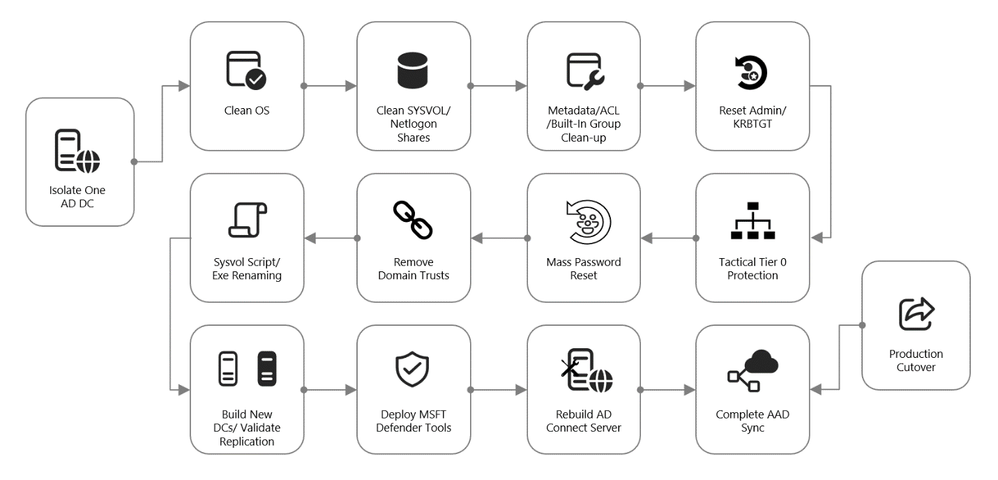

On-premises eviction

Additional containment efforts include the on-premises identity systems. There are tried and tested procedures for rebuilding and recovering on-premises Active Directory, post-ransomware, and these same techniques apply to an Octo Tempest intrusion.

Figure 5: On-premises recovery playbook.

Active Directory Forest Recovery

If a threat actor has taken administrative control of an Active Directory environment, complete compromise of all identities in Active Directory, and of their credentials, should be assumed. In this scenario, on-premises recovery follows this Microsoft Learn article on full forest recovery:

Active Directory Forest Recovery – Procedures | Microsoft Learn

If there are good backups of at least one Domain Controller for each domain in the compromised forest, these should be restored. If this option is not available, there are other methods to isolate Domain Controllers for recovery. This can be accomplished with snapshots or by moving one good Domain Controller from each domain into an isolated network so that Active Directory sanitization can begin in a protective bubble.

Once this has been achieved, domain recovery can begin. The steps are identical for every domain in the forest:

- Metadata cleanup of all other Domain Controllers

- Seizing the Flexible Single Master Operations (FSMO) roles

- Raising the RID Pool and invalidating the RID Pool

- Resetting the Domain Controller computer account password

- Resetting the password of KRBTGT twice

- Resetting the built-in Administrator password twice

- If Read-Only Domain Controllers existed, removing their instance of krbtgt_xxxxx

- Resetting inter-domain trust account (ITA) passwords on each side of the parent/child trust

- Removing external trusts

- Performing an authoritative restore of the SYSVOL content

- Cleaning up DNS records for Domain Controllers removed during metadata cleanup

- Resetting the Directory Services Restore Mode (DSRM) password

- Removing Global Catalog and promoting to Global Catalog

When these actions have been completed, new Domain Controllers can be built in the isolated environment. Once replication is healthy, the original systems restored from backup can be demoted.

Octo Tempest is known for targeting Key Vaults and Secret Servers. Special attention will need to be paid to these secrets to determine if they were accessed and, if so, to sanitize the credentials contained within.

Tiering model

Restricting privilege escalation is critical to containing any attack since it limits the scope and damage. Both the identity systems that control privileged access and the critical systems that identity administrators log on to fall within the scope of protection.

Microsoft’s official documentation guides customers towards implementing the enterprise access model (EAM) that supersedes the “legacy AD tier model”. The EAM serves as an all-encompassing means of addressing where and how privileged access is used. It includes controls for cloud administration, and even network policy controls to protect legacy systems that lack accounts entirely.

However, the EAM has several limitations. First, it can take months, or even years, for an organization’s architects to map out and implement. Secondly, it spans disjointed controls and operating systems. Lastly, not all of it is relevant to the immediate concern of mitigating Pass-the-Hash (PtH) as outlined here.

Our customers with on-premises systems are often looking to implement PtH mitigations yesterday. The AD Tiering model is a good starting point for domain-joined services to satisfy this requirement. The model:

- Is easier to conceptualize

- Has practical implementation guidance

- Can be rolled out with partial automation

The EAM is still a valuable strategy to work towards in an organization’s journey to security; but this is a better goal for after the fires and smoldering embers have been extinguished.

Figure 6: Securing privileged access Enterprise access model – Privileged access | Microsoft Learn.

Segregated privileged accounts

Accounts should be created for each tier of access, and processes should be put in place to ensure that these remain correctly isolated within their tiers.

Control plane isolation

Identify all systems that fall under the control plane. The key rule to follow is that anything that can access or manipulate an asset must be treated at the same level as that asset. At this stage of eviction, the control plane is the key focus area. For example, if SCCM is used to patch Domain Controllers, it must be treated as a control plane asset.

Backup accounts are particularly sensitive targets and must be managed appropriately.

Account disposition

The next phase of on-premises recovery and containment consists of a procedure known as account disposition in which all privileged or sensitive groups are emptied except for the account that is performing the actions. These groups include, but are not limited to:

- Built-In Administrators

- Domain Admins

- Enterprise Admins

- Schema Admins

- Account Operators

- Server Operators

- DNS Admins

- Group Policy Creator Owners

Any identity that gets removed from these groups goes through the following steps:

- Password is reset twice

- Account is disabled

- Account is marked with the “Smart card is required for interactive logon” flag

- Access control lists (ACLs) are reset to the default values and the adminCount attribute is cleared
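The selection logic behind account disposition can be sketched as follows, assuming a snapshot of privileged group membership has already been collected. This Python example only computes the plan and does not touch Active Directory. The `accounts_to_disposition` helper and the membership data are hypothetical.

```python
def accounts_to_disposition(group_members: dict[str, set[str]], operator: str) -> dict[str, list[str]]:
    """Map each account to the privileged groups it must be removed from.

    The operator account performing the cleanup is left in place.
    """
    plan: dict[str, list[str]] = {}
    for group, members in group_members.items():
        for account in members:
            if account != operator:
                plan.setdefault(account, []).append(group)
    return {account: sorted(groups) for account, groups in sorted(plan.items())}


# Steps applied to every removed identity, in the order listed above.
DISPOSITION_STEPS = (
    "reset password twice",
    "disable account",
    "require smart card for interactive logon",
    "reset ACLs to defaults and clear adminCount",
)

# Hypothetical membership snapshot.
members = {
    "Domain Admins": {"ir-operator", "old-admin", "svc-backup"},
    "Schema Admins": {"old-admin"},
}
for account, groups in accounts_to_disposition(members, "ir-operator").items():
    print(account, "->", groups, "|", "; ".join(DISPOSITION_STEPS))
```

Producing the plan first makes it possible to review exactly which identities will be affected before any change is made.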

Once this is done, build new accounts as per the tiering model. Create new Tier 0 identities for only the few staff who require this level of access, each with a complex password and marked with the “Account is sensitive and cannot be delegated” flag.

Access Control List (ACL) review

Microsoft Incident Response has found a plethora of overly-permissive access control entries (ACEs) within critical areas of Active Directory of many environments. These ACEs may be at the root of the domain, on AdminSDHolder, or on Organizational Units that hold critical services. A review of all the ACEs in the access control lists (ACLs) of these sensitive areas within Active Directory is performed, and unnecessary permissions are removed.

Mass password reset

In the event of a domain compromise, a mass password reset will need to be conducted to ensure that Octo Tempest does not have access to valid credentials. The method in which a mass password reset occurs will vary based on the needs of the organization and acceptable administrative overhead. If we simply write a script that gets all user accounts (other than the person executing the code) and resets the password twice to a random password, no one will know their own password and, therefore, will open tickets with the helpdesk. This could lead to a very busy day for those members of the helpdesk (who also don’t know their own password).

Some examples of mass password reset methods that we have seen in the field include, but are not limited to:

- All at once: Get every single user (other than the newly created tier 0 accounts) and reset the password twice to a random password. Have enough helpdesk staff to be able to handle the administrative burden.

- Phased reset by OU, geographic location, department, etc.: This method targets one community of users at a time, which reduces the initial load on the helpdesk.

- Service account password resets first, humans second: Some organizations start with the service account passwords first and then move to the human user accounts in the next phase.
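The phased approach can be sketched in Python as a planning step: group accounts into phases, then generate two fresh random passwords per account. This is an illustration only and performs no directory changes. The user inventory, the `plan_phases` and `random_password` helpers, and the 24-character length are assumptions.

```python
import secrets
import string
from collections import defaultdict

ALPHABET = string.ascii_letters + string.digits + "!@#$%^&*-_"


def random_password(length: int = 24) -> str:
    """Generate a random password from a cryptographically secure source."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def plan_phases(users, phase_key="ou"):
    """Group users into reset phases by the chosen attribute (OU, site, department)."""
    phases = defaultdict(list)
    for user in users:
        phases[user[phase_key]].append(user["name"])
    return dict(phases)


# Hypothetical user inventory.
users = [
    {"name": "svc-sql", "ou": "Service Accounts"},
    {"name": "alice", "ou": "Finance"},
    {"name": "bob", "ou": "Finance"},
]

for phase, names in plan_phases(users).items():
    for name in names:
        # Two resets per account, each to a fresh random value.
        first, second = random_password(), random_password()
        print(f"[{phase}] {name}: reset twice (passwords not shown)")
```

Using the `secrets` module rather than `random` matters here, because the generated values act as real credentials until users set their own.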

Whichever method you choose to use for your mass password resets, ensure that you have an attestation mechanism in place to be able to accurately confirm that the person calling the helpdesk to get their new password (or enable Self-Service Password Reset) can prove they are who they say they are. An example of attestation would be a video conference call with the end user and the helpdesk and showing some sort of identification (for instance a work badge) on the screen.

It is recommended to also deploy and leverage Microsoft Entra ID Password Protection to prevent users from choosing weak or insecure passwords during this event.

Conclusion

The battle against Octo Tempest underscores the importance of a multi-faceted and proactive approach to cybersecurity. By understanding a threat actor’s tactics, techniques, and procedures and by implementing the outlined incident response strategies, organizations can safeguard their identity infrastructure against this adversary and ensure all pervasive traces are eliminated. Incident Response is a continuous process of learning, adapting, and securing environments against ever-evolving threats.

by Contributed | Jun 18, 2024 | Technology

Seattle, June 18, 2024. Today, we are happy to announce new releases and enhancements to Azure AI Translator Service. We are introducing a new endpoint that unifies the asynchronous batch and synchronous document translation operations, and the SDKs have been updated to match. You can now use and deploy document translation features in your organization to translate documents through Azure AI Studio and SharePoint without writing any code. The Azure AI Translator container has also been enhanced to translate both text and documents.

Overview

Document translation offers two operations: asynchronous batch and synchronous. Depending on the scenario, customers may use either operation or a combination of both. Today, we are delighted to announce that both operations have been unified and will share the same endpoint.

Asynchronous batch operation:

- Asynchronous batch translation supports the processing of multiple documents and large files. The batch translation processes source documents from an Azure Blob storage and uploads translated documents back into it.

The endpoint for the asynchronous batch operation is getting updated to:

{your-document-translation-endpoint}/translator/document/batches?api-version=[Date]

The service will continue to support the deprecated endpoint for backward compatibility. We recommend that new customers adopt the latest endpoint, because future functionality will be added to it.

Synchronous operation:

- Synchronous operation supports the processing of a single document. It accepts the source document as part of the request body, processes the document in memory, and returns the translated document as part of the response body.

The endpoint for the synchronous operation is:

{your-document-translation-endpoint}/translator/document:translate?api-version=[Date]

This unification aims to provide customers with consistency and simplicity when using either of the document translation operations.
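To make the shared base path concrete, here is a small Python sketch that builds both URLs from one endpoint. The resource hostname and the `YYYY-MM-DD` API version are placeholders to replace with the values for your own resource, and `document_translation_url` is a hypothetical helper name.

```python
from urllib.parse import urlencode


def document_translation_url(endpoint: str, operation: str, api_version: str) -> str:
    """Build the unified document translation URL for 'batch' or 'sync'."""
    paths = {
        "batch": "/translator/document/batches",
        "sync": "/translator/document:translate",
    }
    if operation not in paths:
        raise ValueError(f"unknown operation: {operation!r}")
    return f"{endpoint.rstrip('/')}{paths[operation]}?{urlencode({'api-version': api_version})}"


# Hypothetical endpoint and API version; substitute the values for your resource.
endpoint = "https://my-translator.cognitiveservices.azure.com/"
api_version = "YYYY-MM-DD"

print(document_translation_url(endpoint, "batch", api_version))
print(document_translation_url(endpoint, "sync", api_version))
```

Both operations differ only in the final path segment, which is the practical effect of the unification.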

Updated SDK

The updated document translation SDK supports both asynchronous batch operation and synchronous operation. Here’s how you can leverage it:

To run a translation operation for a document, you need a Translator endpoint and credentials. You can use the DefaultAzureCredential to try a number of common authentication methods optimized for both running as a service and development. The samples below use a Translator API key credential by creating an AzureKeyCredential object. You can set endpoint and apiKey based on an environment variable, a configuration setting, or any way that works for your application.

Asynchronous batch method:

Creating a DocumentTranslationClient

string endpoint = "";

string apiKey = "";

DocumentTranslationClient client = new DocumentTranslationClient(new Uri(endpoint), new AzureKeyCredential(apiKey));

To start a translation operation for documents in a blob container, call StartTranslationAsync. The result is a long-running operation of type DocumentTranslationOperation, which polls the API for the status of the translation operation. To call StartTranslationAsync, you need to initialize an object of type DocumentTranslationInput, which contains the information needed to translate the documents.

Uri sourceUri = new Uri("");

Uri targetUri = new Uri("");

var input = new DocumentTranslationInput(sourceUri, targetUri, "es");

DocumentTranslationOperation operation = await client.StartTranslationAsync(input);

await operation.WaitForCompletionAsync();

Console.WriteLine($" Status: {operation.Status}");

Console.WriteLine($" Created on: {operation.CreatedOn}");

Console.WriteLine($" Last modified: {operation.LastModified}");

Console.WriteLine($" Total documents: {operation.DocumentsTotal}");

Console.WriteLine($" Succeeded: {operation.DocumentsSucceeded}");

Console.WriteLine($" Failed: {operation.DocumentsFailed}");

Console.WriteLine($" In Progress: {operation.DocumentsInProgress}");

Console.WriteLine($" Not started: {operation.DocumentsNotStarted}");

await foreach (DocumentStatusResult document in operation.Value)

{

Console.WriteLine($"Document with Id: {document.Id}");

Console.WriteLine($" Status:{document.Status}");

if (document.Status == DocumentTranslationStatus.Succeeded)

{

Console.WriteLine($" Translated Document Uri: {document.TranslatedDocumentUri}");

Console.WriteLine($" Translated to language code: {document.TranslatedToLanguageCode}.");

Console.WriteLine($" Document source Uri: {document.SourceDocumentUri}");

}

else

{

Console.WriteLine($" Error Code: {document.Error.Code}");

Console.WriteLine($" Message: {document.Error.Message}");

}

}

Synchronous method:

Creating a SingleDocumentTranslationClient

string endpoint = "";

string apiKey = "";

SingleDocumentTranslationClient client = new SingleDocumentTranslationClient(new Uri(endpoint), new AzureKeyCredential(apiKey));

To start a synchronous translation operation for a single document, call DocumentTranslate. To call DocumentTranslate, you need to initialize an object of type MultipartFormFileData, which contains the document to translate, and specify the target language for the translation.

try

{

string filePath = Path.Combine("TestData", "test-input.txt");

using Stream fileStream = File.OpenRead(filePath);

var sourceDocument = new MultipartFormFileData(Path.GetFileName(filePath), fileStream, "text/plain");

DocumentTranslateContent content = new DocumentTranslateContent(sourceDocument);

var response = client.DocumentTranslate("hi", content);

var requestString = File.ReadAllText(filePath);

var responseString = Encoding.UTF8.GetString(response.Value.ToArray());

Console.WriteLine($"Request string for translation: {requestString}");

Console.WriteLine($"Response string after translation: {responseString}");

}

catch (RequestFailedException exception)

{

Console.WriteLine($"Error Code: {exception.ErrorCode}");

Console.WriteLine($"Message: {exception.Message}");

}

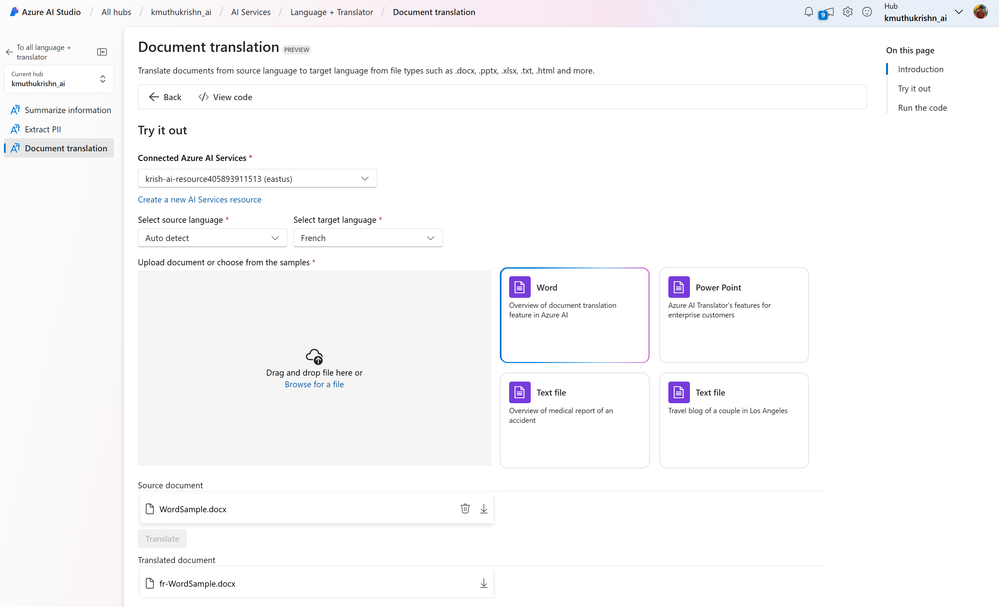

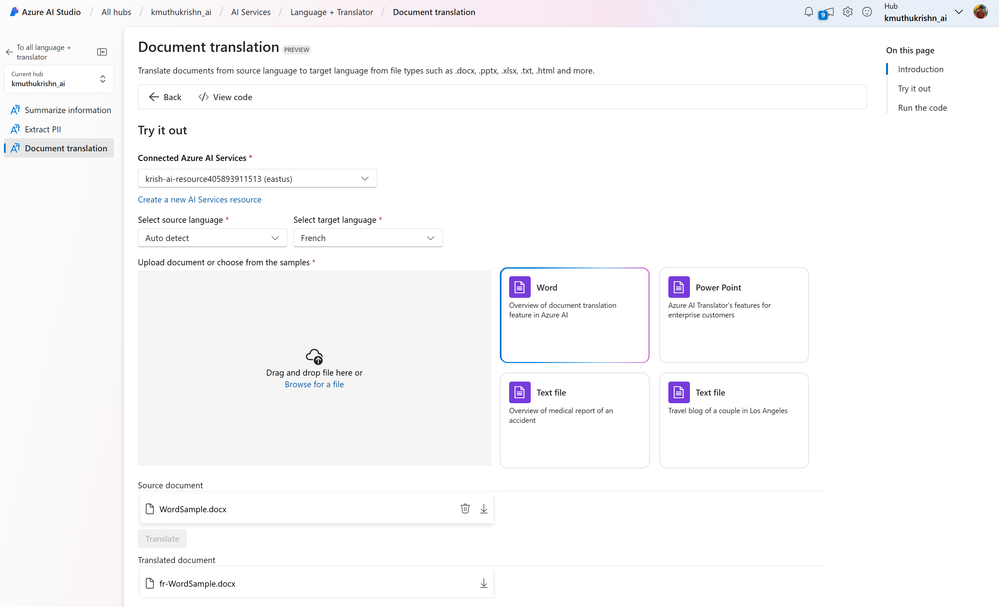

Ready to use solution in Azure AI Studio

Customers can easily build apps for their document translation needs using the SDK. One such example is the document translation tool in the Azure AI Studio, which was announced to be generally available at //build 2024. Here is a glimpse of how you may translate documents in this user interface:

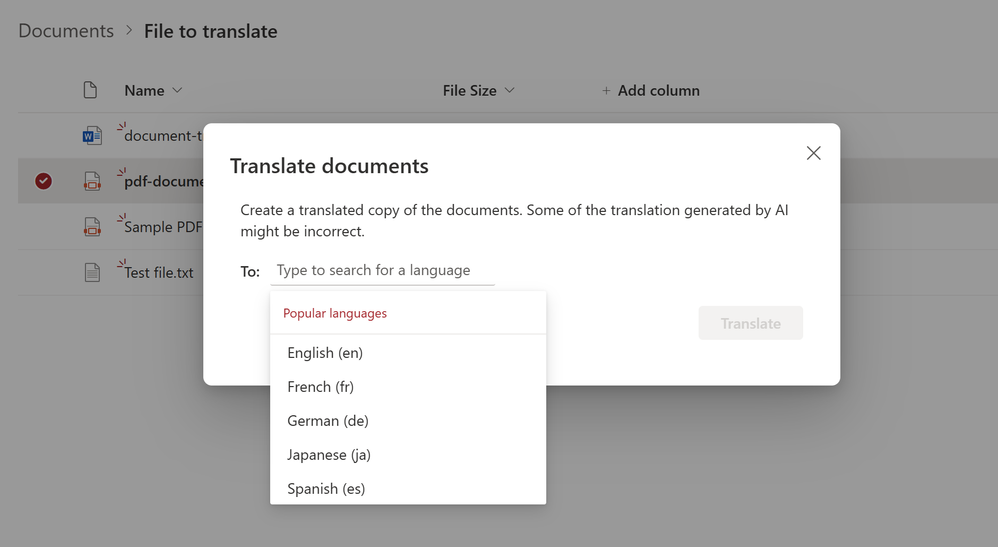

SharePoint document translation

The document translation integration in SharePoint lets you easily translate a selected file or a set of files in a SharePoint document library. This feature lets you translate files of different types either manually or automatically by creating a rule.

Learn more about the SharePoint integration here.

You can also use the translation feature for translating video transcripts and closed captioning files. More information here.

Document translation in container is generally available

In addition to the above updates, earlier this year, we announced the release of document translation and transliteration features for Azure AI Translator containers as preview. Today, both capabilities are generally available. All Translator container customers will get these new features automatically as part of the update.

Translator containers provide users with the capability to host the Azure AI Translator API on their own infrastructure and include all libraries, tools, and dependencies needed to run the service in any private, public, or personal computing environment. They are isolated, lightweight, portable, and are great for implementing specific security or data governance requirements.

With this update, the following operations are supported in Azure AI Translator containers:

- Text translation: Translates text phrases between supported source and target languages in real time.

- Text transliteration: Converts text in a language from one script to another in real time.

- Document translation: Translates a document between supported source and target languages while preserving the original document’s content structure and format.

by Contributed | Jun 17, 2024 | Technology

Azure Storage is excited to announce that customer-managed planned failover for Azure Storage is now available in public preview.

Over the past few years, Azure Storage has offered customer-managed (unplanned) failover as a disaster recovery solution for geo-redundant storage accounts. This has enabled our users to meet their business requirements for disaster recovery testing and compliance. Planned failover provides the same capabilities while adding further benefits for our storage users.

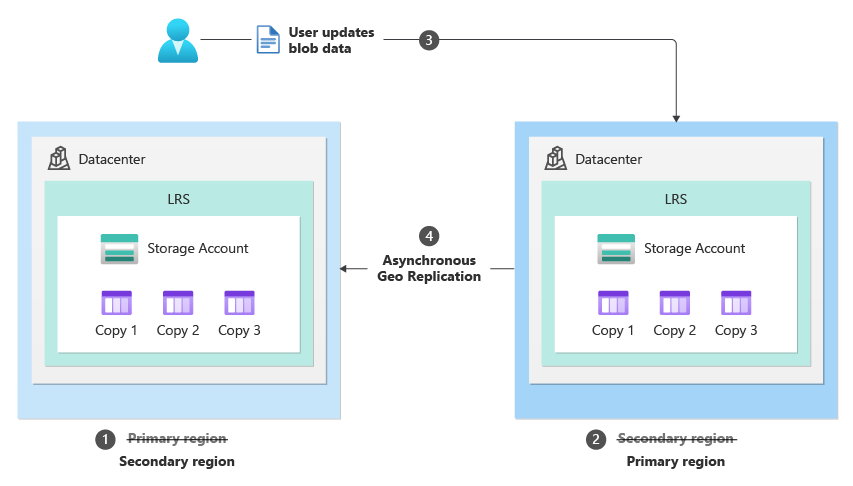

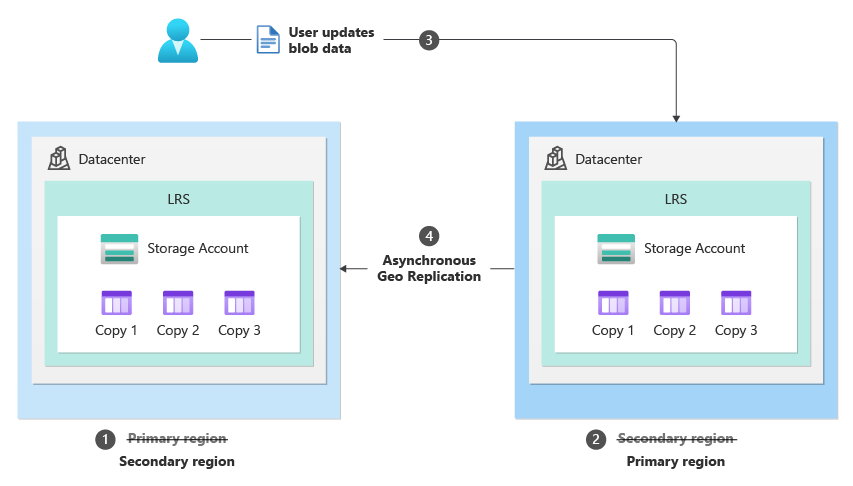

Planned Failover provides the ability to swap your geo primary and secondary regions while the storage service endpoints are still healthy. As a result, a user can now fail over their storage account while keeping geo-redundancy, with no data loss or additional cost. Users no longer need to reconfigure geo-redundant storage (GRS) after a planned failover operation, which saves both time and cost. Once the planned failover operation is completed, all new writes are made to your original secondary region, which is now your primary region.

After the planned failover is complete, the original primary region becomes the new secondary and the original secondary region becomes the new primary.

There are multiple scenarios where Planned Failover can be utilized including:

- Planned disaster recovery testing drills to validate business continuity and disaster recovery.

- Recovering from a partial outage that occurs in the primary region where storage is not impacted. For example, if your storage service endpoints are healthy in both regions, but another Microsoft or third-party service is facing an outage in the primary region, you can fail over your storage services. In this scenario, once you fail over the storage account and all other services, your workloads can continue to work.

- A proactive solution in preparation for large-scale disasters that may impact a region. To prepare for a disaster such as a hurricane, users can leverage Planned Failover to fail over to their secondary region and then fail back once things are resolved.

Planned failover supports Blob, ADLS Gen2, Table, File and Queue data.

Key differences between our customer managed (unplanned) failover and planned failover are highlighted below:

| Unplanned Failover | Planned Failover |
| --- | --- |
| After an unplanned failover is completed, the storage account is converted to locally redundant storage (LRS) and the secondary region becomes the new primary region. | After a planned failover is completed, the storage account remains geo-redundant storage (GRS). The secondary region becomes the new primary region, and the primary region becomes the new secondary region. |
| Once an unplanned failover is initiated, the operation begins immediately. Writes that were pending replication to the secondary region (made after the Last Sync Time) will potentially be lost. Users can utilize their Last Sync Time to determine the recovery point of their storage account. | Once a planned failover is initiated, the first step is replicating pending writes (writes made after the Last Sync Time) to the secondary region. As a result, the Last Sync Time is caught up before the failover takes place, and there should be no data loss. |
| Primary use case: a storage-related outage in the primary region. | Primary use case: testing the failover workflow, or a non-storage-related outage in the primary region. |
| An unplanned failover can be used when the primary storage endpoints are unavailable (there is a storage-related outage in the primary region). | A planned failover works while the primary and secondary storage endpoints are available. |
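The role of the Last Sync Time in the comparison above can be illustrated with a short Python sketch. It assumes you have a list of write timestamps and the account's Last Sync Time. All values and the `writes_at_risk` helper are hypothetical.

```python
from datetime import datetime, timezone


def writes_at_risk(write_times, last_sync_time):
    """Return writes made after the Last Sync Time, i.e. not yet confirmed as replicated."""
    return [t for t in write_times if t > last_sync_time]


# Hypothetical timestamps for illustration.
last_sync = datetime(2024, 6, 17, 12, 0, tzinfo=timezone.utc)
writes = [
    datetime(2024, 6, 17, 11, 55, tzinfo=timezone.utc),
    datetime(2024, 6, 17, 12, 3, tzinfo=timezone.utc),
    datetime(2024, 6, 17, 12, 7, tzinfo=timezone.utc),
]

pending = writes_at_risk(writes, last_sync)
print(f"Unplanned failover: up to {len(pending)} write(s) could be lost")
print("Planned failover: these writes are replicated first, so none should be lost")
```

In an unplanned failover the writes after the Last Sync Time define the potential data loss, whereas a planned failover replicates them before the regions are swapped.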

Regions Planned Failover currently supports

- Southeast Asia

- East Asia

- West Europe

- France Central

- East US 2

- Central US

- UK South

- Australia East

- Switzerland North

- Switzerland West

- Central India

- West India

To learn more about Planned Failover and to track the expansion of the preview into additional regions, see How customer-managed planned failover works.

Getting started

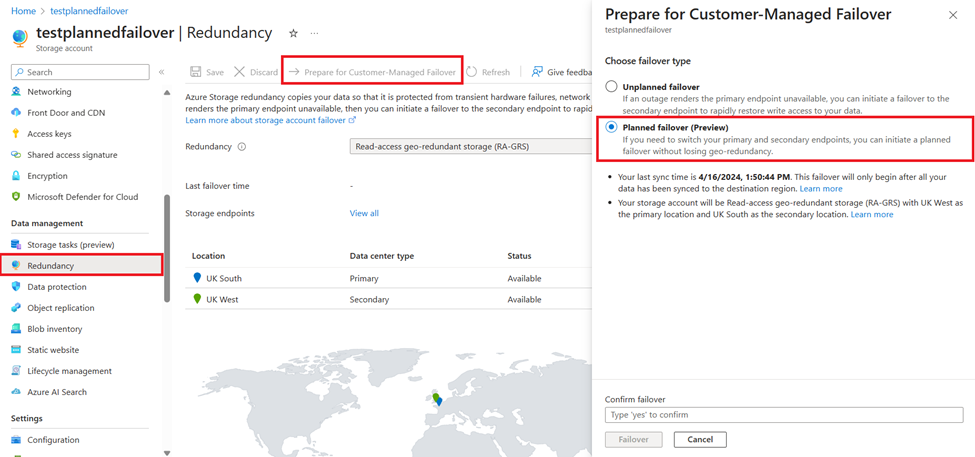

Getting started is simple. To learn more about the step-by-step process to initiate a planned failover, review Initiate account failover.

The Azure Portal user experience for Planned Failover.

Feedback

If you have questions or feedback, do not hesitate to reach out at storagefailover@service.microsoft.com

by Contributed | Jun 16, 2024 | Technology

This week, I’ve been working on a service request case where we needed to export multiple databases using SqlPackage. Below, I would like to share my lessons learned from exporting several databases simultaneously, saving the export files to the F:\sql folder and the logs of the operations to the F:\sql\logs folder.

A few recommendations when performing these exports:

- Enable Accelerated Networking: This enhances data transfer performance.

- Virtual Machine:

# Define the path to the SqlPackage.exe file

$SqlPackagePath = "C:\Program Files\Microsoft SQL Server\XXX\DAC\bin\SqlPackage.exe"

# Define the server, user, and password

$serverName = "servername.database.windows.net"

$username = "username"

$password = "password"

$outputFolder = "f:\sql"

$logFolder = "f:\sql\logs"

$databaseList = @("db1", "db2")

# Create the logs folder if it does not exist

if (-Not (Test-Path -Path $logFolder)) {

New-Item -ItemType Directory -Path $logFolder

}

foreach ($database in $databaseList) {

$outputFile = Join-Path -Path $outputFolder -ChildPath "$database.bacpac"

$logFile = Join-Path -Path $logFolder -ChildPath "$database.log"

$errorLogFile = Join-Path -Path $logFolder -ChildPath "$database-error.log"

# Avoid the name $args, which is a PowerShell automatic variable

$sqlPackageArgs = @(

"/Action:Export",

"/ssn:$serverName",

"/sdn:$database",

"/su:$username",

"/sp:$password",

"/tf:$outputFile"

)

# Start the background job with log redirection

Write-Host "Starting export of database $database to $outputFile in background"

Start-Job -ScriptBlock {

param($exePath, $arguments, $log, $errorLog)

# Send standard output to the log file and errors to the error log

& $exePath @arguments 1>$log 2>$errorLog

} -ArgumentList $SqlPackagePath, $sqlPackageArgs, $logFile, $errorLogFile

}

Write-Host "Background export jobs started for all databases."

# Wait for all background jobs to complete

$jobs = Get-Job

foreach ($job in $jobs) {

Wait-Job -Job $job

$jobDetails = Receive-Job -Job $job

Write-Host "Job ID $($job.Id) completed with state: $($job.State)"

Remove-Job -Job $job

}

Write-Host "Export completed for all databases. Logs are located in the $logFolder folder."
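Because the jobs run in the background, a job can report a completed state even when an individual export has failed. As a possible follow-up step, the sketch below checks, for each database, whether a .bacpac file was produced and whether the error log has any content. The function name `Get-ExportSummary` is hypothetical, and the check assumes that a non-empty error log signals a problem, which may not hold if SqlPackage writes warnings to the error stream.

```powershell
# Hypothetical helper: summarizes each export by inspecting the files on disk.
function Get-ExportSummary {
    param(
        [Parameter(Mandatory)] [string[]] $DatabaseList,
        [Parameter(Mandatory)] [string] $OutputFolder,
        [Parameter(Mandatory)] [string] $LogFolder
    )
    foreach ($database in $DatabaseList) {
        # Same file naming convention as the export script above.
        $bacpac   = Join-Path -Path $OutputFolder -ChildPath "$database.bacpac"
        $errorLog = Join-Path -Path $LogFolder -ChildPath "$database-error.log"

        # Assumption: an error log with content means the export needs a closer look.
        $errorLogHasContent = (Test-Path -Path $errorLog) -and ((Get-Item -Path $errorLog).Length -gt 0)

        [pscustomobject]@{
            Database     = $database
            BacpacExists = (Test-Path -Path $bacpac)
            HasErrors    = $errorLogHasContent
        }
    }
}

# Example usage with the variables defined in the script above:
# Get-ExportSummary -DatabaseList $databaseList -OutputFolder $outputFolder -LogFolder $logFolder | Format-Table
```

Any row with BacpacExists set to False or HasErrors set to True is worth reviewing in the corresponding log files before relying on the export.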

Disclaimer

The script provided above is intended for illustrative purposes only. Before using it in a production environment, thoroughly review and test the script to ensure it meets your needs and adheres to your organization’s security and operational policies. Always safeguard sensitive information such as credentials and server details.
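As one way to avoid hard-coding the password in the script, the sketch below reads it from an environment variable and stops if the variable is missing. The function name `Get-ExportPassword` and the variable name `SQLPACKAGE_PASSWORD` are assumptions made for this example. Note that the password would still be passed to SqlPackage on the command line, so this only keeps it out of the script file itself.

```powershell
# Hypothetical helper: reads the SQL password from an environment variable.
# The variable name SQLPACKAGE_PASSWORD is an assumption for this sketch.
function Get-ExportPassword {
    param([string] $VariableName = 'SQLPACKAGE_PASSWORD')

    $value = [System.Environment]::GetEnvironmentVariable($VariableName)
    if ([string]::IsNullOrEmpty($value)) {
        # Fail early instead of starting exports that cannot authenticate.
        throw "Environment variable '$VariableName' is not set; refusing to continue without a password."
    }
    return $value
}

# Example usage, replacing the hard-coded assignment in the script above:
# $password = Get-ExportPassword
```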

Also, remember that SqlPackage exports the data but does not guarantee transactional consistency. You can find more details here: Using data-tier applications (BACPAC) to migrate a database from Managed Instance to SQL Server – Microsoft Community Hub

by Contributed | Jun 15, 2024 | Technology


A banner with the blog series title, “Grow with Copilot for Microsoft 365”


Welcome to Grow with Copilot for Microsoft 365, where we curate key relevant news, insights, and resources to help small and medium organizations harness the power of Copilot.

In this edition, we take a deep dive into how small and medium-sized businesses are embracing AI in their everyday workflows despite the common hurdles SMB employees often encounter in adopting AI technologies. We also highlight Floww, a FinTech firm that capitalizes on Copilot for Microsoft 365 to boost operational efficiency and communications, discuss how Copilot is tackling the pressing issue of data security and privacy, and lastly, provide resources for the adoption of Copilot and best practices for skill development to help organizations fully leverage Copilot’s capabilities.

A deeper look at the state of AI in small & medium businesses

Last month’s 2024 Work Trend Index Annual Report showed how AI is reshaping work for organizations of all sizes. This month, we dove deeper into the report for findings specific to small and medium businesses.

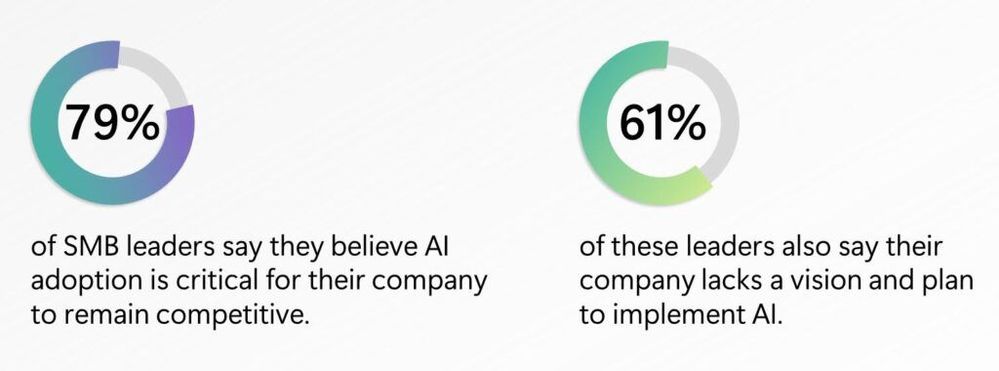

Like large businesses, 79% of SMB leaders believe AI adoption is critical for their company to remain competitive, yet 61% of these leaders say their company lacks a vision and plan for AI.


According to the study, leaders believe the top three benefits are increasing employee productivity, accelerating growth and innovation, and automating and streamlining business. In addition, 78% of SMB workers have used generative AI at work, 10 percentage points more than at large businesses. Yet SMB workers face more challenges in AI adoption: they are more likely (+9 ppts) to feel intimidated about learning how to use generative AI tools and less likely (-5 ppts) to have received any AI training at work.

As we continue to learn in our work with SMB customers, there is a path forward: identify a business problem and apply AI, integrate AI tools across your business, take a top-down and bottom-up approach, and prioritize training. Read more from Brenna Robinson’s blog on Microsoft 365 as well as her interviews in Fortune magazine, Inc. magazine, and Entrepreneur magazine.

SMB Customer spotlight: Floww

Speaking of working with customers, we’d like you to meet Floww, a fast-growing FinTech company with about 100 employees in the United Kingdom that has created a financial infrastructure platform connecting investors and innovators. Floww helps startups and scaleups find funding from angels, VCs, and other private equity investors, and helps investors manage their portfolios and collaborate with each other.

Floww uses Copilot for Microsoft 365 to improve efficiency and communications:

Copilot for Microsoft 365 helps Floww process, analyze, and summarize large amounts of data from various sources, such as financial documents, regulatory compliance, and customer profiles. Copilot also helps Floww create mockups, draft policies, and share information with customers faster and more easily.

Floww trusts Microsoft to ensure data security and compliance:

Floww works with sensitive data that requires high levels of security and compliance. Floww relies on Microsoft’s security tools and features to protect its data and maintain its ISO certification. Floww also values the trust that Microsoft provides for its customers and partners.

“Some teams are saving 10 to 20% of their time with Copilot because it’s assisting with meeting notes, generating to-do lists, summarizing complicated documents, and freeing us up so we can concentrate on the bigger tasks.”—Alex Pilsworth, Chief Technology Officer, Floww


Check out the full customer story and other customer stories here.

How Copilot for Microsoft 365 delivers on data security and privacy

Like Floww, many customers have data privacy and compliance top of mind. It’s no wonder, as research shows 82% of ransomware attacks target small and medium-sized businesses[1].

Our customers, when using Microsoft 365 for Business and Copilot for Microsoft 365, get peace of mind with the highest standards of data protection. Microsoft 365 for Business helps you protect passwords, secure access, keep your business data safe, manage your devices so they stay secure, and defend your business against cyberthreats. You can learn more about our three subscription plans here. Additionally, as a Microsoft 365 for Business user, your Web-grounded AI chat in the browser, Outlook, M365 apps, or Teams comes with enterprise data protection to safeguard your business data and devices with integrated identity, security, compliance, device management, and privacy protection.

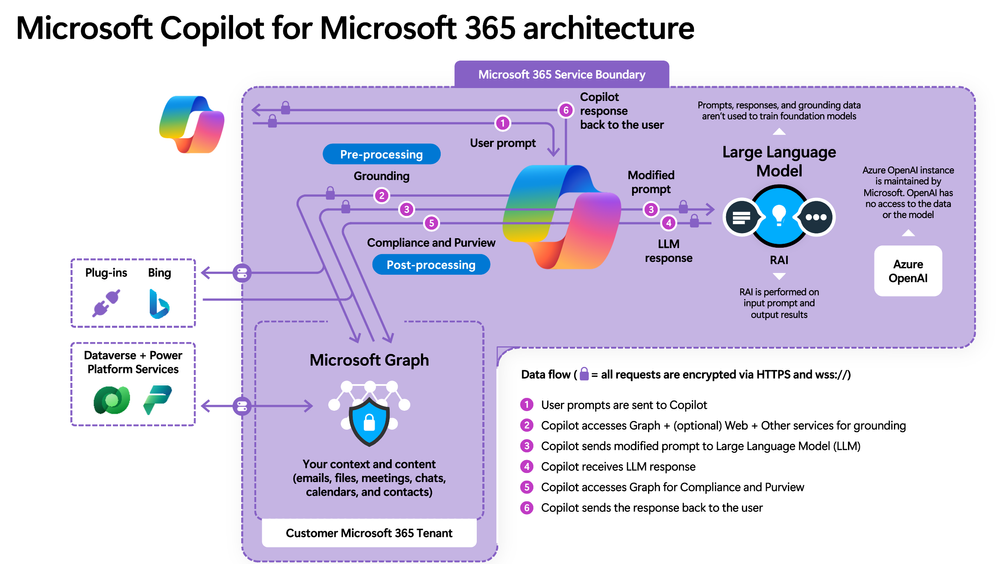

Now Copilot for Microsoft 365 also works with work & business data in the Microsoft 365 Graph – your emails, calendars, and documents you have access to. That is how it has the power to become your personal assistant at work, from recapping Teams meetings, to turning a Word document into a stunning PowerPoint. Here’s how Microsoft protects your data and gives you control over it.

A high level architecture diagram of Copilot for Microsoft 365, depicting service boundary, data flows, and prompt/response orchestration


Tenant Security

Copilot operates within the Microsoft 365 tenant, inheriting all the existing security, privacy, identity, and compliance requirements. It is built upon a foundation of trust, ensuring that organizational data is distinct and separate from other tenants. Watch this video to learn more about how Copilot is built upon a foundation of trust.

Data Defense

Data is considered the customer’s business, and Copilot respects the customer’s control over its collection, use, and distribution. It ensures that data is stored, encrypted, processed, and defended to the highest standards. Further, we don’t use your customer data to train foundation models. Copilot adheres to existing data permissions and policies, and its responses to you are based only on data that you personally can access. Watch the following video to learn how data is stored, encrypted, processed, and defended.

Azure OpenAI Service

Interactions with Copilot (prompts) are processed using Azure OpenAI services, which prioritize the integrity and protection of your organizational data. Watch this video for a deeper understanding of how Azure OpenAI services power Copilot while prioritizing data integrity.

For all the latest updates and deep-dive information, start at Microsoft Copilot for Microsoft 365 documentation | Microsoft Learn, and visit aka.ms/copilotlab to learn more about how to use Copilot. Find adoption and skilling best practices at adoption.microsoft.com.

[1] FBI Releases the Internet Crime Complaint Center 2021 Internet Crime Report, FBI. March 22, 2022.

More highlights: Build, Mechanics, new Partner resources

Last month, Microsoft Build 2024 had over 3,000 in-person attendees in Seattle and more than 200K virtual attendees. We announced exciting news across the company, but one announcement not to be missed for SMB is Team Copilot. Available in preview later this calendar year, Team Copilot expands Copilot beyond a personal assistant to work on behalf of a team, improving collaboration and project management. Read Jared Spataro’s blog for how it works as well as other news in Copilot for Microsoft 365. Watch the 90-Second Recap: Satya Nadella’s Keynote if you want to get all the news announced across Microsoft.

Not to be missed: the top two Copilot videos on Microsoft Mechanics, Microsoft’s official video series that teaches you how to apply Microsoft innovation to the work you do every day. They explain how Copilot for Microsoft 365 works and how to get ready for Copilot in three simple setup steps.

If you are one of our CSPs, we recently launched a new partner-led adoption immersion experience, consisting of new click-through demos for Sales, Marketing, HR, and Exec personas (with more coming soon), a facilitator guide to deliver this in a workshop, and a participant guide for end users to follow along. Check here for the entirety of the content: aka.ms/CopilotImmersionCSPLed. Go here to quickly access the full list of demos: aka.ms/CopilotImmersion/DemosList.

That’s it for this edition! Thank you for reading. Let us know in the comments how you use Copilot for Microsoft 365 to grow your business!
